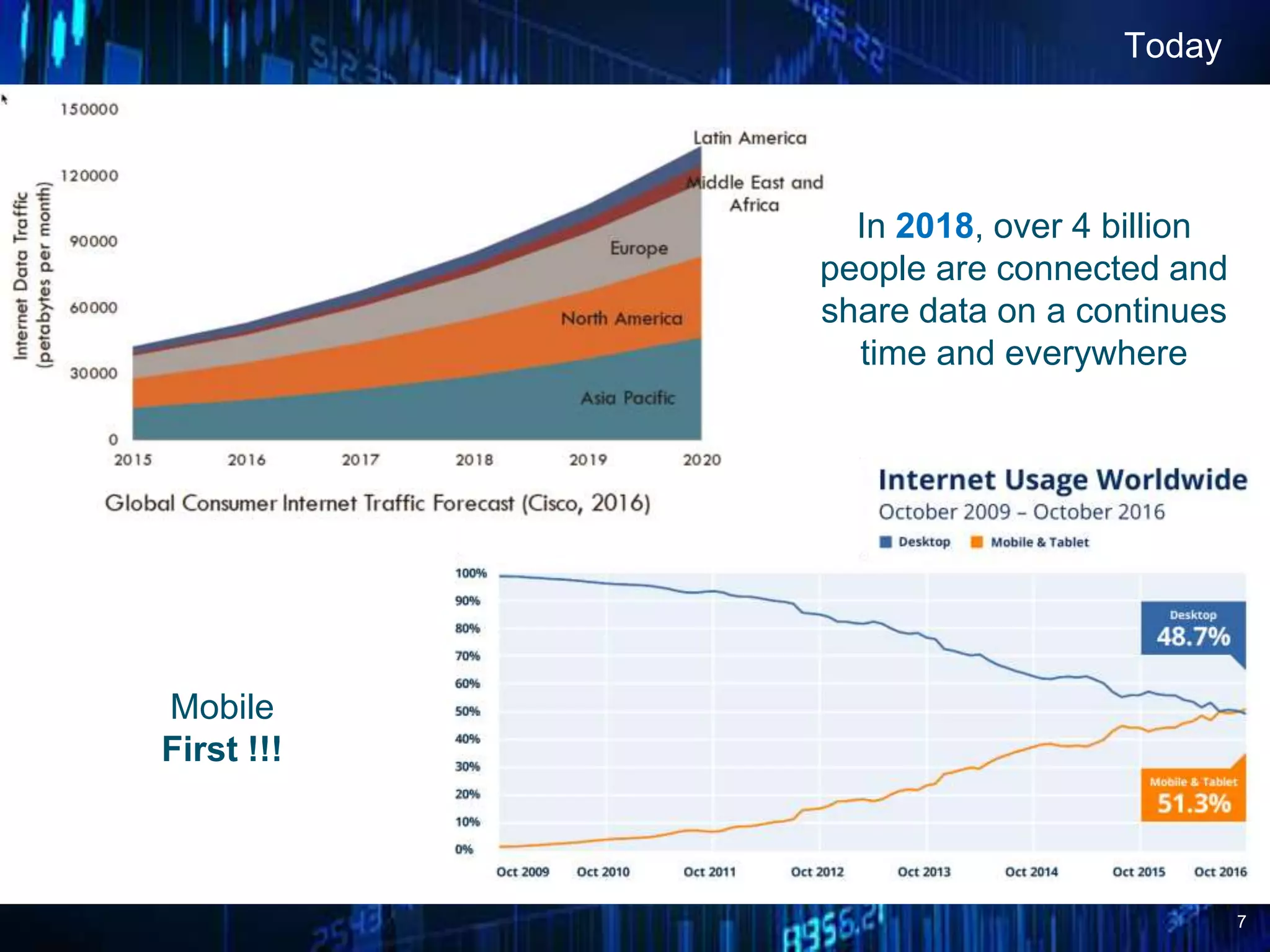

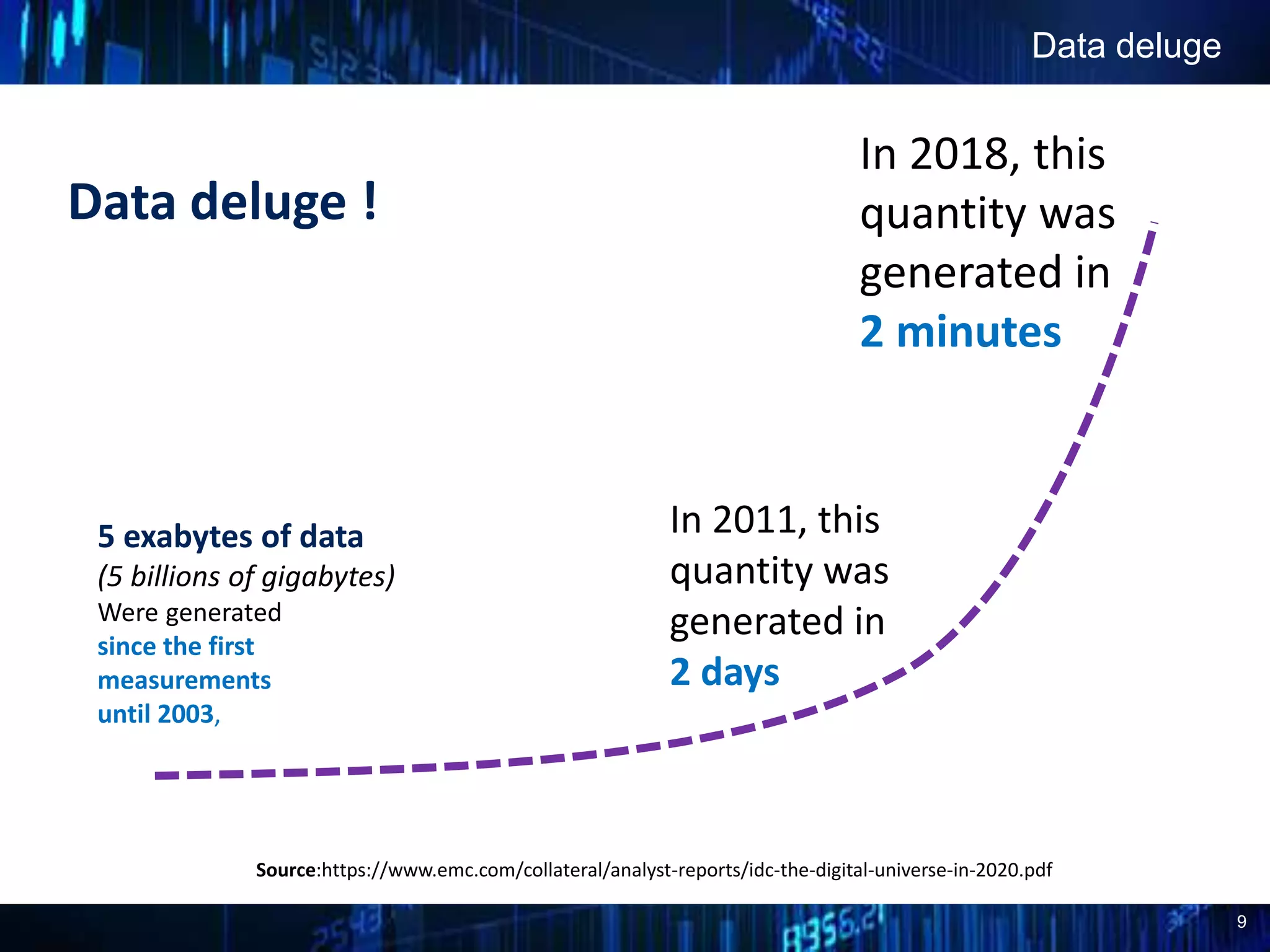

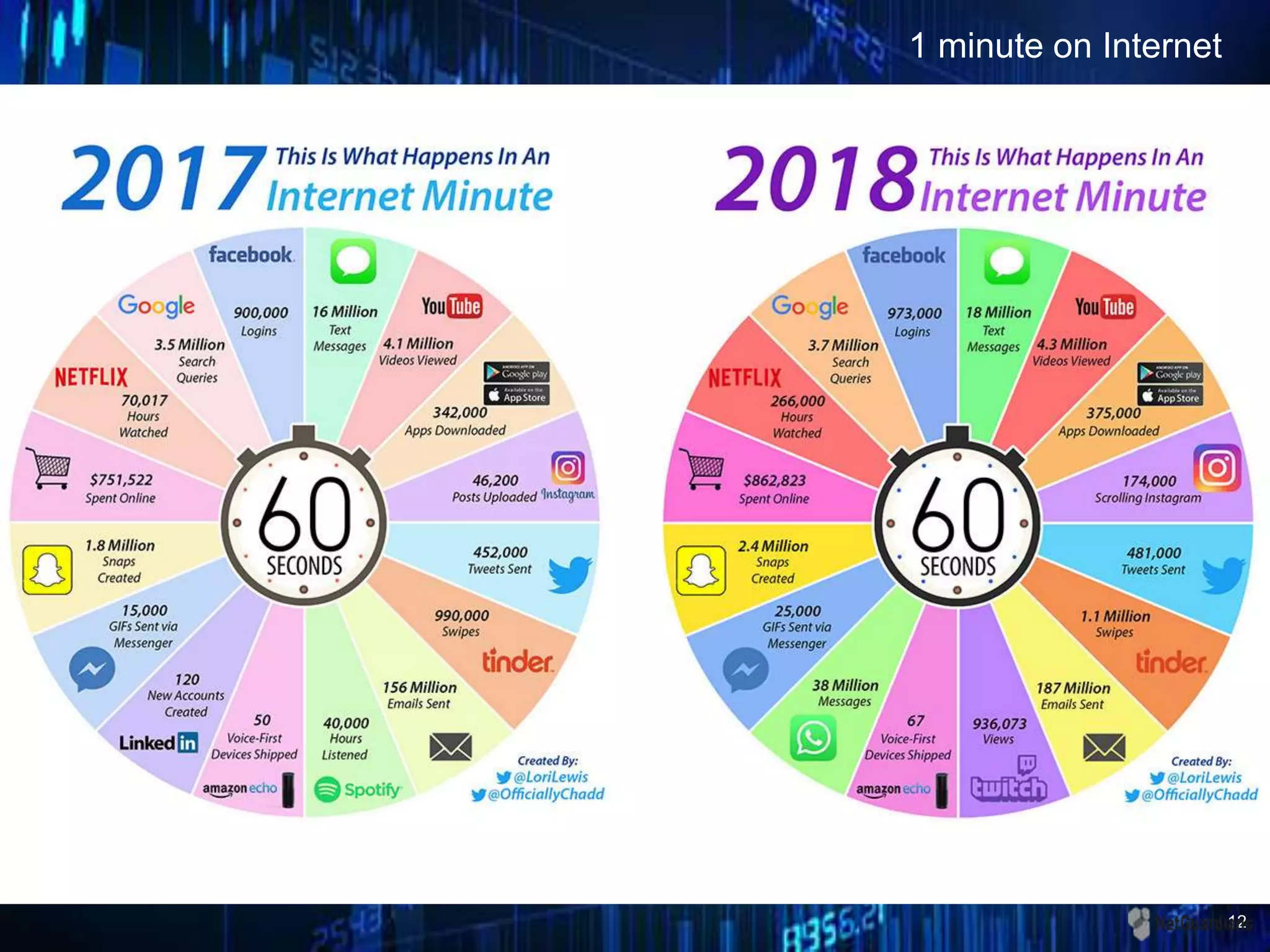

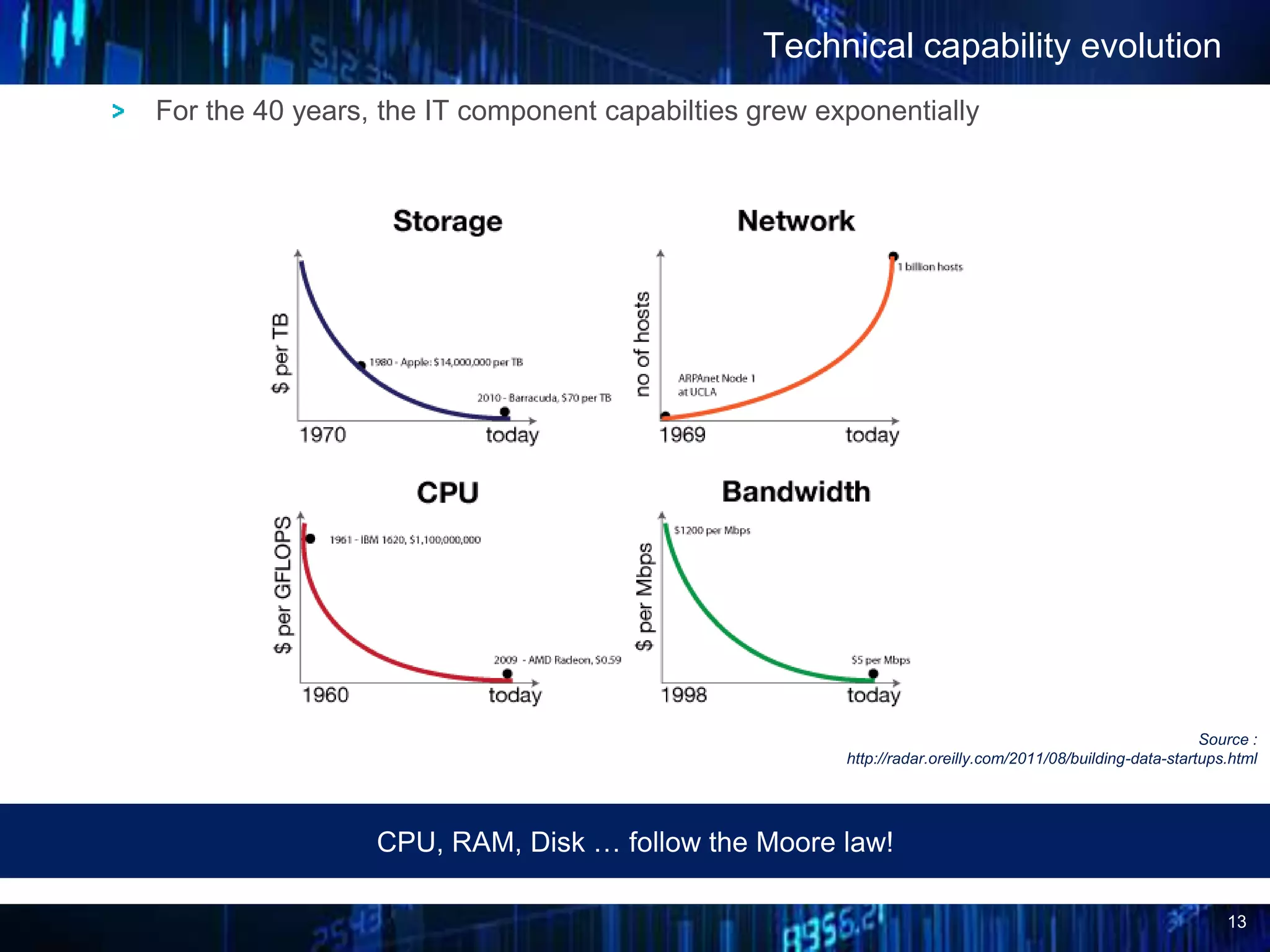

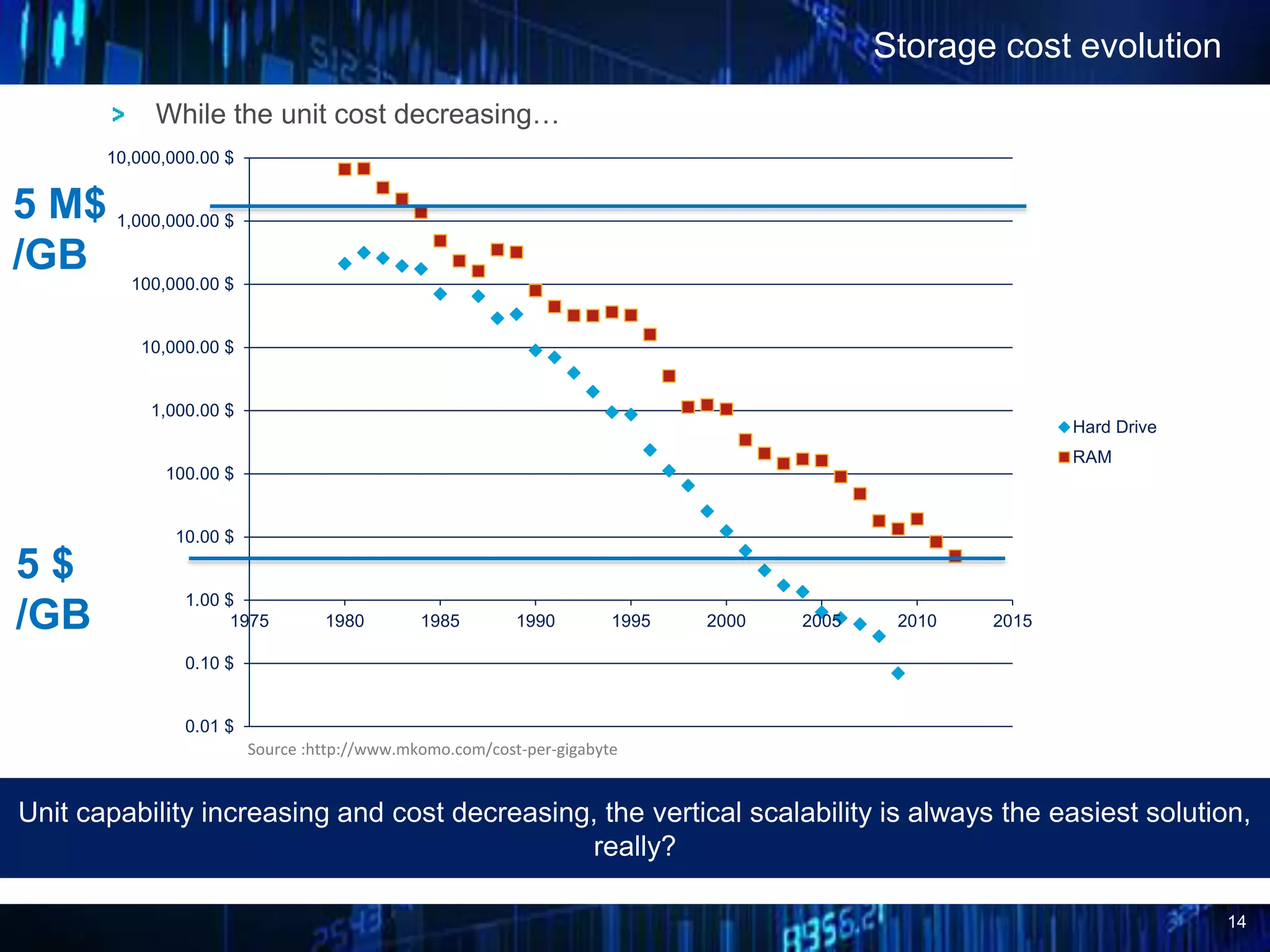

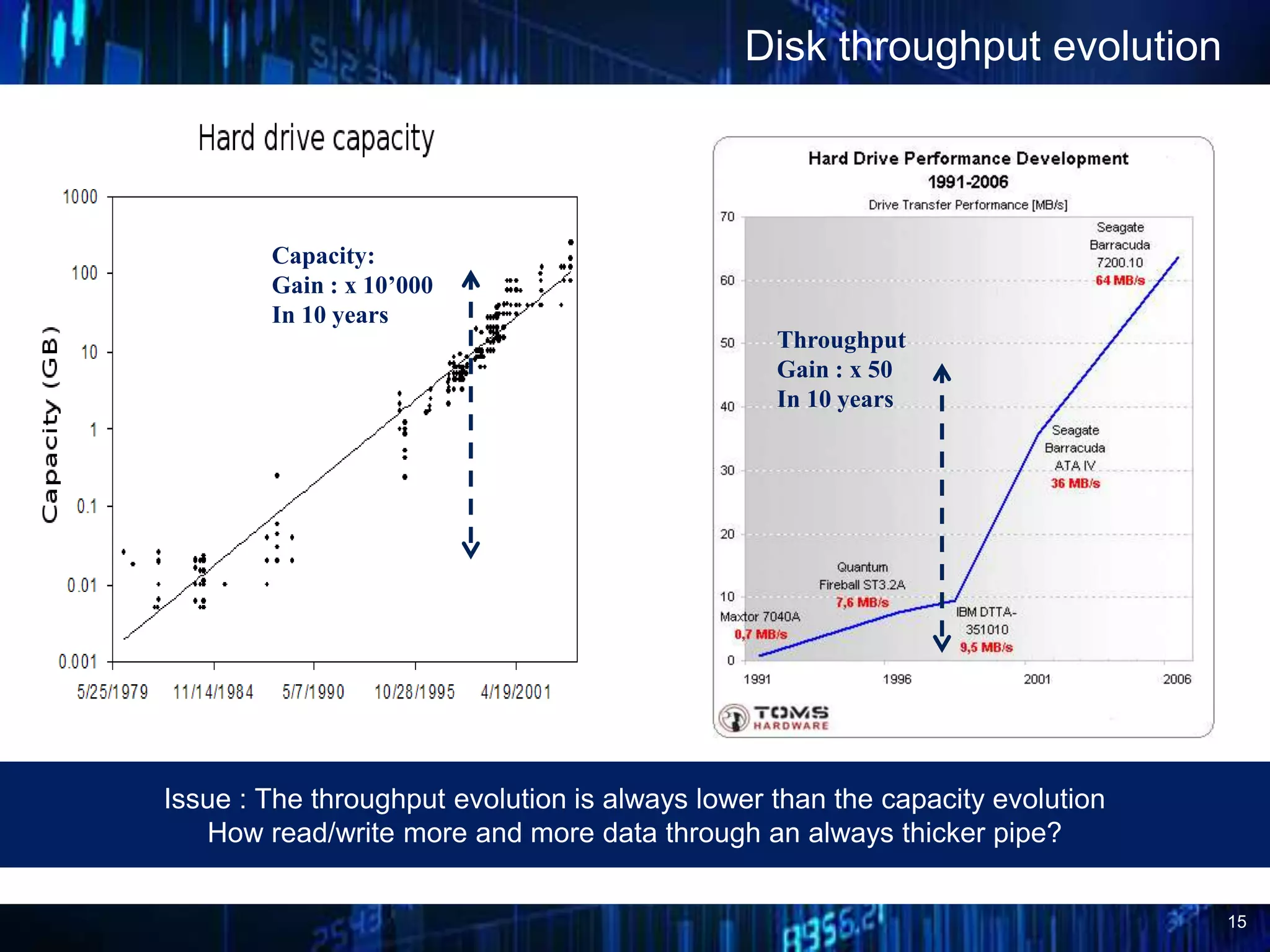

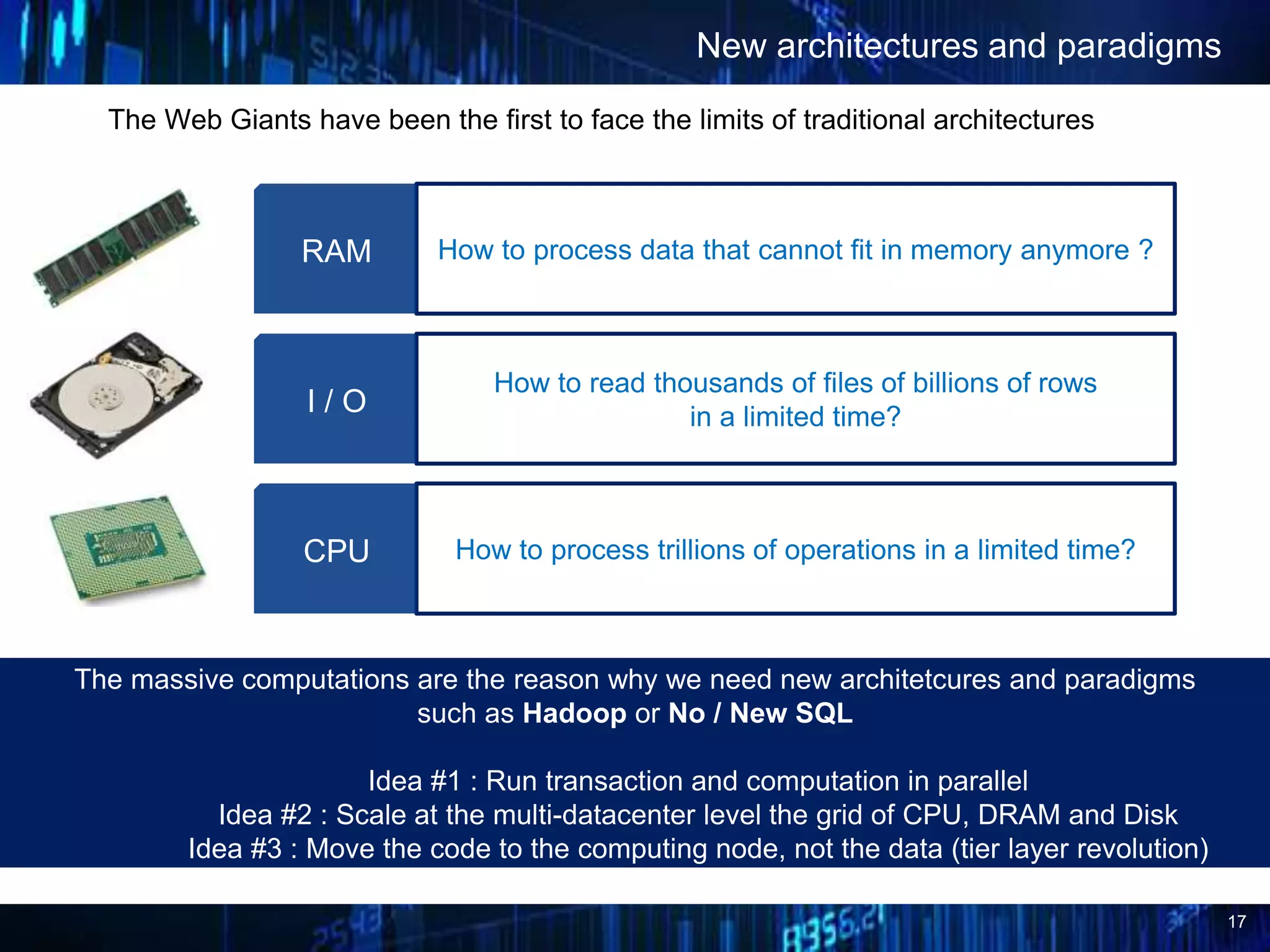

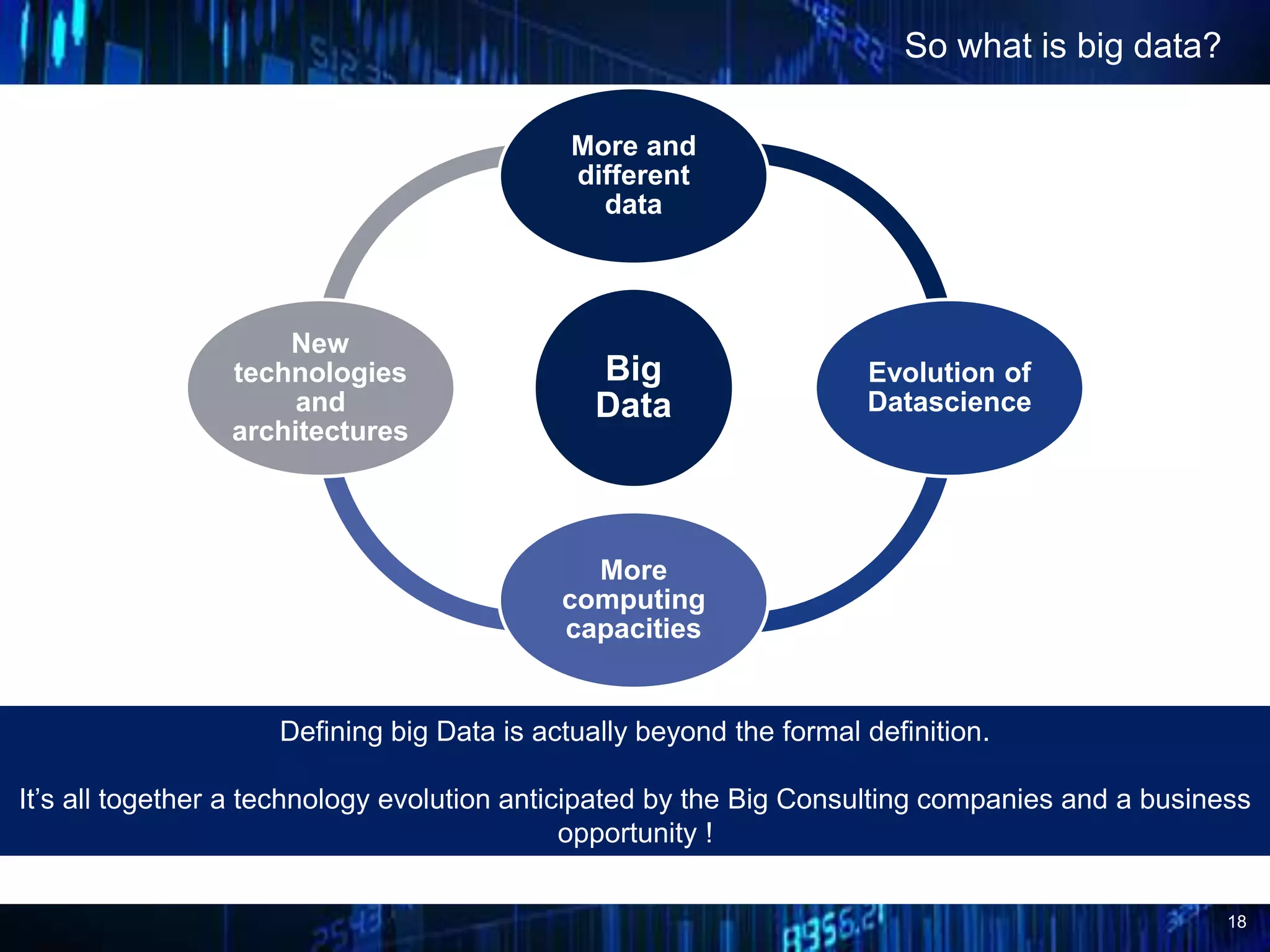

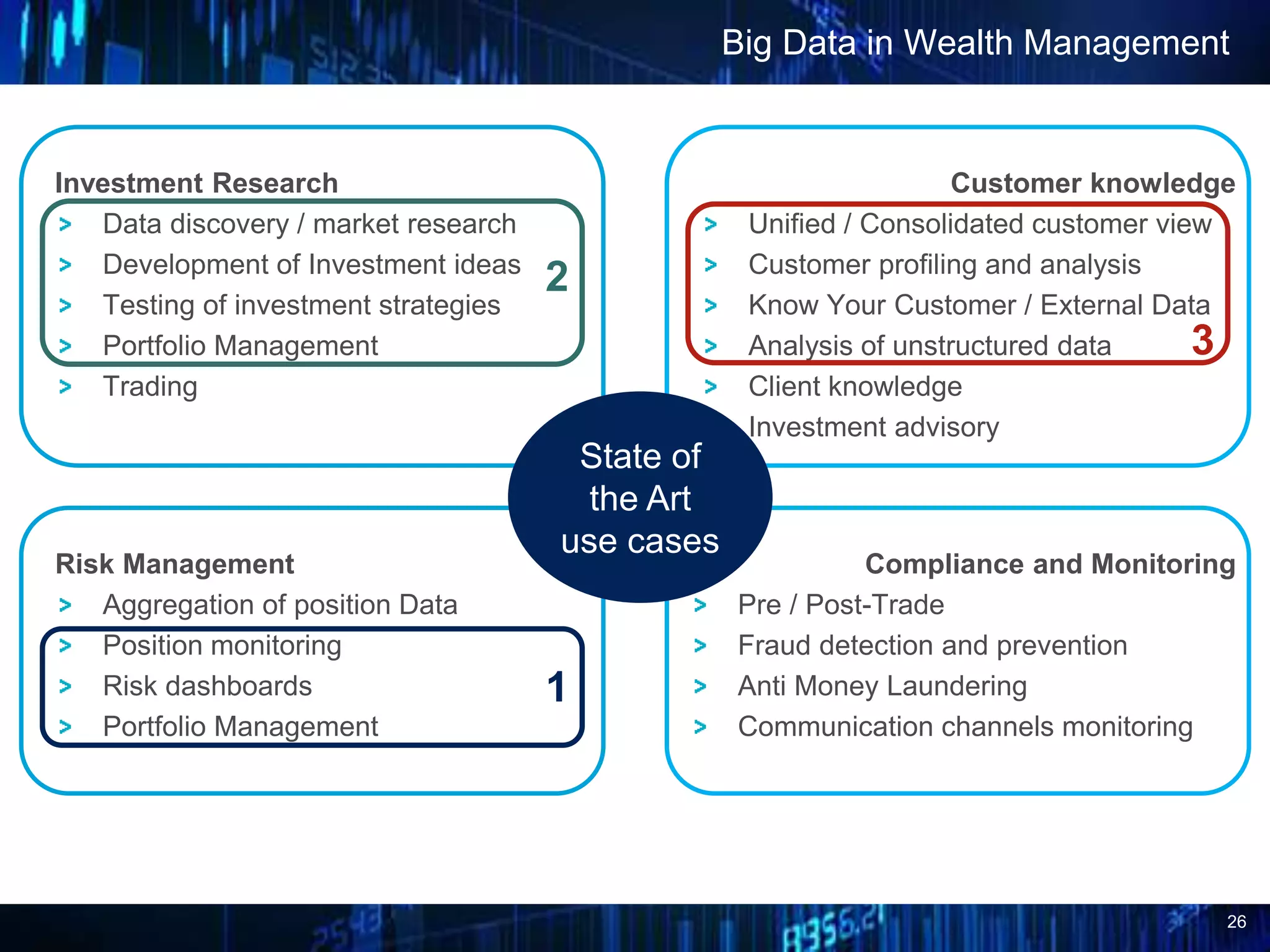

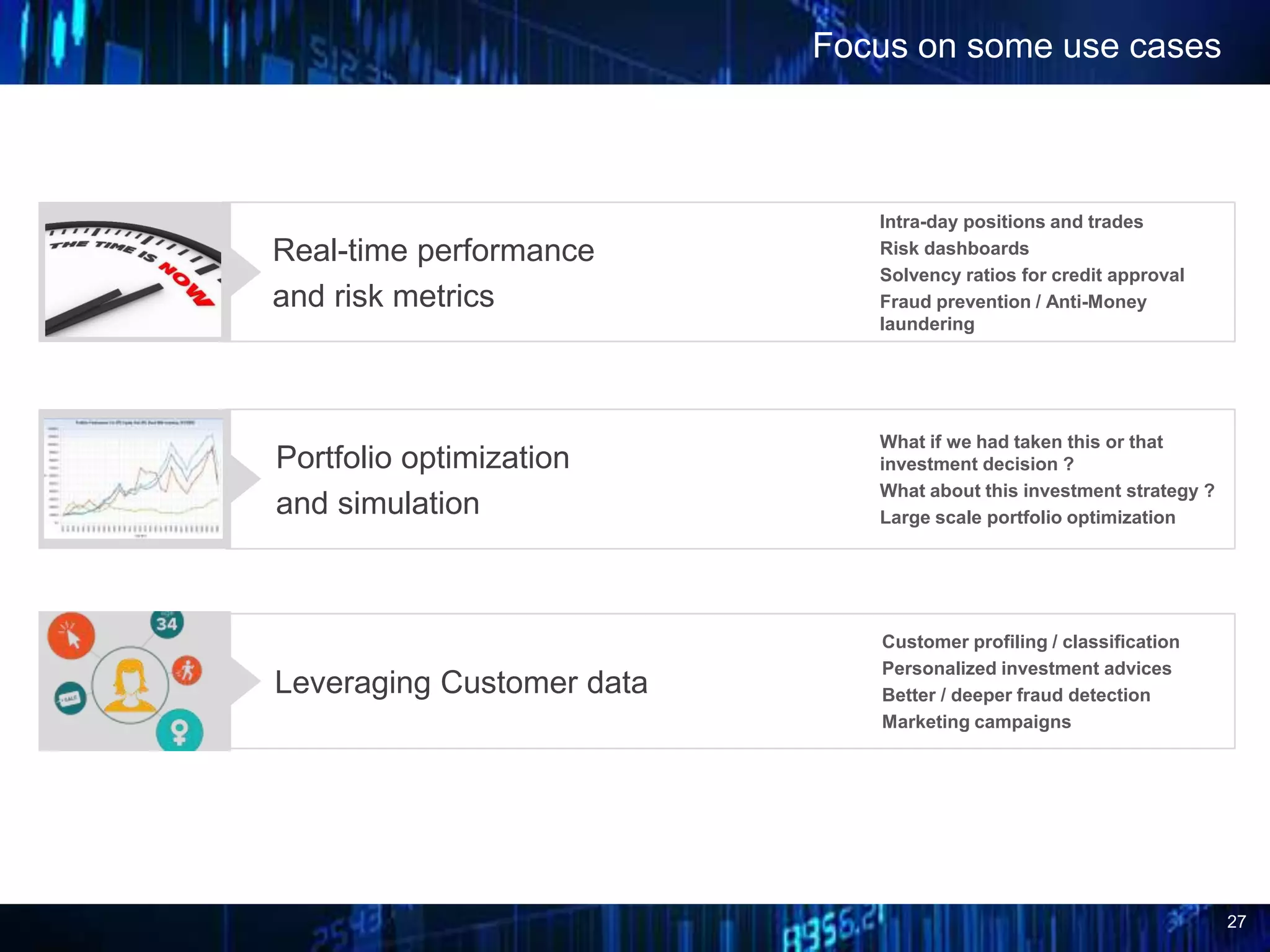

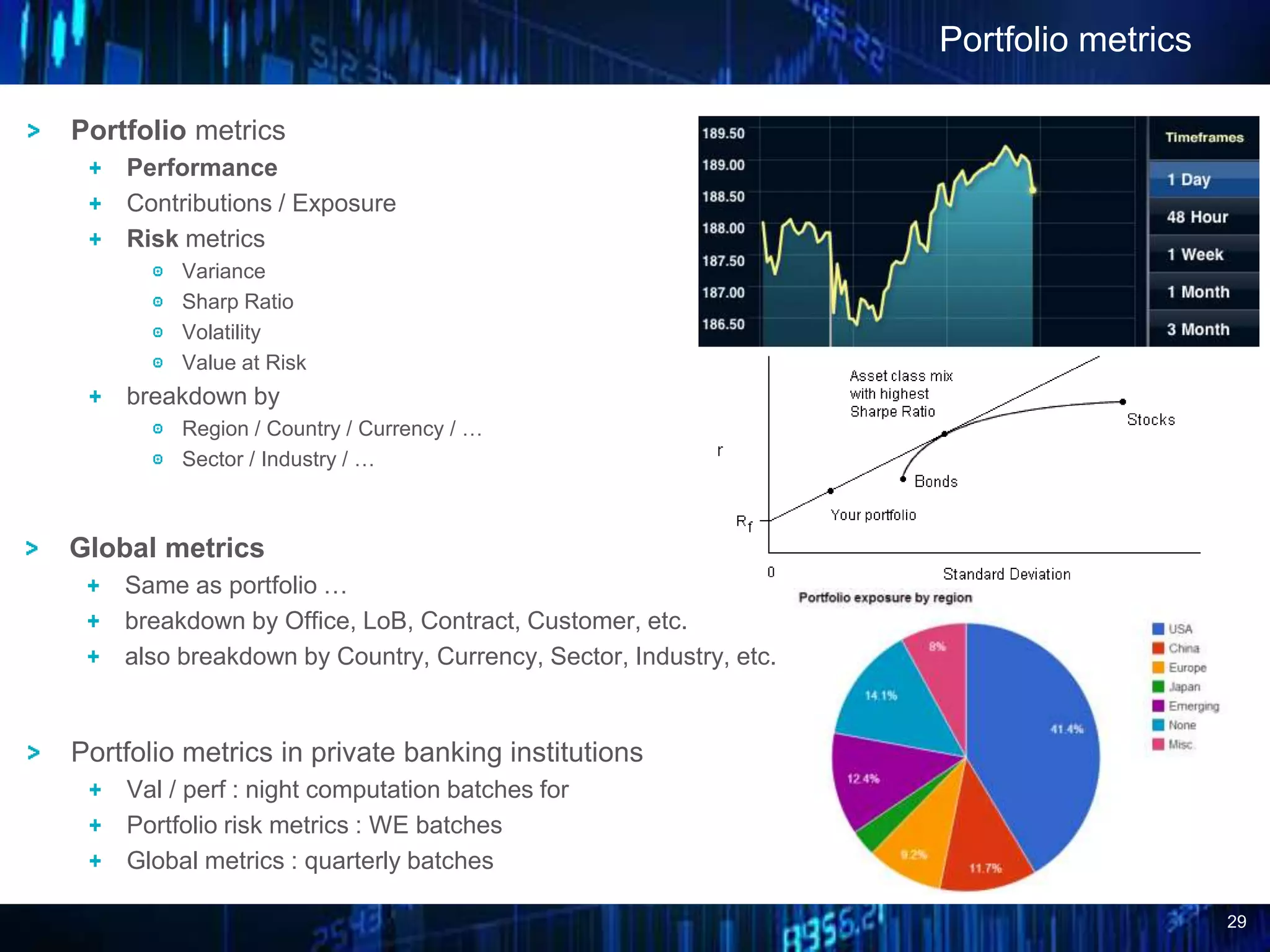

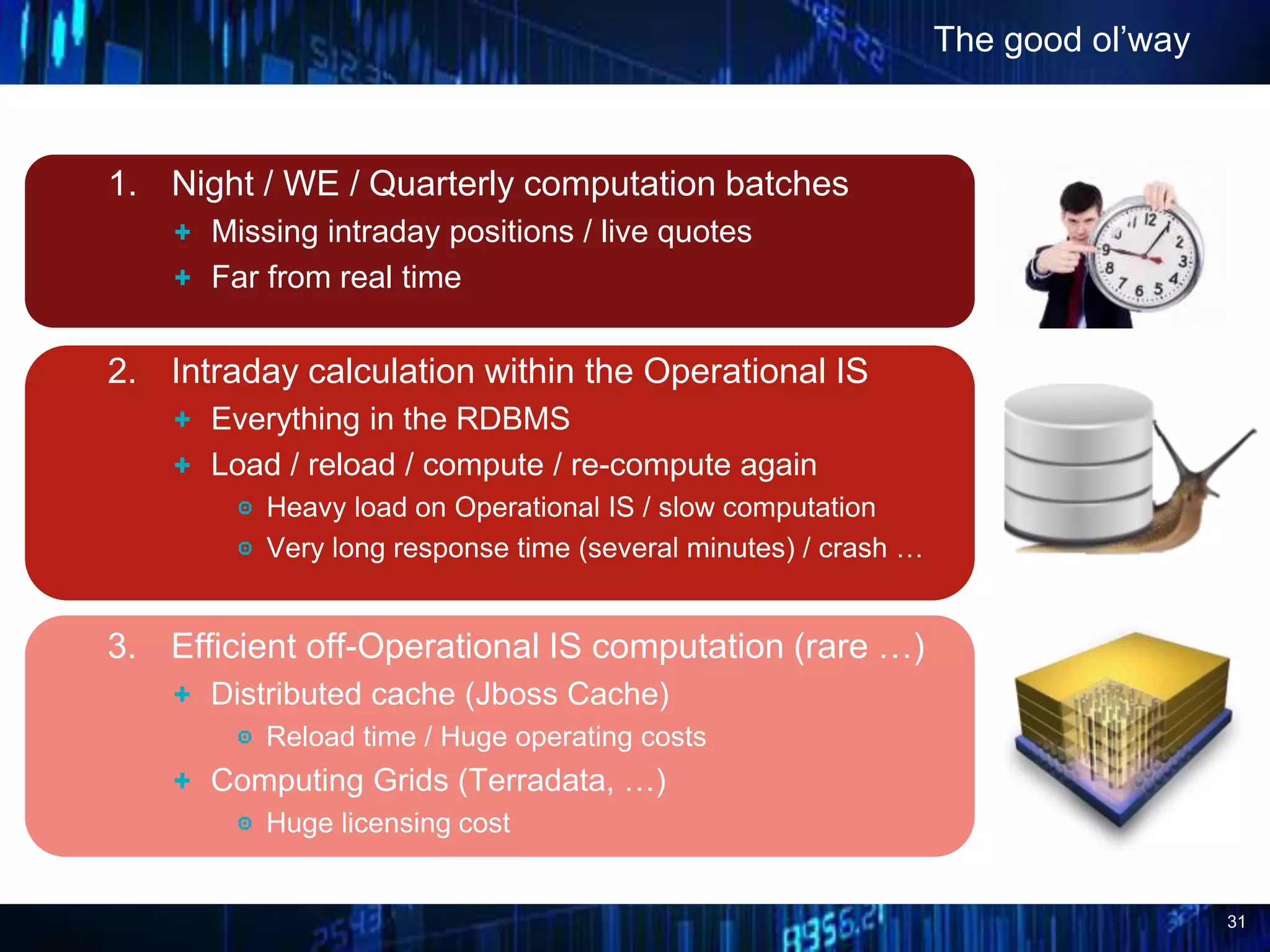

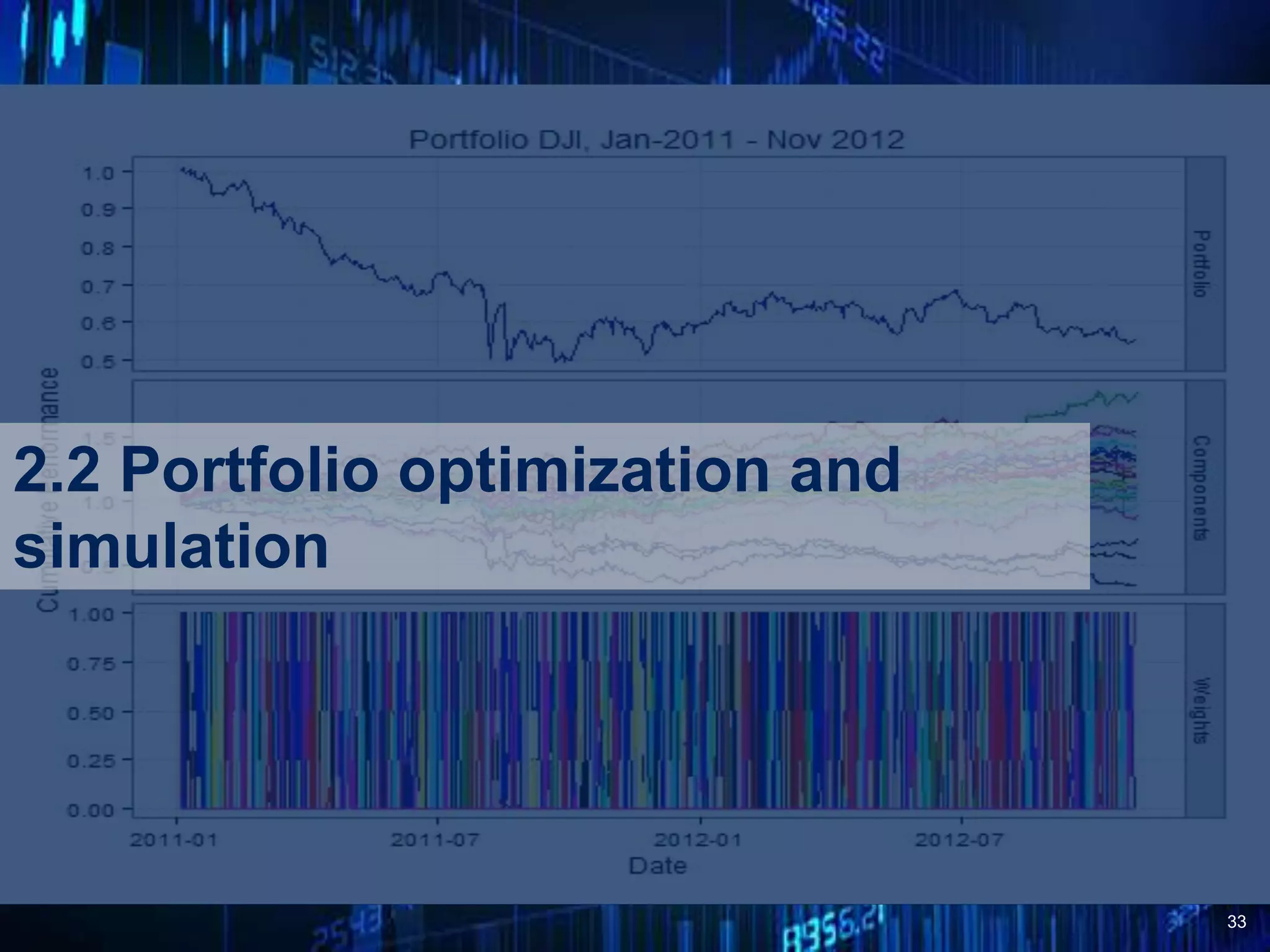

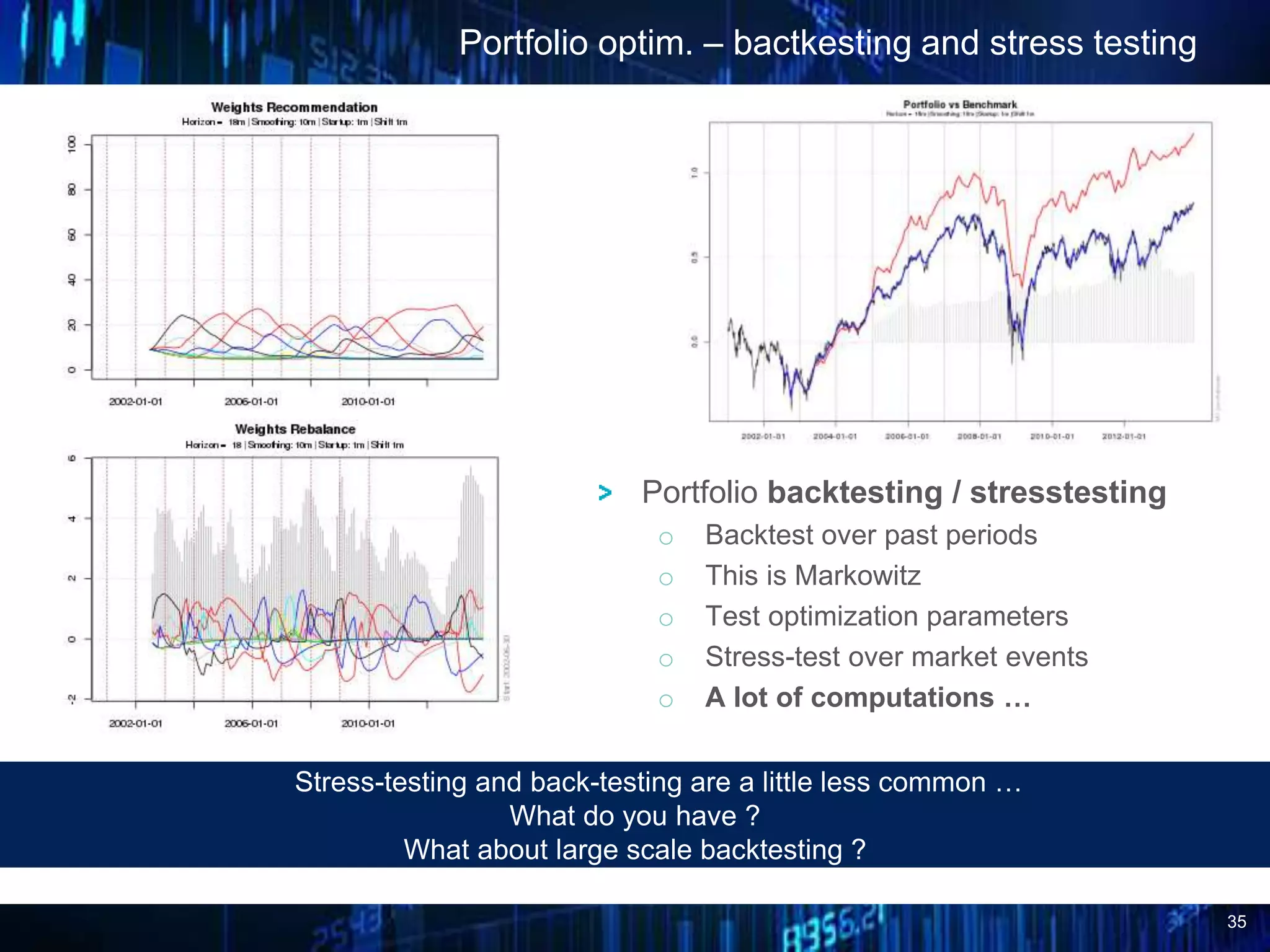

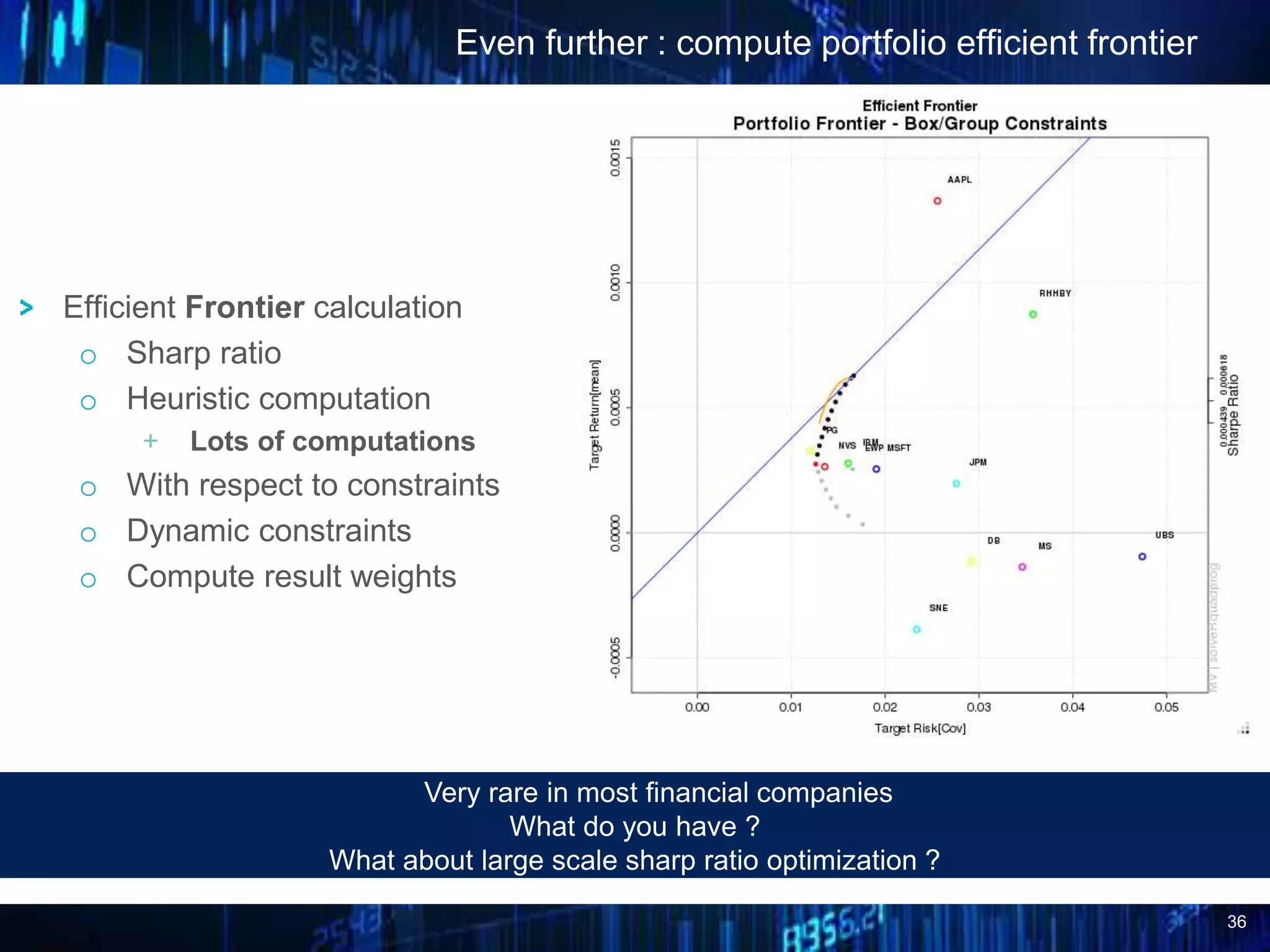

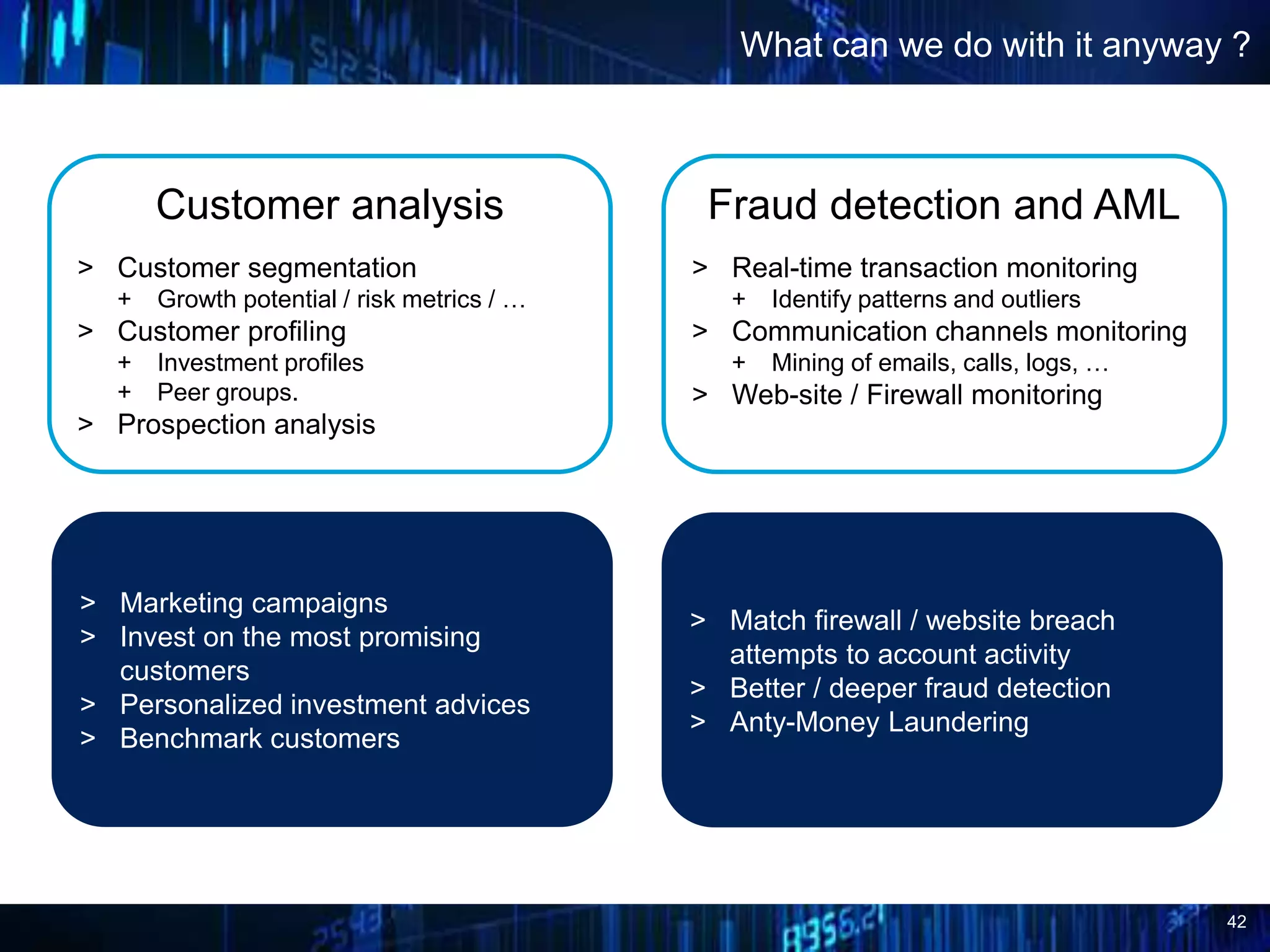

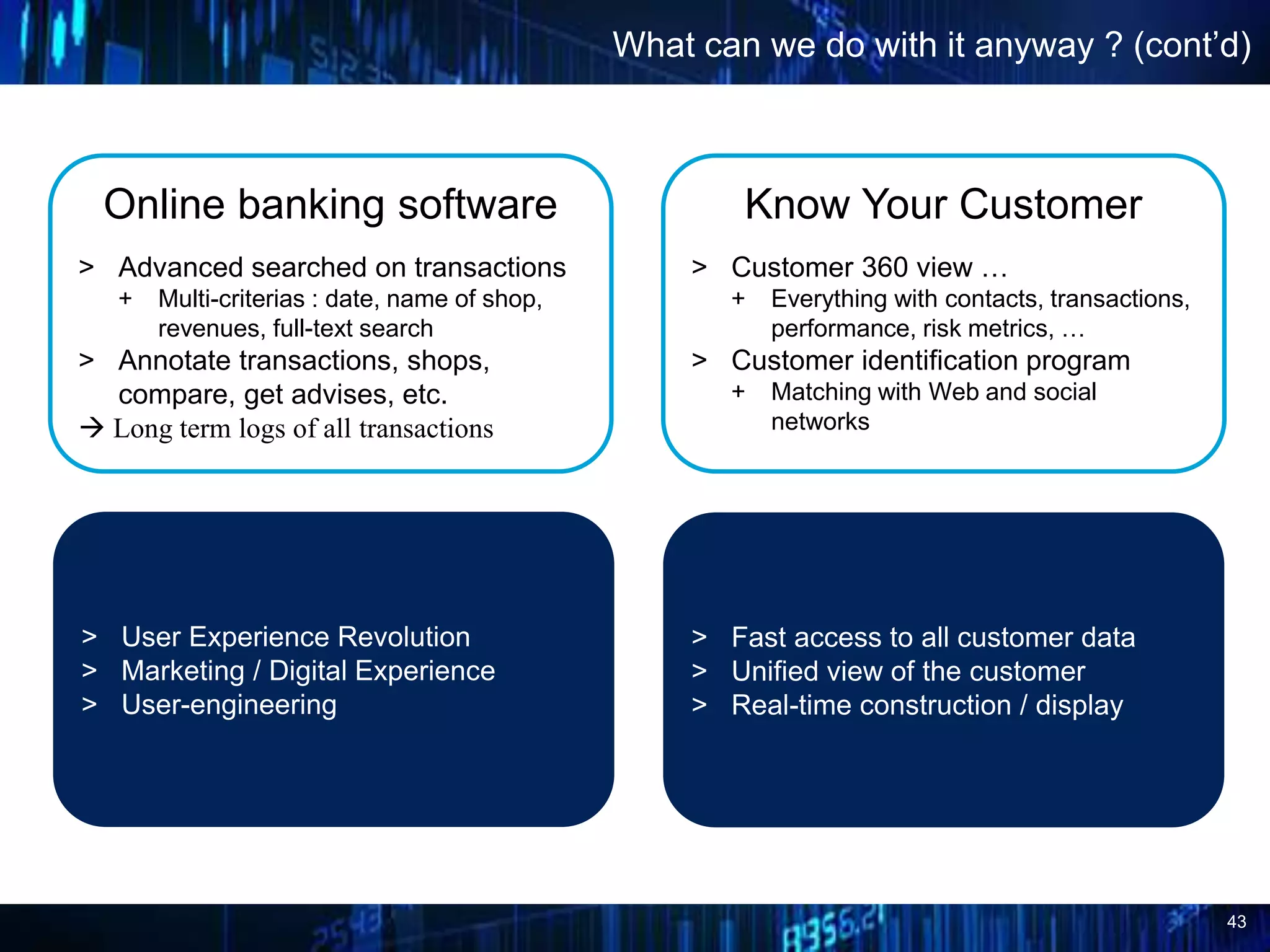

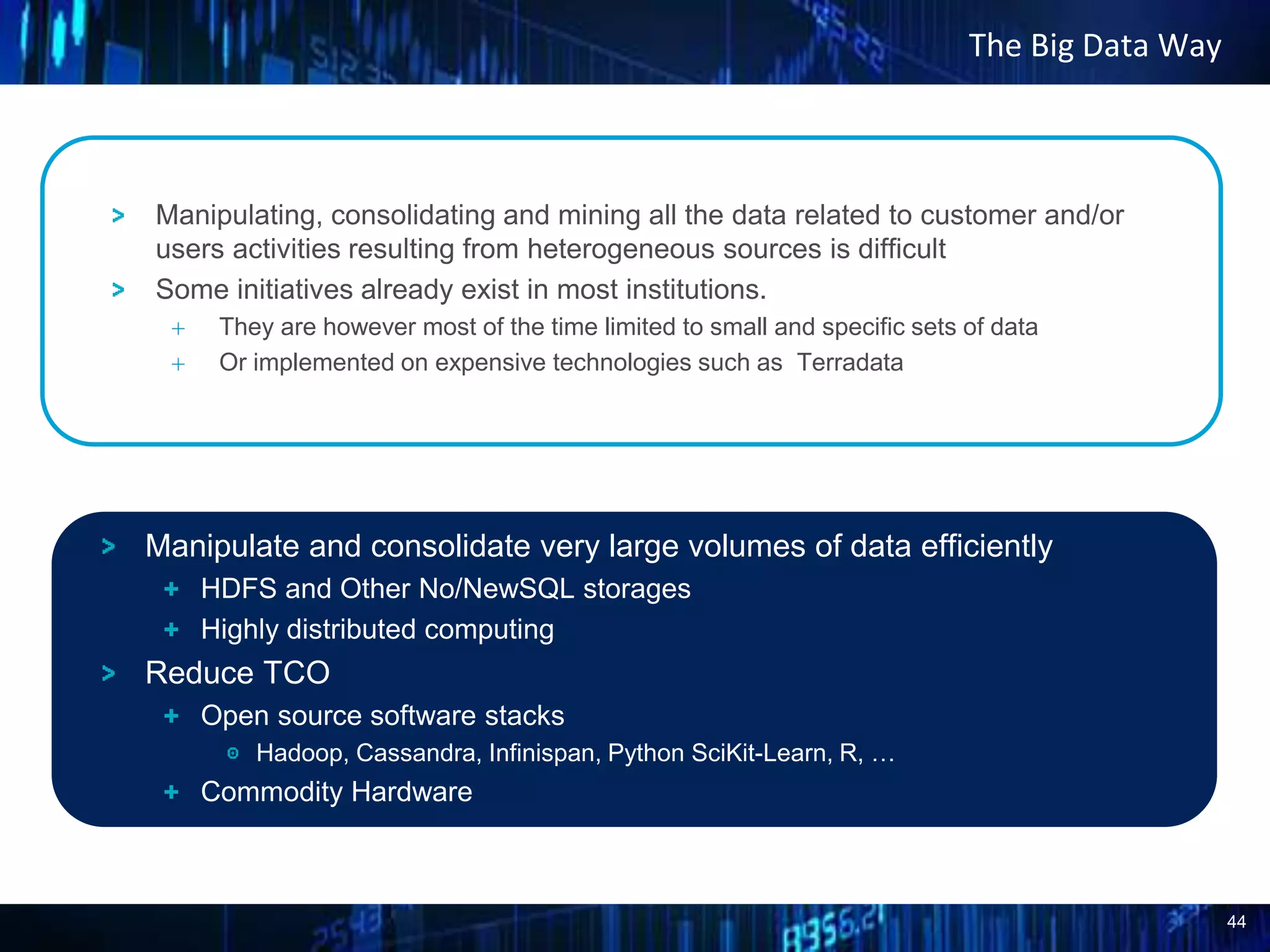

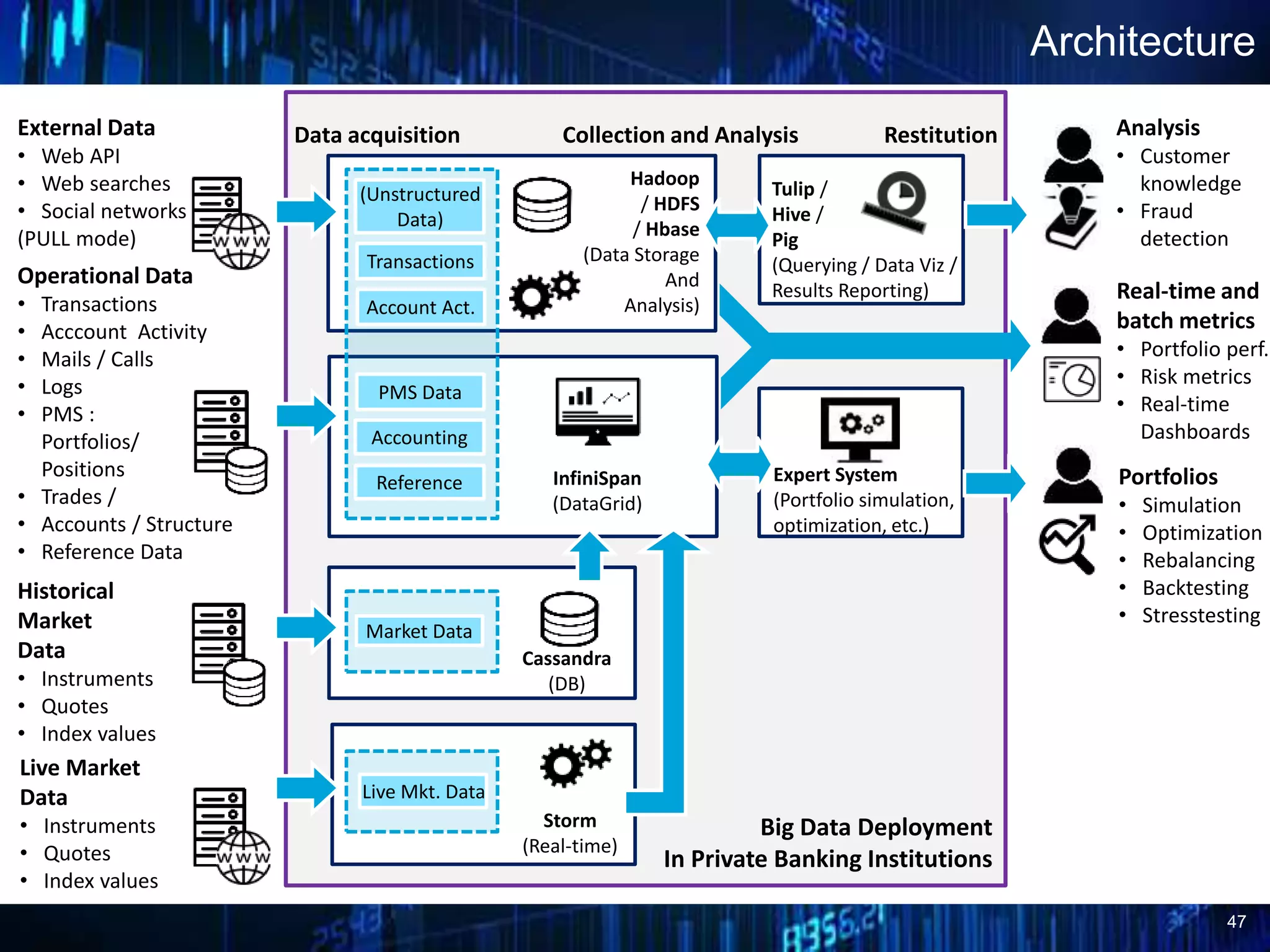

This document discusses opportunities for using big data in private wealth management. It begins by defining big data and describing how data volumes have increased exponentially. It then outlines several potential use cases, such as real-time performance metrics, portfolio optimization, and leveraging customer data. For each use case, it describes current limitations and how a big data approach could enable new capabilities. Finally, it proposes a phased approach in which wealth managers identify use cases, prioritize them, implement proofs of concept, and incrementally automate analysis and reporting. The overall message is that big data can enhance analytics and open up opportunities that were previously available only to investment banks.