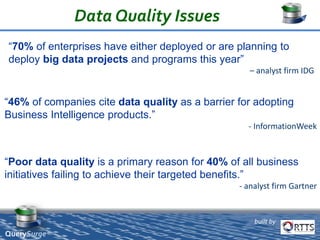

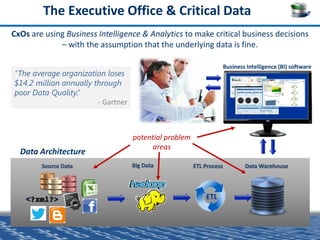

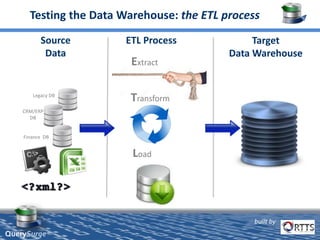

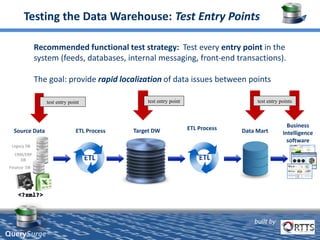

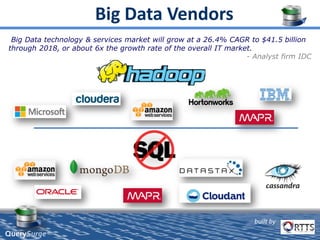

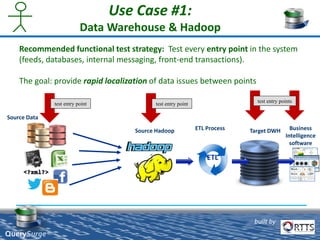

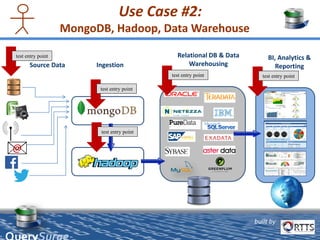

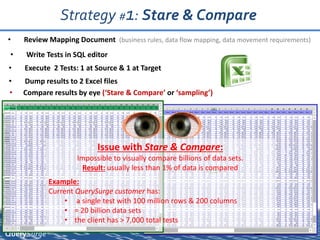

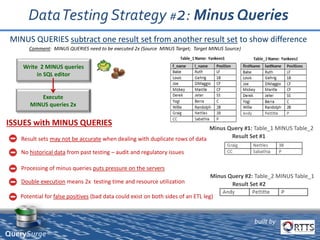

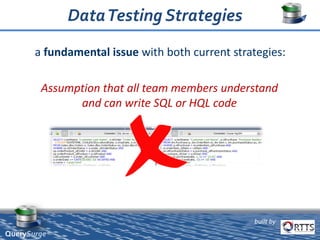

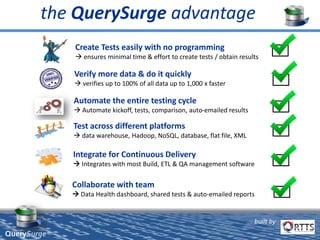

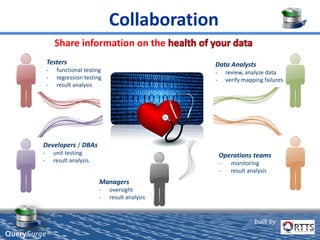

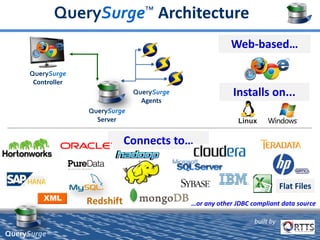

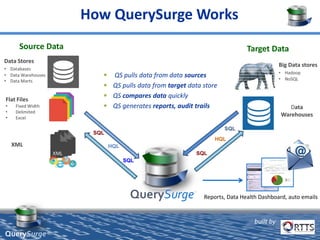

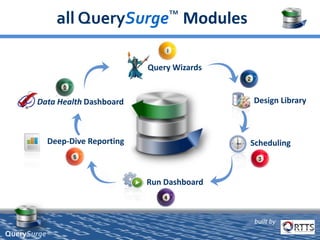

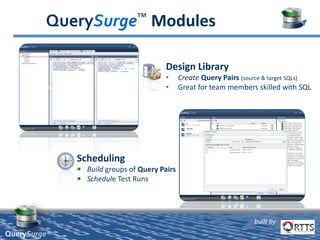

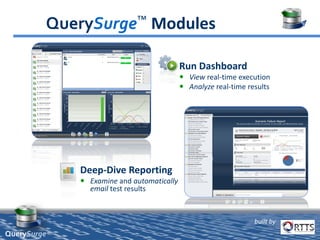

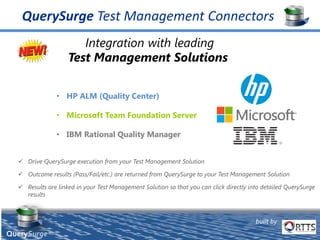

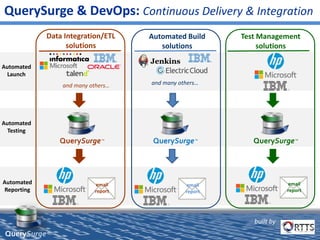

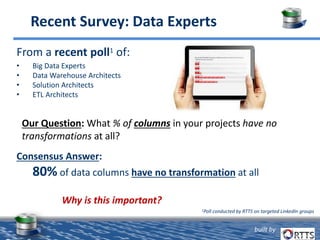

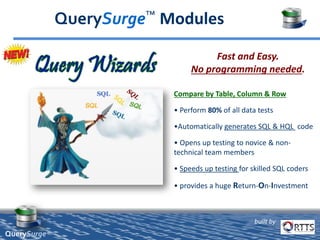

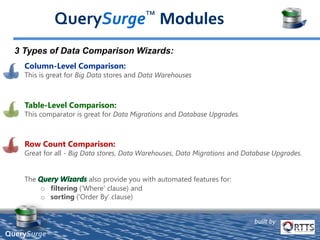

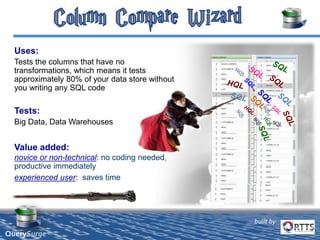

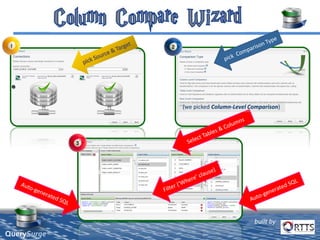

QuerySurge is an automated testing solution for big data that lets enterprises verify data quality without coding expertise. It targets data warehouses and big data systems, where undetected data quality issues can cause significant financial losses. The platform supports a range of data formats, integrates with existing software environments, and offers automated testing, reporting, and real-time data comparison.
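
To make the idea of automated data comparison concrete, here is a minimal sketch of a source-to-target check in Python. It is not QuerySurge's API or implementation. The table names (`src_orders`, `dw_orders`), the helper `compare_query_results`, and the in-memory SQLite database are all assumptions chosen for illustration. It only shows the general kind of check such a tool automates: run one query against a source, one against a target, and report the rows that differ.

```python
import sqlite3


def compare_query_results(conn, source_sql, target_sql):
    """Return (key, source_row, target_row) for every row that differs.

    Rows are matched on their first column, which is assumed to be a
    unique key. A row missing on one side is reported with None.
    """
    source = {row[0]: row for row in conn.execute(source_sql)}
    target = {row[0]: row for row in conn.execute(target_sql)}
    mismatches = []
    for key in sorted(source.keys() | target.keys()):
        if source.get(key) != target.get(key):
            mismatches.append((key, source.get(key), target.get(key)))
    return mismatches


# Hypothetical source and warehouse tables in one in-memory database.
conn = sqlite3.connect(":memory:")
conn.executescript(
    """
    CREATE TABLE src_orders (id INTEGER PRIMARY KEY, amount REAL);
    CREATE TABLE dw_orders  (id INTEGER PRIMARY KEY, amount REAL);
    INSERT INTO src_orders VALUES (1, 10.0), (2, 25.5), (3, 7.25);
    INSERT INTO dw_orders  VALUES (1, 10.0), (2, 20.5);
    """
)

mismatches = compare_query_results(
    conn,
    "SELECT id, amount FROM src_orders",
    "SELECT id, amount FROM dw_orders",
)

for key, src_row, tgt_row in mismatches:
    print(f"id={key}: source={src_row} target={tgt_row}")
```

In this sketch, order 2 is flagged because its amount changed in the target, and order 3 is flagged because it never arrived there. A production tool would typically add scheduling, reporting, and connectors for many data stores on top of this basic comparison.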