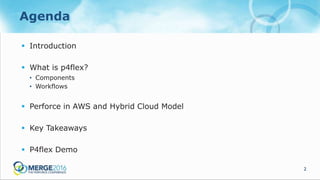

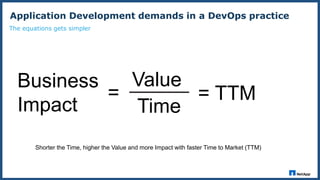

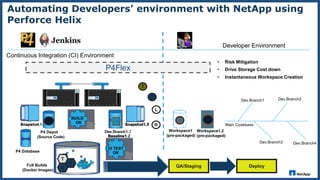

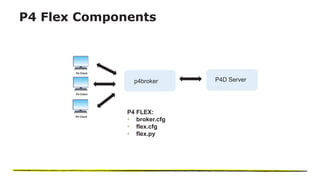

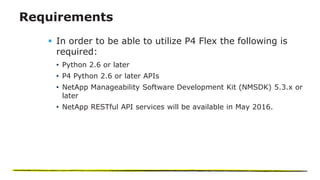

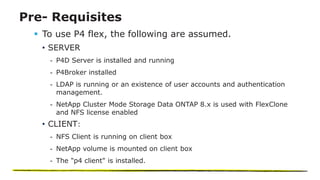

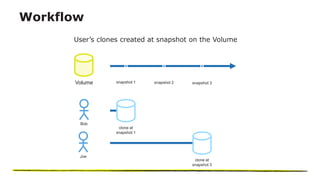

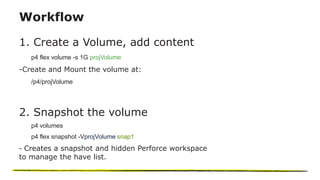

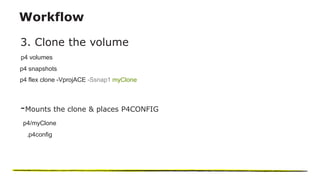

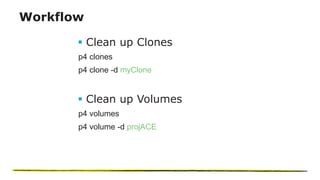

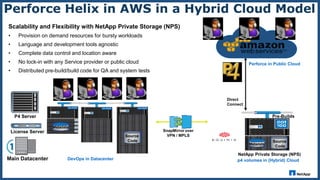

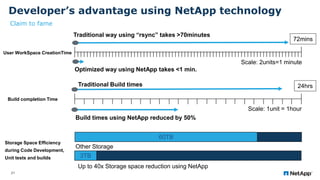

This document gives an overview of NetApp's P4Flex, a tool that aims to improve software development efficiency by automating the setup of developer environments with Perforce Helix CI. It outlines the system requirements, the workflows for volume management, and the advantages of deploying P4Flex in a hybrid cloud model built on NetApp technology. The key benefits are shorter workspace creation times and substantial storage savings during development and testing.