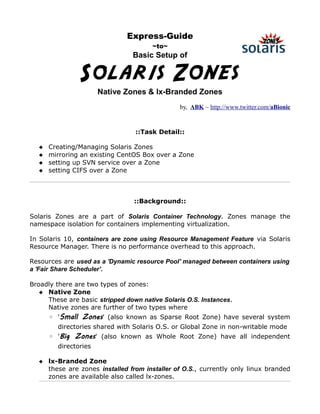

Solaris Zones (native & lxbranded) ~ A techXpress Guide

Solaris Zones (native & lxbranded) ~ A techXpress Guide ~ Creating & Managing Solaris Zones; Mirroring an existing Linux Setup to a Zone; Setting up SVN, CIFS over a Zone

Recommended

Red hat lvm cheatsheet

This document provides a cheat sheet on common Logical Volume Manager (LVM) commands for displaying, creating, modifying, and troubleshooting physical volumes (PVs), volume groups (VGs), and logical volumes (LVs) in Linux. It lists directory locations and files related to LVM, describes tools for diagnostics and debugging, and provides examples of commands for scanning and managing PVs, VGs, and LVs, including displaying information, creating, extending, reducing, removing, and changing attributes of volumes. It also discusses snapshots, mirroring, and procedures for repairing corrupted LVM metadata with and without replacing faulty disks.

2017-03-11 02 Denis Nelyubin. Docker & Ansible - best friends of DevOps

Docker provides containerization capabilities while Ansible provides automation and configuration capabilities. Together they are useful DevOps tools. Docker allows building and sharing application environments while Ansible automates configuration and deployment. Key points covered include Docker concepts like images and containers, building images with Dockerfiles, and using Docker Compose to run multi-container apps. Ansible is described as a remote execution and configuration tool using YAML playbooks and roles to deploy applications. Their complementary nature makes them good DevOps partners.

Getting Started with Docker

The document provides an overview of getting started with Docker. It discusses what Docker is, how containerization differs from virtualization, and how to install Docker. It covers building Docker images using Dockerfiles, the difference between images and containers, and common Docker commands. The document also compares traditional deployment workflows to those using Docker, demonstrating how Docker can help ensure consistency across environments.

JDO 2019: Tips and Tricks from Docker Captain - Łukasz Lach

The document provides tips and tricks for using Docker including:

1) Installing Docker on Linux in an easy way allowing choice of channel and version.

2) Setting up a local Docker Hub mirror for caching and revalidating images.

3) Using docker inspect to find containers that exited with non-zero codes or show commands for running containers.

4) Organizing docker-compose files with extensions, environment variables, anchors and aliases for well structured services.

Useful Kafka tools

The document provides instructions on how to install, configure, and use various Kafka tools including kaf, kafkacat, and Node-RED. It shows how to produce messages to and consume messages from Kafka topics using the command line tools kaf and kafkacat. It also demonstrates integrating Kafka with Node-RED by adding Kafka nodes to consume and produce messages.

Describing Kafka security in AsyncAPI

This session will quickly show you how to describe the security configuration of your Kafka cluster in an AsyncAPI document. And if you've been given an AsyncAPI document, this session will show you how to use that to configure a Kafka client or application to connect to the cluster, using the details in the AsyncAPI spec.

Running High Performance and Fault Tolerant Elasticsearch Clusters on Docker

Sematext engineer Rafal Kuc (@kucrafal) walks through the details of running high-performance, fault tolerant Elasticsearch clusters on Docker. Topics include: Containers vs. Virtual Machines, running the official Elasticsearch container, container constraints, good network practices, dealing with storage, data-only Docker volumes, scaling, time-based data, multiple tiers and tenants, indexing with and without routing, querying with and without routing, routing vs. no routing, and monitoring. Talk was delivered at DevOps Days Warsaw 2015.

Replacing Squid with ATS

This document summarizes a talk given at ApacheCon 2015 about replacing Squid with ATS (Apache Traffic Server) as the proxy server at Yahoo. It discusses the history of using Squid at Yahoo, limitations with Squid that prompted the switch to ATS, key differences in configuration between the two systems, examples of forwarding and reverse proxy use cases, and learnings around managing open source projects and migration testing.

Failsafe Mechanism for Yahoo Homepage

The document describes Yahoo's failsafe mechanism for its homepage using Apache Storm and Apache Traffic Server. The key points are:

1. The failsafe architecture uses AWS components like EC2, ELB, S3 and autoscaling to serve traffic from failsafe servers if the primary servers fail.

2. Apache Traffic Server is used as a caching proxy between the user and origin servers. The "Escalate" plugin in ATS fetches content from failsafe servers if the origin server response is not good.

3. Apache Storm Crawler crawls content for different devices and maps URLs to the failsafe domain for storage in S3 with query parameters in the path. This provides more relevant fail

Docker advance topic

This document provides an overview of advanced Docker topics including Docker installation, Docker networking using bridges and volumes, and creating Dockerfiles. It discusses installing Docker on CentOS, the different types of Docker networks including bridge, host, overlay and macvlan. It also covers creating and managing Docker volumes, starting containers with volumes, and creating Dockerfiles with components like FROM, RUN, COPY and ENTRYPOINT.

Under the Hood with Docker Swarm Mode - Drew Erny and Nishant Totla, Docker

Join SwarmKit maintainers Drew and Nishant as they showcase features that have made Swarm Mode even more powerful, without compromising the operational simplicity it was designed with. They will discuss the implementation of new features that streamline deployments, increase security, and reduce downtime. These substantial additions to Swarm Mode are completely transparent and straightforward to use, and users may not realize they're already benefiting from these improvements under the hood.

Installation Openstack Swift

The document describes the process of setting up OpenStack Swift object storage. It includes installing and configuring Swift packages on both storage and proxy nodes, generating ring files to map objects to storage devices, and registering the Swift service with Keystone for authentication. Key steps are installing Swift packages, adding storage devices to the ring, distributing ring files, and configuring the proxy server and authentication filter.

What’s new in Swarm 1.1

Docker Swarm 1.1 introduces improvements to node management in Docker clusters, including monitoring node health and status from the Docker info command. It also adds experimental rescheduling of containers when nodes fail to help ensure application availability. Additional event types have been added to provide more visibility into cluster operations. The demo shows how resource constraints and networks can be used to deploy a multi-tier voting application across multiple Docker Engines managed as a single virtual Engine by Swarm.

Deep dive in Docker Overlay Networks

The Docker network overlay driver relies on several technologies: network namespaces, VXLAN, Netlink and a distributed key-value store. This talk will present each of these mechanisms one by one along with their userland tools and show hands-on how they interact together when setting up an overlay to connect containers.

The talk will continue with a demo showing how to build your own simple overlay using these technologies.

Docker advance1

This document provides an overview and instructions for Docker installation, networking, volumes, and Dockerfiles. It discusses installing Docker on CentOS, the different network drivers including bridge, and how to create and manage user-defined bridges and volumes. It also explains the components and usage of Dockerfiles to build images, including base images, environment variables, copying files, setting entrypoints and commands. The document includes examples of building an image locally and pushing it to a Docker repository.

Docking postgres

Running services in virtualized systems provides many benefits, but has often presented performance and flexibility drawbacks. This has become critical when managing large databases, where resource usage and performance are paramount. We will explore a case study in the use of Docker to roll out multiple database servers distributed across multiple physical servers.

Docker Networking & Swarm Mode Introduction

This document introduces Docker networking and Docker Swarm mode. It discusses the different types of Docker networks including bridge, null, and host networks. It also covers multi-host networking using overlay networks. For Docker Swarm mode, it describes the key features including self-healing, self-organizing, blue-print deployment, load balancing using a routing mesh, and not requiring additional components for service discovery or load balancing. The document aims to provide an overview of these topics and includes examples.

Deep Dive in Docker Overlay Networks

The Docker network overlay driver relies on several technologies: network namespaces, VXLAN, Netlink and a distributed key-value store. This talk will present each of these mechanisms one by one along with their userland tools and show hands-on how they interact together when setting up an overlay to connect containers.

The talk will continue with a demo showing how to build your own simple overlay using these technologies.

Running High Performance & Fault-tolerant Elasticsearch Clusters on Docker

This document discusses running Elasticsearch clusters on Docker containers. It describes how Docker containers are more lightweight than virtual machines and have less overhead. It provides examples of running official Elasticsearch Docker images and customizing configurations. It also covers best practices for networking, storage, constraints, and high availability when running Elasticsearch on Docker.

Introductory Overview to Managing AWS with Terraform

The document provides an overview of Terraform including:

- Terraform is an open source tool from HashiCorp that allows defining and provisioning infrastructure in a code-based declarative way across multiple cloud platforms and services.

- Key concepts include providers that define cloud resources, configuration files that declare the desired state, and a plan-apply workflow to provision and manage infrastructure resources.

- Common Terraform commands are explained like init, plan, apply, destroy, output and their usage.

JDO 2019: Container orchestration with Docker Swarm - Jakub Hajek

This document discusses Docker Swarm and container orchestration. It provides an overview of key Docker Swarm features such as decentralized design, declarative service model, rolling updates, secrets, and configs. It then describes a demonstration environment running on AWS with Docker Swarm, NodeJS, Consul, Traefik, and Let's Encrypt. Diagrams are shown of the overlay networks, architecture, and stacks. The demonstration shows the cluster topology, service scaling, and response time testing. Contact information is provided to learn more about Docker Swarm, container orchestration, and the demonstration environment.

New Docker Features for Orchestration and Containers

- Swarm mode in Docker Engine allows clustering of Docker hosts into a single virtual Docker engine with features like services, scaling, global services, and constraints.

- Services allow deploying replicated applications on a swarm using the docker service command and benefit from features like rolling updates, restarts on failure, and scaling.

- The routing mesh provides load balancing, service discovery, and transparent rerouting of traffic between nodes and containers.

Docker up and running

This document provides an overview and agenda for a Docker presentation. It discusses the Docker architecture including underlying technologies like cgroups and namespaces. It also covers the Docker engine/daemon, API, Compose, networking, Swarm, Machine, security and storage. The presentation includes a demo of these Docker concepts and capabilities.

Automating complex infrastructures with Puppet

Automating complex infrastructures with Puppet, with sipX as an example, as presented at FrOSCon in Sankt Augustin (Germany) on August 21st, 2011.

Automated Java Deployments With Rpm

This document proposes using RPM packages to deploy Java applications to Red Hat Linux systems in a more automated and standardized way. Currently, deployment is a manual multi-step process that is slow, error-prone, and requires detailed application knowledge. The proposal suggests using Maven and Jenkins to build Java applications into RPM packages. These packages can then be installed, upgraded, and rolled back easily using common Linux tools like YUM. This approach simplifies deployment, improves speed, enables easy auditing of versions, and allows for faster rollbacks compared to the current process.

Percona Live 2012PPT: introduction-to-mysql-replication

This document provides an overview of MySQL replication including:

- Replication enables data from a master database to be replicated to one or more slave databases.

- Binary logs contain all writes and schema changes on the master which are used by slaves to replicate data.

- Setting up replication involves configuring the master to log binary logs, granting replication privileges, and configuring slaves to connect to the master and read binary logs from the specified position.

- Commands like START SLAVE are used to control replication and SHOW SLAVE STATUS displays replication status and lag.

Deeper dive in Docker Overlay Networks

The Docker network overlay driver relies on several technologies: network namespaces, VXLAN, Netlink and a distributed key-value store. This talk will present each of these mechanisms one by one along with their userland tools and show hands-on how they interact together when setting up an overlay to connect containers. The talk will continue with a demo showing how to build your own simple overlay using these technologies. Finally, it will show how we can dynamically distribute IP and MAC information to every host in the overlay using BGP EVPN.

Infrastructure Deployment with Docker & Ansible

This is an introduction to Docker & Ansible. It shows how Ansible can be used as an orchestration tool for Docker. Two real-world examples are included, with code examples in a Gist.

Wlan

The document discusses various networking devices used to connect and extend local area networks (LANs). It describes repeaters as devices that receive and regenerate signals to allow them to travel longer distances. Hubs are multiport repeaters that connect multiple nodes to a single device. Bridges operate at the data link layer and logically separate network segments. Switches provide dedicated connections and are multiport bridges that separate collision domains for improved performance.

Lecture 19 dynamic web - java - part 1

Glassfish is an open source application server that supports Java EE technologies like Servlets, JSP, EJB. It uses Grizzly, which is based on Apache Tomcat, as its servlet container and uses Java NIO for improved performance. Key Java EE technologies it supports include Servlets, JSP, EJB, advanced XML technologies.

Viewers also liked

Syslog Centralization Logging with Windows ~ A techXpress Guide

Syslog Centralization Logging with Windows ~ A techXpress Guide ~ Setting up a centralized Syslog Server to get EventLogs from all Windows Hosts for analysis

Insecurity-In-Security version.2 (2011)

Presentation (version.2) from 2011 describing how Security mechanisms placed to secure us are insecure themselves.

Insecurity-In-Security version.1 (2010)

Presentation (version.1) from 2010 describing how Security mechanisms placed to secure us are insecure themselves.

Ethernet Bonding for Multiple NICs on Linux ~ A techXpress Guide

Ethernet Bonding for Multiple NICs on Linux ~ A techXpress Guide ~ for Load Balancing the Network Traffic on Multiple Etheret Cards attached on a Linux Box

DevOps with Sec-ops

it's presentation from a short 5-min talk at 1st DevOpsDays India about security implications and threats in DevOps related tasks

Viewers also liked (7)

Syslog Centralization Logging with Windows ~ A techXpress Guide

Syslog Centralization Logging with Windows ~ A techXpress Guide

Ethernet Bonding for Multiple NICs on Linux ~ A techXpress Guide

Ethernet Bonding for Multiple NICs on Linux ~ A techXpress Guide


Solaris Zones (native & lxbranded) ~ A techXpress Guide

- 1. Express-Guide ~to~ Basic Setup of Solaris Zones: Native Zones & lx-Branded Zones
    by ABK ~ http://www.twitter.com/aBionic
    ::Task Detail::
    ◦ Creating/Managing Solaris Zones
    ◦ Mirroring an existing CentOS Box over a Zone
    ◦ Setting up SVN service over a Zone
    ◦ Setting CIFS over a Zone
    ::Background::
    Solaris Zones are a part of Solaris Container Technology. Zones provide the namespace isolation for containers, implementing virtualization at the O.S. level. In Solaris 10, a container is a zone combined with the Resource Management features of Solaris Resource Manager. Because all zones share a single kernel and no hypervisor sits in between, the performance overhead of this approach is minimal. Resources are used as a 'Dynamic Resource Pool' shared between containers by a 'Fair Share Scheduler'.
    Broadly there are two types of zones:
    ◦ Native Zone: a basic, stripped-down native Solaris O.S. instance. Native zones are further of two types:
        ▪ 'Small Zones' (also known as Sparse Root Zones) share several system directories with the Solaris O.S. (the Global Zone) in non-writable mode
        ▪ 'Big Zones' (also known as Whole Root Zones) have all directories independent
    ◦ lx-Branded Zone: a zone installed from the installer of another O.S.; currently only Linux branded zones, also called lx-zones, are available.

- 2. ::Execution Method::
    (a.) Creating native {small, big} and lx-branded zones
    Setting up the Resource Pool to be used by zones
    ◦ Enabling Resource Pool features
        ▪ # pooladm -e
    ◦ Saving the current resource pool configuration
        ▪ # pooladm -s
    ◦ Listing current pools
        ▪ # pooladm
        ▪ {generally only 'pool_default' is present on a fresh system}
    ◦ Configuring 'pool_default' to use the Fair Share Scheduler
        ▪ # poolcfg -c 'modify pool pool_default (string pool.scheduler="FSS")'
        ▪ # pooladm -c
    ◦ Using the Priority Controller to move all processes under the Fair Share Scheduler
        ▪ # priocntl -s -c FSS -i class TS
        ▪ # priocntl -s -c FSS -i pid 1
    Configuring a Solaris Zone
    ◦ Listing the current zones
        ▪ # zoneadm list -cv
    ◦ Configuring a new zone
        ▪ registering a new zone
            • # zonecfg -z newZoneName
    ◦ There are 3 different types of zones; follow the respective command
        ▪ for creating a native small-zone {with shared directories}
            • zonecfg:newZoneName>create
        ▪ for creating a native big-zone {with independent directories}
            • zonecfg:newZoneName>create -b
        ▪ for creating an lx-branded zone
            • zonecfg:lxZoneName>create -t SUNWlx
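The resource pool steps on this slide can be collected into one script. What follows is a minimal sketch rather than the author's own script: the `run` helper and the `DRY_RUN` variable are hypothetical additions so that the sequence can be previewed on a machine without the Solaris tools, while the commands themselves are the ones listed above.

```shell
#!/bin/sh
# Sketch: enable resource pools and switch pool_default to the Fair Share Scheduler.
# DRY_RUN and run() are illustrative helpers, not part of Solaris.
DRY_RUN=${DRY_RUN:-1}   # 1 = only print the commands, 0 = execute them (as root, in the global zone)

run() {
    if [ "$DRY_RUN" = "1" ]; then
        echo "+ $*"
    else
        "$@"
    fi
}

setup_fss_pool() {
    run pooladm -e                      # enable the resource pools facility
    run pooladm -s                      # save the current configuration
    run poolcfg -c 'modify pool pool_default (string pool.scheduler="FSS")'
    run pooladm -c                      # activate the saved configuration
    run priocntl -s -c FSS -i class TS  # move time-sharing processes to FSS
    run priocntl -s -c FSS -i pid 1     # move init to FSS as well
}

setup_fss_pool
```

With the default `DRY_RUN=1` the script only prints the six commands, each prefixed with `+`; setting `DRY_RUN=0` on the Solaris host would execute them in the same order.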

- 3. ◦ Assigning the zone a location on the HDD to be installed at (still inside the zonecfg session)
        ▪ zonecfg:newZoneName>set zonepath=/export/home/zones/newZoneName
    ◦ Adding a Network Interface Resource to it {'physical' must name an interface of the Global Zone as listed by 'ifconfig -a'; Solaris interface names usually look like e1000g0 or bge0 rather than eth0}
        ▪ zonecfg:newZoneName>add net
        ▪ zonecfg:newZoneName:net>set address=192.168.16.61
        ▪ zonecfg:newZoneName:net>set physical=eth0
        ▪ zonecfg:newZoneName:net>end
    ◦ Assigning a Resource Pool (which should already exist) to it
        ▪ zonecfg:newZoneName>set pool=pool_default
    ◦ Adding a resource control to this Zone
        ▪ zonecfg:newZoneName>add rctl
        ▪ zonecfg:newZoneName:rctl>set name=zone.cpu-shares
        ▪ zonecfg:newZoneName:rctl>add value (priv=privileged,limit=1,action=none)
        ▪ zonecfg:newZoneName:rctl>end
    ◦ Giving it CD-ROM access (required if installing an lx-zone from ISO or CD)
        ▪ zonecfg:newZoneName>add fs
        ▪ zonecfg:newZoneName:fs>set dir=/cdrom
        ▪ zonecfg:newZoneName:fs>set special=/cdrom
        ▪ zonecfg:newZoneName:fs>set type=lofs
        ▪ zonecfg:newZoneName:fs>set options=[nodevices]
        ▪ zonecfg:newZoneName:fs>end
    ◦ Verify, Save and Exit
        ▪ zonecfg:newZoneName>verify
        ▪ zonecfg:newZoneName>commit
        ▪ zonecfg:newZoneName>exit
    ◦ Creating the HDD location for the Zone
        ▪ # mkdir -p /export/home/zones/newZoneName
    ◦ Granting the required permissions to the location
        ▪ # chmod 700 /export/home/zones/newZoneName
    ◦ Confirming the registration of the Zone Configuration
        ▪ # zoneadm list -cv
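The interactive zonecfg session above can also be replayed from a command file with `zonecfg -z <zone> -f <file>`. Below is a minimal sketch of generating such a file for a native small-zone. The function name `write_zone_config`, the shell variables and the `/tmp` output path are assumptions made for illustration; the subcommands are the ones from this slide, with the CD-ROM `fs` resource left out because it is only needed for lx-zone installs from disc.

```shell
#!/bin/sh
# Sketch: write the zonecfg subcommands from this slide into a command file.
# ZONE, ZONEPATH, ADDR and NIC are example values; adjust them for your system.
ZONE=${ZONE:-newZoneName}
ZONEPATH=${ZONEPATH:-/export/home/zones/$ZONE}
ADDR=${ADDR:-192.168.16.61}
NIC=${NIC:-eth0}

write_zone_config() {
    # Prints a sparse-root (small) zone configuration to stdout.
    cat <<EOF
create
set zonepath=$ZONEPATH
add net
set address=$ADDR
set physical=$NIC
end
set pool=pool_default
add rctl
set name=zone.cpu-shares
add value (priv=privileged,limit=1,action=none)
end
verify
commit
EOF
}

write_zone_config > "/tmp/$ZONE.cfg"
echo "wrote /tmp/$ZONE.cfg"
# On the Solaris host, as root:
#   zonecfg -z "$ZONE" -f "/tmp/$ZONE.cfg"
```

Keeping the configuration in a file makes it easy to review or to create several similar zones by changing only the variables at the top.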

- 4. ◦ The listing from 'zoneadm list -cv' should now show the newly created zone, like
        ▪ newZoneName configured /export/home/zones/newZoneName native shared
        ▪ {the last two columns are the brand (native) and the IP type (shared)}
    Installing the already configured zone
    ◦ Installing the zone if it's a Native {small or big} zone
        ▪ # zoneadm -z newZoneName install
    ◦ If it's an lx-branded zone with an O.S. TarBall, automatically creating a ZFS dataset
        ▪ # zoneadm -z newZoneName install -d /tmp/os.tgz
    ◦ If it's an lx-branded zone with an O.S. TarBall, not creating a ZFS dataset
        ▪ # zoneadm -z newZoneName install -x nodataset -d /tmp/os.tgz
    ◦ If no archive path is given then the default is the Disc Drive; when installing from the Disc Drive, you need to enable the volfs service like:
        ▪ # svcadm enable svc:/system/filesystem/volfs:default
        ▪ # svcs | grep volfs
    ◦ If it installed without any error, just check its status using
        ▪ # zoneadm list -cv
    ◦ It should show the zone like
        ▪ newZoneName installed /export/home/zones/newZoneName native shared
    Using the installed Zone
    ◦ Now either make it ready to boot, or boot it directly, which makes it ready by itself
        ▪ # zoneadm -z newZoneName ready
            • 'zoneadm list -cv' should then show the zone like
                ◦ newZoneName ready /export/home/zones/newZoneName native shared
        ▪ # zoneadm -z newZoneName boot
            • 'zoneadm list -cv' should then show the zone like
                ◦ newZoneName running /export/home/zones/newZoneName native shared
    ◦ To log in
        ▪ # zlogin newZoneName
        ▪ Now you are inside the Zone; running 'uname -a' should present you with the zone's node name, typically newZoneName
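The state checks on this slide are done by eye with `zoneadm list -cv`. For scripting, `zoneadm list -cp` prints the same information in a colon-separated form. Here is a minimal sketch of reading the state from it, assuming the field order zoneid:zonename:state:zonepath:uuid:brand:ip-type. The function name `zone_state` and the sample line are made up for illustration.

```shell
#!/bin/sh
# Sketch: extract a zone's state from `zoneadm list -cp` style output.
# Assumed field order: zoneid:zonename:state:zonepath:uuid:brand:ip-type

zone_state() {
    # $1 = zone name; reads parsable listing lines on stdin, prints the state field
    awk -F: -v zone="$1" '$2 == zone { print $3 }'
}

# Sample line (made up for illustration; real input comes from `zoneadm list -cp`)
sample='-:newZoneName:installed:/export/home/zones/newZoneName::native:shared'

state=$(printf '%s\n' "$sample" | zone_state newZoneName)
echo "newZoneName is $state"

# On the Solaris host one might then write, as root:
#   [ "$(zoneadm list -cp | zone_state newZoneName)" = "installed" ] && zoneadm -z newZoneName boot
```

Matching on the exact zone name in the second field avoids false hits when one zone name is a prefix of another, which a plain `grep` on the verbose listing would not guard against.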

- 5. ◦ To log in to the Zone Console, like a remote connect
        ▪ # zlogin -C newZoneName
    ◦ To exit the zone
        ▪ # exit
    ◦ To halt the zone simply use
        ▪ # zoneadm -z newZoneName halt
    ◦ 'zoneadm list -cv' should then show the zone back in the installed state, like
        ▪ newZoneName installed /export/home/zones/newZoneName native shared
    ◦ To reboot the zone simply use
        ▪ # zoneadm -z newZoneName reboot
    ◦ To uninstall the zone
        ▪ # zoneadm -z newZoneName uninstall -F
    (b.) Mirroring an existing CentOS Box over a Zone
    There are two ways to achieve this
    ◦ TarBall the entire distro you want to port to the Zone and use that TarBall to install the Zone.
    ◦ If you already have an lx-branded zone and want to reuse it, use a utility like rsync to sync the files from the Source Machine to the lx-Zone.
    You can also add packages like svn, gcc, make, net-snmp, openssl and CoolStack's (apache2, mysql, php, perl, python, ruby, squid) to the lx-zone; they work great over a Zone.
    (c.) Setting up SVN service over a Zone
    Users connect to the SVN mirror servers. On a mirror, the WebDAV SVN module serves content from the local system and sends commits to the main server. The main server then pushes each commit to the mirrors using 'svnsync' over a protected link that only the main server can write to.
    ◦ Install the CollabNet SVN client & server binaries {available at 'http://www.collab.net/downloads/subversion/solaris.html'}

- 6. ◦ Create symlinks for the CollabNet modules as
        ▪ # ln -s /opt/CollabNet_Subversion/modules/mod_dav_svn.so /etc/httpd/modules/mod_dav_svn.so
        ▪ # ln -s /opt/CollabNet_Subversion/modules/mod_authz_svn.so /etc/httpd/modules/mod_authz_svn.so
    ◦ Add the lines below to 'httpd.conf' under the Apache2 directory
        ▪ LoadModule dav_svn_module /etc/httpd/modules/mod_dav_svn.so
          LoadModule authz_svn_module /etc/httpd/modules/mod_authz_svn.so
          <Location /someproject>
            DAV svn
            SVNPath /repos/svn/repos/someproject
            AuthzSVNAccessFile /repos/svn/access/someproject/svn_access.conf
            AuthType Basic
            AuthName "Active Directory LDAP Authentication"
            AuthBasicProvider ldap
            AuthzLDAPAuthoritative off
            AuthLDAPBindDN user@adserver.thoughtworks.com
            AuthLDAPBindPassword somePassword
            AuthLDAPURL "ldap://adserver.company.com:389/ou=Principal,dc=dcString1,dc=dcString2?SAMAccountName?sub?(&(objectClass=user))"
            Require valid-user
            SVNPathAuthz off
          </Location>
    ◦ Reload the httpd service
    ◦ Add the following lines to '/repos/svn/access/someproject/svn_access.conf' {group definitions must sit under a [groups] section}
        ▪ [groups]
          can_write_group=aduserA,aduserB,aduserC
          read_only_group=aduserD,aduserE,aduserF
          no_access_group=aduserG,aduserH,aduserJ
          [repository:/]
          @can_write_group=rw
          @read_only_group=r
          @no_access_group=
    ◦ Create a repository as follows:
        ▪ svnadmin create /repos/svn/repos/someproject
        ▪ change permissions as follows
        ▪ chmod -R g+w /repos/svn/repos/someproject
        ▪ chown -R apache:apache /repos/svn/repos/someproject
    ◦ Similarly, you can set up a mirror server with the configuration given at the link above.
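The mirror setup itself is not spelled out on the slides. The following is a minimal sketch of how a mirror is commonly seeded with `svnsync`, not the author's configuration: the two URLs are hypothetical, the function name `mirror_commands` is invented for illustration, and the script only prints the commands so that they can be reviewed before being run on the mirror host.

```shell
#!/bin/sh
# Sketch: print the commands that seed a read-only mirror with svnsync.
# MASTER_URL, MIRROR_URL and MIRROR_REPO are hypothetical example values.
MASTER_URL=${MASTER_URL:-http://svn-master.example.com/someproject}
MIRROR_URL=${MIRROR_URL:-http://svn-mirror.example.com/someproject}
MIRROR_REPO=${MIRROR_REPO:-/repos/svn/repos/someproject}

mirror_commands() {
    # Line 1 creates the empty mirror repository.
    # Lines 2-3 install a pre-revprop-change hook that exits 0, because svnsync
    # keeps its bookkeeping in revision properties (restrict it to the sync user in practice).
    # Lines 4-5 link the mirror to the master and copy all existing revisions.
    cat <<EOF
svnadmin create $MIRROR_REPO
printf '#!/bin/sh\nexit 0\n' > $MIRROR_REPO/hooks/pre-revprop-change
chmod +x $MIRROR_REPO/hooks/pre-revprop-change
svnsync initialize $MIRROR_URL $MASTER_URL
svnsync synchronize $MIRROR_URL
EOF
}

mirror_commands
```

After the initial copy, the main server would rerun `svnsync synchronize` for each mirror, for example from a post-commit hook. The write-through behaviour described in section (c.) is usually configured on the mirror with mod_dav_svn's `SVNMasterURI` directive, available from Subversion 1.5.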

- 7. (d.) Setting CIFS over a Zone
    Initial reading indicated that CIFS is not possible in local zones; only the global zone can support it. So instead I set up a SAMBA server on a Solaris Zone and implemented SWAT (Samba Web Admin Tool) for easy configuration.
    ◦ On Solaris 10, SAMBA turned out to be really easy to configure
        ▪ # svcs samba wins swat
        ▪ # svcadm enable samba
        ▪ # svcadm enable wins
        ▪ # svcadm enable swat
    ◦ Simply browsing to http://samba_Zone_IPaddress:901/ presents a nice SWAT GUI to configure the SAMBA service on that zone. To get started, you need to
        ▪ > select 'Shares', add a new share with the proper configuration
        ▪ > select 'Users', to add Users
        ▪ > restart the services from the UI itself
        ▪ > now try accessing this share from Windows as a normal Windows Share, using a User you created
    ::Tools/Technology Used::
    ◦ Solaris Zones: http://www.solarisinternals.com/wiki/index.php/Zones
    ◦ CoolStack Software Bundles {now superseded by WebStack}: http://hub.opensolaris.org/bin/view/Project+webstack/sunwebstack
    ◦ Rsync: http://en.wikipedia.org/wiki/Rsync
    ◦ SVN: http://subversion.apache.org/
    ◦ Apache: http://www.apache.org/
    ◦ CIFS: http://msdn.microsoft.com/en-us/library/aa302188.aspx
    ◦ Samba: http://www.samba.org/
    ◦ SWAT: http://linux.die.net/man/8/swat
    ::Inference::
    Solaris Zones is a highly under-used and over-capable technology.

- 8. Due to its minimal-overhead approach to Virtualization, it is in my opinion the best option for Virtualization of Linux Boxes. There is still great scope for further development of this technology.
    ::Troubleshooting/Updates::
    Problem: The Apache mod_dav and mod_dav_svn modules were failing to integrate with the SVN implementation.
    Solution: Initially I was using CoolStack's Software Bundle of Apache+PHP+MySQL on a Native Small-Zone for its ease of use, but found that its implementation was what raised the incompatibility issue. So I created a Native Big-Zone and used the standard Apache release, and it worked.