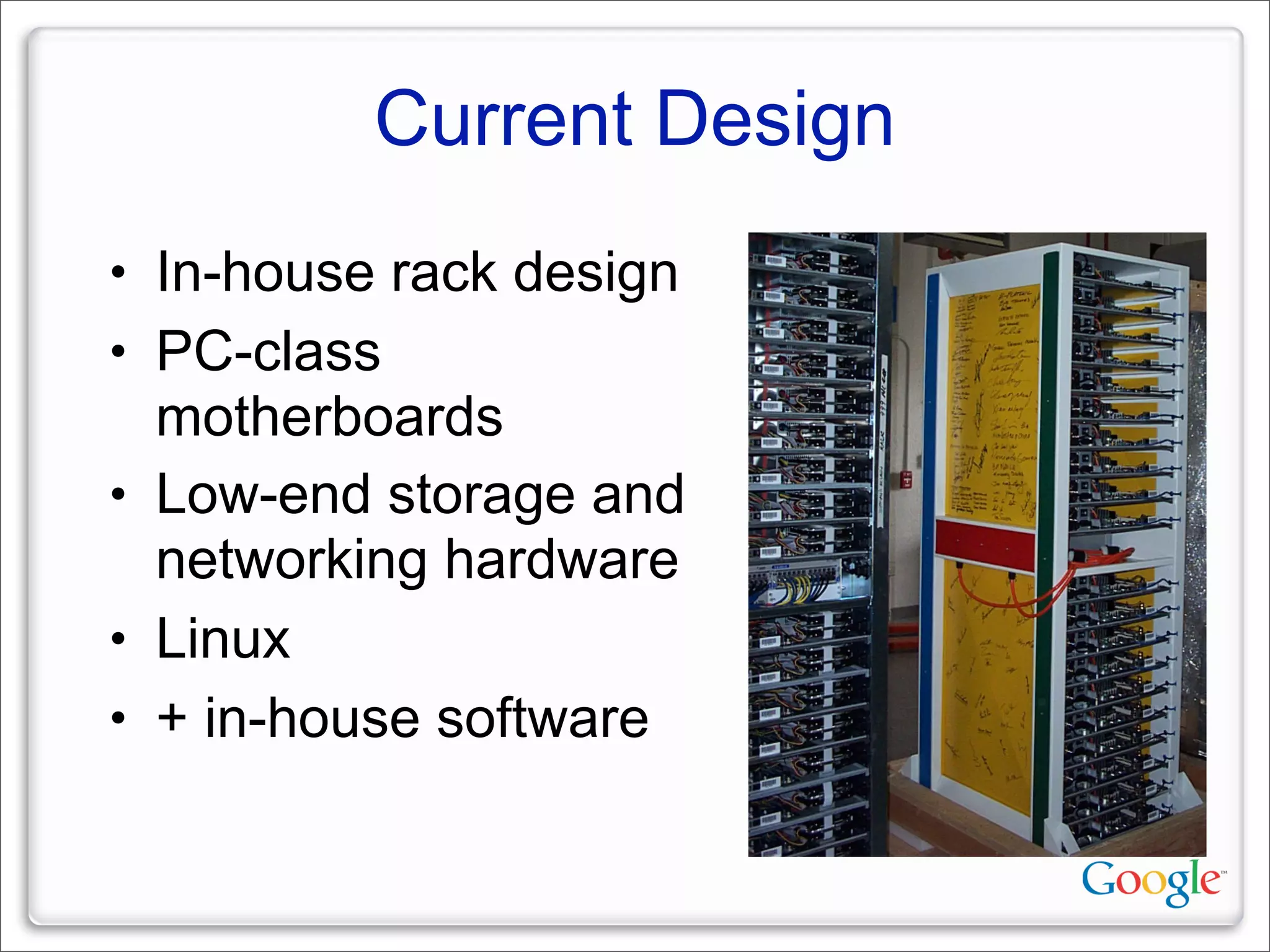

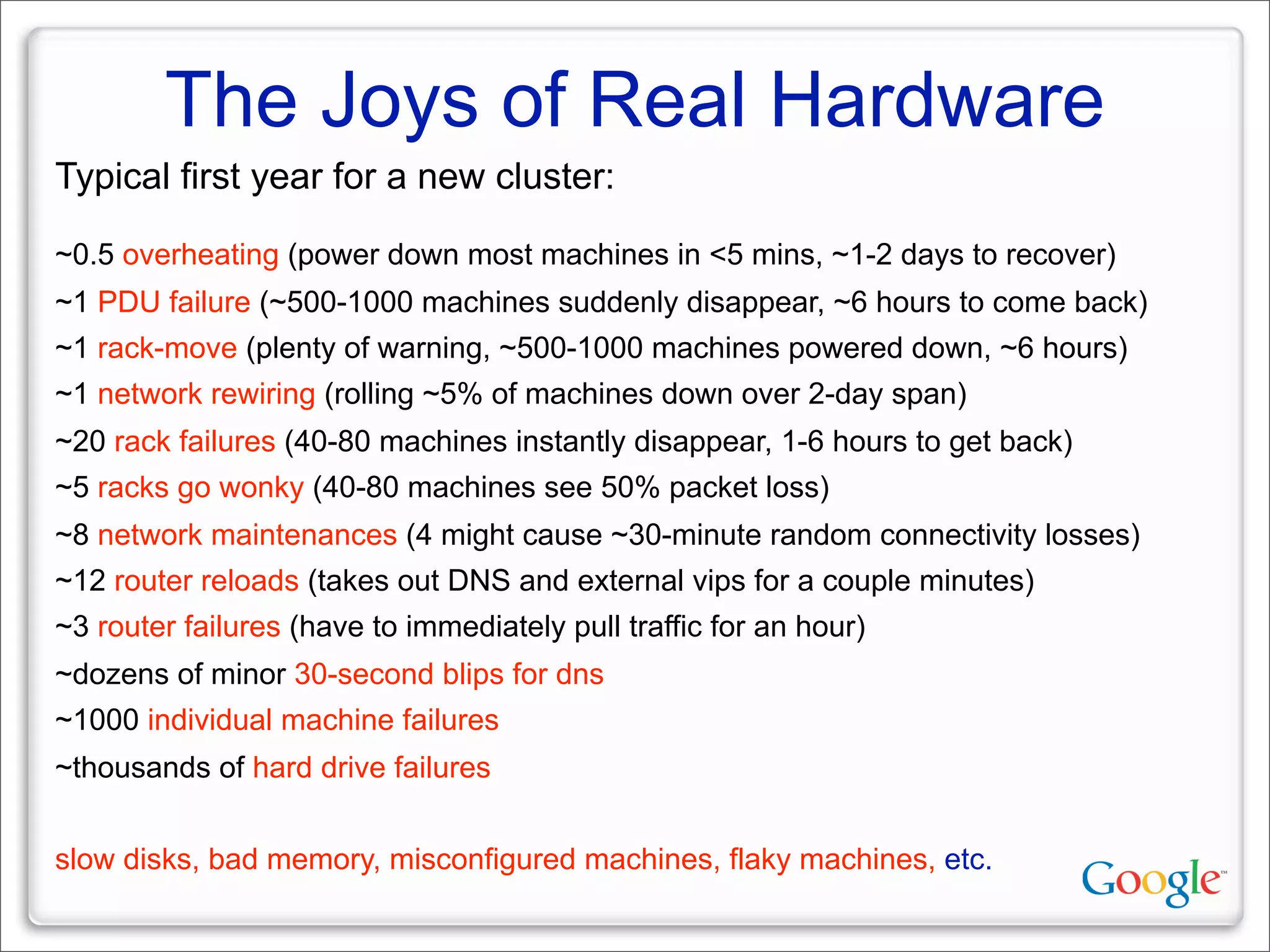

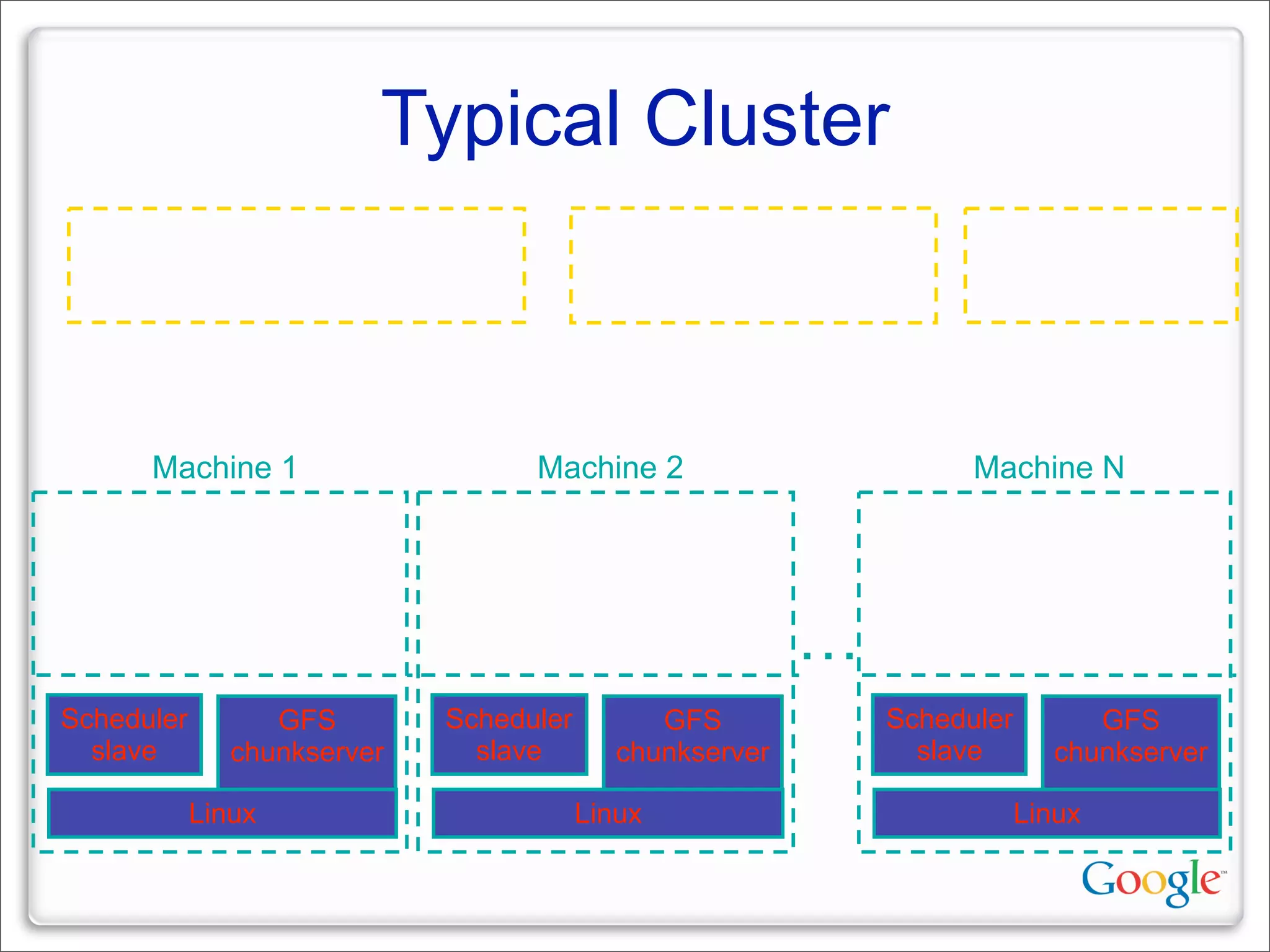

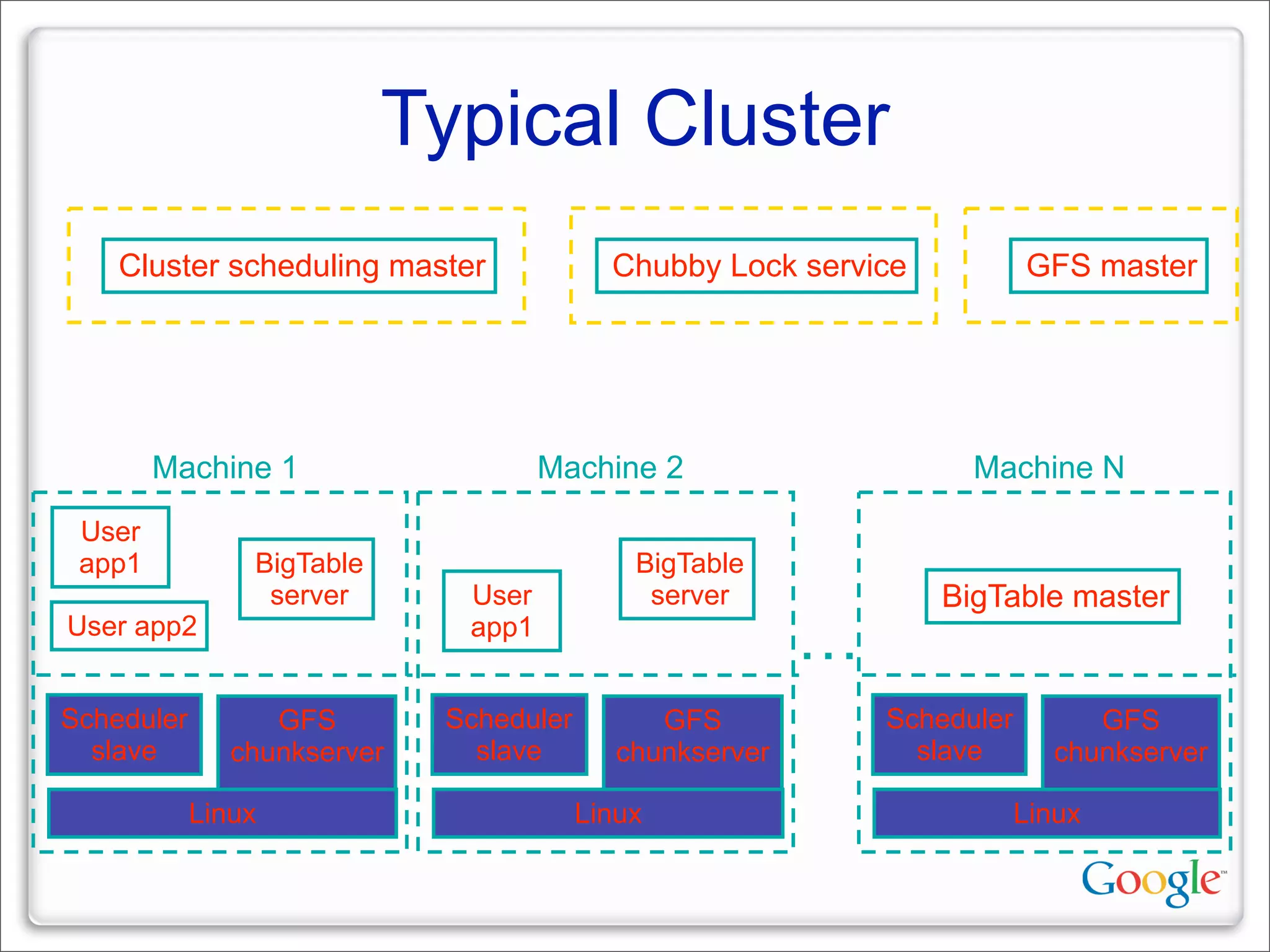

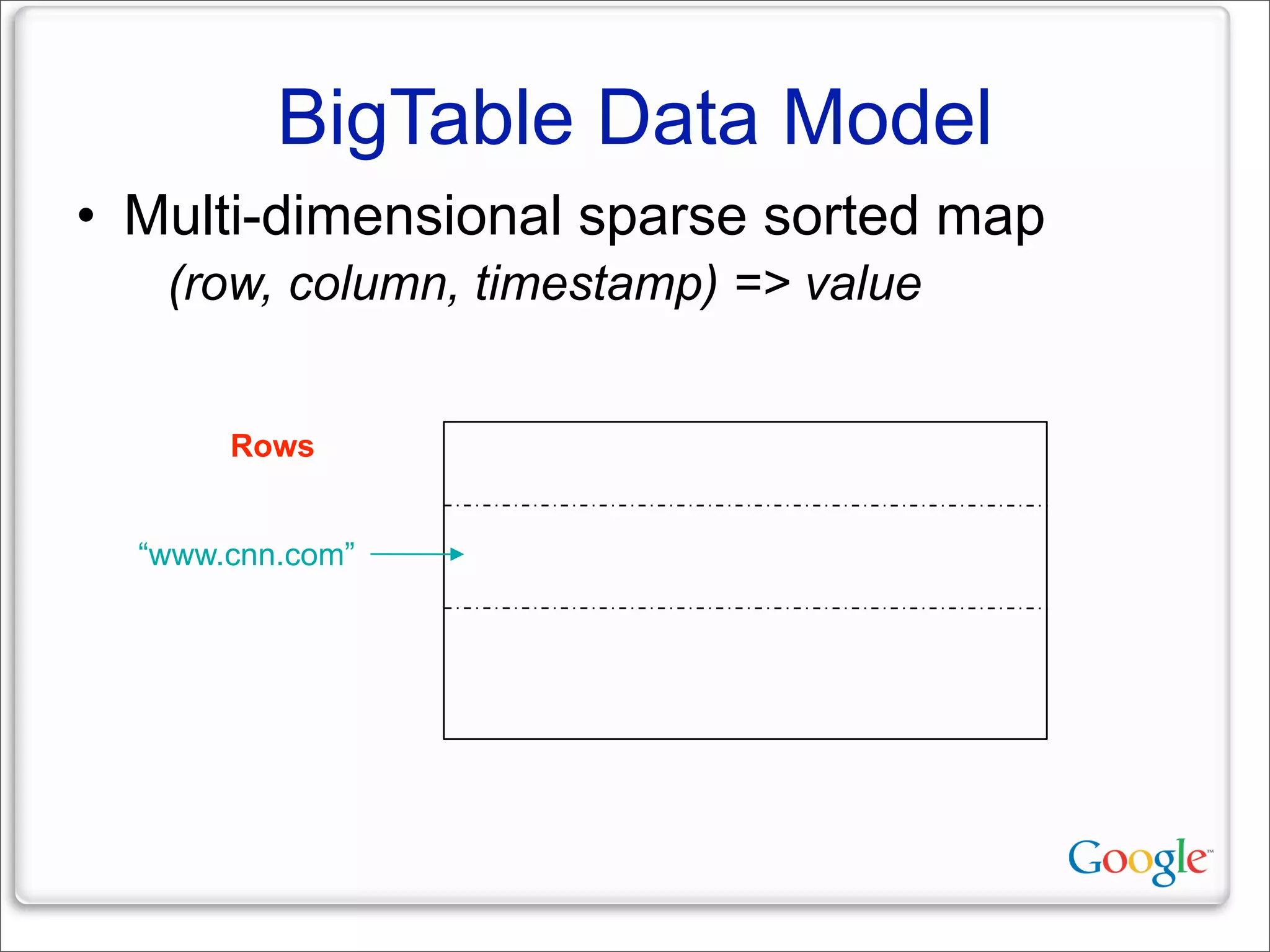

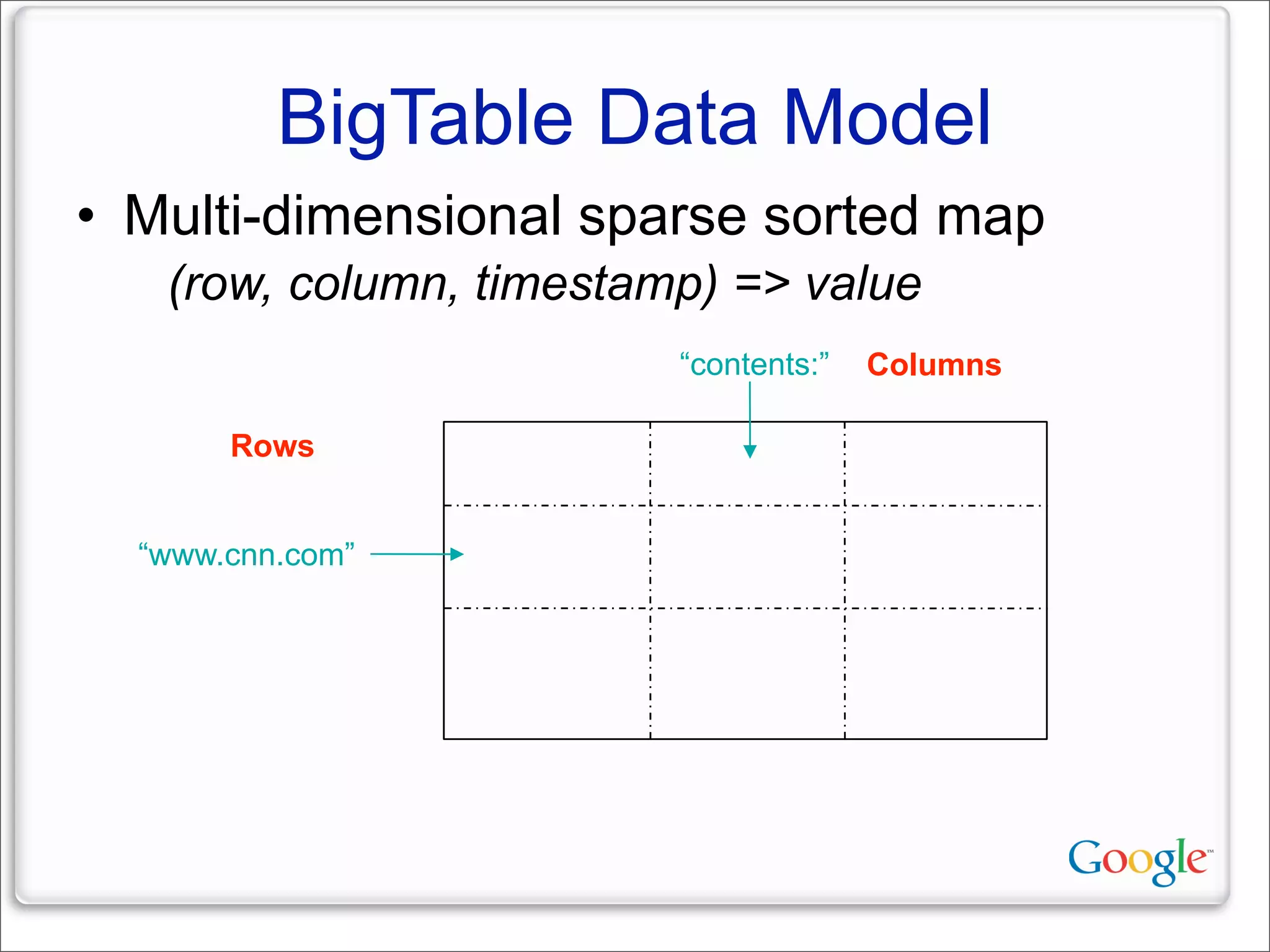

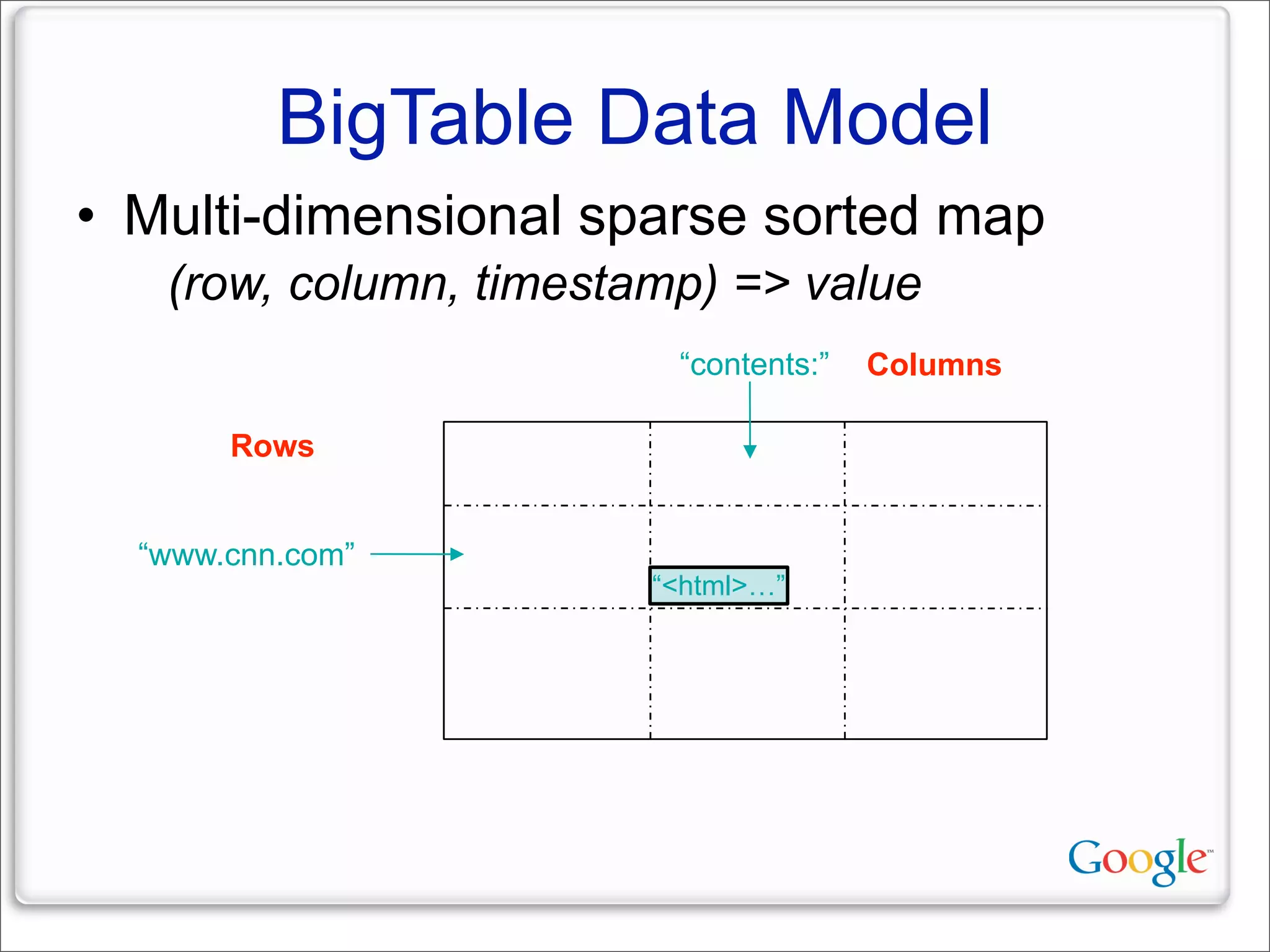

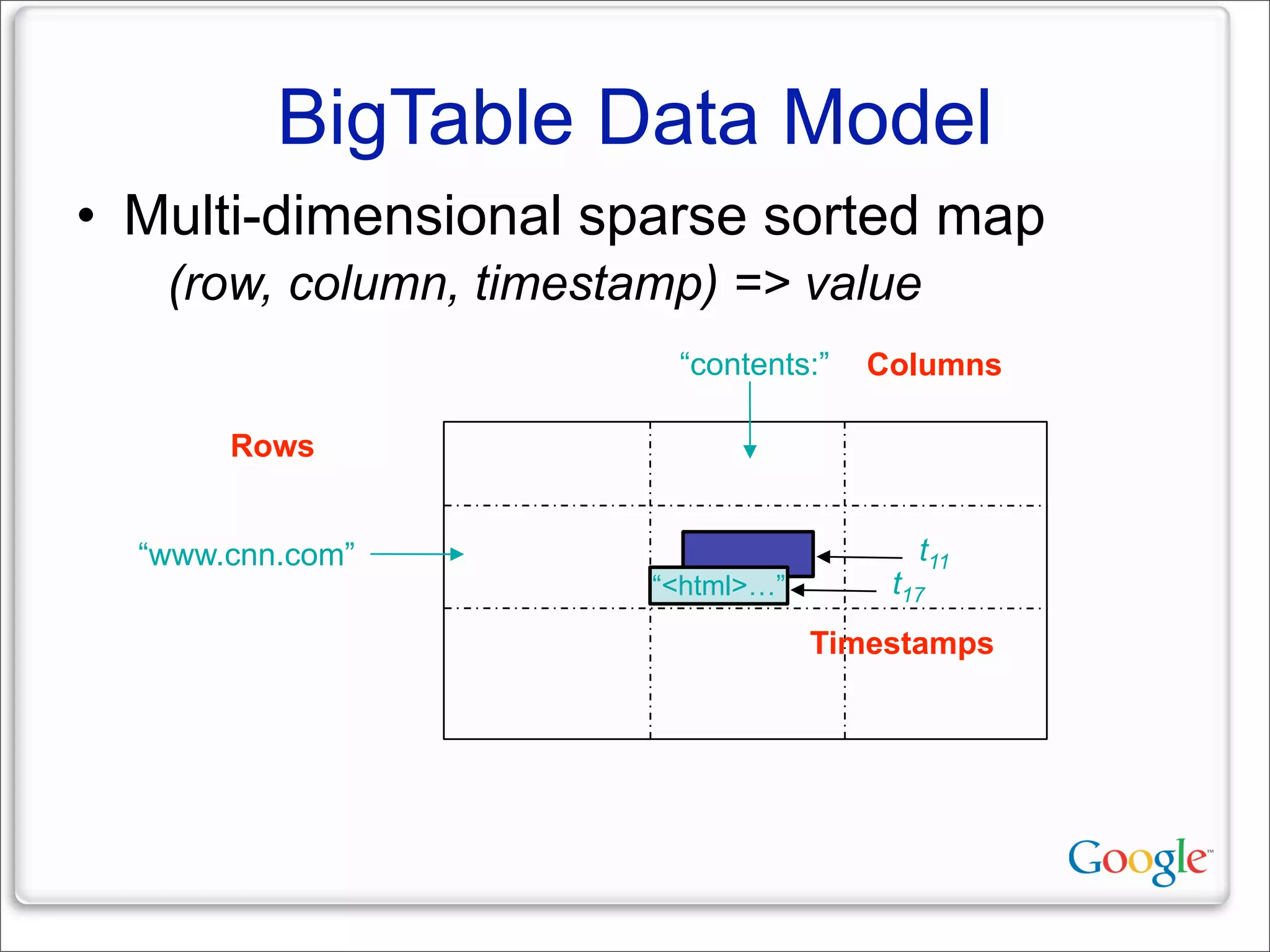

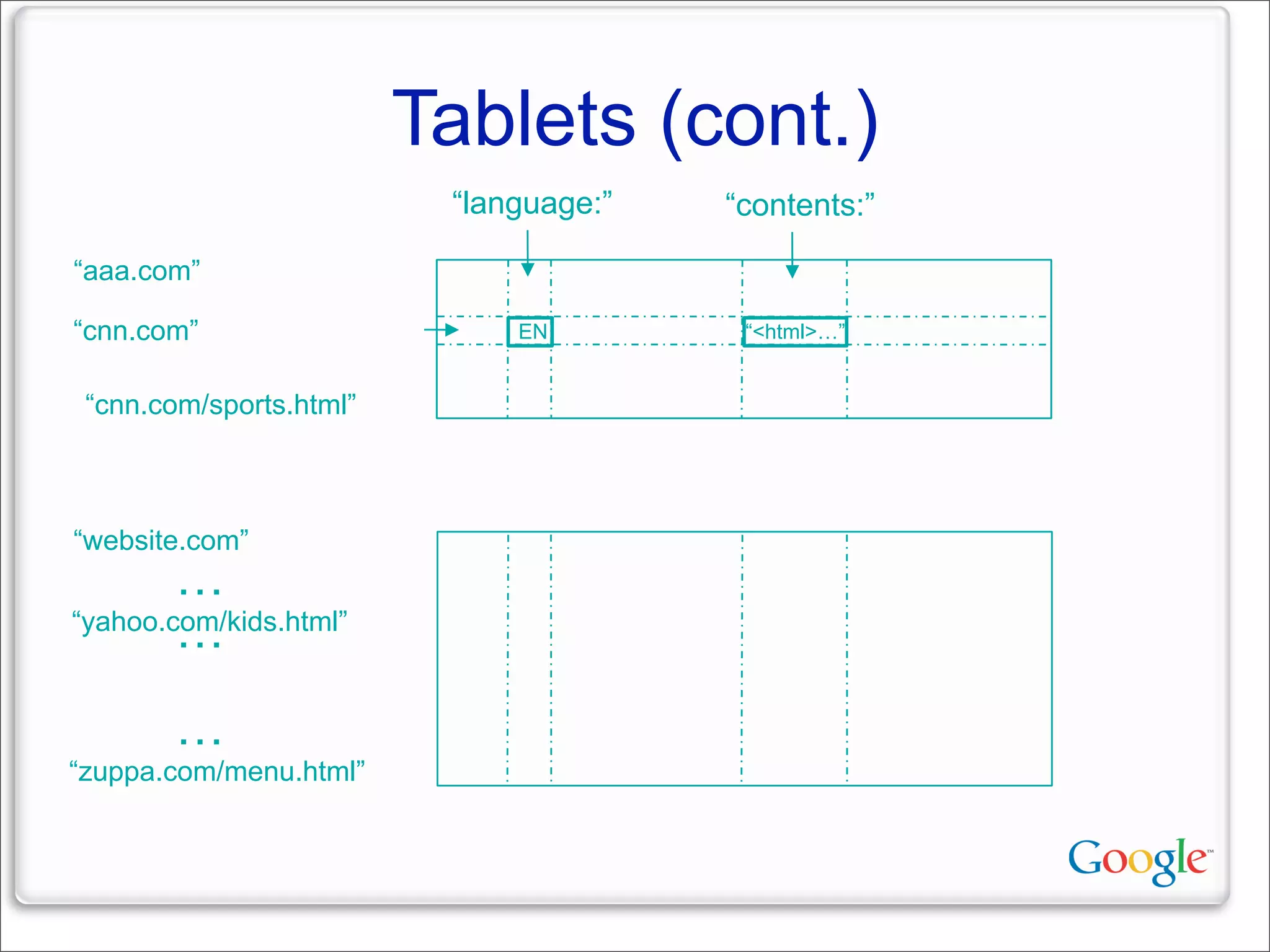

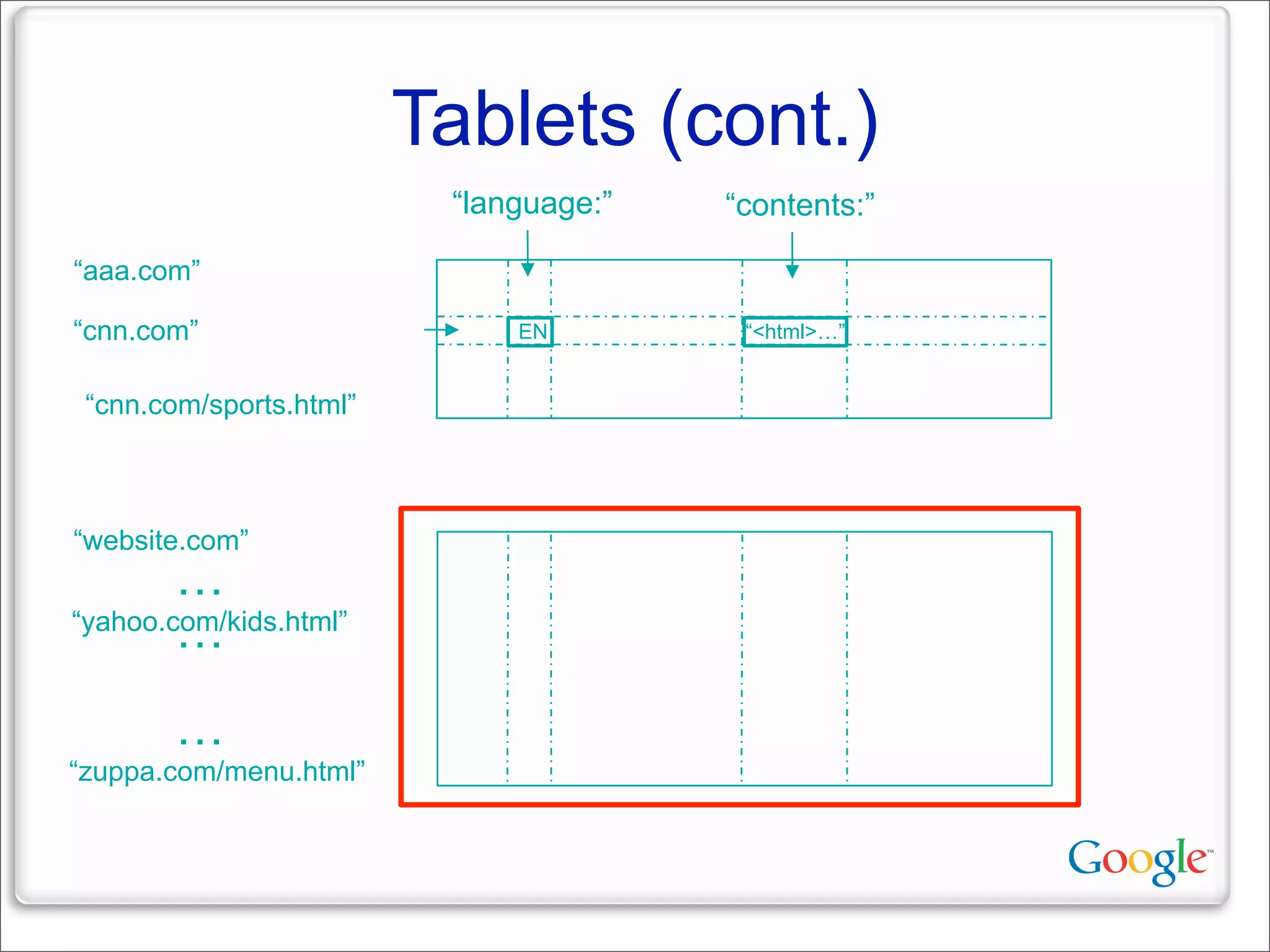

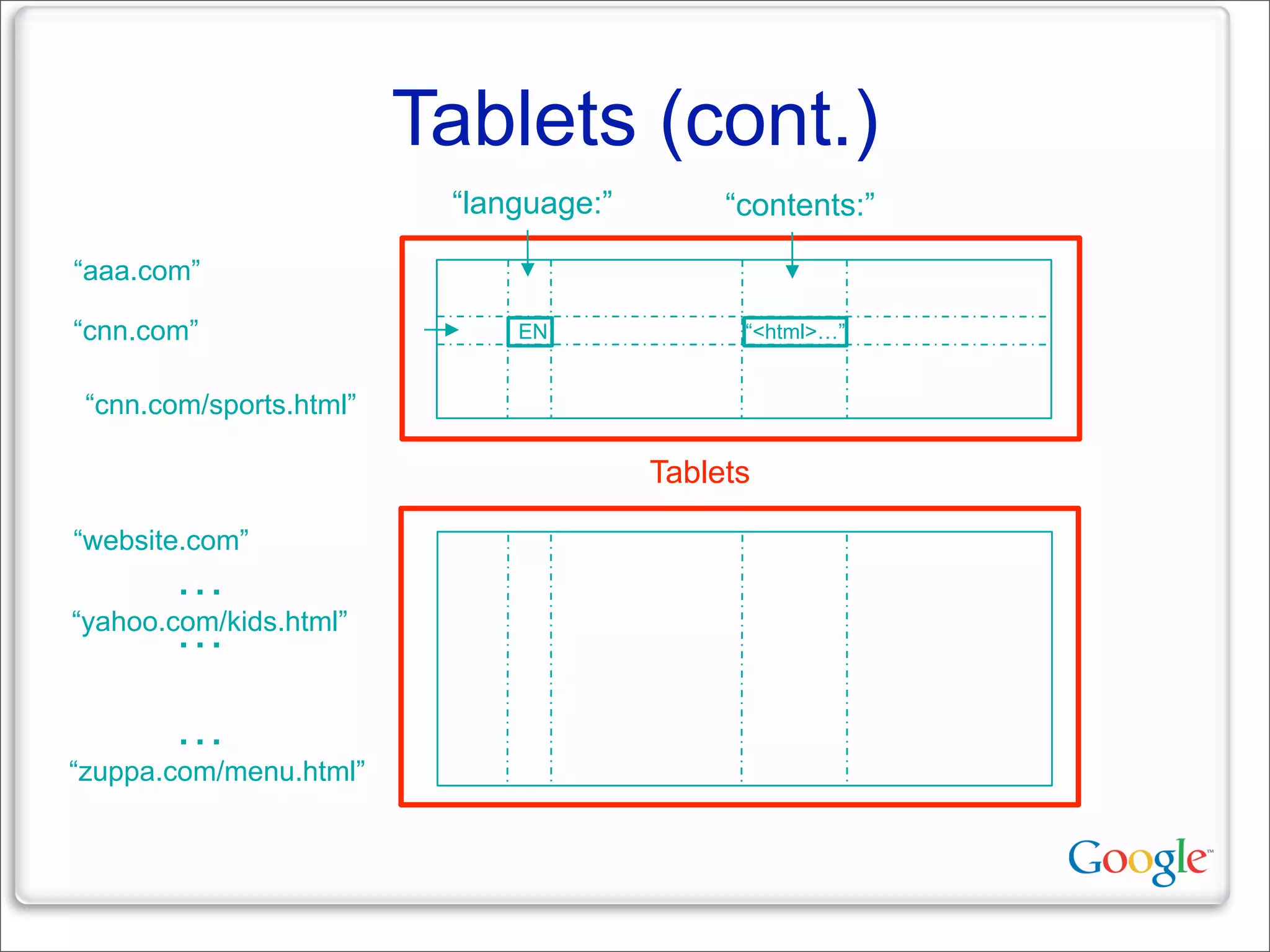

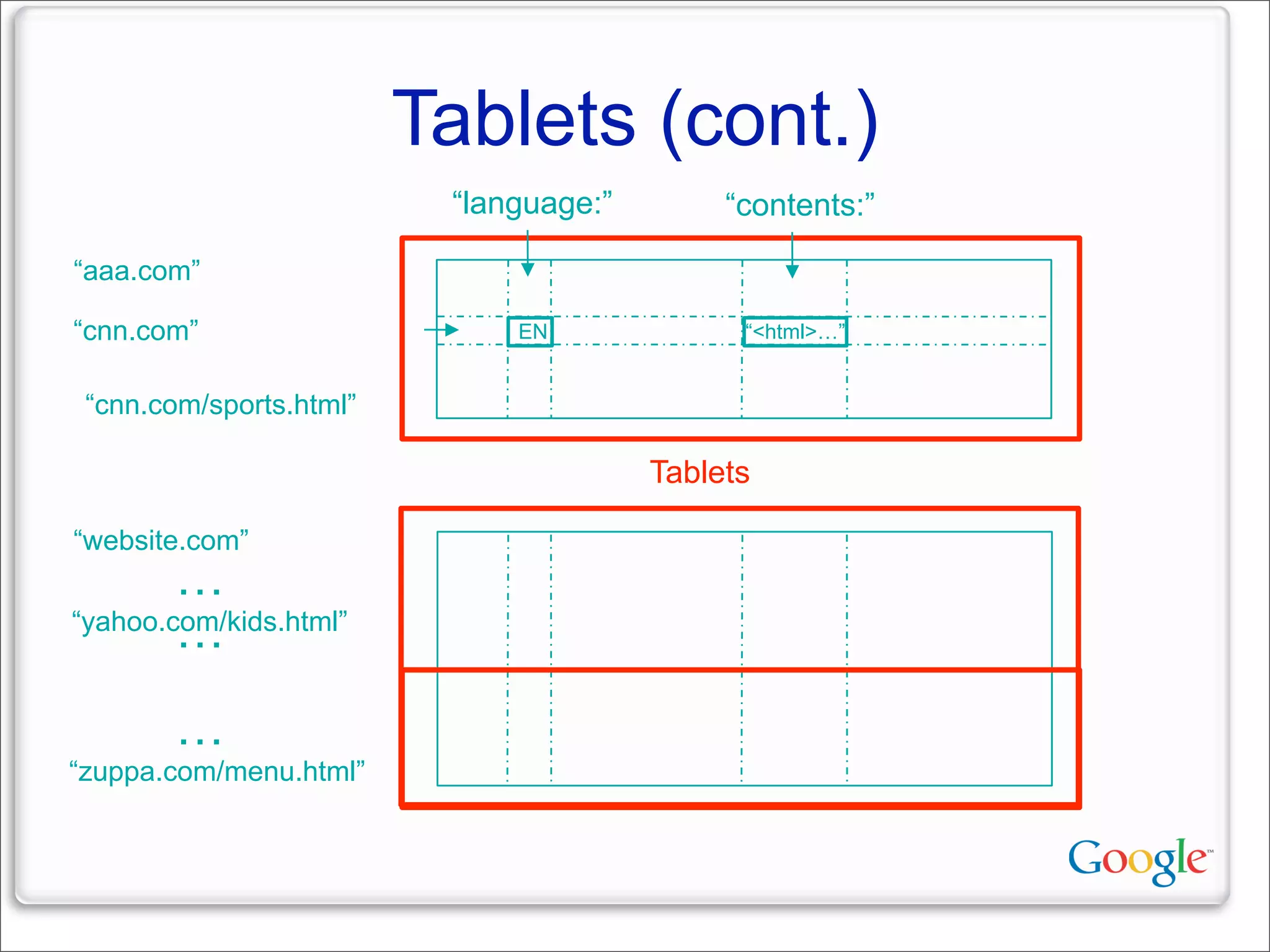

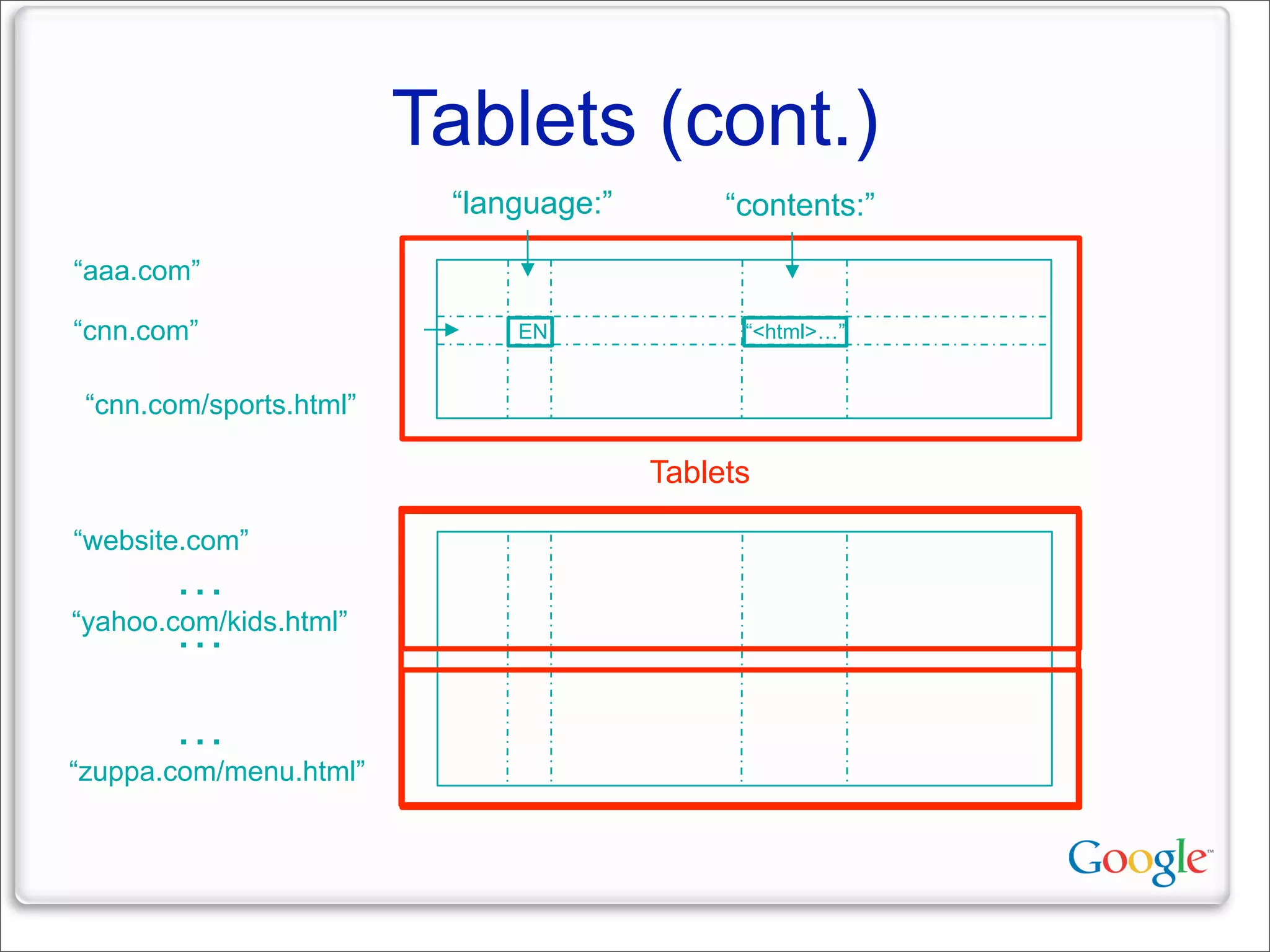

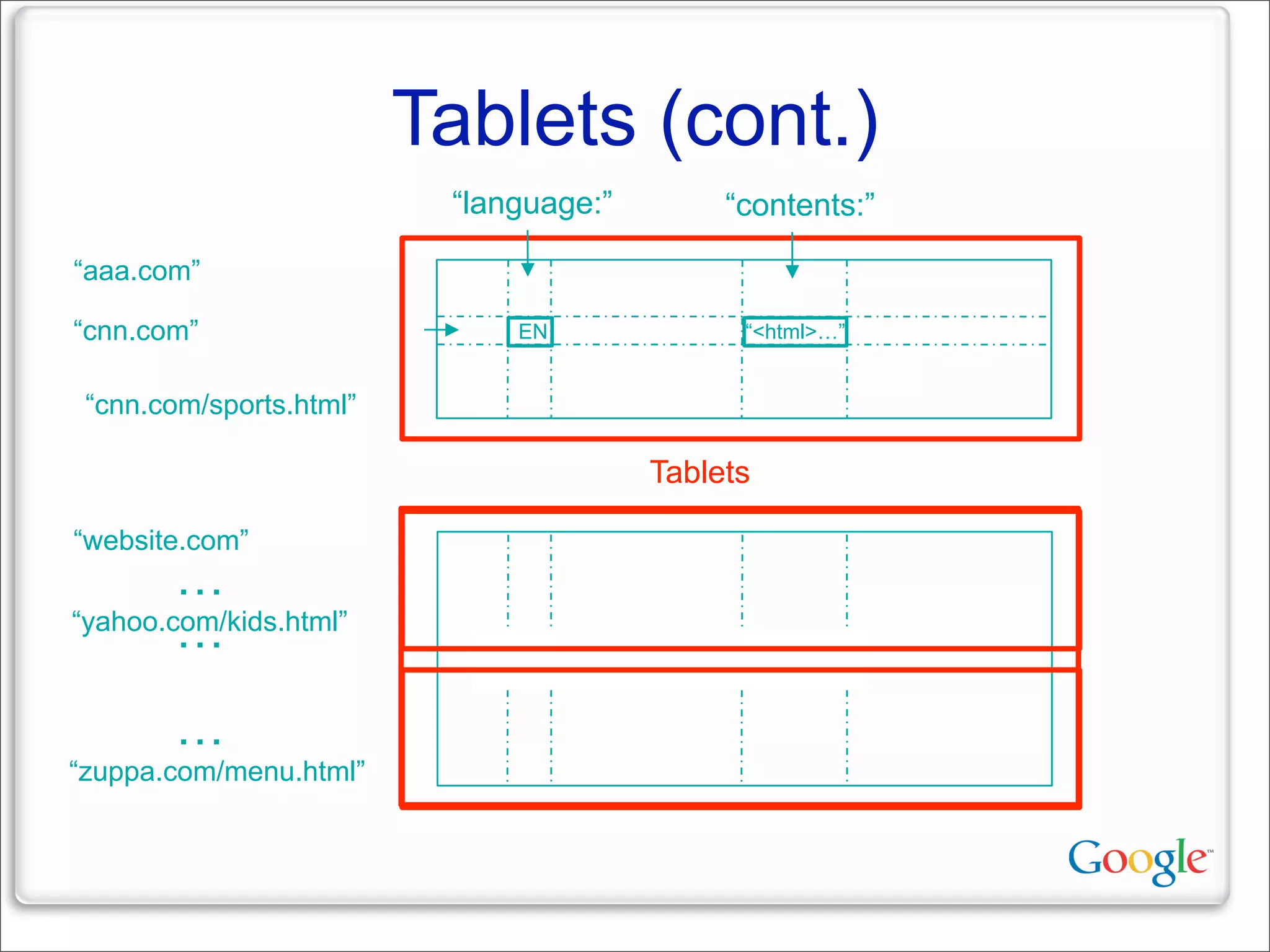

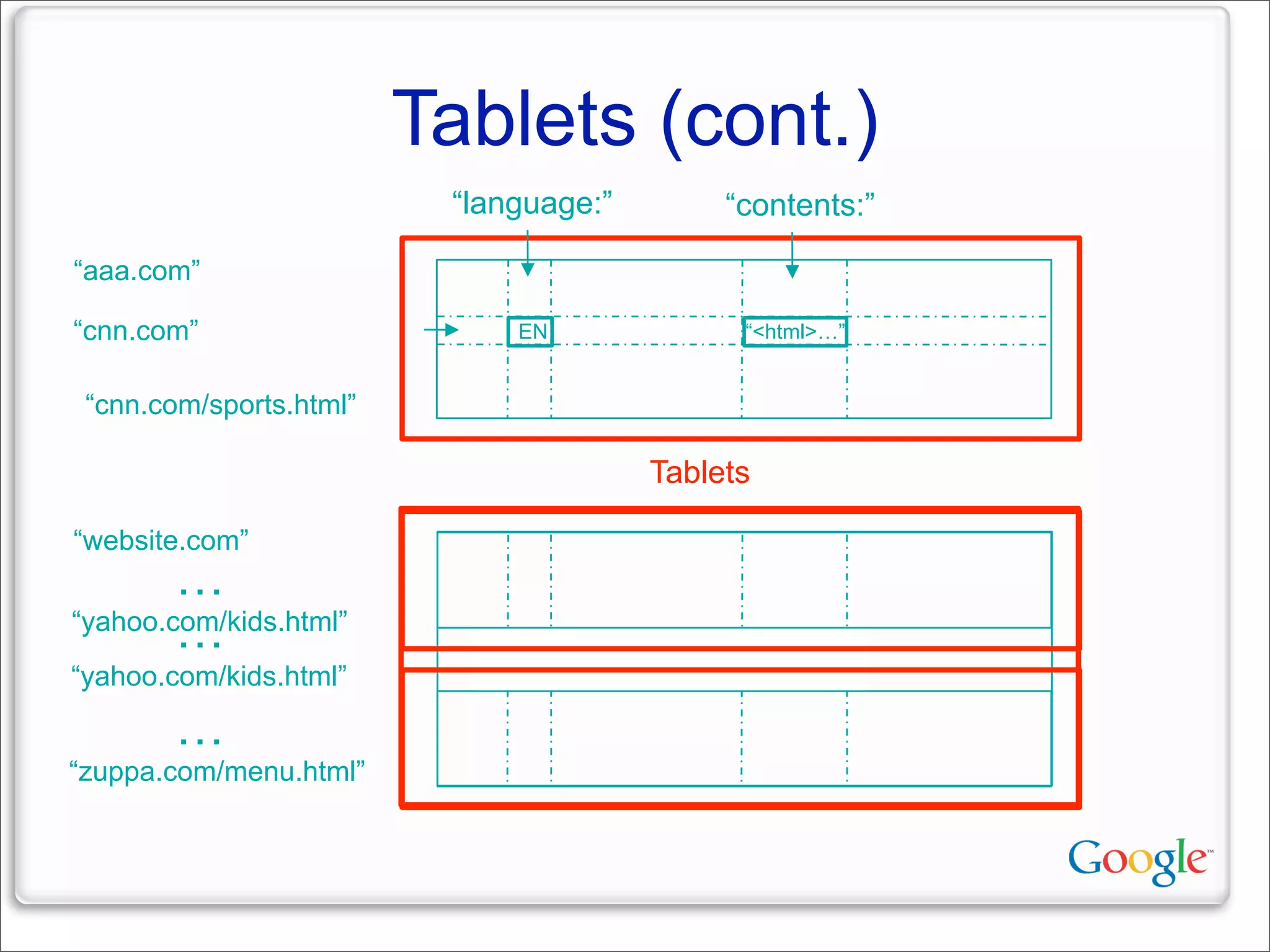

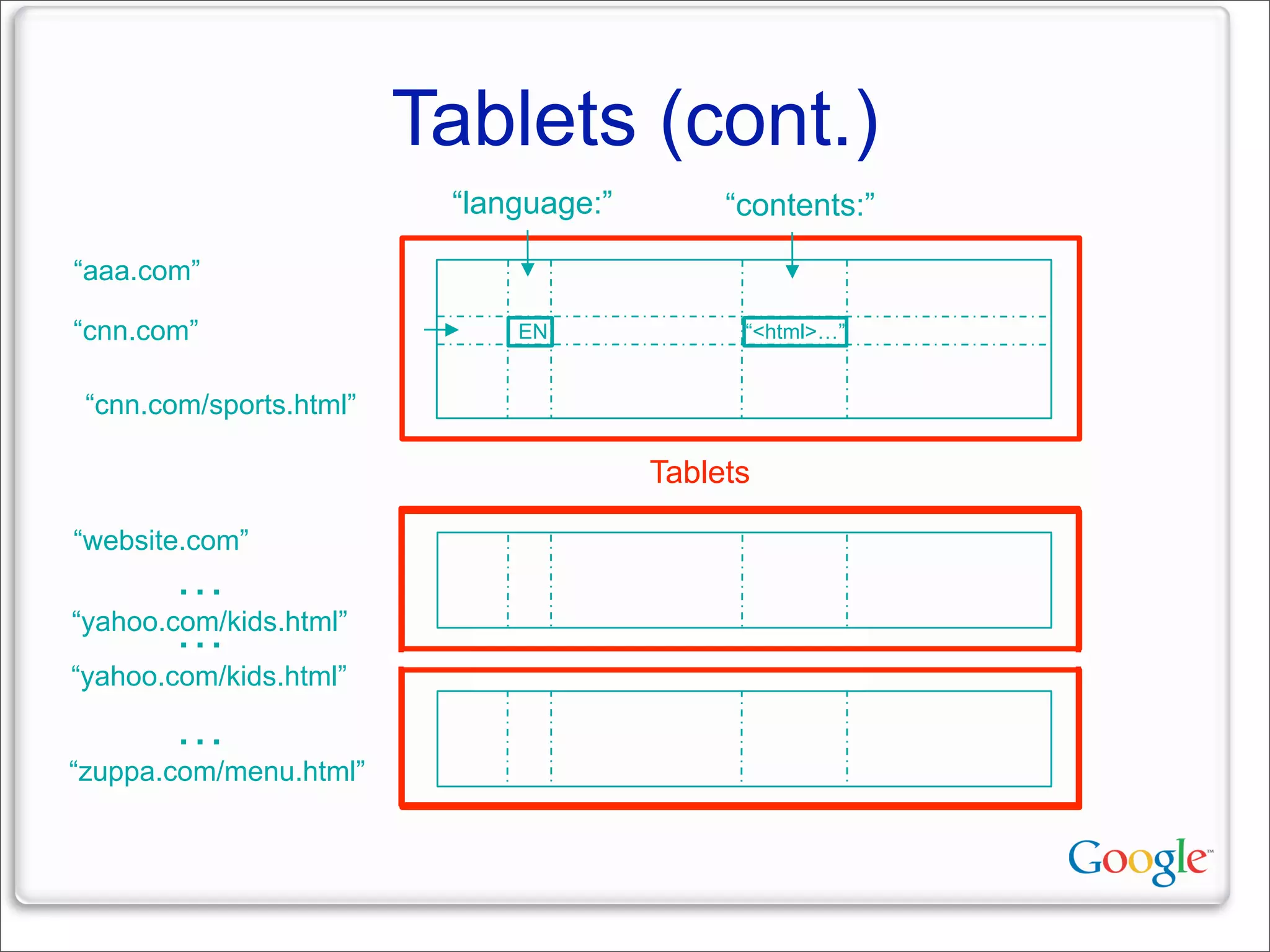

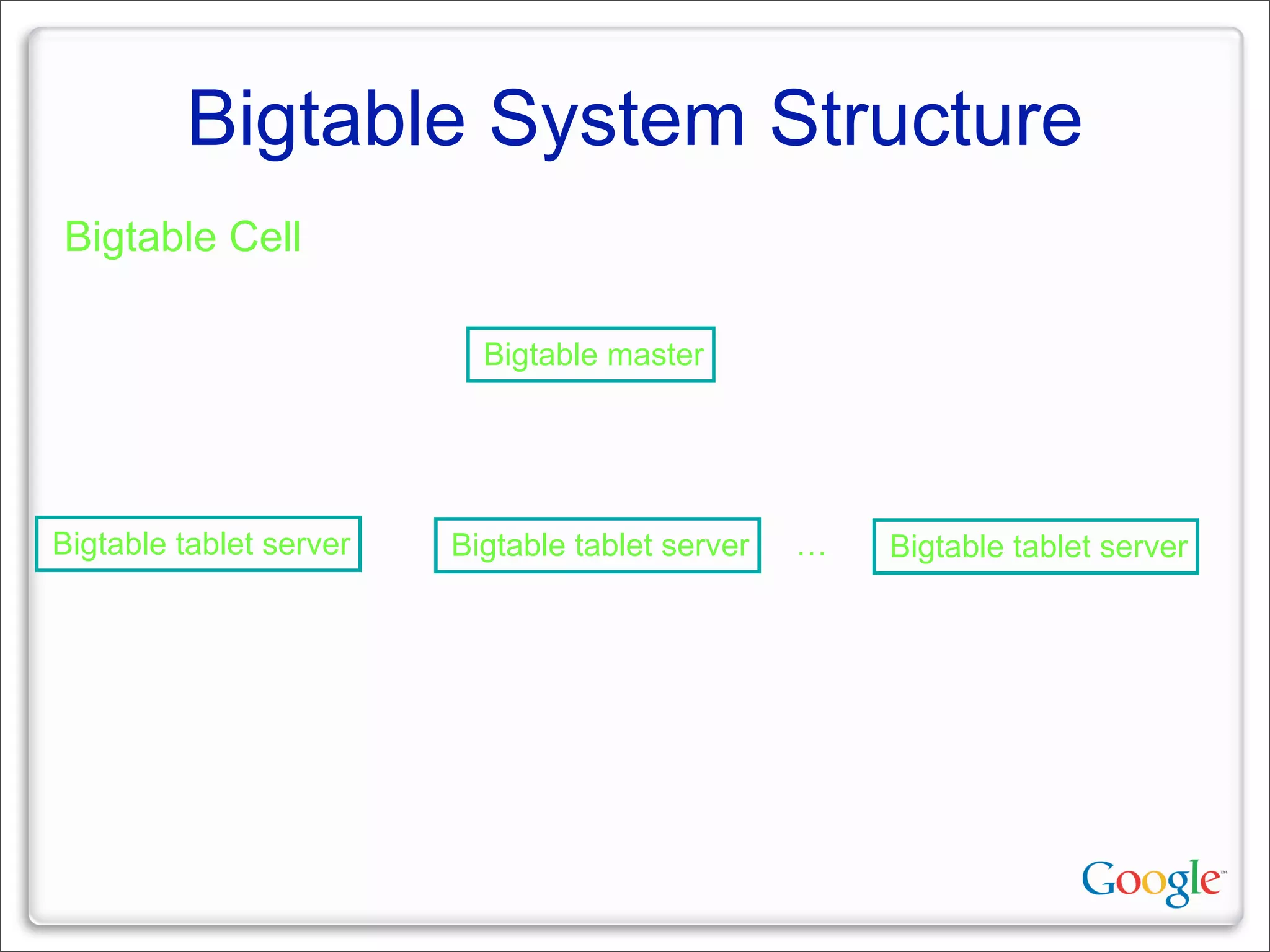

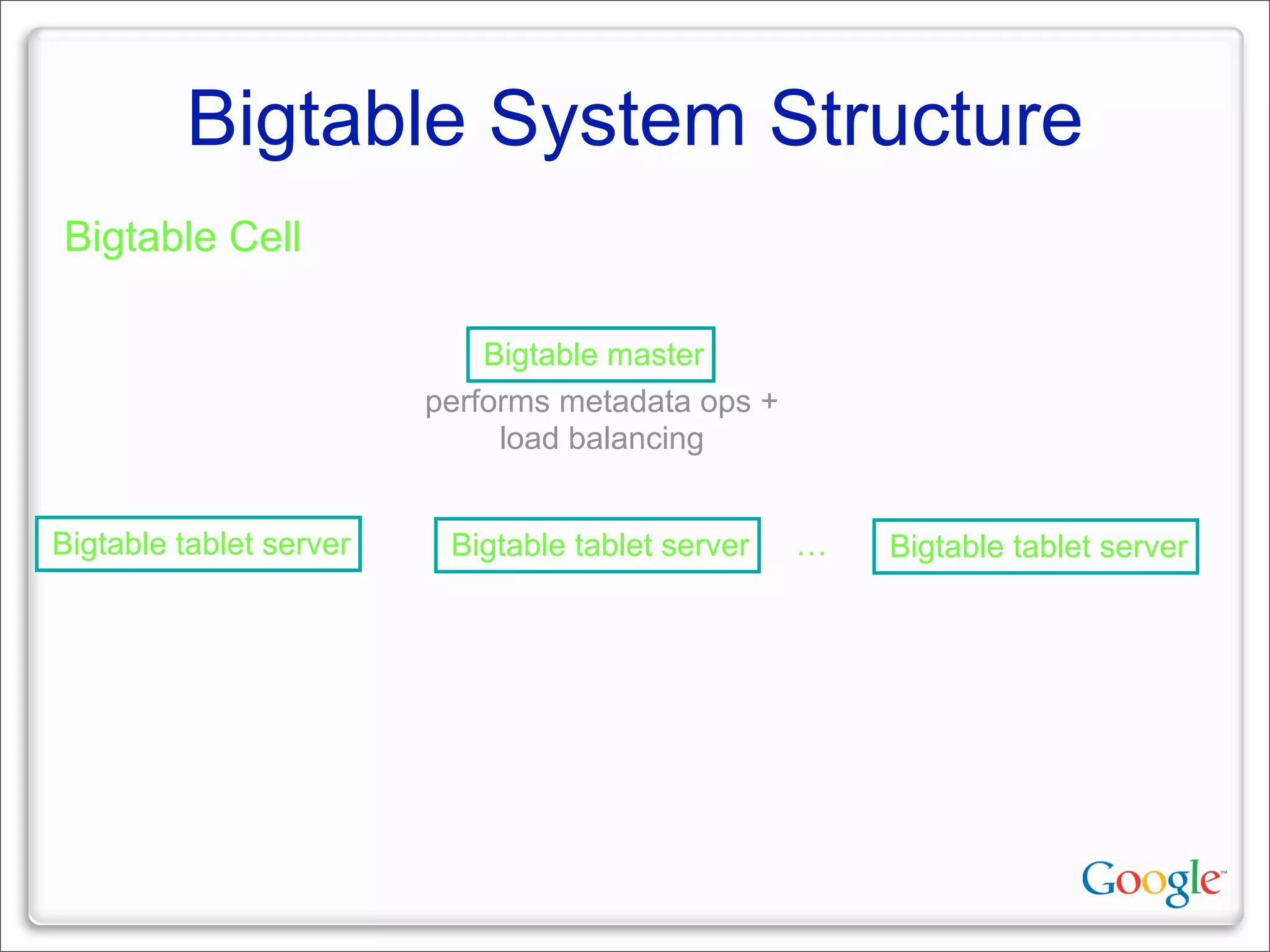

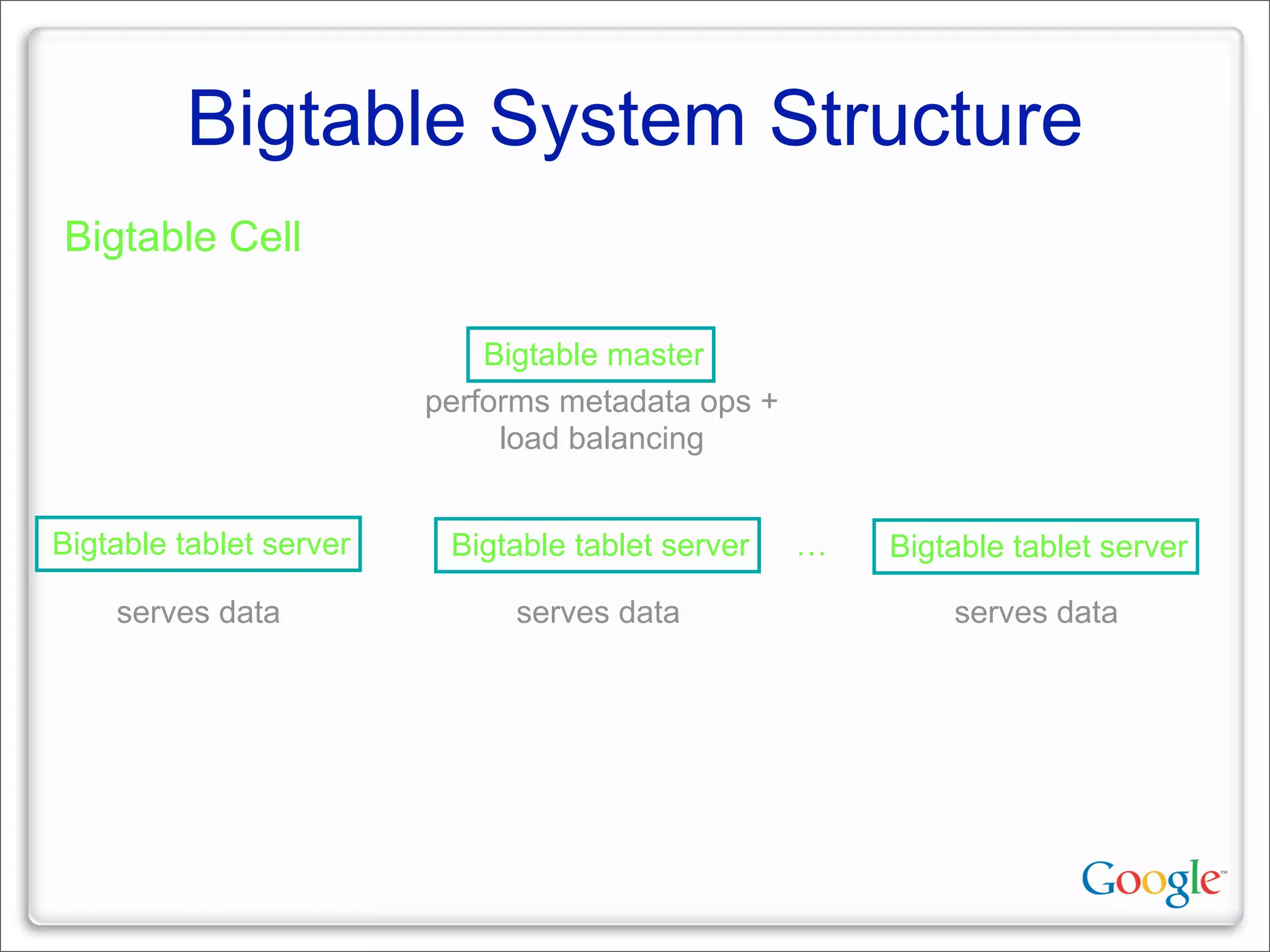

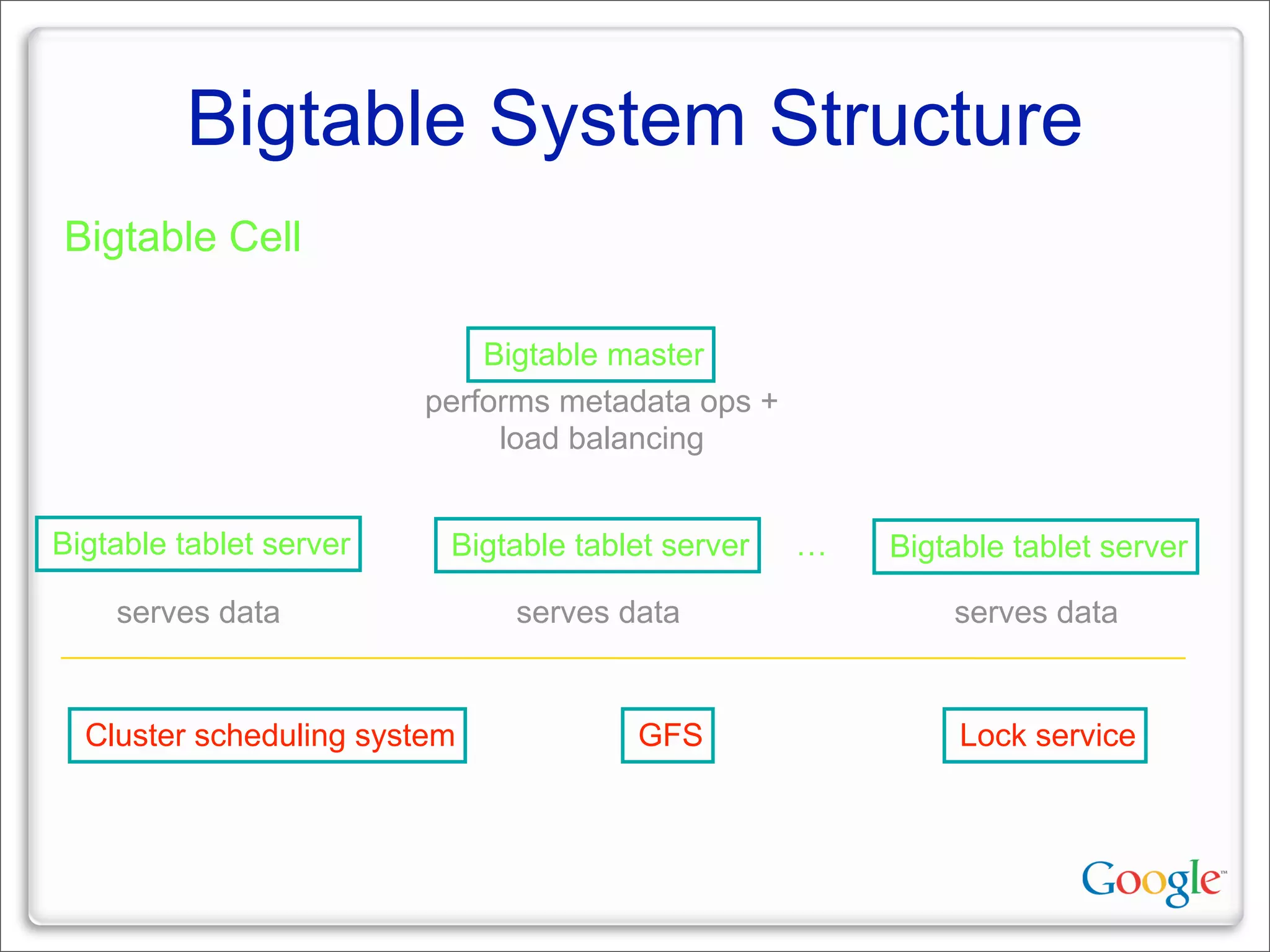

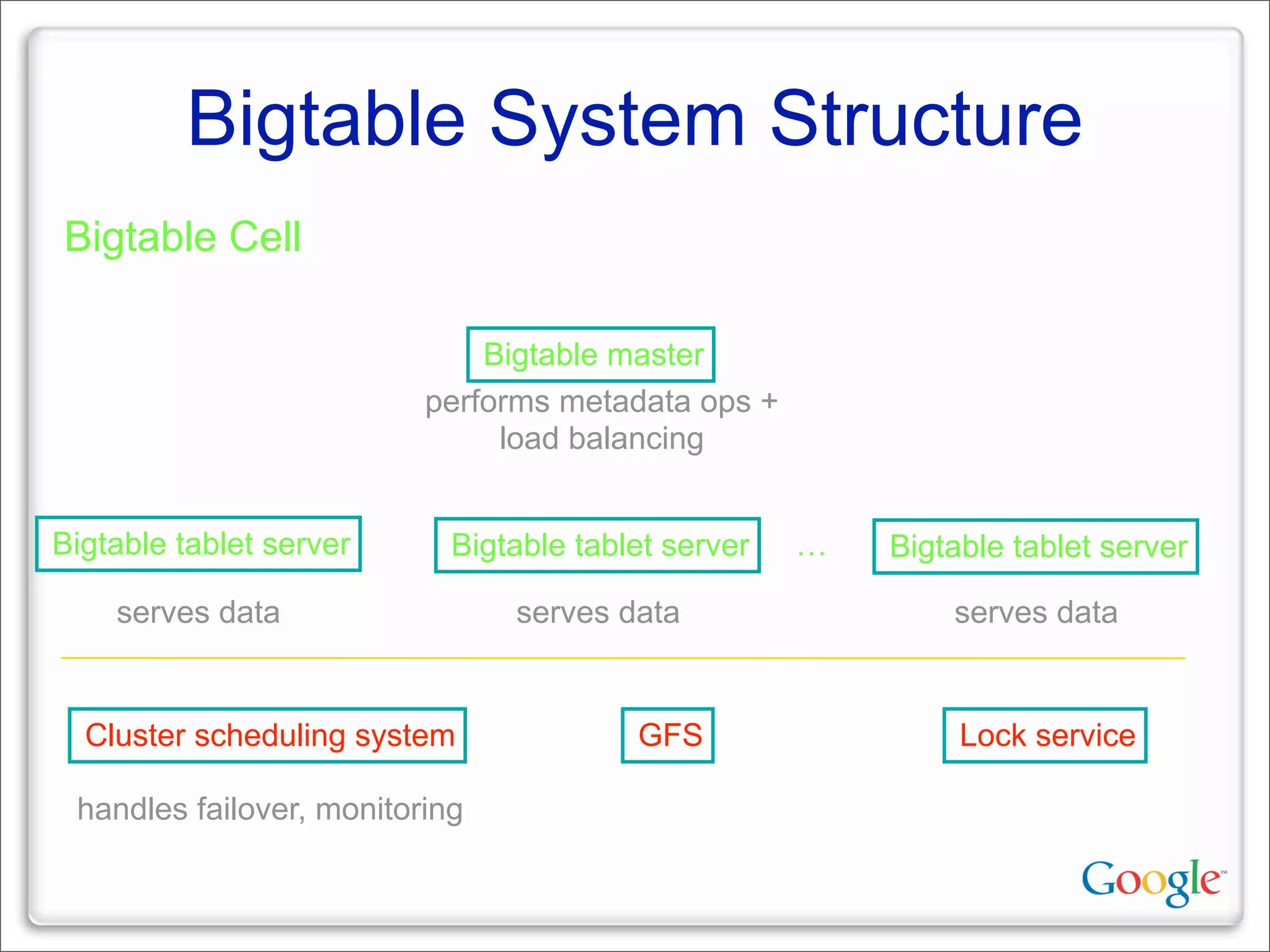

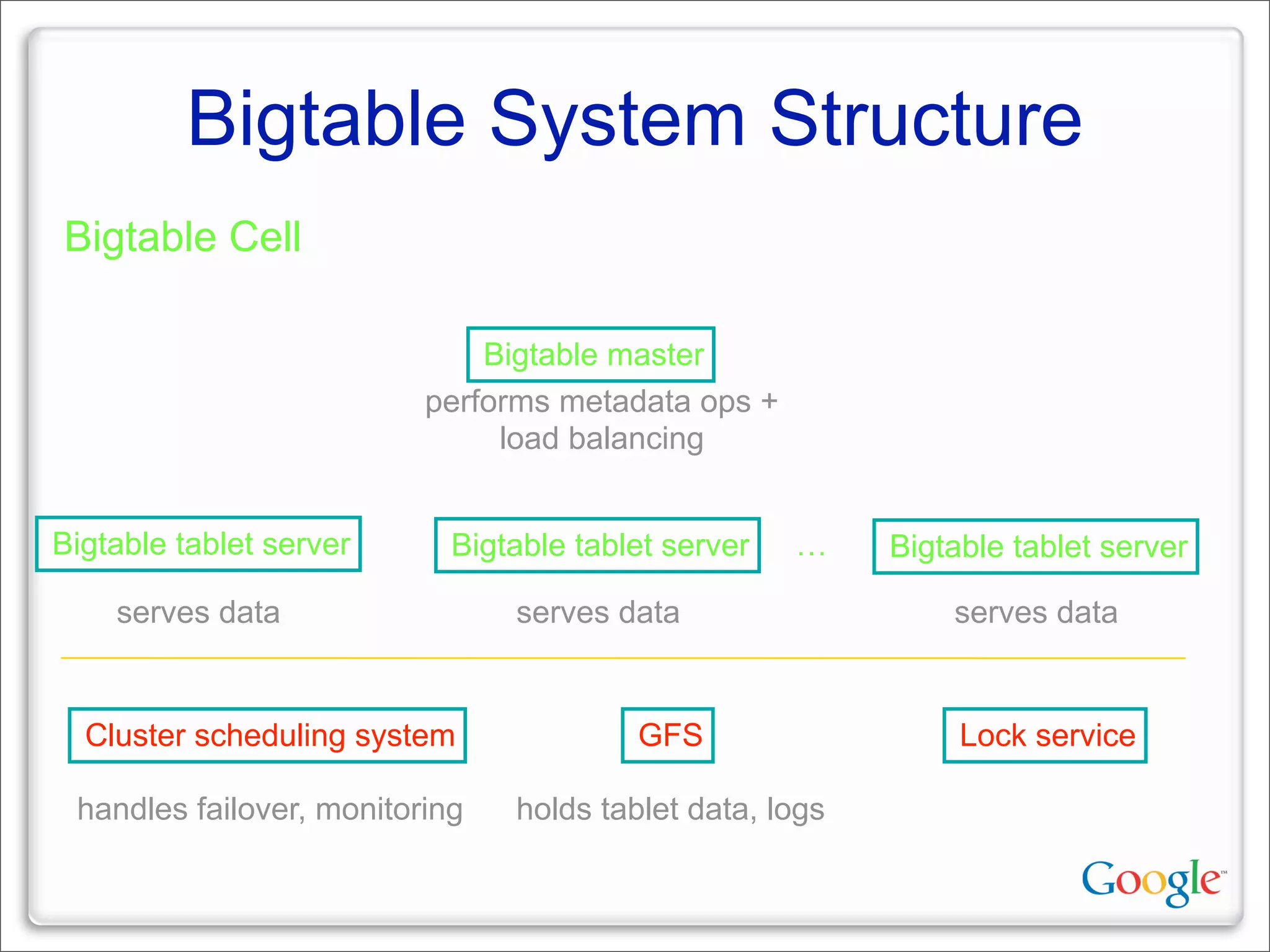

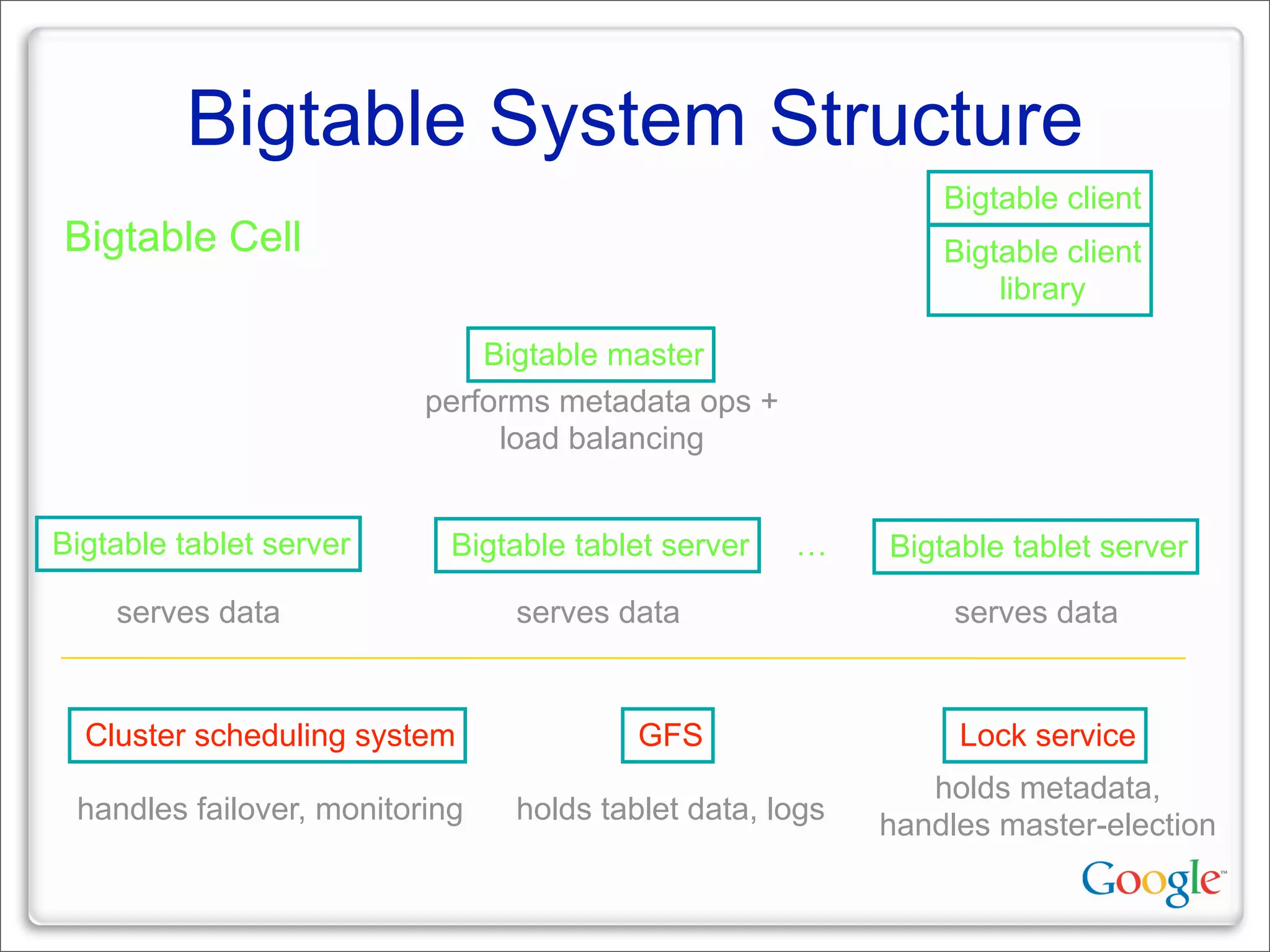

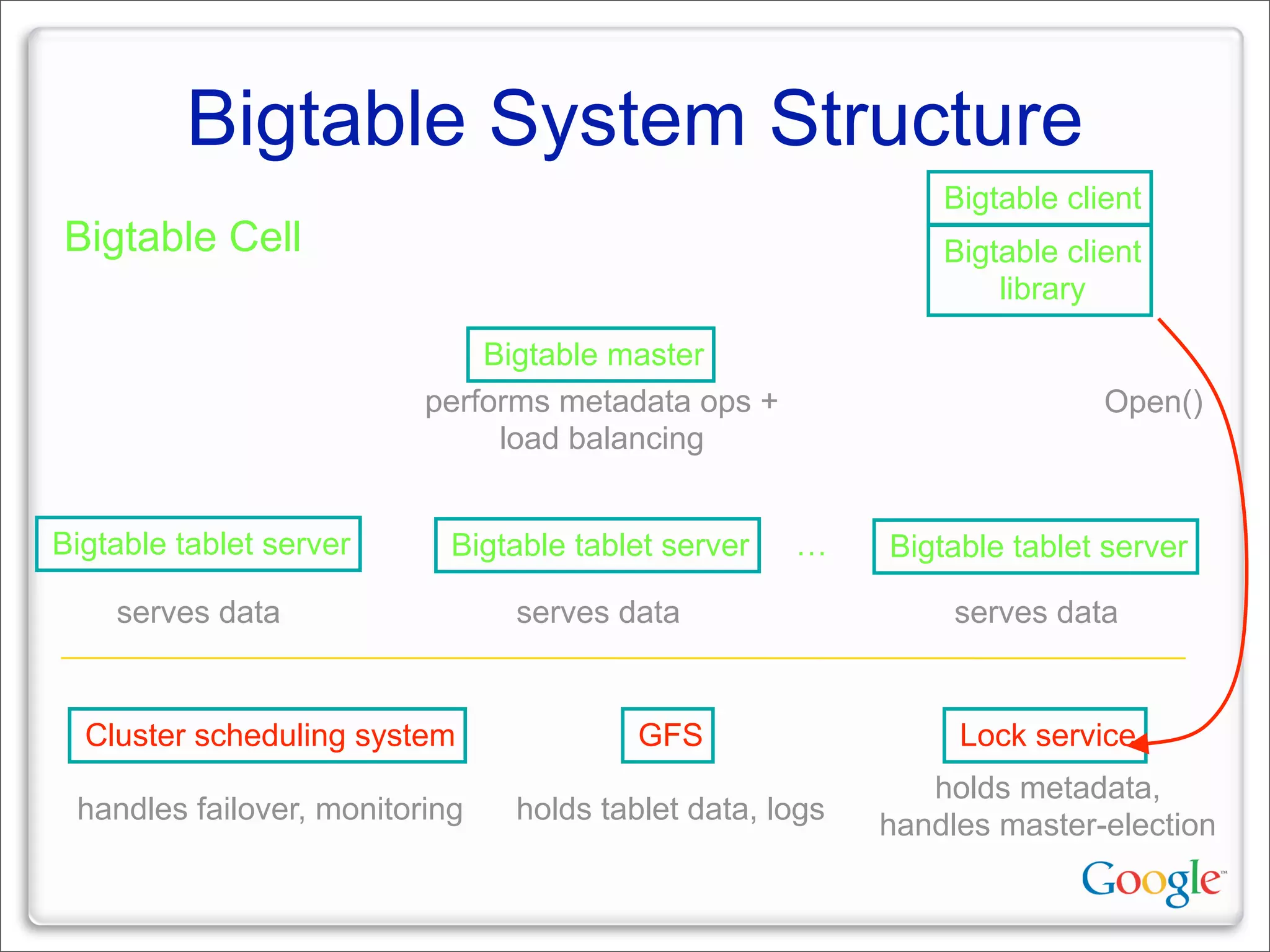

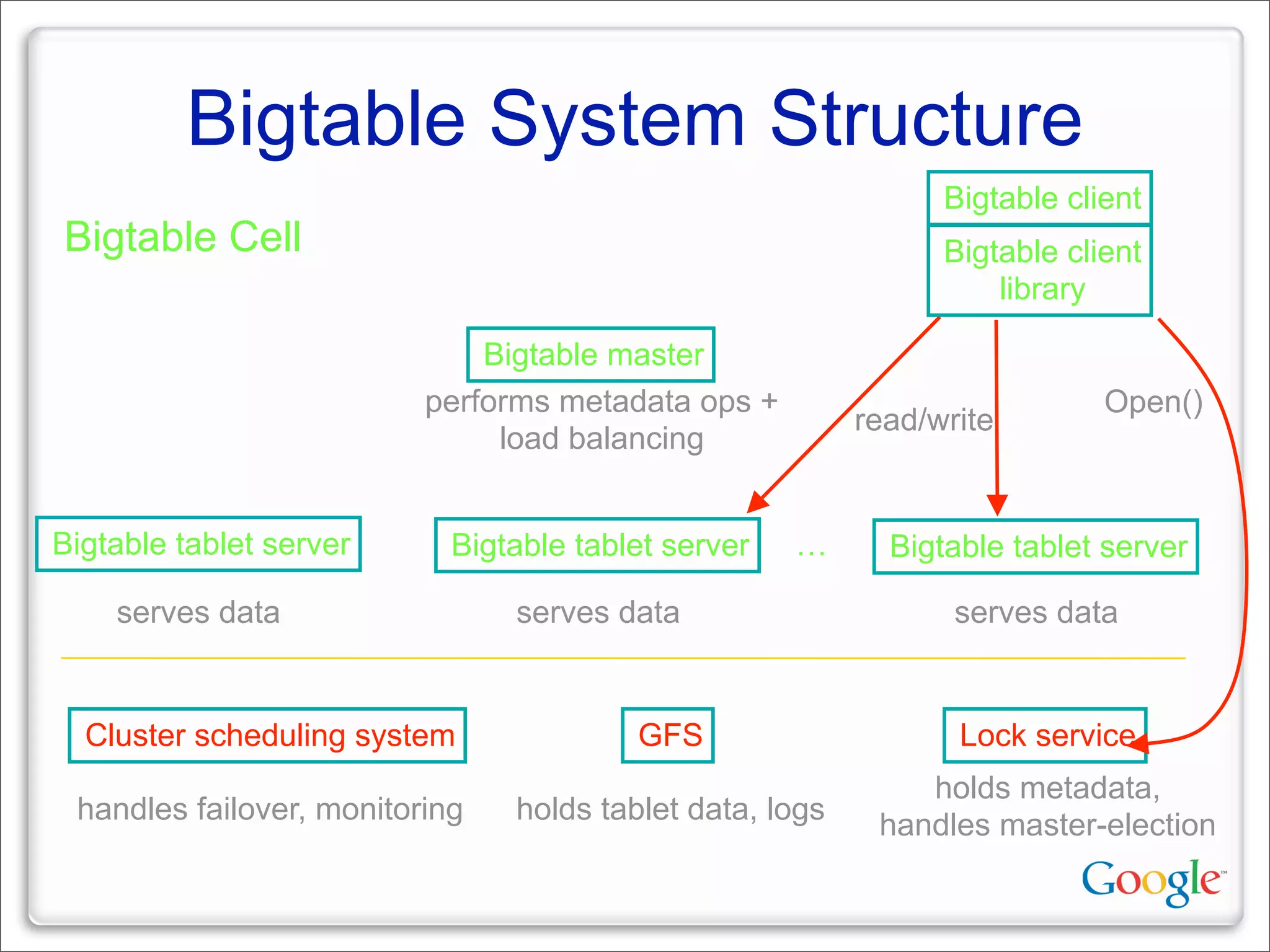

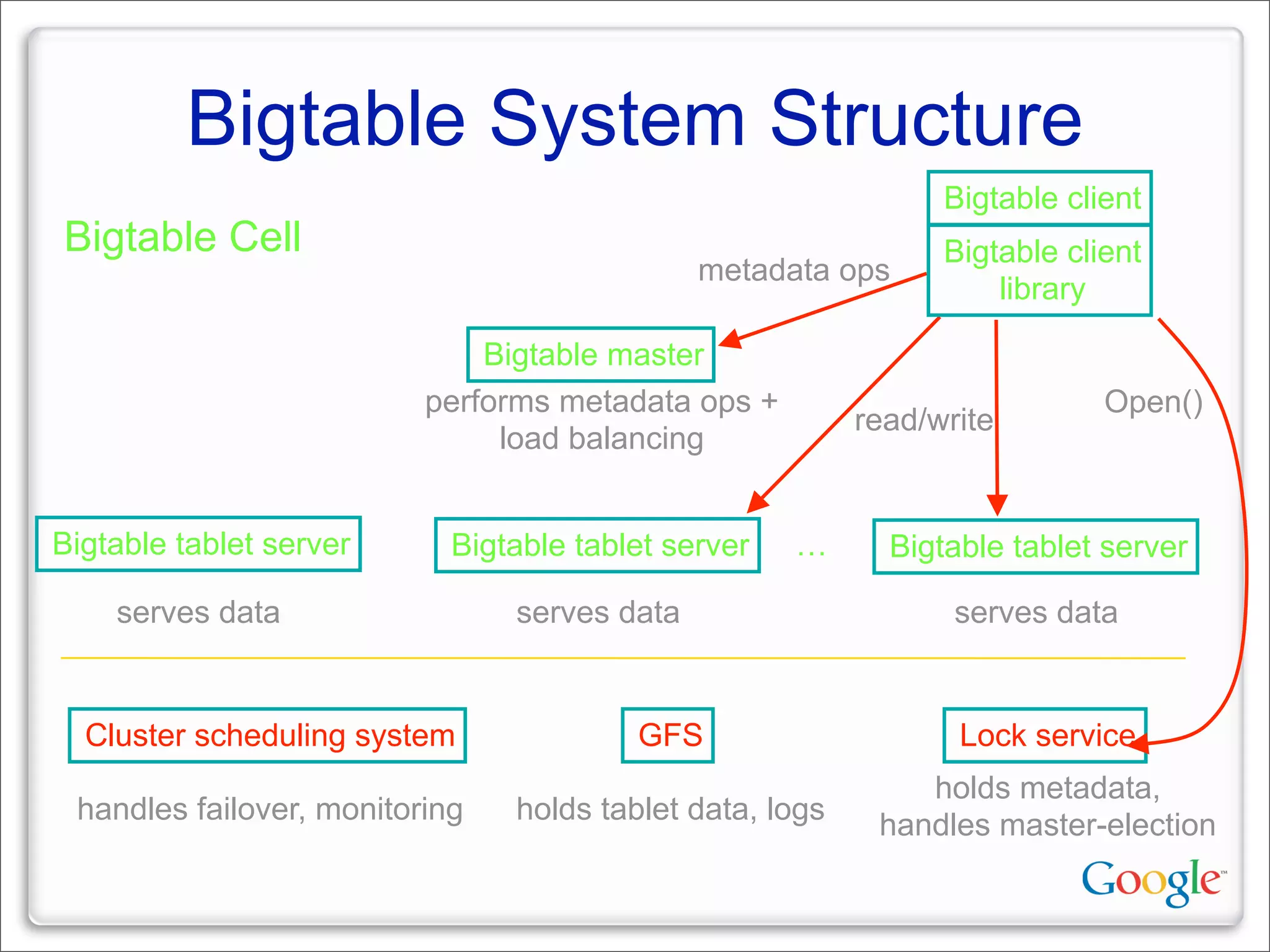

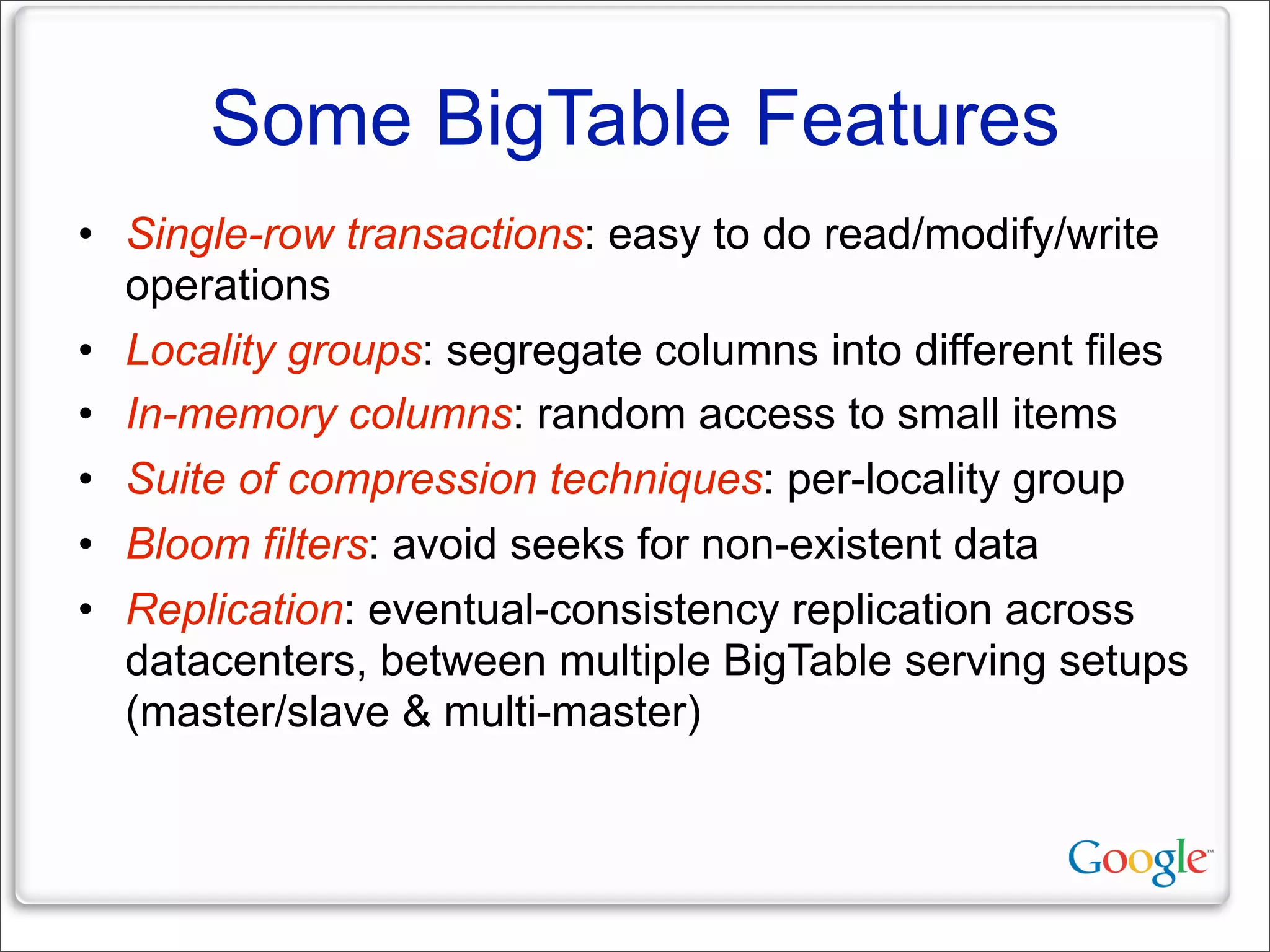

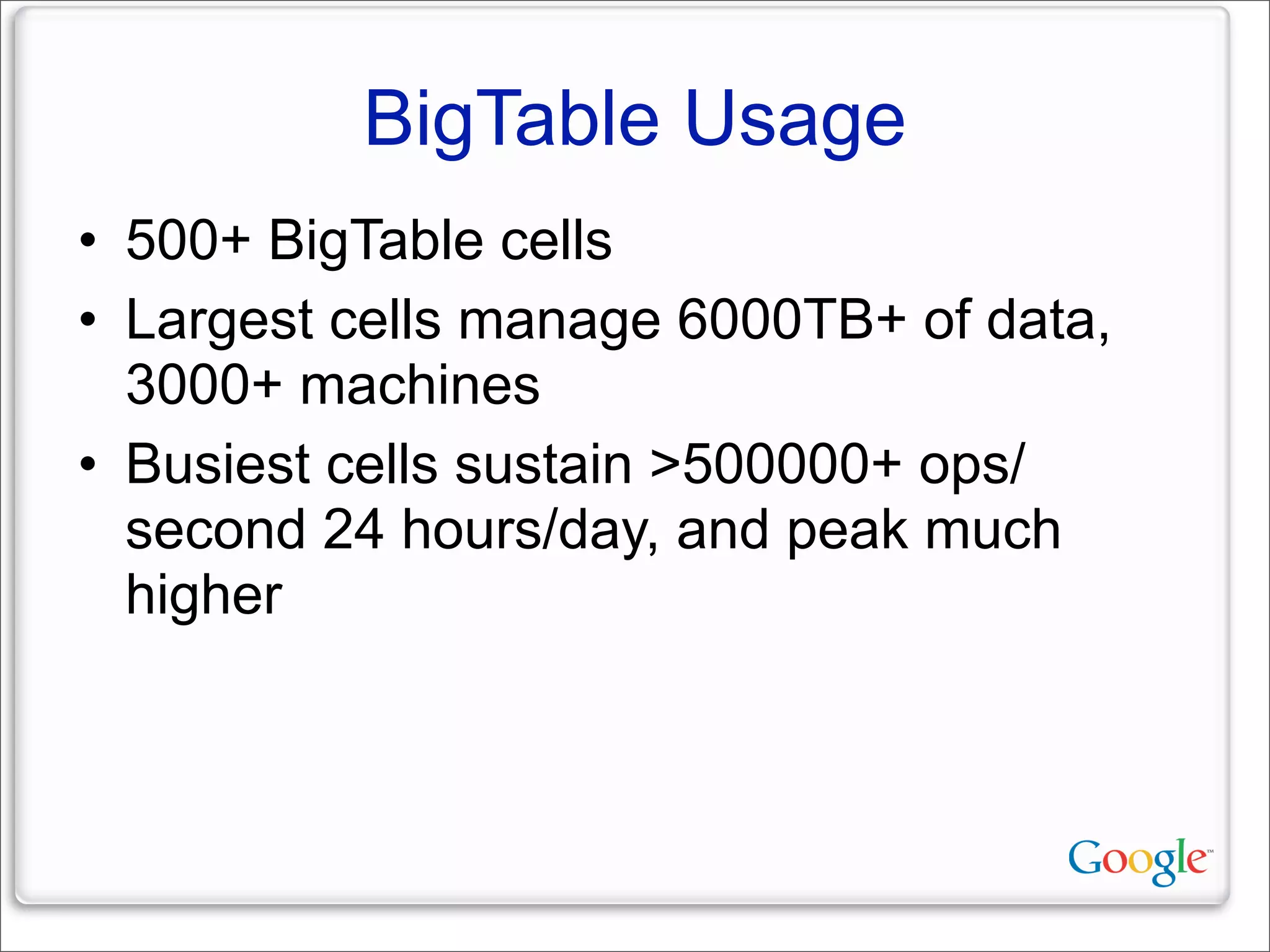

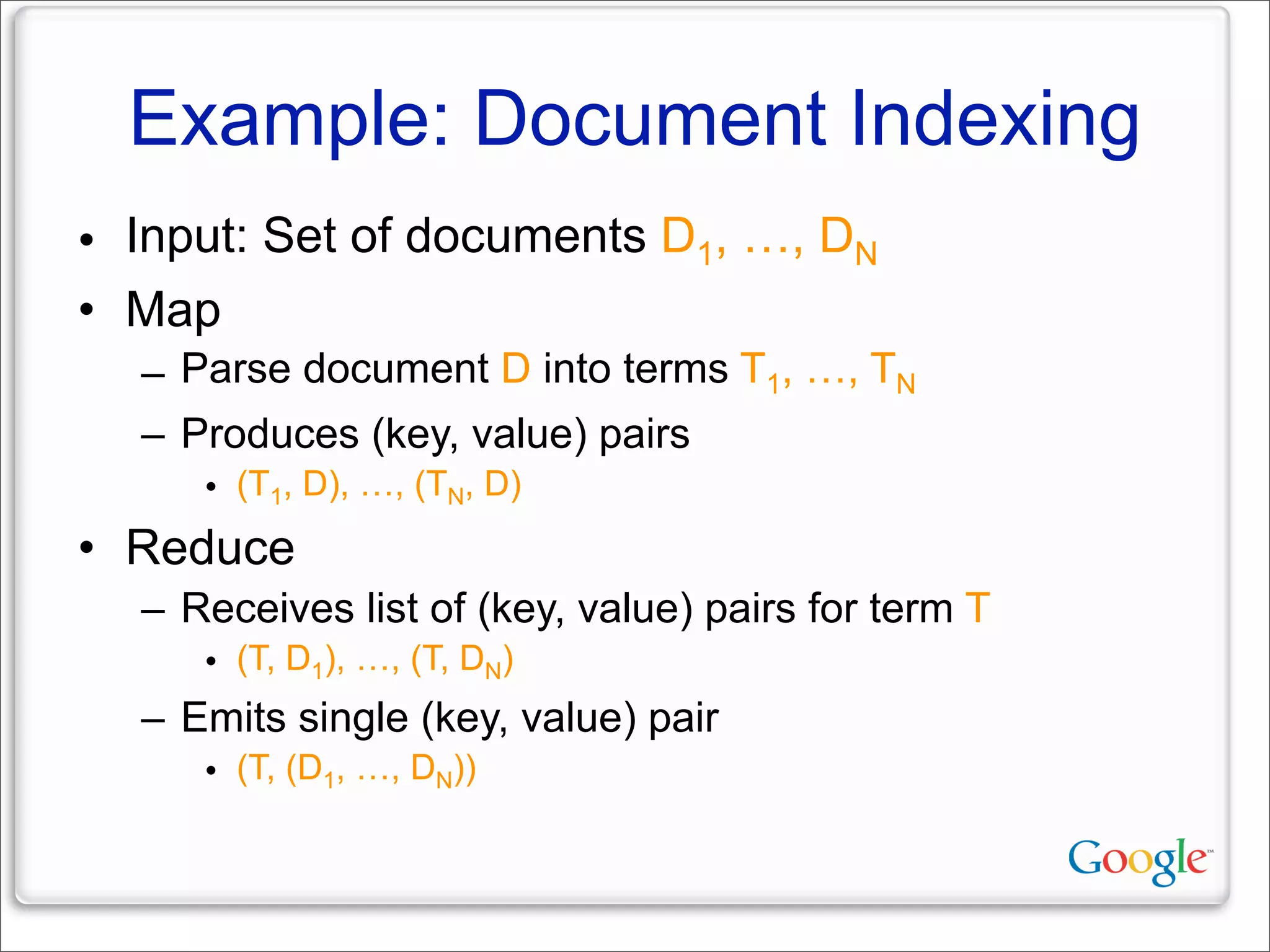

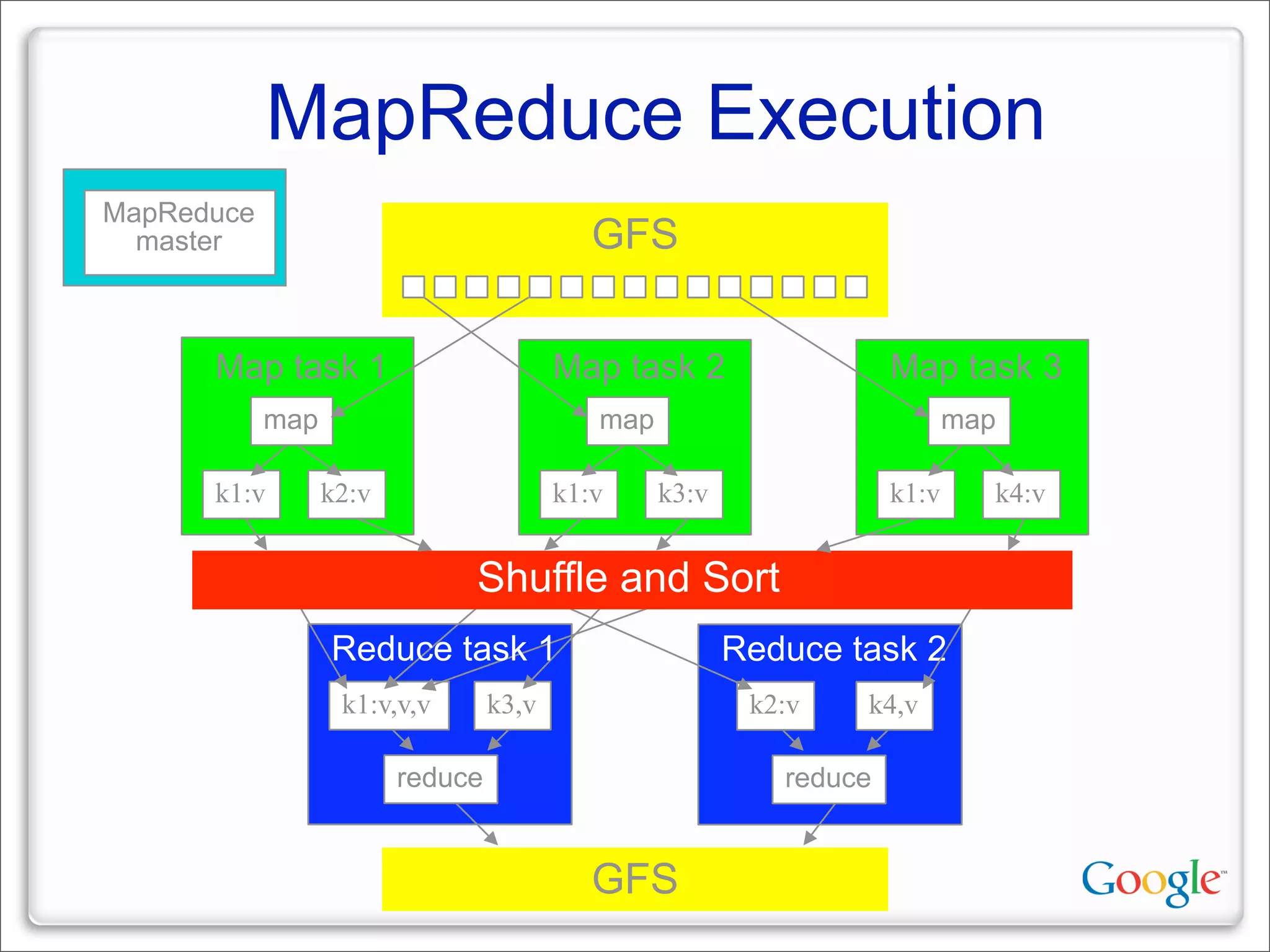

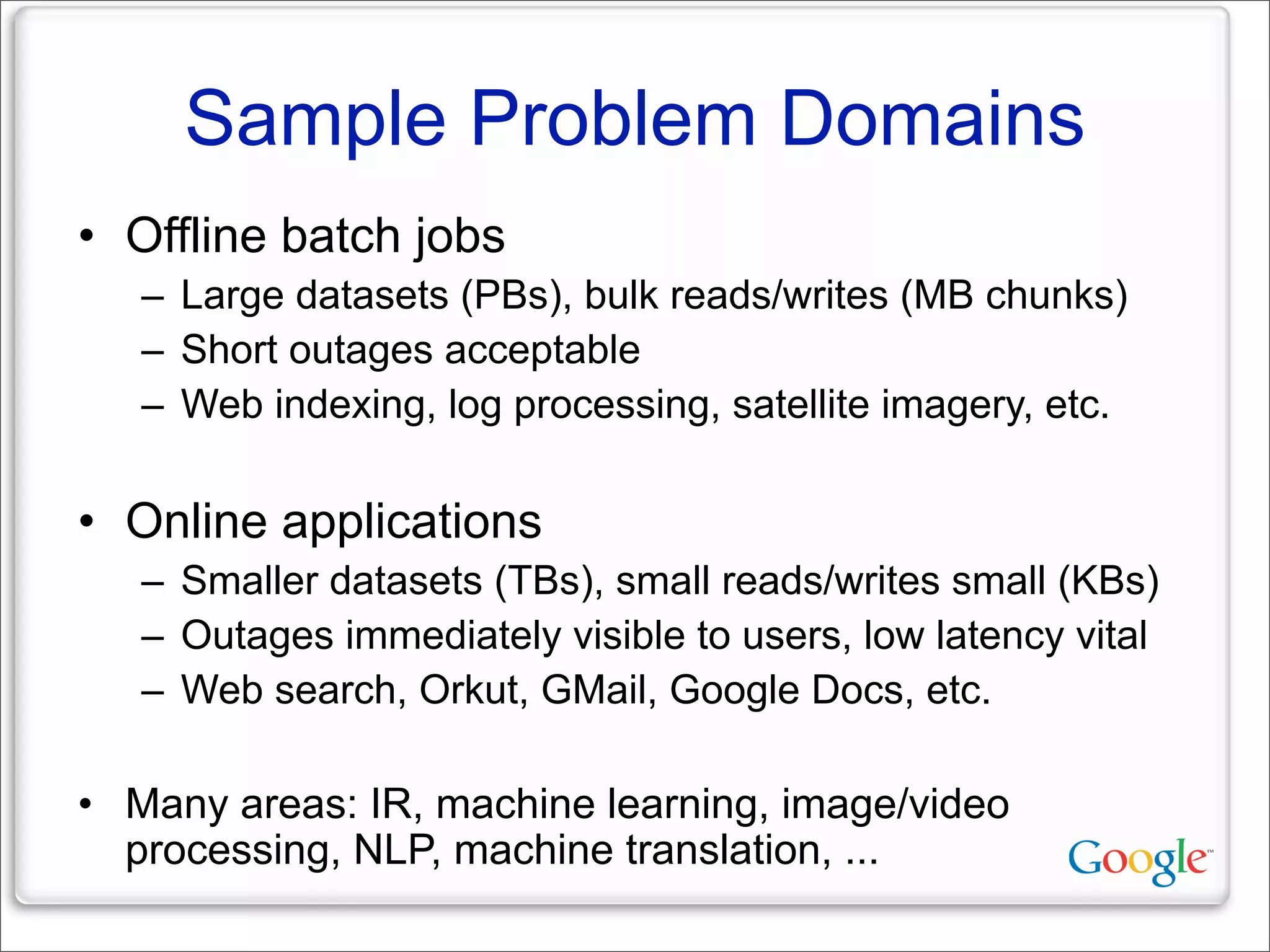

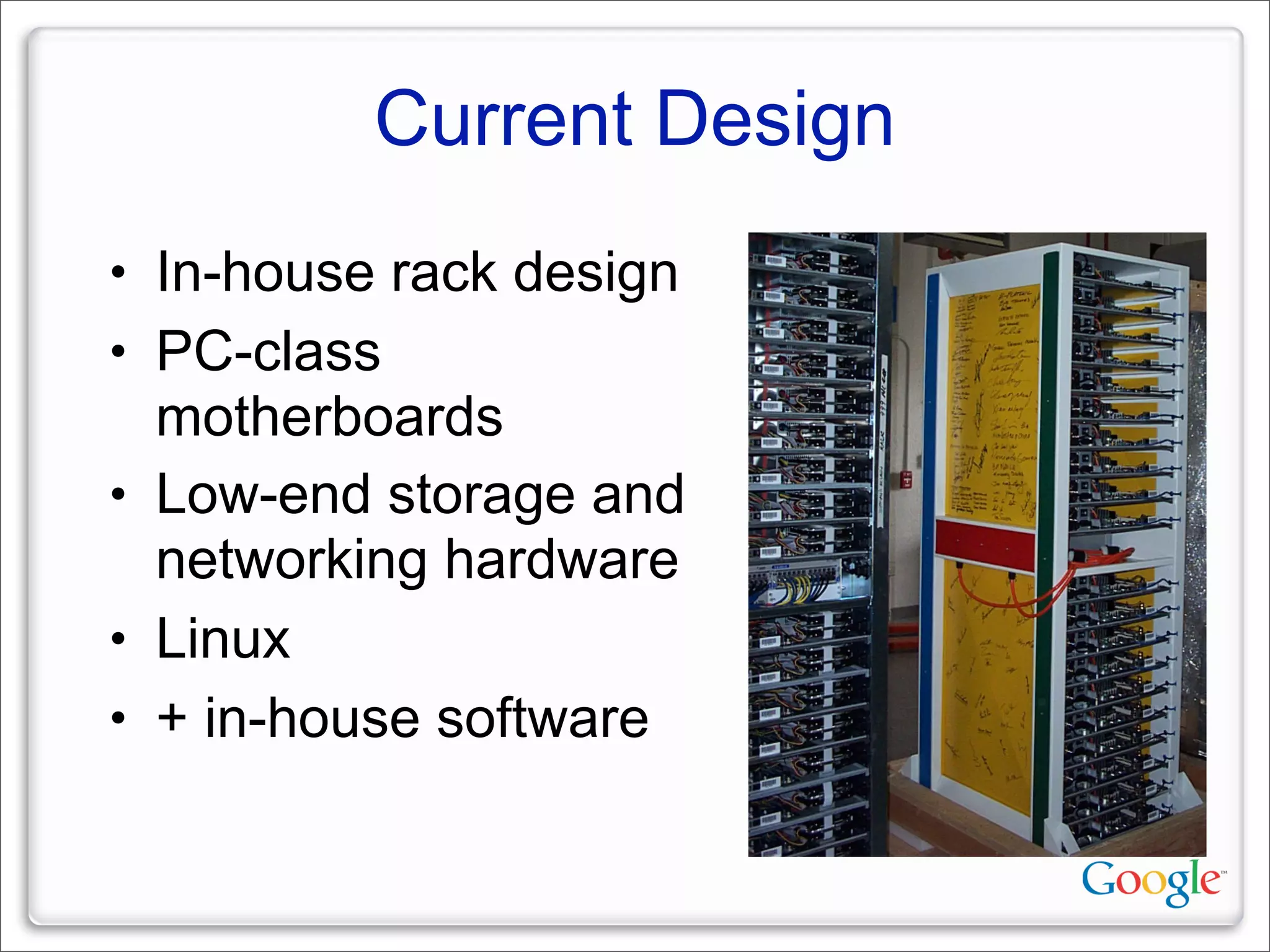

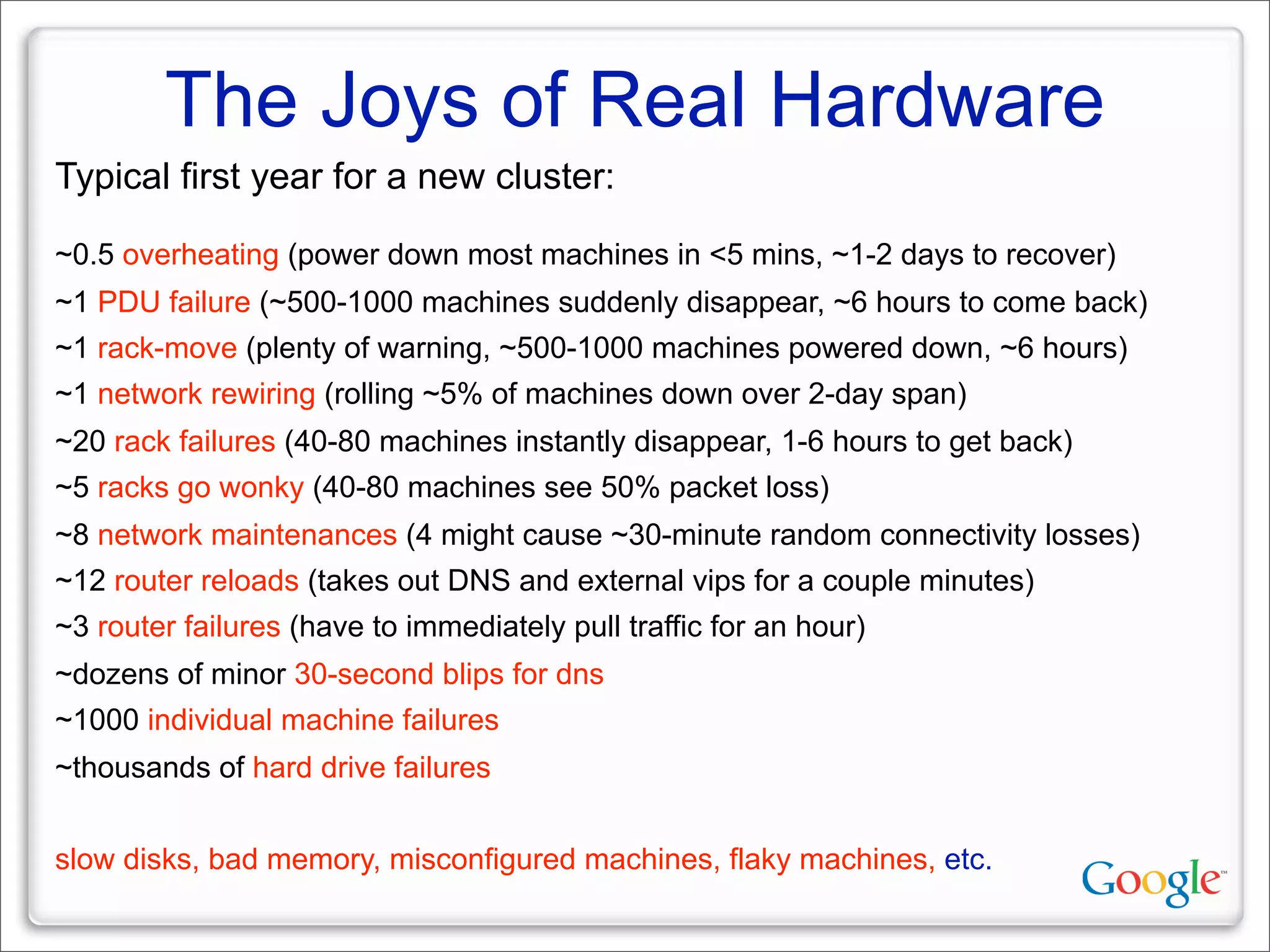

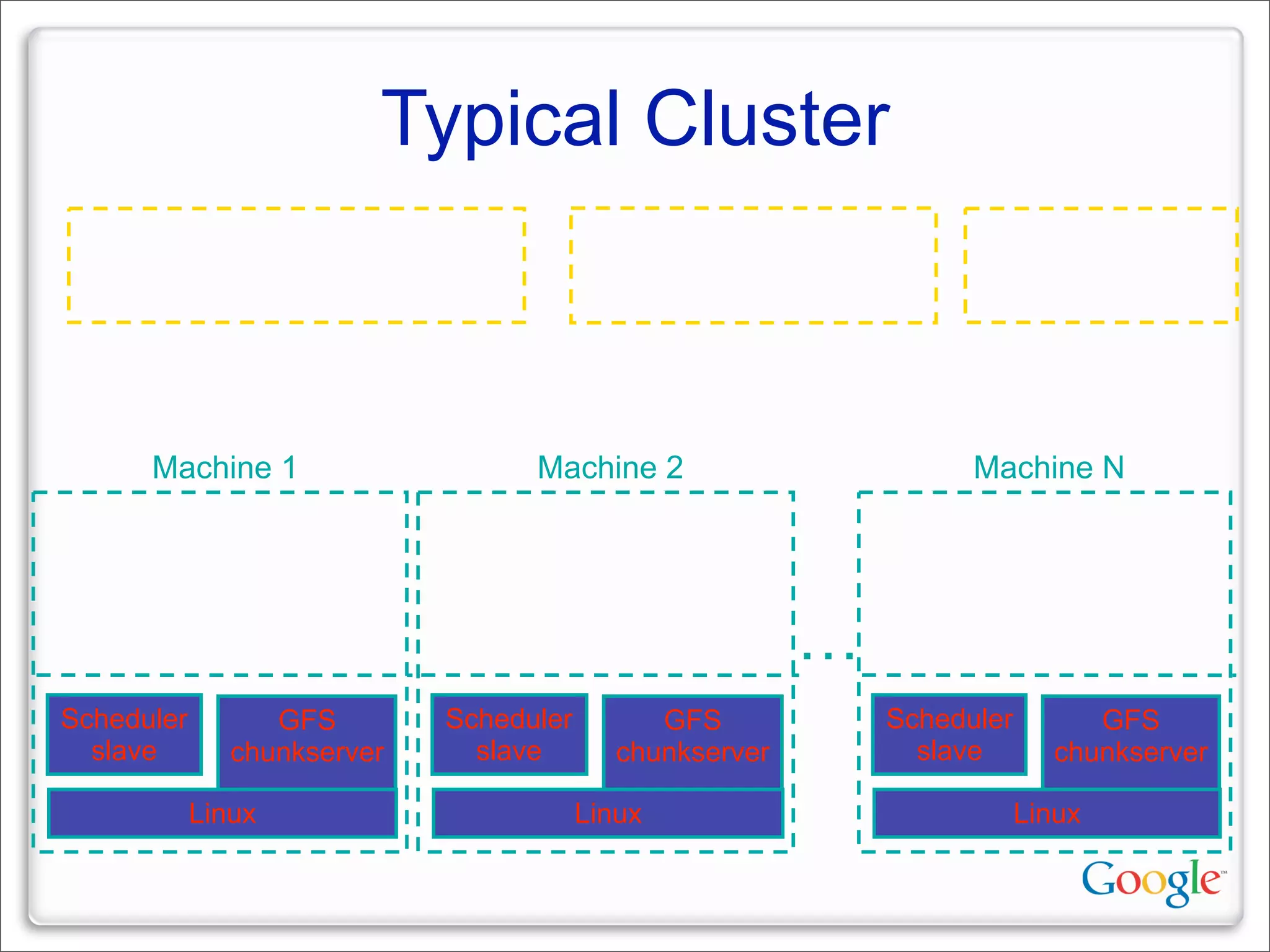

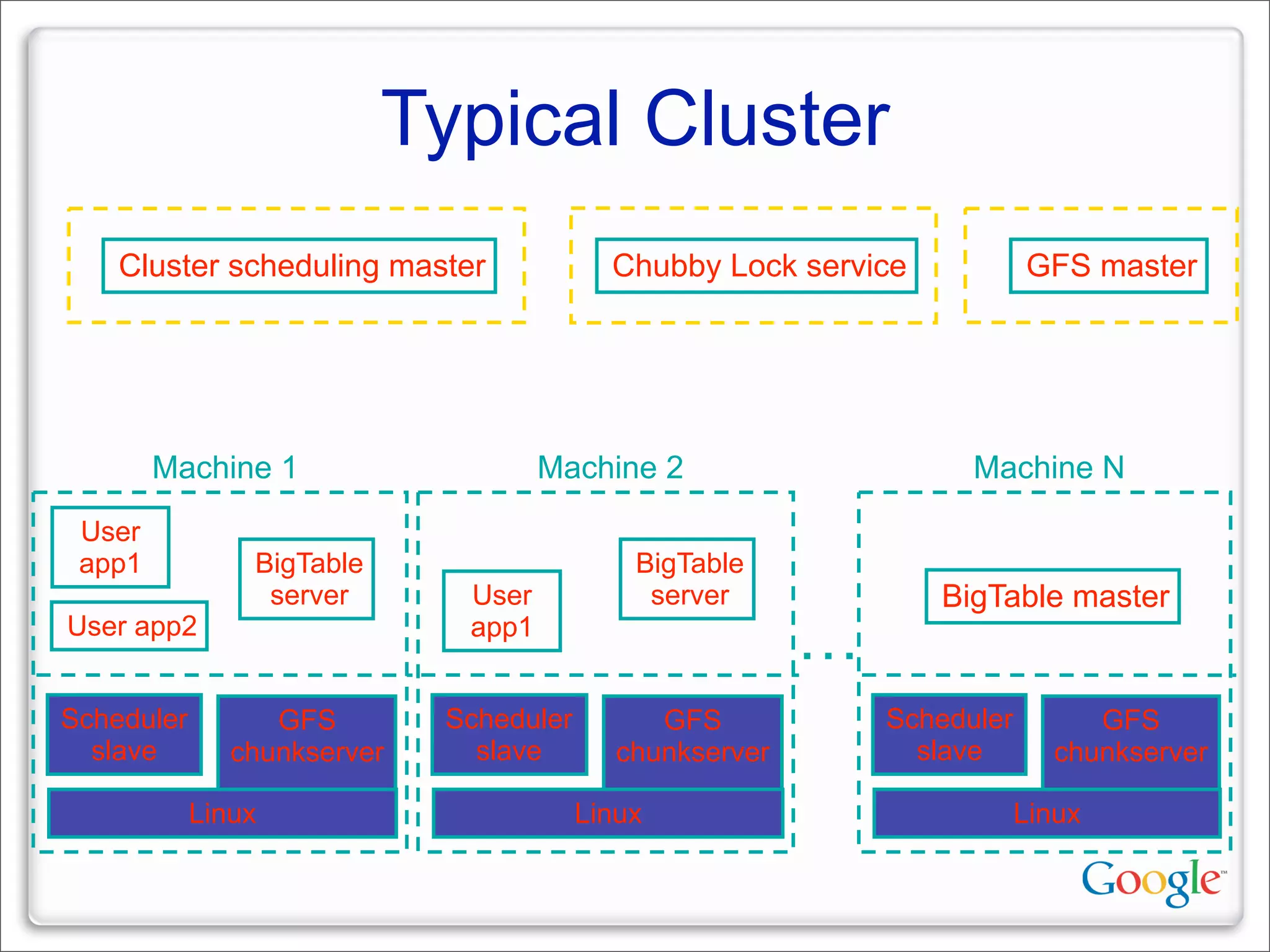

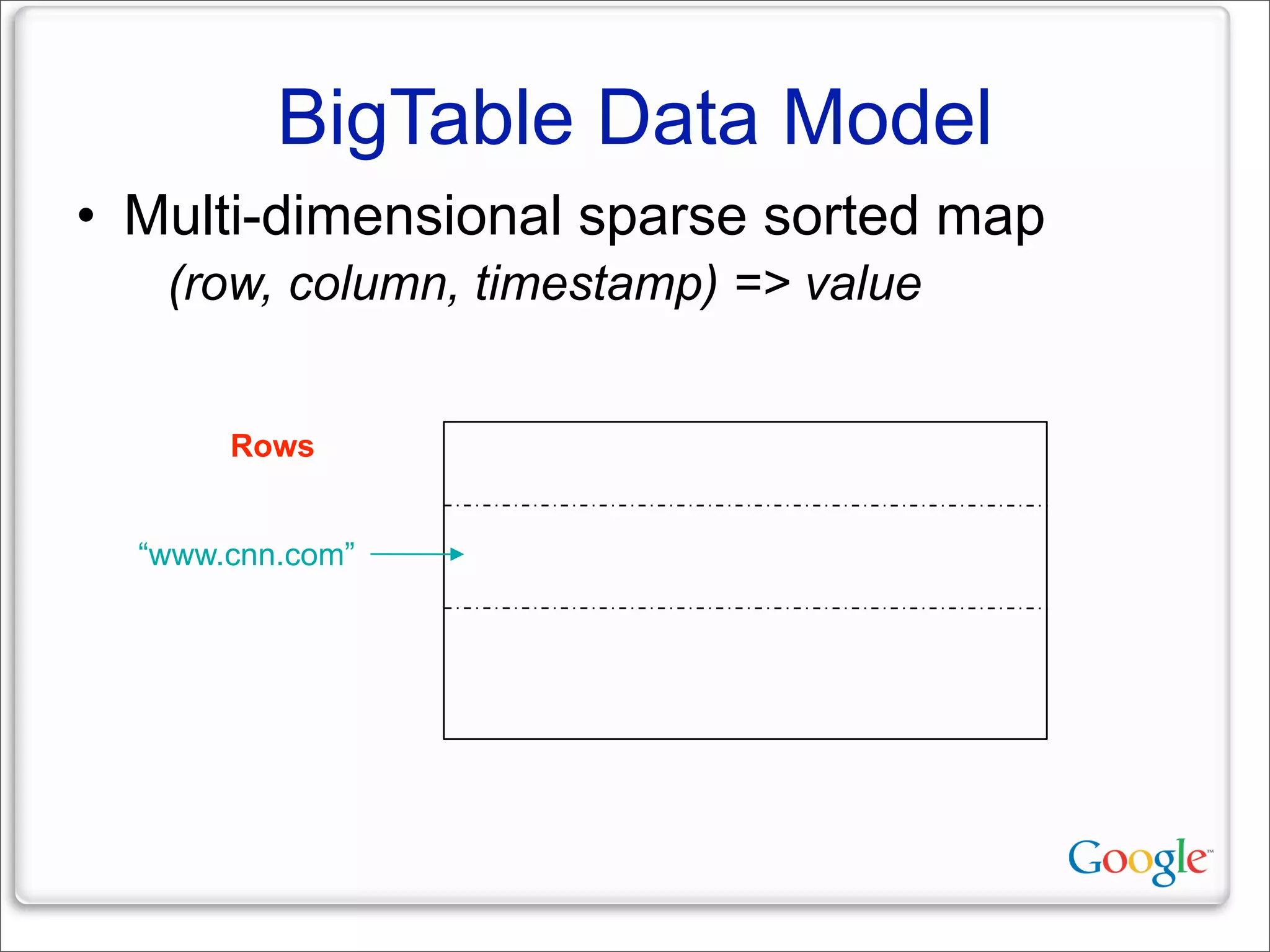

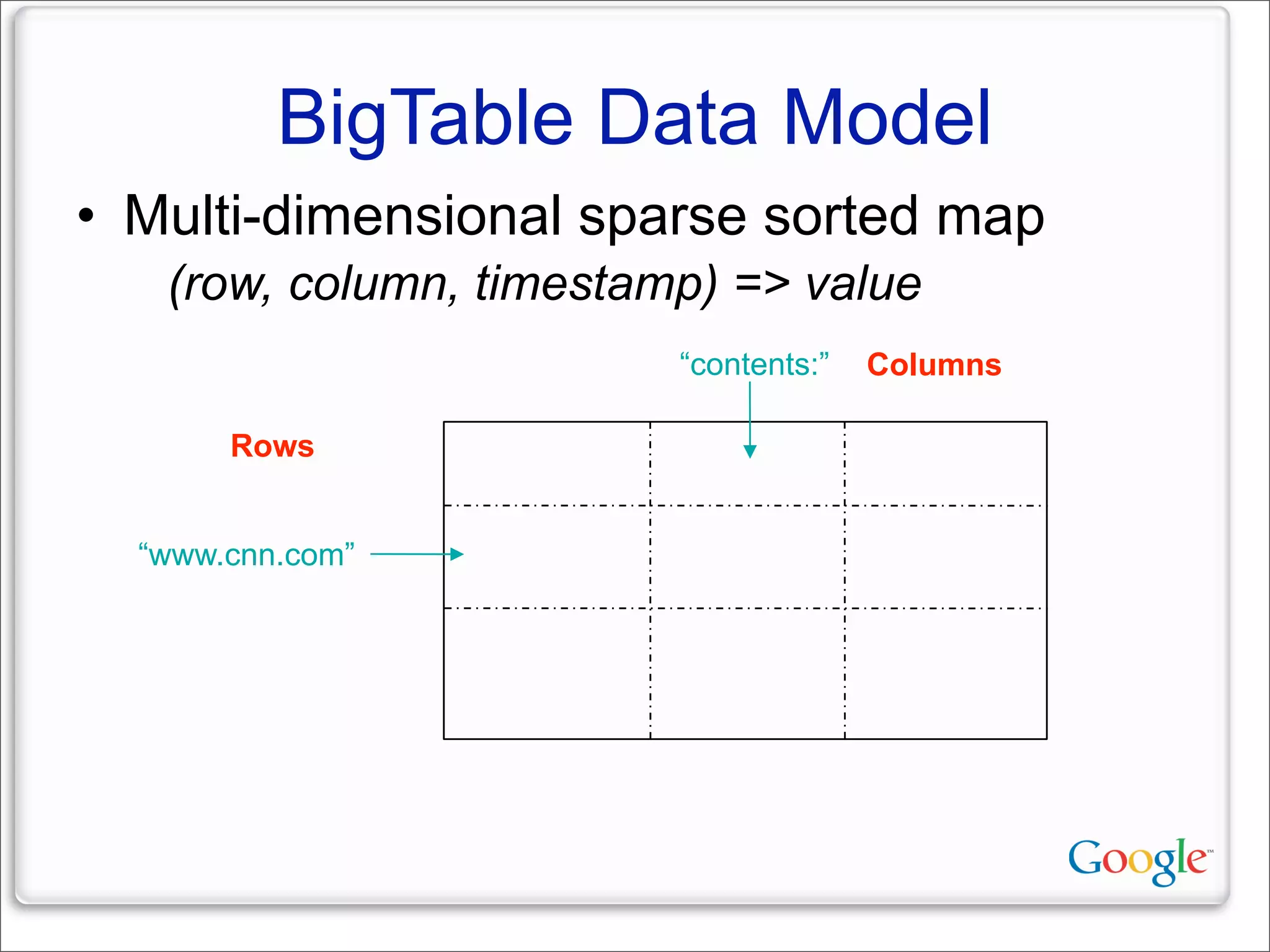

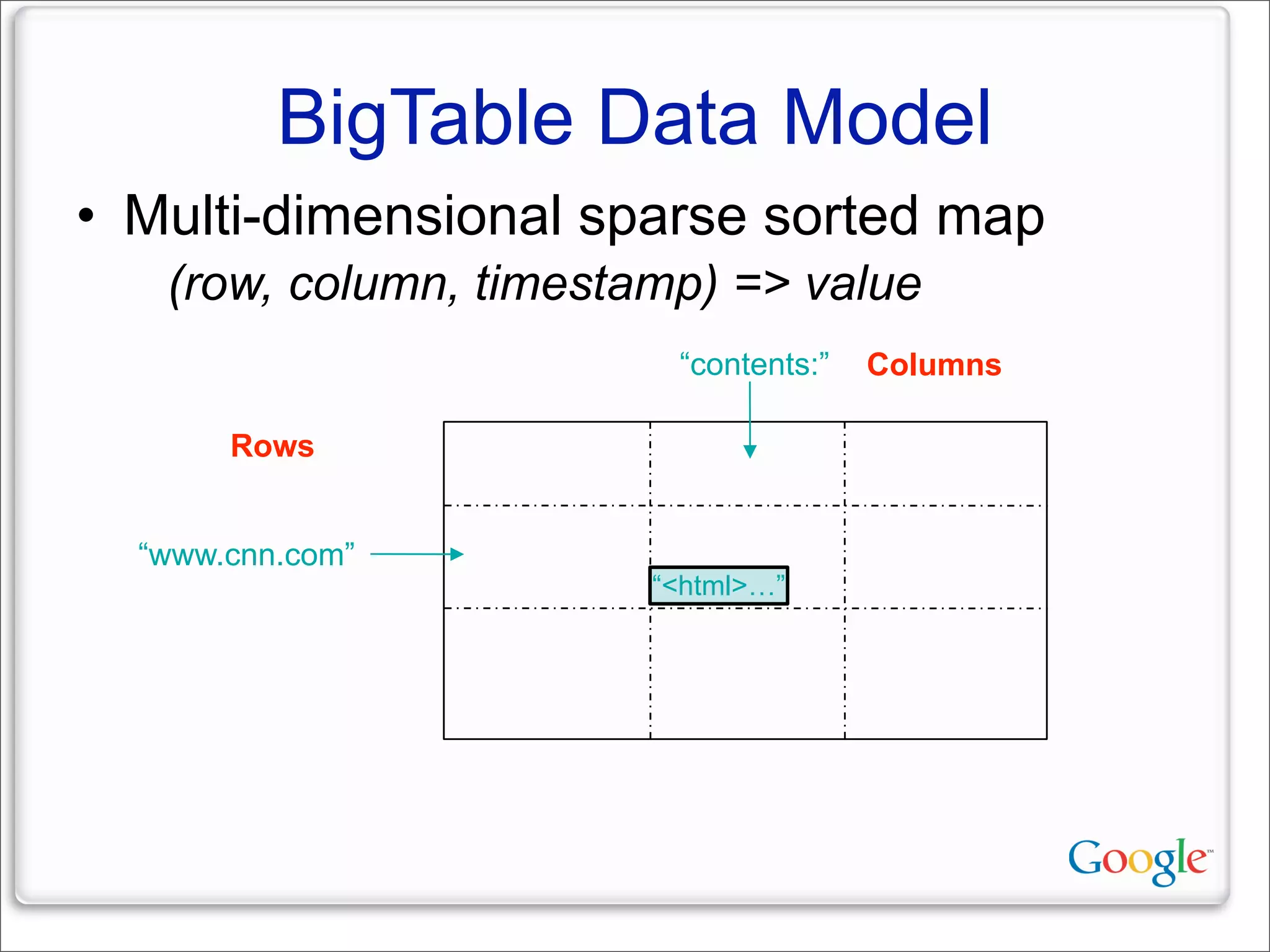

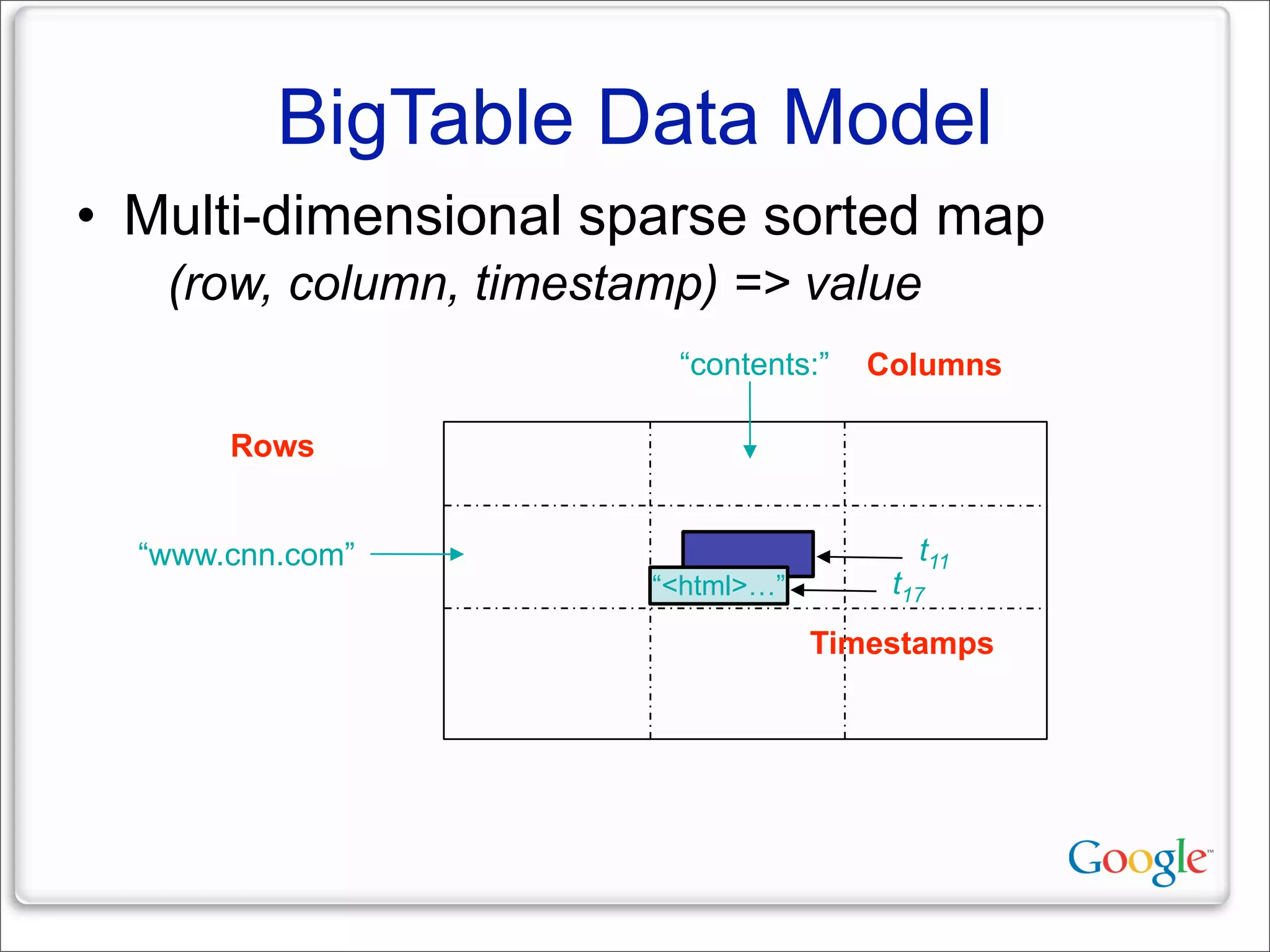

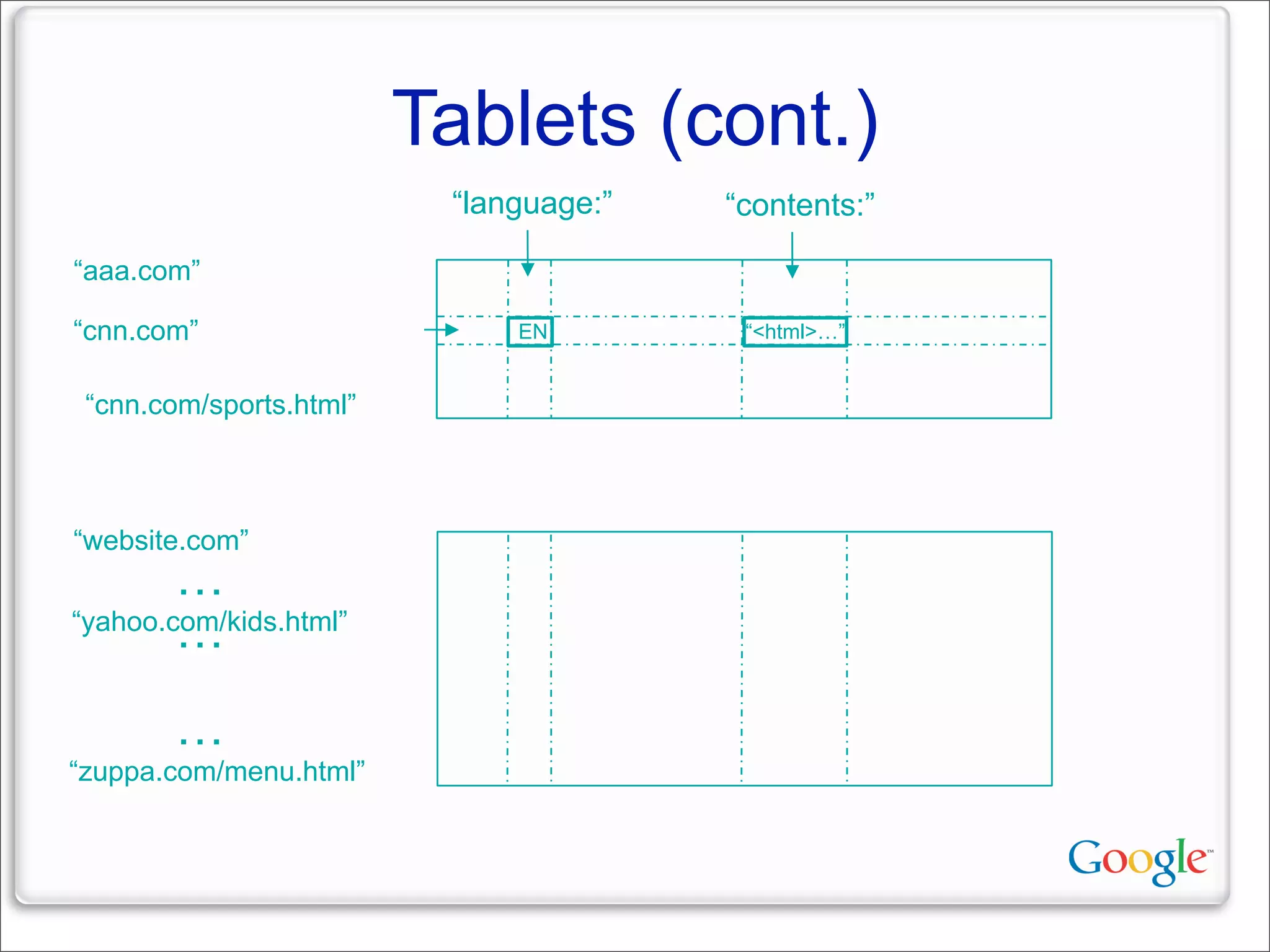

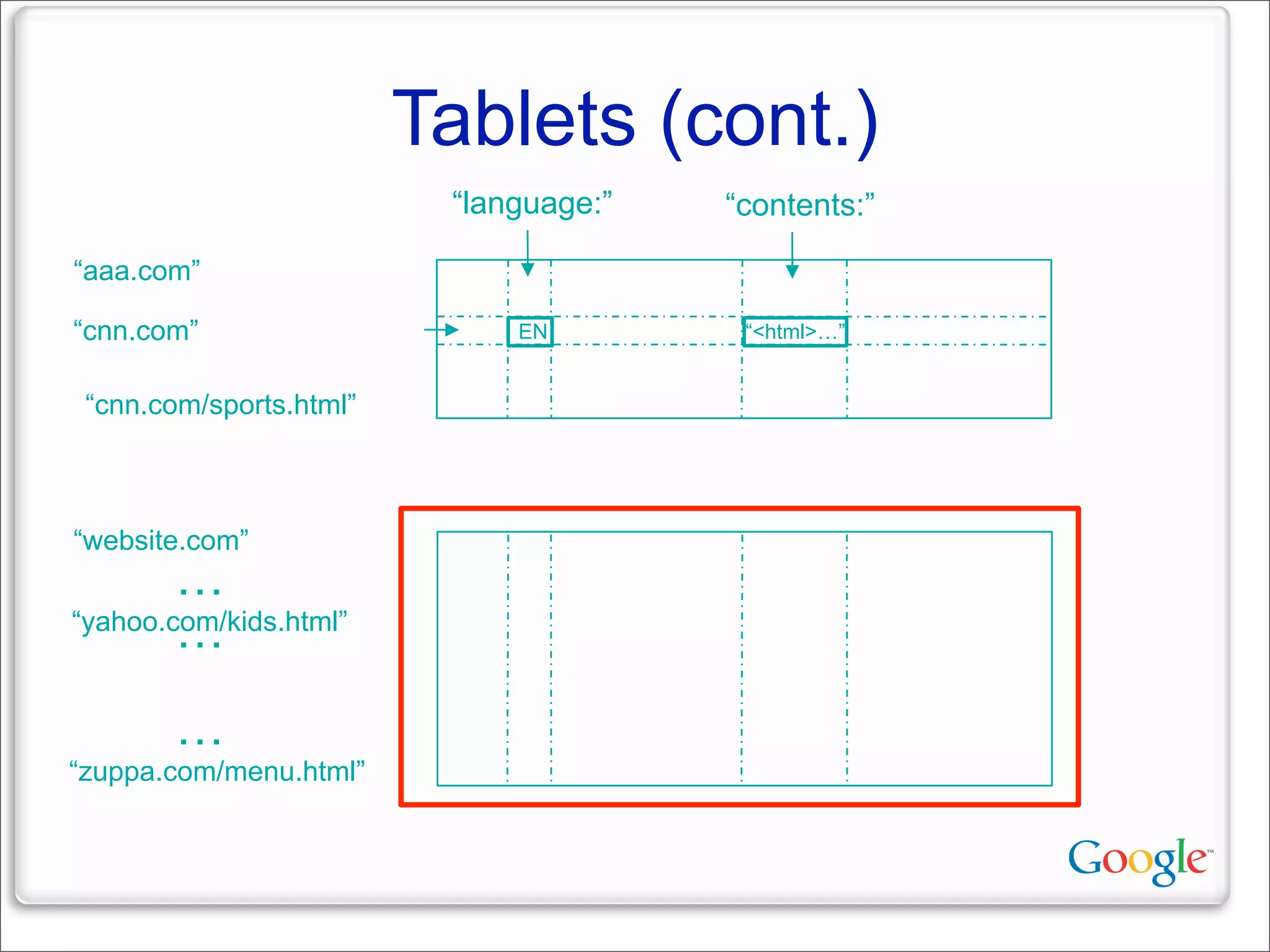

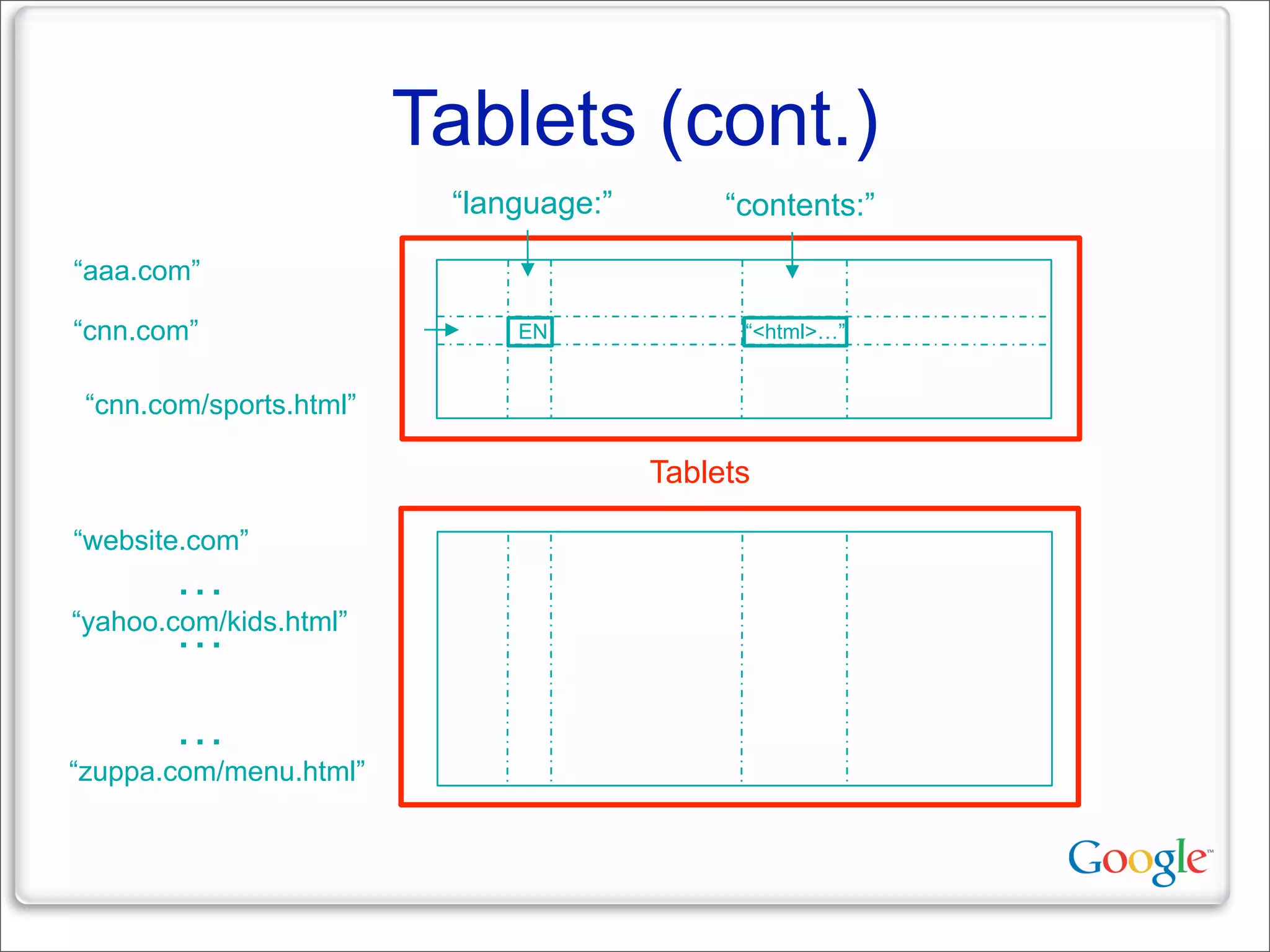

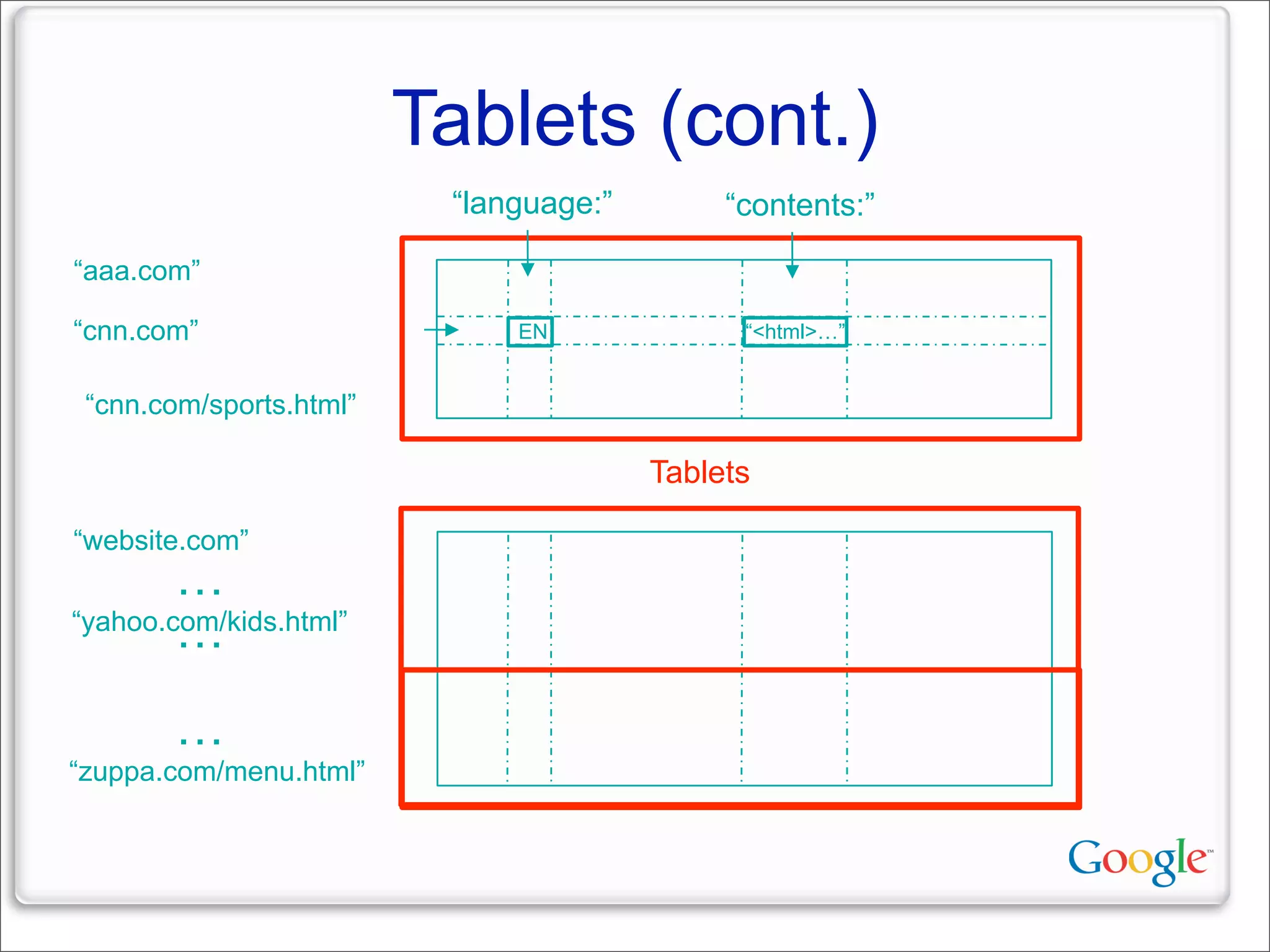

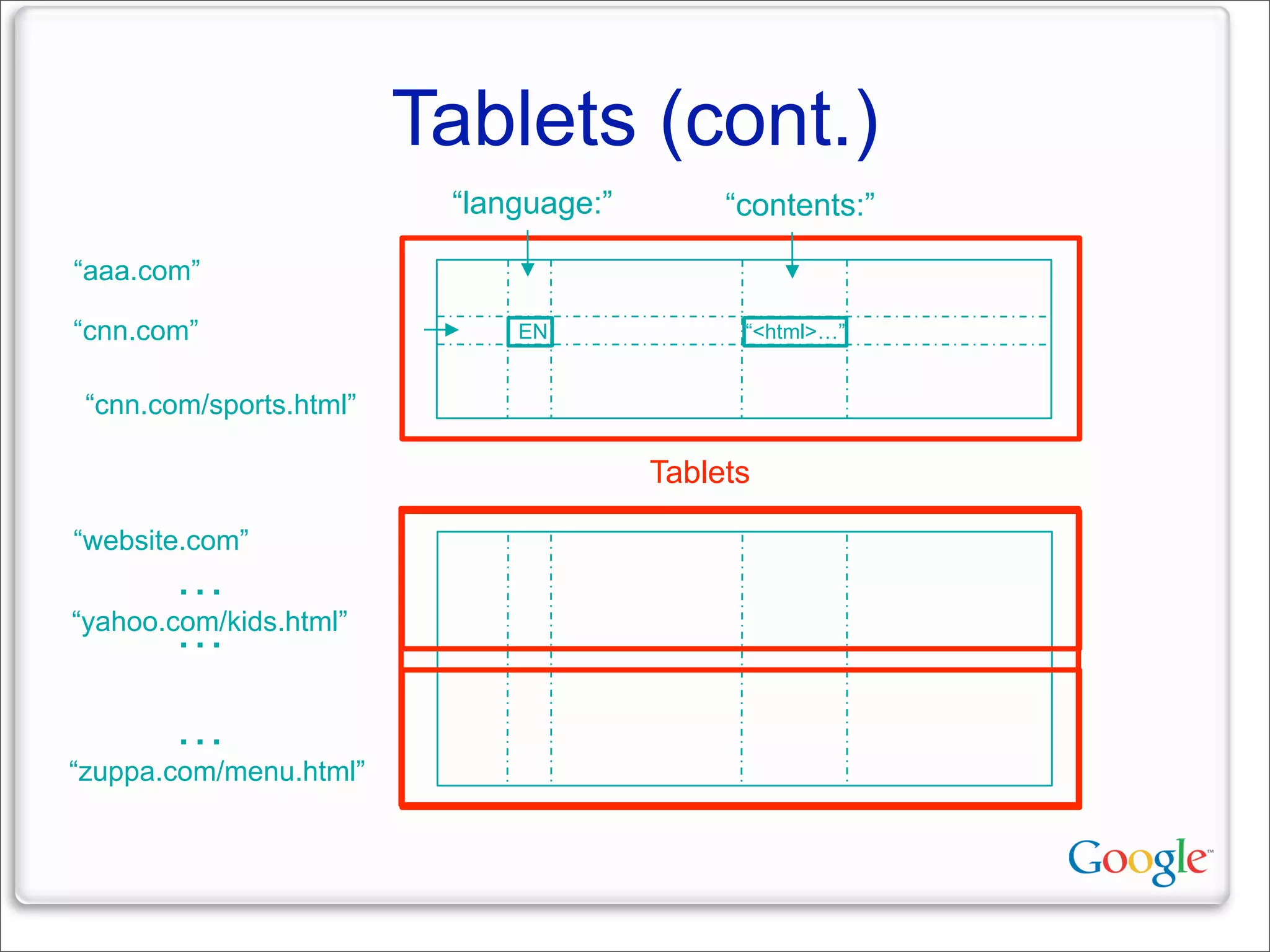

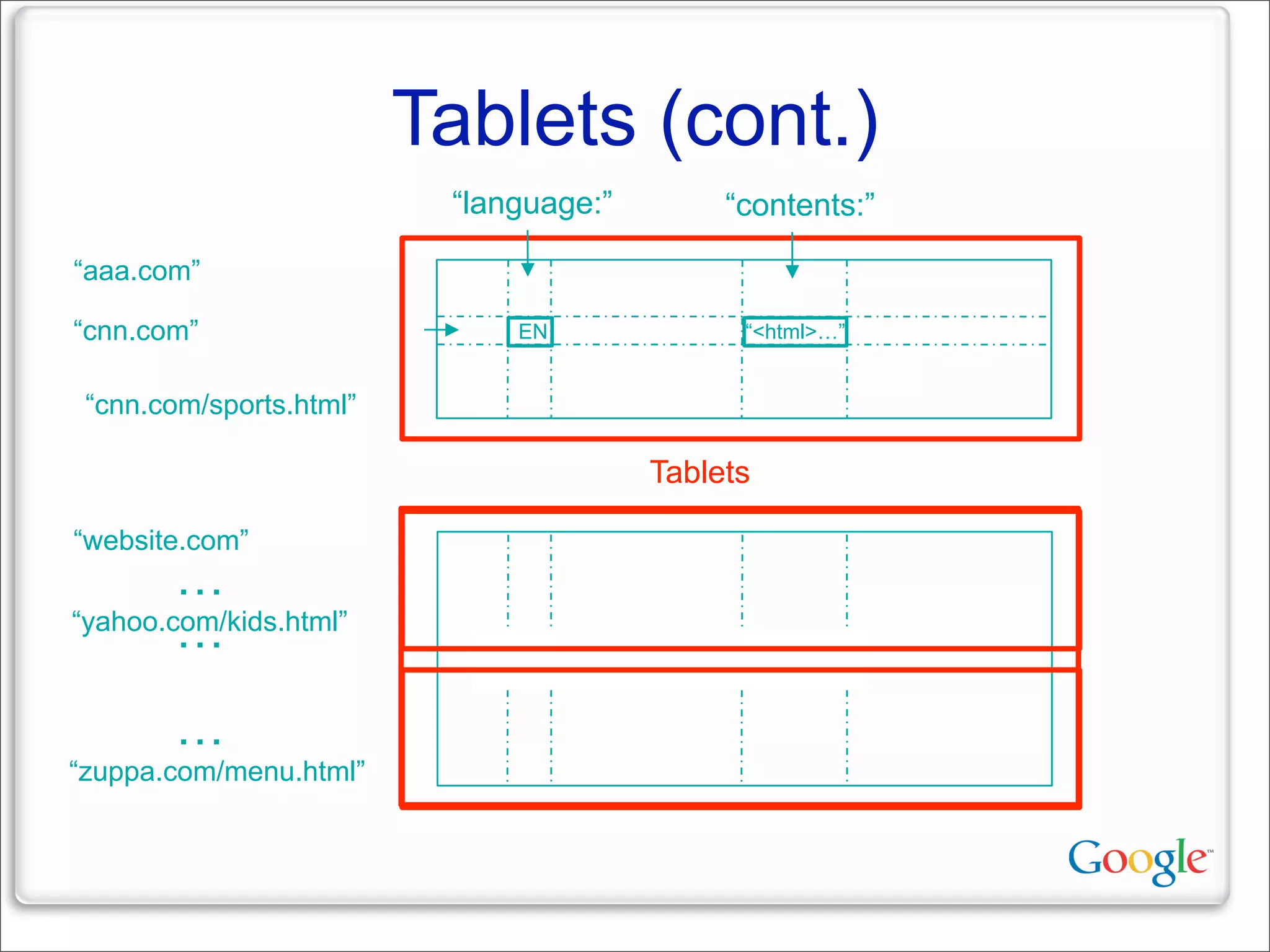

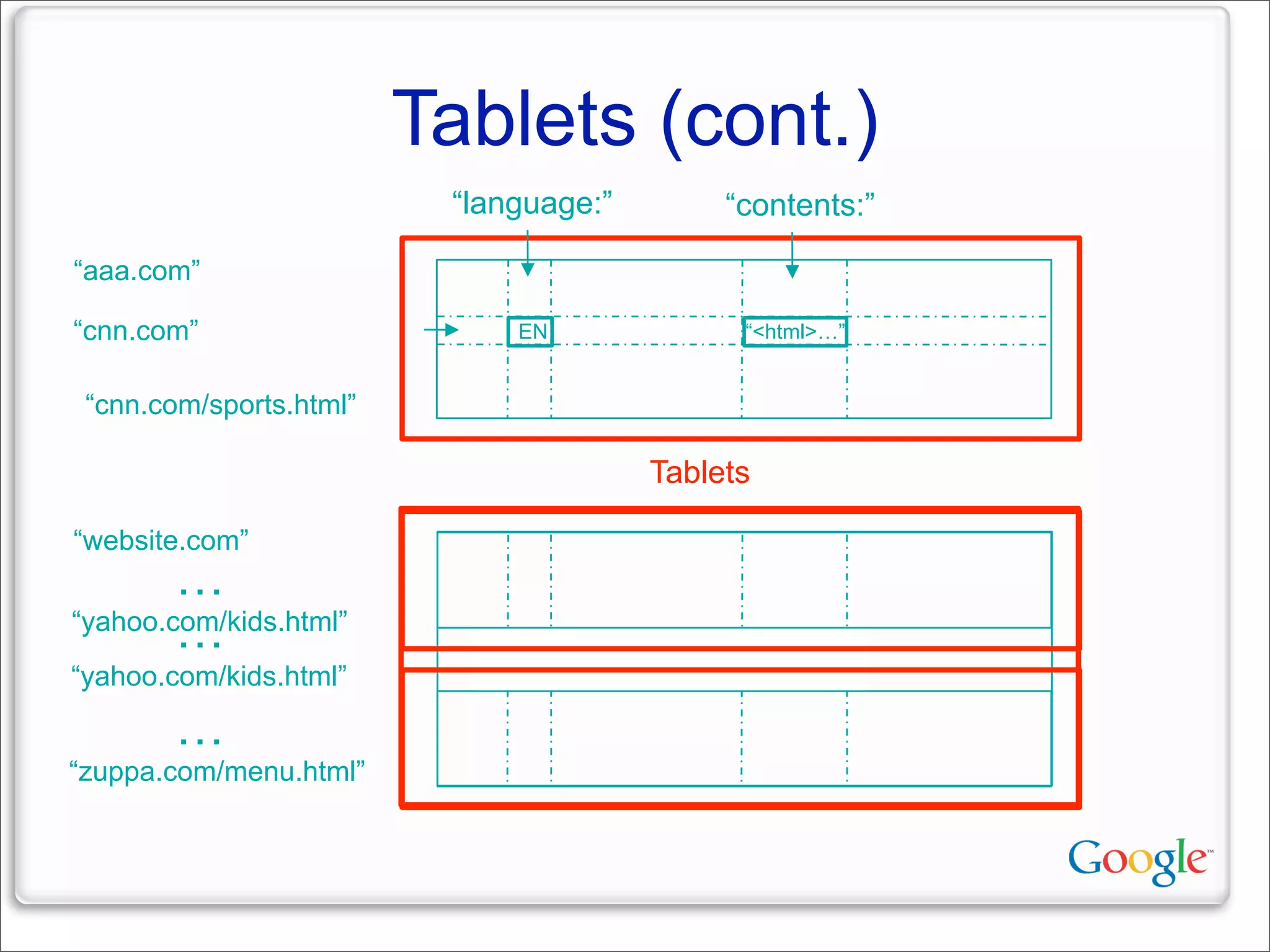

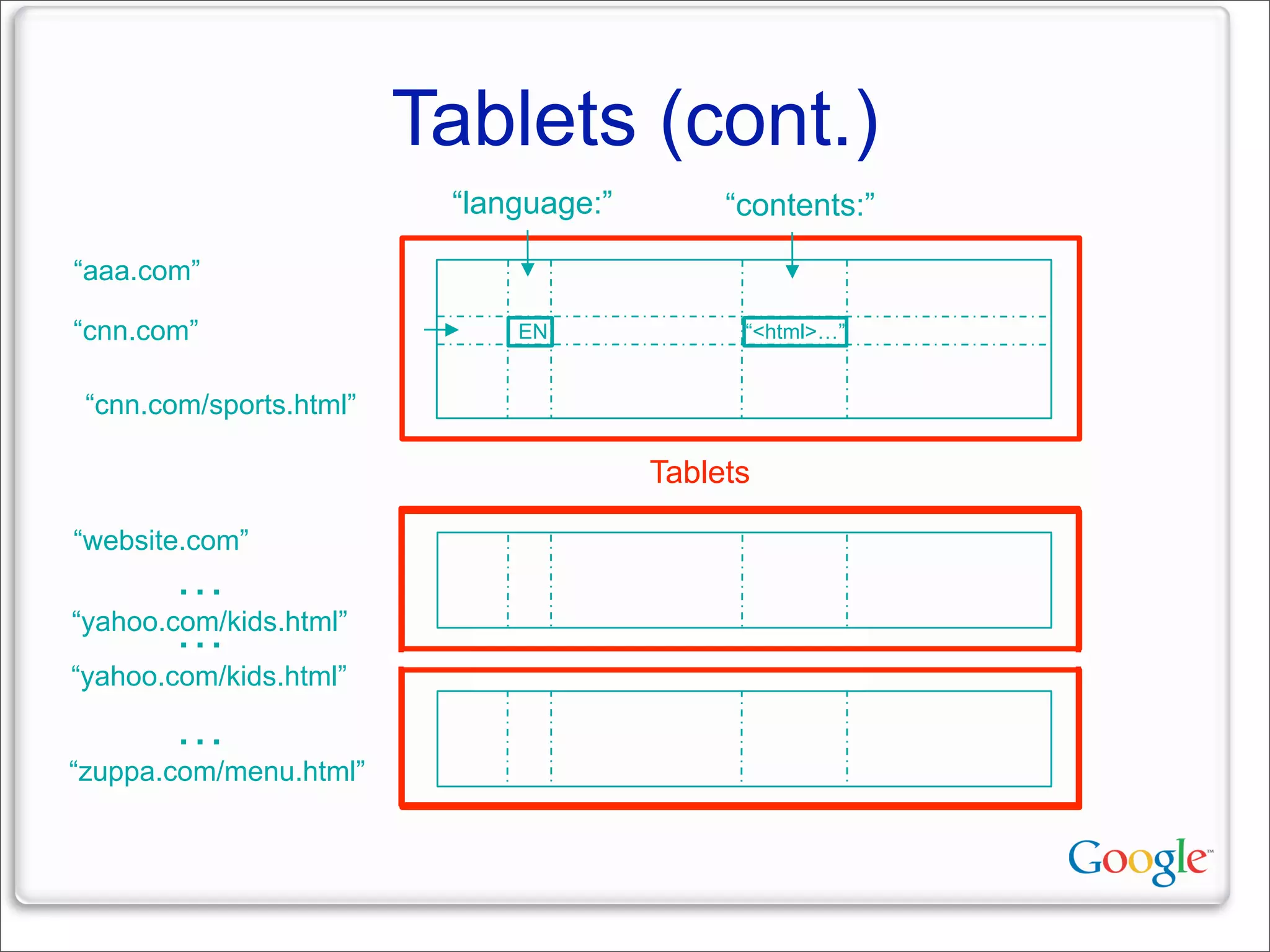

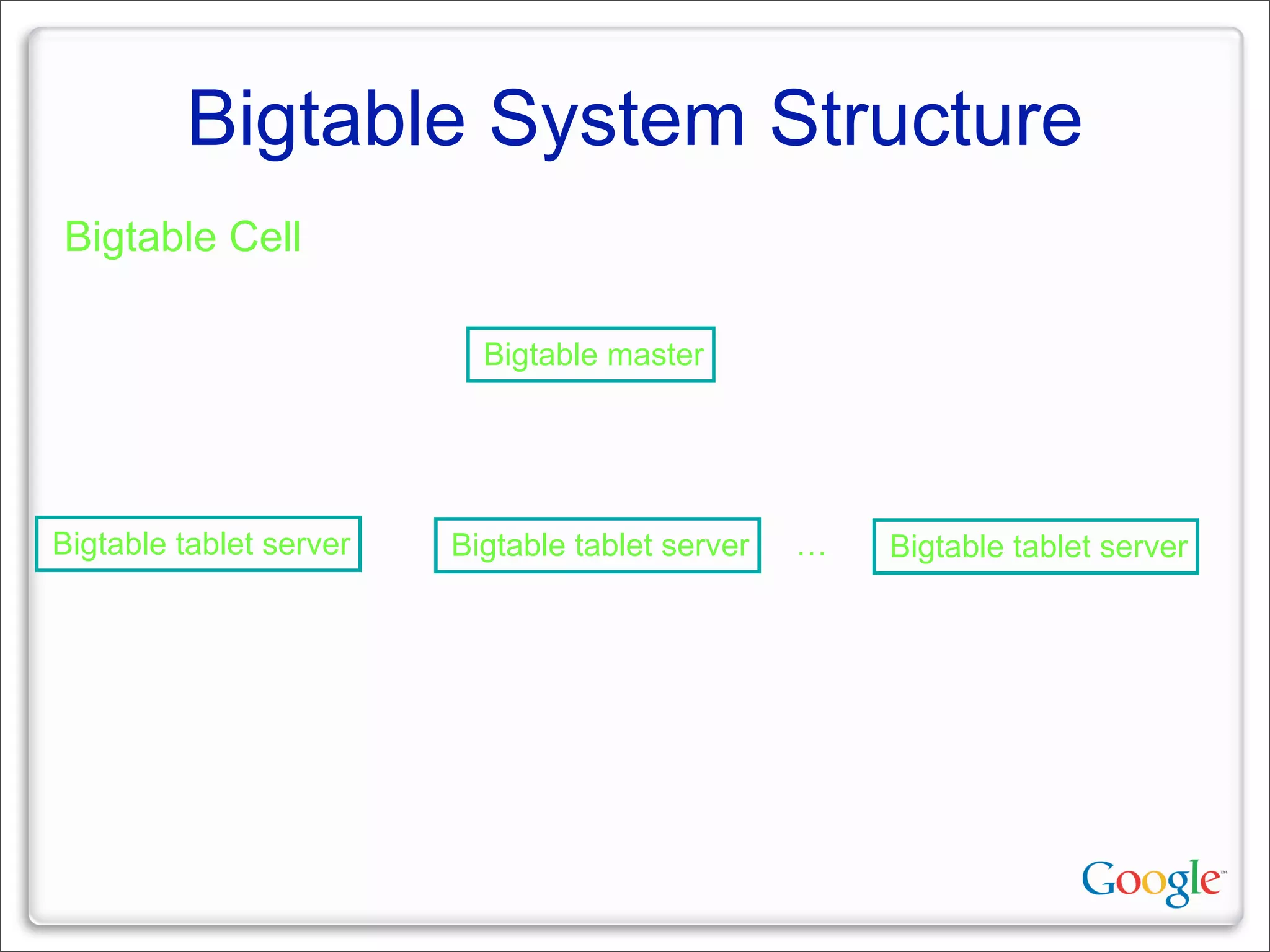

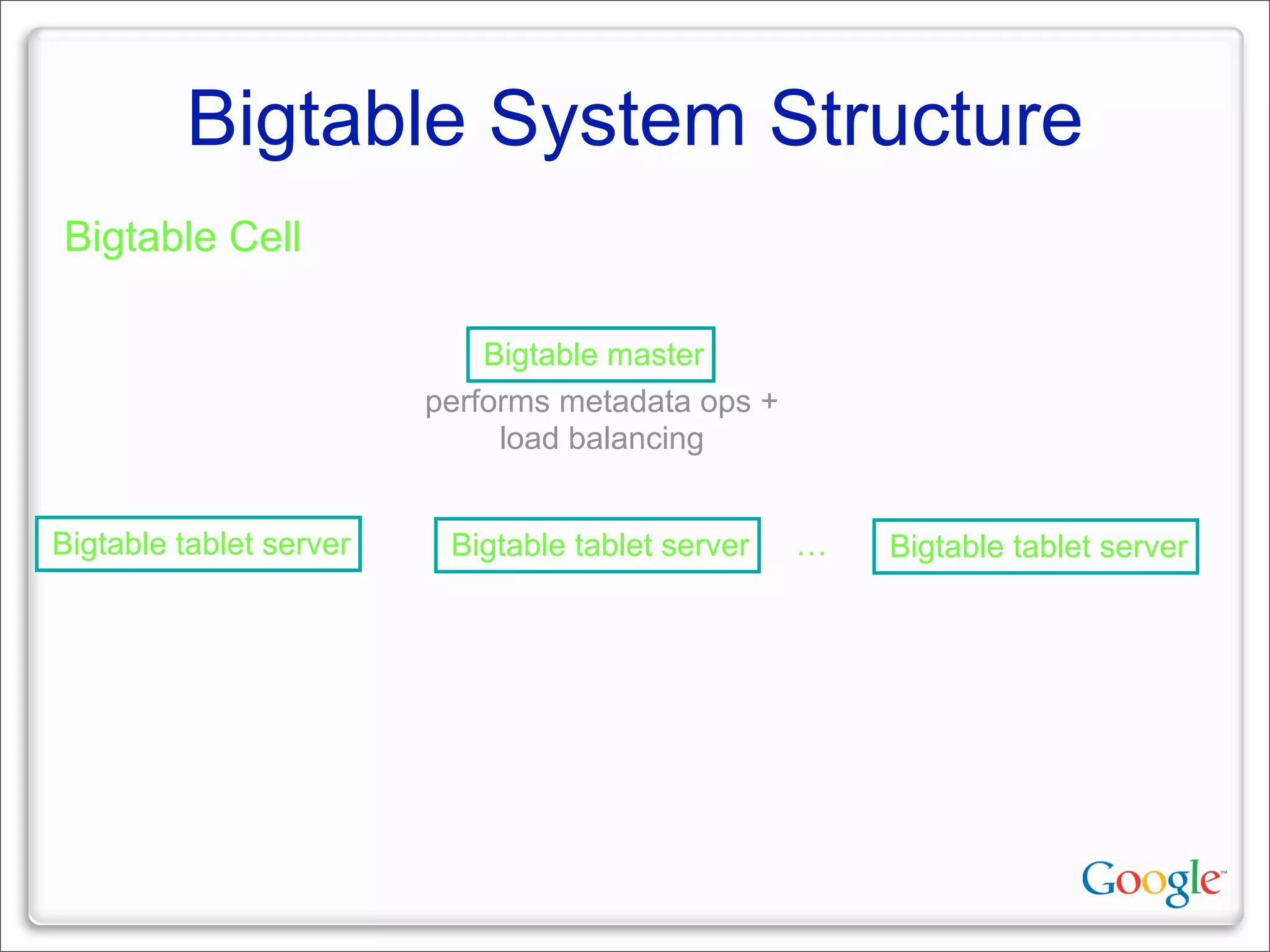

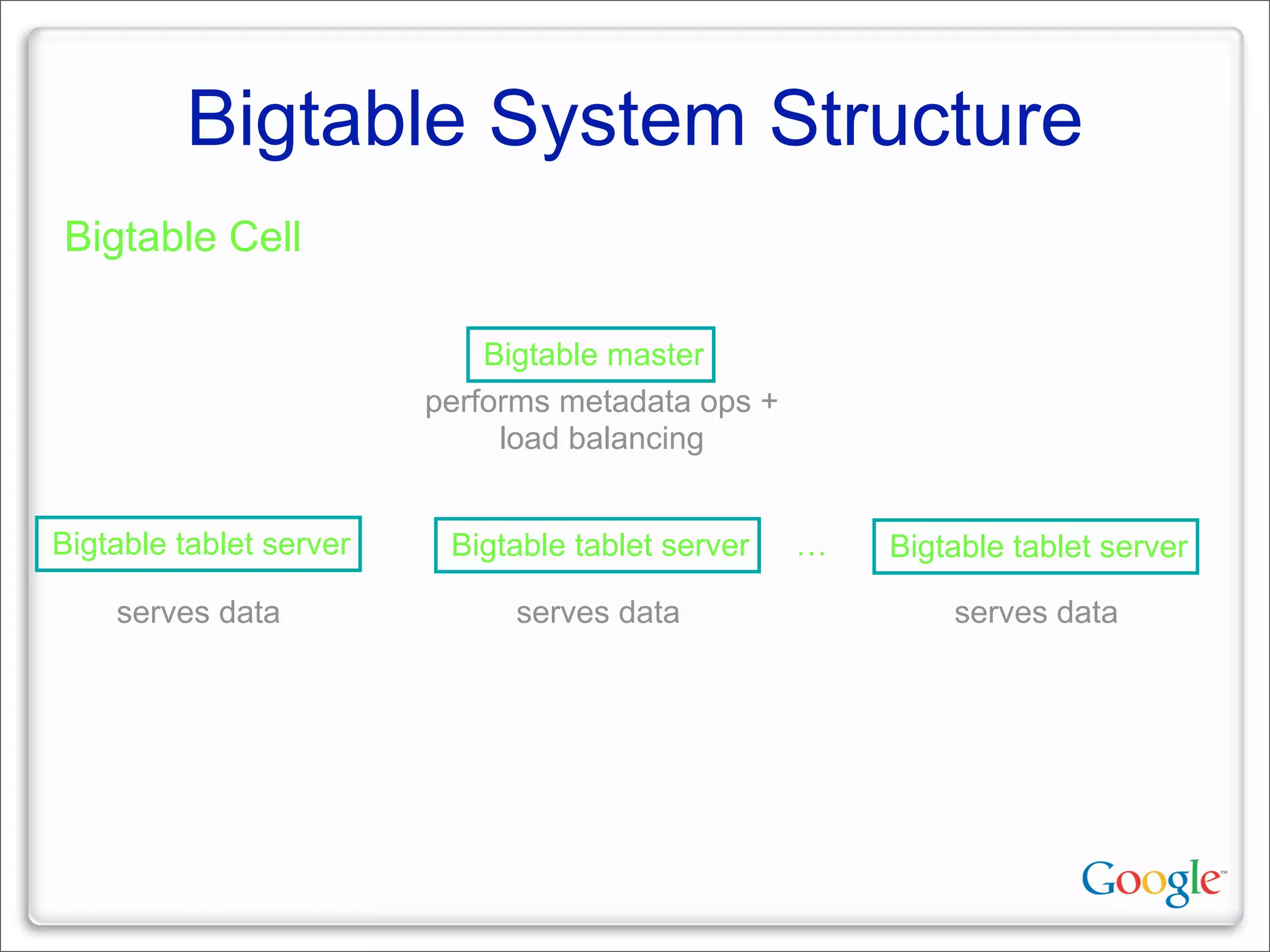

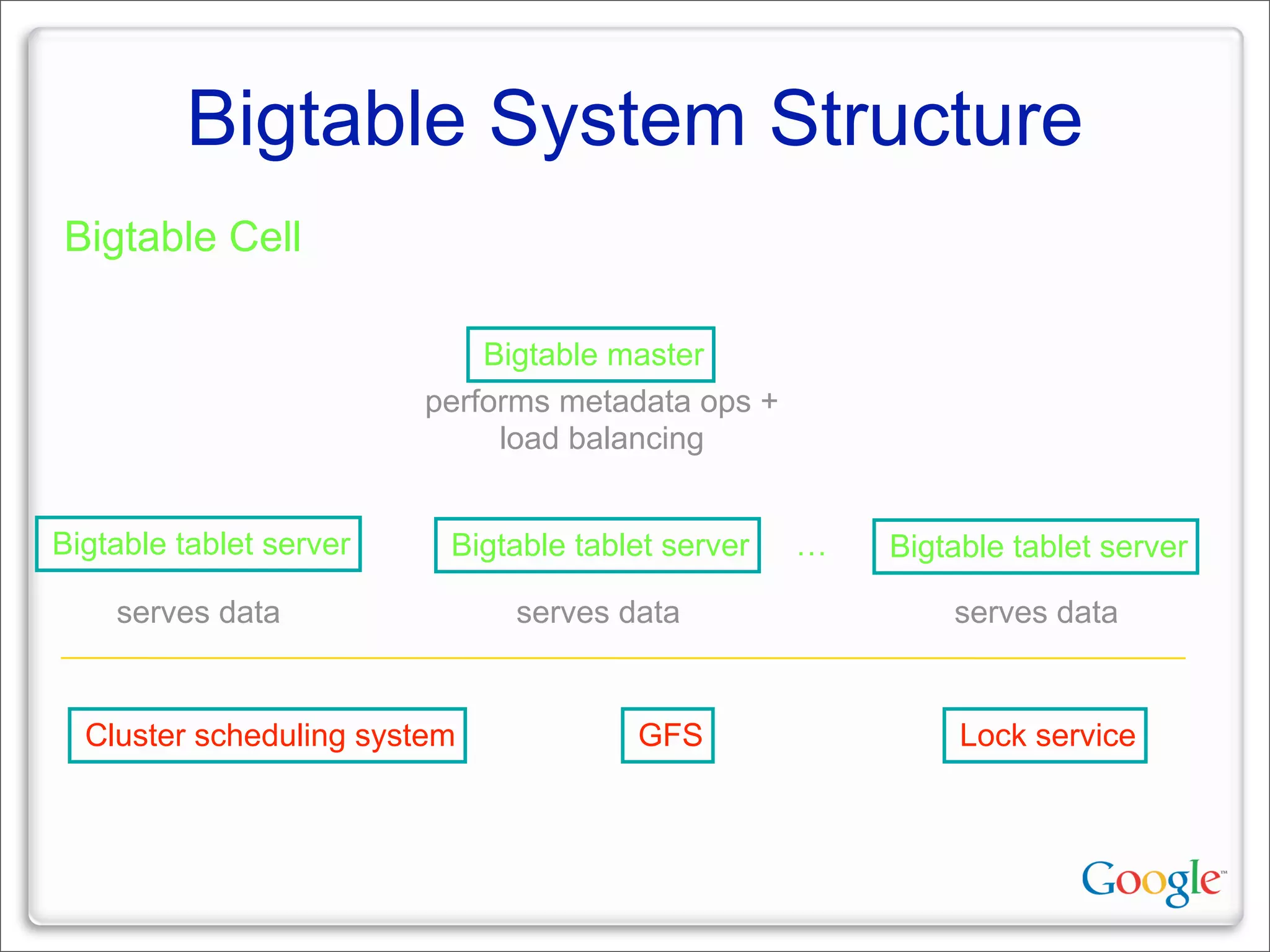

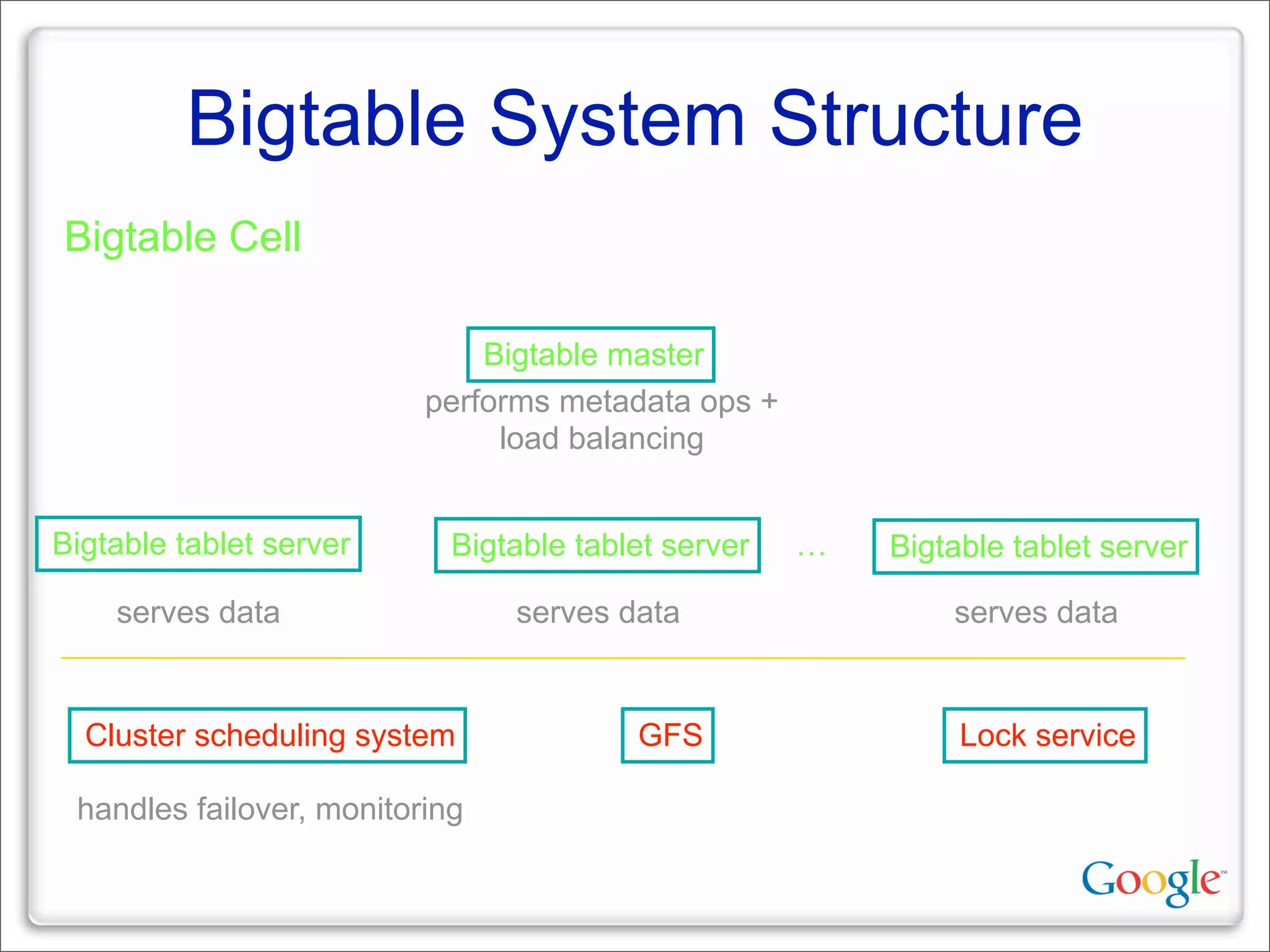

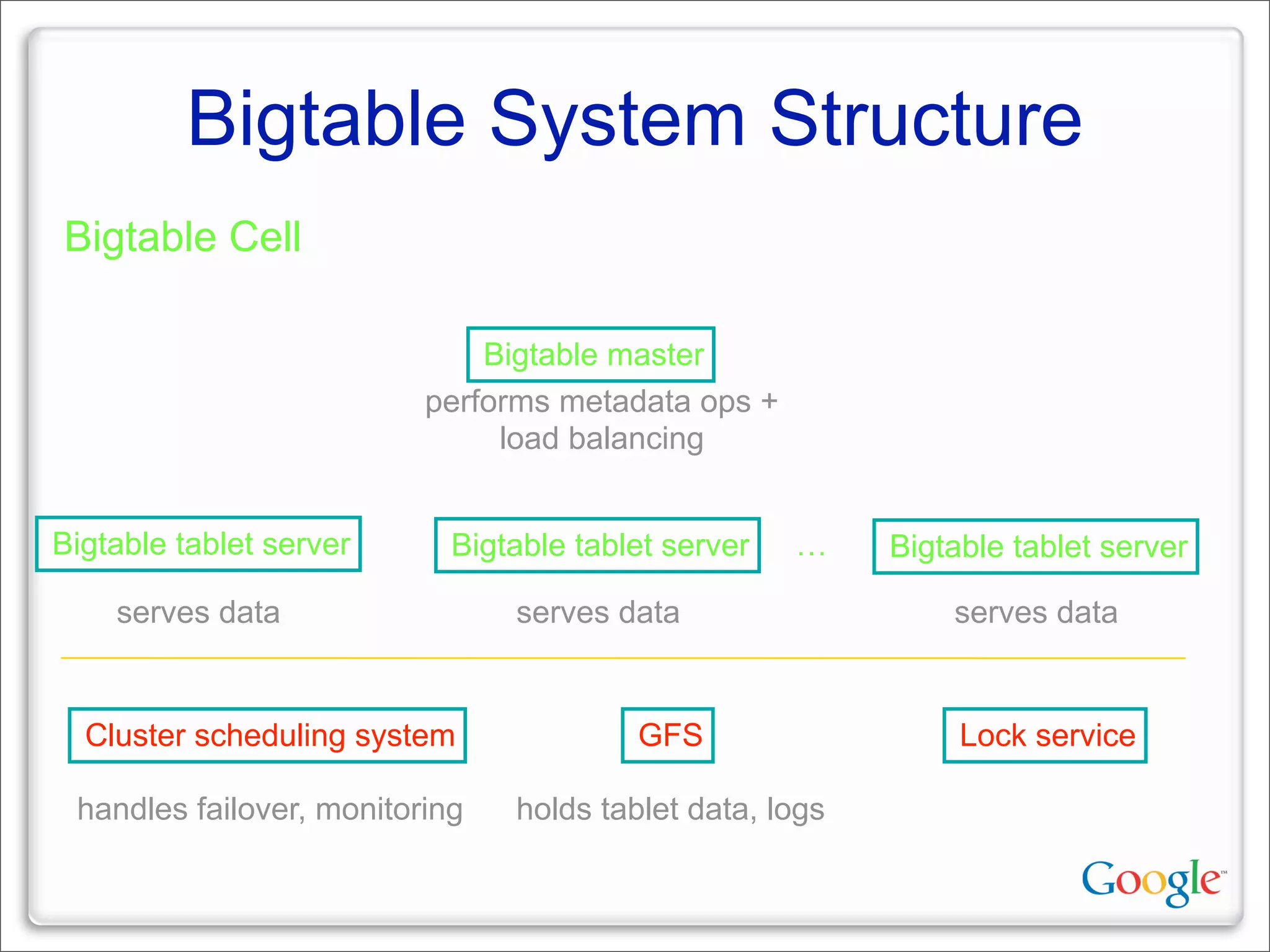

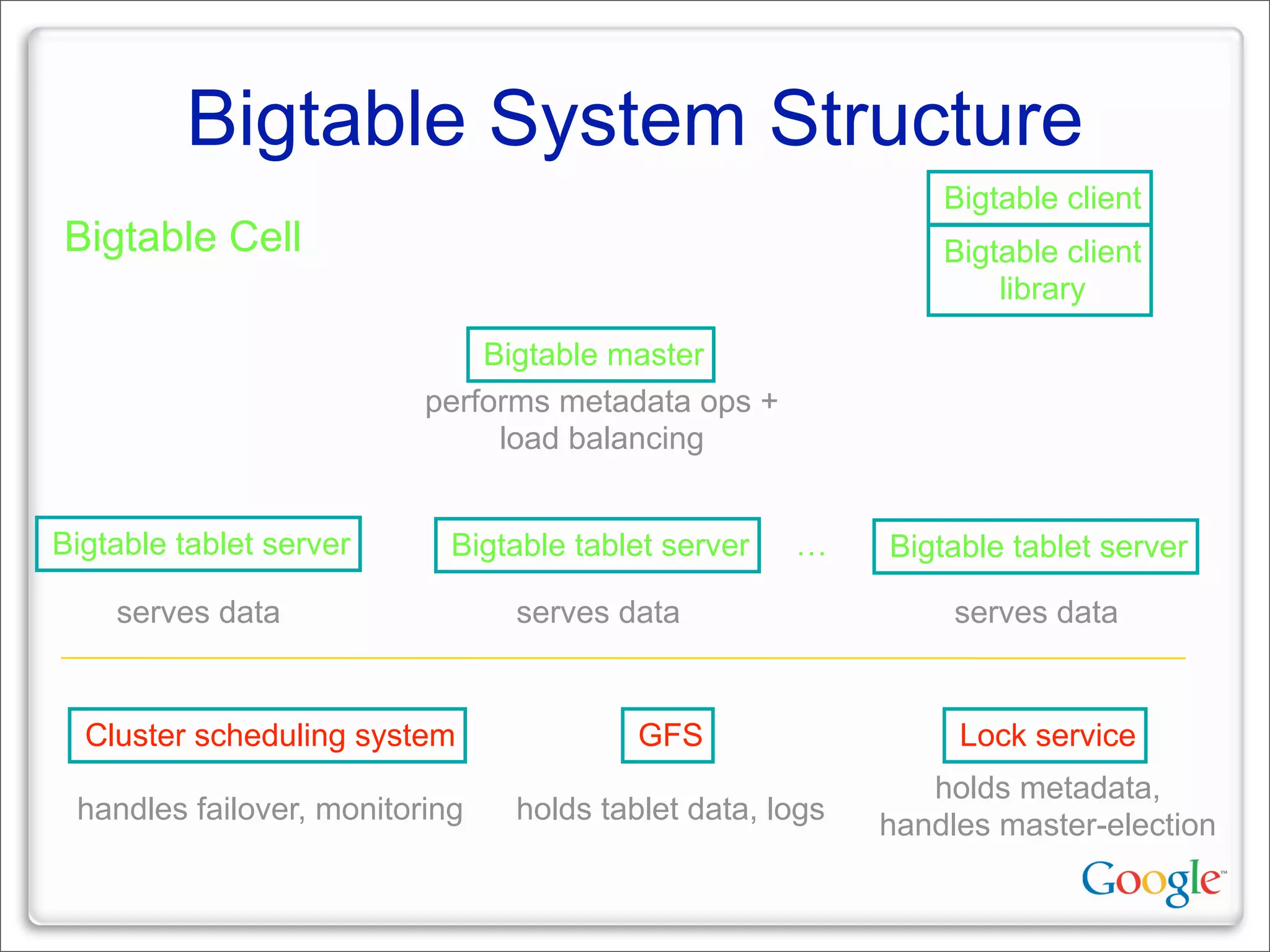

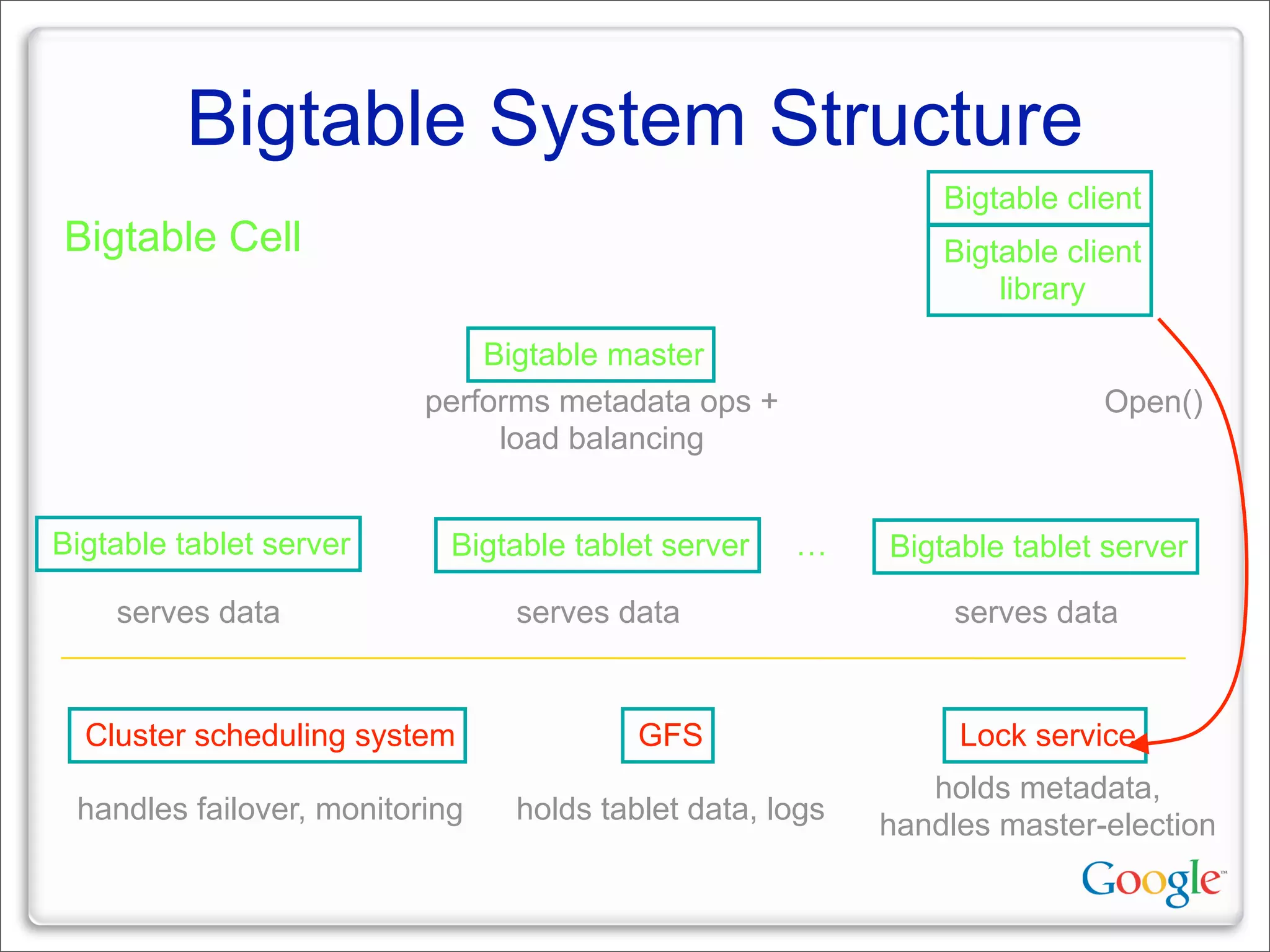

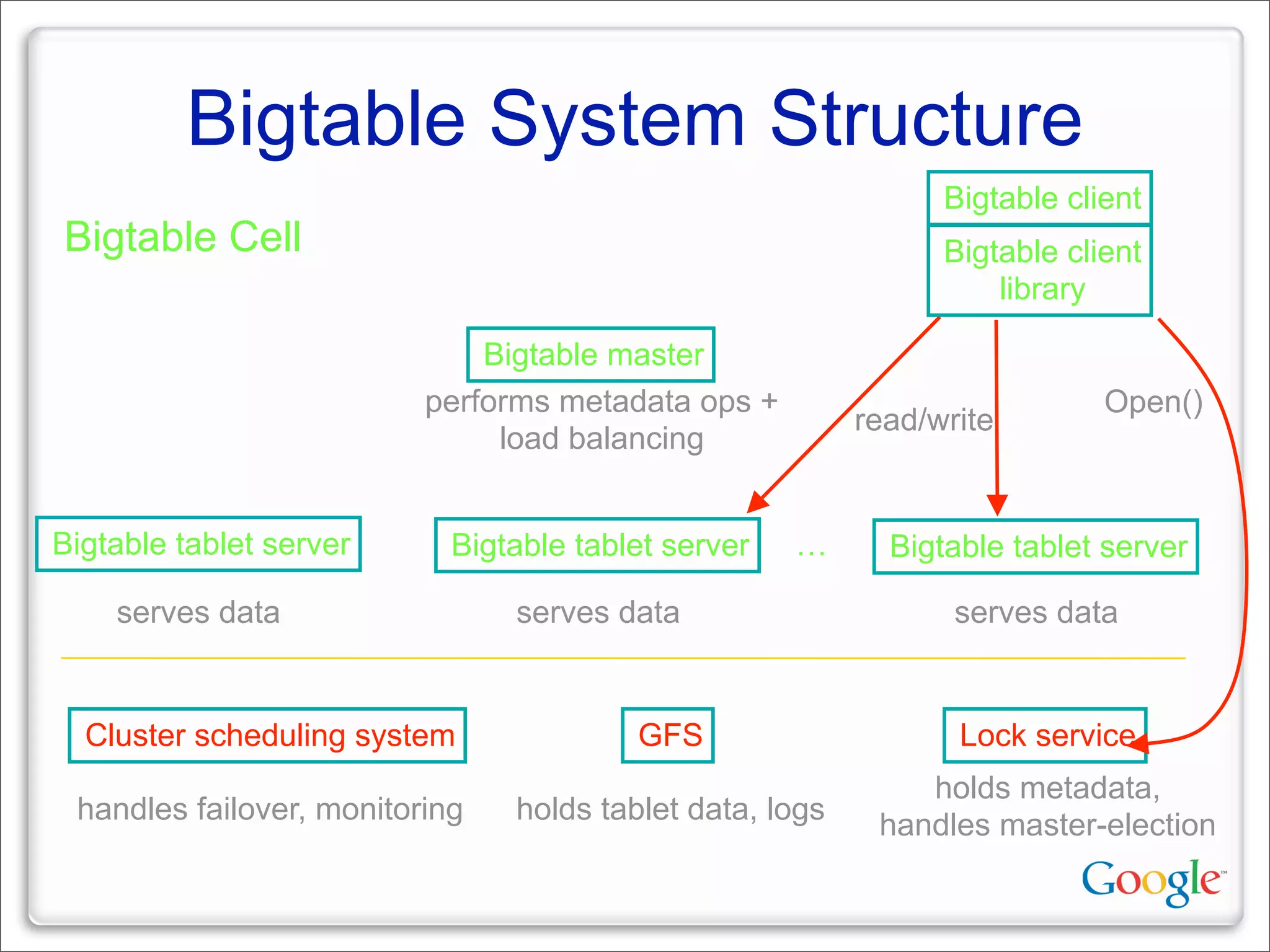

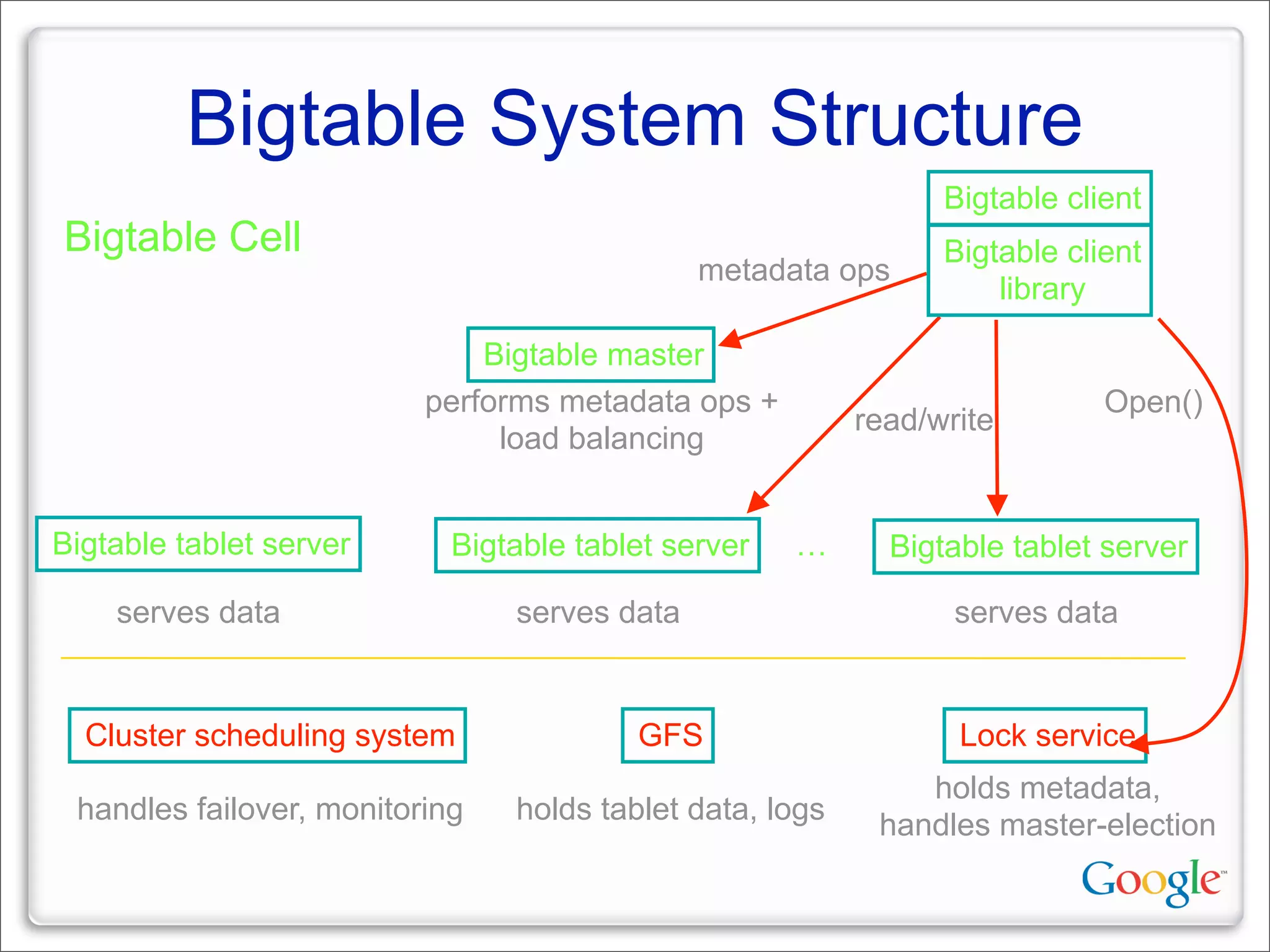

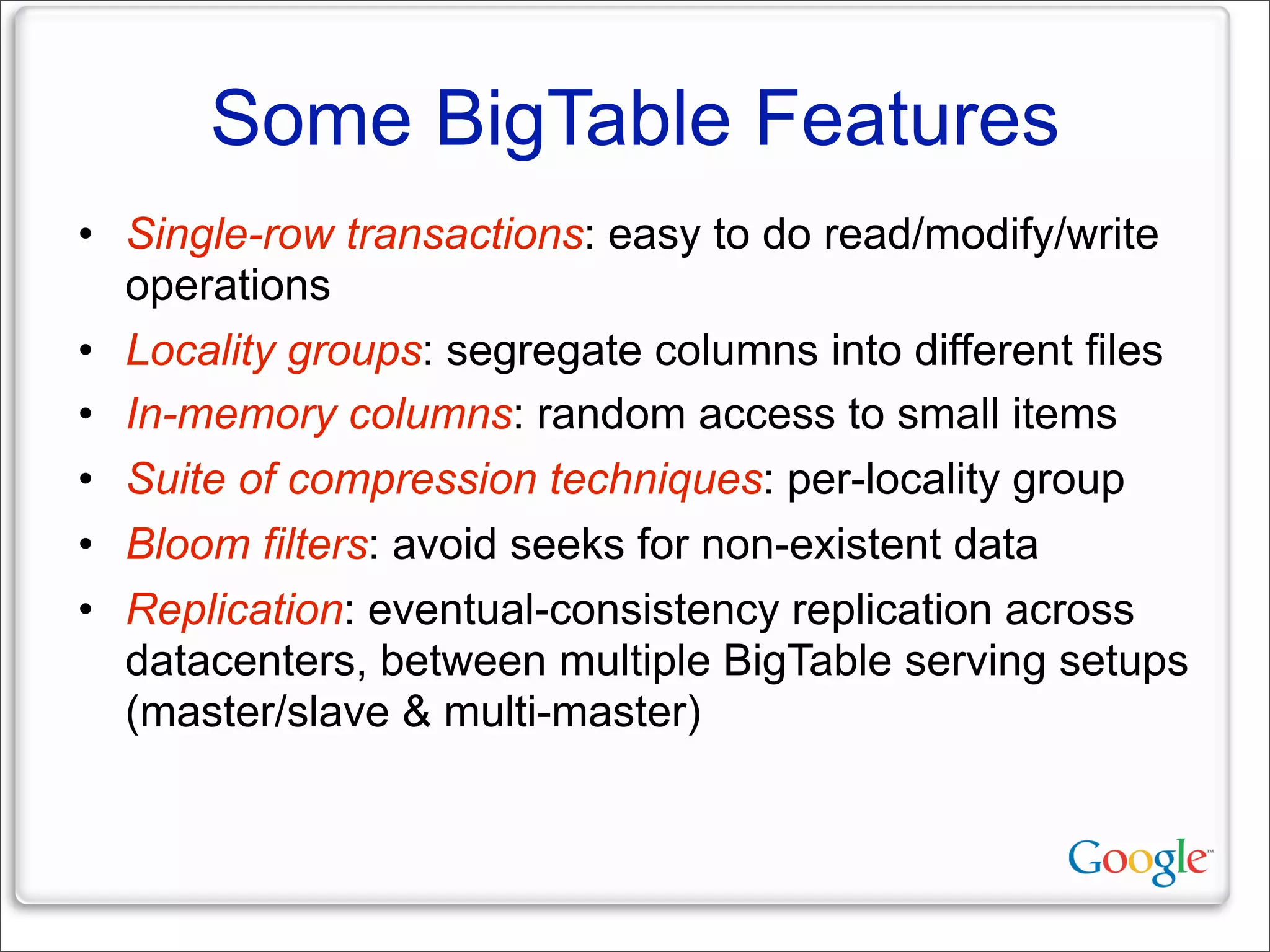

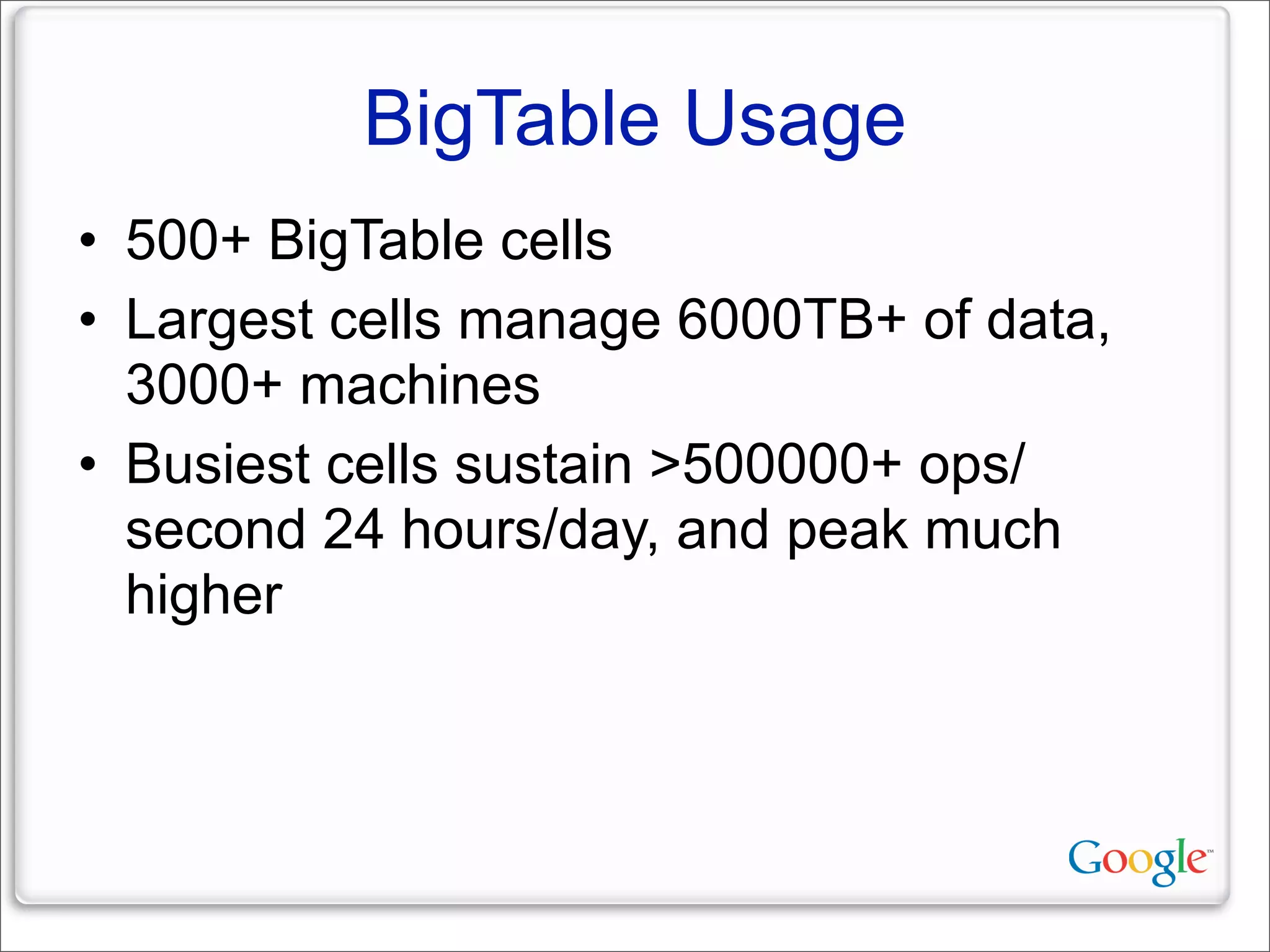

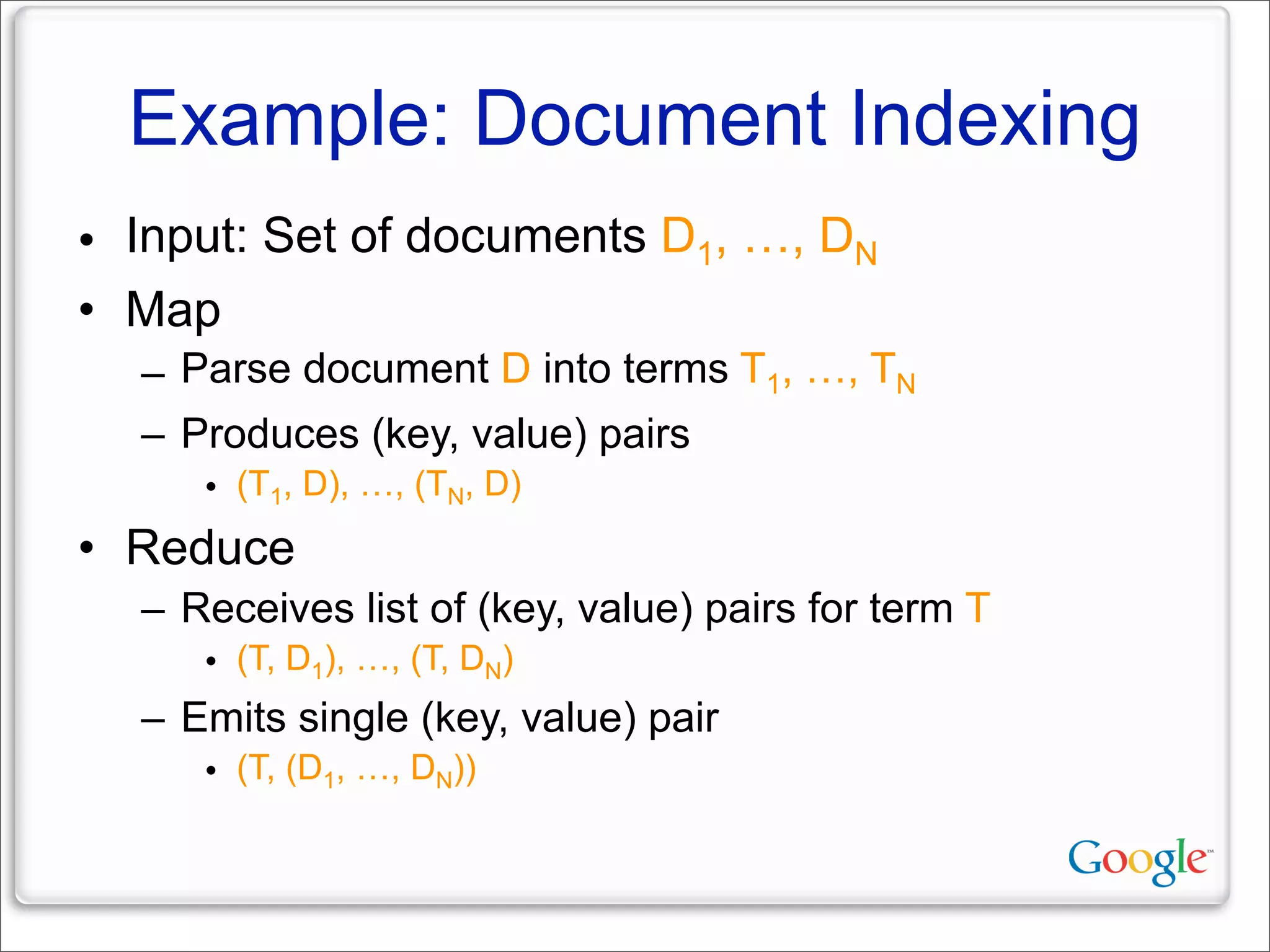

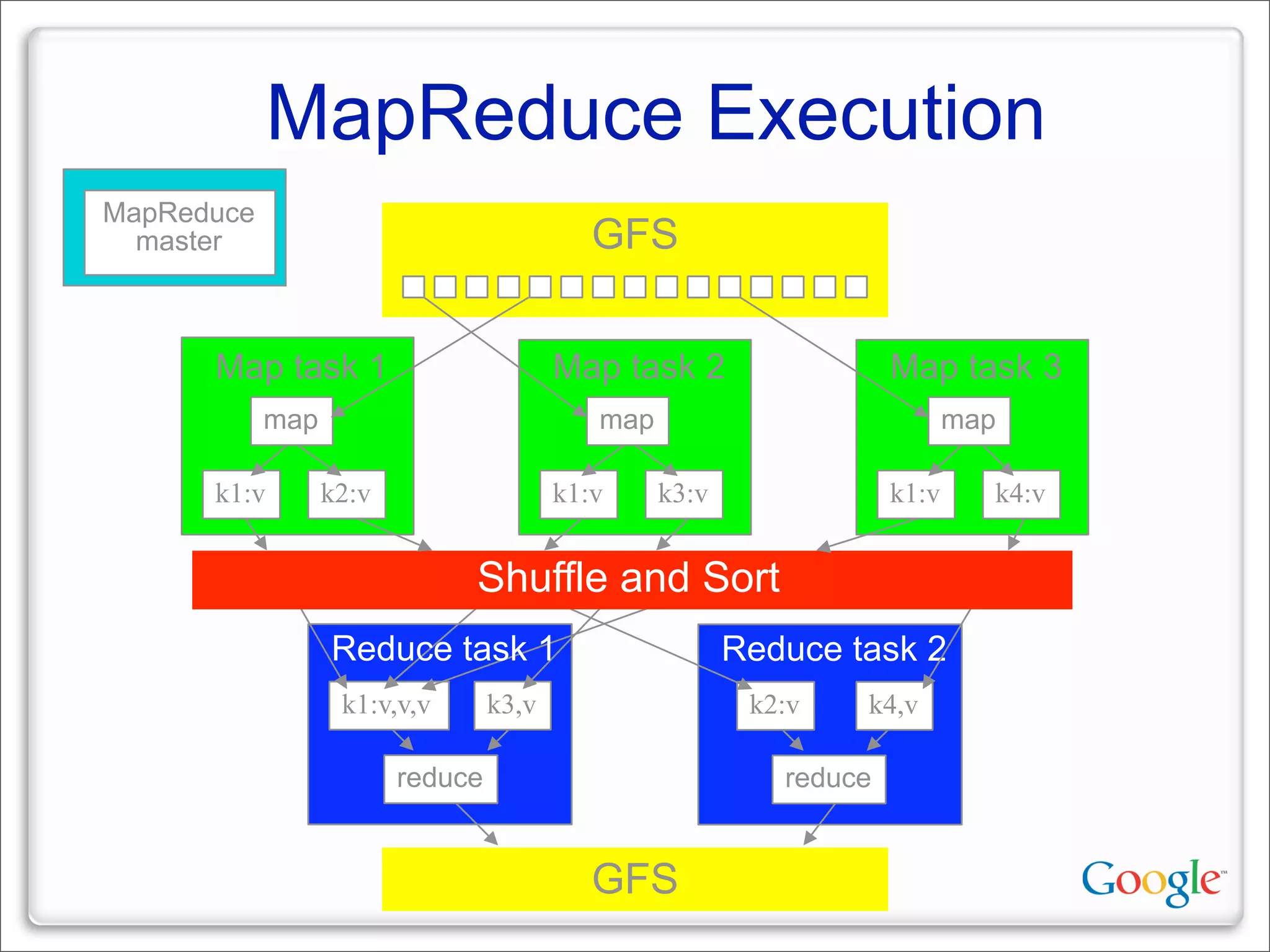

This presentation discusses Google's systems for handling large datasets, covering its hardware infrastructure, distributed systems such as GFS and BigTable, and future directions. It notes that Google uses many low-cost machines running Linux and in-house software to provide redundancy and scalability. The GFS distributed file system and the BigTable database store and serve petabytes of data across thousands of machines.