This document discusses basic file management commands in Linux. It covers commands like cp, mv, rm, mkdir and touch to copy, move, remove, create directories and update file timestamps. It provides examples of using each command, explaining how to copy and move files between directories, remove both files and directories, and use wildcards and recursion. Special characters for file globbing like asterisks and question marks are also described.

![Core Linux for Red Hat and Fedora learning under GNU Free Documentation License - Copyleft (c) Acácio Oliveira 2012

Everyone is permitted to copy and distribute verbatim copies of this license document, changing is allowed

Process text streams using filters

Rundown of commands

commands Overview


cp - copy files

find - find files based on command line specifications

mkdir - make a directory

mv - move or rename files and directories

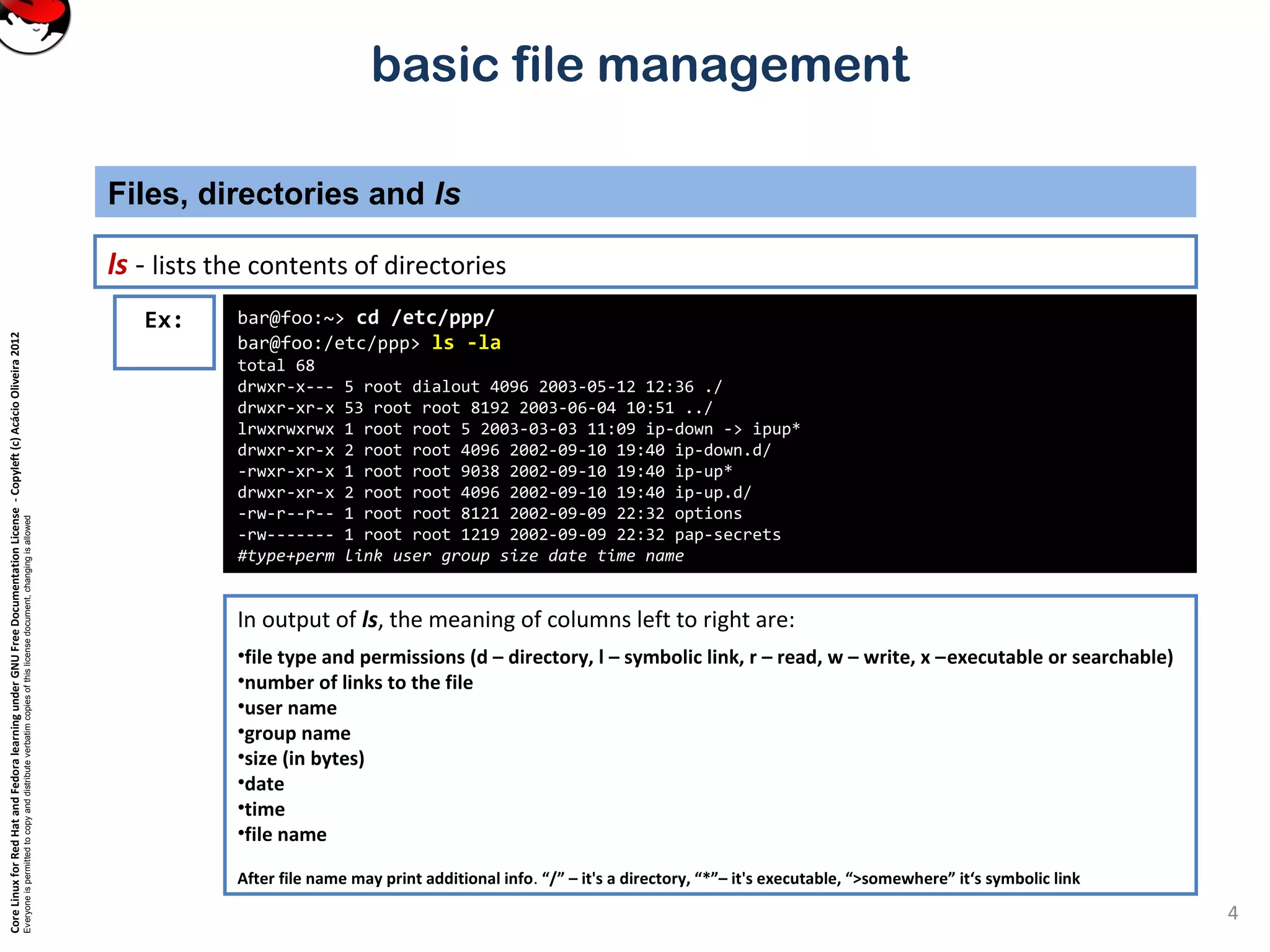

ls - list files

rm - remove files (and directories, if you ask nicely)

rmdir - remove directory

touch - touch a file to update its timestamp

file globbing - *, ?, [abc] and {abc,def} wildcards](https://image.slidesharecdn.com/txmjvkrtmeraxnjgzvb2-signature-b02a6c073768d8536fdd50c0a47af6292b168fbd705c8180447bb0b754b59738-poli-141223132219-conversion-gate02/75/3-3-perform-basic-file-management-3-2048.jpg)

![basic file management

File globbing (wildcards)

Special characters and strings that can stand in for any characters, or for specific characters.

foo:/etc $ ls -l host*

-rw-r--r-- 1 root root 17 Jul 23 2000 host.conf

-rw-rw-r-- 2 root root 291 Mar 31 15:03 hosts

-rw-r--r-- 1 root root 201 Feb 13 21:05 hosts.allow

-rw-rw-r-- 1 root root 196 Dec 9 15:06 hosts.bak

-rw-r--r-- 1 root root 357 Jan 23 19:39 hosts.deny

foo:/etc $ ls [A-Z]* -lad

drwxr-xr-x 3 root root 4096 Oct 14 11:07 CORBA

-rw-r--r-- 1 root root 2434 Sep 2 2002 DIR_COLORS

-rw-r--r-- 1 root root 2434 Sep 2 2002 DIR_COLORS.xterm

-rw-r--r-- 1 root root 92336 Jun 23 2002 Muttrc

drwxr-xr-x 18 root root 4096 Feb 24 19:54 X11

The shell expands the following special characters when they appear in a file name on the command line.

Filename expansion is only performed if the special characters are not quoted.

~ - the user's home directory (/home/user, or /root for root), e.g. echo ~/.bashrc

* - any sequence of characters (or no characters), e.g. echo /etc/*ost*

? - any one character, e.g. echo /etc/hos?

[abc] - any one of a, b, or c, e.g. echo /etc/host[s.]

{abc,def,ghi} - any of abc, def or ghi, e.g. echo /etc/hosts.{allow,deny}](https://image.slidesharecdn.com/txmjvkrtmeraxnjgzvb2-signature-b02a6c073768d8536fdd50c0a47af6292b168fbd705c8180447bb0b754b59738-poli-141223132219-conversion-gate02/75/3-3-perform-basic-file-management-5-2048.jpg)
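
The difference between quoted and unquoted patterns is easy to check in a scratch directory. The following is a minimal sketch, assuming `mktemp -d` is available (it is on typical Linux systems); the file names mirror the /etc examples above but are created locally, and the variable names are only illustrative.

```shell
# Work in a throwaway directory so the patterns match a known set of names.
workdir=$(mktemp -d)
cd "$workdir" || exit 1
touch host.conf hosts hosts.allow hosts.deny

# Unquoted: the shell replaces each pattern with the matching names
# before echo ever runs.
star=$(echo host*)            # every name starting with "host"
question=$(echo host?)        # "host" plus exactly one more character
bracket=$(echo hosts.[ad]*)   # "hosts." followed by a or d, then anything

# Quoted: no expansion happens, so echo receives the literal pattern.
quoted=$(echo 'host*')

printf '%s\n' "$star" "$question" "$bracket" "$quoted"

# Clean up the scratch directory.
cd / && rm -rf "$workdir"
```

Only the quoted form prints the pattern itself; the other three print file names.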

![basic file management

mkdir – make directory

Directories and files

[jack@foo tmp]$ mkdir dir1

[jack@foo tmp]$ mkdir dir2 dir3

[jack@foo tmp]$ ls -la

total 20

drwxrwxr-x 5 jack jack 4096 Apr 8 10:00 .

drwxr-xr-x 12 jack users 4096 Apr 8 10:00 ..

drwxrwxr-x 2 jack jack 4096 Apr 8 10:00 dir1

drwxrwxr-x 2 jack jack 4096 Apr 8 10:00 dir2

drwxrwxr-x 2 jack jack 4096 Apr 8 10:00 dir3

[jack@foo tmp]$ mkdir parent/sub/something

mkdir: cannot create directory `parent/sub/something': No such file or directory

[jack@foo tmp]$ mkdir -p parent/sub/something

[jack@foo tmp]$ find

.

./dir1

./dir2

./dir3

./parent

./parent/sub

./parent/sub/something

To make a directory, use mkdir. By default the parent directory must already exist, but with the -p switch mkdir also creates any missing parent directories.](https://image.slidesharecdn.com/txmjvkrtmeraxnjgzvb2-signature-b02a6c073768d8536fdd50c0a47af6292b168fbd705c8180447bb0b754b59738-poli-141223132219-conversion-gate02/75/3-3-perform-basic-file-management-6-2048.jpg)
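
The effect of -p can be reproduced without touching real directories. This is a small sketch that assumes `mktemp -d` is available; the scratch directory and the variable names `plain_result` and `tree` are illustrative only.

```shell
workdir=$(mktemp -d)
cd "$workdir" || exit 1

# Without -p, mkdir fails because parent/ and parent/sub/ do not exist yet.
if mkdir parent/sub/something 2>/dev/null; then
    plain_result=created
else
    plain_result=failed
fi

# With -p, the missing parents are created along the way.
mkdir -p parent/sub/something
# -p also lets mkdir succeed quietly when the directory already exists.
mkdir -p parent/sub/something

# List what was created, in a locale-independent order.
tree=$(find parent | LC_ALL=C sort)

echo "mkdir without -p: $plain_result"
echo "$tree"

cd / && rm -rf "$workdir"
```

Because -p tolerates existing directories, it is a common choice in scripts that may run more than once.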

![basic file management

rmdir – remove directory

Directories and files

[jack@foo tmp]$ rmdir dir1

[jack@foo tmp]$ rmdir dir2 dir3

[jack@foo tmp]$ rmdir parent

rmdir: `parent': Directory not empty

[jack@foo tmp]$ rmdir parent/sub/something/

[jack@foo tmp]$ rmdir parent

rmdir: `parent': Directory not empty

[jack@foo tmp]$ rmdir parent/sub

[jack@foo tmp]$ rmdir parent

[jack@foo tmp]$ find

.

[jack@foo tmp]$ rmdir /etc

rmdir: `/etc': Permission denied

rmdir removes empty directories only. If a directory contains any files or subdirectories, rmdir fails; rm -r can remove such a directory along with its contents.](https://image.slidesharecdn.com/txmjvkrtmeraxnjgzvb2-signature-b02a6c073768d8536fdd50c0a47af6292b168fbd705c8180447bb0b754b59738-poli-141223132219-conversion-gate02/75/3-3-perform-basic-file-management-8-2048.jpg)
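
The contrast between rmdir and rm -r can be sketched as follows. The example assumes `mktemp -d` is available and uses made-up directory names (`parent`, `other`) inside a scratch directory.

```shell
workdir=$(mktemp -d)
cd "$workdir" || exit 1
mkdir -p parent/sub/something

# rmdir refuses to remove a directory that still has entries in it.
if rmdir parent 2>/dev/null; then
    first_try=removed
else
    first_try=refused
fi

# Remove the tree bottom-up, one empty directory at a time ...
rmdir parent/sub/something
rmdir parent/sub
rmdir parent
[ -e parent ] && after_rmdir=present || after_rmdir=gone

# ... or remove a non-empty tree in a single step with rm -r.
mkdir -p other/sub
touch other/sub/file
rm -r other
[ -e other ] && after_rm=present || after_rm=gone

echo "first rmdir: $first_try; after rmdir chain: $after_rmdir; after rm -r: $after_rm"

cd / && rm -rf "$workdir"
```

The refusal is a useful safety net: rmdir cannot delete data by accident, whereas rm -r will.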

![basic file management

touch – update a file's time stamp

Directories and files

[jack@foo tmp]$ mkdir dir4

[jack@foo tmp]$ cd dir4

[jack@foo dir4]$ ls -l

total 0

[jack@foo dir4]$ touch newfile

[jack@foo dir4]$ ls -l

total 0

-rw-rw-r-- 1 jack jack 0 Apr 8 10:28 newfile

touch was originally written to update the time stamp of files. However, due to a programming error, touch would create an empty file if the named file did not exist. By the time this error was discovered, people were using touch to create empty files, and this is now an accepted use of touch.

[jack@foo dir4]$ touch newfile

[jack@foo dir4]$ ls -l

total 0

-rw-rw-r-- 1 jack jack 0 Apr 8 10:29 newfile

The second time we run touch, it updates the file's time stamp (10:28 becomes 10:29).](https://image.slidesharecdn.com/txmjvkrtmeraxnjgzvb2-signature-b02a6c073768d8536fdd50c0a47af6292b168fbd705c8180447bb0b754b59738-poli-141223132219-conversion-gate02/75/3-3-perform-basic-file-management-9-2048.jpg)
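
Both uses of touch, creating an empty file and refreshing a time stamp, can be checked without waiting a minute between runs. The sketch below assumes `mktemp -d` is available and uses the standard `-t` option to set an old time stamp first; the file name `reference` is only an illustration.

```shell
workdir=$(mktemp -d)
cd "$workdir" || exit 1

# touch creates an empty file when the name does not exist yet.
touch newfile
size=$(wc -c < newfile | tr -d ' ')

# Give two files the same old modification time (1 Jan 2000, 12:00) ...
touch -t 200001011200 newfile reference
# ... then touch only one of them again, which sets its time to "now".
touch newfile

# find prints newfile only if it was modified more recently than reference.
newer=$(find newfile -newer reference)

echo "size=$size newer-than-reference=$newer"

cd / && rm -rf "$workdir"
```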

![basic file management

Directories and files

[jack@foo dir4]$ touch file-{one,two}-{a,b}

[jack@foo dir4]$ ls -l

total 0

-rw-rw-r-- 1 jack jack 0 Apr 8 10:33 file-one-a

-rw-rw-r-- 1 jack jack 0 Apr 8 10:33 file-one-b

-rw-rw-r-- 1 jack jack 0 Apr 8 10:33 file-two-a

-rw-rw-r-- 1 jack jack 0 Apr 8 10:33 file-two-b

-rw-rw-r-- 1 jack jack 0 Apr 8 10:29 newfile

touch can create more than one file at a time; here the shell's brace expansion generates the four file names.](https://image.slidesharecdn.com/txmjvkrtmeraxnjgzvb2-signature-b02a6c073768d8536fdd50c0a47af6292b168fbd705c8180447bb0b754b59738-poli-141223132219-conversion-gate02/75/3-3-perform-basic-file-management-10-2048.jpg)

![basic file management

rm – remove files

Directories and files

[jack@foo dir4]$ cd ..

[jack@foo tmp]$ ls dir4/

file-one-a file-one-b file-two-a file-two-b newfile

[jack@foo tmp]$ rm dir4/file*a

[jack@foo tmp]$ ls dir4/

file-one-b file-two-b newfile

rm -r can be used to remove directories and the files contained in them.

rm -i specifies interactive behaviour (confirmation before each removal).

[jack@foo tmp]$ rm -ri dir4

rm: descend into directory `dir4'? y

rm: remove regular empty file `dir4/newfile'? y

rm: remove regular empty file `dir4/file-one-b'? y

rm: remove regular empty file `dir4/file-two-b'? y

rm: remove directory `dir4'? y

rm -rf removes directories and their files without prompting.

[jack@foo tmp]$ rm -rf dir4](https://image.slidesharecdn.com/txmjvkrtmeraxnjgzvb2-signature-b02a6c073768d8536fdd50c0a47af6292b168fbd705c8180447bb0b754b59738-poli-141223132219-conversion-gate02/75/3-3-perform-basic-file-management-11-2048.jpg)
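
The rm session above can be replayed non-interactively in a scratch directory. This is a sketch under the assumption that `mktemp -d` is available; it recreates the same five file names and leaves out -i so that it runs without prompts.

```shell
workdir=$(mktemp -d)
cd "$workdir" || exit 1
mkdir dir4
touch dir4/file-one-a dir4/file-one-b dir4/file-two-a dir4/file-two-b dir4/newfile

# Remove only the names matching the pattern; the shell expands it first,
# so rm receives dir4/file-one-a and dir4/file-two-a.
rm dir4/file*a
remaining=$(cd dir4 && echo *)

# Plain rm will not remove a directory ...
if rm dir4 2>/dev/null; then
    plain_rm=removed
else
    plain_rm=refused
fi

# ... but rm -r removes it together with everything inside.
rm -r dir4
[ -e dir4 ] && final=present || final=gone

echo "left after pattern rm: $remaining"
echo "plain rm on a directory: $plain_rm; after rm -r: $final"

cd / && rm -rf "$workdir"
```

Since rm has no undo, running `echo dir4/file*a` first is a cheap way to preview what a pattern will match.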

![basic file management

cp – copy files

Copying and moving

[jack@foo jack]$ ls

[jack@foo jack]$ cp /etc/passwd .

[jack@foo jack]$ ls

passwd

This copies a file (/etc/passwd) to a directory (. is the current directory).

cp -v is verbose about the progress of the copying process.

[jack@foo jack]$ mkdir copybin

[jack@foo jack]$ cp /bin/* ./copybin

[jack@foo jack]$ cp -v /bin/* ./copybin

`/bin/arch' -> `./copybin/arch'

`/bin/ash' -> `./copybin/ash'

`/bin/ash.static' -> `./copybin/ash.static'

`/bin/aumix-minimal' -> `./copybin/aumix-minimal'

`/bin/awk' -> `./copybin/awk'

cp has 2 modes of usage:

1. Copy one or more files to a directory – cp file1 file2 file3 /home/user/directory/

2. Copy a file to a new file name – cp file1 file13

If more than 2 parameters are given to cp, the last parameter must be the destination directory.](https://image.slidesharecdn.com/txmjvkrtmeraxnjgzvb2-signature-b02a6c073768d8536fdd50c0a47af6292b168fbd705c8180447bb0b754b59738-poli-141223132219-conversion-gate02/75/3-3-perform-basic-file-management-12-2048.jpg)
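
The two modes of cp, and the rule about the last parameter, can be tried out as follows. This is a minimal sketch assuming `mktemp -d` is available; `file1`, `file2`, `file13` and `directory` are placeholder names created inside a scratch directory.

```shell
workdir=$(mktemp -d)
cd "$workdir" || exit 1
echo one > file1
echo two > file2
mkdir directory

# Mode 1: several sources, and the last argument is an existing directory.
cp file1 file2 directory/

# Mode 2: exactly one source and a new file name as the destination.
cp file1 file13

# With more than two arguments the last one must be a directory,
# so this call fails because file13 is a regular file.
if cp file1 file2 file13 2>/dev/null; then
    bad_call=ok
else
    bad_call=failed
fi

in_directory=$(cd directory && echo *)
copy_content=$(cat file13)
echo "directory holds: $in_directory"
echo "file13 contains: $copy_content"
echo "three-argument copy onto a file: $bad_call"

cd / && rm -rf "$workdir"
```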

![basic file management

mv – move or rename files

Copying and moving

[jack@foo jack]$ mkdir fred

[jack@foo jack]$ mv -v fred joe

`fred' -> `joe'

[jack@foo jack]$ cp -v /etc/passwd joe/

`/etc/passwd' -> `joe/passwd'

[jack@foo jack]$ mv -v joe/passwd .

`joe/passwd' -> `./passwd'

Use -v to make mv verbose.

mv is also the command used for renaming files.

[jack@foo jack]$ mv -v passwd ichangedthenamehaha

`passwd' -> `ichangedthenamehaha'

[jack@foo jack]$ cp /etc/services .

mv (like cp) has 2 modes of operation:

1. Move one or more files to a directory – mv file1 file2 file3 /home/user/directory/

2. Move a file to a new file name (rename) – mv file1 file13

If more than 2 parameters are given to mv, the last parameter must be the destination directory.](https://image.slidesharecdn.com/txmjvkrtmeraxnjgzvb2-signature-b02a6c073768d8536fdd50c0a47af6292b168fbd705c8180447bb0b754b59738-poli-141223132219-conversion-gate02/75/3-3-perform-basic-file-management-13-2048.jpg)
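
Whether mv renames or moves depends on whether the destination already exists as a directory. The sketch below replays the fred/joe session in a scratch directory; it assumes `mktemp -d` is available, and the file named `passwd` here is a local stand-in, not a copy of /etc/passwd.

```shell
workdir=$(mktemp -d)
cd "$workdir" || exit 1
mkdir fred
echo data > passwd

# Rename: the destination name does not exist, so fred simply becomes joe.
mv fred joe

# Move into a directory: the destination exists and is a directory,
# so the file keeps its name and lands inside it.
mv passwd joe/

# Move back out and rename in one step.
mv joe/passwd ./renamed

# Show the resulting tree in a locale-independent order.
listing=$(find . | LC_ALL=C sort)
echo "$listing"

cd / && rm -rf "$workdir"
```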

![basic file management

find – able to find files

find

[jack@tonto jack]$ find /usr/share/doc/unzip-5.50/ /etc/skel/

/usr/share/doc/unzip-5.50/

/usr/share/doc/unzip-5.50/BUGS

/usr/share/doc/unzip-5.50/INSTALL

/usr/share/doc/unzip-5.50/LICENSE

/usr/share/doc/unzip-5.50/README

/etc/skel/

/etc/skel/.kde

/etc/skel/.kde/Autostart

/etc/skel/.kde/Autostart/Autorun.desktop

/etc/skel/.kde/Autostart/.directory

/etc/skel/.bash_logout

/etc/skel/.bash_profile

.. // ..

find can select files that:

• have names that contain certain text or match a given pattern

• are links to specific files

• were last used during a given period of time

• are within a given range of size

• are of a specified type (normal file, directory, symbolic link, etc.)

• are owned by a certain user or group or have specified permissions.

The simplest way to use find is to give it a list of directories to search. By default, find prints the name of every file it finds.](https://image.slidesharecdn.com/txmjvkrtmeraxnjgzvb2-signature-b02a6c073768d8536fdd50c0a47af6292b168fbd705c8180447bb0b754b59738-poli-141223132219-conversion-gate02/75/3-3-perform-basic-file-management-14-2048.jpg)
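
A few of the conditions listed above map onto standard find tests (`-name`, `-type`, `-size`). The following sketch assumes `mktemp -d` is available and builds a tiny tree with invented names (`docs`, `README`, `empty.txt`, `link-to-readme`) so that the matches are predictable.

```shell
workdir=$(mktemp -d)
cd "$workdir" || exit 1
mkdir -p docs/old
echo "some text" > docs/README
: > docs/old/empty.txt
ln -s ../README docs/old/link-to-readme

# No tests given: find prints every name under the starting directory.
everything=$(find docs | LC_ALL=C sort)

# Name matches a pattern (quoted so that find, not the shell, sees the *).
by_name=$(find docs -name '*.txt')

# Of a given type: d = directory, f = regular file, l = symbolic link.
dirs=$(find docs -type d | LC_ALL=C sort)
links=$(find docs -type l)

# By size: regular files occupying zero 512-byte blocks, i.e. empty files.
empty=$(find docs -type f -size 0)

printf '%s\n' "$everything" "--" "$by_name" "$dirs" "$links" "$empty"

cd / && rm -rf "$workdir"
```

Tests can be combined on one command line; find then prints only the files that satisfy all of them.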