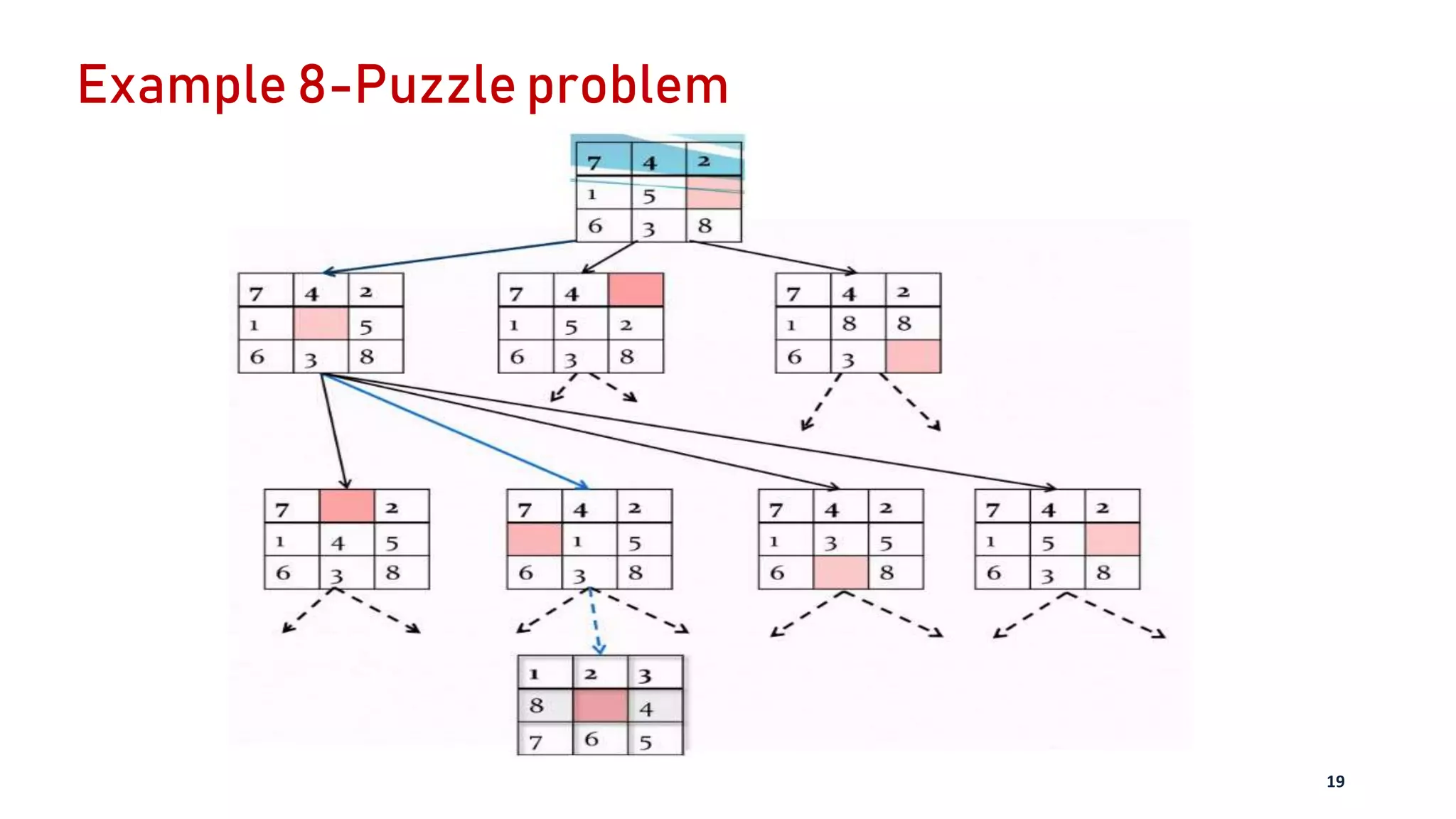

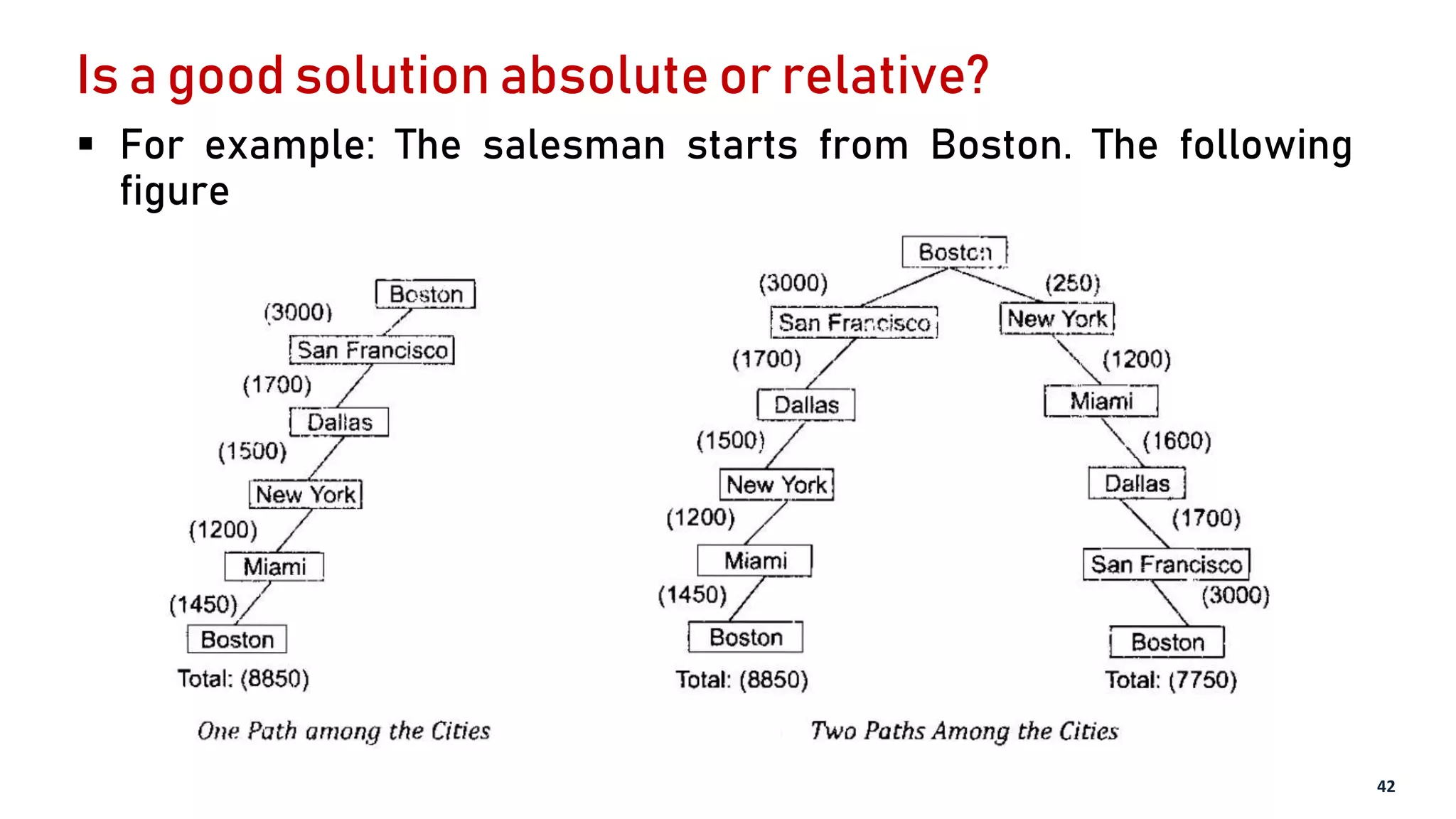

1. The document discusses formulating problems as state space searches: the problem is represented as a graph whose nodes are states and whose edges are operators that move from one state to another.

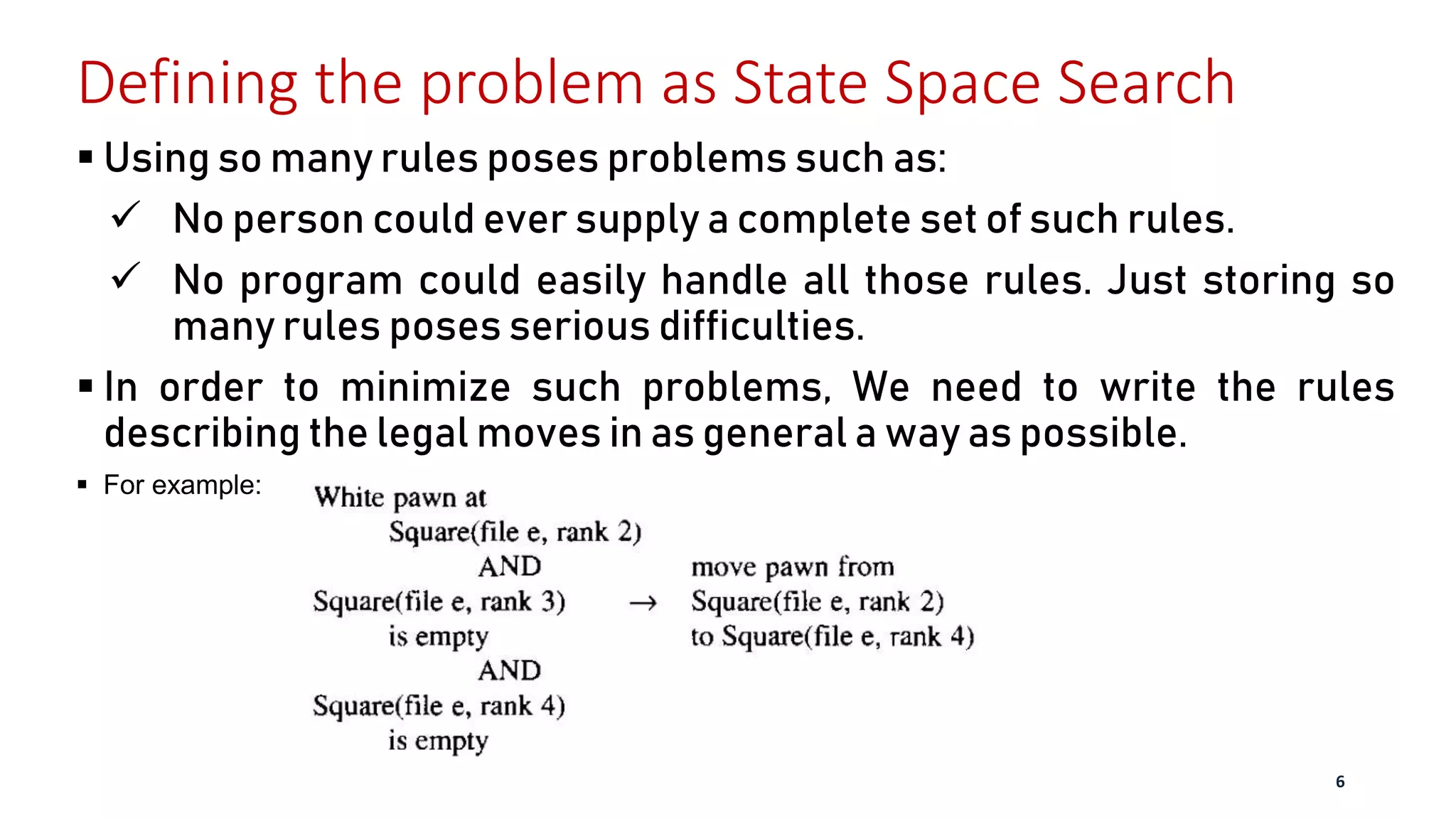

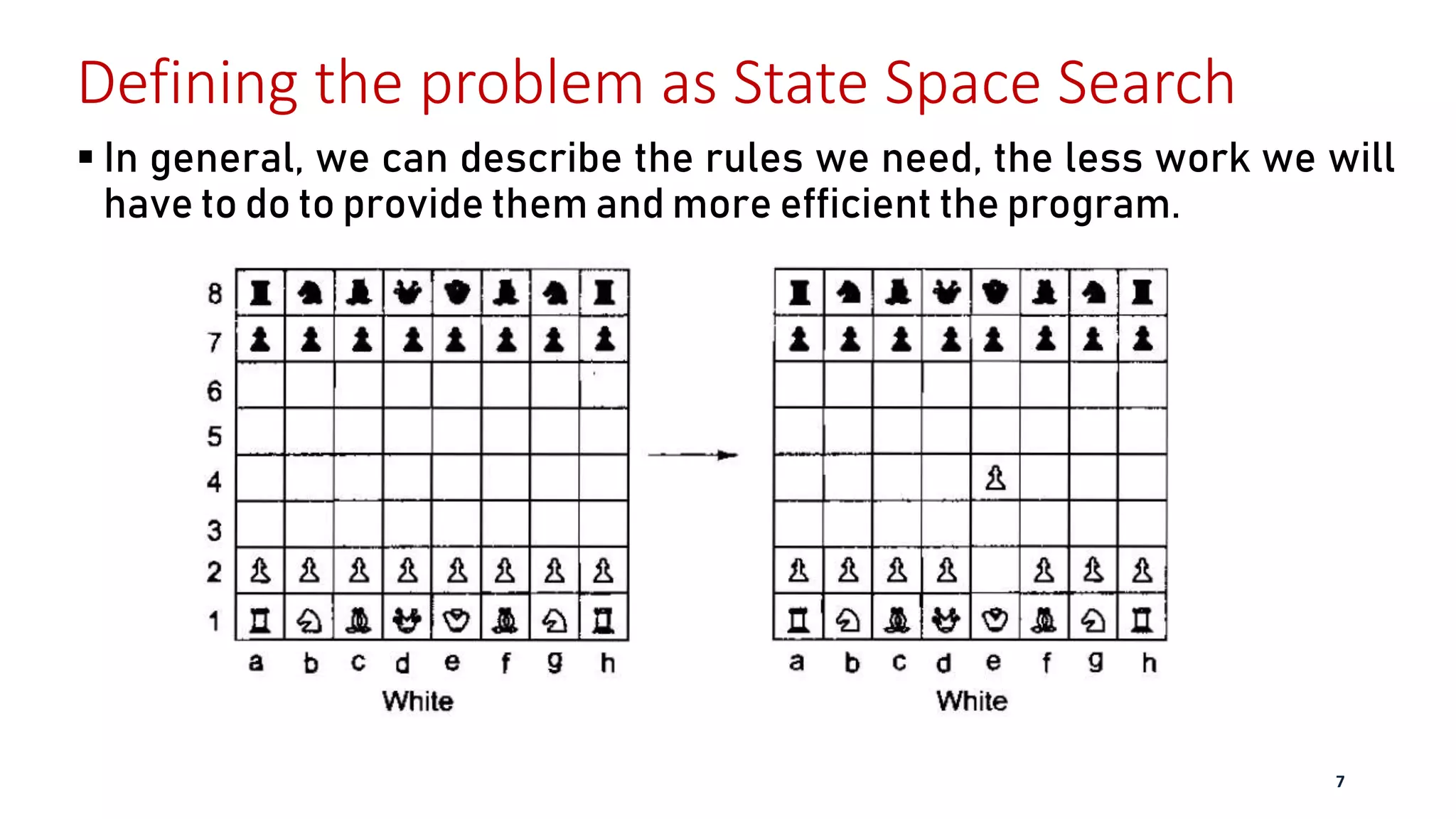

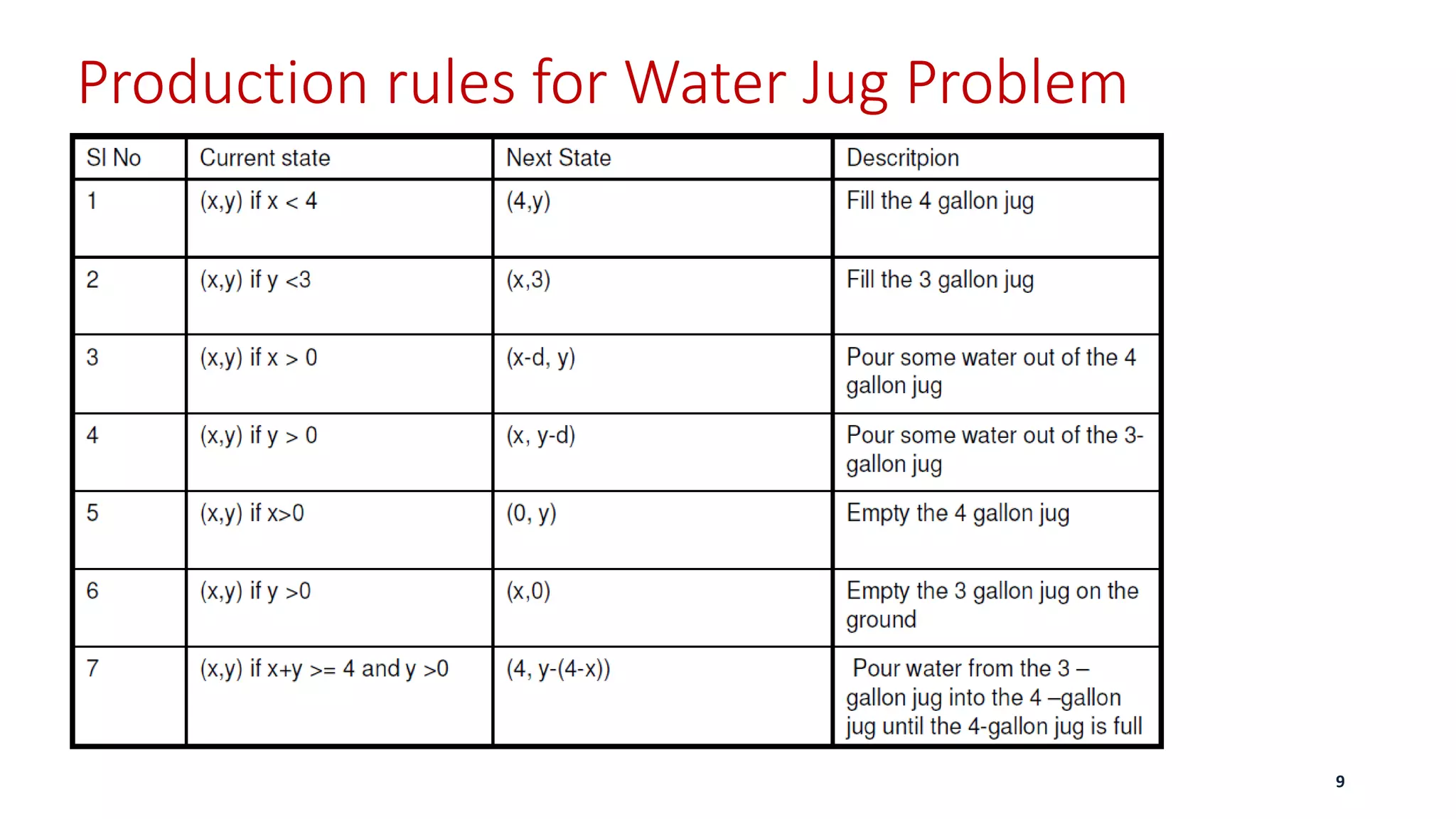

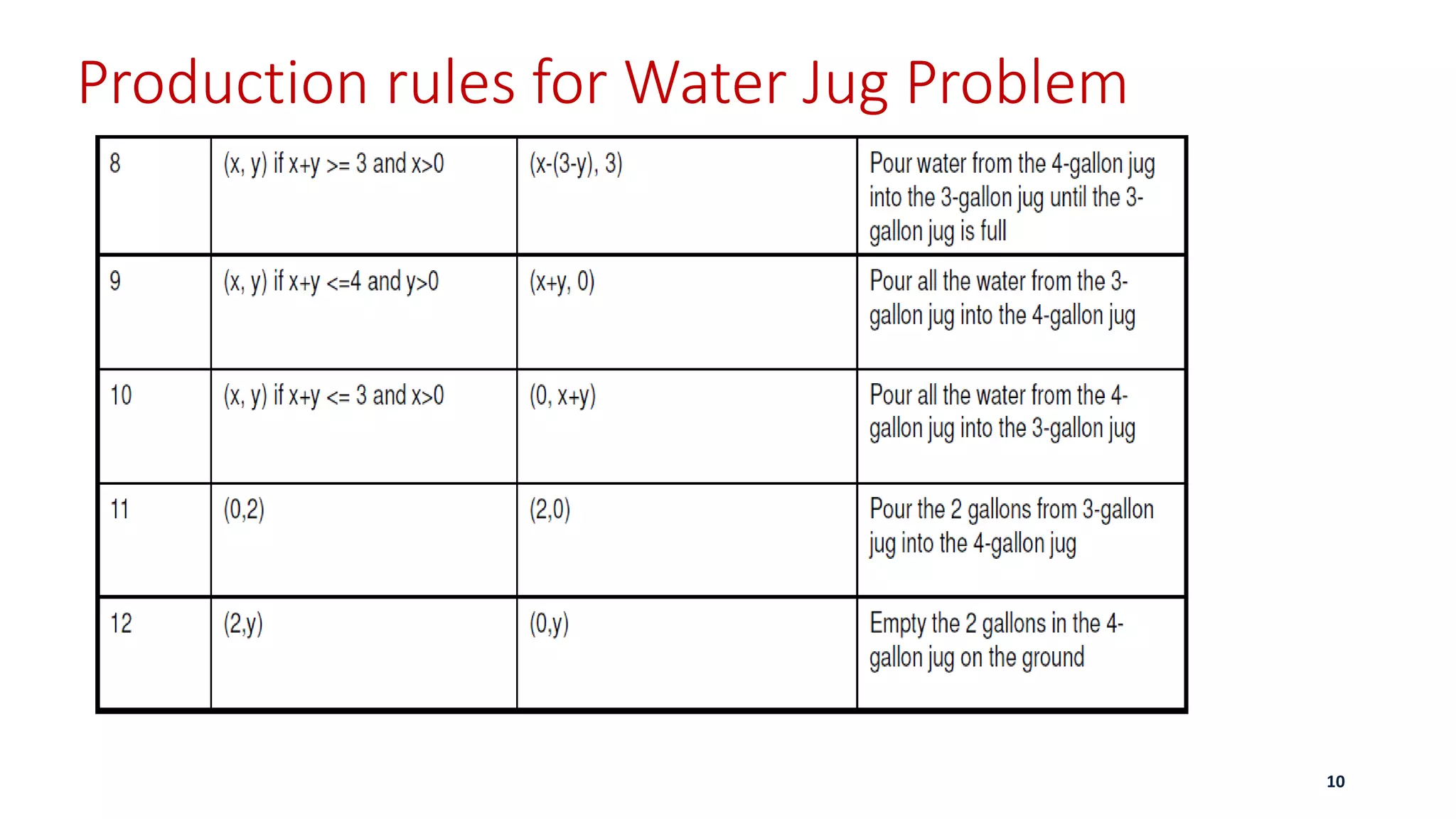

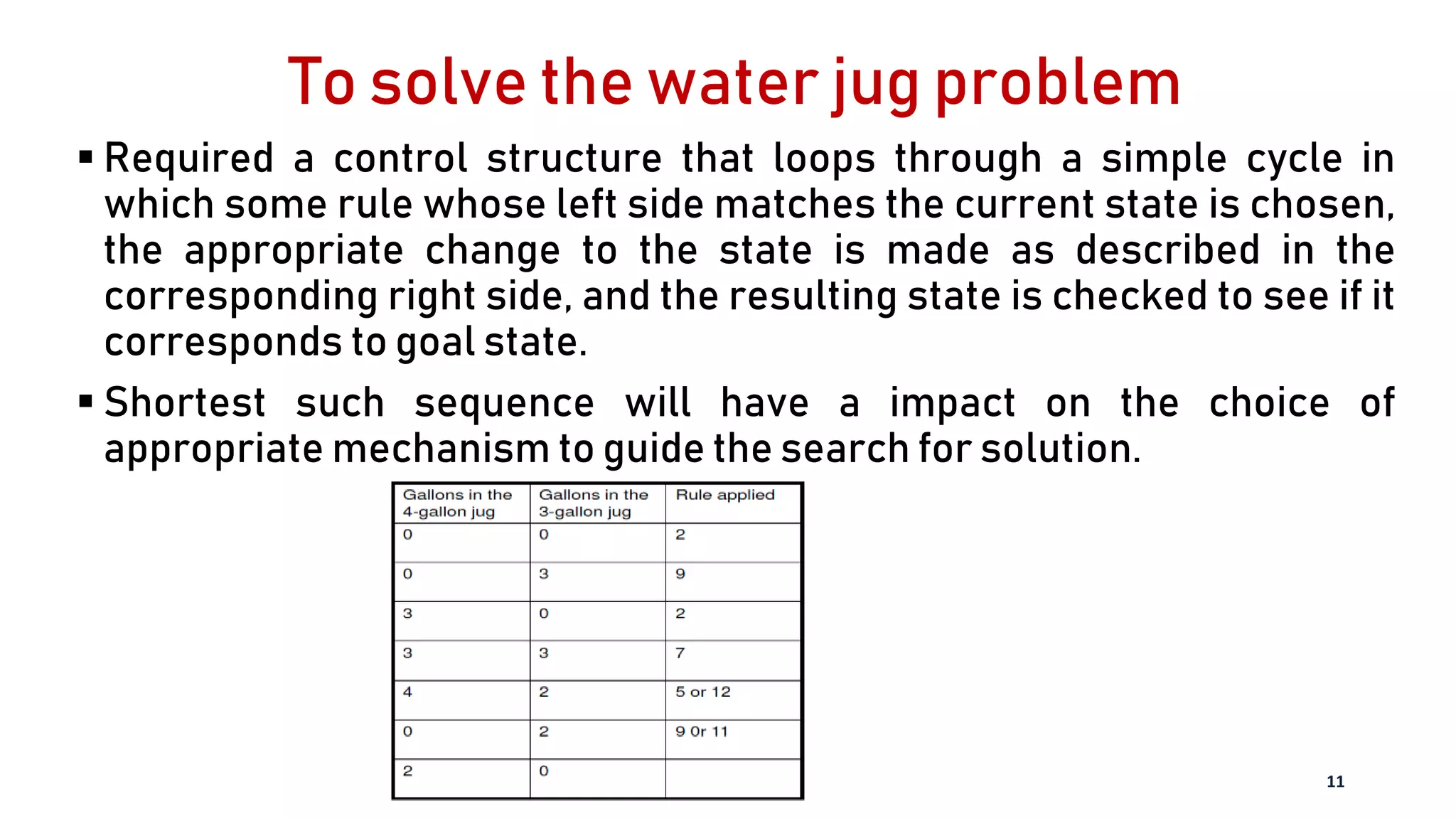

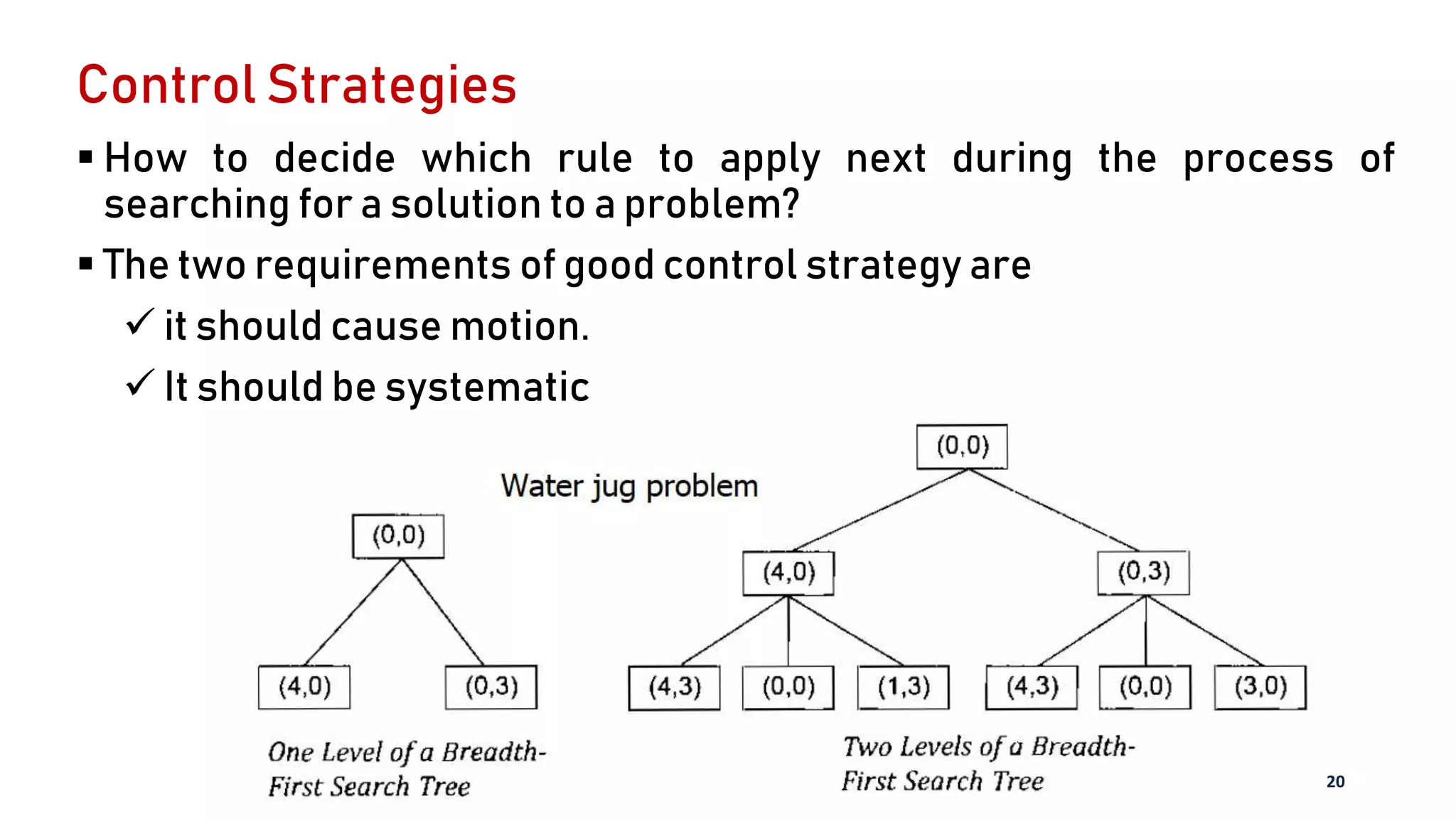

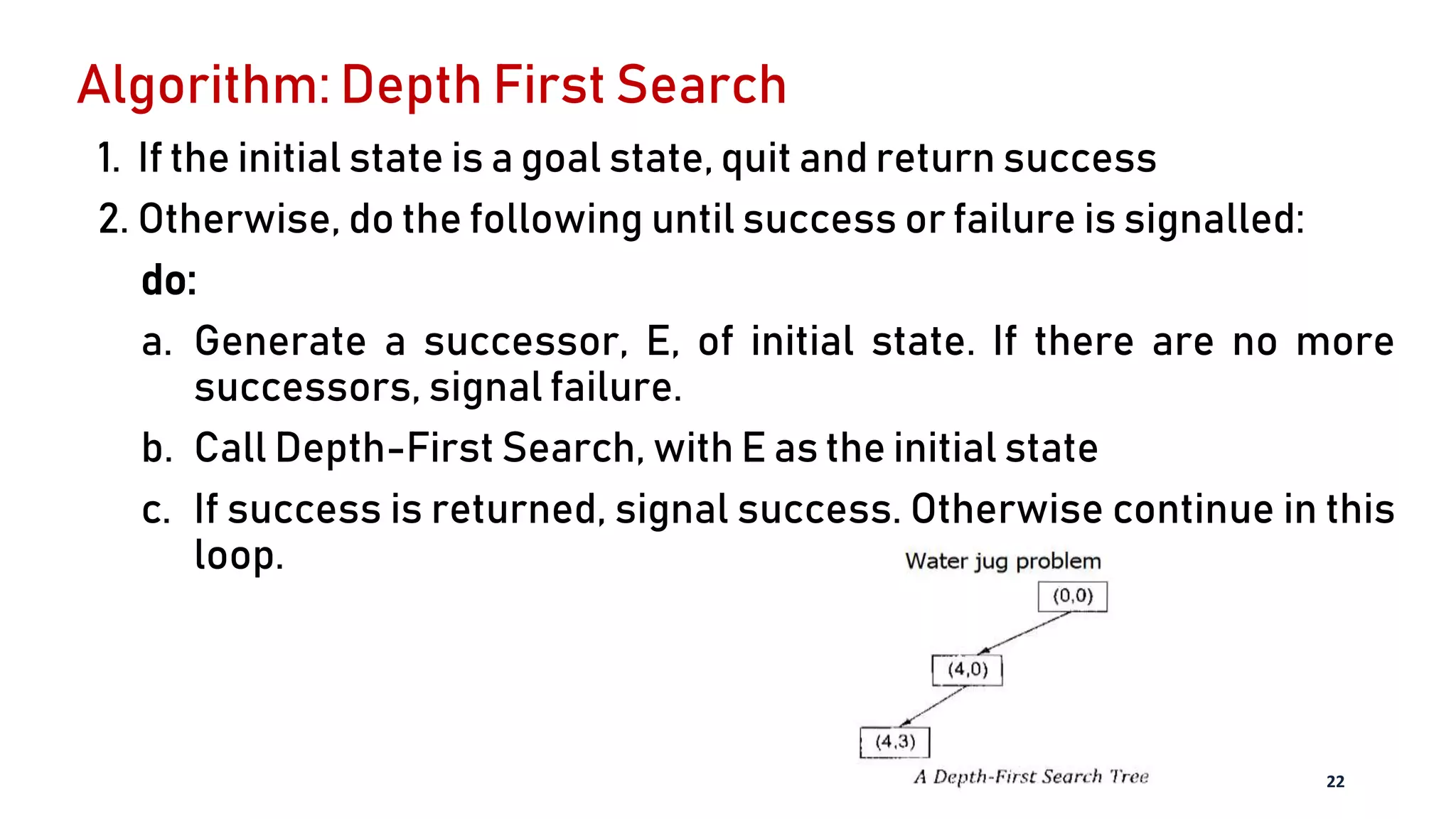

2. It gives chess and the water jug problem as examples of state space search, defining for each the initial state, the goal states, and the production rules that generate the possible state transitions.

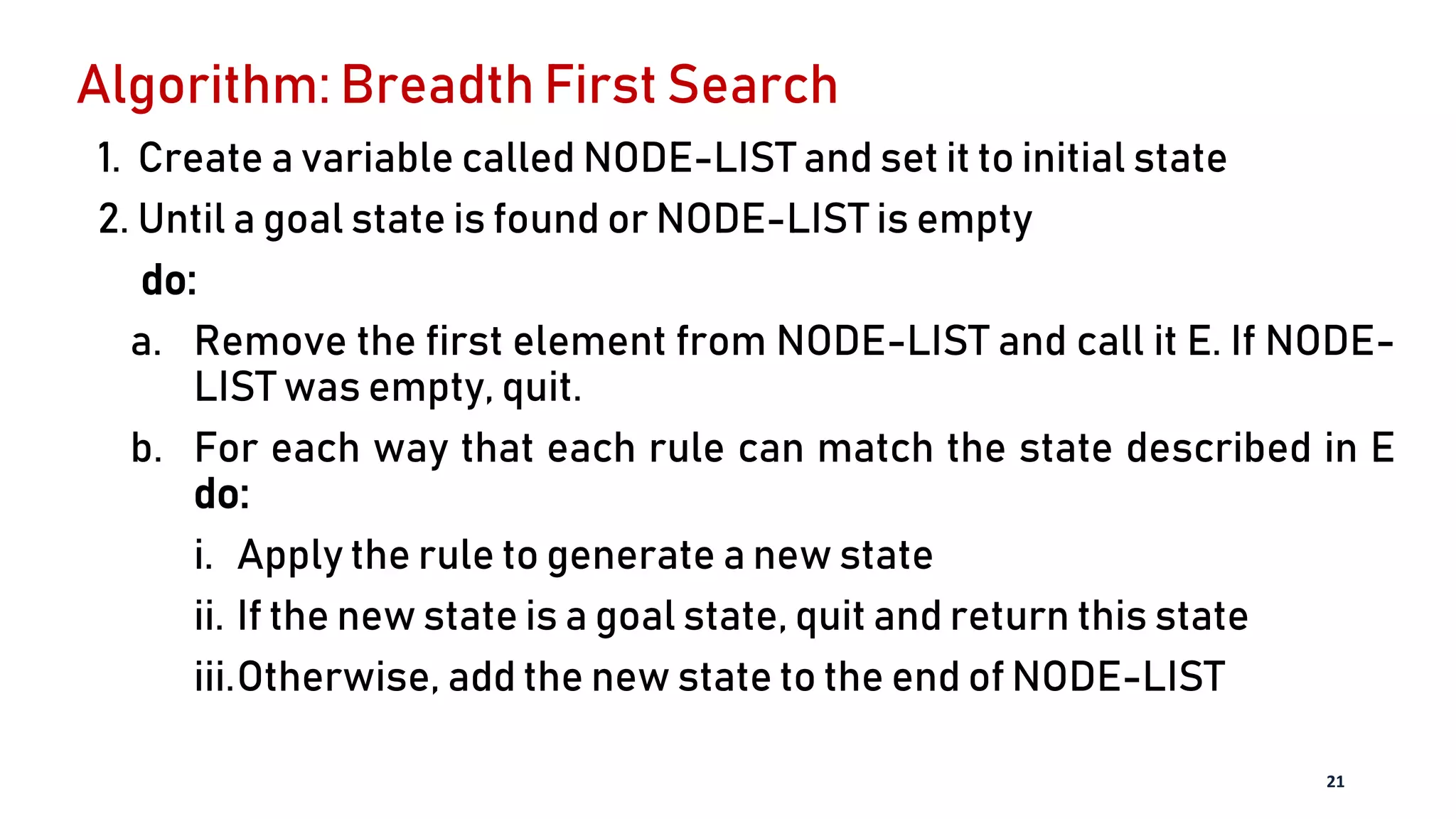

3. Search algorithms like breadth-first search and depth-first search are described for systematically exploring the state space to find a solution path from start to goal.
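
The three points above can be tied together in a minimal sketch, which is not taken from the document. It assumes the common textbook version of the water jug problem: a 4-unit jug and a 3-unit jug, both initially empty, with the goal of leaving exactly 2 units in the larger jug. The summary does not state capacities or the goal, so these values are assumptions, as are the names `successors`, `is_goal`, and `search`. A state is a pair `(a, b)`, the production rules are the fill, empty, and pour moves, and one function performs either breadth-first or depth-first search depending on which end of the frontier it takes from.

```python
from collections import deque

# Assumed capacities and goal (classic textbook variant, not stated in the source).
CAP_A, CAP_B = 4, 3
START = (0, 0)  # both jugs empty


def is_goal(state):
    """Goal test: exactly 2 units in jug A (assumed goal)."""
    return state[0] == 2


def successors(state):
    """Apply every production rule and return the resulting new states."""
    a, b = state
    pour_ab = min(a, CAP_B - b)  # amount that fits when pouring A into B
    pour_ba = min(b, CAP_A - a)  # amount that fits when pouring B into A
    candidates = [
        (CAP_A, b),                  # fill A
        (a, CAP_B),                  # fill B
        (0, b),                      # empty A
        (a, 0),                      # empty B
        (a - pour_ab, b + pour_ab),  # pour A into B
        (a + pour_ba, b - pour_ba),  # pour B into A
    ]
    # Drop rules that leave the state unchanged.
    return [s for s in candidates if s != state]


def search(start, breadth_first=True):
    """Explore the state space; return a path from start to a goal, or None.

    The frontier holds whole paths. Taking from the left gives a FIFO queue
    (breadth-first); taking from the right gives a LIFO stack (depth-first).
    """
    frontier = deque([[start]])
    visited = set()
    while frontier:
        path = frontier.popleft() if breadth_first else frontier.pop()
        state = path[-1]
        if state in visited:
            continue  # already expanded via another path
        visited.add(state)
        if is_goal(state):
            return path
        for nxt in successors(state):
            if nxt not in visited:
                frontier.append(path + [nxt])
    return None


if __name__ == "__main__":
    print("BFS path:", search(START, breadth_first=True))
    print("DFS path:", search(START, breadth_first=False))
```

Because breadth-first search expands states level by level, the path it returns uses the fewest moves (six, under the assumed capacities and goal). Depth-first search also reaches a goal here because the `visited` set prevents cycles, but its path may be longer. Storing whole paths in the frontier keeps the sketch short at the cost of extra memory; a parent map would be the usual choice for larger state spaces.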