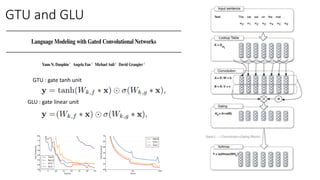

This document gives an overview of generative models: autoencoders, variational autoencoders (VAEs), generative adversarial networks (GANs), and autoregressive models, along with their strengths, weaknesses, and applications. Key concepts include the handling of latent variables, architectures such as VQ-VAE and PixelCNN, and known failure modes such as the blind spot in PixelCNN's masked convolutions and mode collapse in GANs. It also touches on more advanced topics, including flow-based models and the effect of initialization on performance.