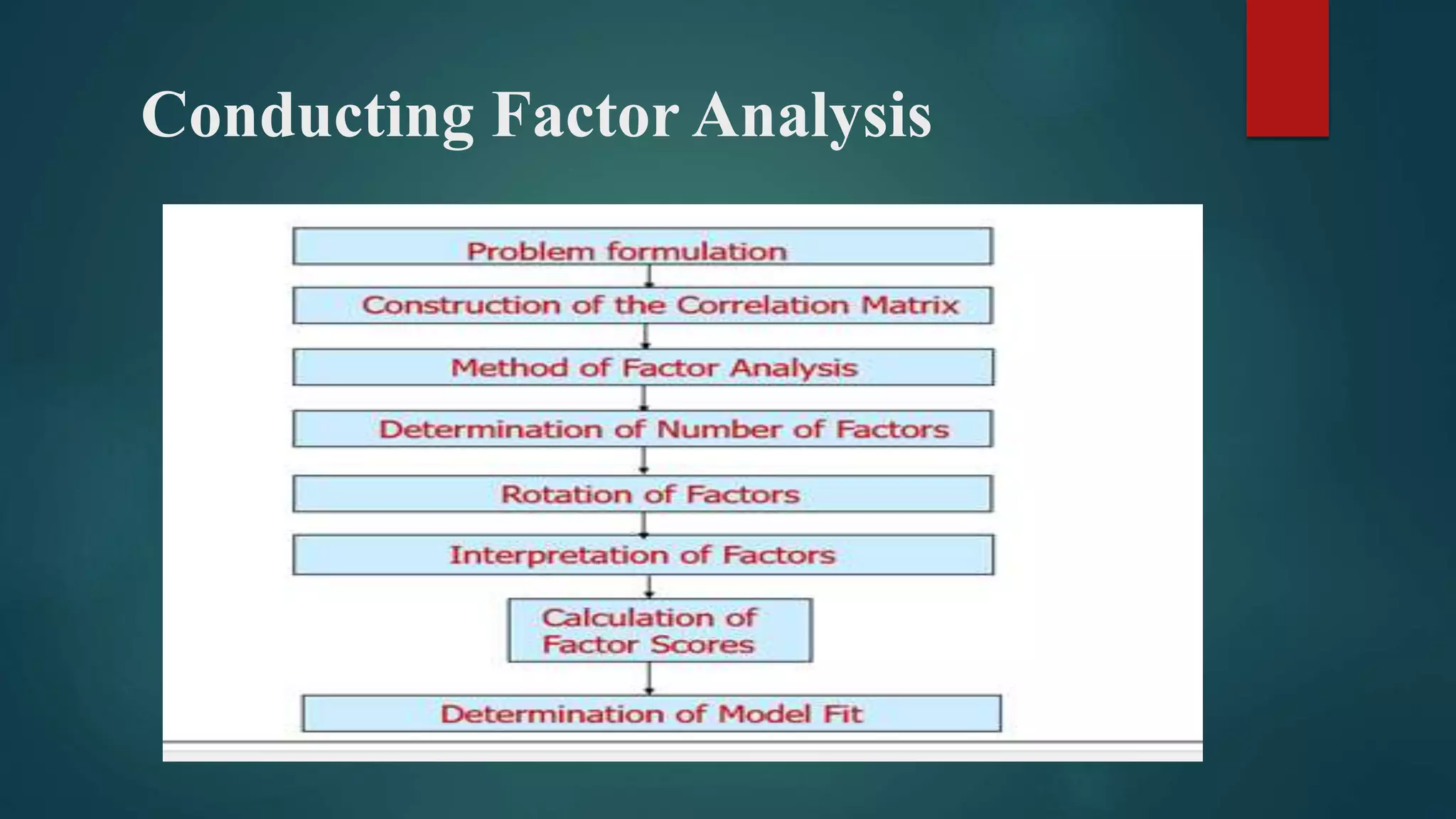

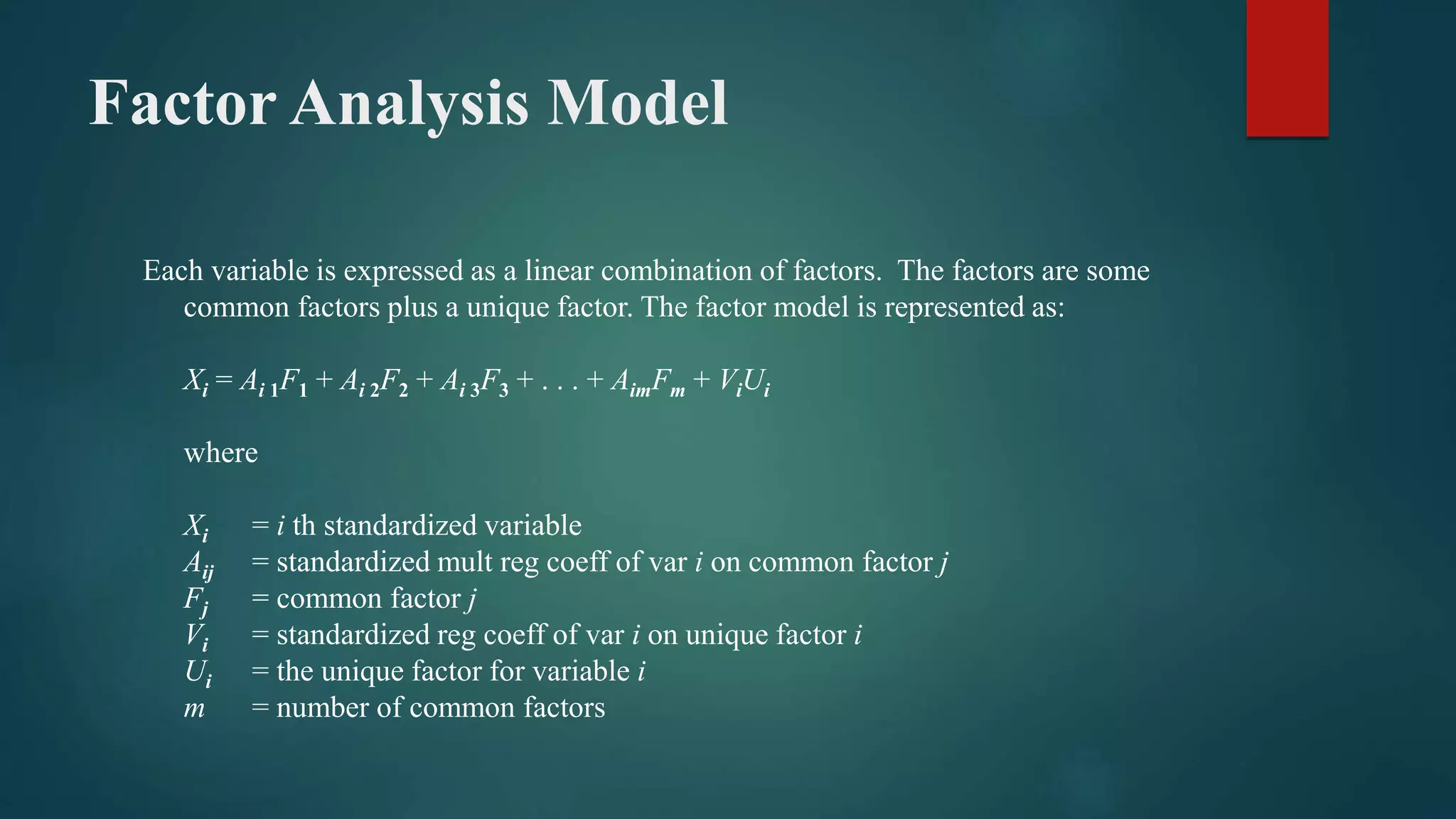

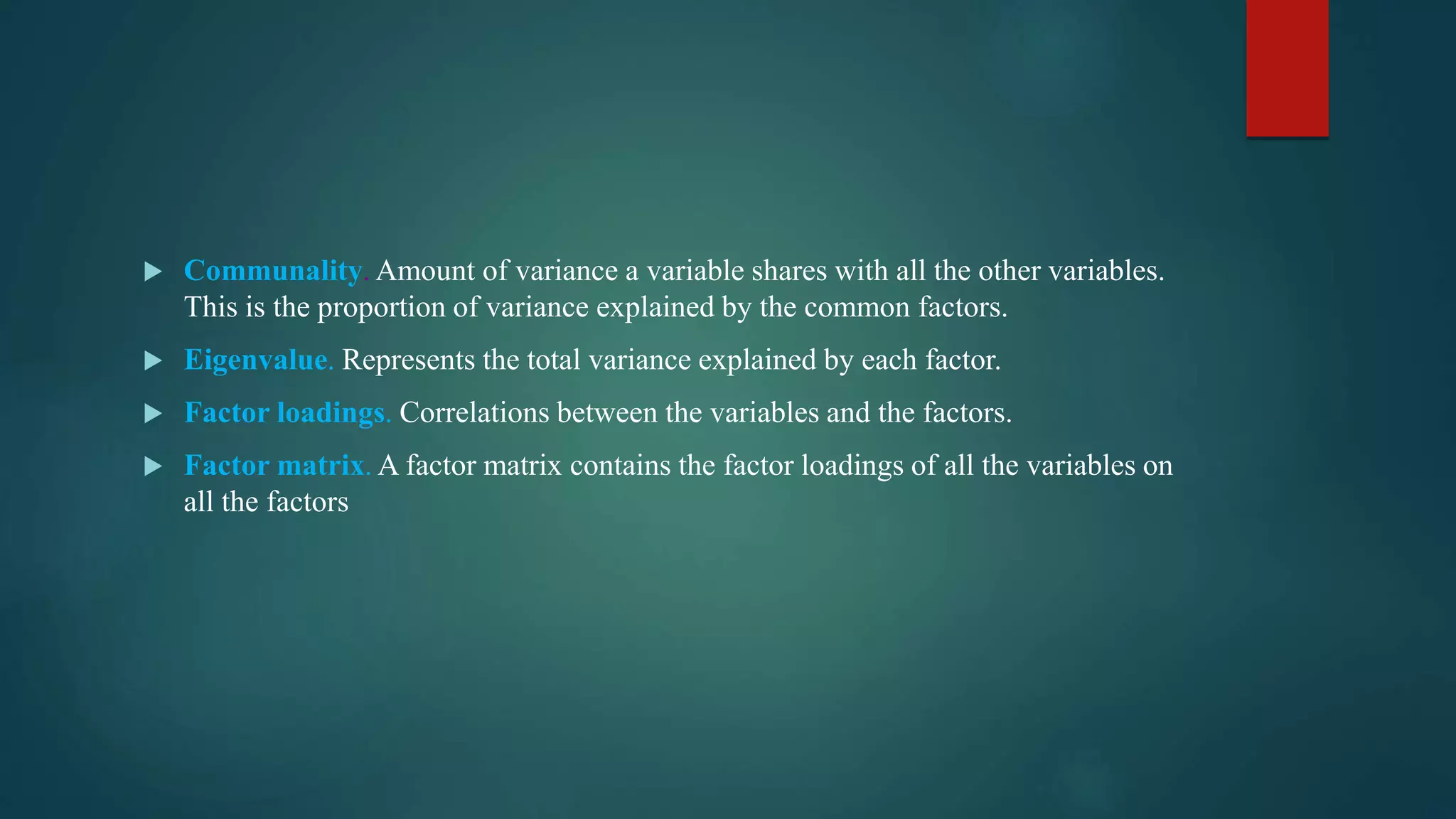

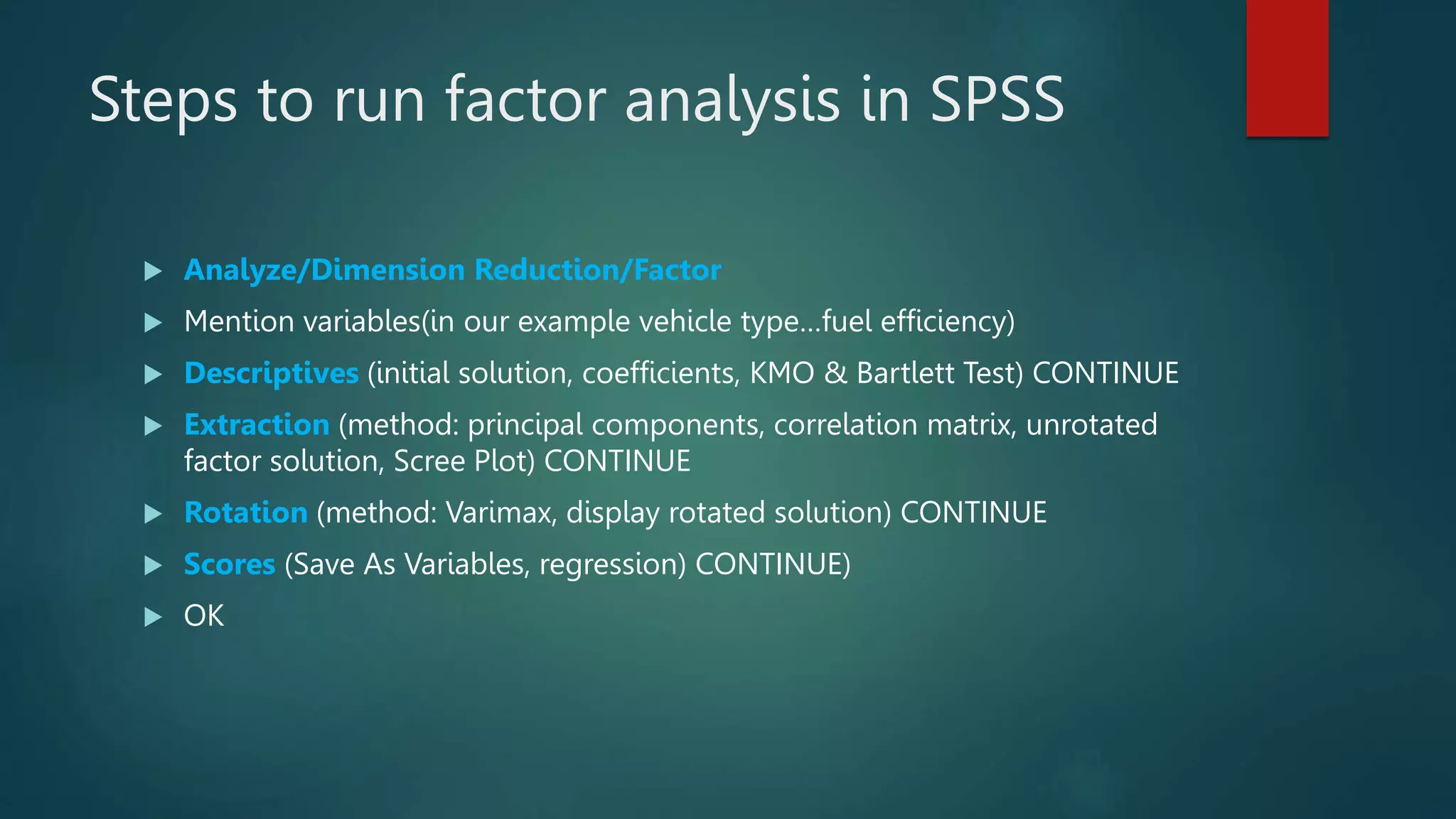

This document provides an overview of factor analysis. It describes factor analysis as a technique used for data reduction and summarization that examines interdependencies between variables without distinguishing between dependent and independent variables. The document outlines the key goals of factor analysis as identifying underlying dimensions that explain correlations among variables and identifying a smaller set of uncorrelated variables. It also describes the main types of factor analysis, methods used in SPSS, and the steps to conduct a factor analysis in SPSS. Key outputs from factor analysis like eigenvalues, factor loadings, and rotations are also explained.