Salus H4D 2021 Lessons Learned

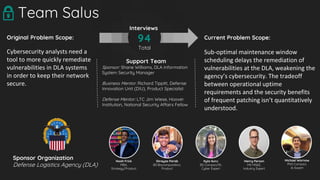

- 1. Team Salus. Sponsor Organization: Defense Logistics Agency (DLA). 94 Total Interviews. Original Problem Scope: Cybersecurity analysts need a tool to more quickly remediate vulnerabilities in DLA systems in order to keep their network secure. Current Problem Scope: Sub-optimal maintenance window scheduling delays the remediation of vulnerabilities at the DLA, weakening the agency’s cybersecurity. The tradeoff between operational uptime requirements and the security benefits of frequent patching isn’t quantitatively understood. Team: Noah Frick (MBA, Strategy/Product); Shreyas Parab (BS Biocomputation, Product); Kyla Guru (BS Compsci/IR, Cyber Expert); Henry Person (MS MS&E, Industry Expert); Michael Wornow (PhD Compsci, AI Expert). Support Team: Sponsor: Shane Williams, DLA Information System Security Manager; Business Mentor: Richard Tippitt, Defense Innovation Unit (DIU), Product Specialist; Defense Mentor: LTC Jim Wiese, Hoover Institution, National Security Affairs Fellow.

- 2. The Problem. The DLA provides critical logistics to the Department of Defense and across the federal government. Cyber attacks present an existential risk to a critical node that helps maintain readiness. 19 critical business applications running on thousands of servers across several different hosted environments. Example Vulnerability Breakdown: ● Critical 27,000 ● High 22,000 ● Medium 87,000 ● Low 17,00

- 3. Discovering the problem. [Timeline graphic: Weeks 1 to 10] We learned about the problem sponsor and the current vulnerability management process, and set off on our first hypotheses.

- 4. At first, we thought it was all about detecting... “We need an AI-powered malware detector based on cutting-edge research.” Team Salus DLA Sponsor “Taking a step back, a tool that simply scanned and ranked vulnerabilities might be super helpful!”

- 5. “Vulnerability scanning actually does a pretty good job at detecting known vulnerabilities, but we have to know what assets to scan.” Enterprise Vulnerability Scanner

- 6. Then, we thought it was asset management! “We need an AI-powered malware detector based on cutting-edge research.” Team Salus DLA Sponsor “Taking a step back, a tool that simply scanned and ranked vulnerabilities might be super helpful!” Team Salus “Wait, the DLA doesn’t even know what computers are on their network; let’s fix that!”

- 7. Then, we thought it was asset management! “We need an AI-powered malware detector based on cutting-edge research.” Team Salus DLA Sponsor “Taking a step back, a tool that simply scanned and ranked vulnerabilities might be super helpful!” Team Salus “Wait, the DLA doesn’t even know what computers are on their network; let’s fix that!” DLA Cyber Tools Team “Hold on, we already built an internal tool that solves that problem.”

- 8. So, we focused on learning about process: Scanning, Patch Testing, Patch Deployment, Patch Validation.

- 9. And we learned... Requires an initial coordination process to test the patch... ...and then an additional coordination process to deploy the patch into production!

- 10. Focus on Patching. [Timeline graphic: Weeks 1 to 10] We doubled down where we thought we could make a difference.

- 11. We realized we needed to update our Beneficiaries. J6 Information Technology Division. J61 Vulnerability Managers and Information System Security Managers: Audit vulnerabilities, track patching progress. J62 Application Programs and J64 Infrastructure Programs: Own the software and hardware which are affected by patches...Coordinate and implement patches!

- 12. We realized we needed to update our Beneficiaries. J6 Information Technology Division. J61 Vulnerability Managers and Information System Security Managers: “All I can do is ask nicely”. J62 Application Programs: “I care about patching, but it’s hard to coordinate with [infrastructure programs]”. J64 Infrastructure Programs and System Administrators: “We don’t want to annoy the applications, all they care about is uptime”.

- 13. We found more validation in alternative sources... “The problem pretty much always boils down to a lack of understanding across all involved parties regarding what will happen when we install this patch.” - @VA_Network_Nerd “Imagine Stanford grad students coming to reddit for help...” - @geezer1492

- 14. And challenged common sense... “We only schedule our maintenance windows in the middle of the payroll period” - J62 Application Program Manager. “That makes sense. Do you look at any usage data that validates that belief?” “Nope! We just rely on common sense” - J62 Application Program Manager

- 15. How is scheduling currently conducted? J62, J64: ● Change Management Meetings ● Ticketing System or Emails ● Static Calendars. “We want to be patching more on our terms. Our frustration is we have no say in the matter” - J62 Application Program Manager. “I need to be a little gun-shy with updates, because I’ve gotten blowback from applications” - J64 Windows Patch Technician

- 16. Smart Maintenance Window Scheduler. Application-to-patch matrix (columns: Patch 1, Patch 2, Patch 3): Application 1 x x; Application 2 x x; Application 3 x. Patch status table (headers: CCRI Exposure, Time Unremediated): Patch 3 CRITICAL 2 5; Patch 2 MEDIUM 1 30; Patch 1 MEDIUM 2 10. Recommended schedule: 1) Application 2, Patch 3&1 (Patch 3 is CRITICAL, Optimal Time for Patch 1); 2) Application 1&2, Patch 2 (Reason: Patch 2 has longer un-remediated time); 3) Application 3, Patch 3 (Reason: Optimal time for Patch 3). Click Here To Schedule w/ CM

- 17. And found validation presenting our ideas. “I really like how you’re thinking about this from a logistics point of view...right now, we’re [patching] blindly” - DARPA Cyber Researcher. “Determining when maintenance windows should be, now THAT sounds helpful” - Industry Cybersecurity Professional

- 18. But our elation was short-lived…

- 19. Reality Checks. [Timeline graphic: Weeks 1 to 10] “I like some of your ideas, but it’s clear to me you have a vastly oversimplified understanding of this stuff” - CSO Cybersecurity Firm

- 20. Mixed feedback... Refuting: “I’m not sure if it is possible.” - DLA Enterprise Infrastructure Director. Validating: (none yet)

- 21. Mixed feedback... Refuting: “I’m not sure if it is possible.” - DLA Enterprise Infrastructure Director. Validating: “Scheduling is definitely something that needs to be considered.” - DLA Enterprise Infrastructure Director

- 22. Mixed feedback... Refuting: “I’m not sure if it is possible.” - DLA Enterprise Infrastructure Director; “It needs to be optimized for the customer.” - DLA Enterprise Infrastructure Director. Validating: “Scheduling is definitely something that needs to be considered.” - DLA Enterprise Infrastructure Director

- 23. Mixed feedback... Refuting: “I’m not sure if it is possible.” - DLA Enterprise Infrastructure Director; “It needs to be optimized for the customer.” - DLA Enterprise Infrastructure Director. Validating: “Scheduling is definitely something that needs to be considered.” - DLA Enterprise Infrastructure Director; “Maybe we need to be willing to accept impacts to customers and business to improve our security.” - DLA Enterprise Infrastructure Director

- 24. Mixed feedback... Refuting: “I’m not sure if it is possible.” - DLA Enterprise Infrastructure Director; “It needs to be optimized for the customer.” - DLA Enterprise Infrastructure Director; “This is too simplified.” - CSO, Cybersecurity Vendor. Validating: “Scheduling is definitely something that needs to be considered.” - DLA Enterprise Infrastructure Director; “Maybe we need to be willing to accept impacts to customers and business to improve our security.” - DLA Enterprise Infrastructure Director

- 25. Mixed feedback... Refuting: “I’m not sure if it is possible.” - DLA Enterprise Infrastructure Director; “It needs to be optimized for the customer.” - DLA Enterprise Infrastructure Director; “This is too simplified.” - CSO, Cybersecurity Vendor. Validating: “Scheduling is definitely something that needs to be considered.” - DLA Enterprise Infrastructure Director; “Maybe we need to be willing to accept impacts to customers and business to improve our security.” - DLA Enterprise Infrastructure Director; “I like your ideas of algorithm recommendations, and patching more frequently is the right mindset.” - CSO, Cybersecurity Vendor

- 26. We continued testing our MVP and received mixed feedback... Refuting: “I’m not sure if it is possible.” - DLA Enterprise Infrastructure Director; “It needs to be optimized for the customer.” - DLA Enterprise Infrastructure Director; “This is too simplified.” - CSO, Cybersecurity Vendor; “We don’t have enough changes for backlogs.” - Stanford ISO. Validating: “Scheduling is definitely something that needs to be considered.” - DLA Enterprise Infrastructure Director; “Maybe we need to be willing to accept impacts to customers and business to improve our security.” - DLA Enterprise Infrastructure Director; “I like your ideas of algorithm recommendations, and patching more frequently is the right mindset.” - CSO, Cybersecurity Vendor

- 27. Mixed feedback... Refuting: “I’m not sure if it is possible.” - DLA Enterprise Infrastructure Director; “It needs to be optimized for the customer.” - DLA Enterprise Infrastructure Director; “This is too simplified.” - CSO, Cybersecurity Vendor; “We don’t have enough changes for backlogs.” - Stanford ISO. Validating: “Scheduling is definitely something that needs to be considered.” - DLA Enterprise Infrastructure Director; “Maybe we need to be willing to accept impacts to customers and business to improve our security.” - DLA Enterprise Infrastructure Director; “I like your ideas of algorithm recommendations, and patching more frequently is the right mindset.” - CSO, Cybersecurity Vendor; “We sometimes have large patch backlogs that are from patches not being implemented in previous months.” - Stanford VM

- 28. And struggled to find a champion... “I like your ideas, they seem very interesting!” - DLA Chief of Application Support. “Great! Would you be interested in writing a requirement for us?” “It sounds interesting, and I’d love to help you in your research.” - DLA Chief of Application Support ...in other words… No...

- 29. A key learning! “I think there’s an opportunity in the space you’re looking at, but it has to do with how you’re pitching it. It’s a really tough sell to ask decision makers to invest in security, which is a cost-sucker and not a value-driver” - University of San Diego Cyber Researcher. Decision-makers need to be convinced that patching more frequently will BOTH minimally impact business AND tangibly improve security TO more efficiently allocate limited resources.

- 30. So we made an information sheet... Salus monitors the vulnerability state of your organization’s cyber assets and recommends more dynamic, smarter, and less disruptive maintenance windows. 1) Decrease your risk exposure 2) Minimize impact to business operations 3) Allow for better allocation of limited IT resources.

- 31. “This sounds great, if you could prove to me that it’s feasible.” - DLA Deputy Director of Strategic Business Operations

- 32. How can we do this? [Timeline graphic: Weeks 1 to 10] Focus on Key Activities, Partners, Deployment Options

- 33. We focused on activities that could prove our feasibility: Data Collection; Model Simulation; Academic and Risk Research.

- 34. We searched for commercial proxies: Multinational Non-Tech Companies; Large, Decentralized Universities; Agile companies who have modern tech stack, low technical debt and mostly built cybersecurity features within past 5 years.

- 35. And weighed several possible routes to deployment... across Department of Defense, Stanford + National Labs, and Enterprise Customers: ITCR with DLA; SBIR / AFWERX grant; DoD Integrator; SaaS vendor (ServiceNow, SAP); Proof-of-concept on Stanford network; CRADA for data; Research collab (LBNL, Sandia); Open market.

- 36. But finally learned that ServiceNOW is taking over the world! “Your optimized scheduling idea could likely be implemented on the ServiceNOW development engine!” - DLA Program Manager overseeing ServiceNOW implementation

- 37. What did we learn? What’s next? [Timeline graphic: Weeks 1 to 10] Reflection and Summer Plans

- 38. 38

- 39. 39

- 40. Final Recommendations for DLA ● Implement ServiceNOW for Change Management with top-down emphasis ● Include ServiceNOW integration expectation in contracts with service providers ● Recognize that ServiceNOW does not provide insights into those tradeoffs with real data or risk analysis: 1) Develop a NOW platform business application internally 2) Team Salus

- 41. Looking back, we learned a lot... Day 1: We broadly wanted to help analysts remediate cyber vulnerabilities. Day 70: We aim to help program managers, infrastructure owners, and change managers better schedule their maintenance downtime for patching. Main Lesson: We often mistook curiosity and interest as strong validation. We didn’t ask “Would you buy?” often enough. Key Takeaway: Patch management is a surprisingly time-consuming, error-prone process, and we’re confident there is significant room for improvement in the space. Our understanding of business impact requires additional legwork.

- 42. Team Salus: Improving cyber security by optimizing the vulnerability patching process. Noah Frick (MBA, Strategy/Product); Shreyas Parab (BS Biocomputation, Product); Kyla Guru (BS Compsci/IR, Cyber Expert); Henry Person (MS MS&E, Industry Expert); Michael Wornow (PhD Compsci, AI Expert). Team Salus will continue to research and prove the feasibility of our optimization ideas and use of data and risk analysis in scheduling maintenance. If you or anyone you know would be interested in improving their organization’s cyber security posture, reach out to us at teamsalus.h4d@gmail.com
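
The prioritization reasoning on the Smart Maintenance Window Scheduler slide (slide 16) could be sketched roughly as follows. This is only an illustration under stated assumptions, not Team Salus's implementation: the `Patch` class, the `SEVERITY_RANK` ordering, and the reading of "Time Unremediated" as a number of days are all hypothetical. Only the severity and time-unremediated figures from the slide are used.

```python
from dataclasses import dataclass

# Hypothetical ordering: a lower rank is handled first.
SEVERITY_RANK = {"CRITICAL": 0, "HIGH": 1, "MEDIUM": 2, "LOW": 3}


@dataclass(frozen=True)
class Patch:
    name: str
    severity: str
    days_unremediated: int  # assumed unit; the slide does not state one


def prioritize(patches):
    """Order patches by severity first, then by longest time unremediated."""
    return sorted(
        patches,
        key=lambda p: (SEVERITY_RANK[p.severity], -p.days_unremediated),
    )


# Rows taken from the slide's patch status table.
PATCHES = [
    Patch("Patch 1", "MEDIUM", 10),
    Patch("Patch 2", "MEDIUM", 30),
    Patch("Patch 3", "CRITICAL", 5),
]

if __name__ == "__main__":
    for patch in prioritize(PATCHES):
        print(patch.name, patch.severity, patch.days_unremediated)
```

Under these assumptions the CRITICAL patch is ranked first and, among the MEDIUM patches, the one unremediated longest comes next, echoing the two reasons quoted on the slide. The sketch deliberately ignores the slide's "optimal time" factor, which depends on usage data.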

Editor's Notes

- Hi everyone, my name is Noah, and I am happy to be presenting tonight on behalf of Team Salus. We were paired with the Defense Logistics Agency with a simple problem statement: to help cybersecurity analysts fix cyber vulnerabilities more quickly. Over the course of 10 weeks, we learned a lot, and I’m looking forward to walking you through our journey. // We are a mixed team of varying backgrounds and study focuses: prior military service, concurrent part-time work at Google, a computer science PhD, undergrads with startup and cybersecurity experience. We came together early on because we were all interested in ACTUALLY DOING something to make a difference. Given some of our backgrounds in the space and the virtual environment constrained by COVID, we decided cybersecurity was a great problem area to pursue. We were paired with the Defense Logistics Agency with a simple problem statement: to help cybersecurity analysts remediate vulnerabilities in DLA systems more quickly. “Cybersecurity analysts” refers to those responsible for reporting on the security readiness of the organization and recommending action plans. We’ve interviewed over 90 experts: DLA practitioners, industry practitioners, business-focused managers, academics, and many more, and in doing so, learned a LOT about what the vulnerability remediation process looks like and how we may be able to help. Over the next few minutes, we’ll walk through our journey and our learnings and how we arrived at our current problem statement.

- The DLA provides critical logistics to the DOD and across the federal government, and provides most of its services through online web applications. Every day, cyber vulnerabilities in underlying software and applications are publicly identified. These vulnerabilities present malicious actors with opportunities to conduct cyber attacks against the DLA and disrupt the flow of logistics that keeps our military running. At any given time, there are over 100k KNOWN vulnerabilities present on DLA assets, a figure that seemed absolutely mind-blowing to us, leaving critical systems exposed to attack. We were excited to take on this problem, and so we began our journey.

- In the first two weeks, we drank from the fire hose.

- Our sponsor originally envisioned an AI-powered solution, and we thought it sounded great!

- However, we quickly learned that scanning actually does a pretty good job. This was our first lesson in the complex process that is vulnerability management. Many different stakeholders, most of them NOT “cybersecurity analysts”, have a say in the matter, all with different perspectives and different understandings of the complex process outside their immediate bubble. As we would come to find out, separating fact from perception would be a common theme of our discovery.

- We continued to interview, and discovered a new tangential problem: asset management. It turns out it’s really hard for organizations, and especially the DLA with over 190K assets, to know what servers, laptops, tablets, and other IT equipment are on their networks and what belongs to whom.

- However, we quickly learned that an internal DLA team was already working on a solution for this very problem. We were excited that we had honed in on something important, but frankly, a little disappointed that it was already being fixed - we felt late to the party! Still, we suspected there were other pain points where we could provide value, and we didn’t want to end our journey, so we kept on digging.

- So, we built out our understanding of the vulnerability process and drew a process flowchart. It was a tool to help us, and most people we interviewed had never seen the process laid out like this before. It was useful to talk from and to establish a common language. As we mapped out the key activities, we realized this was about patching! There seemed to be A LOT of process friction and bottlenecks surrounding patching.

- Patching, simply put, is upgrading software to fix vulnerabilities. Software upgrades on centralized servers can have unintended consequences and affect a wide range of people, so patches need to be implemented at least twice: first to “test”, and then to actually deploy into what’s called “production”.

- With a better understanding of the process, we began an important ideation phase.

- The focus on patching brought to light a change in our beneficiaries, and we identified three primary beneficiary types within the J6 IT department: the application program managers, who are responsible for the smooth running of the DLA’s web applications; the infrastructure owners, who host these applications; and the vulnerability managers, who track and report vulnerabilities.

- We honed in on something that seemed important: coordination issues and misaligned incentives. To put it simply: there is a tradeoff between security and operational uptime because patching requires server reboots. Different stakeholders have different ideas of what this tradeoff should look like because of different incentives and focus areas.

- We even found validation in alternative sources, with one of our more social-media-minded teammates crowdsourcing insight from Reddit.

- Another thing we noticed in our interviews is that many ideas about uptime requirements were based on perception and not validated facts. Given that this H4D course was all about validating assumptions, we thought this was important!

- As we focused on coordination in our interviews, we dug into how exactly patch scheduling was currently conducted. We learned that there was a constant back and forth between the application programs and the infrastructure owners. The communication methods seemed antiquated, and as evidenced by the quotes on the right, communication breakdown was real. It didn’t seem like it was working as well as it could!

- With these two ideas in mind, we iterated and landed on our best MVP yet, a Smart Maintenance Window Scheduler. Our idea was simple: let’s overlay different types of usage data, constraint data, and vulnerability state data, and recommend smarter maintenance windows to optimize security while minimizing impact on normal business operations. We quickly received 10+ validating interviews on the idea with DLA employees. We felt good!

- We even received feedback from outside the DLA, demonstrating to us that this idea may have some broader applicability. We were riding high!

- Just as we were getting excited, reality hit. After peaking early, we began what became a long, bumpy road to the end. As the FireEye CSO pointed out, we weren’t appreciating the complexity of the process.

- As we updated our ideas, we continued to receive mixed messages. We heard questions about the feasibility of our ideas.

- But then we also heard that scheduling could definitely be improved upon.

- We heard that operational impact was the only factor that mattered.

- But moments later, we heard that very statement questioned, along with the view that in a post-SolarWinds world, leaders needed to be willing to accept operational impacts for the sake of security.

- We heard it was too simplified.

- But, despite the simplicity, our approach of doing it smarter resonated with people.

- We heard that there may not be an issue at all.

- But then those very same organizations contradicted themselves. And while this was a little disheartening, we felt a little validation that the space was ripe for opportunity because there was SO MUCH misunderstanding and disagreement about the process: no one ACTUALLY KNEW what their uptime requirements were, or IF patching could be optimized, or WHAT the tradeoffs were, and no one could definitively say our idea didn’t make sense.

- Unfortunately, during this time, despite the positive feedback on our ideas, we weren’t able to get the type of buy-in necessary to let us initiate any sort of deployment process, which led to some of the lowest points in our discovery process.

- But we had only been thinking about the bottom-up ground users that we envisioned (i.e., the application program managers and infrastructure owners). This was more of an economic problem than anything: decision makers were the ones that needed to buy in, and we realized we might need to take a top-down approach.

- So, we created an informational sheet that explained our ideas in terms that we thought would resonate with organizational leaders such as the Chief Information Officer.

- As we tried to make our way up to a CIO interview, we received a challenge from the Deputy Director at DLA which set us on the next stage of our journey: we needed to prove it was possible, and unfortunately interviews weren’t going to help us much in this regard.

- So, we hit a stasis. Unfortunately, in the next few weeks, we struggled to find a way to prove this. DLA beneficiaries were hesitant to share information with us.

- We initiated several tasks that we thought would help us prove feasibility. We began developing a model that could demonstrate the security benefits of increasing patch frequency. We dug into literature on patching optimization and on valuing cyber risk in order to translate the tradeoffs. We solicited usage data from DLA applications, hoping to test our hypothesis. While we learned a lot, looking back at this period, we didn’t have enough time to further validate our ideas: instead of continuing to focus on finding that “champion”, we thought we needed to prove some degree of feasibility. We still have mixed feelings about this. If we had been able to pull this all together, would someone have jumped? It’s a question we ask ourselves, and we’re not sure.

- During this time, we also ramped up our search for commercial proxies. We learned that newer “tech”-native companies are already too sophisticated to benefit from our approach, but that there is likely a sizeable number of older, decentralized organizations that would benefit from our value proposition. It felt good that we may have identified a more niche market, and felt even better when we had some interest from Rocket Mortgage (although still no “we’ll buy” leaps) and were even granted access to some Stanford vulnerability management meetings.

- And while we considered different routes to deployment, without a clear champion, we never fully pursued any one path.

- In our final week of discovery, we finally learned about an enterprise platform solution called ServiceNOW. The DLA, its hosting provider the Defense Information Systems Agency (DISA), and Stanford are all transitioning to ServiceNOW, and after a demo from Stanford, we saw that ServiceNOW offers a feature that caters to the scheduling and coordination issue pretty well. Just like Week 2, when we had learned about an area ripe for improvement only to learn of projects already being implemented, we were once again confronted with a similar scenario. However, this time, late in Week 9, we felt a little better. There was some validation in the fact that ServiceNOW, a highly hyped platform, is catering to an area that is less well understood. We also learned that we could scope our Value Proposition more narrowly: while ServiceNOW addresses many of the areas we had identified that have to do with scheduling, it does NOT explain or recommend HOW to schedule more frequently using data and risk analysis. Therefore, we believe there is still potential to improve on the platform, perhaps by building on the ServiceNOW development platform.

- So, as we entered Week 10, we reflected on our journey.

- And here’s where we ended up. To highlight some of the most important learnings: under beneficiaries, we learned about the many different stakeholders across organizations. We identified value propositions and impact factors, and honed in on the key tradeoff in security. We identified possible partners and supporters that could assist with further deployment: commercial integrators in ServiceNOW and SAP, government research at DARPA, and institutional proxies at Stanford.

- So, what are we doing with our learnings? In the end, we settled on a few recommendations that we will be presenting to the CIO tomorrow. We will share our learnings and highlight the fact that ServiceNOW is important but does not provide insights to help make scheduling decisions. To address this, we suggest either developing a tool internally or, if interested, continuing to work with Team Salus as we develop a prototype to prove feasibility. ServiceNOW provides a scheduling tool that reduces friction and prompts thought about the business-impact tradeoff, and we believe it will likely address coordination pain points between change managers, business owners, and infrastructure owners. However, it will likely need top-down emphasis: we’ve learned that processes vary across teams, and just because a tool exists doesn’t mean everyone will use it. Additionally, it’s unclear how well integrated ServiceNOW will be with DISA and the contractors who manage the cloud hosting servers, so we also recommend writing into contracting documents the need to integrate ServiceNOW with change managers on those sides. However, ServiceNOW does not provide insights into those tradeoffs. To improve the DLA’s understanding of these tradeoffs, there are two options. First, develop a business application internally in ServiceNOW’s low-code development environment, which lets customers and independent developers create their own business applications. The Logistics Application team that provided us usage data, or the Accenture team that works with the Enterprise Business Center application, would likely be good teams to undertake this. Second, Team Salus plans to continue developing our ideas to prove feasibility; if the DLA is interested, our efforts could be accelerated with an SBIR.

- Looking back, we learned a lot, both about vulnerability management and about the process of trying to innovate in the national security ecosystem, lessons we hope to take forward with us as part of Team Salus and in our other future endeavors.

- We plan on continuing our research to prove the feasibility of our ideas, and look forward to what the future holds. Thank you for your time.
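
The notes describe overlaying usage data to recommend less disruptive maintenance windows. A minimal sketch of that one step, assuming hourly usage counts are available, might look like the following. The sample numbers are made up for illustration, and `best_window` is a hypothetical helper, not something the team reported building.

```python
def best_window(hourly_usage, duration):
    """Return (start_hour, total_usage) for the contiguous window of
    `duration` hours with the lowest total usage.

    Ties go to the earliest start. Windows do not wrap past the end
    of the list (for example, across midnight).
    """
    if not 0 < duration <= len(hourly_usage):
        raise ValueError("duration must be between 1 and len(hourly_usage)")

    window = sum(hourly_usage[:duration])
    best_start, best_total = 0, window
    for start in range(1, len(hourly_usage) - duration + 1):
        # Slide one hour forward: add the hour entering, drop the hour leaving.
        window += hourly_usage[start + duration - 1] - hourly_usage[start - 1]
        if window < best_total:
            best_start, best_total = start, window
    return best_start, best_total


# Made-up requests per hour for one day (index 0 is midnight).
SAMPLE_USAGE = [40, 25, 10, 5, 8, 30, 90, 200, 340, 400, 420, 410,
                380, 390, 400, 370, 300, 220, 150, 120, 100, 80, 60, 50]

if __name__ == "__main__":
    start, total = best_window(SAMPLE_USAGE, duration=2)
    print(f"Quietest 2-hour window starts at hour {start} (usage {total})")
```

A real recommendation would also have to respect the constraint data and vulnerability state the notes mention; this sketch covers only the usage side of the tradeoff.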