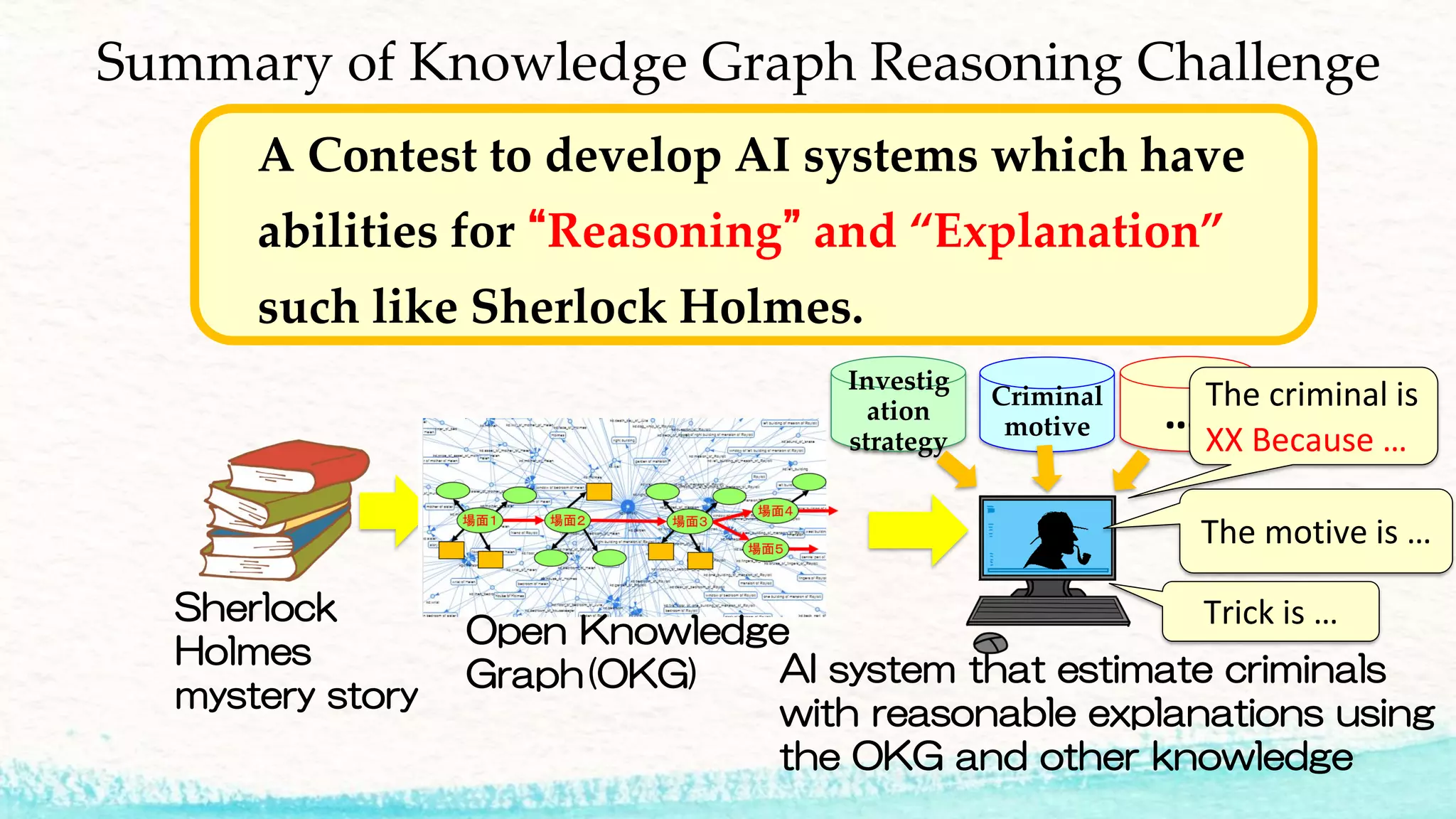

The 2018 Knowledge Graph Reasoning Challenge aimed to develop AI systems that can both reason and explain their conclusions, using the kind of reasoning exemplified by Sherlock Holmes. Key sessions covered knowledge graph construction, evaluation of estimation methods, and subjective assessments of explainability and performance by both experts and the general public. The document outlines the evaluation process, the prize winners, and future challenges that build on this foundational work in explainable AI.