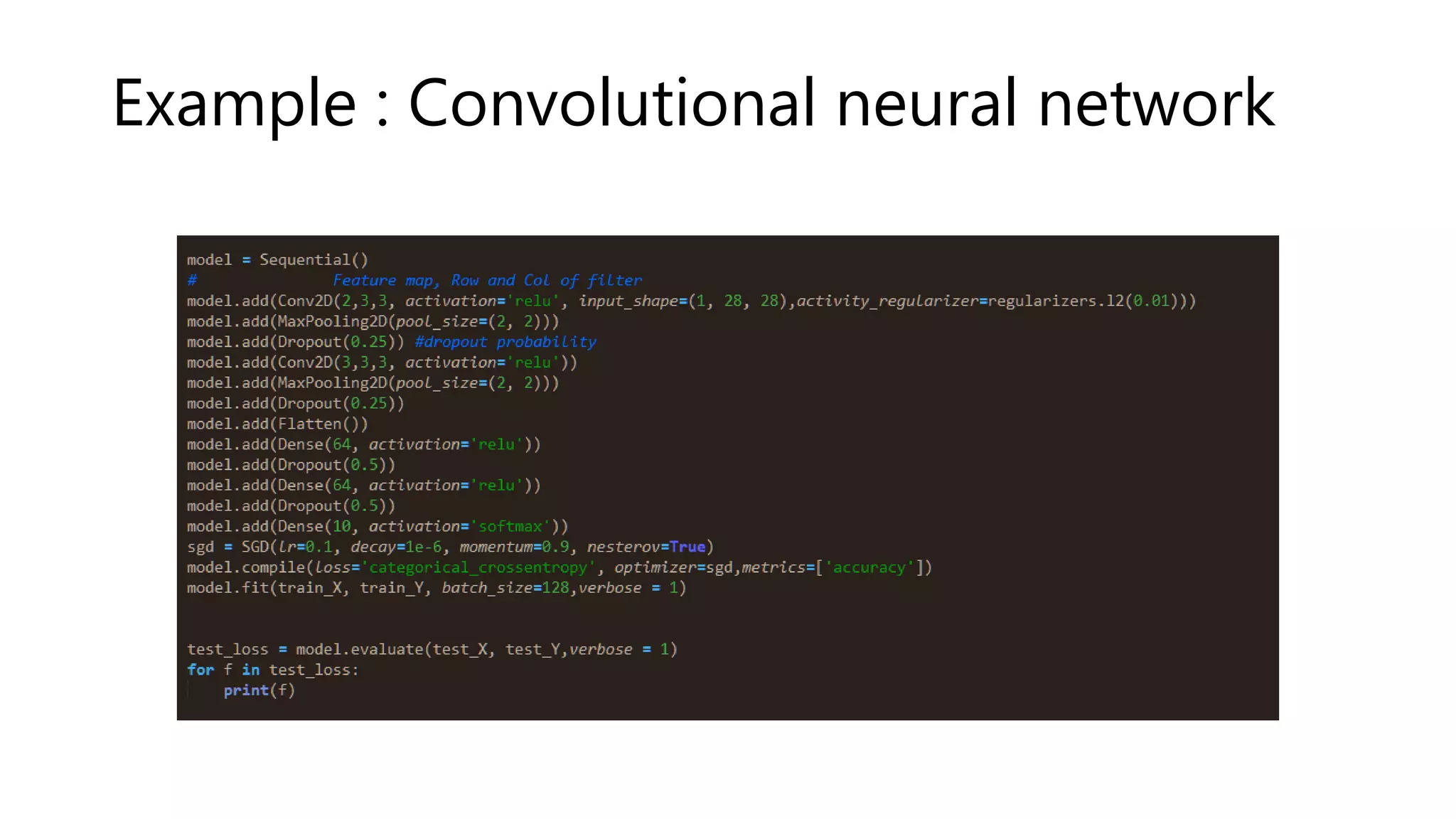

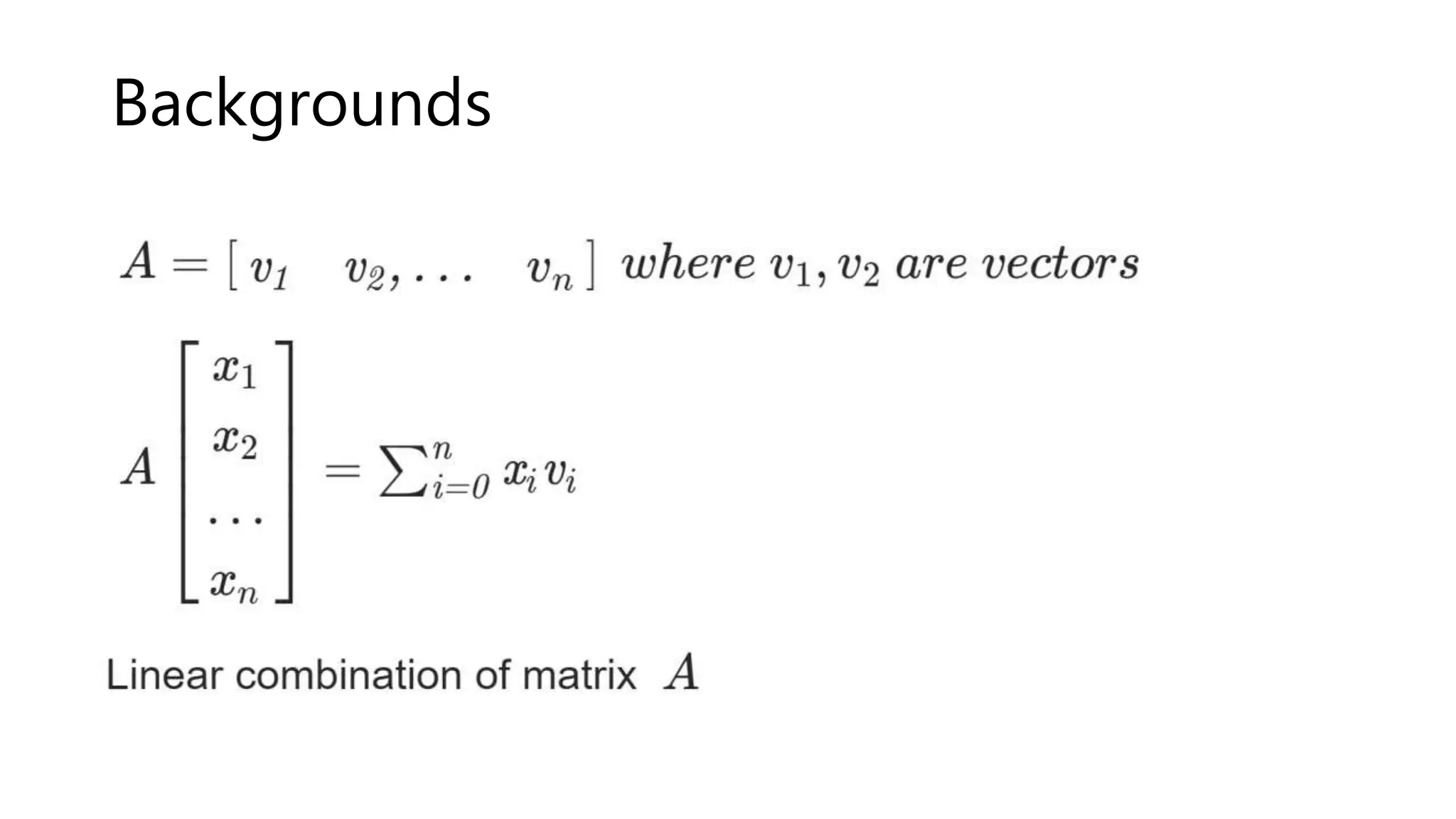

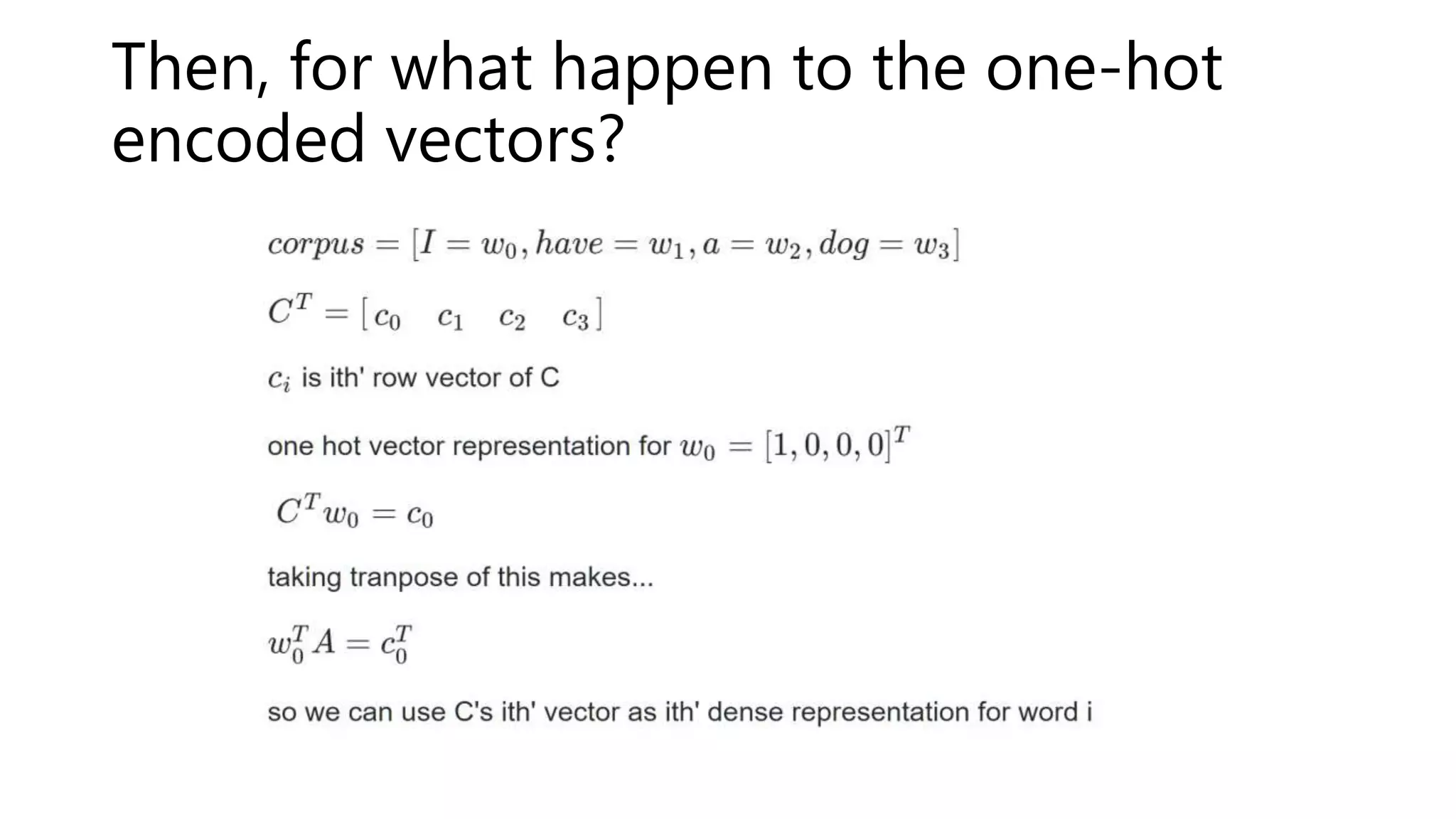

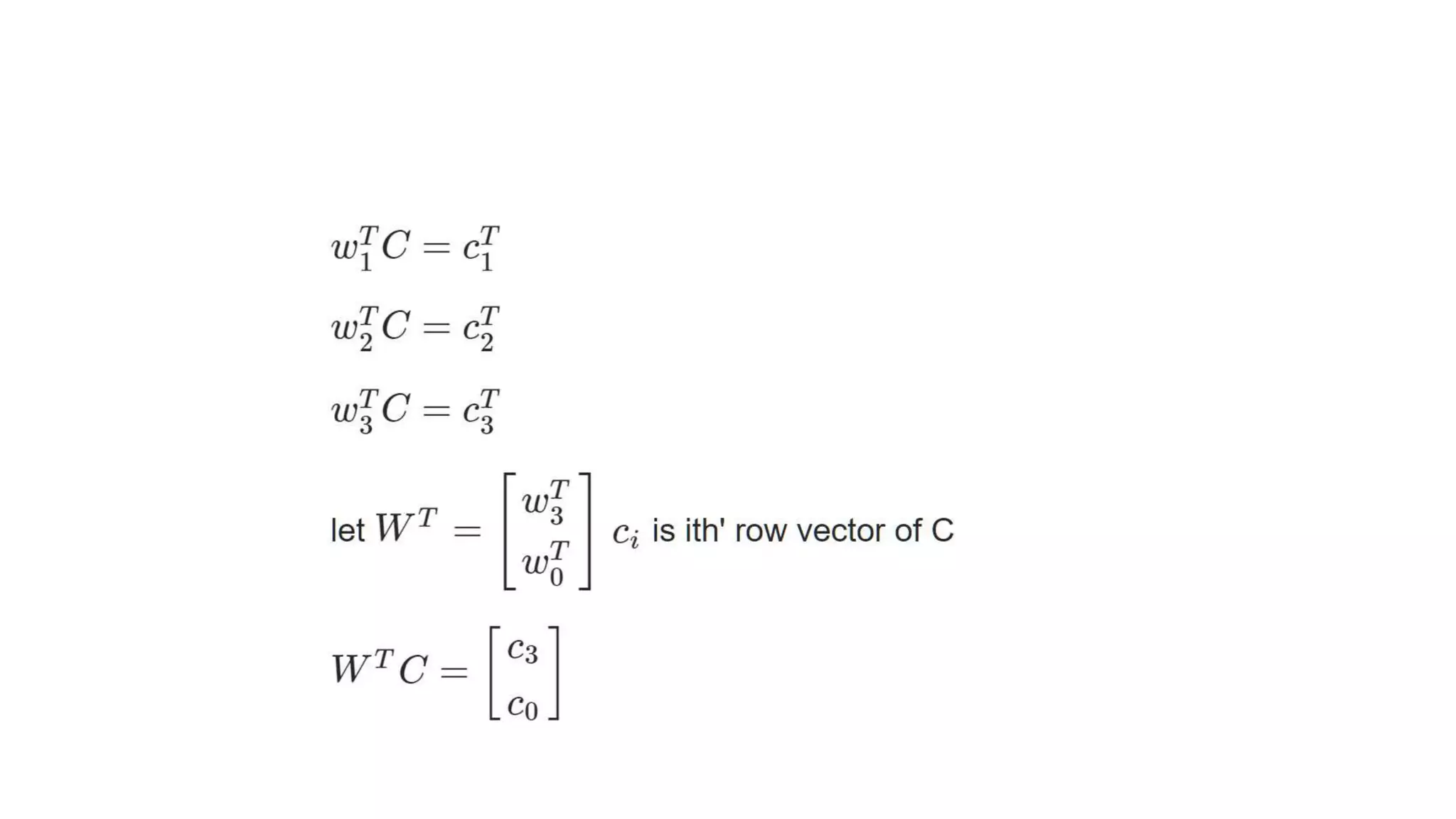

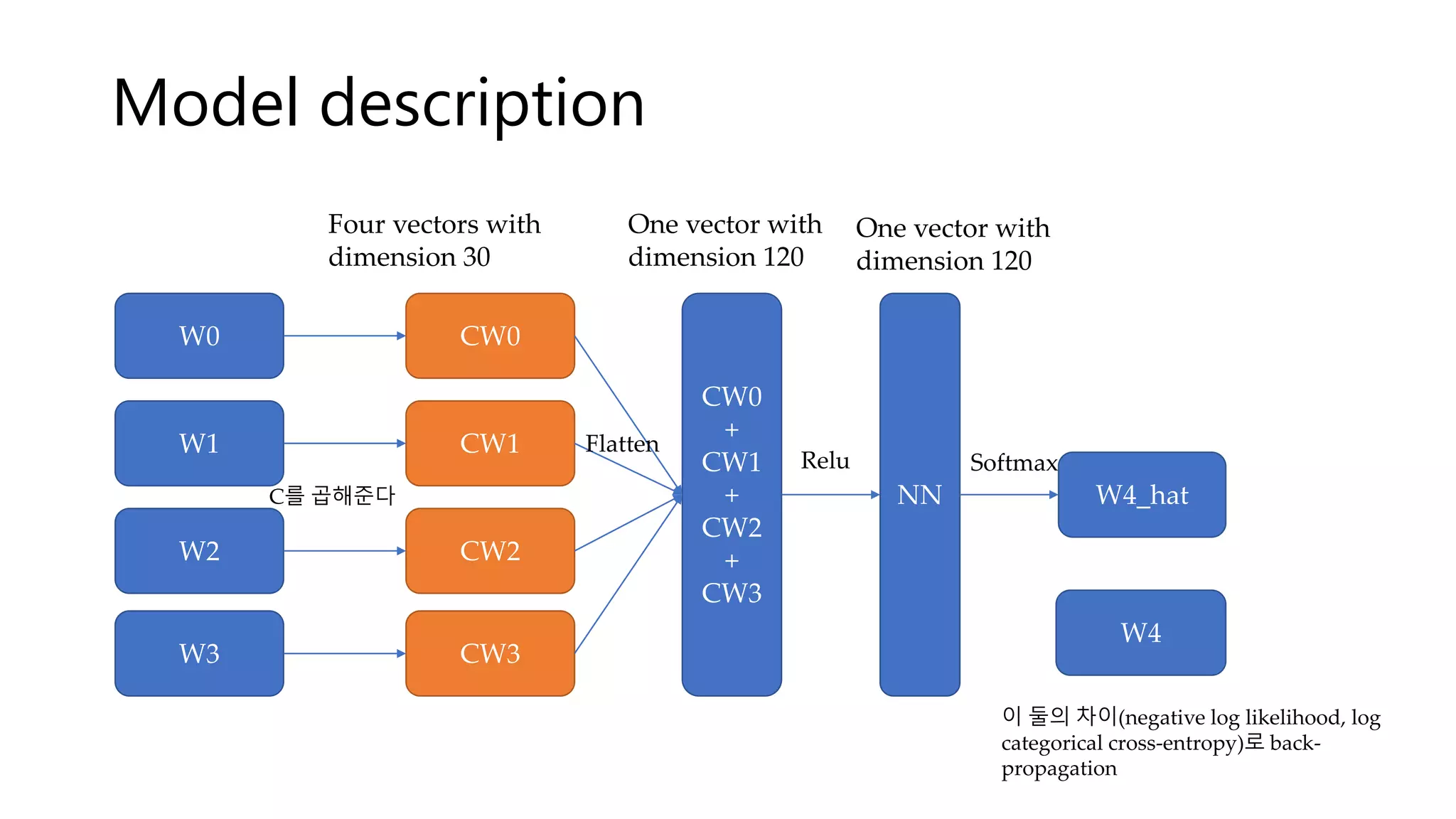

This document summarizes a presentation on word vectorization with neural networks. It introduces Keras, a deep learning library, gives background on word encoding, and describes a model that learns vector representations of words from n-grams so that similar words receive similar vectors. The model is implemented in Keras. The results show that some syntactically or semantically similar words end up with similar vectors, while others, such as adjectives, do not. The presentation closes with possible improvements: different neural network architectures, larger datasets, and better visualization.
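
The summary does not reproduce the presentation's code, so the following is only a minimal sketch of the two steps surrounding such a model: turning text into n-gram training examples, and comparing learned vectors. It uses plain Python rather than Keras, and every name in it (`build_vocab`, `ngram_examples`, `cosine_similarity`), the toy sentence, and the choice to treat the last word of each n-gram as the prediction target are illustrative assumptions, not the presenter's implementation.

```python
import math


def build_vocab(tokens):
    """Map each distinct word to an integer index, in order of first appearance."""
    vocab = {}
    for tok in tokens:
        if tok not in vocab:
            vocab[tok] = len(vocab)
    return vocab


def ngram_examples(tokens, vocab, n=3):
    """Turn a token list into (context_indices, target_index) pairs.

    Assumption: the first n-1 words of each n-gram form the context and
    the last word is the target. The presentation may split n-grams
    differently.
    """
    examples = []
    for i in range(len(tokens) - n + 1):
        gram = tokens[i:i + n]
        context = [vocab[w] for w in gram[:-1]]
        target = vocab[gram[-1]]
        examples.append((context, target))
    return examples


def cosine_similarity(u, v):
    """Cosine of the angle between two non-zero vectors of equal length."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)


# Toy corpus (hypothetical), split on whitespace.
tokens = "the cat sat on the mat".split()
vocab = build_vocab(tokens)
examples = ngram_examples(tokens, vocab, n=3)
```

In a setup like the one described, pairs such as those in `examples` would be fed to a network whose first layer holds one trainable vector per vocabulary index. After training, `cosine_similarity` is one common way to check whether two words ended up with similar vectors.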