WH1112 Imperialism

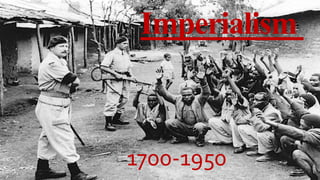

Imperialism refers to the period from roughly 1700 to 1950 in which powerful Western nations expanded their political and economic control over weaker nations and territories throughout Africa, Asia, and other parts of the world. European countries and the United States aggressively colonized these regions and asserted their authority through military force. During this era, much of Asia and Africa came under the direct or indirect domination of Western colonial governments.

Recommended

Verona

Verona, Italy has a long history dating back to the 4th century BC when it was an important commercial and political center for the Romans. In the late 1200s, it became the site of power struggles between rival families like the della Scalas who ruled over the city. Some of Verona's most popular tourist destinations include the Arena, built in the 2nd century AD which hosts large opera performances, several bridges like the Ponte Scaligero and Ponte Pietra rebuilt after WWII, and Juliet's balcony from Shakespeare's Romeo and Juliet which dates to the 13th century. The document also discusses Verona's central square Piazza dei Signori and open air market Piazz

The City of Verona

Verona, Italy has a long history dating back to the 4th century BC when it was an important commercial and political center for the Romans. In the late 1200s, it became the focus of power struggles between rival families like the della Scalas, who ruled Verona in the 1300s and built defensive structures like Castelvecchio. Today, Verona attracts many tourists to landmarks from its Roman era like the Arena, and sites associated with Shakespeare's Romeo and Juliet like Juliet's balcony.

CRUCIGRAMA imperialismo y colonialismo

Imperialism and colonialism refer to the expansion of formal and informal control or sovereignty over foreign territories and peoples. European countries aggressively expanded their empires in the late 19th century, occupying lands in Africa, Asia, and other regions. This period saw an increase in the political and economic domination of weaker states and peoples, often through military campaigns and the establishment of colonial administrations.

2. 1530 1748

The Spanish branch of the Habsburg family dominated Italy between 1530-1700 under Emperor Charles V and his successor Philip. Charles V directly controlled the Kingdom of Naples, Sicily and the Duchy of Milan and wielded deep influence elsewhere, reorganizing some states and ushering in a period of relative peace and stability. Meanwhile, the city-states of Italy transformed into regional states. From 1701-1748, Western Europe went to war over succession of the Spanish throne, with battles in Italy and the region becoming a prize fought over between the Bourbons and Habsburgs. The Treaty of Aix-la-Chapelle ended shifting control in Italy and brought about 50 years of relative peace.

Historical figures of ancient rome

The document summarizes several important historical figures from Ancient Rome, including:

- Romulus and Remus, the twin founders of Rome according to mythology who were raised by a she-wolf and established the city.

- Hannibal, the Carthaginian military leader who surprised the Romans by crossing the Alps with elephants and winning several battles against Rome in the Second Punic War.

- Cicero, one of Rome's greatest orators who introduced Romans to Greek philosophy and had a major influence on later thinkers.

- Julius Caesar and his adopted heir Octavian (Augustus), the first emperor who ruled Rome from 27 BC until his death, establishing it as a principate instead of a

William shakespeare

William Shakespeare (1564-1616) was an English playwright, poet, and actor who is considered one of the greatest writers in the English language. Some key details about Shakespeare's life and works include that he was born in Stratford-upon-Avon and married Anne Hathaway in 1582, fathering three children. In the 1590s he began a successful career in London as an actor and playwright. His plays include comedies, histories, and tragedies and his works have been translated into every major language. Shakespeare's plays were highly popular both during his lifetime and after his death.

FCSarch 16 post-Baroque France 2

Baron Georges-Eugène Haussmann implemented a massive urban planning project in Paris under the direction of Napoleon III between 1854 and 1870. Haussmann demolished narrow, winding medieval streets and replaced them with wide boulevards and public parks to improve sanitation, traffic circulation, and military defense. He also built new government buildings and cultural institutions, standardizing the appearance of structures along the new avenues. This transformation of Paris' street layout and architecture is known as "Haussmannization" and dramatically reshaped the city.

AH 2112: World War I

World War I began in 1914 and lasted until 1918. The war was fought between two alliances, the Allied Powers and the Central Powers. The Central Powers (Germany, Austria-Hungary, Bulgaria, and the Ottoman Empire) fought against the Allied Powers of France, Russia, Britain, Italy, Japan, and later the United States.

World war ii

The document provides a detailed overview of the key events of World War II from 1939-1945. It describes how the political and economic instability in Germany following WWI led to the rise of Hitler and the Nazis. It then outlines Germany's aggression across Europe in the late 1930s that precipitated the start of WWII. The document discusses the major military campaigns and battles across Europe and in the Pacific theater between the Allied and Axis powers. It also describes the implementation of the Holocaust by Nazi Germany and its allies that resulted in the genocide of approximately 6 million European Jews.

US 2111 Jacksonian democracy

Jacksonian Democracy emerged in the 1820s-1860s era and referred to both Andrew Jackson's presidency and the democratic reforms that accompanied his leadership. It aimed to reduce the power of elites and expand political power to more white men. However, it also took racism and slavery for granted. The market revolution transformed the economy but also generated tensions as not all whites benefited equally. Jacksonian policies aimed to curb the power of banks and centralized economic authority that favored the wealthy. However, the growing conflicts over slavery threatened to divide the major parties and the nation.

US 2111 Jeffersonian america

The document summarizes the development of political parties and elections in the early United States. It discusses the emergence of the Federalist and Democratic-Republican parties in the 1790s, culminating in Thomas Jefferson's election in 1800 as the first President from the opposing party. This peaceful transfer of power established an important precedent. The era was dominated by international events related to the French Revolution and Napoleon. Jefferson expanded the country through the Louisiana Purchase. His presidency saw the Supreme Court assert its power of judicial review in Marbury v. Madison.

US 2111 American Revolution

The document summarizes the key events of the American Revolutionary War and early United States history from 1765-1783. It discusses the growing tensions between British colonies and the colonial government, key battles of the Revolutionary War, French involvement in 1778 that turned the conflict into an international war, the American victory at Yorktown in 1781, and the adoption of the Articles of Confederation in 1777 which established the first national government of the US but had significant weaknesses.

US 1: Settlement of north america

This document summarizes the key events surrounding the settlement of North America by European powers between 1000-1650 CE. It discusses the political, economic, and technological developments in Europe that enabled exploration, including the Protestant Reformation, rise of nation-states, and advances in navigation. It then outlines the colonial efforts and impacts of the four major European powers in North America: Spain, France, the Netherlands, and England. Specific colonies founded by each are named and their economies, populations, and systems of governance discussed. The document also examines the growth of slavery in the English colonies and its importance to the development of plantation economies in the Americas.

US 1: History of native american tribes

The document summarizes the major migrations and cultures of the Americas between 10,000 BC and 1500 AD. It describes how ancient Siberians first crossed into Alaska over a land bridge and then spread throughout the Americas. It then outlines several major cultural periods and groups that developed, including the Clovis, Poverty Point, Hopewell, Coles Creek, Hohokam, Mississippian, and Iroquois cultures, and their characteristic features such as mound building, irrigation, and confederacy formation.

WH 1111 Rome

Rome began as a small town on the Tiber River and grew into a massive empire through military expansion and conquest over centuries. It transitioned from a monarchy to a republic ruled by elected officials and senators. Internal political struggles and the rise of influential figures like Julius Caesar led to the end of the republic and establishment of the Roman Empire under Augustus in 27 BC. The empire reached its peak but then declined due to economic troubles, corruption, and invasions, with the western half falling in 476 AD.

WH 1111 Ancient greece

Ancient Greece emerged as the birthplace of Western civilization between 5000-300 BC. Key developments included the rise of city-states like Athens and Sparta between 800-500 BC, the Persian Wars in the 5th century BC which united the Greeks against an outside threat, and the Peloponnesian War between Athens and Sparta which weakened Greece and allowed Philip II and his son Alexander the Great to conquer the region.

WH 1111 Ancient china

Ancient China experienced several important dynasties between 5,000-200 BC. The Xia Dynasty is believed to have been the first, founded by Yu the Great to control flooding of the Yellow River. The next major dynasty was the Shang Dynasty, the first for which there is both archaeological and documentary evidence. Two important developments during the Shang were the earliest forms of Chinese writing and the beginning of bronze metalworking. The Zhou Dynasty overthrew the Shang in 1046 BC and saw further developments including the spread of ironworking, new agricultural technologies, and the philosophy of Confucianism. During the Spring and Autumn and Warring States periods, China fragmented into many warring states and new philosophies like

WH 1111 Ancient india

The ancient Indus Valley Civilization developed around 5000 BCE along the Indus River valley. Two major cities, Mohenjo-Daro and Harappa, had sophisticated urban planning with streets laid in a grid and advanced sanitation systems. Around 1800 BCE, the civilization began to decline due to drought or other factors. After this, Aryan tribes migrated into the region, establishing the Vedic civilization between 1500-500 BCE. During this period, sacred texts like the Vedas were composed, introducing Hindu concepts like dharma, karma, samsara and the caste system. The Mauryan Empire then unified much of the Indian subcontinent under Emperor Ashoka in the 3rd century BCE after adopting Buddhism following

WH 1111 Ancient egypt

Ancient Egypt was one of the earliest and most influential civilizations, located along the Nile River in Northeast Africa. For almost 30 centuries from its unification around 3100 BC until its conquest by Alexander the Great in 332 BC, Ancient Egypt was the preeminent civilization in the Mediterranean world. Ancient Egyptian history can be divided into eight distinct periods, including the Old Kingdom known for pyramid building, the Middle Kingdom considered Egypt's classical age, and the New Kingdom which saw Egypt's greatest expansion. Ancient Egyptian religion was polytheistic and centered around the king and gods, with religion playing an integral role in all aspects of Egyptian society.

Early civilization: Mesopotamia, Assyria, and Persia

1) Mesopotamia was the site of early civilization between the Tigris and Euphrates Rivers and saw the development of complex societies, cities, writing, and empires like Akkad and Babylon.

2) Sumerian cities like Uruk and Ur developed systems of irrigation canals, surplus agriculture, and specialized occupations, laying the foundations for civilization.

3) Kings like Sargon of Akkad and Hammurabi of Babylon built large empires through military conquest and established some of the world's first legal codes to govern their populations.

WH 1111, Human Origins and the Beginning of History

This document discusses the origins of human history, beginning with early hominins in Africa millions of years ago. Key developments that laid the foundations for modern humans included increased brain size, language, tool use, and gender roles. Around 200,000 years ago, Homo sapiens evolved in Africa and later migrated worldwide. Evidence suggests religion and culture predate recorded history. The Neolithic Revolution around 12,000 years ago marked the transition to agriculture and settlement, allowing larger human populations to form. Domestication of plants and animals in locations worldwide was a major part of this transition.

WH 111, Historical methodology/credibility

This document discusses different methods of determining the credibility of claims: philosophically, scientifically, and historically. It outlines the key aspects of evaluating credibility scientifically and philosophically. For history, it identifies three epistemological aspects: historical events or facts, the use of narrative to connect facts and explain causation, and a commitment to naturalism in explanations. The document also addresses challenges in evaluating source credibility today and factors to consider like evidence, inaccuracies, and expert opinions. It notes expertise can be undermined by claims outside an area, factual errors, conflicts of interest, or opposing other experts.

WH 1112 Radical islam

Radical Islam emerged in the 7th century and has continued to develop over the past 14 centuries. It advocates a strict interpretation of Sharia law and views more moderate interpretations of Islam as heretical. Radical Islamic groups like Al-Qaeda and ISIS have used terrorism and violence in an attempt to establish Islamic states governed by their extreme interpretation of Sharia.

WH1112 The industrial revolution

The Industrial Revolution occurred between 1760-1840 and marked a major transition in history with the development of new manufacturing processes and technologies. This included shifting from hand production methods to machines, new chemical and iron production processes, and the increased use of steam power. The Industrial Revolution significantly improved efficiency and led to unprecedented economic and population growth, but also often poor living and working conditions for many. It began in the United Kingdom and spread to Europe.

WH1112 The enlightenment

The document provides an overview of the Enlightenment period in Europe from the 17th to 19th centuries. It discusses key themes and time periods of the Enlightenment, including the Early Enlightenment from 1685-1730, the High Enlightenment from 1730-1780, and the Late Enlightenment from 1780-1815. It also summarizes major philosophical positions that emerged during the Enlightenment, such as rationalism, empiricism, skepticism, and how they influenced views of knowledge. Additionally, it outlines political revolutions and theories of the Enlightenment as well as perspectives on religion.

WH 1112 The scientific revolution

The Scientific Revolution saw major changes in how science was approached and natural philosophy was studied. Key figures like Copernicus, Kepler, Galileo and Descartes established a more mathematical and empirically-based approach focused on experimentation and observation over philosophical speculation. Newton built on this work in his Philosophiae Naturalis Principia Mathematica, formulating the laws of motion and universal gravitation that came to dominate scientists' views of the physical universe for centuries.

WH 1112, The Age of Discovery, Michael Granado

The document discusses the Age of Discovery from the 15th to 18th centuries, when European powers such as Portugal and Spain began exploring and colonizing other parts of the world. It identifies several factors that contributed to Europe's dominance, including competition between states, advances in science and technology like ship design, emerging property rights and work ethics, and the growing consumer society. It then provides details on major Portuguese, Spanish, and other European explorers and their voyages. It also summarizes the major pre-Columbian civilizations of the Americas, including the Maya, Aztec, and Inca, highlighting some of their cultural achievements and political structures.

WH1112, Unit 1: The Protestant Reformation

The Protestant Reformation began in 16th-century Europe as a reaction against corrupt practices in the Catholic Church. Martin Luther protested the sale of indulgences in 1517 with his 95 Theses. This sparked the Reformation and divided Western Christianity between Protestant and Catholic denominations. Luther's theology emphasized salvation by faith alone rather than works. His refusal to recant at the Diet of Worms in 1521 had wide religious, political, and intellectual impacts that transformed Europe.

clinical examination of hip joint (1).pdf

Describes the clinical examination of orthopaedic conditions.

Supplementary English Exercises for Grade 9, Full Year - GLOBAL SUCCESS - 2024-2025 School Year - ...

Nguyen Thanh Tu Collection

https://app.box.com/s/tacvl9ekroe9hqupdnjruiypvm9rdane

More from Michael Granado

World war ii

The document provides a detailed overview of the key events of World War II from 1939-1945. It describes how the political and economic instability in Germany following WWI led to the rise of Hitler and the Nazis. It then outlines Germany's aggression across Europe in the late 1930s that precipitated the start of WWII. The document discusses the major military campaigns and battles across Europe and in the Pacific theater between the Allied and Axis powers. It also describes the implementation of the Holocaust by Nazi Germany and its allies that resulted in the genocide of approximately 6 million European Jews.

US 2111 Jacksonian democracy

Jacksonian Democracy emerged in the 1820s-1860s era and referred to both Andrew Jackson's presidency and the democratic reforms that accompanied his leadership. It aimed to reduce the power of elites and expand political power to more white men. However, it also took racism and slavery for granted. The market revolution transformed the economy but also generated tensions as not all whites benefited equally. Jacksonian policies aimed to curb the power of banks and centralized economic authority that favored the wealthy. However, the growing conflicts over slavery threatened to divide the major parties and the nation.

US 2111 Jeffersonian america

The document summarizes the development of political parties and elections in the early United States. It discusses the emergence of the Federalist and Democratic-Republican parties in the 1790s, culminating in Thomas Jefferson's election in 1800 as the first President from the opposing party. This peaceful transfer of power established an important precedent. The era was dominated by international events related to the French Revolution and Napoleon. Jefferson expanded the country through the Louisiana Purchase. His presidency saw the Supreme Court assert its power of judicial review in Marbury v. Madison.

US 2111 American Revolution

The document summarizes the key events of the American Revolutionary War and early United States history from 1765-1783. It discusses the growing tensions between British colonies and the colonial government, key battles of the Revolutionary War, French involvement in 1778 that turned the conflict into an international war, the American victory at Yorktown in 1781, and the adoption of the Articles of Confederation in 1777 which established the first national government of the US but had significant weaknesses.

US 1: Settlement of north america

This document summarizes the key events surrounding the settlement of North America by European powers between 1000-1650 CE. It discusses the political, economic, and technological developments in Europe that enabled exploration, including the Protestant Reformation, rise of nation-states, and advances in navigation. It then outlines the colonial efforts and impacts of the four major European powers in North America: Spain, France, the Netherlands, and England. Specific colonies founded by each are named and their economies, populations, and systems of governance discussed. The document also examines the growth of slavery in the English colonies and its importance to the development of plantation economies in the Americas.

US 1: History of native american tribes

The document summarizes the major migrations and cultures that inhabited the Americas between 10,000 BC to 1500 AD. It describes how ancient Siberians first crossed into Alaska over a land bridge and then spread throughout the Americas. It then outlines several major cultural periods and groups that developed, including the Clovis, Poverty Point, Hopewell, Coles Creek, Hohokam, Mississippian, and Iroquois cultures and their characteristic features such as mound building, irrigation, and confederacy formation.

WH 1111 Rome

Rome began as a small town on the Tiber River and grew into a massive empire through military expansion and conquest over centuries. It transitioned from a monarchy to a republic ruled by elected officials and senators. Internal political struggles and the rise of influential figures like Julius Caesar led to the end of the republic and establishment of the Roman Empire under Augustus in 27 BC. The empire reached its peak but then declined due to economic troubles, corruption, and invasions, with the western half falling in 476 AD.

WH 1111 Ancient greece

Ancient Greece emerged as the birthplace of Western civilization between 5000-300 BC. Key developments included the rise of city-states like Athens and Sparta between 800-500 BC, the Persian Wars in the 5th century BC which united the Greeks against an outside threat, and the Peloponnesian War between Athens and Sparta which weakened Greece and allowed Philip II and his son Alexander the Great to conquer the region.

WH 1111 Ancient china

Ancient China experienced several important dynasties between 5,000-200 BC. The Xia Dynasty is believed to have been the first, founded by Yu the Great to control flooding of the Yellow River. The next major dynasty was the Shang Dynasty, the first for which there is both archaeological and documentary evidence. Two important developments during the Shang were the earliest forms of Chinese writing and the beginning of bronze metalworking. The Zhou Dynasty overthrew the Shang in 1046 BC and saw further developments including the spread of ironworking, new agricultural technologies, and the philosophy of Confucianism. During the Spring and Autumn and Warring States periods, China fragmented into many warring states and new philosophies like

WH 1111 Ancient india

The ancient Indus Valley Civilization developed around 5000 BCE along the Indus River valley. Two major cities, Mohenjo-Daro and Harappa, had sophisticated urban planning with streets laid in a grid and advanced sanitation systems. Around 1800 BCE, the civilization began to decline due to drought or other factors. After this, Aryan tribes migrated into the region, establishing the Vedic civilization between 1500-500 BCE. During this period, sacred texts like the Vedas were composed, introducing Hindu concepts like dharma, karma, samsara and the caste system. The Mauryan Empire then unified much of the Indian subcontinent under Emperor Ashoka in the 3rd century BCE after adopting Buddhism following

WH 1111 Ancient egypt

Ancient Egypt was one of the earliest and most influential civilizations, located along the Nile River in Northeast Africa. For almost 30 centuries from its unification around 3100 BC until its conquest by Alexander the Great in 332 BC, Ancient Egypt was the preeminent civilization in the Mediterranean world. Ancient Egyptian history can be divided into eight distinct periods, including the Old Kingdom known for pyramid building, the Middle Kingdom considered Egypt's classical age, and the New Kingdom which saw Egypt's greatest expansion. Ancient Egyptian religion was polytheistic and centered around the king and gods, with religion playing an integral role in all aspects of Egyptian society.

Early civilization: Mesopotamia, Assyria, and Persia

1) Mesopotamia was the site of early civilization between the Tigris and Euphrates Rivers and saw the development of complex societies, cities, writing, and empires like Akkad and Babylon.

2) Sumerian cities like Uruk and Ur developed systems of irrigation canals, surplus agriculture, and specialized occupations, laying the foundations for civilization.

3) Kings like Sargon of Akkad and Hammurabi of Babylon built large empires through military conquest and established some of the world's first legal codes to govern their populations.

WH 1111, Human Origins and the Beginning of History

This document discusses the origins of human history, beginning with early hominins in Africa millions of years ago. Key developments that laid the foundations for modern humans included increased brain size, language, tool use, and gender roles. Around 200,000 years ago, Homo sapiens evolved in Africa and later migrated worldwide. Evidence suggests religion and culture predate recorded history. The Neolithic Revolution around 12,000 years ago marked the transition to agriculture and settlement, allowing larger human populations to form. Domestication of plants and animals in locations worldwide was a major part of this transition.

WH 111, Historical methodology/credibility

This document discusses different methods of determining the credibility of claims: philosophically, scientifically, and historically. It outlines the key aspects of evaluating credibility scientifically and philosophically. For history, it identifies three epistemological aspects: historical events or facts, the use of narrative to connect facts and explain causation, and a commitment to naturalism in explanations. The document also addresses challenges in evaluating source credibility today and factors to consider like evidence, inaccuracies, and expert opinions. It notes expertise can be undermined by claims outside an area, factual errors, conflicts of interest, or opposing other experts.

WH 1112 Radical islam

Radical Islam emerged in the 7th century and has continued to develop over the past 14 centuries. It advocates a strict interpretation of Sharia law and views more moderate interpretations of Islam as heretical. Radical Islamic groups like Al-Qaeda and ISIS have used terrorism and violence in an attempt to establish Islamic states governed by their extreme interpretation of Sharia.

WH1112 The industrial revolution

The Industrial Revolution occurred between 1760 and 1840 and marked a major transition in history with the development of new manufacturing processes and technologies. This included the shift from hand production methods to machines, new chemical and iron production processes, and the increased use of steam power. The Industrial Revolution significantly improved efficiency and led to unprecedented economic and population growth, but it also often brought poor living and working conditions for many. It began in the United Kingdom and spread to continental Europe.

WH1112 The enlightenment

The document provides an overview of the Enlightenment period in Europe from the 17th to 19th centuries. It discusses key themes and time periods of the Enlightenment, including the Early Enlightenment from 1685-1730, the High Enlightenment from 1730-1780, and the Late Enlightenment from 1780-1815. It also summarizes major philosophical positions that emerged during the Enlightenment, such as rationalism, empiricism, and skepticism, and how they influenced views of knowledge. Additionally, it outlines political revolutions and theories of the Enlightenment as well as perspectives on religion.

WH 1112 The scientific revolution

The Scientific Revolution saw major changes in how science was approached and natural philosophy was studied. Key figures like Copernicus, Kepler, Galileo and Descartes established a more mathematical and empirically-based approach focused on experimentation and observation over philosophical speculation. Newton built on this work in his Philosophiae Naturalis Principia Mathematica, formulating the laws of motion and universal gravitation that came to dominate scientists' views of the physical universe for centuries.

WH 1112, The Age of Discovery, Michael Granado

The document discusses the Age of Discovery from the 15th to 18th centuries, when European powers such as Portugal and Spain began exploring and colonizing other parts of the world. It identifies several factors that contributed to Europe's dominance, including competition between states, advances in science and technology like ship design, emerging property rights and work ethics, and the growing consumer society. It then provides details on major Portuguese, Spanish and other European explorers and their voyages. It also summarizes the major pre-Columbian civilizations of the Americas, including the Maya, Aztec and Inca empires, highlighting some of their cultural achievements and political structures.

WH1112, Unit 1: The Protestant Reformation

The Protestant Reformation began in 16th-century Europe as a reaction against corrupt practices in the Catholic Church. Martin Luther protested the sale of indulgences in 1517 with his 95 Theses. This sparked the Reformation and divided Western Christianity between Protestant and Catholic denominations. Luther's theology emphasized salvation by faith alone rather than works. His refusal to recant at the Diet of Worms in 1521 had wide religious, political, and intellectual impacts that transformed Europe.

More from Michael Granado (20)

Early civilization: Mesopotamia, Assyria, and Persia

WH 1111, Human Origins and the Beginning of History
