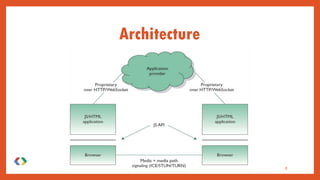

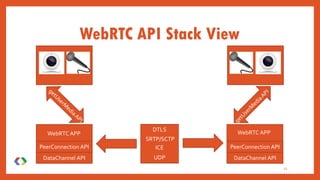

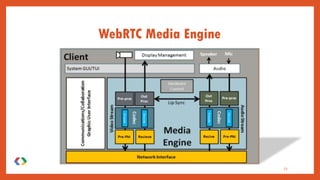

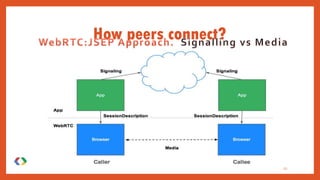

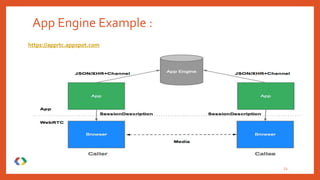

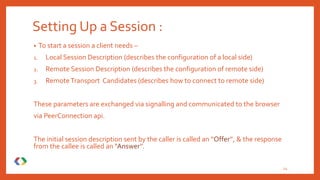

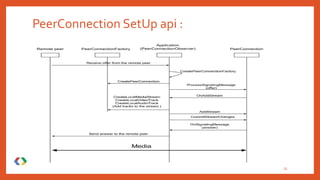

WebRTC (Web Real-Time Communication) is an emerging standard for real-time, peer-to-peer communication between web browsers. It requires no plugins, which simplifies integration. It supports audio and video calls as well as data exchange, and it aims to make real-time communication secure and inexpensive enough for enterprise use. WebRTC is primarily browser-focused and has limitations: peers still need a server for user discovery and signalling, and multiparty conferencing and recording remain challenging.
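
To illustrate why a server is still needed for discovery and signalling, here is a minimal in-memory sketch of a relay that lets peers find each other and exchange session setup messages. WebRTC does not define a signalling format or transport, so everything here is an assumption made for illustration: the `SignalingHub` class, the `SignalMessage` shape, and the peer ids are hypothetical, and a real deployment would sit behind a network transport such as WebSockets rather than direct function calls.

```typescript
// Hypothetical message shape: WebRTC leaves the signalling format to the application.
type SignalKind = "offer" | "answer" | "candidate";

interface SignalMessage {
  kind: SignalKind;
  from: string;    // sender's peer id
  to: string;      // recipient's peer id
  payload: string; // e.g. an SDP description or a serialized ICE candidate
}

type Handler = (msg: SignalMessage) => void;

// In-memory stand-in for a signalling server (an assumption, not a WebRTC API).
class SignalingHub {
  private peers = new Map<string, Handler>();

  // User discovery: a peer announces itself under an id.
  register(peerId: string, onMessage: Handler): void {
    if (this.peers.has(peerId)) {
      throw new Error(`peer id already in use: ${peerId}`);
    }
    this.peers.set(peerId, onMessage);
  }

  unregister(peerId: string): void {
    this.peers.delete(peerId);
  }

  // Lets a peer see who is reachable, sorted for stable output.
  listPeers(): string[] {
    return Array.from(this.peers.keys()).sort();
  }

  // Signalling: relay a message to its recipient.
  // Returns false when the recipient is not registered.
  send(msg: SignalMessage): boolean {
    const handler = this.peers.get(msg.to);
    if (!handler) return false;
    handler(msg);
    return true;
  }
}

// Usage: alice discovers bob through the hub and sends him an offer.
const hub = new SignalingHub();
const bobInbox: SignalMessage[] = [];
hub.register("alice", () => {});
hub.register("bob", (m) => { bobInbox.push(m); });
hub.send({ kind: "offer", from: "alice", to: "bob", payload: "<SDP offer placeholder>" });
```

Only the setup messages pass through the hub in this sketch. Once both peers have exchanged an offer, an answer, and candidates, the media and data are meant to flow directly between the browsers.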