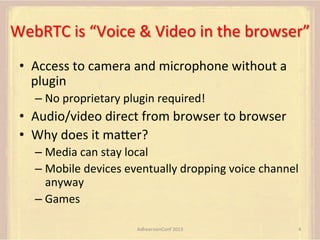

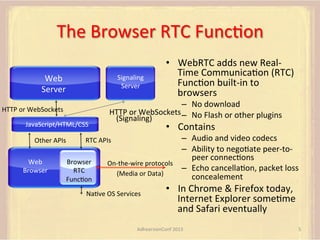

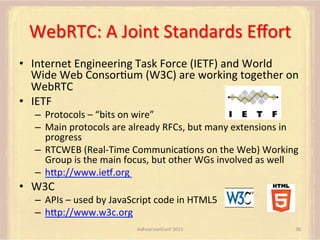

WebRTC enables real-time voice and video communication directly in web browsers without plugins. Its peer-to-peer connections let media travel directly between browsers rather than through a central server, and it is built on open standards from the IETF and W3C. Key features include audio and video stream handling, NAT traversal via STUN and TURN servers, and encrypted media transport over SRTP.

![WebRTC with SIP
Diagram: Web Server; SIP Proxy/Registrar Server; Browser M and Browser T (each running a JavaScript SIP UA). Links: HTTP (HTML5/CSS/JavaScript), WebSocket (SIP), SRTP Media.
• Browser runs a SIP User Agent by running JavaScript from Web Server
• SRTP media connection uses WebRTC APIs
• Details in [draft-ietf-sipcore-websocket] that defines SIP transport over WebSockets
AdhearsionConf 2013](https://image.slidesharecdn.com/introtowebrtcahnconf2013-140113122046-phpapp01/85/WebRTC-Overview-by-Dan-Burnett-13-320.jpg)
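The signaling leg of this diagram can be sketched as follows. This is a minimal sketch, assuming the `sip` WebSocket subprotocol name that RFC 7118 (the published SIP-over-WebSocket specification) registers; `openSipSocket` and the example URL are hypothetical, and the WebSocket constructor is passed in so the snippet can also run outside a browser.

```javascript
// Hypothetical helper: open the signaling socket a JavaScript SIP UA would use.
// WebSocketCtor is injected; in a browser, pass the global WebSocket.
function openSipSocket(url, WebSocketCtor) {
  // The second argument asks the server to select the "sip" subprotocol
  // during the WebSocket handshake.
  return new WebSocketCtor(url, "sip");
}
```

In a browser this might be called as `openSipSocket("wss://proxy.example.invalid", WebSocket)`; SIP messages would then travel as WebSocket frames while the SRTP media is handled separately by the WebRTC APIs.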

![Mobile browser code outline
var pc;
var configuration =
  {"iceServers":[{"url":"stun:198.51.100.9"},
                 {"url":"turn:198.51.100.2",
                  "credential":"myPassword"}]};
var microphone, application, front, rear;
var presentation, presenter, demonstration;
var remote_av, stereo, mono;
var display, left, right;
function s(sdp) {} // stub success callback
function e(error) {} // stub error callback
var signalingChannel = createSignalingChannel();
getMedia();
createPC();
attachMedia();
call();
function getMedia() {
  // get local audio (microphone)
  navigator.getUserMedia({"audio": true }, function (stream) {
    microphone = stream;
  }, e);
  // get local video (application sharing)
  ///// This is outside the scope of this specification.
  ///// Assume that 'application' has been set to this stream.
  //
  constraint =
    {"video": {"mandatory": {"videoFacingModeEnum": "front"}}};
  navigator.getUserMedia(constraint, function (stream) {
    front = stream;
  }, e);
  constraint =
    {"video": {"mandatory": {"videoFacingModeEnum": "rear"}}};
  navigator.getUserMedia(constraint, function (stream) {
    rear = stream;
  }, e);
}
function createPC() {
  pc = new RTCPeerConnection(configuration);
  pc.onicecandidate = function (evt) {
    signalingChannel.send(
      JSON.stringify({ "candidate": evt.candidate }));
  };
  pc.onaddstream =
    function (evt) {handleIncomingStream(evt.stream);};
}
function attachMedia() {
  presentation =
    new MediaStream(
      [microphone.getAudioTracks()[0],    // Audio
       application.getVideoTracks()[0]]); // Presentation
  presenter =
    new MediaStream(
      [microphone.getAudioTracks()[0],    // Audio
       front.getVideoTracks()[0]]);       // Presenter
  demonstration =
    new MediaStream(
      [microphone.getAudioTracks()[0],    // Audio
       rear.getVideoTracks()[0]]);        // Demonstration
  pc.addStream(presentation);
  pc.addStream(presenter);
  pc.addStream(demonstration);
  signalingChannel.send(
    JSON.stringify({ "presentation": presentation.id,
                     "presenter": presenter.id,
                     "demonstration": demonstration.id
                   }));
}
function call() {
  pc.createOffer(gotDescription, e);
  function gotDescription(desc) {
    pc.setLocalDescription(desc, s, e);
    signalingChannel.send(JSON.stringify({ "sdp": desc }));
  }
}
function handleIncomingStream(st) {
  if (st.getVideoTracks().length == 1) {
    av_stream = st;
    show_av(av_stream);
  } else if (st.getAudioTracks().length == 2) {
    stereo = st;
  } else {
    mono = st;
  }
}
function show_av(st) {
  // the MediaStream constructor takes a list of tracks
  display.src = URL.createObjectURL(
    new MediaStream([st.getVideoTracks()[0]]));
  left.src = URL.createObjectURL(
    new MediaStream([st.getAudioTracks()[0]]));
  right.src = URL.createObjectURL(
    new MediaStream([st.getAudioTracks()[1]]));
}
signalingChannel.onmessage = function (msg) {
  var signal = JSON.parse(msg.data);
  if (signal.sdp) {
    pc.setRemoteDescription(
      new RTCSessionDescription(signal.sdp), s, e);
  } else {
    pc.addIceCandidate(
      new RTCIceCandidate(signal.candidate));
  }
};
• We will look next at each of these
• . . . except for creating the signaling channel](https://image.slidesharecdn.com/introtowebrtcahnconf2013-140113122046-phpapp01/85/WebRTC-Overview-by-Dan-Burnett-61-320.jpg)
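The outline calls its four steps back to back, but `getUserMedia` delivers streams asynchronously, so `attachMedia()` could run before `microphone`, `front` and `rear` have been set. The following is a minimal sketch of one way to sequence the steps, assuming each step is rewritten to return a value or a promise; `runOutline` and the shape of the `steps` object are hypothetical and not part of the slides.

```javascript
// Hypothetical sequencing helper (not from the slides): each step may
// return a promise, and the next step starts only after it has resolved.
async function runOutline(steps) {
  var media = await steps.getMedia();   // wait until all local streams exist
  var pc = await steps.createPC();      // then create the peer connection
  await steps.attachMedia(pc, media);   // then attach the local streams
  await steps.call(pc);                 // finally create and send the offer
  return pc;
}
```

Passing the steps in as functions keeps the ordering logic separate from the browser APIs, which also makes it easy to exercise with stand-in steps.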

![function getMedia() [1]
// get local audio (microphone)
navigator.getUserMedia({"audio": true }, function (stream) {
  microphone = stream;
}, e);
// get local video (application sharing)
///// This is outside the scope of this specification.
///// Assume that 'application' has been set to this stream.
//
• Get audio
• (Get window video - out of scope)](https://image.slidesharecdn.com/introtowebrtcahnconf2013-140113122046-phpapp01/85/WebRTC-Overview-by-Dan-Burnett-63-320.jpg)
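The slide uses the callback form of `getUserMedia` with a stub error callback that discards failures. As a hedged illustration, the same audio step can be written against the promise-based `navigator.mediaDevices.getUserMedia`; `getMicrophone` and its result shape are hypothetical names, and the `mediaDevices` object is passed in as a parameter so the sketch does not depend on a browser global.

```javascript
// Hypothetical promise-based variant of the audio step.
// mediaDevices is injected; in a browser, pass navigator.mediaDevices.
async function getMicrophone(mediaDevices) {
  try {
    var stream = await mediaDevices.getUserMedia({ audio: true });
    return { ok: true, stream: stream };
  } catch (err) {
    // err.name is, for example, "NotAllowedError" when permission is denied.
    return { ok: false, reason: err.name };
  }
}
```

Returning the failure reason instead of ignoring it lets the caller decide whether to continue without audio or stop the call setup.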

![function getMedia() [2]
. . .
constraint =
  {"video": {"mandatory": {"facingMode": "user"}}};
navigator.getUserMedia(constraint, function (stream) {
  front = stream;
}, e);
constraint =
  {"video": {"mandatory": {"facingMode": "environment"}}};
navigator.getUserMedia(constraint, function (stream) {
  rear = stream;
}, e);
• Get front-facing camera
• Get rear-facing camera](https://image.slidesharecdn.com/introtowebrtcahnconf2013-140113122046-phpapp01/85/WebRTC-Overview-by-Dan-Burnett-64-320.jpg)
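In the Media Capture and Streams API, the `facingMode` value `user` selects a camera facing the person holding the device (the front camera) and `environment` selects one facing away (the rear camera). A small sketch of that mapping follows; `FACING_MODE` and `cameraConstraints` are hypothetical names, and the flat constraint shape shown is the current syntax rather than the `mandatory` form used on the slide.

```javascript
// Map the slide's camera names to the standard facingMode values:
// "user" faces the person holding the device, "environment" faces away.
var FACING_MODE = { front: "user", rear: "environment" };

// Hypothetical helper that builds a getUserMedia constraints object.
function cameraConstraints(which) {
  if (!Object.prototype.hasOwnProperty.call(FACING_MODE, which)) {
    throw new RangeError("unknown camera: " + which);
  }
  return { video: { facingMode: FACING_MODE[which] } };
}
```

In a browser, `navigator.mediaDevices.getUserMedia(cameraConstraints("rear"))` would then request the rear camera, treating the facing mode as a preference rather than a hard requirement.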

![Mobile browser code outline
var signalingChannel = createSignalingChannel();
getMedia();
createPC();
attachMedia();
call();
• We will look next at each of these
• . . . except for creating the signaling channel](https://image.slidesharecdn.com/introtowebrtcahnconf2013-140113122046-phpapp01/85/WebRTC-Overview-by-Dan-Burnett-65-320.jpg)
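Creating the signaling channel is out of scope for the slides, but the outline's `onmessage` handler still decides how each incoming message is applied. It hands every message without an `sdp` field to `addIceCandidate`, which would include the stream-id message that `attachMedia()` sends and a `null` end-of-candidates value. The sketch below is one hedged way to make the dispatch explicit; `handleSignal` is a hypothetical name, and it assumes the promise-era API in which `setRemoteDescription` and `addIceCandidate` accept plain objects.

```javascript
// Hypothetical dispatcher: decode one signaling message and apply it to pc.
// pc only needs setRemoteDescription and addIceCandidate, so a stub works.
function handleSignal(pc, data) {
  var signal = JSON.parse(data);
  if (signal.sdp) {
    // { "sdp": { type, sdp } } as sent by the offerer in the outline
    return pc.setRemoteDescription(signal.sdp);
  }
  if (signal.candidate) {
    // Only non-null candidates are forwarded to the peer connection.
    return pc.addIceCandidate(signal.candidate);
  }
  // Anything else (for example the stream-id message) is ignored here.
  return undefined;
}
```

It could be wired up as `signalingChannel.onmessage = function (msg) { handleSignal(pc, msg.data); };`.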

![function createPC()
var configuration =
  {"iceServers":[{"url":"stun:198.51.100.9"},
                 {"url":"turn:198.51.100.2",
                  "credential":"myPassword"}]};
pc = new RTCPeerConnection(configuration);
pc.onicecandidate = function (evt) {
  signalingChannel.send(
    JSON.stringify({ "candidate": evt.candidate }));
};
pc.onaddstream =
  function (evt) {handleIncomingStream(evt.stream);};
• Create RTCPeerConnection
• Set handlers](https://image.slidesharecdn.com/introtowebrtcahnconf2013-140113122046-phpapp01/85/WebRTC-Overview-by-Dan-Burnett-66-320.jpg)
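The configuration on this slide names each server with a `url` key. The current `RTCIceServer` dictionary calls that field `urls` (and also defines `username` alongside `credential`). As a sketch under that assumption, a legacy configuration can be converted before it is passed to `RTCPeerConnection`; `normalizeIceServers` is a hypothetical helper, and it deliberately does not invent a `username` that the slide does not give.

```javascript
// Hypothetical helper: convert legacy {"url": ...} entries to the
// current RTCIceServer shape, which uses "urls". Other keys are copied as-is.
function normalizeIceServers(configuration) {
  return {
    iceServers: configuration.iceServers.map(function (server) {
      var out = {};
      Object.keys(server).forEach(function (key) {
        out[key === "url" ? "urls" : key] = server[key];
      });
      return out;
    })
  };
}
```

The result can be used as `new RTCPeerConnection(normalizeIceServers(configuration))`; the input object is left unmodified.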

![Function handleIncomingStream()
if (st.getVideoTracks().length == 1) {
  av_stream = st;
  show_av(av_stream);
} else if (st.getAudioTracks().length == 2) {
  stereo = st;
} else {
  mono = st;
}
• If incoming stream has video track, set to av_stream and display it
• If it has two audio tracks, must be stereo
• Otherwise, must be the mono stream](https://image.slidesharecdn.com/introtowebrtcahnconf2013-140113122046-phpapp01/85/WebRTC-Overview-by-Dan-Burnett-68-320.jpg)
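The rule on this slide infers each incoming stream's role purely from its track counts. Written as a pure function, the same rule can be checked without a live connection. This is only an illustrative sketch: `classifyStream` and its string labels are hypothetical, and it relies on nothing beyond `getVideoTracks()` and `getAudioTracks()`.

```javascript
// Hypothetical pure version of the slide's rule: decide a stream's role
// from its track counts alone.
function classifyStream(st) {
  if (st.getVideoTracks().length === 1) {
    return "av";      // one video track: the audio/video stream to display
  }
  if (st.getAudioTracks().length === 2) {
    return "stereo";  // no single video track, two audio tracks
  }
  return "mono";      // everything else
}
```

The handler would then switch on the returned label, for example calling `show_av(st)` when it is `"av"`.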

![Function show_av(st)
// the MediaStream constructor takes a list of tracks
display.srcObject =
  new MediaStream([st.getVideoTracks()[0]]);
left.srcObject =
  new MediaStream([st.getAudioTracks()[0]]);
right.srcObject =
  new MediaStream([st.getAudioTracks()[1]]);
• Using new srcObject property on media,
• Set new stream as source](https://image.slidesharecdn.com/introtowebrtcahnconf2013-140113122046-phpapp01/85/WebRTC-Overview-by-Dan-Burnett-69-320.jpg)
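To make the `srcObject` step concrete, here is a hedged sketch with the media elements and the stream constructor passed in as parameters. `showAv` and the `elements` object are hypothetical names; in a browser, `MediaStreamCtor` would be `MediaStream`, whose constructor expects an array of tracks.

```javascript
// Hypothetical variant of show_av with its dependencies passed in.
// MediaStreamCtor is the MediaStream constructor in a browser.
function showAv(st, elements, MediaStreamCtor) {
  var audio = st.getAudioTracks();
  // Each element gets its own single-track stream; the constructor
  // takes a list of tracks, so every track is wrapped in an array.
  elements.display.srcObject = new MediaStreamCtor([st.getVideoTracks()[0]]);
  elements.left.srcObject = new MediaStreamCtor([audio[0]]);
  elements.right.srcObject = new MediaStreamCtor([audio[1]]);
}
```

A browser call might look like `showAv(st, { display: display, left: left, right: right }, MediaStream)`.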

![Mobile browser code outline
var signalingChannel = createSignalingChannel();
getMedia();
createPC();
attachMedia();
call();
• We will look next at each of these
• . . . except for creating the signaling channel](https://image.slidesharecdn.com/introtowebrtcahnconf2013-140113122046-phpapp01/85/WebRTC-Overview-by-Dan-Burnett-70-320.jpg)
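The last step in the outline, `call()`, uses the callback forms of `createOffer` and `setLocalDescription`. As a hedged illustration, the same step can be written with the promise-returning forms of those methods; `sendOffer` is a hypothetical name, and the message shape `{ "sdp": ... }` follows the outline.

```javascript
// Hypothetical promise-based variant of call(): create the offer, apply it
// locally, then send it to the peer over the signaling channel.
async function sendOffer(pc, signalingChannel) {
  var offer = await pc.createOffer();
  await pc.setLocalDescription(offer);
  // Sent only after the local description has been applied.
  signalingChannel.send(JSON.stringify({ sdp: pc.localDescription }));
}
```

Awaiting `setLocalDescription` before sending means the peer never receives an offer that failed to apply locally.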

![function attachMedia() [1]
presentation =
  new MediaStream(
    [microphone.getAudioTracks()[0],    // Audio
     application.getVideoTracks()[0]]); // Presentation
presenter =
  new MediaStream(
    [microphone.getAudioTracks()[0],    // Audio
     front.getVideoTracks()[0]]);       // Presenter
demonstration =
  new MediaStream(
    [microphone.getAudioTracks()[0],    // Audio
     rear.getVideoTracks()[0]]);        // Demonstration
. . .
• Create 3 new streams, all with same audio but different video](https://image.slidesharecdn.com/introtowebrtcahnconf2013-140113122046-phpapp01/85/WebRTC-Overview-by-Dan-Burnett-71-320.jpg)
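The three streams on this slide differ only in their video source, so the construction can be expressed once. The sketch below is illustrative only: `buildStreams` and the `videoSources` map are hypothetical, and the stream constructor is injected so the snippet does not require a browser.

```javascript
// Hypothetical helper: build one stream per video source, all sharing
// the microphone's first audio track.
function buildStreams(MediaStreamCtor, microphone, videoSources) {
  var audioTrack = microphone.getAudioTracks()[0];
  var streams = {};
  Object.keys(videoSources).forEach(function (name) {
    streams[name] = new MediaStreamCtor(
      [audioTrack, videoSources[name].getVideoTracks()[0]]);
  });
  return streams;
}
```

In a browser this might be called as `buildStreams(MediaStream, microphone, { presentation: application, presenter: front, demonstration: rear })`, giving three streams that reference the same audio track object.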

![function attachMedia() [2]
pc.addStream(presentation);
pc.addStream(presenter);
pc.addStream(demonstration);
signalingChannel.send(
  JSON.stringify({ "presentation": presentation.id,
                   "presenter": presenter.id,
                   "demonstration": demonstration.id
                 }));
• Attach all 3 streams to Peer Connection
• Send stream ids to peer (before streams!)

function call() {

pc.createOffer(gotDescription, e);

function gotDescription(desc) {

pc.setLocalDescription(desc, s, e);

signalingChannel.send(JSON.stringify({ "sdp": desc }));

}

}

function handleIncomingStream(st) {

if (st.getVideoTracks().length == 1) {

av_stream = st;

show_av(av_stream);

} else if (st.getAudioTracks().length == 2) {

stereo = st;

} else {

mono = st;

}

}

function show_av(st) {

display.src = URL.createObjectURL(

new MediaStream(st.getVideoTracks()[0]));

left.src = URL.createObjectURL(

new MediaStream(st.getAudioTracks()[0]));

right.src = URL.createObjectURL(

new MediaStream(st.getAudioTracks()[1]));

}

signalingChannel.onmessage = function (msg) {

var signal = JSON.parse(msg.data);

if (signal.sdp) {

pc.setRemoteDescription(

new RTCSessionDescription(signal.sdp), s, e);

} else {

pc.addIceCandidate(

new RTCIceCandidate(signal.candidate));

}

};

AdhearsionConf

2013

72](https://image.slidesharecdn.com/introtowebrtcahnconf2013-140113122046-phpapp01/85/WebRTC-Overview-by-Dan-Burnett-72-320.jpg)
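
The stream-id announcement on this slide can be tried in isolation. The following is a minimal sketch, not the presenter's code: `buildStreamIdMessage` and `roleForStream` are hypothetical helper names, and plain objects with an `id` field stand in for `MediaStream` objects so the snippet runs outside a browser.

```javascript
// Hypothetical helper: map role names to stream ids, as the slide's
// signalingChannel.send(JSON.stringify({...})) call does by hand.
function buildStreamIdMessage(streamsByRole) {
  const ids = {};
  for (const [role, stream] of Object.entries(streamsByRole)) {
    ids[role] = stream.id;
  }
  return JSON.stringify(ids);
}

// Hypothetical helper for the receiving side: given the parsed id message
// and an incoming stream, return the role the sender assigned to it.
function roleForStream(incomingIds, stream) {
  for (const [role, id] of Object.entries(incomingIds)) {
    if (id === stream.id) return role;
  }
  return null; // unknown stream, e.g. the ids have not arrived yet
}

// Stand-ins for MediaStream objects; only the id matters here.
const message = buildStreamIdMessage({
  presentation: { id: "stream-a" },
  presenter: { id: "stream-b" },
  demonstration: { id: "stream-c" },
});
const incomingIds = JSON.parse(message);
console.log(roleForStream(incomingIds, { id: "stream-b" })); // prints "presenter"
```

Sending the ids before the media matters because the receiver can only label a stream if the mapping has already arrived when the stream does.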

![Mobile browser code outline
var signalingChannel = createSignalingChannel();
getMedia();
createPC();
attachMedia();
call();
• We will look next at each of these
• . . . except for creating the signaling channel](https://image.slidesharecdn.com/introtowebrtcahnconf2013-140113122046-phpapp01/85/WebRTC-Overview-by-Dan-Burnett-73-320.jpg)
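
The slides leave `createSignalingChannel()` unspecified. For local experiments, one possible stand-in is an in-memory pair of endpoints. This is a sketch under stated assumptions: `createSignalingChannelPair` is a hypothetical name, delivery is synchronous, and a real deployment would relay the same strings through a server (for example over WebSocket).

```javascript
// Hypothetical in-memory signaling channel pair. Each endpoint exposes the
// two members the slide code relies on: send(data) and onmessage.
function createSignalingChannelPair() {
  function makeEndpoint() {
    return {
      onmessage: null,
      peer: null,
      send(data) {
        // Deliver straight to the other endpoint's handler, if one is set.
        if (this.peer.onmessage) this.peer.onmessage({ data: data });
      },
    };
  }
  const a = makeEndpoint();
  const b = makeEndpoint();
  a.peer = b;
  b.peer = a;
  return [a, b];
}

// Usage: whatever one side sends arrives as msg.data on the other side.
const [mobile, laptop] = createSignalingChannelPair();
const received = [];
laptop.onmessage = function (msg) { received.push(JSON.parse(msg.data)); };
mobile.send(JSON.stringify({ presentation: "stream-a" }));
```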

![Function call()
pc.createOffer(gotDescription, e);
function gotDescription(desc) {
  pc.setLocalDescription(desc, s, e);
  signalingChannel.send(JSON.stringify({ "sdp": desc }));
}
• Ask browser to create SDP offer
• Set offer as local description
• Send offer to peer](https://image.slidesharecdn.com/introtowebrtcahnconf2013-140113122046-phpapp01/85/WebRTC-Overview-by-Dan-Burnett-74-320.jpg)
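
The three steps on this slide use the callback form of the API. Current browsers also expose promise-returning versions of `createOffer()` and `setLocalDescription()`, so the same flow might be sketched as below. Passing `pc` and `channel` as parameters, and the `fakePc` / `fakeChannel` stand-ins, are assumptions made so the sketch can run outside a browser.

```javascript
// Promise-based variant of call(): offer, local description, send.
async function call(pc, channel) {
  const offer = await pc.createOffer();          // ask browser to create SDP offer
  await pc.setLocalDescription(offer);           // set offer as local description
  channel.send(JSON.stringify({ sdp: offer }));  // send offer to peer
  return offer;
}

// Minimal stand-ins (assumptions, not real WebRTC objects).
const fakePc = {
  localDescription: null,
  async createOffer() { return { type: "offer", sdp: "v=0" }; },
  async setLocalDescription(desc) { this.localDescription = desc; },
};
const sent = [];
const fakeChannel = { send: function (data) { sent.push(data); } };
const callDone = call(fakePc, fakeChannel);
```

In a browser, the same function would be called with a real `RTCPeerConnection` and the application's signaling channel.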

![How do we get the SDP answer?
signalingChannel.onmessage = function (msg) {
  var signal = JSON.parse(msg.data);
  if (signal.sdp) {
    pc.setRemoteDescription(
      new RTCSessionDescription(signal.sdp), s, e);
  } else {
    pc.addIceCandidate(
      new RTCIceCandidate(signal.candidate));
  }
};
• Signaling channel provides message
• If SDP, set as remote description
• If ICE candidate, tell the browser](https://image.slidesharecdn.com/introtowebrtcahnconf2013-140113122046-phpapp01/85/WebRTC-Overview-by-Dan-Burnett-75-320.jpg)
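
The caller-side dispatch can be exercised without a browser by injecting the peer connection. The sketch below assumes a hypothetical `makeCallerHandler` factory and a recording stand-in for `pc`; it passes the parsed objects straight to `setRemoteDescription()` and `addIceCandidate()`, which current browsers accept in place of explicitly constructed `RTCSessionDescription` / `RTCIceCandidate` objects.

```javascript
// Caller-side dispatcher: an SDP answer becomes the remote description,
// a candidate is handed to the ICE agent.
function makeCallerHandler(pc) {
  return async function onmessage(msg) {
    const signal = JSON.parse(msg.data);
    if (signal.sdp) {
      await pc.setRemoteDescription(signal.sdp);
    } else if (signal.candidate) {
      await pc.addIceCandidate(signal.candidate);
    }
    // A null candidate (end of gathering) is simply ignored in this sketch.
  };
}

// Recording stand-in for RTCPeerConnection (an assumption for illustration).
const calls = [];
const recordingPc = {
  setRemoteDescription(desc) { calls.push(["remote", desc.type]); return Promise.resolve(); },
  addIceCandidate(c) { calls.push(["candidate", c.candidate]); return Promise.resolve(); },
};
const onmessage = makeCallerHandler(recordingPc);
onmessage({ data: JSON.stringify({ sdp: { type: "answer", sdp: "v=0" } }) });
onmessage({ data: JSON.stringify({ candidate: { candidate: "candidate:1" } }) });
onmessage({ data: JSON.stringify({ candidate: null }) });
```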

![Signaling channel message is trigger
signalingChannel.onmessage = function (msg) {
  if (!pc) {
    prepareForIncomingCall();
  }
  var sgnl = JSON.parse(msg.data);
  . . .
};
• Set up PC and media if not already done
Full listing shown on the slide:
var pc;
var configuration =
  {"iceServers":[{"url":"stun:198.51.100.9"},
                 {"url":"turn:198.51.100.2",
                  "credential":"myPassword"}]};
var webcam, left, right;
var av, stereo, mono;
var incoming;
var speaker, win1, win2, win3;
function s(sdp) {} // stub success callback
function e(error) {} // stub error callback
var signalingChannel = createSignalingChannel();
function prepareForIncomingCall() {
  createPC();
  getMedia();
  attachMedia();
}
function createPC() {
  pc = new RTCPeerConnection(configuration);
  pc.onicecandidate = function (evt) {
    signalingChannel.send(
      JSON.stringify({ "candidate": evt.candidate }));
  };
  pc.onaddstream =
    function (evt) {handleIncomingStream(evt.stream);};
}
function getMedia() {
  navigator.getUserMedia({"video": true }, function (stream) {
    webcam = stream;
  }, e);
  constraint =
    {"audio": {"mandatory": {"audioDirectionEnum": "left"}}};
  navigator.getUserMedia(constraint, function (stream) {
    left = stream;
  }, e);
  constraint =
    {"audio": {"mandatory": {"audioDirectionEnum": "right"}}};
  navigator.getUserMedia(constraint, function (stream) {
    right = stream;
  }, e);
}
function attachMedia() {
  av = new MediaStream(
    [webcam.getVideoTracks()[0], // Video
     left.getAudioTracks()[0], // Left audio
     right.getAudioTracks()[0]]); // Right audio
  stereo = new MediaStream(
    [left.getAudioTracks()[0], // Left audio
     right.getAudioTracks()[0]]); // Right audio
  mono = left; // Treat the left audio as the mono stream
  pc.addStream(av);
  pc.addStream(stereo);
  pc.addStream(mono);
}
function answer() {
  pc.createAnswer(gotDescription, e);
  function gotDescription(desc) {
    pc.setLocalDescription(desc, s, e);
    signalingChannel.send(JSON.stringify({ "sdp": desc }));
  }
}
function handleIncomingStream(st) {
  if (st.id === incoming.presentation) {
    speaker.src = URL.createObjectURL(
      new MediaStream(st.getAudioTracks()[0]));
    win1.src = URL.createObjectURL(
      new MediaStream(st.getVideoTracks()[0]));
  } else if (st.id === incoming.presenter) {
    win2.src = URL.createObjectURL(
      new MediaStream(st.getVideoTracks()[0]));
  } else {
    win3.src = URL.createObjectURL(
      new MediaStream(st.getVideoTracks()[0]));
  }
}
signalingChannel.onmessage = function (msg) {
  if (!pc) {
    prepareForIncomingCall();
  }
  var sgnl = JSON.parse(msg.data);
  if (sgnl.sdp) {
    pc.setRemoteDescription(
      new RTCSessionDescription(sgnl.sdp), s, e);
    answer();
  } else if (sgnl.candidate) {
    pc.addIceCandidate(new RTCIceCandidate(sgnl.candidate));
  } else {
    incoming = sgnl;
  }
};](https://image.slidesharecdn.com/introtowebrtcahnconf2013-140113122046-phpapp01/85/WebRTC-Overview-by-Dan-Burnett-77-320.jpg)

![Signaling channel message is trigger
signalingChannel.onmessage = function (msg) {
  . . .
  if (sgnl.sdp) {
    pc.setRemoteDescription(
      new RTCSessionDescription(sgnl.sdp), s, e);
    answer();
  } else if (sgnl.candidate) {
    pc.addIceCandidate(new RTCIceCandidate(sgnl.candidate));
  } else {
    incoming = sgnl;
  }
};
• If SDP, *also* answer
• But if neither SDP nor ICE candidate, must be set of incoming stream ids, so save](https://image.slidesharecdn.com/introtowebrtcahnconf2013-140113122046-phpapp01/85/WebRTC-Overview-by-Dan-Burnett-78-320.jpg)
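
The three-way branch on this slide relies on truthiness checks. Because `onicecandidate` also fires with a `null` candidate at the end of gathering, and the sender forwards it as `{"candidate": null}`, that message would fall into the final `else` and overwrite the saved stream ids. One possible way to avoid that is to classify by key presence instead. `classifySignal` below is a hypothetical helper, offered as a sketch rather than as the slides' method.

```javascript
// Classify a parsed signaling message by which key it carries,
// not by whether the value is truthy.
function classifySignal(sgnl) {
  if ("sdp" in sgnl) return "sdp";
  if ("candidate" in sgnl) return "candidate"; // includes { candidate: null }
  return "stream-ids";
}

console.log(classifySignal({ sdp: { type: "offer", sdp: "v=0" } })); // prints "sdp"
console.log(classifySignal(JSON.parse('{"candidate":null}')));       // prints "candidate"
console.log(classifySignal({ presentation: "a", presenter: "b" }));  // prints "stream-ids"
```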

![Function prepareForIncomingCall()
createPC();
getMedia();
attachMedia();
• No surprises here
• Media obtained is a little different
• But attached the same way](https://image.slidesharecdn.com/introtowebrtcahnconf2013-140113122046-phpapp01/85/WebRTC-Overview-by-Dan-Burnett-79-320.jpg)

![Function answer()
pc.createAnswer(gotDescription, e);
function gotDescription(desc) {
  pc.setLocalDescription(desc, s, e);
  signalingChannel.send(JSON.stringify({ "sdp": desc }));
}
• createAnswer() automatically uses value of remoteDescription when generating new SDP](https://image.slidesharecdn.com/introtowebrtcahnconf2013-140113122046-phpapp01/85/WebRTC-Overview-by-Dan-Burnett-80-320.jpg)
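
Since `createAnswer()` depends on `remoteDescription`, the remote offer has to be applied before the answer is generated. With the promise-returning API this ordering can be made explicit. The following is a sketch under assumptions: `handleOffer` is a hypothetical name, and `calleePc` is a stand-in that rejects `createAnswer()` when no remote description is set, to illustrate the dependency.

```javascript
// Apply the offer, then build, store, and send the answer, in that order.
async function handleOffer(pc, channel, offer) {
  await pc.setRemoteDescription(offer);          // must finish before createAnswer
  const answer = await pc.createAnswer();        // derived from remoteDescription
  await pc.setLocalDescription(answer);
  channel.send(JSON.stringify({ sdp: answer }));
  return answer;
}

// Stand-in peer connection (an assumption, not a real RTCPeerConnection).
const calleePc = {
  remoteDescription: null,
  localDescription: null,
  async setRemoteDescription(desc) { this.remoteDescription = desc; },
  async createAnswer() {
    if (!this.remoteDescription) throw new Error("no remote description yet");
    return { type: "answer", sdp: "v=0" };
  },
  async setLocalDescription(desc) { this.localDescription = desc; },
};
const outbox = [];
const answered = handleOffer(
  calleePc,
  { send: function (data) { outbox.push(data); } },
  { type: "offer", sdp: "v=0" }
);
```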

![Function handleIncomingStream()
if (st.id === incoming.presentation) {
  speaker.srcObject =
    new MediaStream(st.getAudioTracks()[0]);
  win1.srcObject =
    new MediaStream(st.getVideoTracks()[0]);
} else if (st.id === incoming.presenter) {
  win2.srcObject =
    new MediaStream(st.getVideoTracks()[0]);
} else {
  win3.srcObject =
    new MediaStream(st.getVideoTracks()[0]);
}
• Use ids to distinguish streams
• Extract one audio and all video tracks
• Assign to element sources](https://image.slidesharecdn.com/introtowebrtcahnconf2013-140113122046-phpapp01/85/WebRTC-Overview-by-Dan-Burnett-82-320.jpg)
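
The id-based routing can be checked without real media elements by injecting the pieces it touches. In this sketch the extra parameters (`incoming`, `ui`, `makeStream`) and the fake stream objects are assumptions for illustration. Note that the `MediaStream` constructor expects an array of tracks, so the sketch wraps each track in an array; in a browser, `makeStream` would be `(tracks) => new MediaStream(tracks)`.

```javascript
// Route one incoming stream to the right elements based on the saved ids.
function handleIncomingStream(st, incoming, ui, makeStream) {
  const video = makeStream([st.getVideoTracks()[0]]);
  if (st.id === incoming.presentation) {
    ui.speaker.srcObject = makeStream([st.getAudioTracks()[0]]);
    ui.win1.srcObject = video;
  } else if (st.id === incoming.presenter) {
    ui.win2.srcObject = video;
  } else {
    ui.win3.srcObject = video;
  }
}

// Stand-ins: strings play the role of tracks, plain objects of elements.
const fakeStream = function (id) {
  return {
    id: id,
    getAudioTracks: function () { return [id + "-audio"]; },
    getVideoTracks: function () { return [id + "-video"]; },
  };
};
const ui = { speaker: {}, win1: {}, win2: {}, win3: {} };
const savedIds = { presentation: "s1", presenter: "s2", demonstration: "s3" };
const wrap = function (tracks) { return { tracks: tracks }; };
for (const id of ["s1", "s2", "s3"]) {
  handleIncomingStream(fakeStream(id), savedIds, ui, wrap);
}
```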

![Function getMedia() [1]
navigator.getUserMedia({"video": true}, function (stream) {
  webcam = stream;
}, e);
. . .
• Request webcam video](https://image.slidesharecdn.com/introtowebrtcahnconf2013-140113122046-phpapp01/85/WebRTC-Overview-by-Dan-Burnett-84-320.jpg)
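
`getUserMedia()` completes asynchronously, so the streams are only usable once their callbacks have run. With the promise-returning `navigator.mediaDevices.getUserMedia()`, the requests can be awaited together before any tracks are attached. This is a sketch under assumptions: `mediaDevices` is passed in (in a browser it would be `navigator.mediaDevices`), `fakeDevices` is a stand-in, and a single plain audio request replaces the slides' left and right audio constraints, which are not reproduced here.

```javascript
// Request video and audio in parallel and resolve once both are available.
async function getMedia(mediaDevices) {
  const [webcam, microphone] = await Promise.all([
    mediaDevices.getUserMedia({ video: true }),
    mediaDevices.getUserMedia({ audio: true }),
  ]);
  return { webcam: webcam, microphone: microphone };
}

// Stand-in for navigator.mediaDevices that echoes the requested kinds.
const fakeDevices = {
  async getUserMedia(constraints) {
    return { kinds: Object.keys(constraints) };
  },
};
const media = getMedia(fakeDevices);
```

Code that attaches tracks to the peer connection would then run after `media` resolves, rather than immediately after the requests are issued.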

![Function getMedia() [2]
. . .
constraint =
  {"audio": {"mandatory": {"audioDirectionEnum": "left"}}};
navigator.getUserMedia(constraint,
  function (stream) {left = stream;}, e);
constraint =
  {"audio": {"mandatory": {"audioDirectionEnum": "right"}}};
navigator.getUserMedia(constraint,
  function (stream) {right = stream;}, e);
• Request left and right audio streams
• Save them as left and right variables