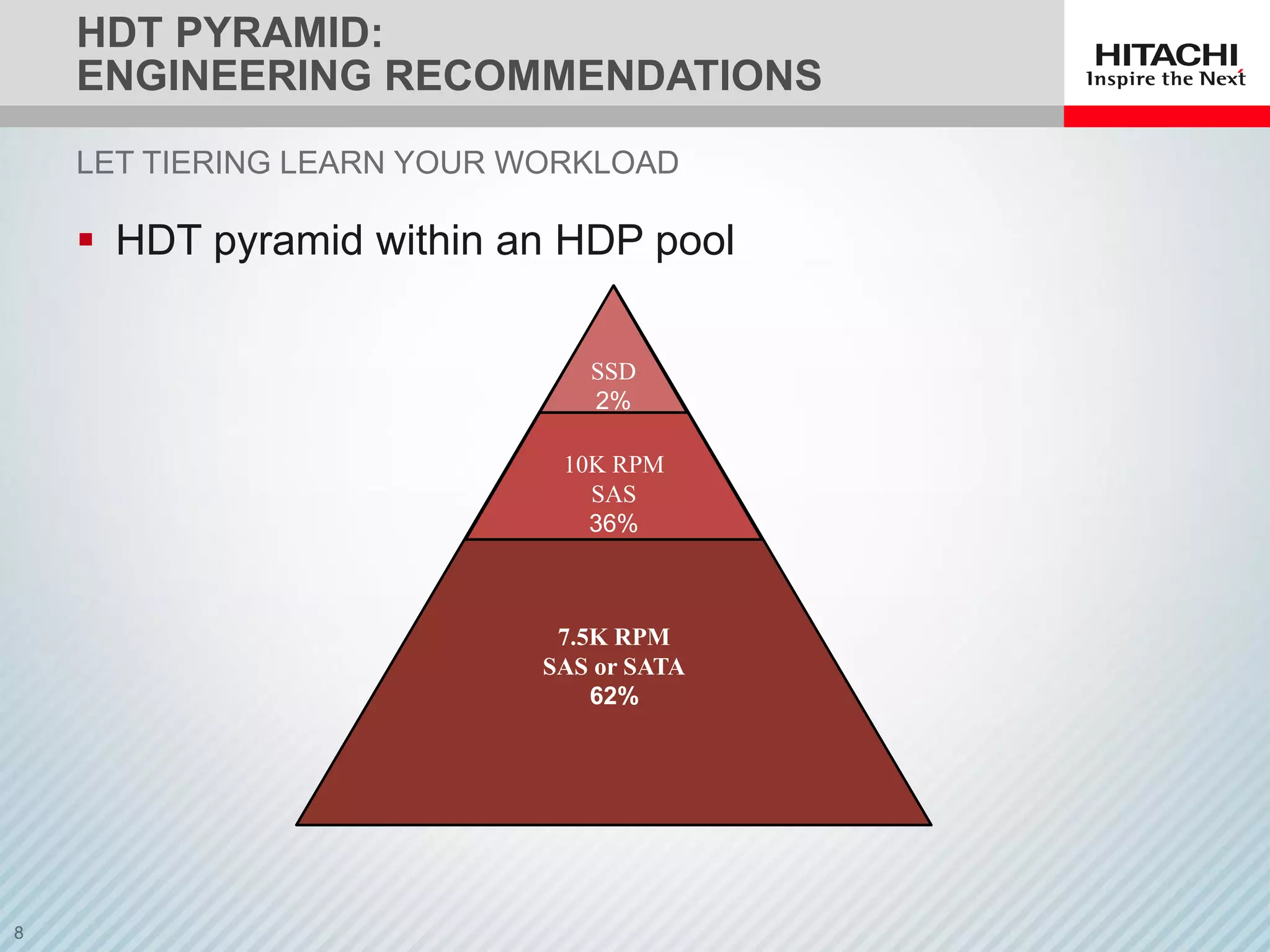

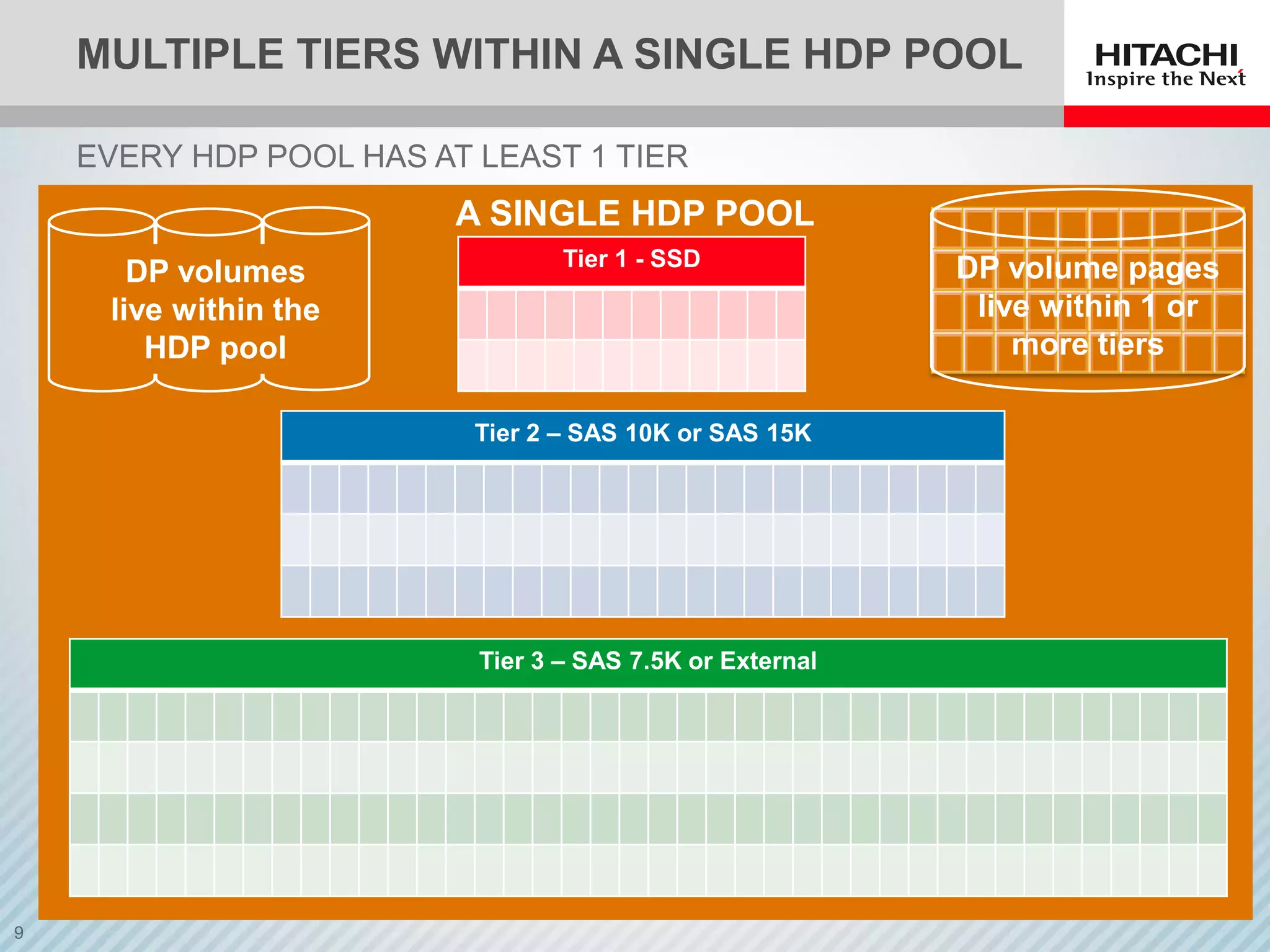

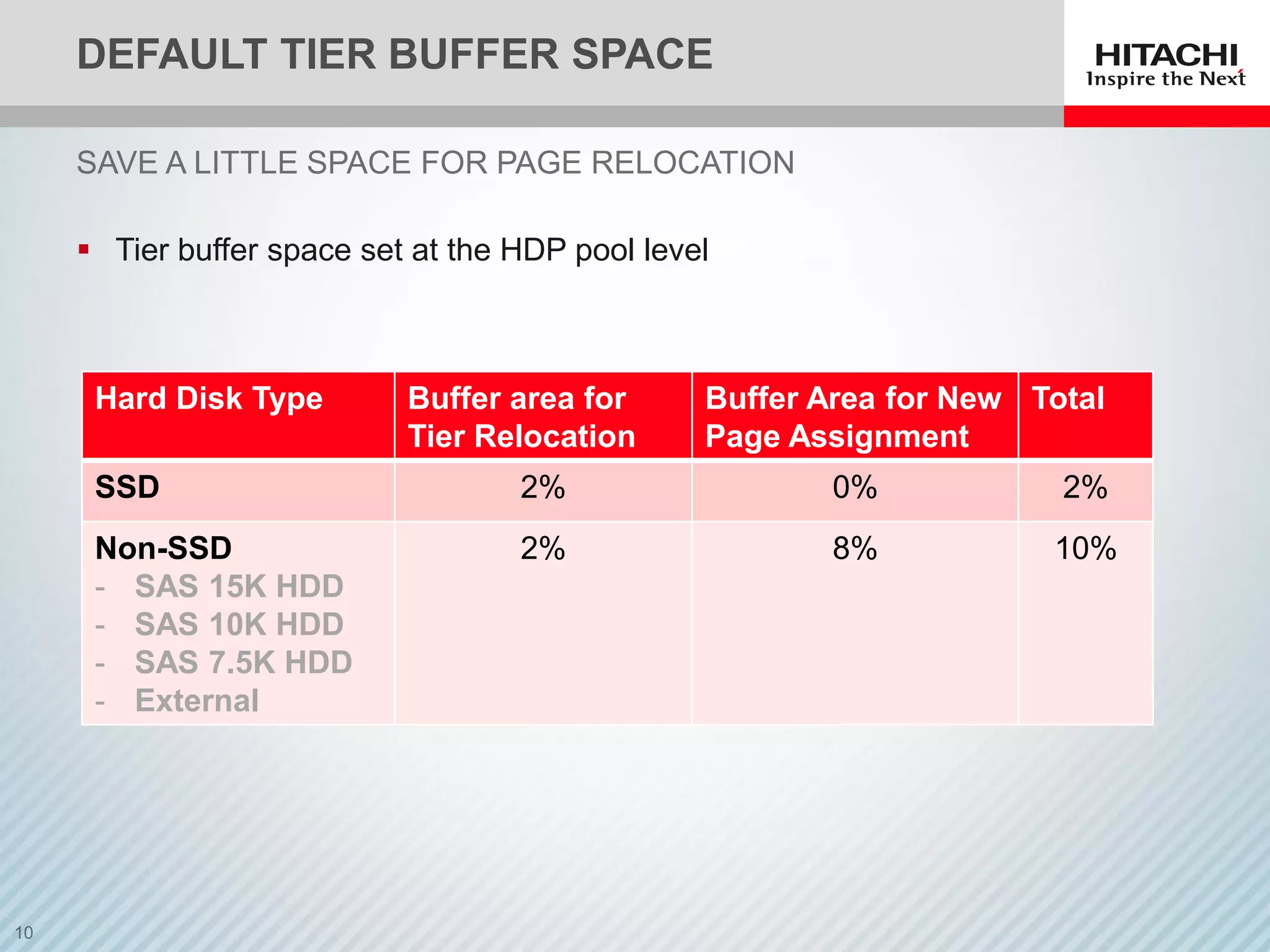

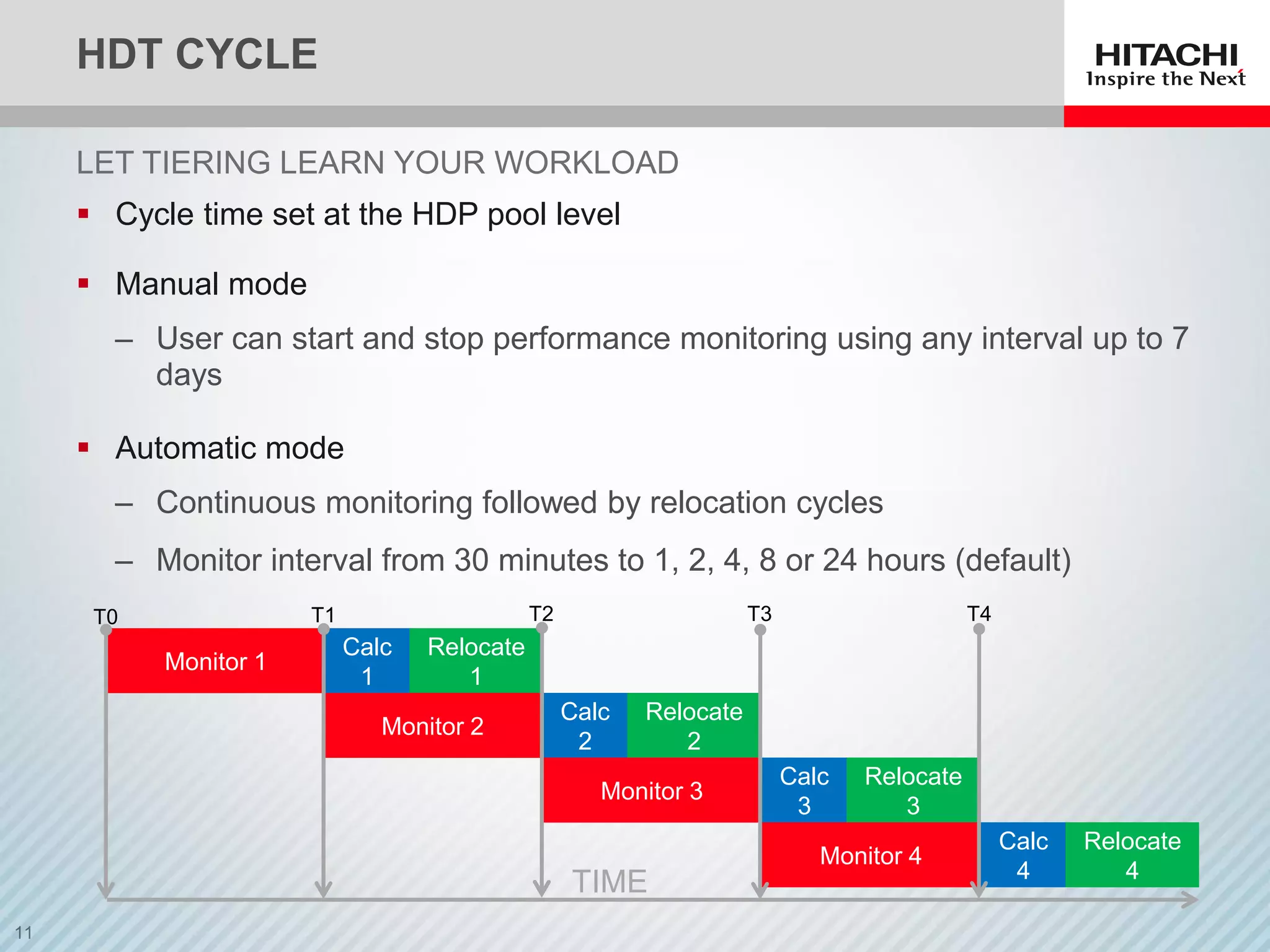

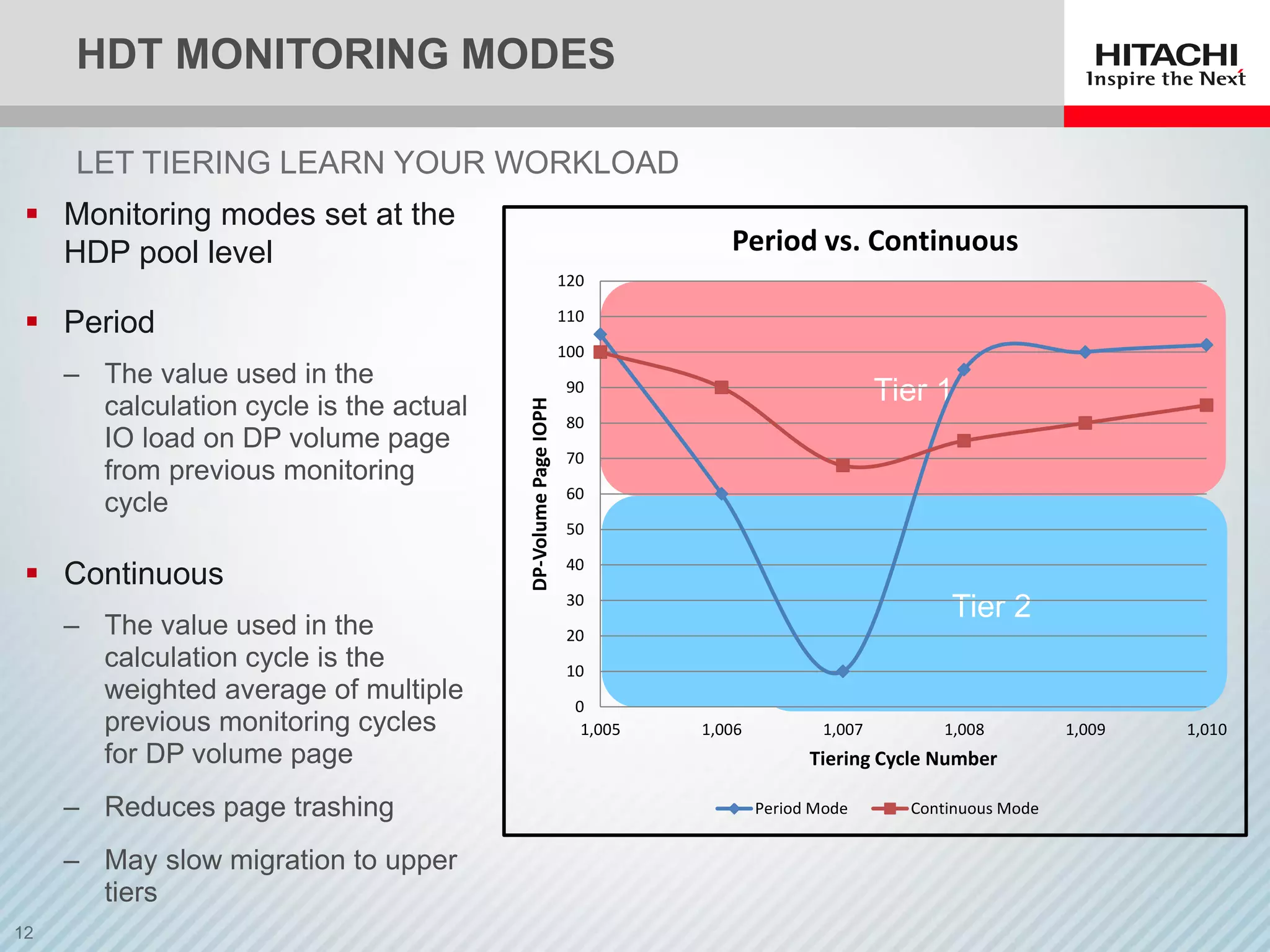

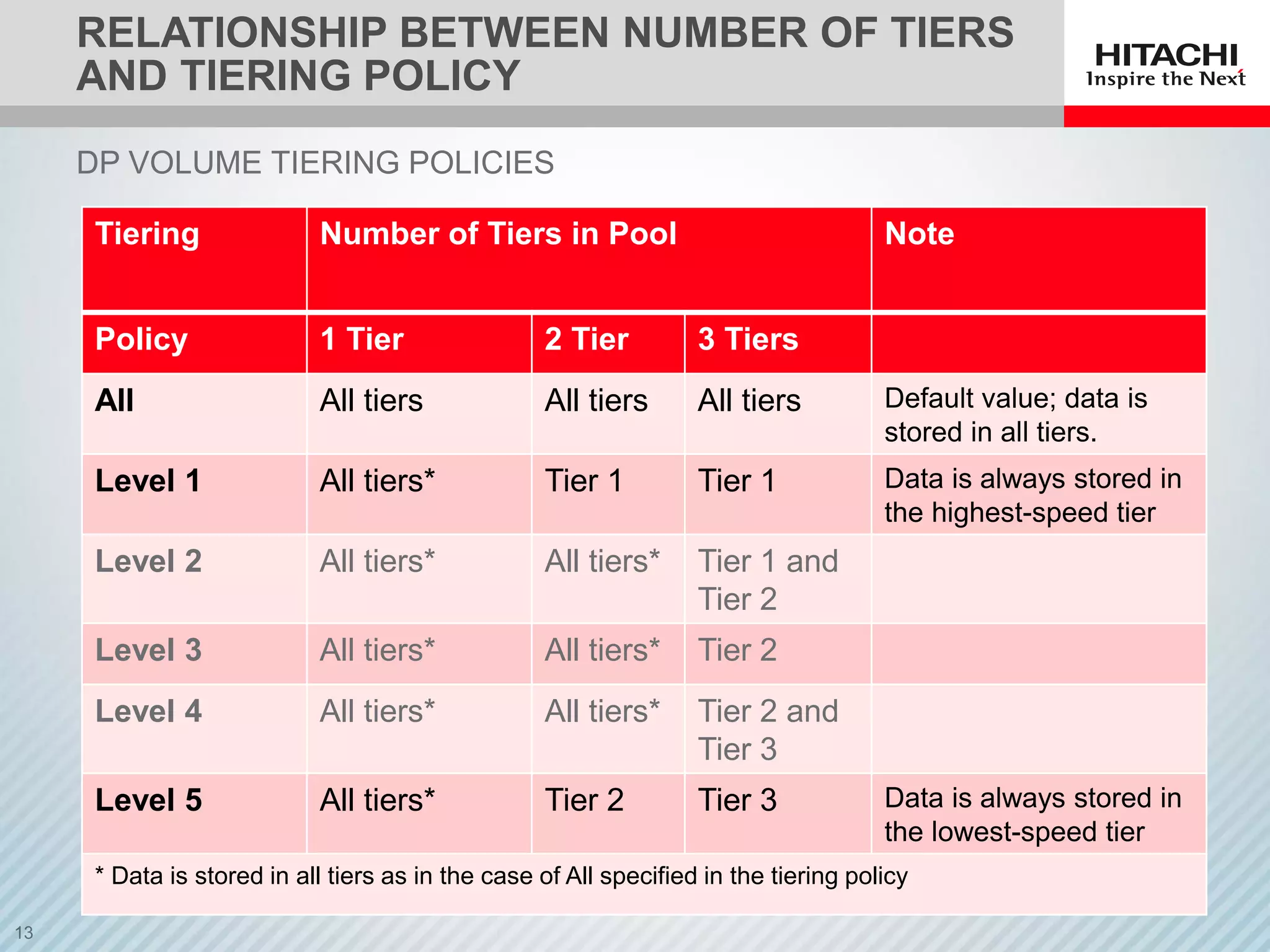

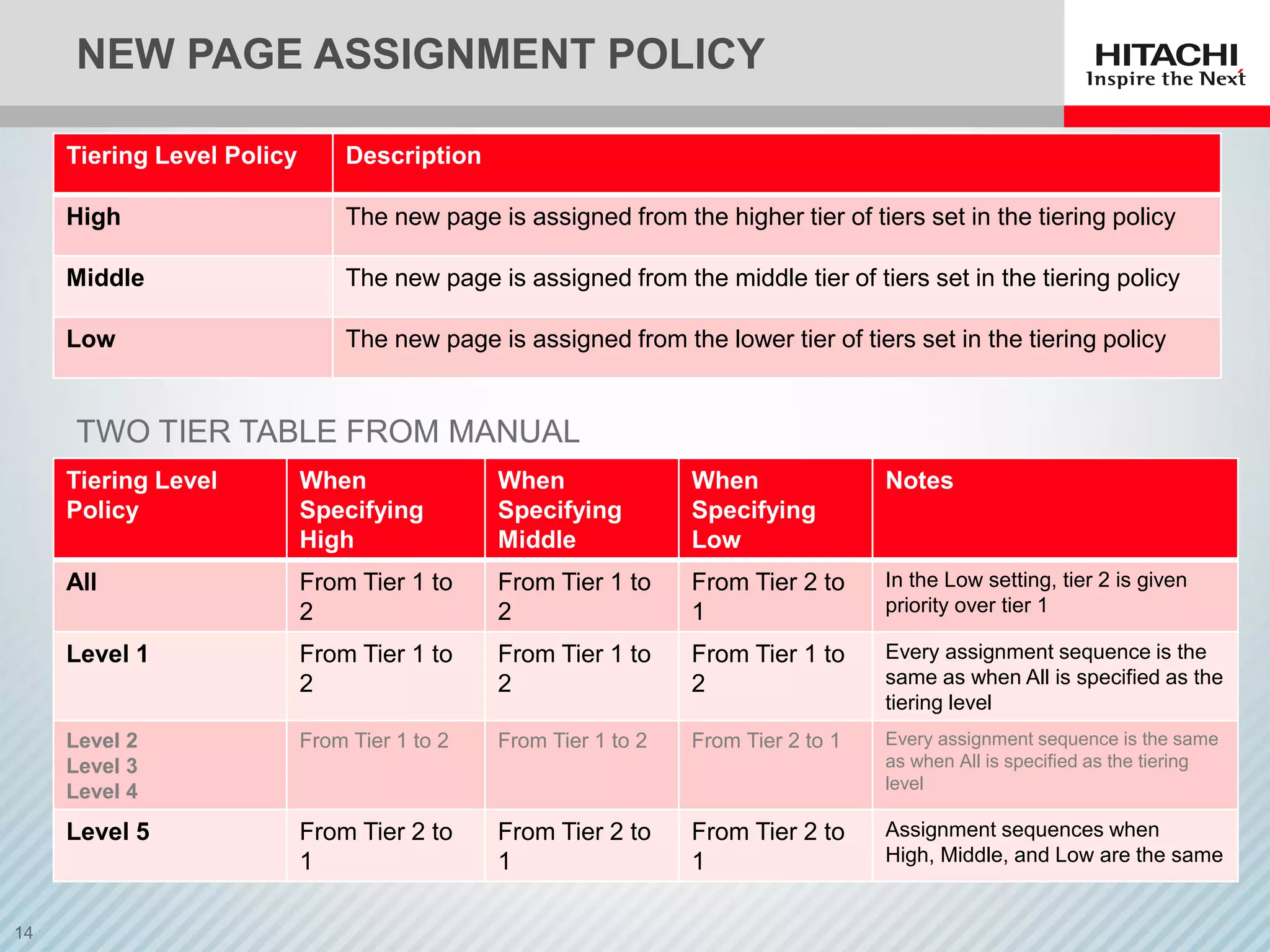

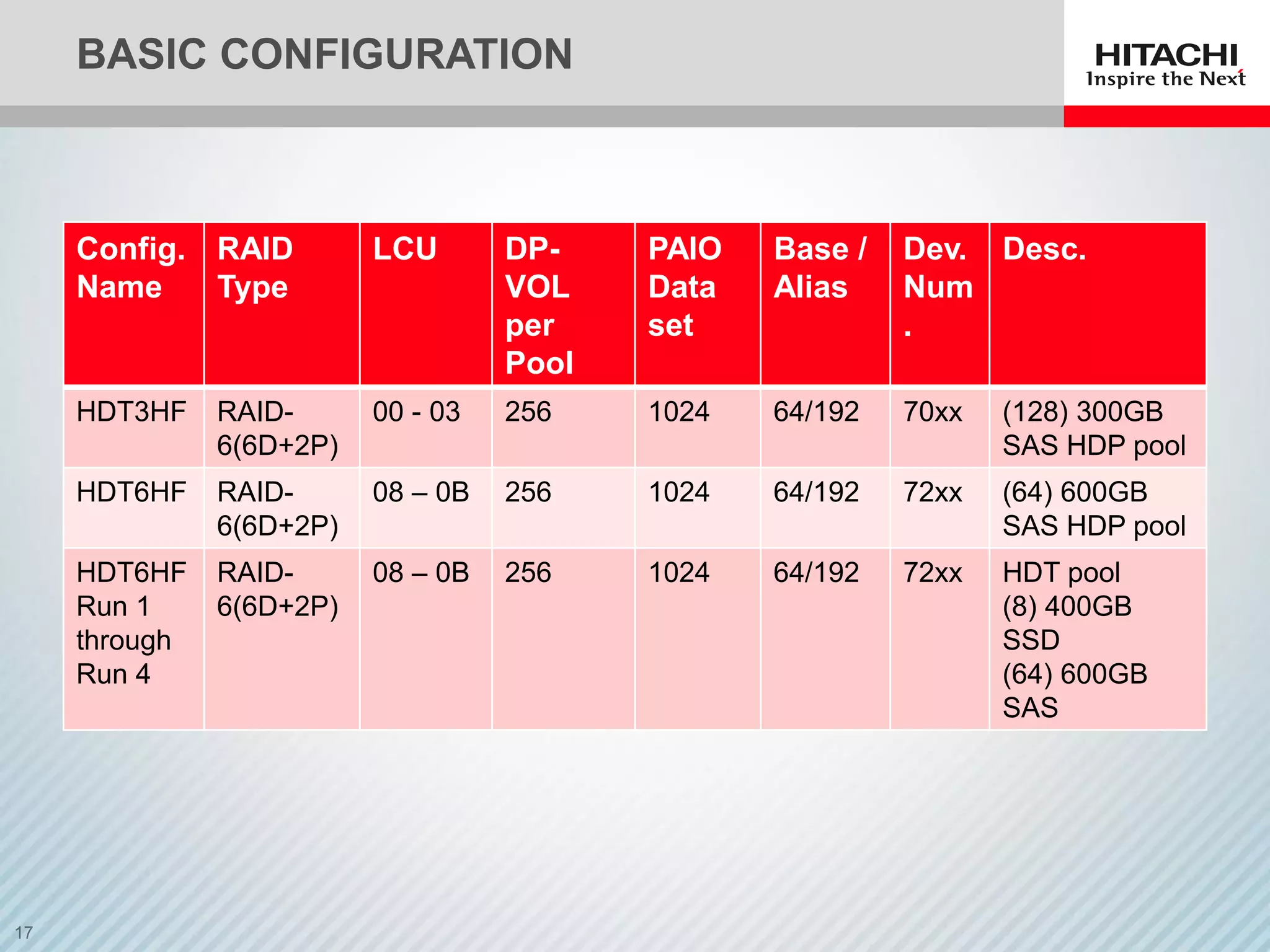

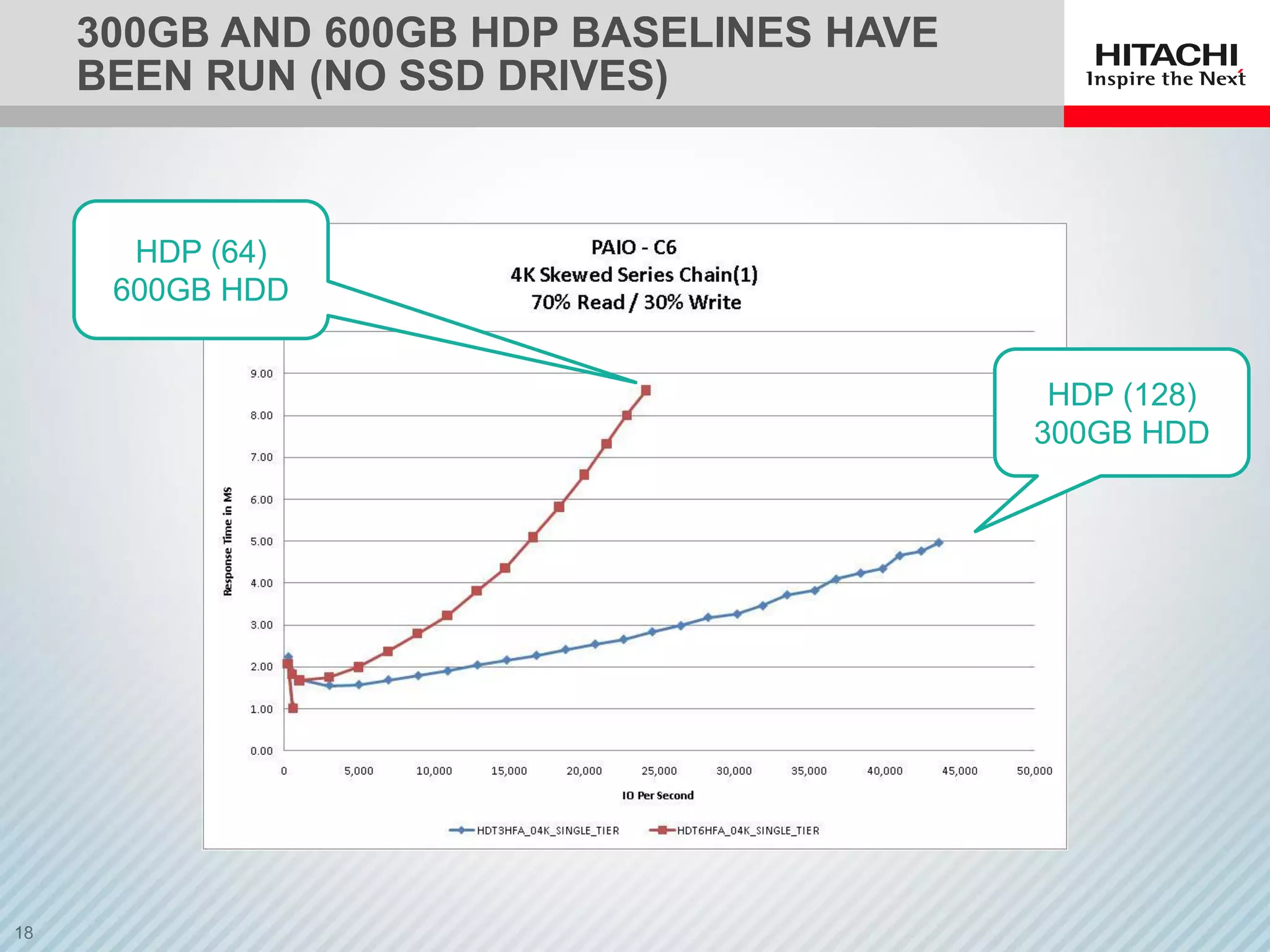

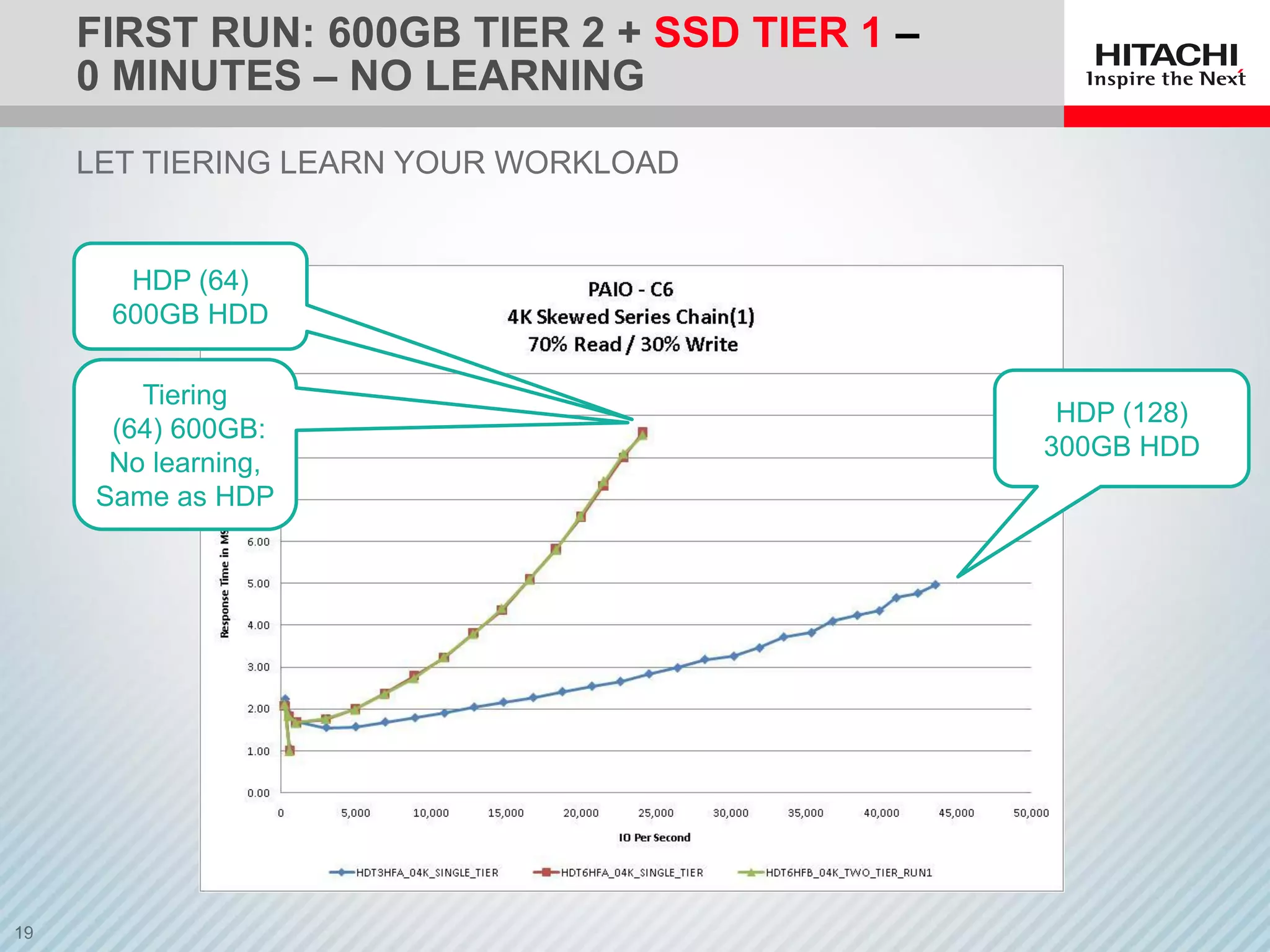

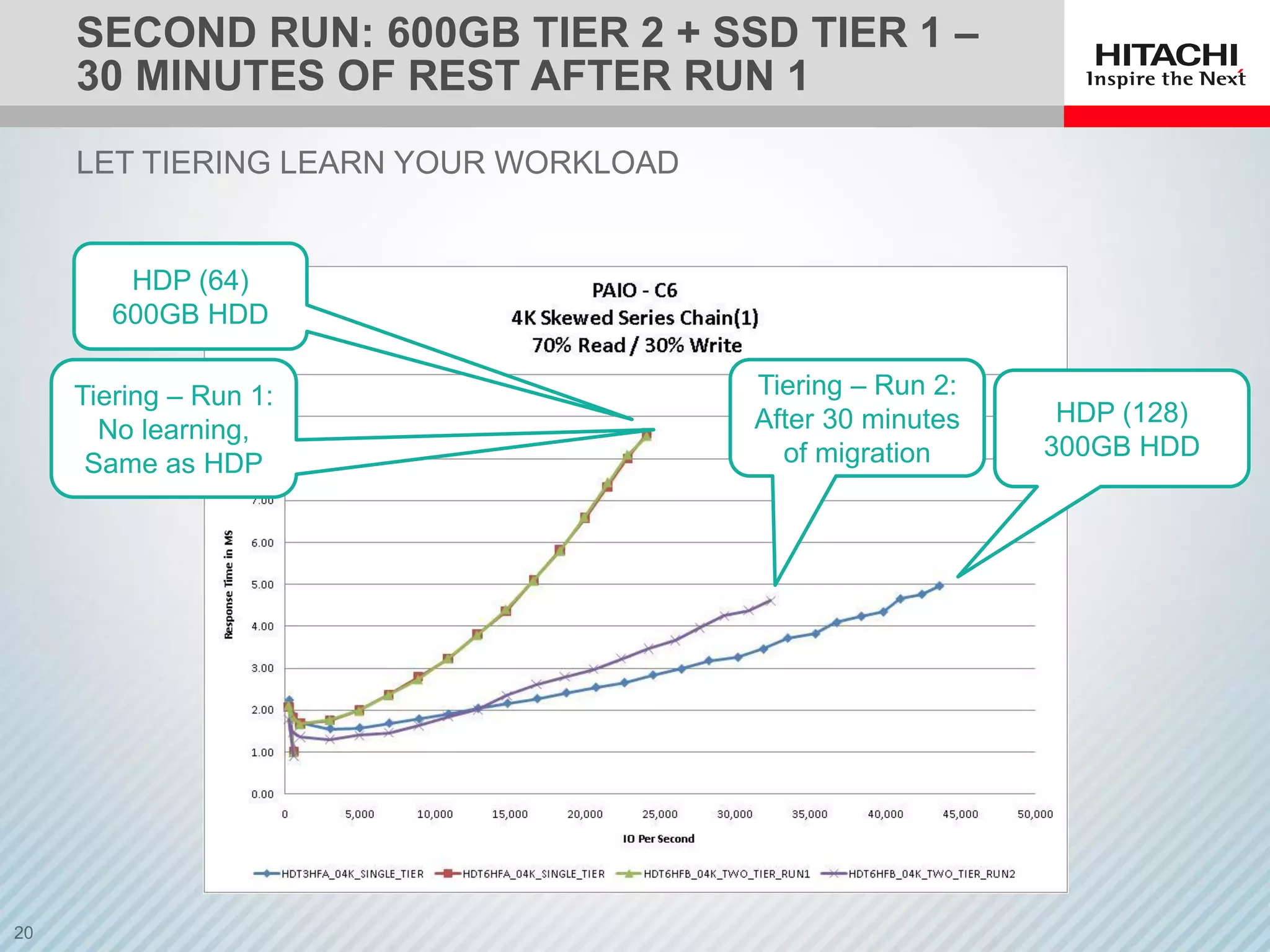

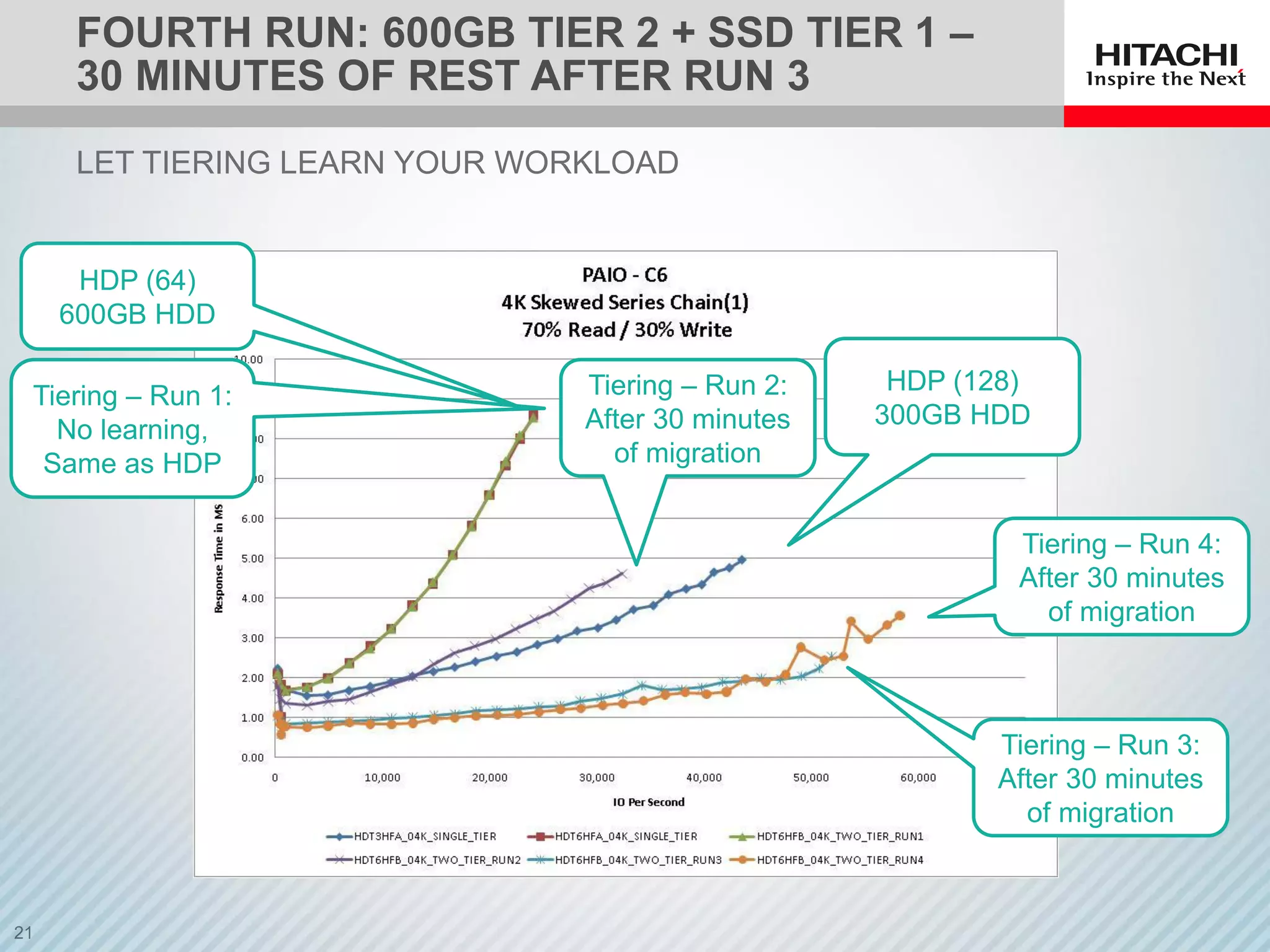

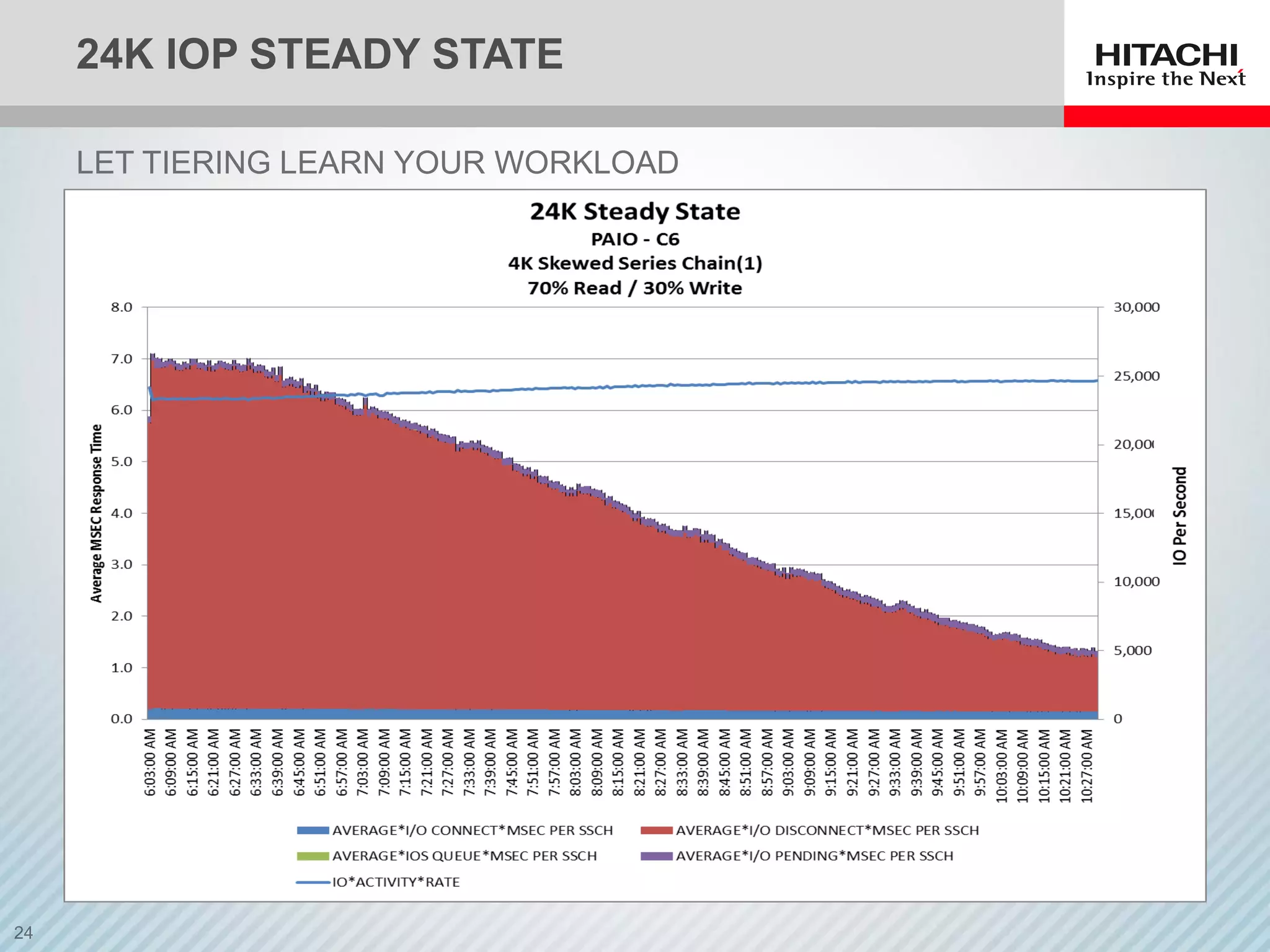

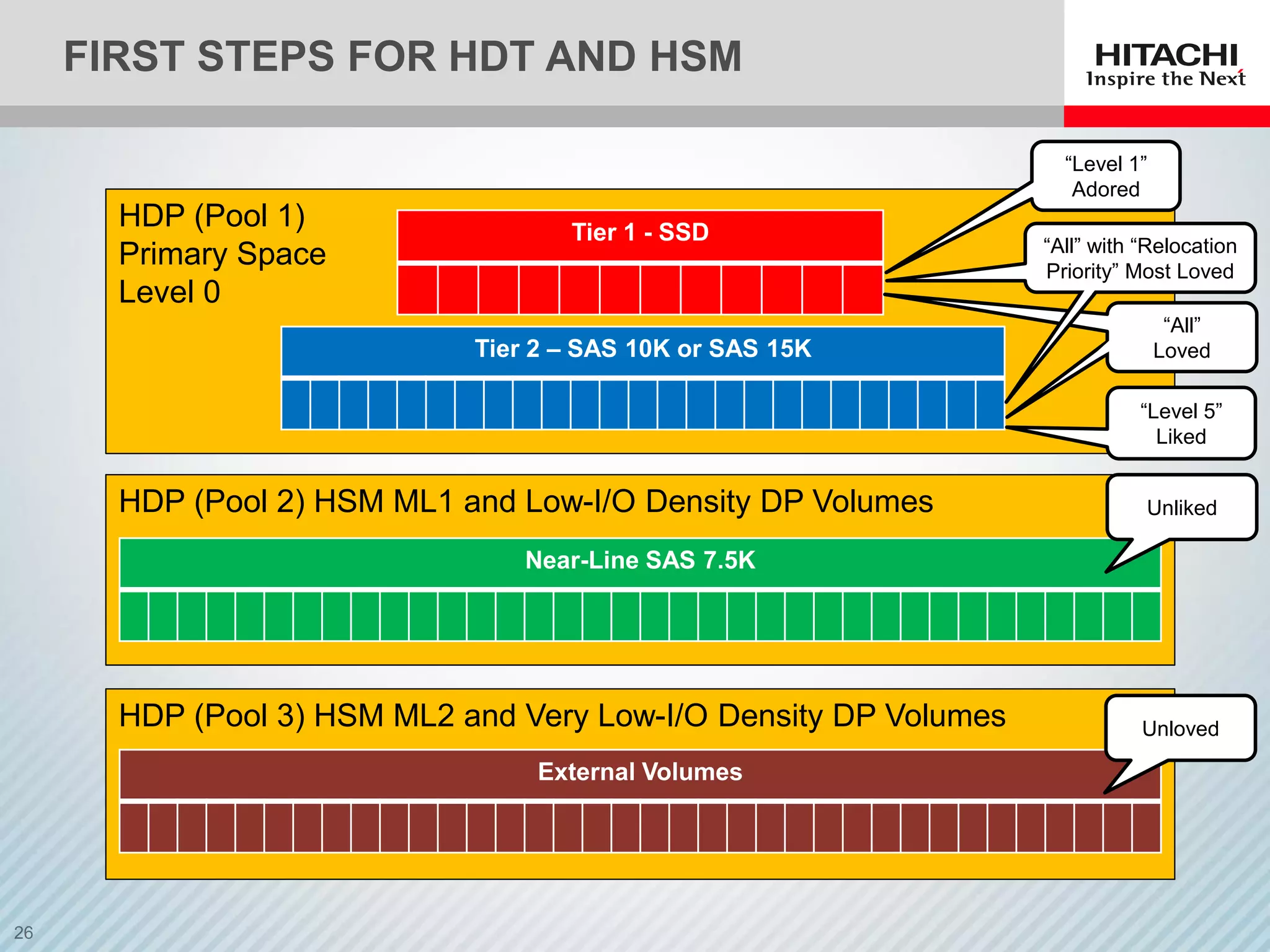

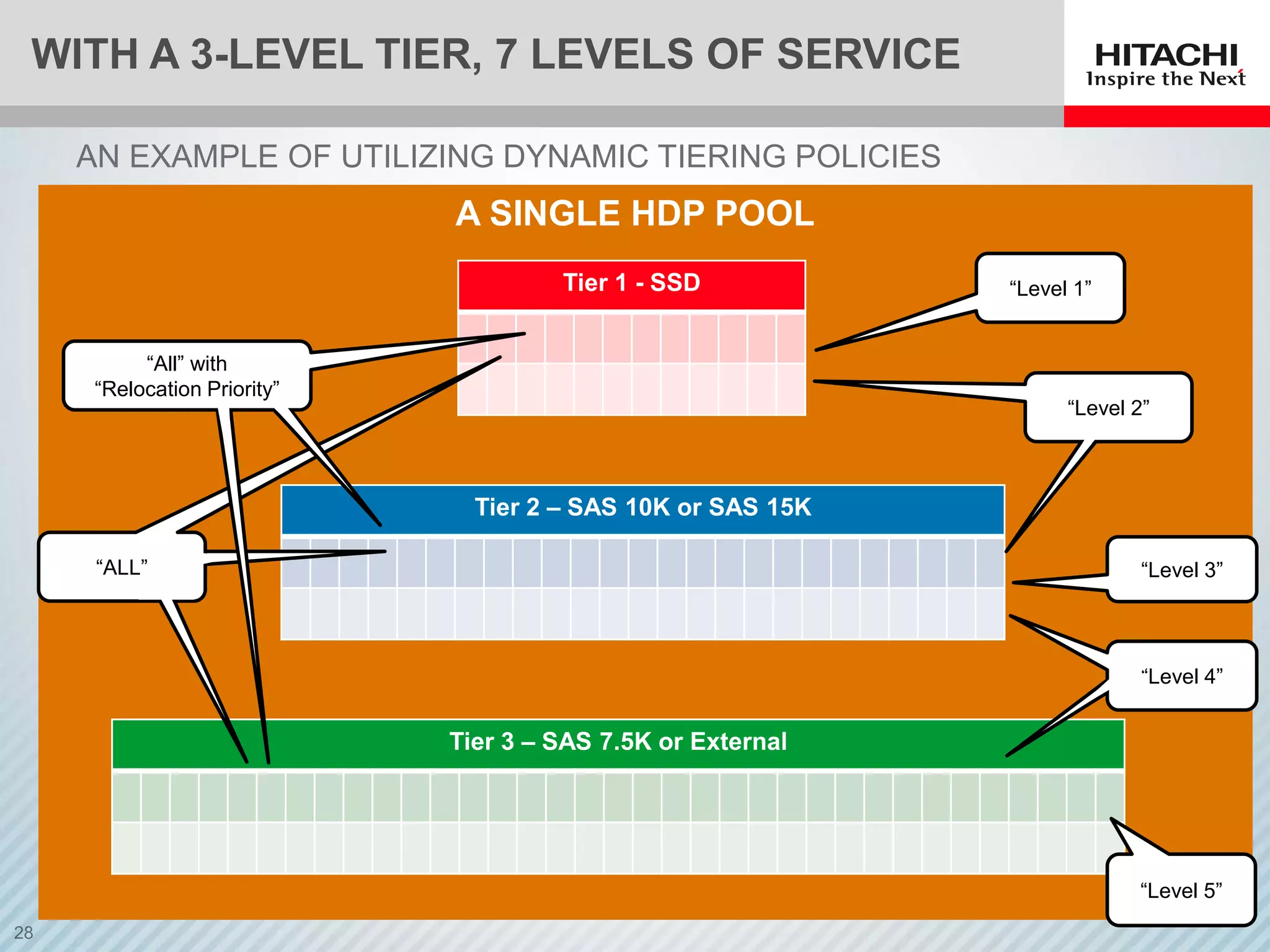

This document discusses performance considerations for dynamic tiering of mainframe storage with Hitachi technology, focusing on how dynamic tiering is configured and controlled. It outlines the benefits of dynamic tiering, compares tier types, and presents detailed experimental results showing performance improvements. It also lists upcoming educational web sessions on mainframe storage solutions.