1. The document discusses uncertainty and different methods for handling it, including probability theory.

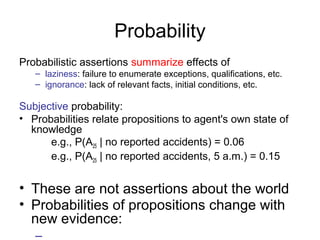

2. It explains that probability can be used to represent an agent's degree of belief in a proposition given available evidence.

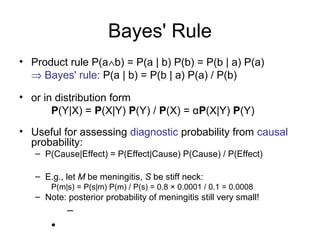

3. Key concepts covered include prior and conditional probability, Bayes' rule, and independence assumptions, which reduce the size of probability models.
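To make the point about model size concrete, here is a minimal sketch that counts how many numbers are needed to specify a distribution. It assumes all variables are Boolean and, in the second case, that they are all mutually independent; the source does not specify either condition, and the function names are hypothetical.

```python
def full_joint_size(n: int) -> int:
    """Independent entries in a full joint distribution over n Boolean variables.

    There are 2**n entries, and one is determined because they sum to 1.
    """
    return 2 ** n - 1


def independent_size(n: int) -> int:
    """Numbers needed when all n Boolean variables are mutually independent.

    Assumption for illustration: one probability per variable is enough.
    """
    return n


if __name__ == "__main__":
    for n in (3, 10, 20):
        print(f"n={n}: full joint={full_joint_size(n)}, independent={independent_size(n)}")
```

Real models usually fall between these two extremes, since only some variables are independent of each other.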

![Normalization](https://image.slidesharecdn.com/m13-uncertainty24sldes-141210114649-conversion-gate01/85/Uncertainity-17-320.jpg)

Normalization: the denominator can be viewed as a normalization constant α.

$$
\begin{aligned}
\mathbf{P}(Cavity \mid toothache) &= \alpha\, \mathbf{P}(Cavity, toothache) \\
&= \alpha\, [\mathbf{P}(Cavity, toothache, catch) + \mathbf{P}(Cavity, toothache, \lnot catch)] \\
&= \alpha\, [\langle 0.108, 0.016 \rangle + \langle 0.012, 0.064 \rangle] \\
&= \alpha\, \langle 0.12, 0.08 \rangle = \langle 0.6, 0.4 \rangle
\end{aligned}
$$

General idea: compute the distribution on the query variable by fixing the evidence variables and summing over the hidden variables.
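The normalization step on this slide can be reproduced in a few lines. The sketch below uses the four joint entries from the slide, ordered as (cavity, no cavity) with toothache fixed as evidence; the variable and function names are hypothetical and chosen for illustration only.

```python
# Joint probabilities from the slide, ordered as (cavity, no cavity),
# with the evidence toothache = true held fixed.
joint_catch = [0.108, 0.016]     # P(Cavity, toothache, catch)
joint_no_catch = [0.012, 0.064]  # P(Cavity, toothache, not catch)


def normalize(values):
    """Scale non-negative values so that they sum to 1."""
    total = sum(values)
    return [v / total for v in values]


# Sum over the hidden variable Catch to get P(Cavity, toothache).
unnormalized = [a + b for a, b in zip(joint_catch, joint_no_catch)]

# alpha is the reciprocal of P(toothache), the sum of the unnormalized entries.
alpha = 1 / sum(unnormalized)

# P(Cavity | toothache)
posterior = normalize(unnormalized)

if __name__ == "__main__":
    print("unnormalized:", unnormalized)
    print("alpha:", alpha)
    print("posterior:", posterior)
```

Up to floating-point rounding, the posterior matches the slide's ⟨0.6, 0.4⟩, and α comes out as 1 / 0.2 = 5. Note that P(toothache) never has to be computed separately: it falls out of the requirement that the posterior sums to 1.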