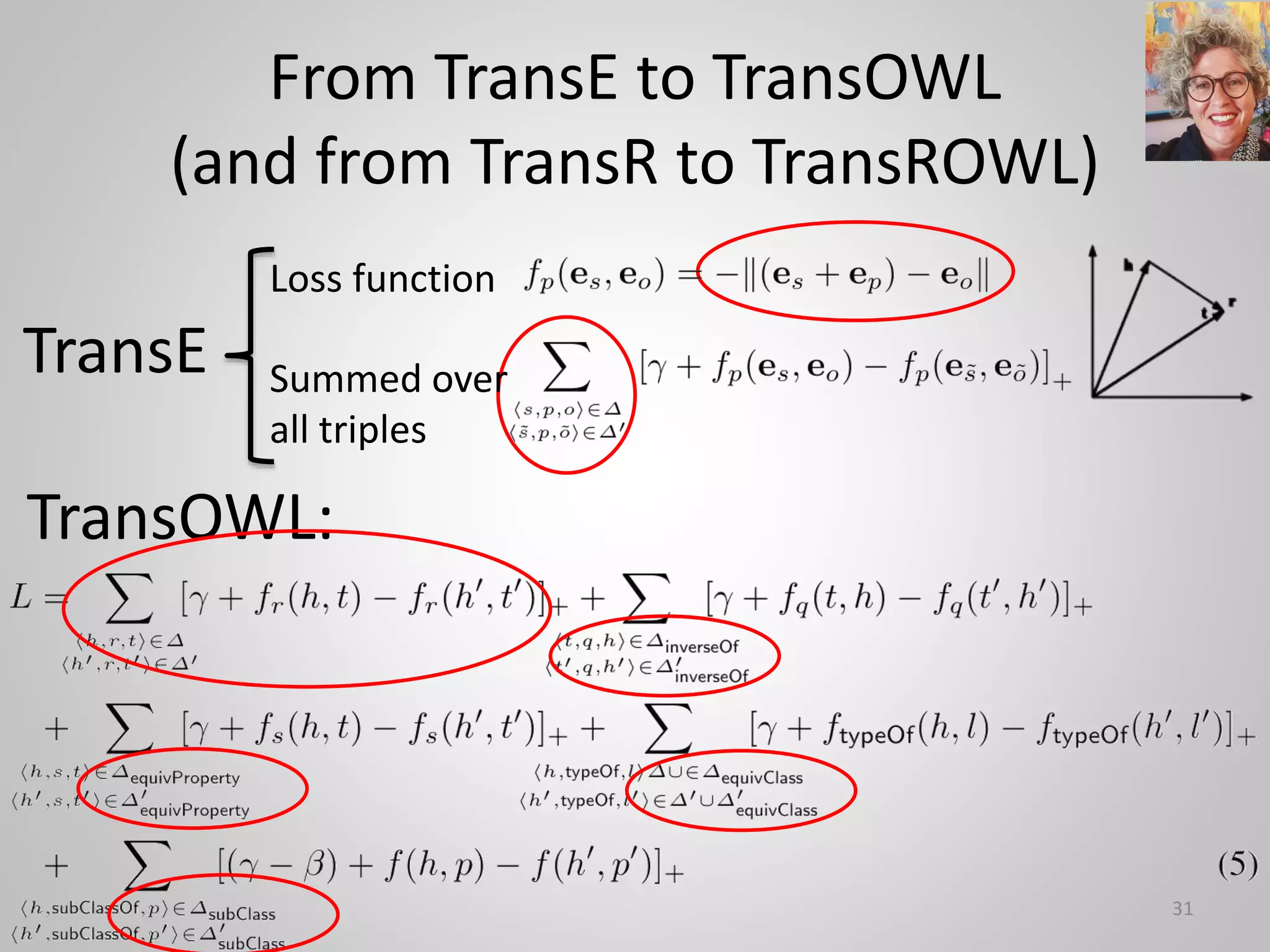

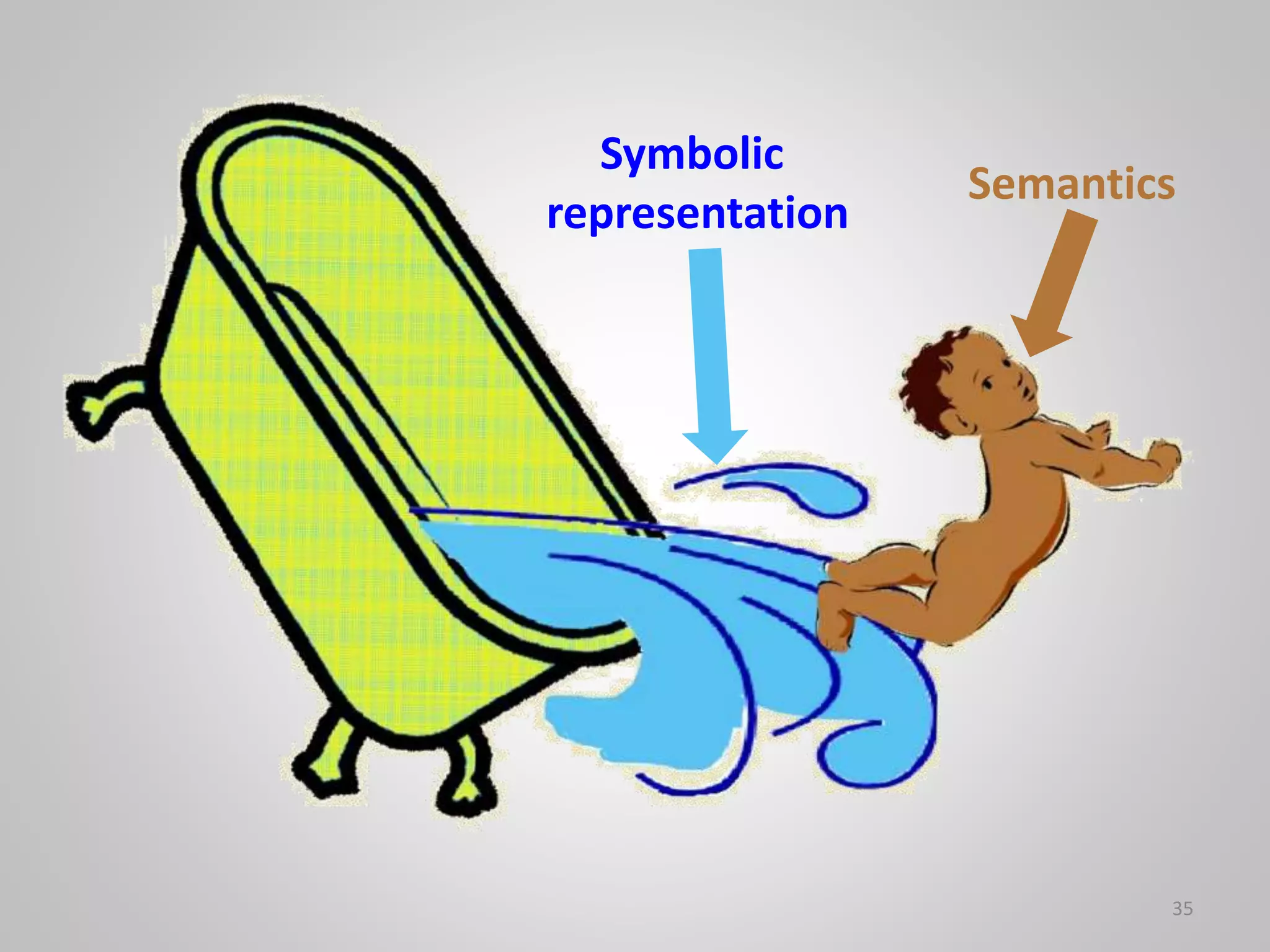

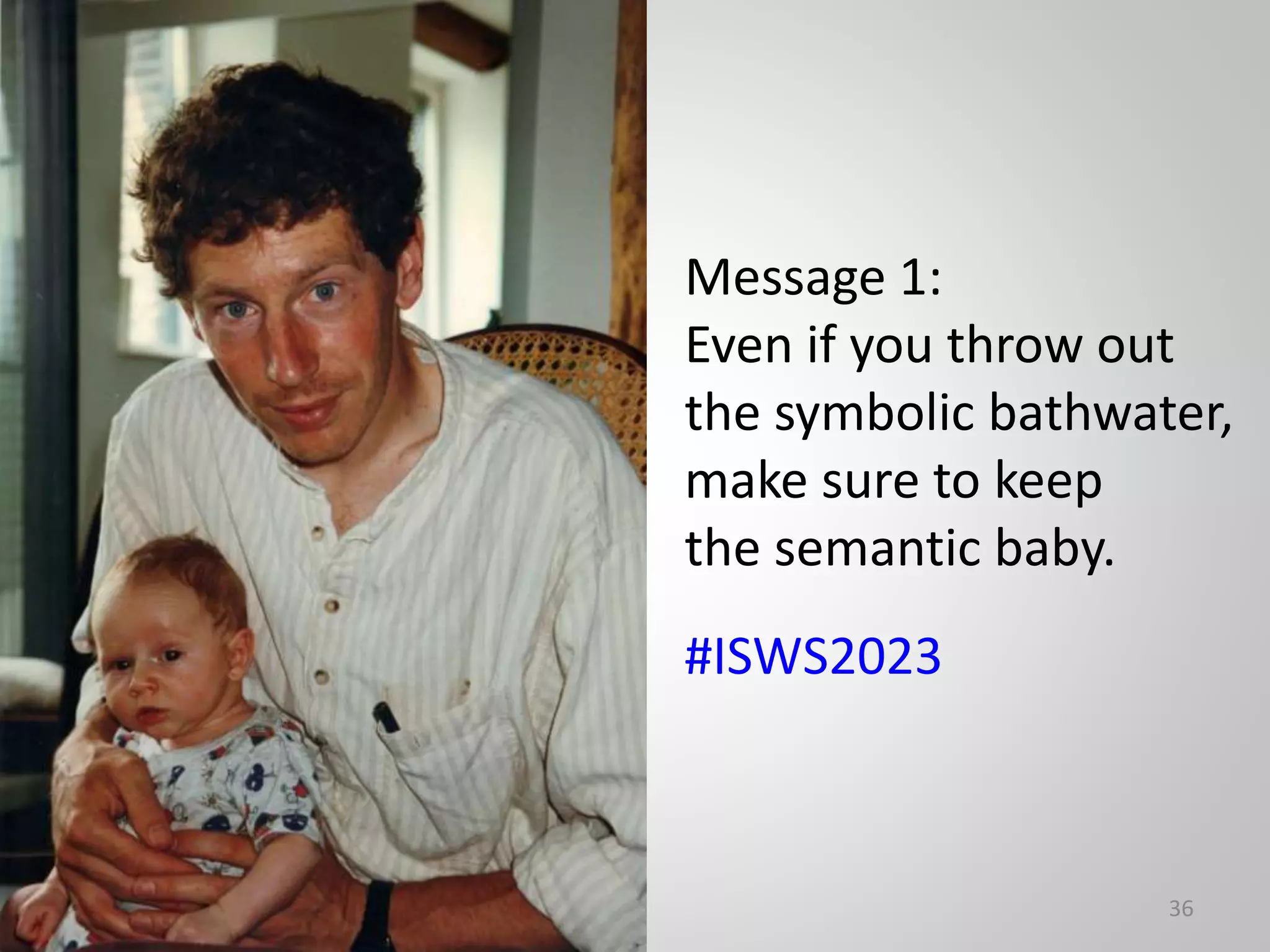

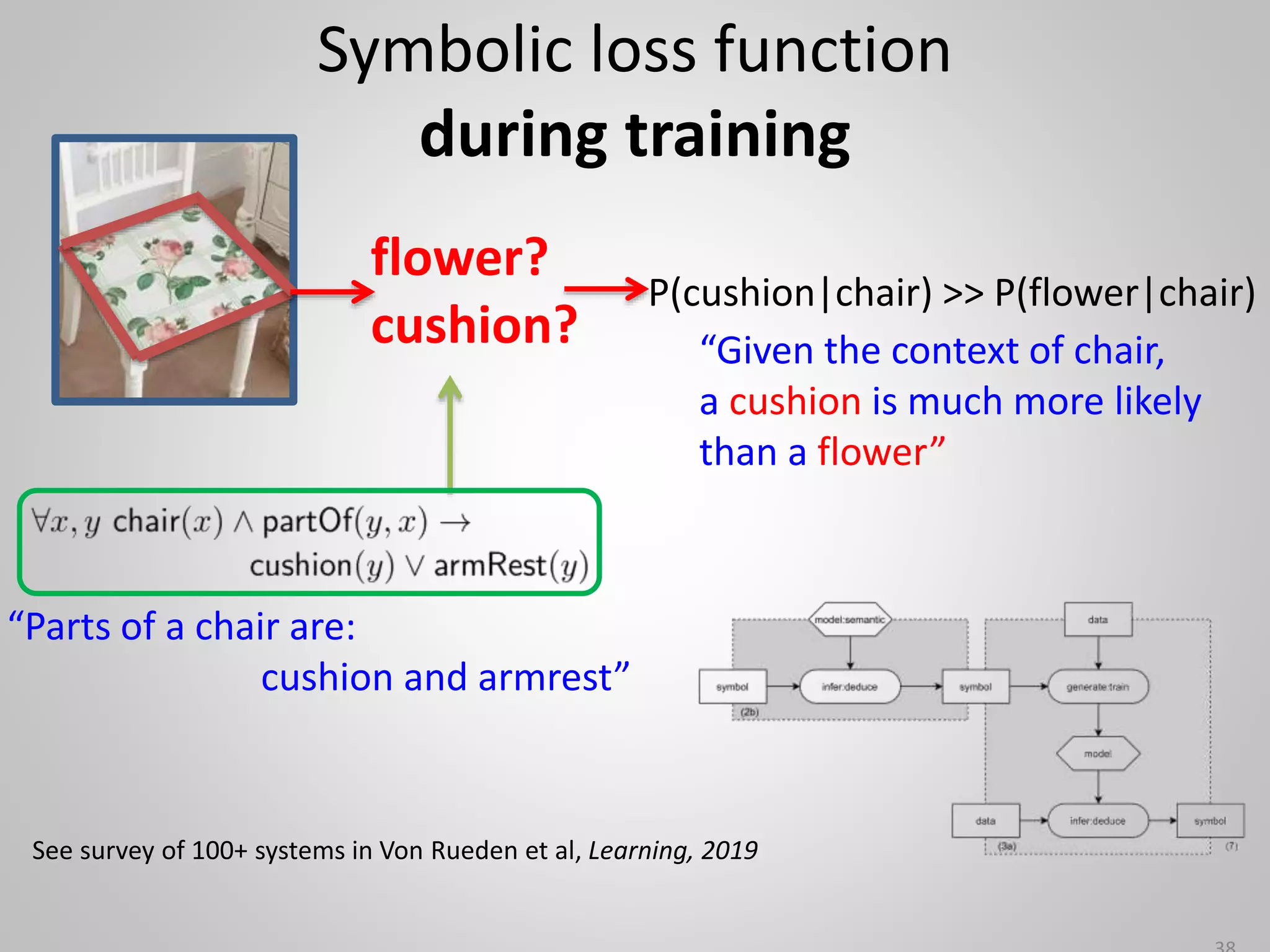

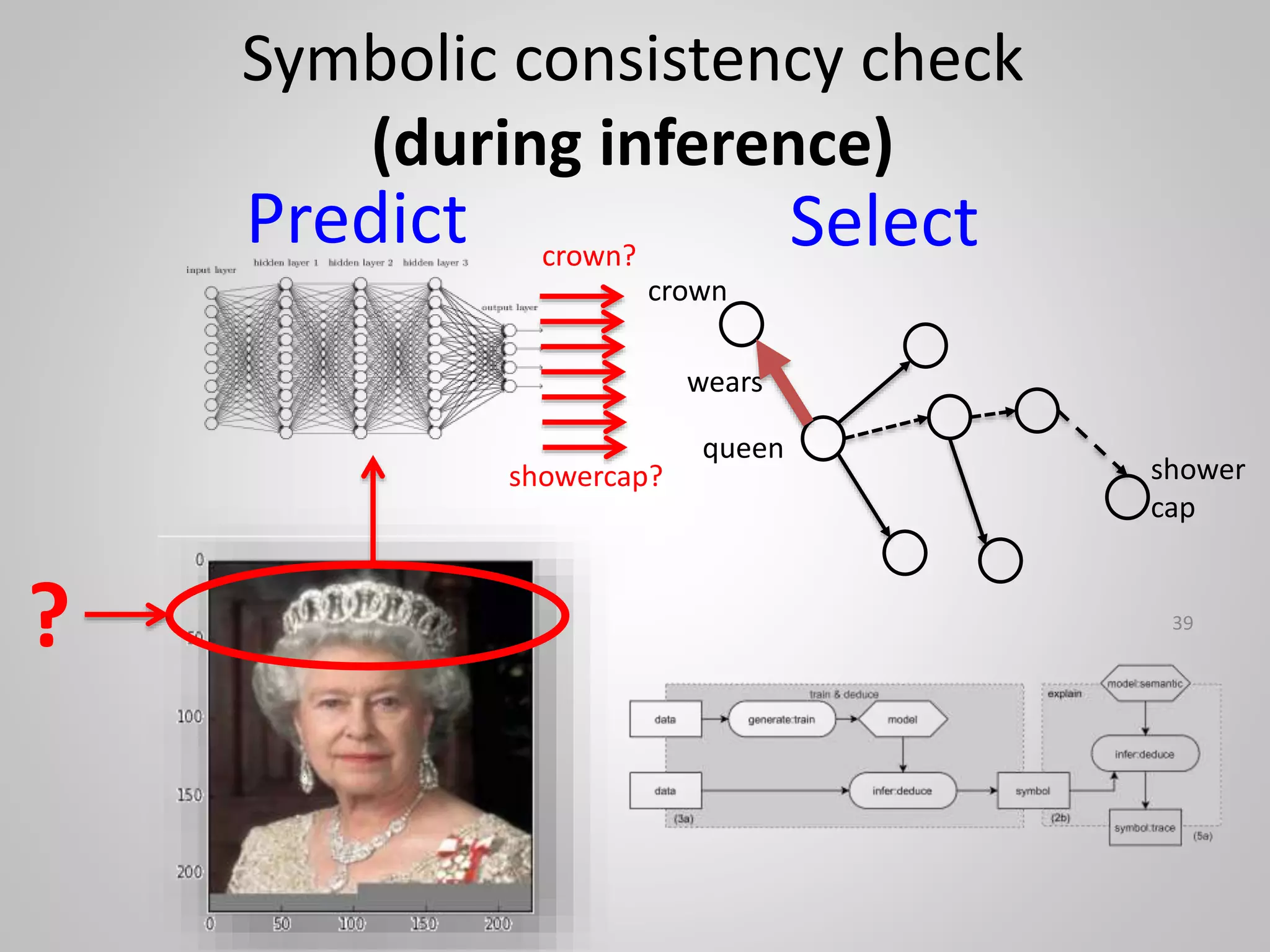

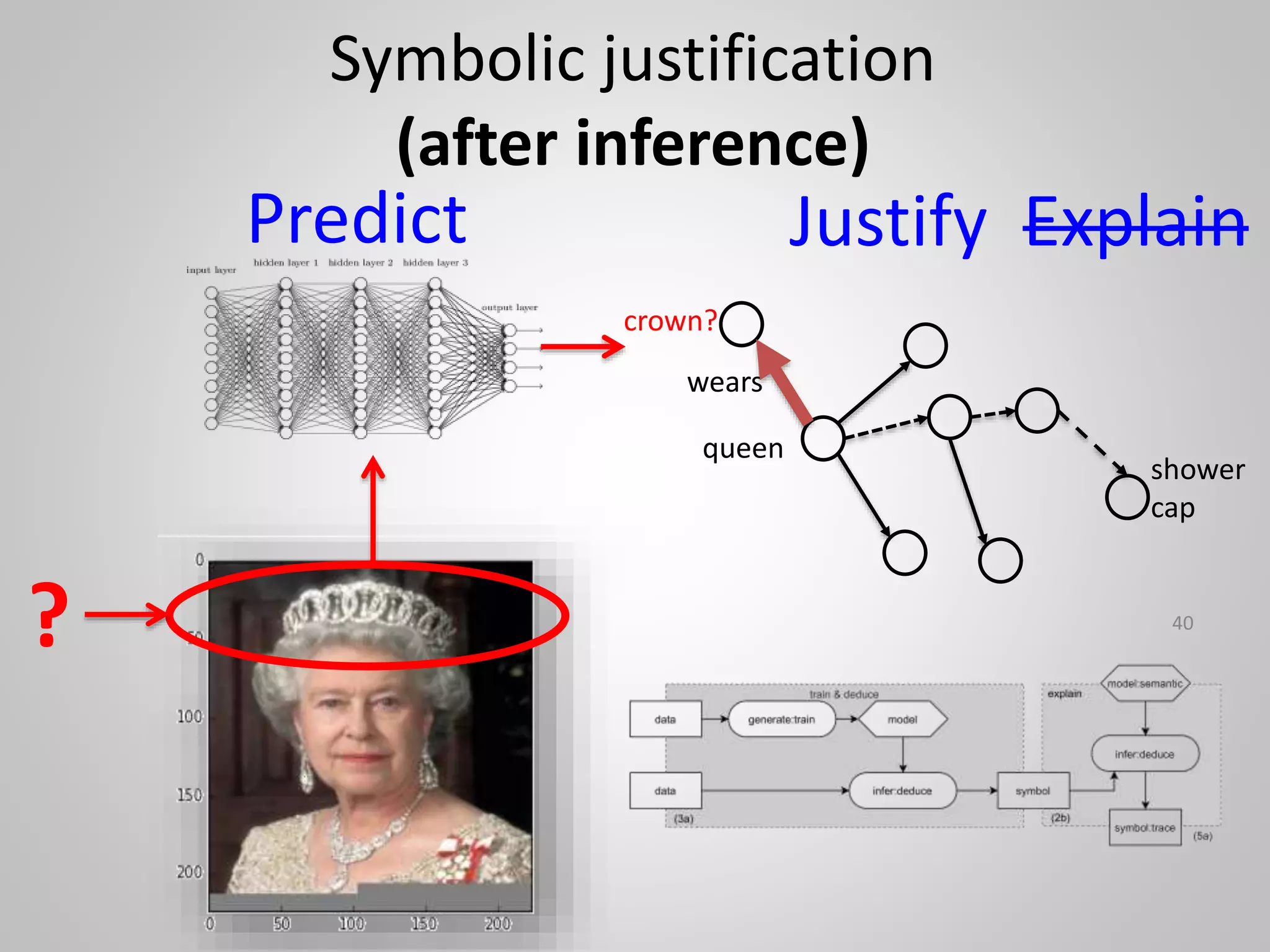

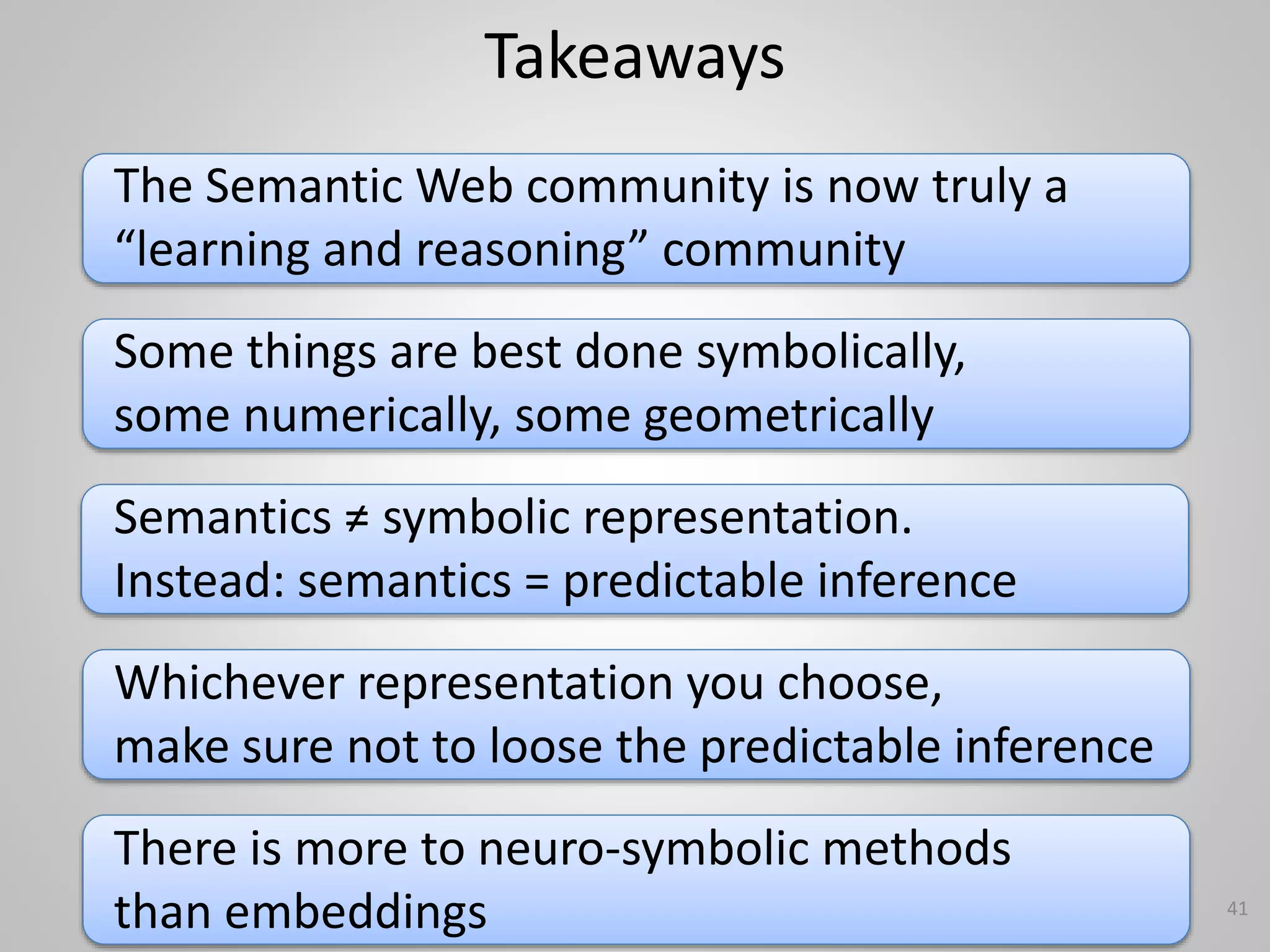

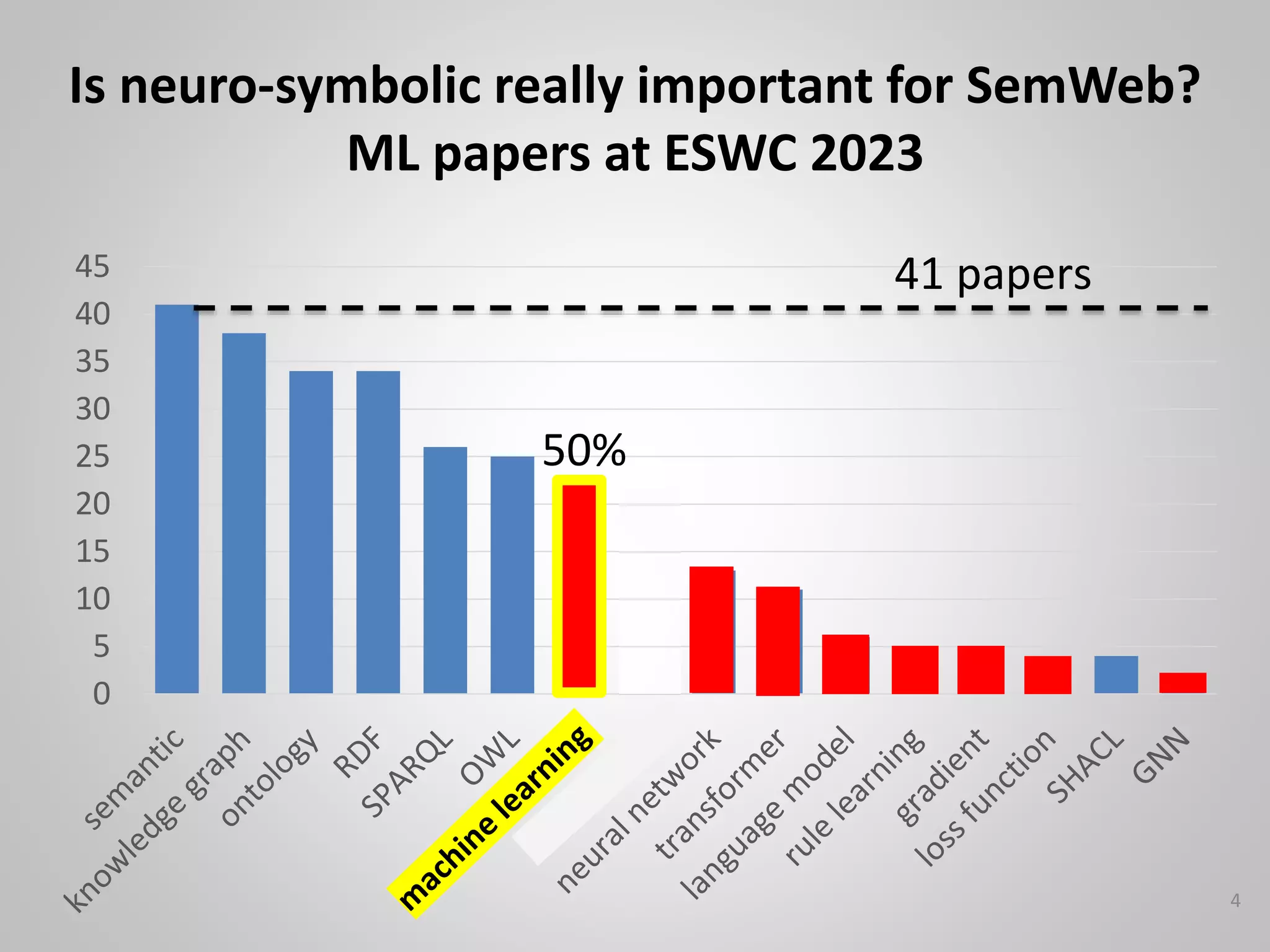

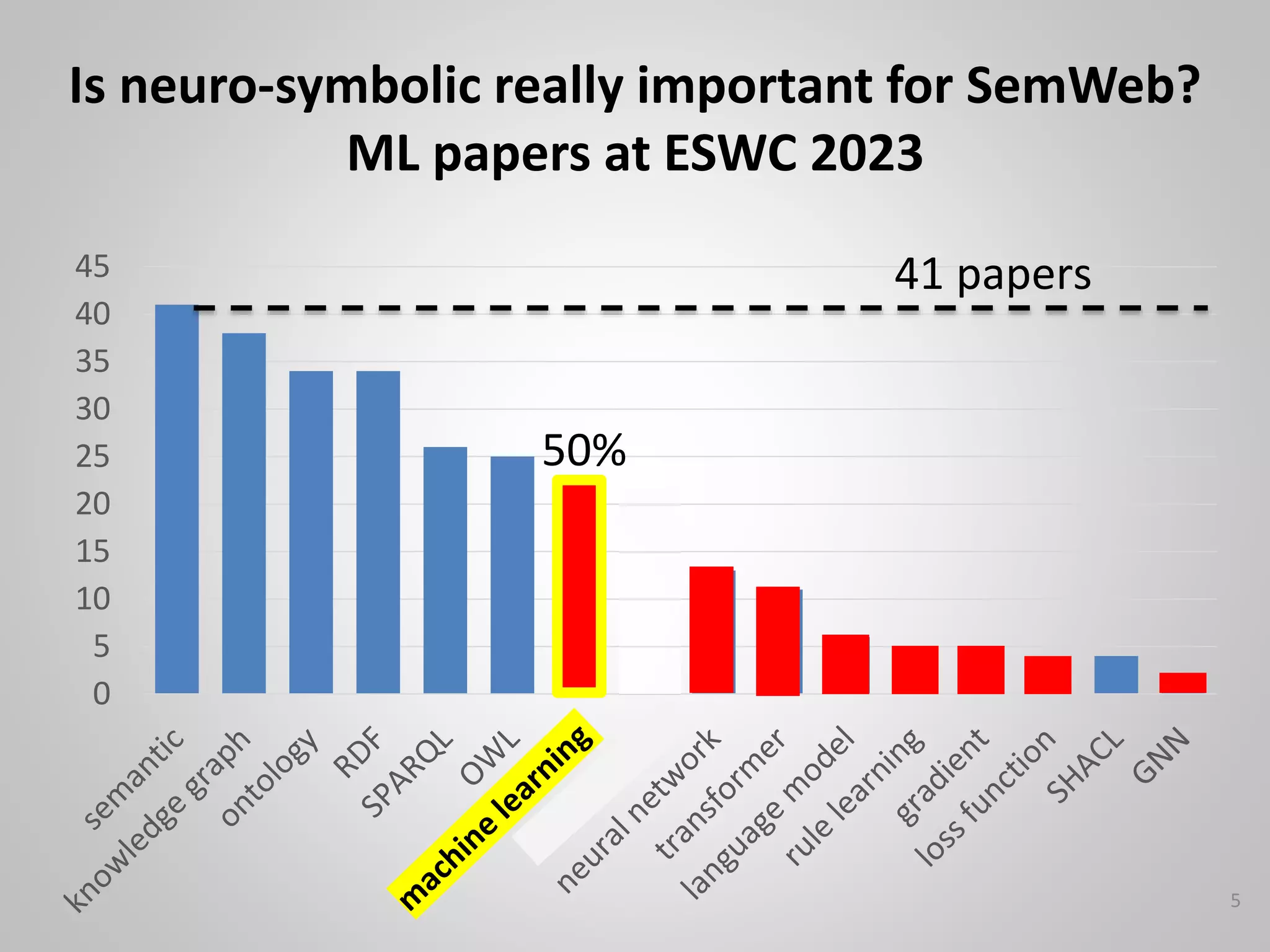

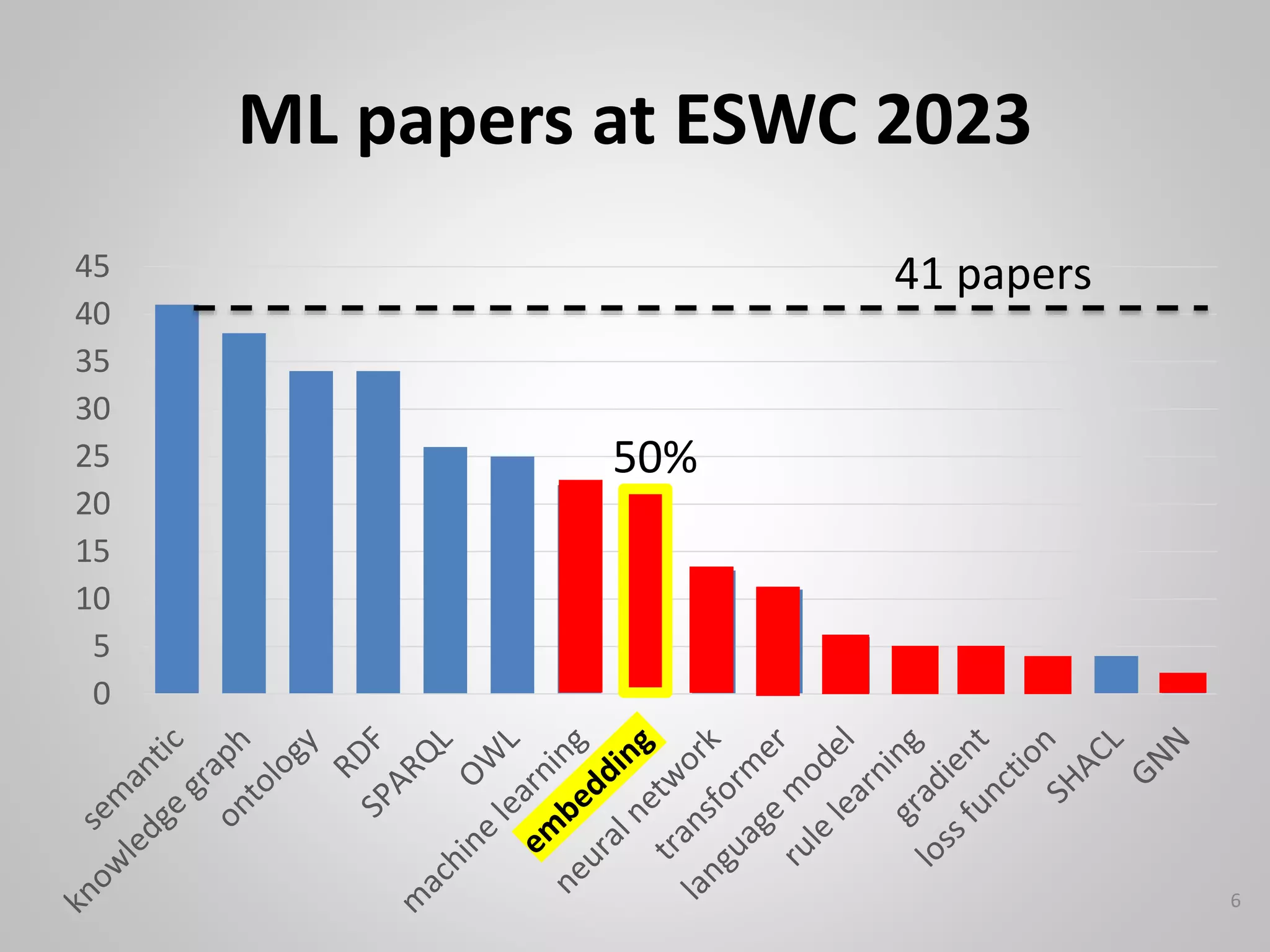

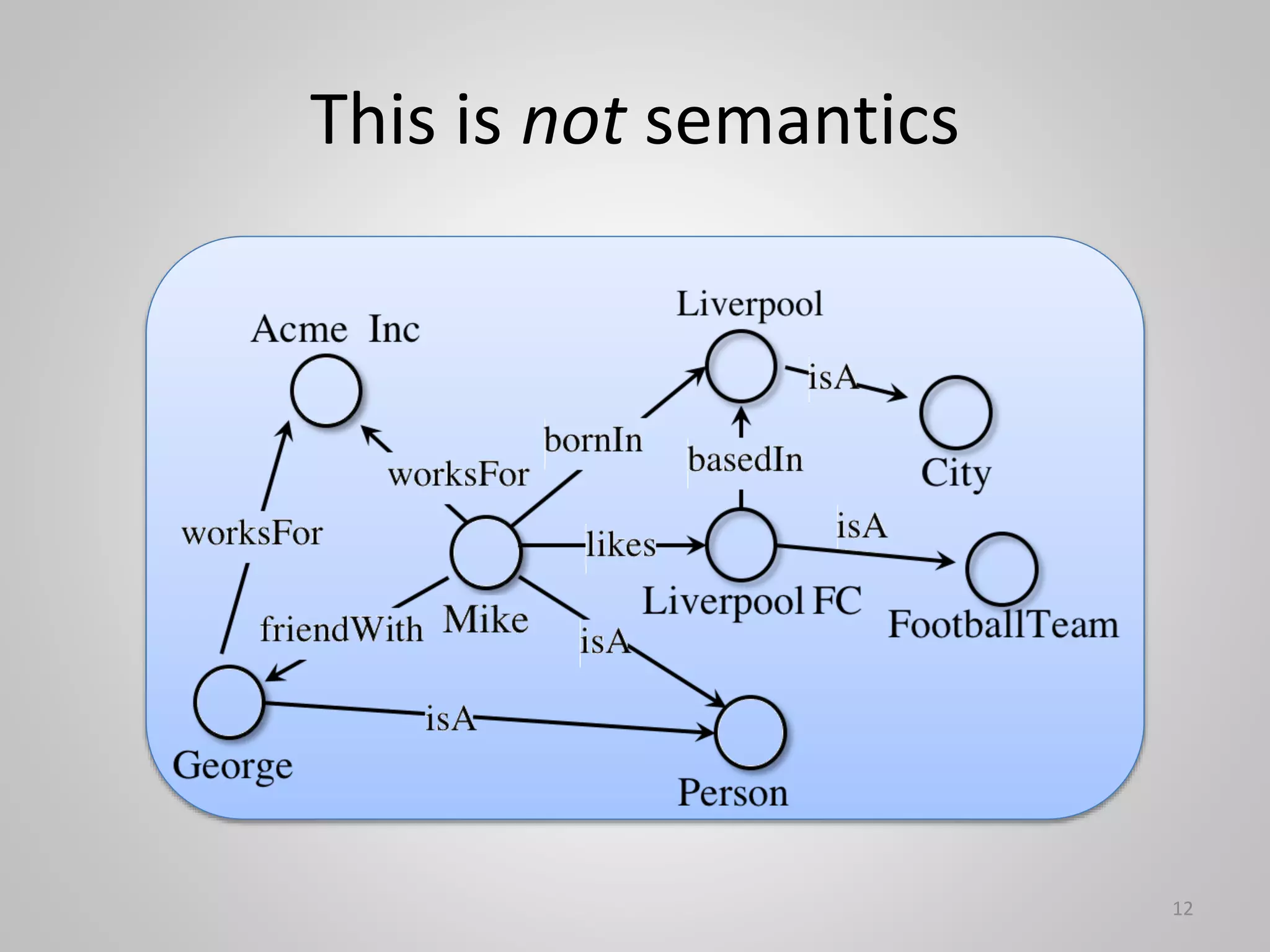

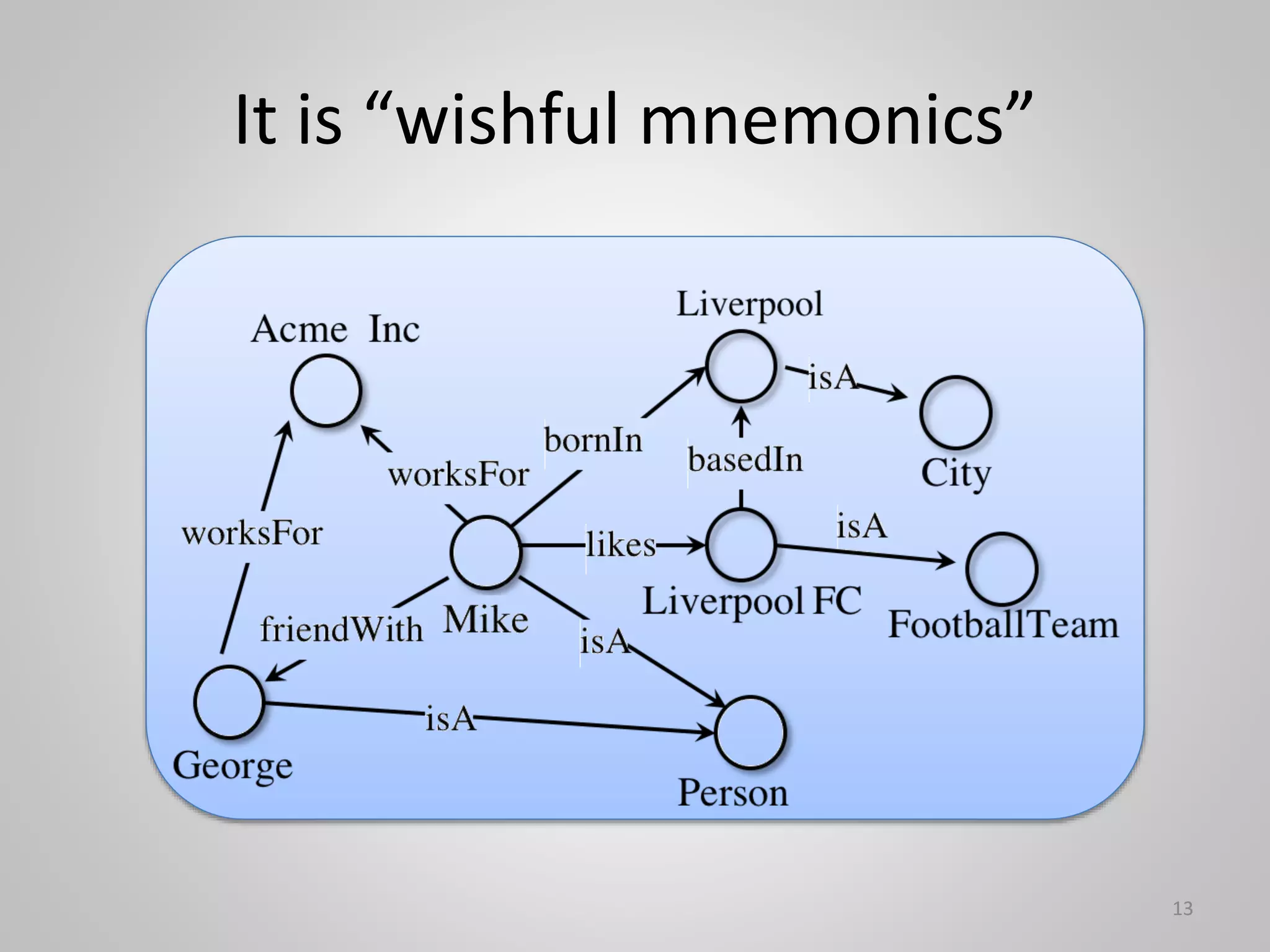

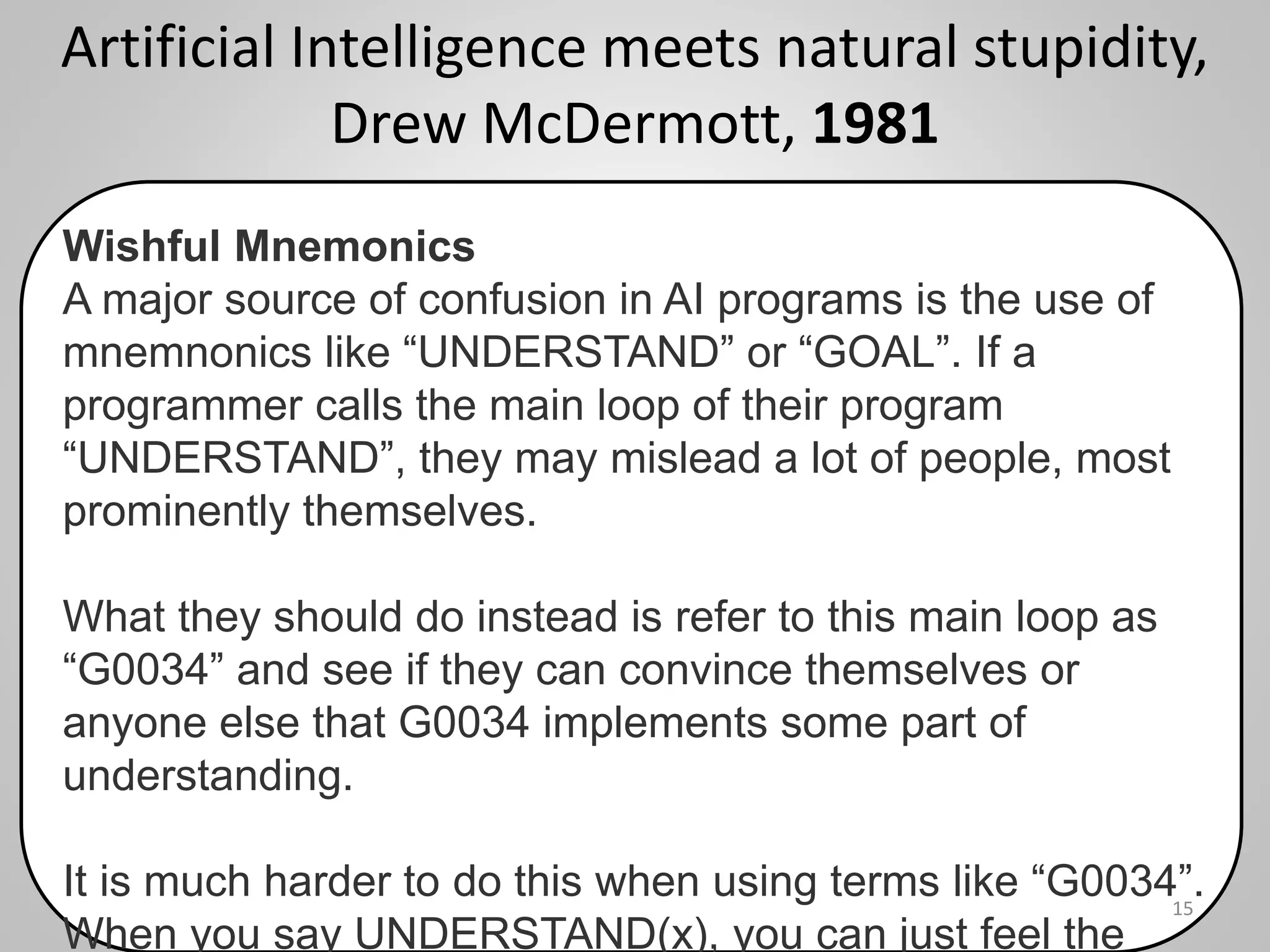

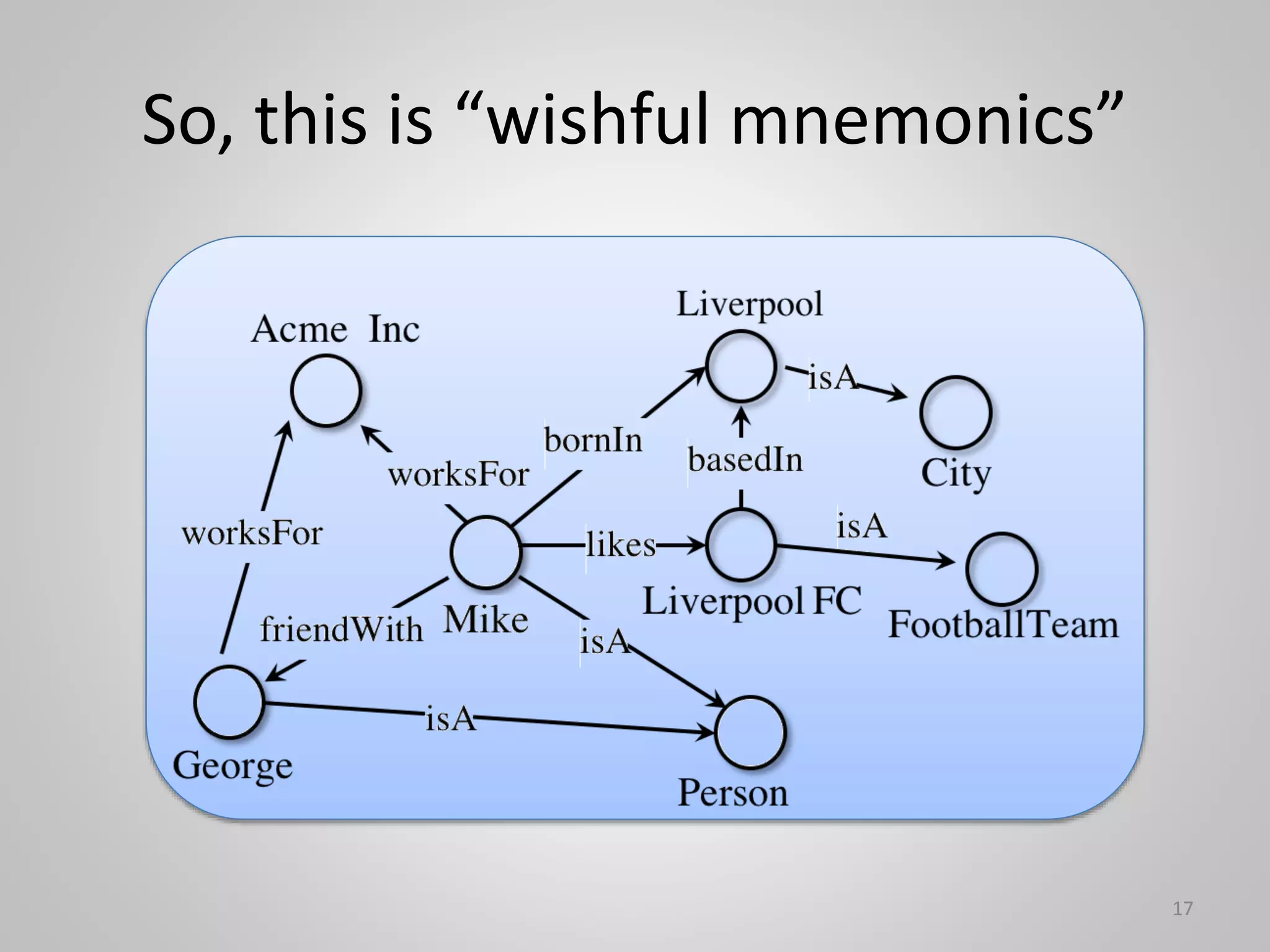

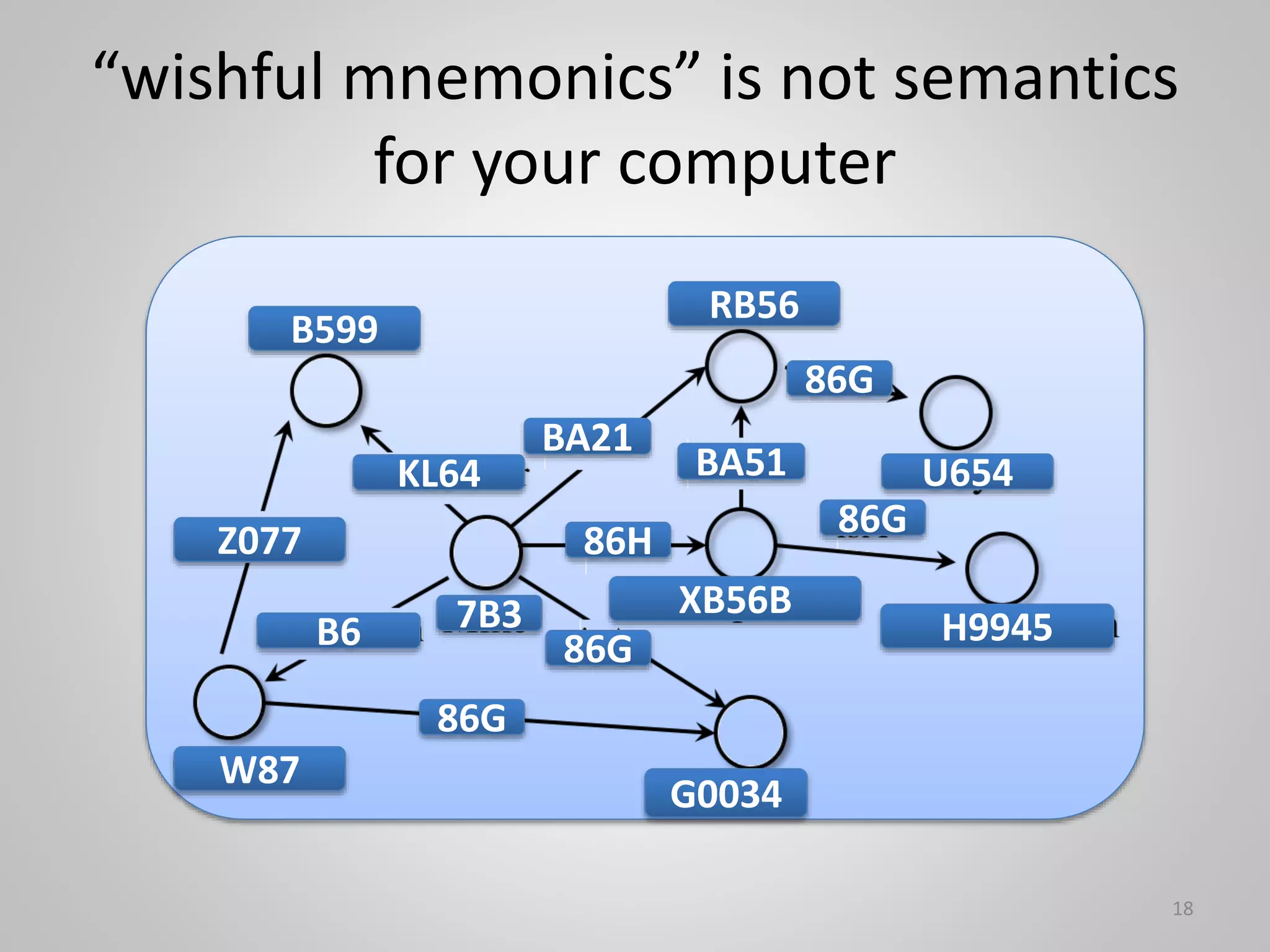

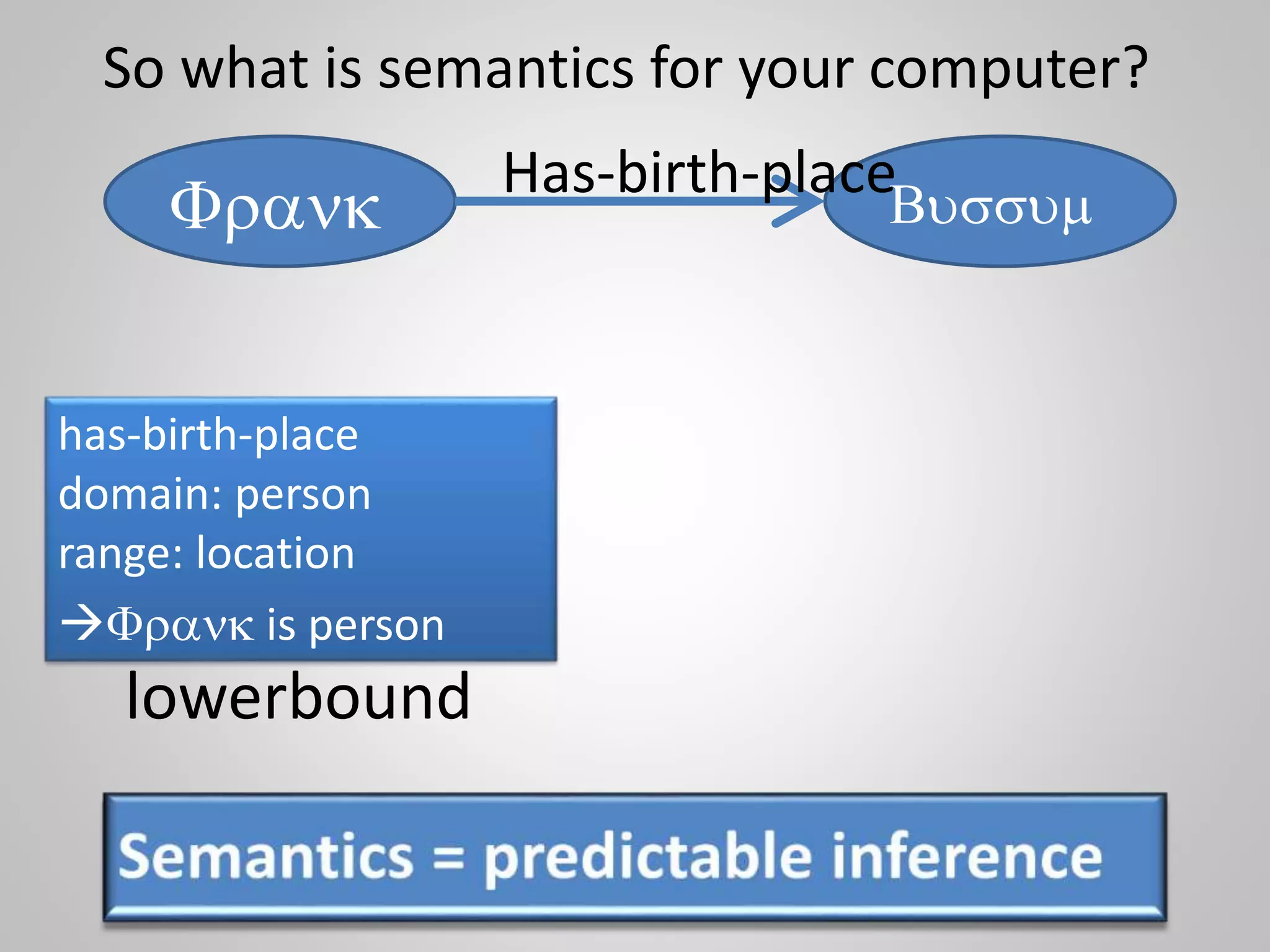

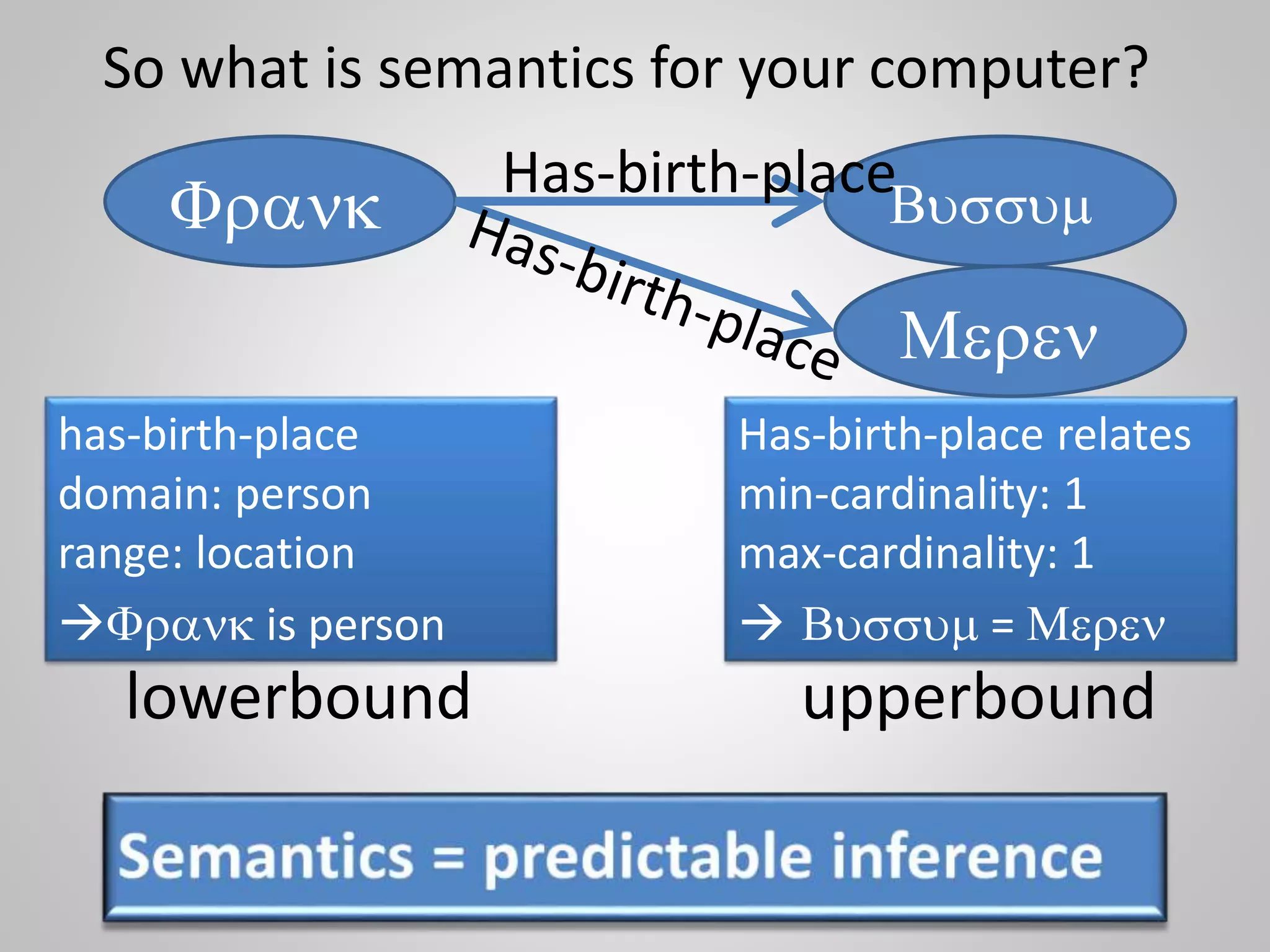

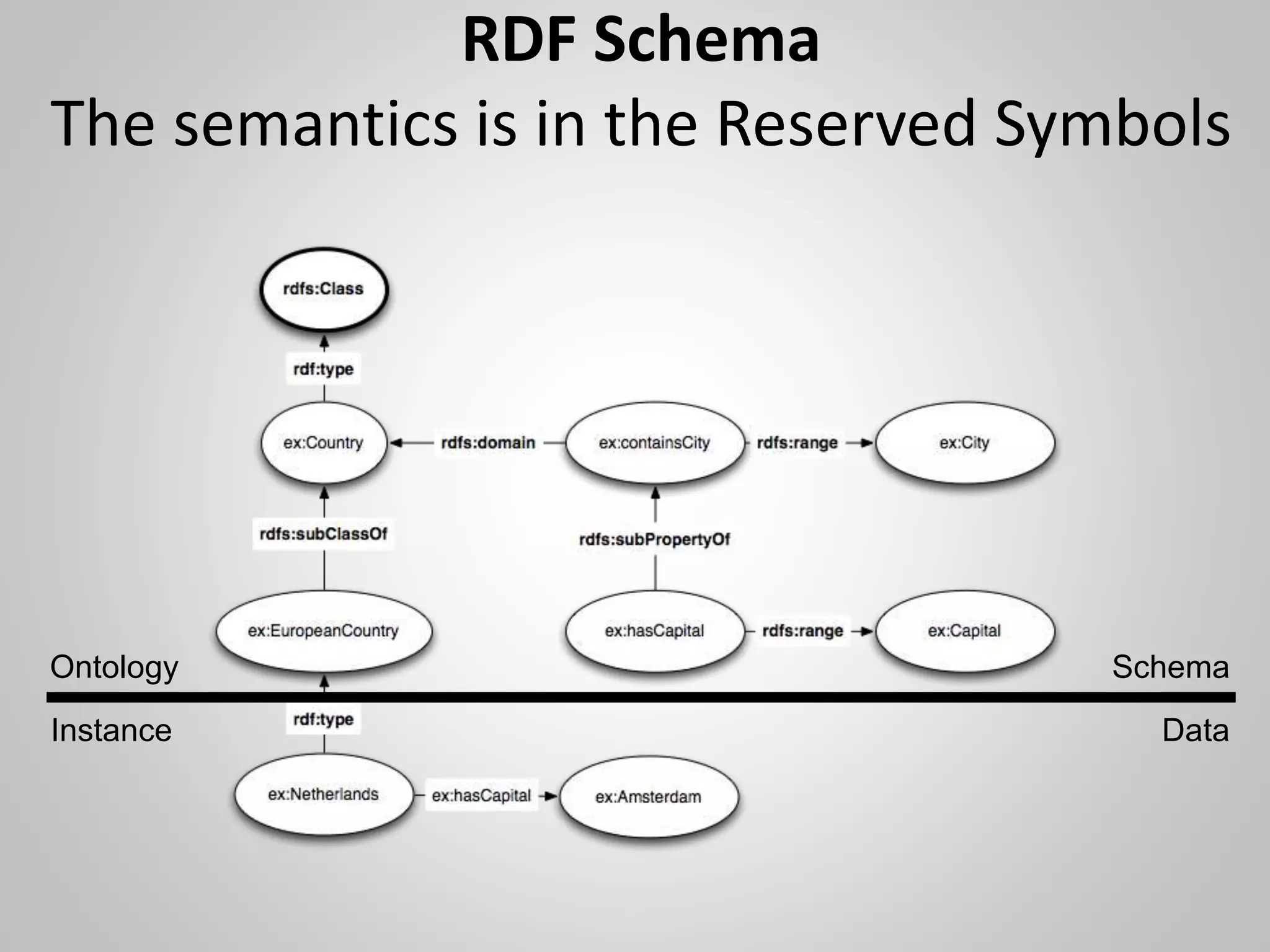

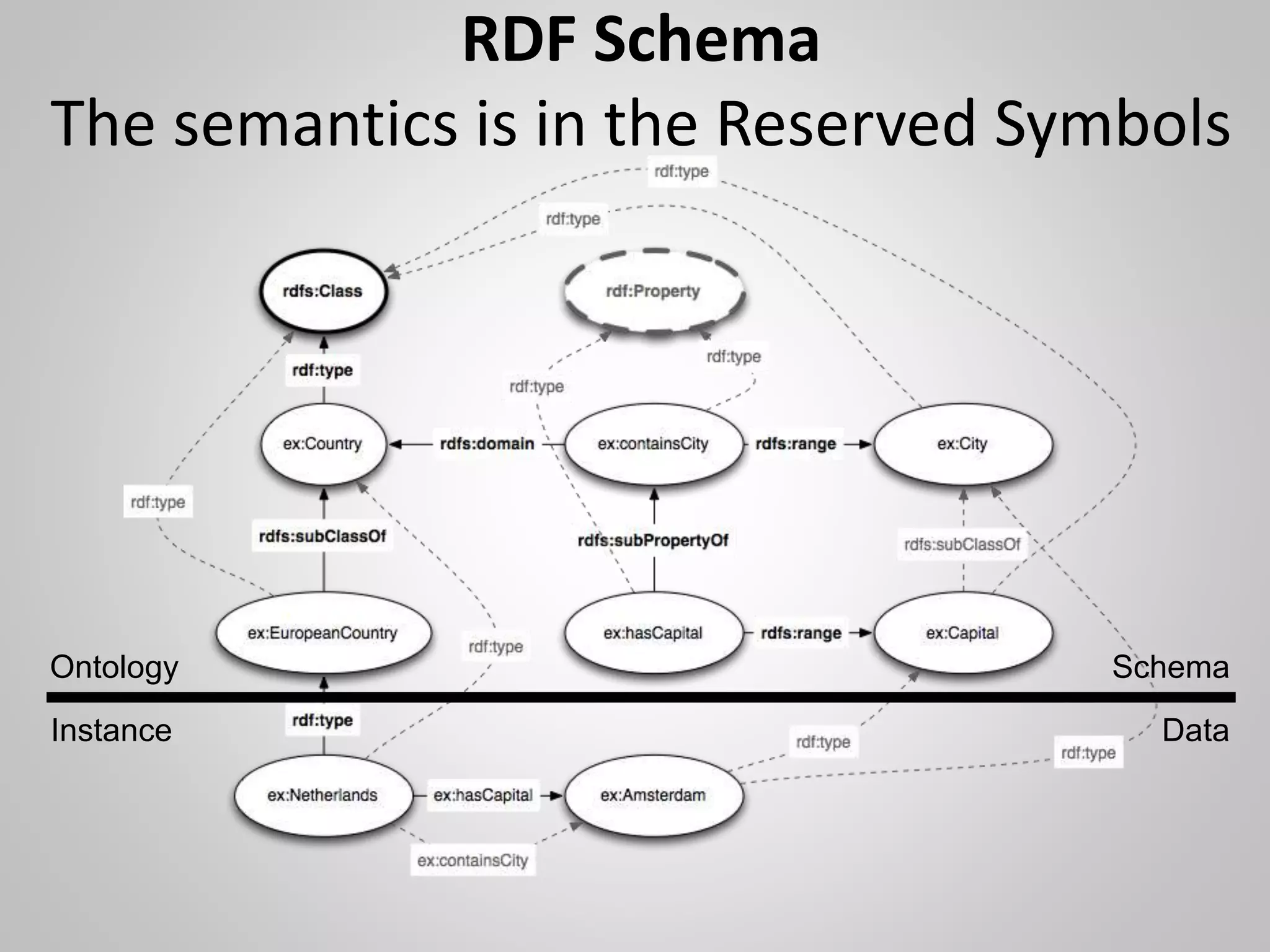

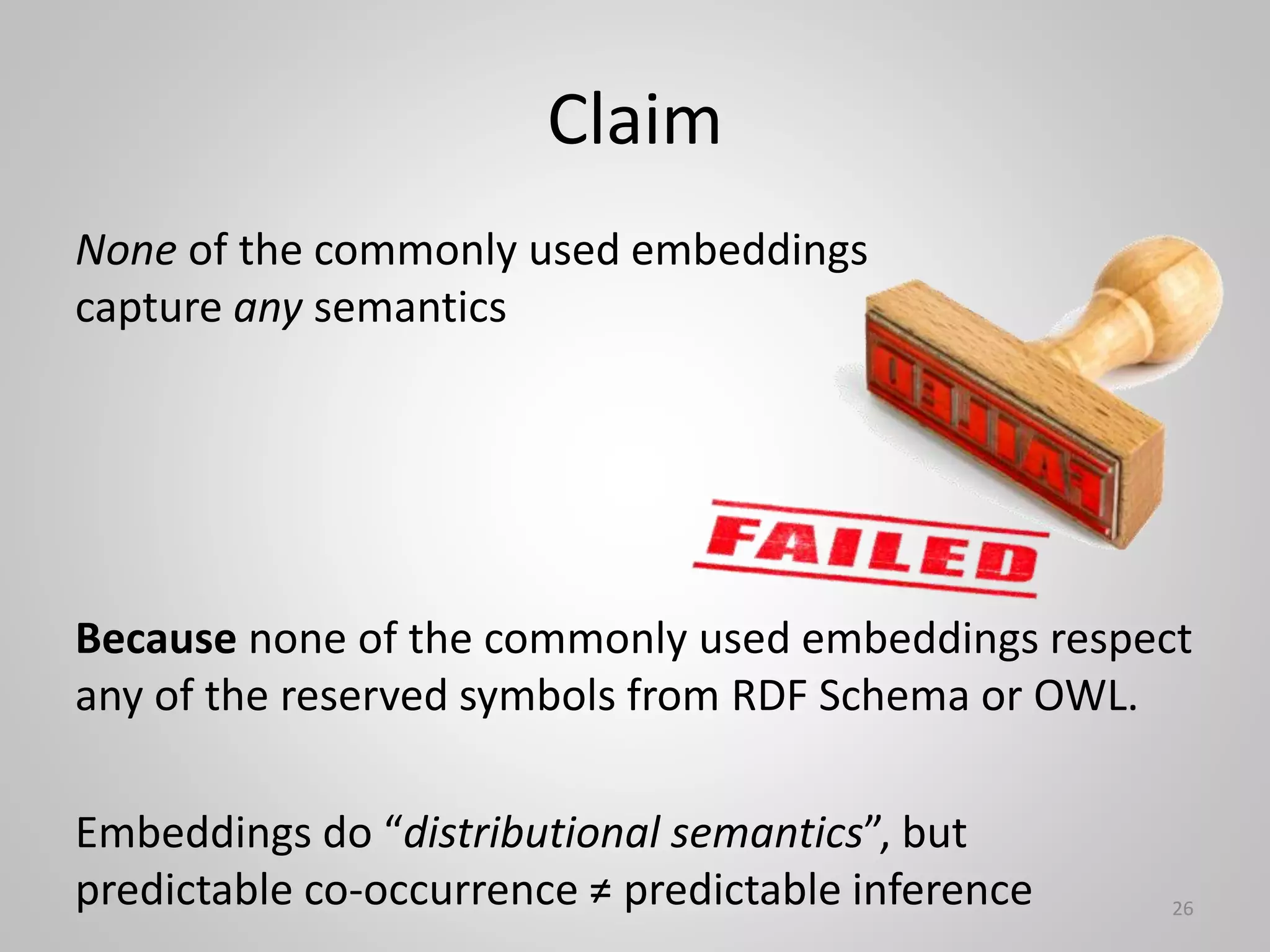

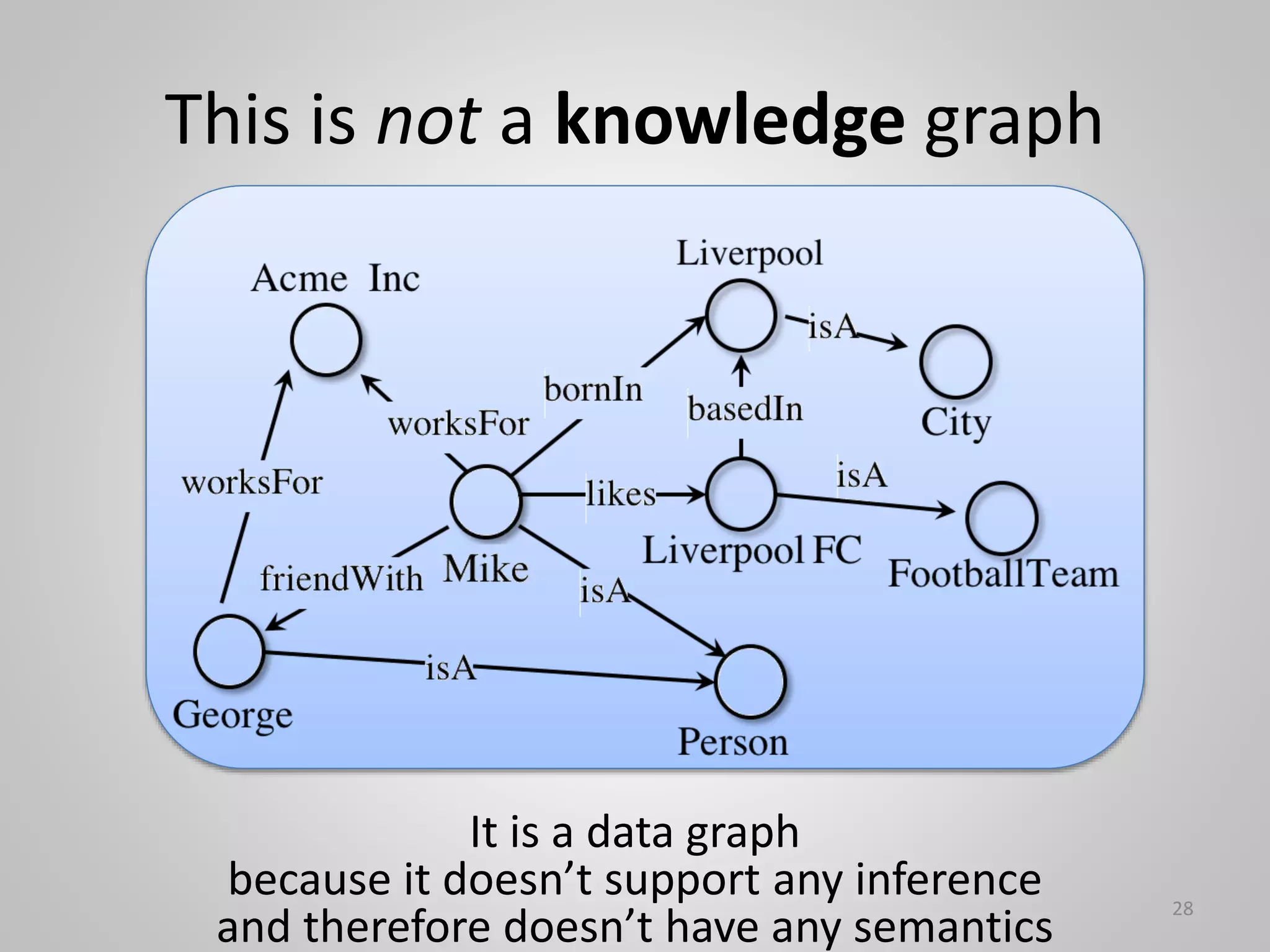

The document discusses the role of knowledge in neuro-symbolic AI. It criticizes current knowledge graph embeddings for lacking true semantics, arguing that most embeddings do not support inference. It reviews proposals for making embeddings semantic and stresses the importance of retaining semantic meaning in AI representations. Ultimately, it argues that semantics should be defined by predictable inference rather than by symbolic representation alone.
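To make "predictable inference" concrete, here is a minimal sketch. It assumes triples stored as Python tuples and uses only two RDFS entailment rules (rdfs9 and rdfs11). The `rdfs_closure` helper and the `ex:` names are hypothetical illustrations and are not taken from the talk. The point is that the rules alone fix exactly which new triples follow from the data.

```python
from itertools import product

SUBCLASS = "rdfs:subClassOf"
TYPE = "rdf:type"

def rdfs_closure(triples):
    """Saturate a set of (s, p, o) triples under two RDFS rules:
    rdfs11: (A subClassOf B), (B subClassOf C)  =>  (A subClassOf C)
    rdfs9:  (x type A),       (A subClassOf B)  =>  (x type B)
    """
    closed = set(triples)
    while True:
        derived = set()
        for (s1, p1, o1), (s2, p2, o2) in product(closed, repeat=2):
            # Both rules need the second triple to be a subClassOf edge
            # that starts where the first triple ends.
            if p2 != SUBCLASS or o1 != s2:
                continue
            # subClassOf chains yield subClassOf; type + subClassOf yields type.
            if p1 in (SUBCLASS, TYPE):
                derived.add((s1, p1, o2))
        if derived <= closed:  # fixpoint: nothing new can be derived
            return closed
        closed |= derived

# Hypothetical toy knowledge graph.
kg = {
    ("ex:Amsterdam", TYPE, "ex:Capital"),
    ("ex:Capital", SUBCLASS, "ex:City"),
    ("ex:City", SUBCLASS, "ex:Place"),
}
closure = rdfs_closure(kg)
print(sorted(closure - kg))
```

Given these three triples, the rules always derive that Amsterdam is a City and a Place, and that Capital is a subclass of Place. No training run or vector geometry can change that outcome.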

![Make embeddings semantic again! (Outrageous Ideas paper at ISWC 2018)](https://image.slidesharecdn.com/isws2023keynotefrankvanharmelen-230623180813-4ebe3ffc/75/The-K-in-neuro-symbolic-stands-for-knowledge-30-2048.jpg)

Make embeddings semantic again! (Outrageous Ideas paper at ISWC 2018)

Abstract: "The original Semantic Web vision foresees to describe entities in a way that the meaning can be interpreted both by machines and humans. [But] embeddings describe an entity as a numerical vector, without any semantics attached to the dimensions. Thus, embeddings are as far from the original Semantic Web vision as can be. In this paper, we make a claim for semantic embeddings."

Proposal 1: A Posteriori Learning of Interpretations. Reconstruct a human-readable interpretation from the vector space.

Proposal 2: Pattern-based Embeddings. Use patterns in the knowledge graph to choose human-interpretable dimensions in the vector space.

Neither of these is aimed at predictable inference, so neither yields semantics.
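A toy version of the pattern-based idea (Proposal 2) can be sketched as follows. It assumes the simplest possible pattern, a (predicate, object) pair, so that every vector dimension carries a human-readable name. The helper names and the `ex:` entities are hypothetical, and the slide does not describe how the paper actually selects its patterns.

```python
import math

def pattern_dimensions(triples):
    """One named dimension per distinct (predicate, object) pattern."""
    return sorted({(p, o) for _, p, o in triples})

def embed(entity, triples, dims):
    """1.0 where the entity has an outgoing edge matching the pattern, else 0.0."""
    facts = {(p, o) for s, p, o in triples if s == entity}
    return [1.0 if d in facts else 0.0 for d in dims]

def cosine(u, v):
    """Cosine similarity; returns 0.0 if either vector is all zeros."""
    dot = sum(a * b for a, b in zip(u, v))
    norm = math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v))
    return dot / norm if norm else 0.0

# Hypothetical toy knowledge graph.
kg = [
    ("ex:Amsterdam", "rdf:type", "ex:City"),
    ("ex:Amsterdam", "ex:locatedIn", "ex:Netherlands"),
    ("ex:Rotterdam", "rdf:type", "ex:City"),
    ("ex:Rotterdam", "ex:locatedIn", "ex:Netherlands"),
    ("ex:Paris", "rdf:type", "ex:City"),
    ("ex:Paris", "ex:locatedIn", "ex:France"),
]
dims = pattern_dimensions(kg)
vectors = {e: embed(e, kg, dims) for e in ("ex:Amsterdam", "ex:Rotterdam", "ex:Paris")}

# Every coordinate can be read off by its pattern name.
for name, vec in vectors.items():
    print(name, {f"{p} {o}": x for (p, o), x in zip(dims, vec)})

print(cosine(vectors["ex:Amsterdam"], vectors["ex:Rotterdam"]))
print(cosine(vectors["ex:Amsterdam"], vectors["ex:Paris"]))
```

The dimensions are interpretable, but comparing vectors only yields a graded similarity: Amsterdam and Rotterdam score essentially 1.0, and Amsterdam and Paris about 0.5. Nothing in the encoding entails a new fact, which is the slide's point that interpretability alone is not semantics.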