This document describes the design and implementation of a trade finance application built on the Hyperledger Fabric permissioned blockchain platform. It discusses the architecture of blockchain-based applications in general and this trade finance application specifically. Key aspects covered include identifying different types of software connectors (linkage, arbitrator, event, adaptor) that are important building blocks in the architecture. The trade finance application uses connectors like the blockchain facade connector and block/transaction event connector to interface between layers and handle asynchronous event propagation. Overall the document aims to provide insights into architectural considerations and best practices for developing blockchain-based applications.

![The Design and Implementation of Trade Finance

Application based on Hyperledger Fabric

Permissioned Blockchain Platform

Nizamuddin Ariffin

Fintech & System Engineering

Mimos Berhad

Kuala Lumpur, Malaysia

nizamuddin.ariffin@mimos.my

Ahmad Zuhairi Ismail

Fintech & System Engineering

Mimos Berhad

Kuala Lumpur, Malaysia

ahmadz@mimos.my

Abstract—Blockchain has grown beyond cryptocurrency. It has found a sweet spot in applications that require increased trust and transparency among multi-party transactions. This paper describes our experience in the design, implementation and architecture of a blockchain-based trade finance application. The implementation is based on the permissioned blockchain Hyperledger Fabric. The number of projects embarking on blockchain applications has grown significantly over the past year. However, the current level of understanding of blockchain applications is insufficient, and the architectural aspects of such systems have remained largely unexplored. This paper attempts to address this problem. It applies the concept of software connectors as a medium to explore the fundamental building blocks of software interaction and how they are composed into more complex interactions.

Keywords—permissioned blockchain, software connector,

trade finance, smart contract, asynchronous

I. INTRODUCTION

Blockchain is an emerging technology that enables new forms of distributed software architectures, where components can reach agreement on their shared states for decentralized and transactional data sharing across a large network of untrusted participants, without relying on a central integration point that must be trusted by every component within the system. At the heart of its implementation is the classic state machine replication protocol [8]. What makes blockchain so appealing is that the network is robust and runs well even in the presence of malicious (Byzantine) nodes [9].

The blockchain data structure is a timestamped list of blocks, which records and aggregates data about all transactions that have ever occurred within the blockchain network. The blockchain thus provides immutable data storage: transactions can only be inserted, and existing transactions cannot be updated or deleted, which prevents tampering and revision. The whole network reaches consensus before a transaction is included in the immutable data storage, via mechanisms such as proof-of-work or proof-of-stake [7].
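The append-only, tamper-evident property described above can be illustrated with a minimal sketch. It assumes a simplified block layout (`index`, `timestamp`, `transactions`, `previousHash`, `hash`) chosen for illustration only; it is not the Hyperledger Fabric block format and it omits consensus entirely. The function names are hypothetical.

```javascript
const crypto = require('crypto');

// All-zero hash used as the "previous hash" of the first block (assumption).
const GENESIS_PREVIOUS_HASH = '0'.repeat(64);

// Hash a block's contents, including the hash of the previous block.
function hashBlock({ index, timestamp, transactions, previousHash }) {
  return crypto
    .createHash('sha256')
    .update(JSON.stringify({ index, timestamp, transactions, previousHash }))
    .digest('hex');
}

// Append a new block to the chain; existing blocks are never modified.
function appendBlock(chain, transactions, timestamp = Date.now()) {
  const previousHash = chain.length
    ? chain[chain.length - 1].hash
    : GENESIS_PREVIOUS_HASH;
  const block = { index: chain.length, timestamp, transactions, previousHash };
  block.hash = hashBlock(block);
  chain.push(block);
  return block;
}

// Recompute every hash and check every link between neighbouring blocks.
// Changing any earlier block breaks its own hash or the next block's link.
function isChainValid(chain) {
  return chain.every((block, i) => {
    const expectedPrevious = i === 0 ? GENESIS_PREVIOUS_HASH : chain[i - 1].hash;
    return block.previousHash === expectedPrevious
      && block.hash === hashBlock(block);
  });
}
```

Because each block's hash covers the previous block's hash, revising an old transaction would require recomputing every later block, which is what the consensus mechanism is meant to prevent.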

The first generation of blockchain is a public ledger for monetary transactions with very limited capability to support programmable transactions. A typical application is cryptocurrency [7], a digital currency based on a peer-to-peer network and cryptographic tools.

The second generation of blockchain became a generally programmable infrastructure with a public ledger that records computational results. Smart contracts [6] were introduced as autonomous programs that run across the blockchain network and can express triggers, conditions and business logic to enable complex programmable transactions.

The design of blockchain-based systems, however, has not yet been systematically explored, and there is little understanding of the impact of introducing a blockchain into a software architecture. In this paper, we discuss our experience drawn from applying blockchain to a trade finance prototype application. The prototype was implemented on an enterprise blockchain called Hyperledger Fabric.

The chief contribution of this paper is the identification of software connectors [10] in blockchain-based application architecture. The main goal of this identification is to provide deeper insight into what constitutes the fundamental building blocks of software interaction in a blockchain-based application. We use an extended example based on our experience to illustrate this. In large distributed systems, identifying the key software connectors has become increasingly significant, as they are a key determinant of non-functional system properties such as performance, scalability, resource utilization and reliability. We expect our experience to be useful to other software architects who aspire to construct similar enterprise blockchain-based applications in the future.

This paper proceeds by introducing blockchain and trade finance in Section II, followed by a discussion of application design and architecture with blockchain in Section III. Section IV discusses detailed application design from a software connector perspective. Section V enumerates the lessons learned from our experience. Section VI concludes the paper.

II. BLOCKCHAIN AND TRADE FINANCE MODEL

A. Disruptive Potential

Blockchain has the potential to disrupt traditional business models, as described by FinTech Futures [3]. It does so by eliminating the need for complex reconciliations or trusted centralized parties, as all parties in the network derive their position from a single source of

2019 International Seminar on Research of Information Technology and Intelligent Systems (ISRITI)

978-1-7281-4520-4/19/$31.00 ©2019 IEEE 488](https://image.slidesharecdn.com/thedesignandimplementationoftradefinanceapplicationbasedonhyperledgerfabricpermissionedblockchainpla-211217084801/75/The-design-and-implementation-of-trade-finance-application-based-on-hyperledger-fabric-permissioned-blockchain-platform-1-2048.jpg)

![truth, mutually agreed by the members in the network and

all information is available in real-time.

B. Conventional Trade Finance

In the conventional system, multiparty transactions require third-party intermediaries. The intermediaries that facilitate payment are the respective banks of the exporter and the importer. In this case, the trade arrangement is fulfilled through the trusted relationships between a bank and its client, and between the two banks. Such banks typically have international connections and reputations to maintain. Therefore, a commitment (or promise) by the importer's bank to make a payment to the exporter's bank is sufficient to trigger the process. The goods are dispatched by the exporter through a reputable international carrier after obtaining regulatory clearances from the exporting country's government. Proof of delivery to the carrier is sufficient to clear payment from the importer's bank to the exporter's bank, and such clearance is not contingent on the goods reaching their intended destination (it is assumed that the goods are insured against loss or damage in transit).

Traditional trade finance relies constantly on purely documentary evidence and suffers greatly from inherent inefficiencies in its processes. The risks inherent in transferring goods or making payments in the absence of trusted mediators inspired the involvement of banks and led to the creation of the letter of credit and the bill of lading.

Fig. 1. Conventional Trade Finance Model

C. Blockchain-based Trade Finance

In an ideal trade scenario, only the process of preparing and shipping the goods would take time. A blockchain, with its fast transaction commitments and assurance guarantees, opens possibilities that did not previously exist. As an example, we can introduce payment by instalments, which cannot be implemented in the conventional trade finance framework because there is no guaranteed way of knowing and sharing information about a shipment's progress. Such a variation would be deemed too risky, which is why payments are linked purely to documentary evidence. By getting all participants in a trade agreement on a single blockchain implementing a common smart contract, we can provide a single shared source of truth that minimizes risk and simultaneously increases accountability.

Another key advantage is the increased trust in trade finance processes, as all relevant documentary evidence, e.g. letters of credit and invoices, is stored on the blockchain. Hence, it is clearly visible to the network participants, and whatever has been recorded is irreversible [2].

Fig. 2. Blockchain-based Trade Finance Model

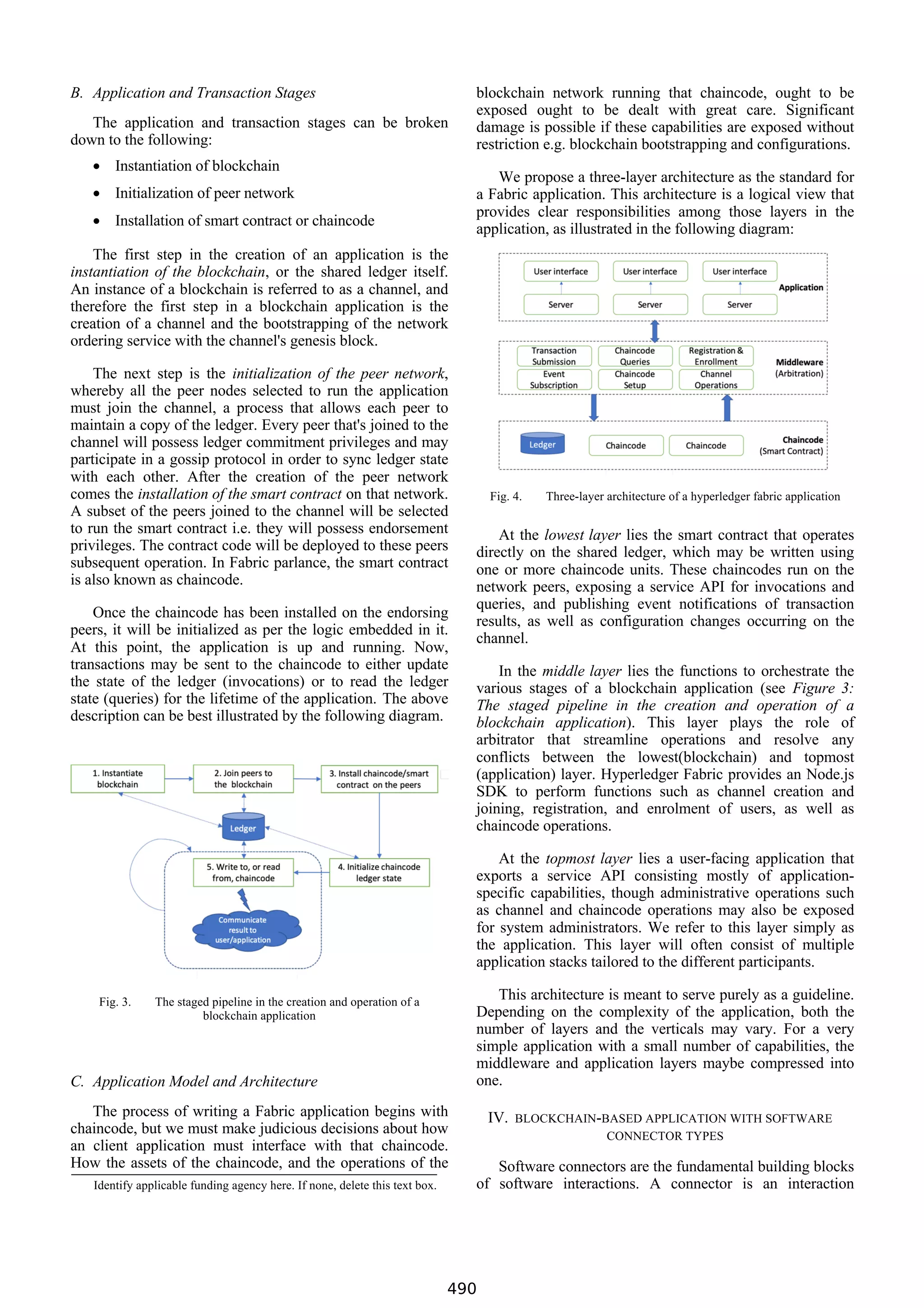

III. APPLICATION ARCHITECTURE

Designing a system based on blockchain poses many challenges, as designers may face difficulties due to the many variants and configurations the technology offers [5]. Apart from implementation choices, performance is another area that must be carefully considered. Since blockchain requires consensus on the agreed state among multiple participants for every transaction, state-changing transactions may not perform as well as similar transactions in a traditional database.

Xiwei Xu et al. have discussed the detailed architecture of blockchain as a software connector [4]. This paper discusses the overall blockchain-based application architecture from a software connector perspective. The discussion focuses primarily on the following connector types: arbitrator, linkage, event, and adaptor [10].

A. The Nature of Hyperledger Fabric Application

Hyperledger Fabric can be viewed as a distributed

transaction processing system, with a staged pipeline of

operations that may eventually result in a change to the state

of the shared replicated ledger maintained by the network

peers. A blockchain application is a collection of processes

through which a user may submit transactions to, or read

state from, a smart contract. Under the hood, a user request is

channeled into the different stages of the transaction pipeline

and results will be extracted to provide feedback at the end of

the process.

An application developed with the smart contract at its core can be viewed as a transaction-processing database application with a set of views or a service API. However, every Hyperledger Fabric transaction is asynchronous, i.e. the result of the transaction will not be available in the same communication session in which it was submitted. This is because a transaction must go through consensus, which requires collective approval by the peers in the network and may take an unbounded amount of time to complete.
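The practical consequence of this asynchrony can be sketched with a small in-memory stand-in. `SimulatedLedger`, `submit` and `commitPending` are hypothetical names invented for illustration; they are not part of the Hyperledger Fabric SDK, and `commitPending` merely stands in for the ordering and validation that a real network performs later.

```javascript
const { EventEmitter } = require('events');

// Hypothetical in-memory stand-in for a ledger; not the Fabric SDK.
class SimulatedLedger extends EventEmitter {
  constructor() {
    super();
    this.pending = [];      // submitted but not yet committed
    this.state = new Map(); // committed key/value state
    this.nextId = 1;
  }

  // Submission returns only a transaction ID. The outcome is unknown
  // at this point and the state has not changed.
  submit(key, value) {
    const txId = `tx-${this.nextId++}`;
    this.pending.push({ txId, key, value });
    return txId;
  }

  // Stands in for consensus finishing at some later time: state is
  // updated and the result is announced through an event.
  commitPending() {
    for (const { txId, key, value } of this.pending.splice(0)) {
      this.state.set(key, value);
      this.emit('committed', { txId, key, value });
    }
  }
}
```

A caller therefore cannot read the result from the return value of `submit`; it has to subscribe to the `committed` event and correlate it with the transaction ID it received.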

489](https://image.slidesharecdn.com/thedesignandimplementationoftradefinanceapplicationbasedonhyperledgerfabricpermissionedblockchainpla-211217084801/75/The-design-and-implementation-of-trade-finance-application-based-on-hyperledger-fabric-permissioned-blockchain-platform-2-2048.jpg)

![mechanism for the components. Connectors include pipes,

repositories, and sockets. For example, middleware can be

viewed as a connector between the components that use the

middleware [6]. Connectors in distributed systems are the

key elements to achieve system properties, such as

performance, reliability, security, etc. Connectors provide

interaction services, which are largely independent of the

functionality of the interacting components [11].

The connectors used can be divided into two kinds: static and dynamic. Static connectors tie system components together at compile time and hold them in that state. In contrast, dynamic connectors allow interactions between system components to be established at runtime.

We have identified a number of connectors that were applied in the application architecture. These key connectors play significant roles and capture the important interactions within the architecture. A summary of each connector type, its dimensions and its values is shown in the table below.

TABLE I. BLOCKCHAIN FAÇADE CONNECTOR

| Connector Type | Dimension | Value |
| --- | --- | --- |
| Arbitrator | Concurrency Weight | Heavy |
| Arbitrator | Fault Handling | Authoritative |
| Arbitrator | Authorization | Access Control List |
| Adaptor | Invocation Conversion | Translation |
| Adaptor | Invocation Conversion | Marshalling |
| Linkage | Binding | Compile-time |
| Event | Synchronicity | Asynchronous |
| Event | Notification | Publish/subscribe |

Each connector type and its role is described in more detail in the subsequent sections.

A. Linkage Connector

Linkage connectors are used to tie the system

components together and enable the establishment of the

channel of communication and coordination. They do not

necessarily contribute towards enhancing the system but

merely serve to monitor, grow and repair the system. In our

scenario, linkage connectors are used to describe the static

dependency relationship among software modules as shown

below.

Fig. 5. Linkage connector showing module dependencies

B. Blockchain Façade Connector

The Blockchain Façade Connector is a commonly used abstraction that allows an application system to access back-end systems, i.e. the blockchain system in our case. It acts as a façade for accessing the blockchain services. It dispatches incoming calls for blockchain system operations; the actual behaviour of this connector depends on the calling application and the requested operations. The façade connector implements the middleware functionality shown in Figure 4. The façade is composed of the routes API connector, the application API connector and the blockchain API connector, as illustrated in Figure 6 below.

Fig. 6. Blockchain façade connector

The façade connector is a high-order connector that

provides rich interaction among its contained connectors.

The asynchronous nature of ledger-update transactions of

the blockchain requires an arbitration layer between the

chaincode and the client application. The façade connector

performs arbitration of interaction between the client

application and the chaincode and adaptation by dispatching

calls to routes API, application API and finally the

blockchain API.
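The dispatch chain described above (routes API, then application API, then blockchain API) can be sketched as three nested layers. This is a simplified illustration under stated assumptions: the route names, the `requestTrade` operation and the array used as a ledger are all hypothetical, and the real connectors would call the Fabric SDK and handle results asynchronously.

```javascript
// Innermost layer: the only code that talks to the blockchain.
// `invoke` and `query` are hypothetical stand-ins for SDK calls; the
// ledger here is a plain array so that the sketch is self-contained.
function createBlockchainApi(ledger) {
  return {
    invoke(fn, args) {
      ledger.push({ fn, args });
      return { txId: `tx-${ledger.length}` };
    },
    query(fn) {
      return ledger.filter((entry) => entry.fn === fn);
    },
  };
}

// Middle layer: application-level operations. It marshals typed values
// into the string arguments passed to the layer below, and back again.
function createApplicationApi(blockchainApi) {
  return {
    requestTrade(tradeId, amount) {
      return blockchainApi.invoke('requestTrade', [tradeId, String(amount)]);
    },
    listTradeRequests() {
      return blockchainApi.query('requestTrade')
        .map((entry) => ({ tradeId: entry.args[0], amount: Number(entry.args[1]) }));
    },
  };
}

// Outer layer: translates incoming routes into application operations.
function createRoutesApi(applicationApi) {
  const routes = new Map([
    ['POST /trades', (body) => applicationApi.requestTrade(body.tradeId, body.amount)],
    ['GET /trades', () => applicationApi.listTradeRequests()],
  ]);
  return {
    dispatch(route, body) {
      const handler = routes.get(route);
      if (!handler) throw new Error(`unknown route: ${route}`);
      return handler(body);
    },
  };
}

// The facade is the composition of the three layers.
function createFacade(ledger = []) {
  return createRoutesApi(createApplicationApi(createBlockchainApi(ledger)));
}
```

With this layering, a client only ever sees routes such as `POST /trades`; the translation and marshalling steps listed in Table I happen inside the façade.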

In essence, the façade connector also serves to hide as much complexity as possible, allowing the client application to focus on transactions that affect the application rather than on the details of the back-end blockchain operations. Consider the complexity of a client application invoking a chaincode operation that updates the state of the ledger. As discussed earlier, an operation that changes the state of the ledger requires consensus. Since consensus may take an unbounded amount of time, the result of the operation is communicated back to the client asynchronously via event subscription.

491](https://image.slidesharecdn.com/thedesignandimplementationoftradefinanceapplicationbasedonhyperledgerfabricpermissionedblockchainpla-211217084801/75/The-design-and-implementation-of-trade-finance-application-based-on-hyperledger-fabric-permissioned-blockchain-platform-4-2048.jpg)

![C. Block and Transaction Event Connector

All state-changing operations on the ledger are asynchronous. As a result, the block and transaction event connector plays a significant role in allowing the result of a chaincode invocation to be communicated to the client application. The event connector can generate two types of events: block events and transaction events. Once the event connector learns of the occurrence of an event, it generates messages for all interested parties and yields control to the components that process the events. The event contains information such as the block ID, block number, transaction ID and timestamp.

We set up listeners to receive block and transaction events from the event connector as shown below:

    var eventPromises = [];
    eventhubs.forEach((eh) => {
      let txPromise = new Promise((resolve, reject) => {
        let handle = setTimeout(reject, 40000);
        // Registering block event listener
        …
        // Registering transaction event listener
        …
      });
      eventPromises.push(txPromise);
    });
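The listener pattern above can be made self-contained with a stub in place of the real event hub. The sketch below is an assumption-laden illustration: `StubEventHub` and `waitForCommit` are hypothetical names, the stub mimics only a transaction-event registration call, and the `'VALID'` status string follows the paper's later snippet.

```javascript
const { EventEmitter } = require('events');

// Hypothetical stand-in for an SDK event hub. It mimics only the
// registration call used below and is not the Fabric client API.
class StubEventHub extends EventEmitter {
  registerTxEvent(txId, callback) {
    this.on(`tx:${txId}`, callback);
  }
}

// Create one promise per event hub. Each promise resolves when the hub
// reports the transaction as VALID, and rejects on any other status
// code or when no event arrives within `timeoutMs`.
function waitForCommit(eventhubs, txId, timeoutMs = 40000) {
  const eventPromises = eventhubs.map((eh) => new Promise((resolve, reject) => {
    const handle = setTimeout(
      () => reject(new Error(`timed out waiting for ${txId}`)),
      timeoutMs,
    );
    eh.registerTxEvent(txId, (tx, code) => {
      clearTimeout(handle); // an event arrived, so cancel the timeout
      if (code !== 'VALID') {
        reject(new Error(`transaction ${tx} rejected: ${code}`));
      } else {
        resolve(tx);
      }
    });
  }));
  // The combined promise settles once every hub has reported.
  return Promise.all(eventPromises);
}
```

Collecting the per-hub promises and combining them with `Promise.all` means the caller treats the transaction as committed only when every subscribed hub has confirmed it, and a single invalid status or timeout fails the whole wait.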

D. Event Adaptor Connector

The event details and the communication channel abstraction used to transmit the events determine the type of adaptation required at each layer. The blockchain API connector uses a different communication channel to transmit events than the application API connector. For example, the blockchain API connector uses an abstraction called EventEmitter to transmit events. In contrast, the application API connector uses a WebSocket to transmit events to the client application.

Blockchain events are very raw, and some of their details may not be required by the next listener down the chain. Hence, adaptation is required to filter out some of these details. As shown in Fig. 6, the façade connector provides two event adaptors. The first is the blockchain event adaptor, which receives raw blockchain events such as transaction and block events. These events are retransmitted to the application event connector through an EventEmitter object. Subsequently, the application event connector adapts them further by transmitting the event details through a WebSocket to the client application.
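The two-stage adaptation can be sketched as follows. This is only an illustration under assumptions: the raw block shape (`header`, `data`, `metadata`), the `adaptBlock` filter and the `send` callback standing in for a WebSocket are all hypothetical, and serializing to JSON in the second stage is a choice made for this sketch.

```javascript
const { EventEmitter } = require('events');

// First channel: an in-process emitter between the blockchain API
// layer and the application API layer.
const adaptedEvents = new EventEmitter();

// Keep only the details the next listener needs. The raw block shape
// used here is an assumption made for illustration.
function adaptBlock(rawBlock) {
  return {
    number: rawBlock.header.number,
    txIds: rawBlock.data.map((tx) => tx.txId),
  };
}

// Blockchain event adaptor: receives a raw block event, filters it and
// retransmits it on the in-process channel.
function onRawBlock(rawBlock) {
  adaptedEvents.emit('block', adaptBlock(rawBlock));
}

// Application event adaptor: forwards adapted events to the client.
// `send` stands in for a WebSocket emit function.
function attachClient(send) {
  adaptedEvents.on('block', (block) => send('block', JSON.stringify(block)));
}
```

Each layer thus sees only the channel and the event shape appropriate to it: the blockchain layer deals with raw blocks, the application layer with filtered objects, and the client with serialized messages.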

The first step in the adaptation model is to provide the EventEmitter as the first communication channel and to simplify the API that listeners use to listen for events, as shown in the code snippet below:

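    const EventEmitter = require('events')

    var blockchainEvents = new EventEmitter()
    // Bind the methods so they can be called without the emitter object
    var on = blockchainEvents.on.bind(blockchainEvents)     // (1)
    var emit = blockchainEvents.emit.bind(blockchainEvents) // (2)
    module.exports.on = on
    module.exports.emit = emit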

For ease of use, we simplified the API for listeners by hiding the type of communication channel used to transmit the event, as shown in (1) and (2). For example, instead of calling ClientUtils.blockchainEvents.emit(), the client can simply call ClientUtils.emit(). Next, we set up the blockchain event adaptor to receive events from the blockchain and unmarshal the data associated with each event for the application API connector listener. The unmarshalling is part of the connector's adaptation process. In this scenario, the block data is unmarshalled (a block object is created) from the byte streams passed down by the blockchain. The unmarshalling process is shown in (3).

Both block events and transaction events can occur. Registering a listener (callback) for newly created block events is shown below:

    const blockEventCb = (block) => {
      // An event arrived, so cancel the pending timeout
      clearTimeout(handle)
      ClientUtils.emit('block', ClientUtils.unmarshall(block)) // (3)
    }
    eh.registerBlockEvent(blockEventCb)

Similarly, we can register a callback for listening to transaction events:

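    const transactionEventCb = (data, code) => {
      clearTimeout(handle)
      if (code !== 'VALID') {
        // The transaction was not committed to the ledger
        reject()
      } else {
        ClientUtils.emit('tx', data)
        resolve()
      }
    }
    // txId is assumed here to hold the ID of the submitted transaction
    eh.registerTxEvent(txId, transactionEventCb)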

The parameters passed to the listener include a handle to the transaction and a status code, which can be checked to see whether the chaincode invocation result was successfully committed to the ledger. Once the event has been received, the event listener is unregistered to free up system resources. Finally, the application event adaptor receives events from the application API connector and adapts them further by transmitting them as-is through a WebSocket to the client application:

    const blockEventCb = (block) => {
      websocket.emit('block', block)
    }
    const txEventCb = (transaction) => {
      websocket.emit('tx', transaction)
    }
    // Retransmit events to the client application after receiving
    // them from the application event connector

492](https://image.slidesharecdn.com/thedesignandimplementationoftradefinanceapplicationbasedonhyperledgerfabricpermissionedblockchainpla-211217084801/75/The-design-and-implementation-of-trade-finance-application-based-on-hyperledger-fabric-permissioned-blockchain-platform-5-2048.jpg)

![ClientUtils.on('block', blockEventCb)

ClientUtils.on('tx', txEventCb)

Both block and transaction events can be consumed directly by client applications running in a browser or on a mobile device.

V. DISCUSSION AND CONCLUSION

We hope that the software connectors discussed here will help other software architecture practitioners recognize the challenges that may surface when building blockchain-based applications of this scale. We do not claim that our treatment of software connectors is exhaustive. Other connectors may yet be discovered and shed new light on the architecture.

Many issues remain for future work. We intend to investigate non-functional system properties such as performance and scalability. Performance in particular remains an elusive property of blockchain networks. In an effort to achieve better performance, Hyperledger Fabric takes the extreme approach of using orderer nodes to create new blocks, leaving out decentralized consensus. We have yet to conduct any real performance tests to measure the performance characteristics of this approach. This is something we intend to do in the near future.

We believe that the identification of primitive building

blocks of software connectors and the comprehensive

discussion of the application of those connectors in

blockchain-based application architecture in this paper form

the necessary foundation for building similar architecture in

the future.

ACKNOWLEDGMENT

The authors would like to thank MIMOS Berhad for allowing them to carry out this research. The views and conclusions contained herein are those of the authors and should not be interpreted as necessarily representing the official policies or endorsement, either expressed or implied, of MIMOS Berhad.

REFERENCES

[1] E. Androulaki et al., “Hyperledger Fabric: A Distributed Operating System for Permissioned Blockchains,” EuroSys 2018.

[2] A. V. Baguscharkov, I. E. Pokamestove, K. R. Adamova and Zh. N. Tropina: Adoption of Blockchain Technology in Trade Finance, Nov 2018

[3] FinTech Futures, Why blockchain could revolutionise trade finance documentation, June 2018 (on web page https://www.fintechfutures.com/2018/06/why-blockchain-could-revolutionise-trade-finance-documentation/ )

[4] Xiwei Xu, Cesare Pautasso, Liming Zhu, Vincent Gramoli, Alexander Ponomarev, Shiping Chen: The Blockchain as a Software Connector, 2016

[5] Xiwei Xu, Ingo Weber, Liming Zhu, Jan Bosch, Cesare Pautasso, Paul Rimba: A Taxonomy of Blockchain-Based Systems for Architecture Design, April 2017

[6] S. Omohundro. Cryptocurrencies, smart contracts, and artificial intelligence. AI Matters, 1(2):19–21, Dec. 2014.

[7] M. Swan. Blockchain: Blueprint for a New Economy. O’Reilly, US,

[8] Fred B. Schneider, “Implementing Fault-Tolerant Services Using the State Machine Approach: A Tutorial,” ACM Computing Surveys, vol. 22, issue 4, Dec. 1990, pp. 299-319.

[9] Leslie Lamport, Robert Shostak and Marshall Pease, “The Byzantine Generals Problem,” ACM Transactions on Programming Languages and Systems, vol. 4, July 1982.

[10] Nikunj R. Mehta, Nenad Medvidovic, Sandeep Phadke: Towards a Taxonomy of Software Connectors, 2000

[11] R. N. Taylor, N. Medvidovic, and E. M. Dashofy: Software Architecture: Foundations, Theory, and Practice. Wiley, 2009

493](https://image.slidesharecdn.com/thedesignandimplementationoftradefinanceapplicationbasedonhyperledgerfabricpermissionedblockchainpla-211217084801/75/The-design-and-implementation-of-trade-finance-application-based-on-hyperledger-fabric-permissioned-blockchain-platform-6-2048.jpg)