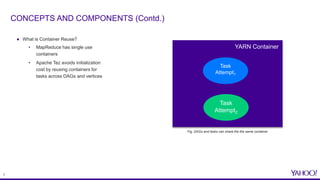

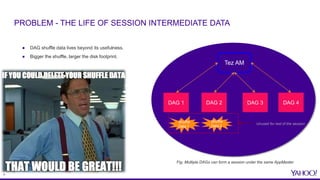

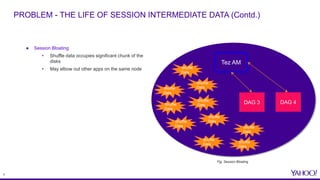

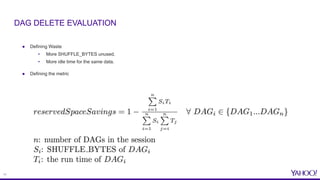

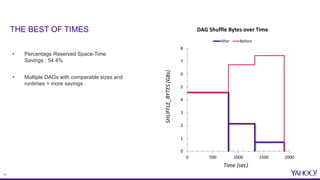

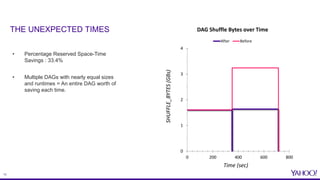

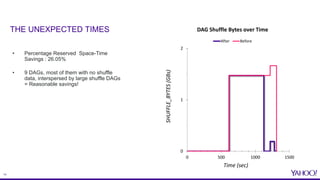

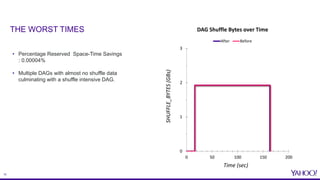

The document describes 'dag delete' in Apache Tez, a mechanism that improves reserved space utilization by managing intermediate shuffle data across the multiple DAGs that run within a single session. Key features include container reuse, which reduces initialization costs, and a customizable deletion policy for optimizing resource usage. The evaluation reports significant percentage savings in reserved space-time, especially in shuffle-heavy sessions, which leads to better performance and better use of disk space.
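
To make the reserved space-time metric concrete, the following is a minimal sketch, not Tez code. It assumes that reserved space-time is the amount of shuffle data held on disk multiplied by how long it is held, and that each DAG's data is reserved from the moment the DAG starts. The `DagRun` type, the function names, and the sample numbers are all hypothetical and chosen only for illustration.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class DagRun:
    """Hypothetical record of one DAG in a session (times in seconds)."""
    start: float
    end: float
    shuffle_gb: float  # intermediate shuffle data written by this DAG


def space_time_session_end(dags: List[DagRun], session_end: float) -> float:
    """GB-seconds reserved if shuffle data is kept until the session ends."""
    return sum(d.shuffle_gb * (session_end - d.start) for d in dags)


def space_time_dag_delete(dags: List[DagRun]) -> float:
    """GB-seconds reserved if each DAG's data is deleted when that DAG ends."""
    return sum(d.shuffle_gb * (d.end - d.start) for d in dags)


def percent_savings(baseline: float, improved: float) -> float:
    """Percentage reduction of reserved space-time relative to the baseline."""
    return 100.0 * (baseline - improved) / baseline


# Illustrative session: three back-to-back DAGs, 10 GB of shuffle data each.
dags = [DagRun(0, 100, 10), DagRun(100, 200, 10), DagRun(200, 300, 10)]
baseline = space_time_session_end(dags, session_end=300)  # 6000 GB-seconds
improved = space_time_dag_delete(dags)                    # 3000 GB-seconds
savings = percent_savings(baseline, improved)             # 50.0
```

Under these assumptions, the earlier a DAG finishes relative to the end of the session, the more space-time its deletion recovers, which is consistent with the larger savings reported for shuffle-heavy sessions.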