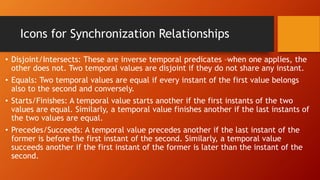

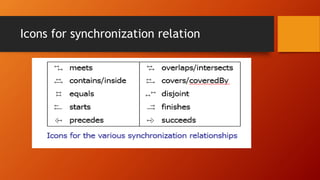

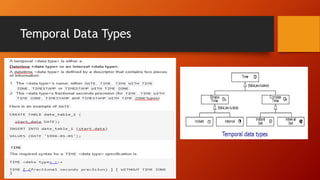

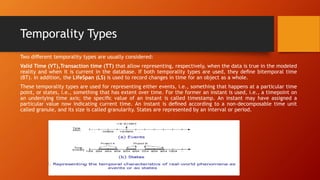

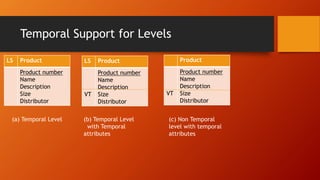

The document discusses temporal data warehousing and databases. A temporal data warehouse stores historical information from multiple sources and allows querying past data to identify trends. It contains atomic and summarized data relevant to specific time periods. Temporal data has applications in domains like banking, retail, and healthcare. A temporal database supports handling data involving time through features like valid time and transaction time. It stores facts that were true at different points in time rather than just the current time.