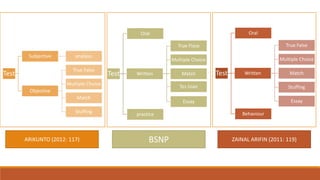

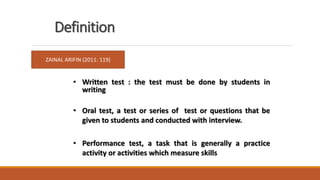

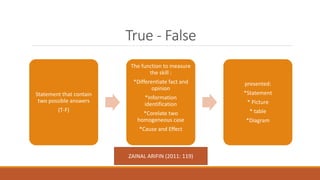

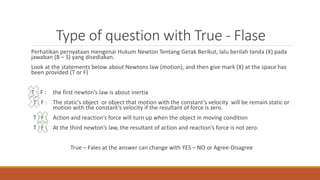

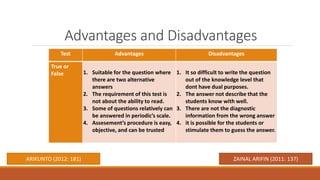

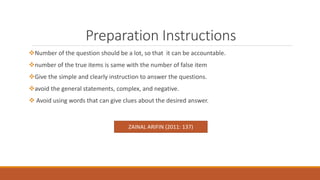

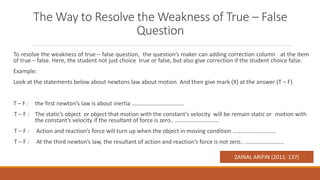

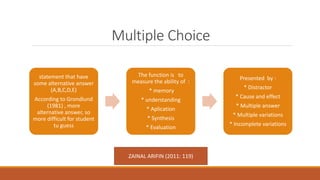

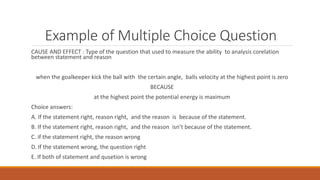

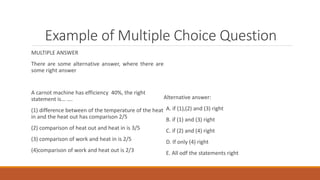

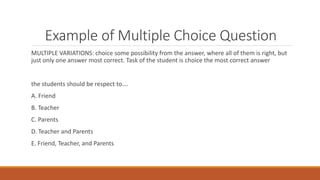

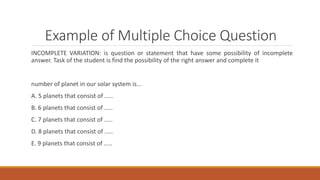

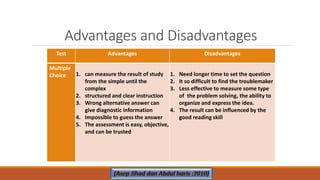

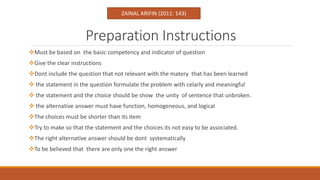

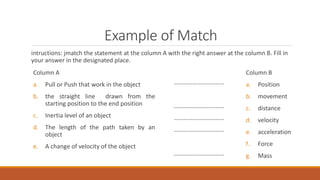

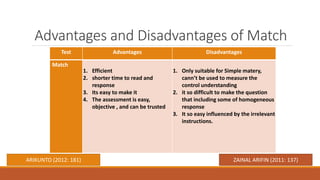

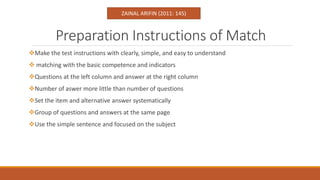

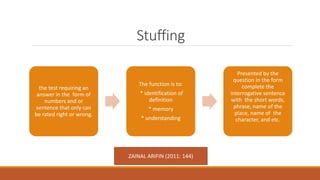

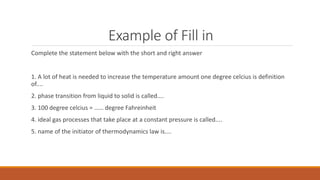

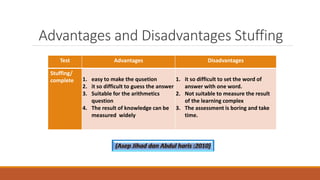

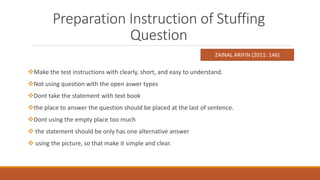

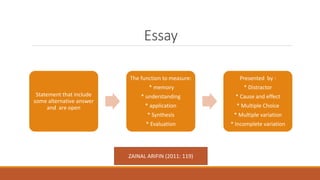

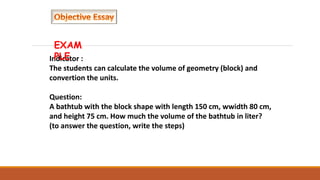

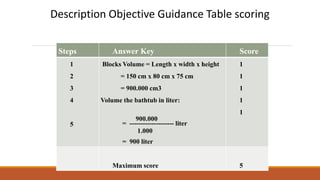

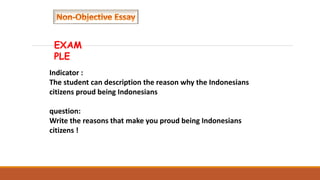

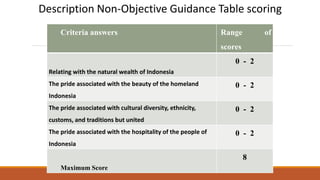

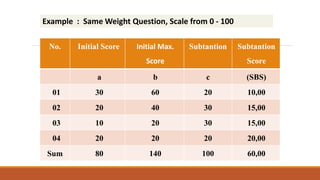

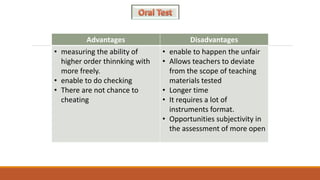

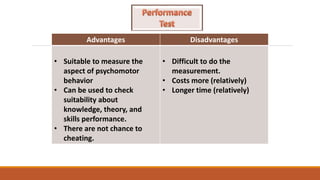

The document discusses the types of test items used to measure student achievement, including true-false, multiple-choice, and matching questions. It presents definitions of a test from several authors, explains the purpose and proper construction of each question type, and outlines the advantages and disadvantages of each. It also gives guidance on writing clear instructions, aligning questions with learning objectives, and developing distractors or response options that effectively measure student skills and knowledge.