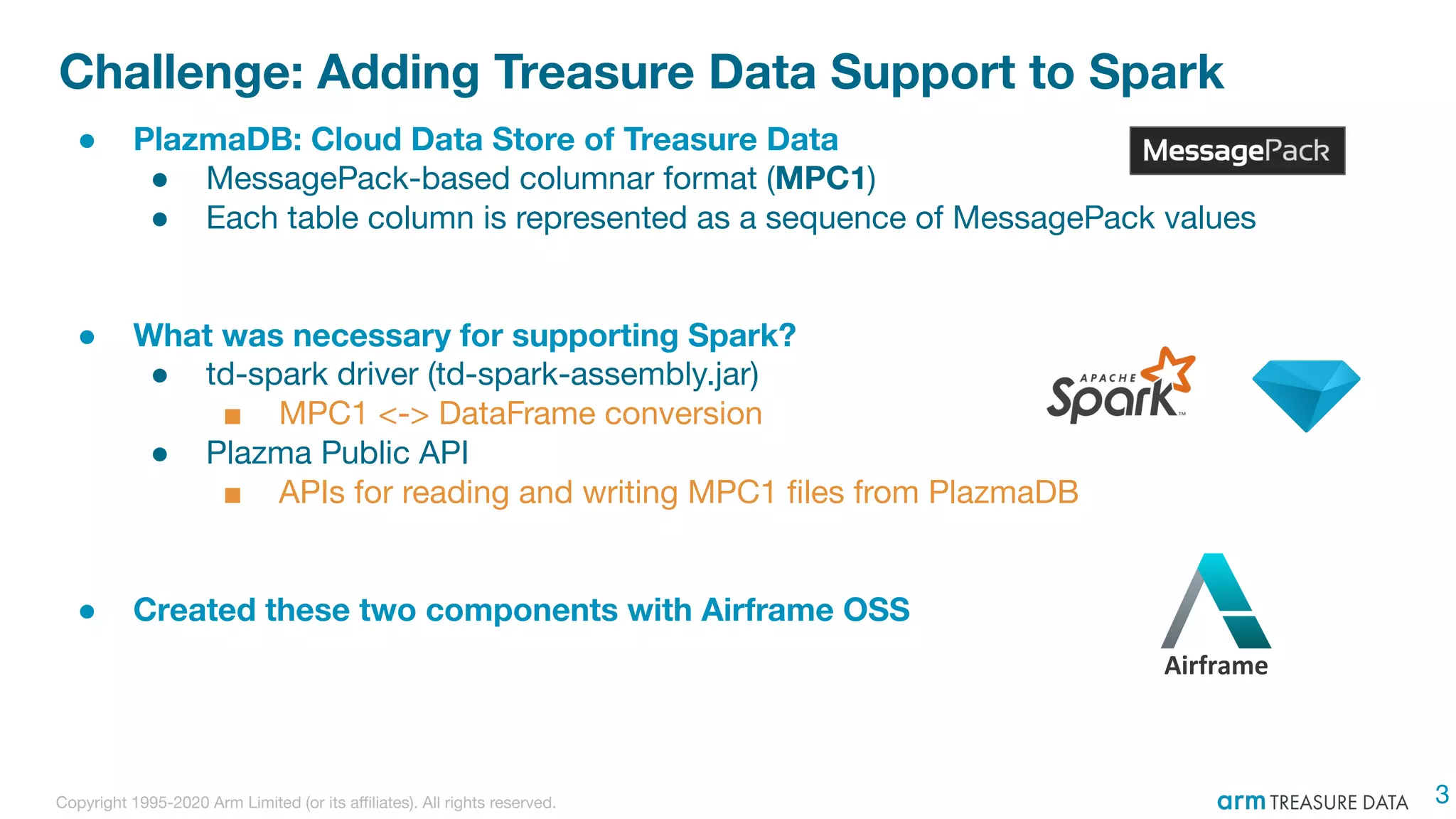

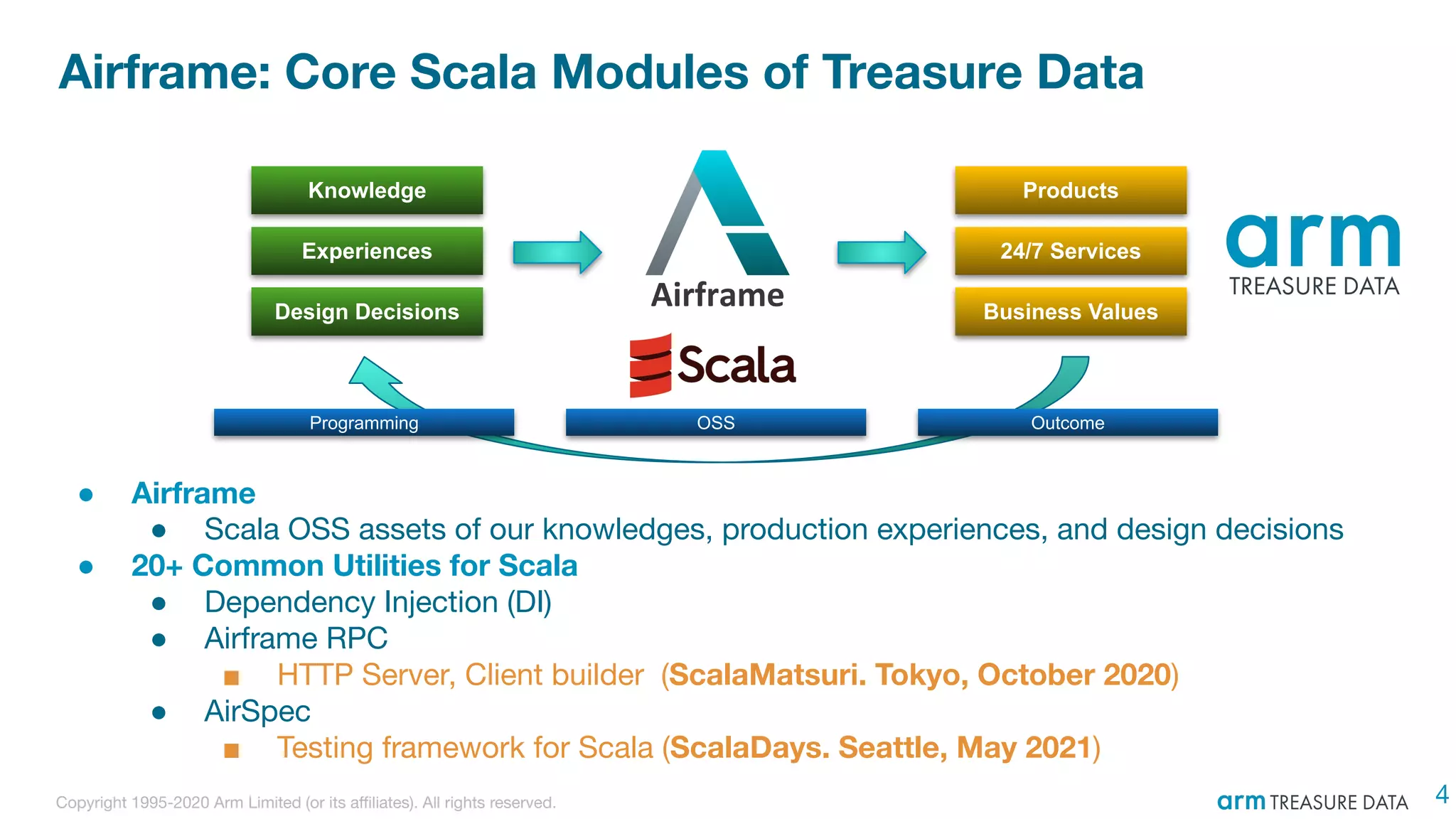

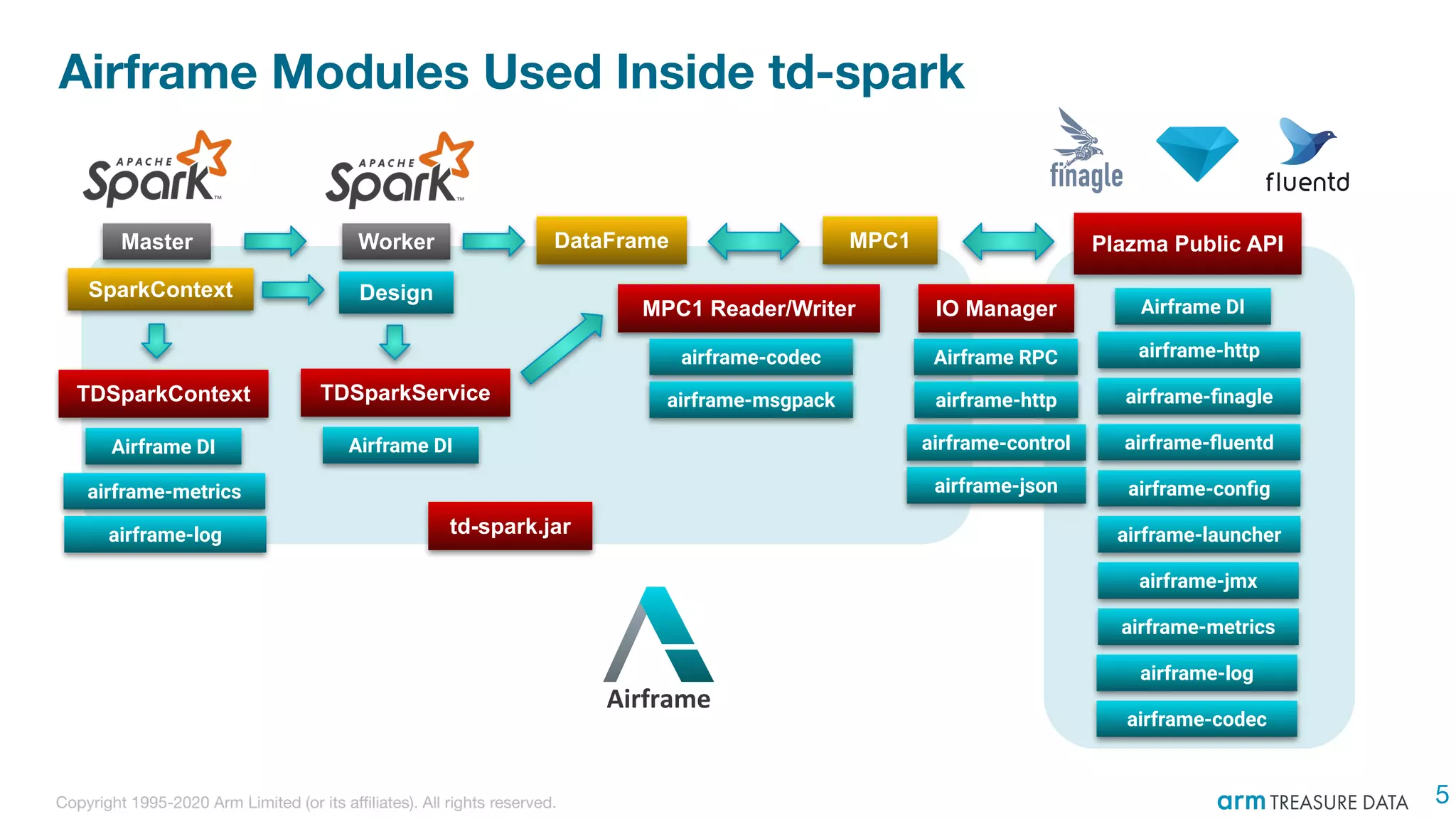

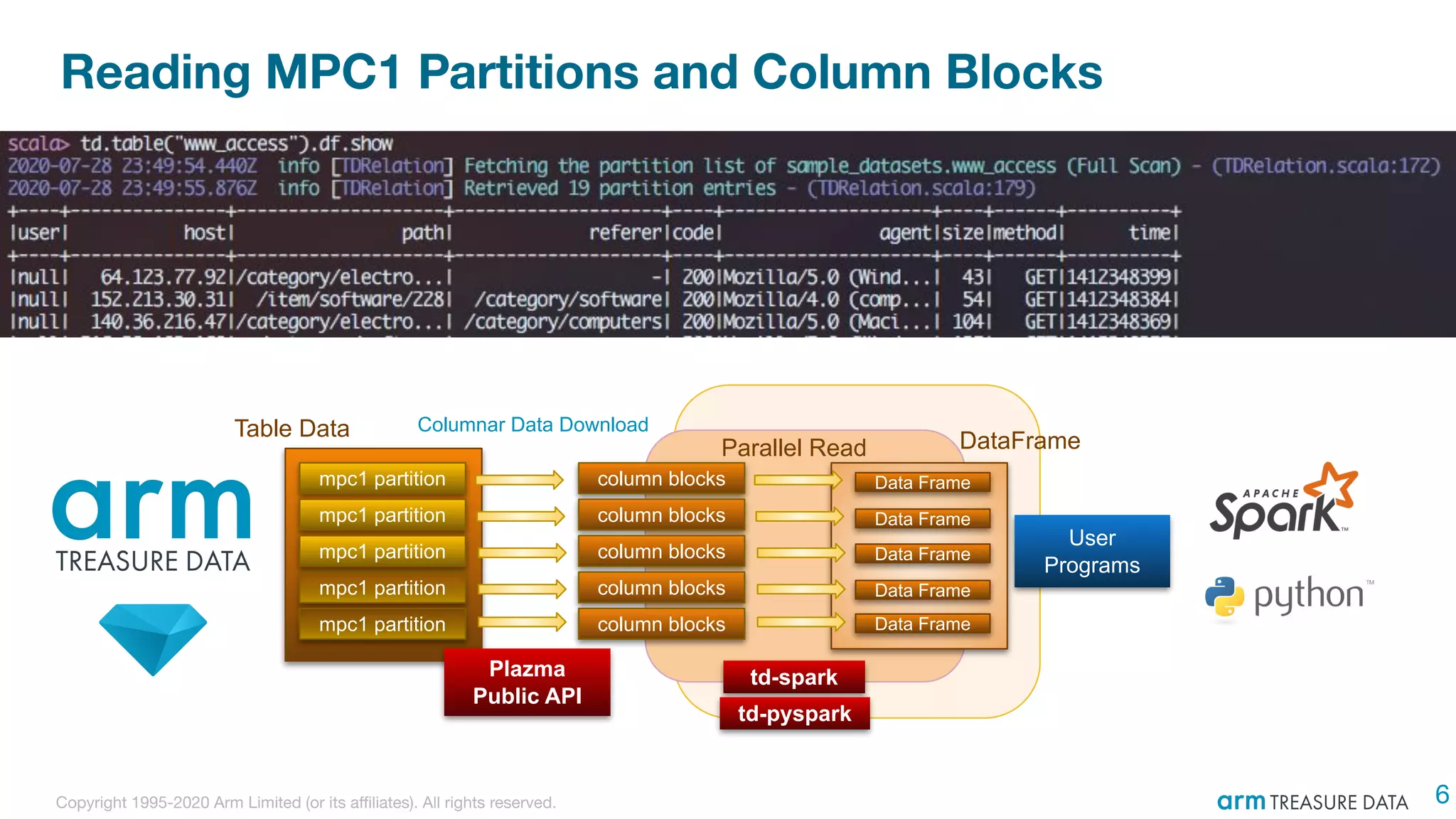

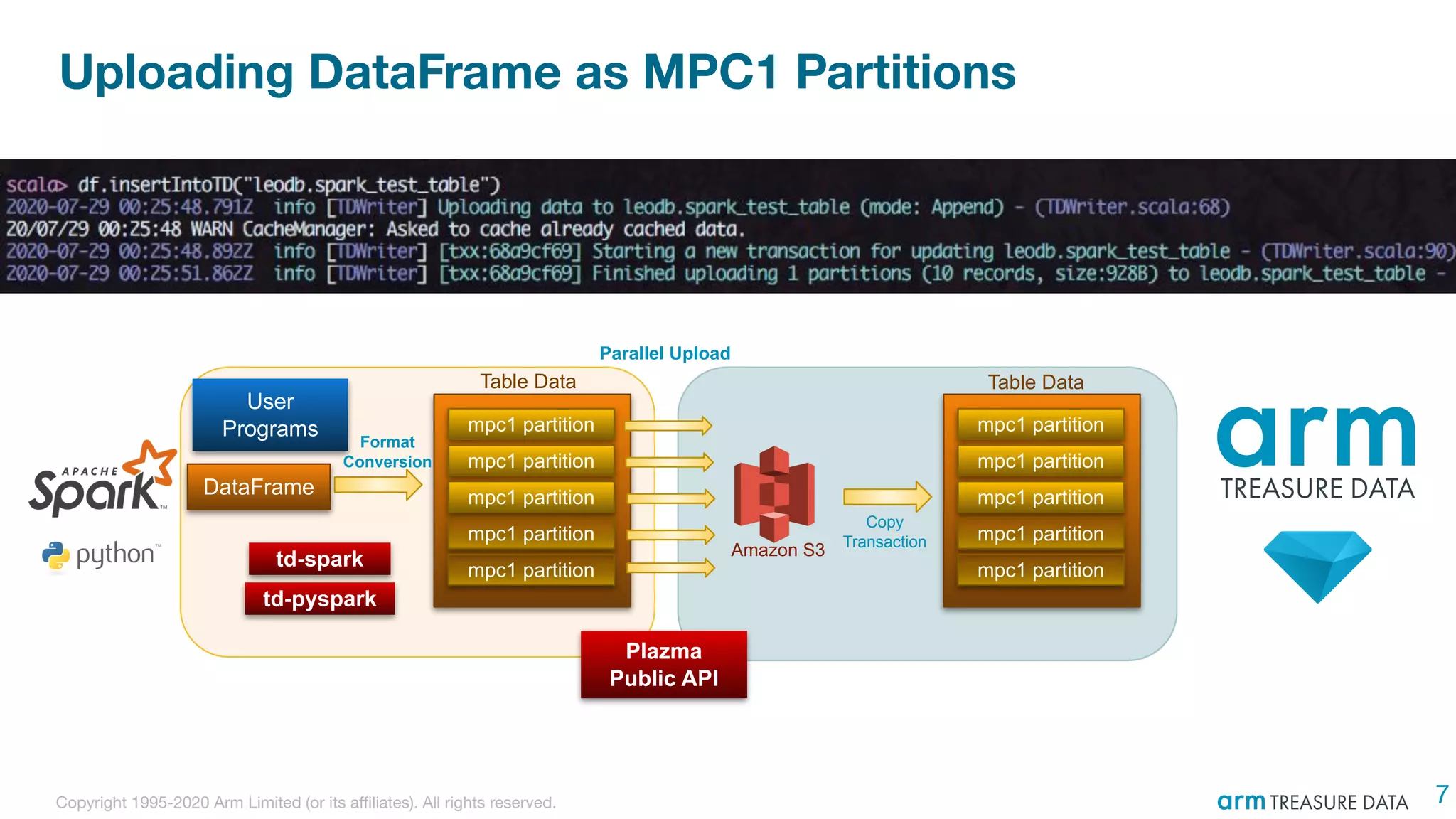

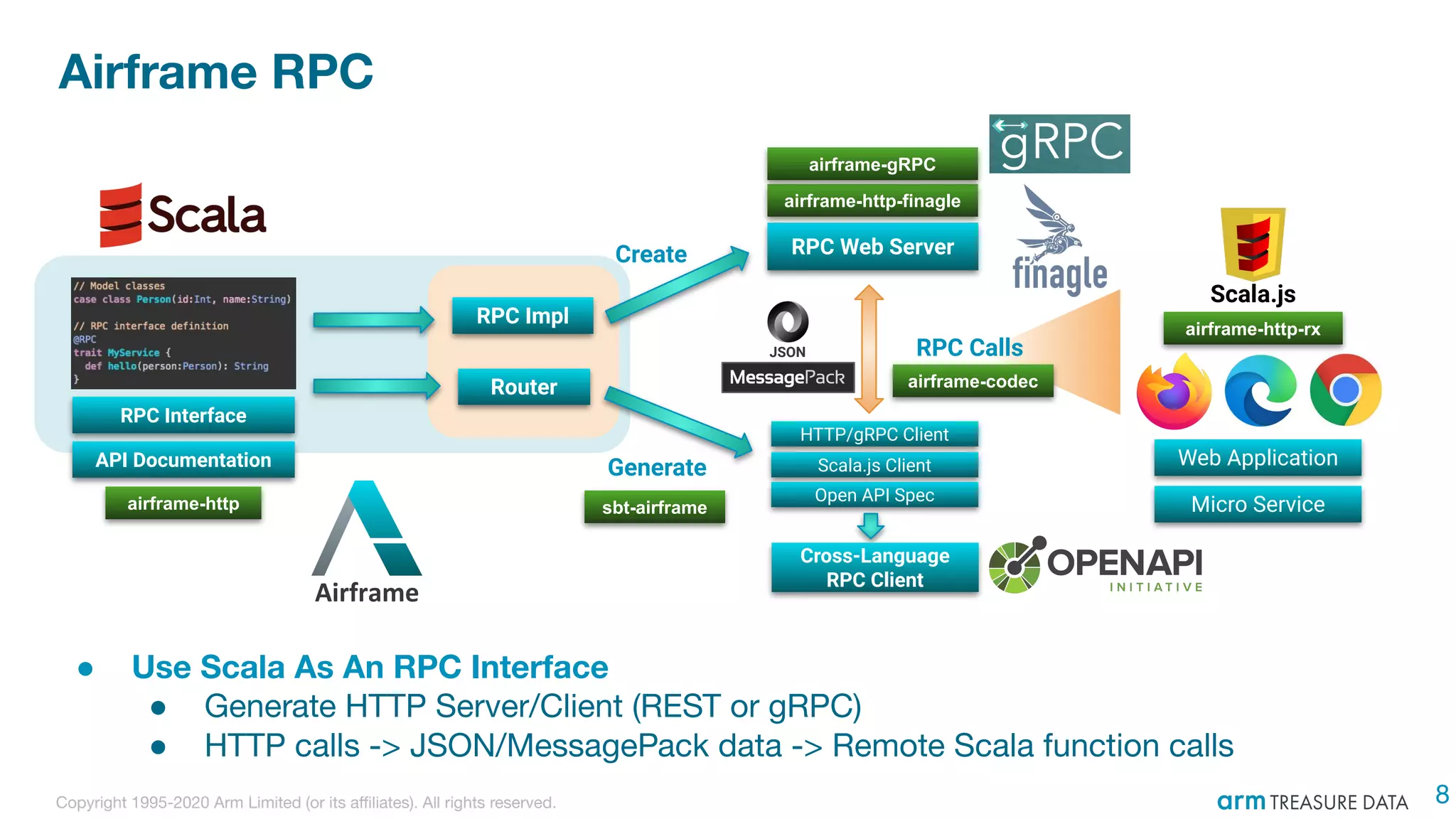

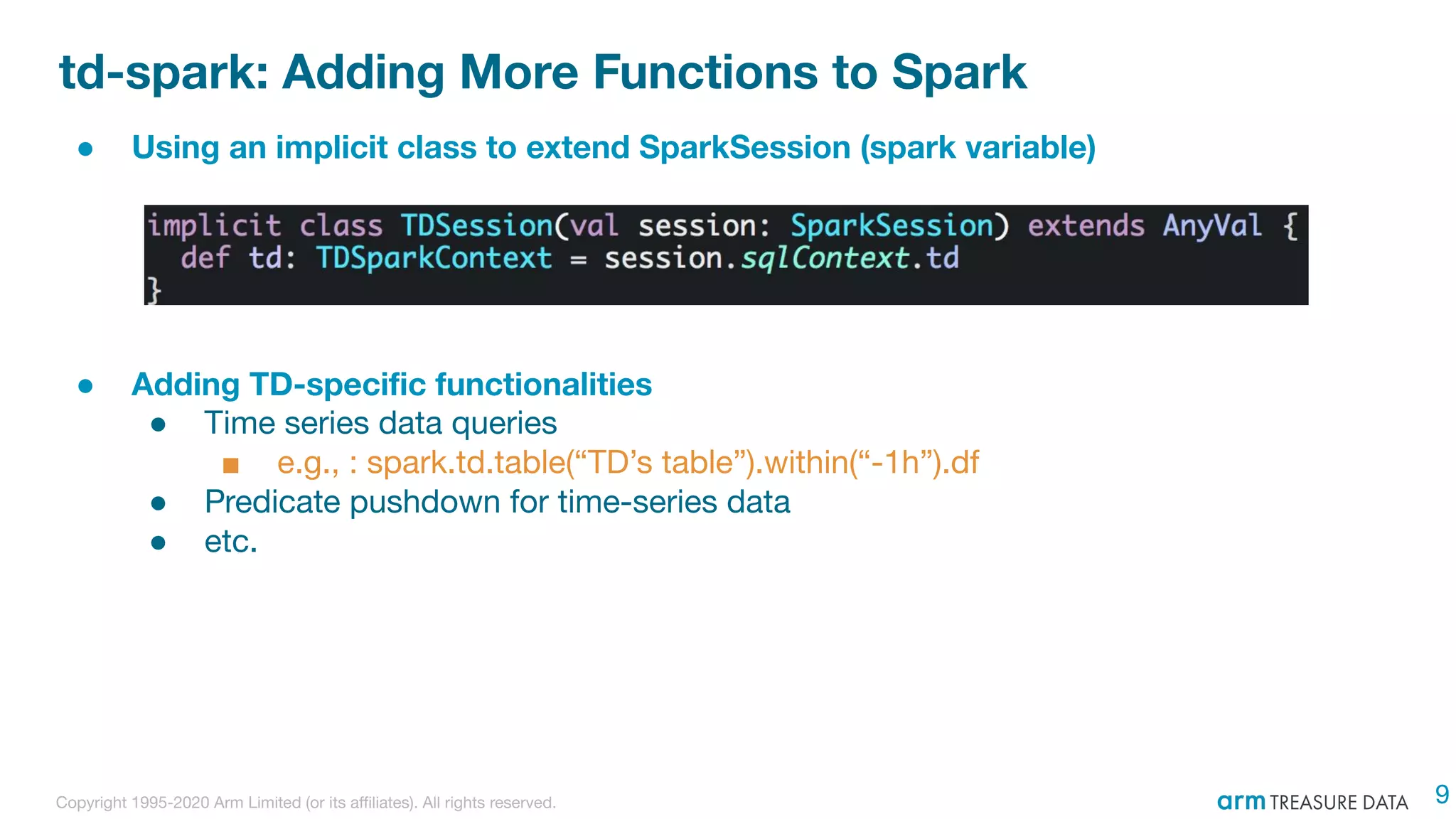

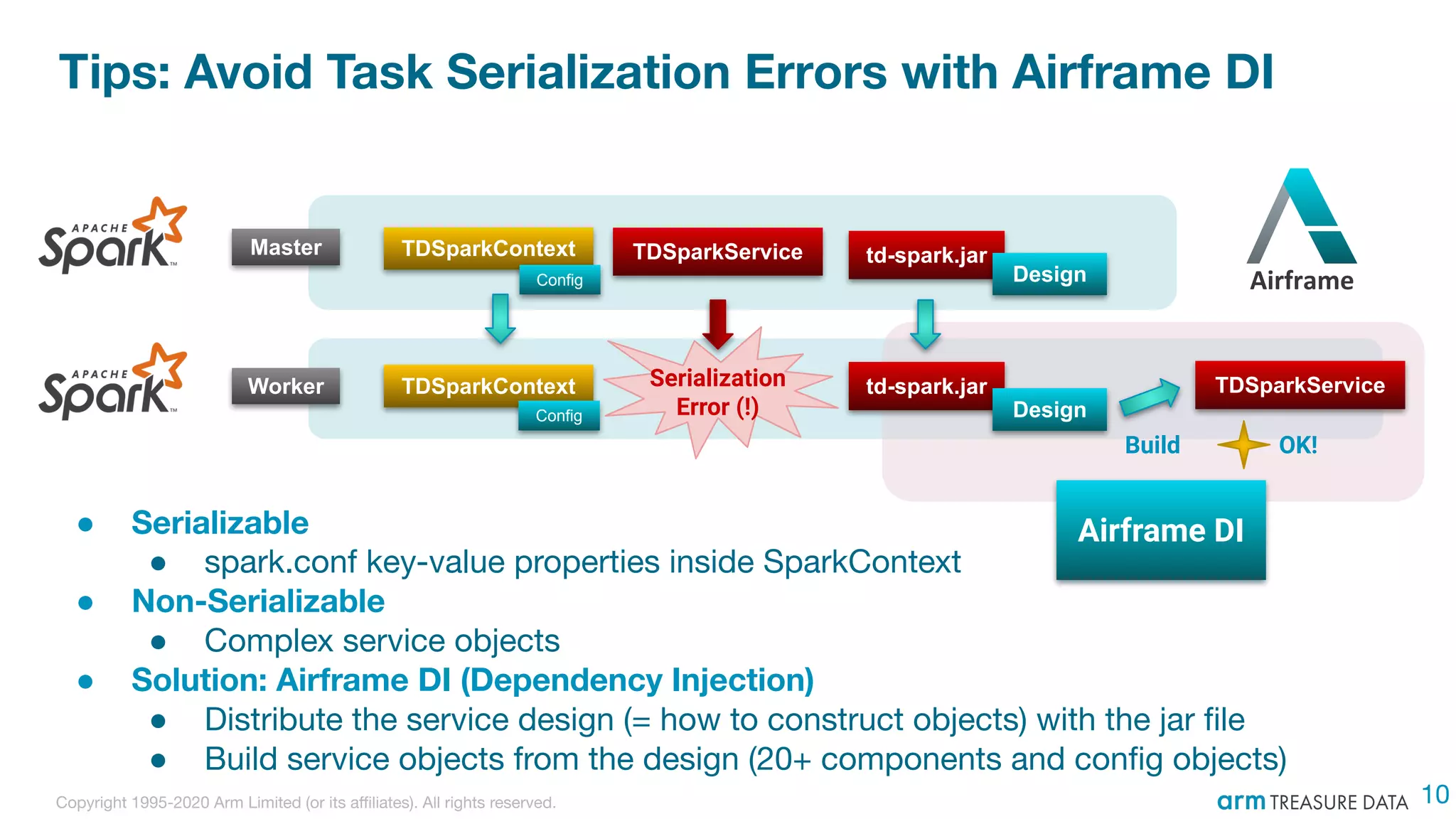

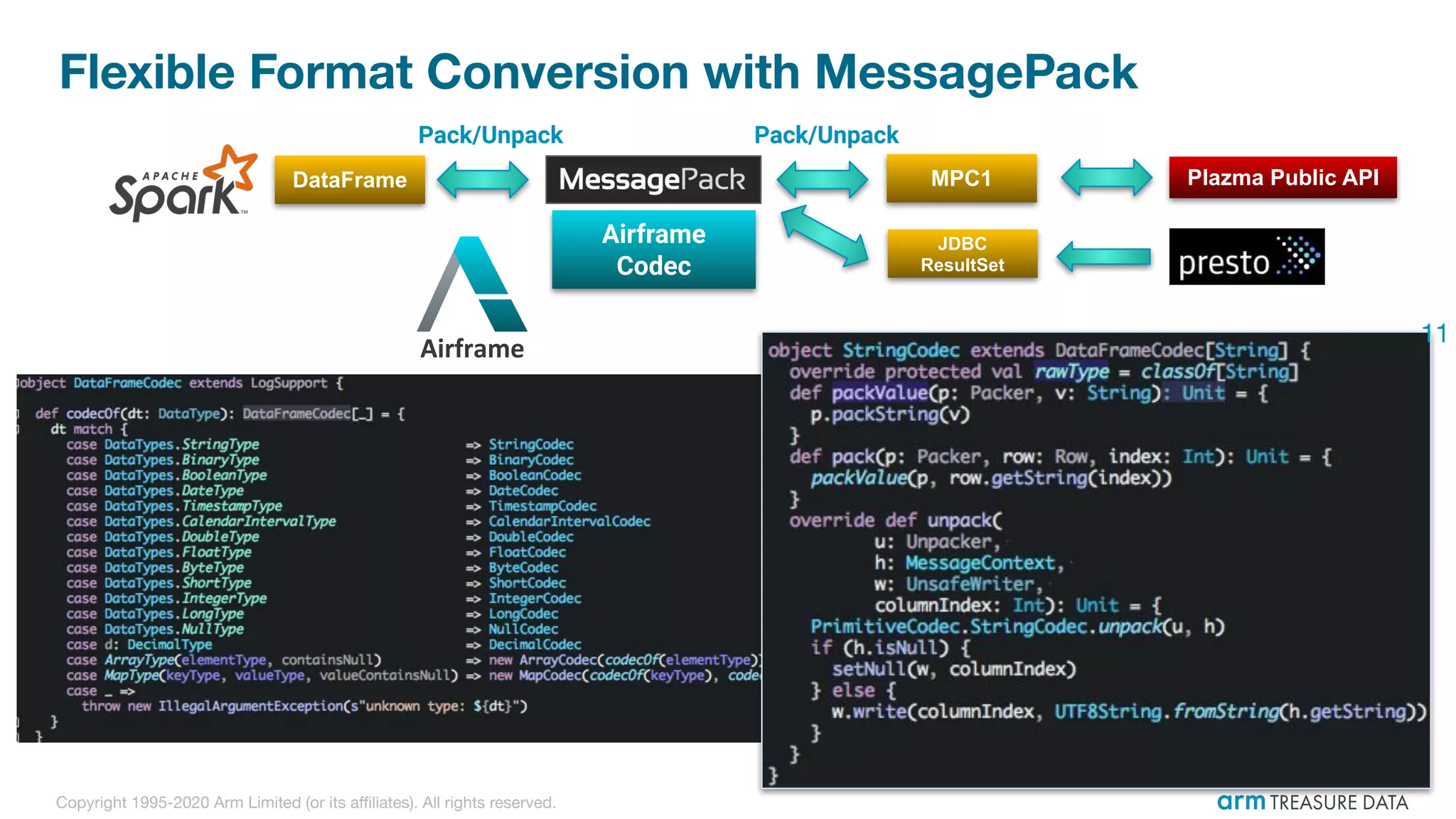

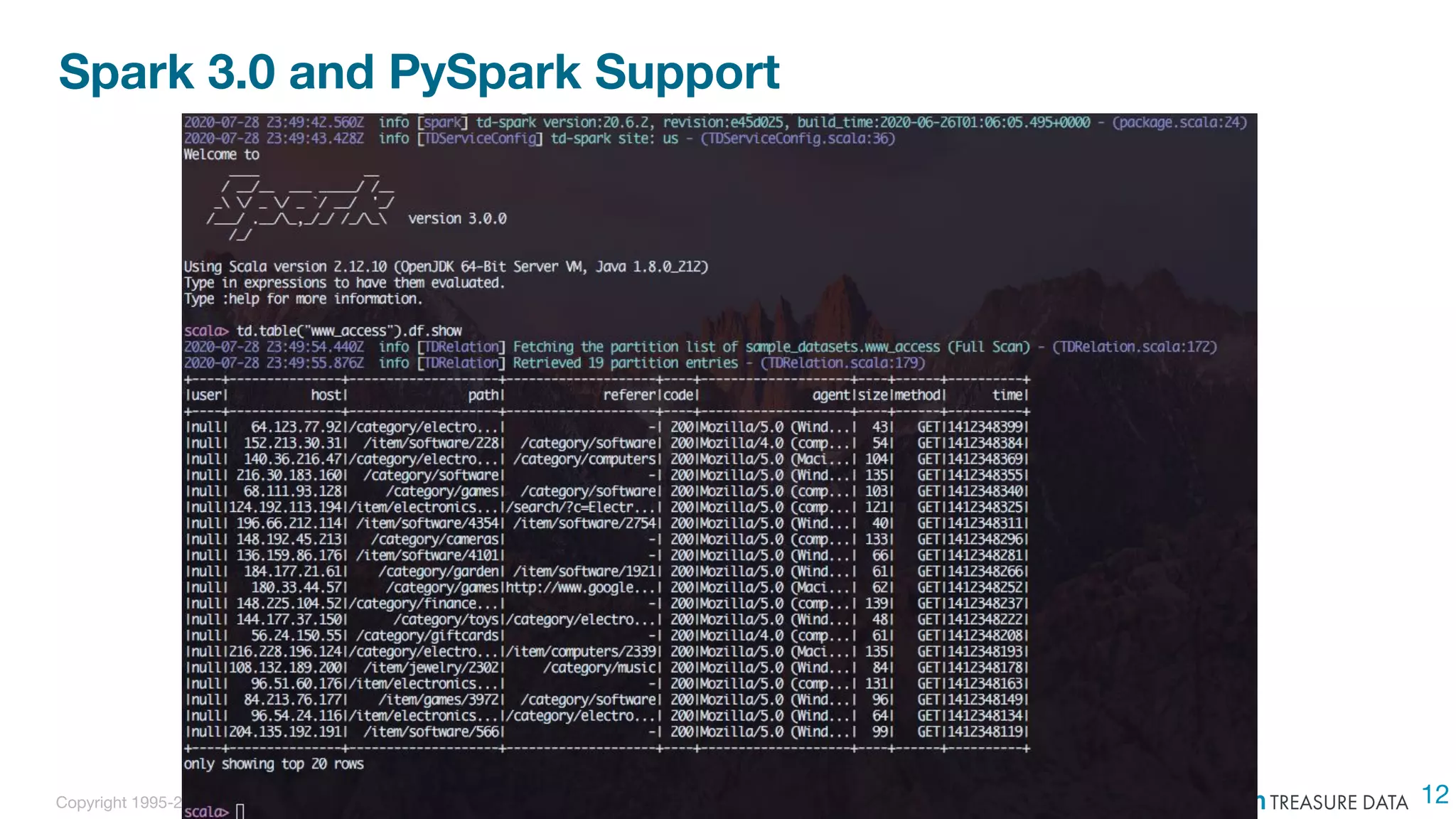

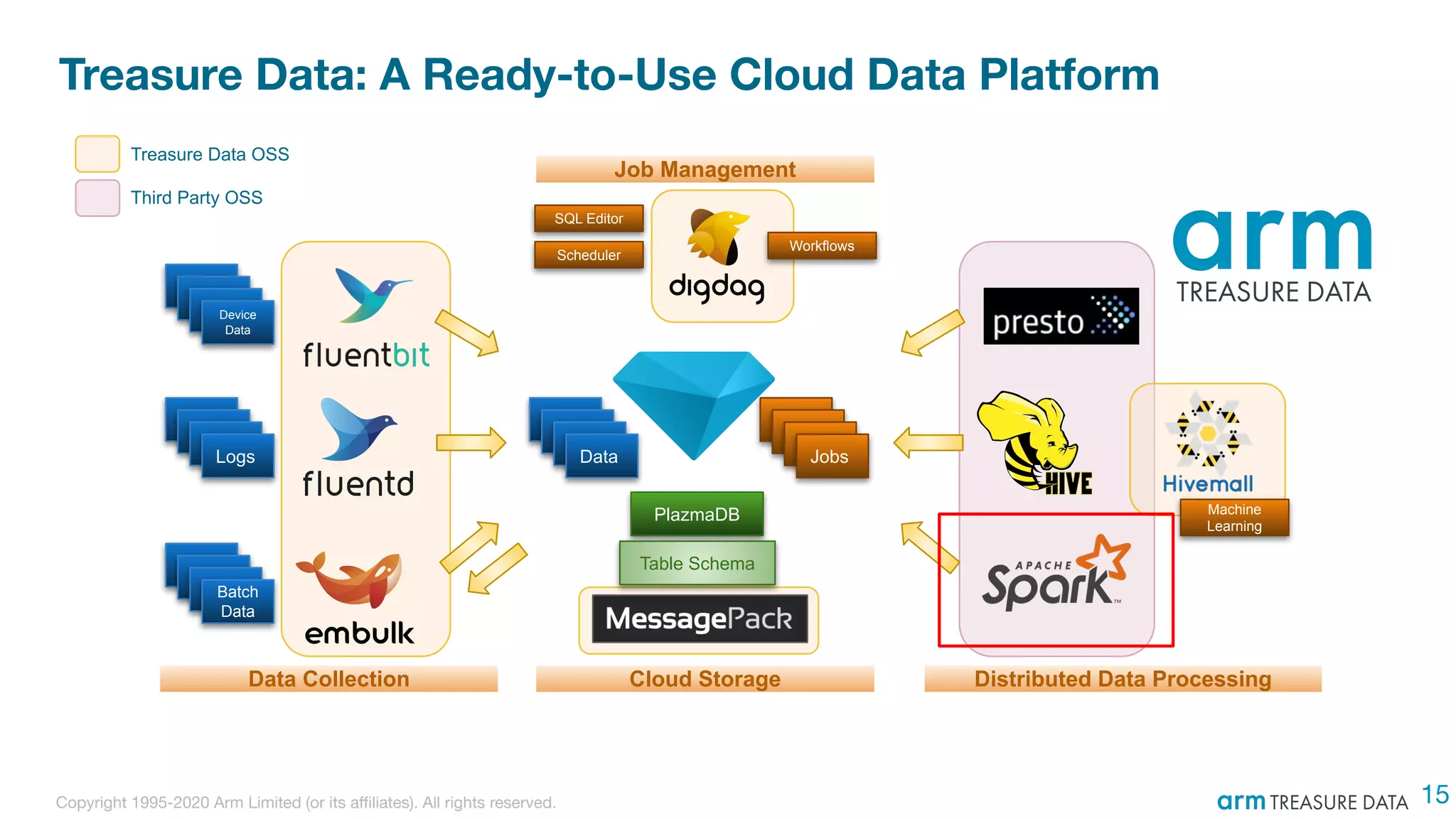

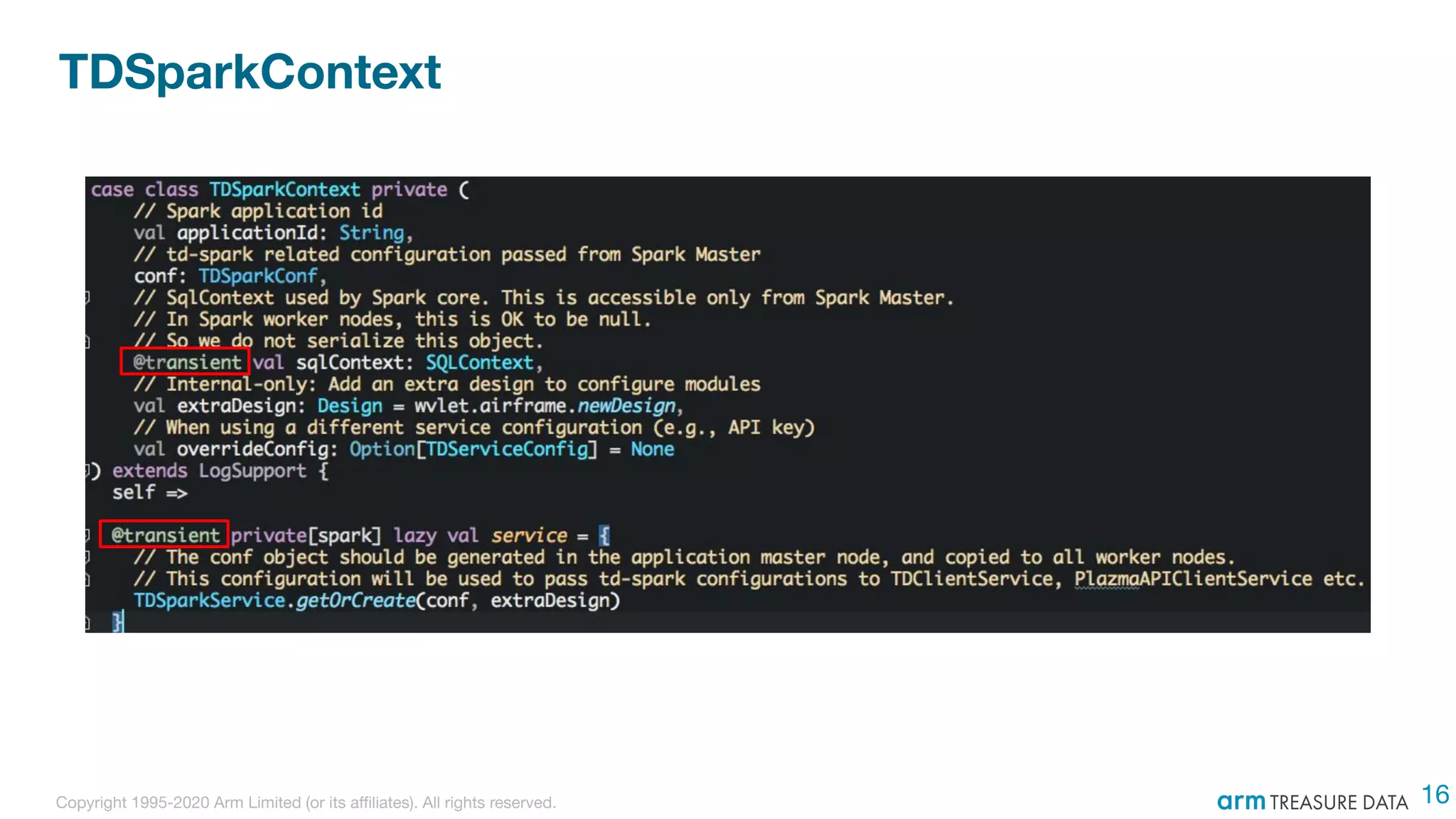

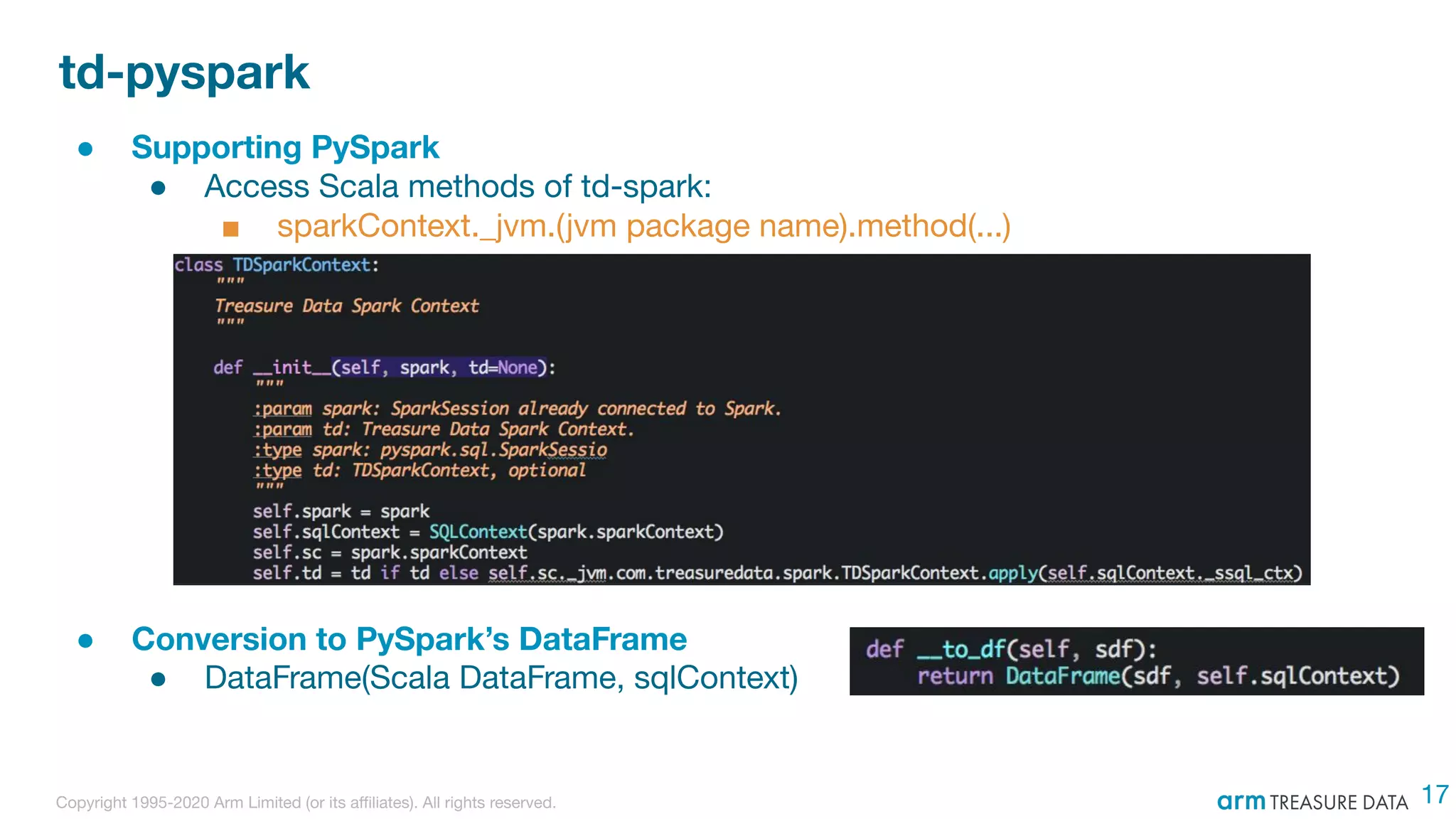

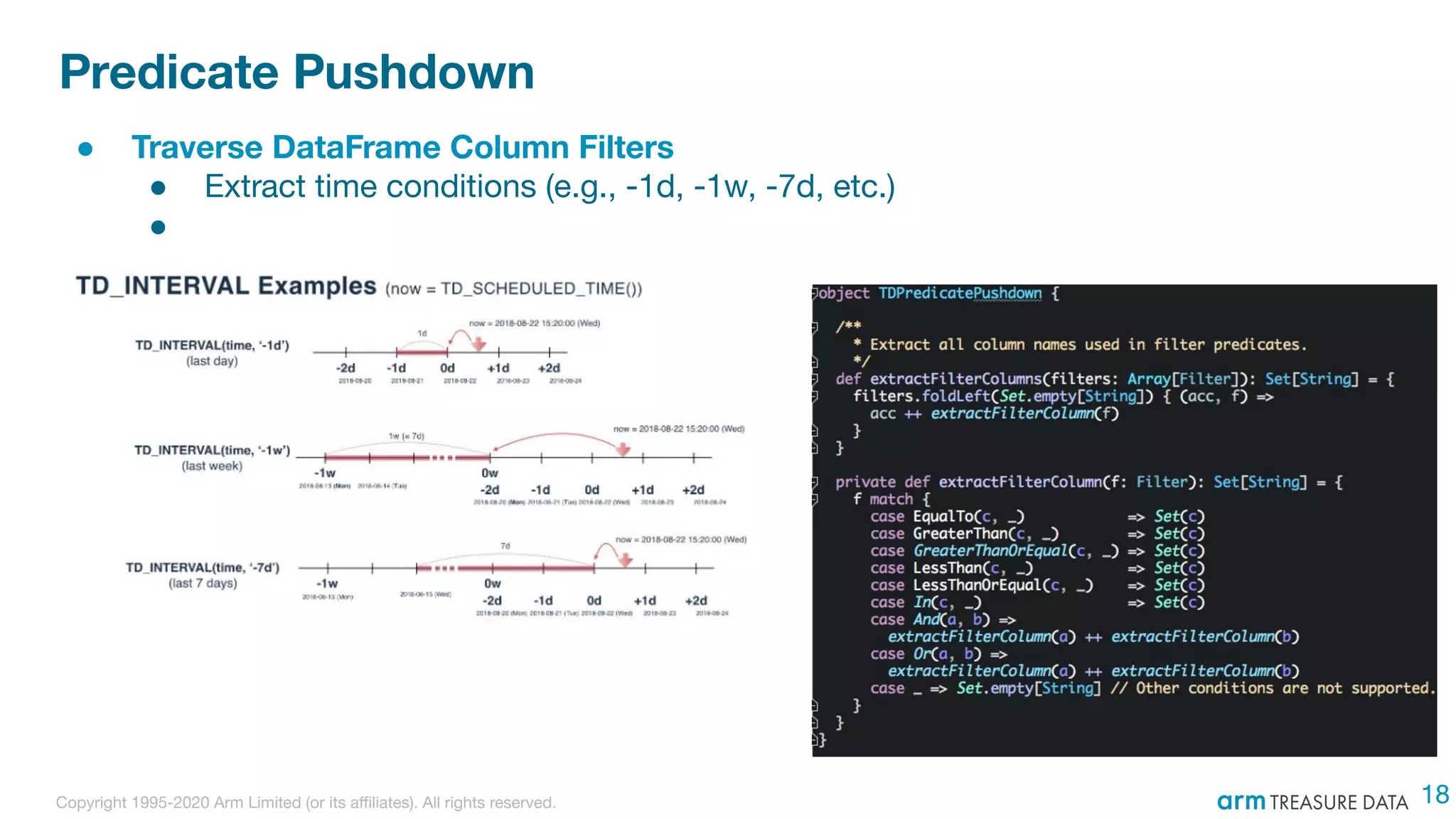

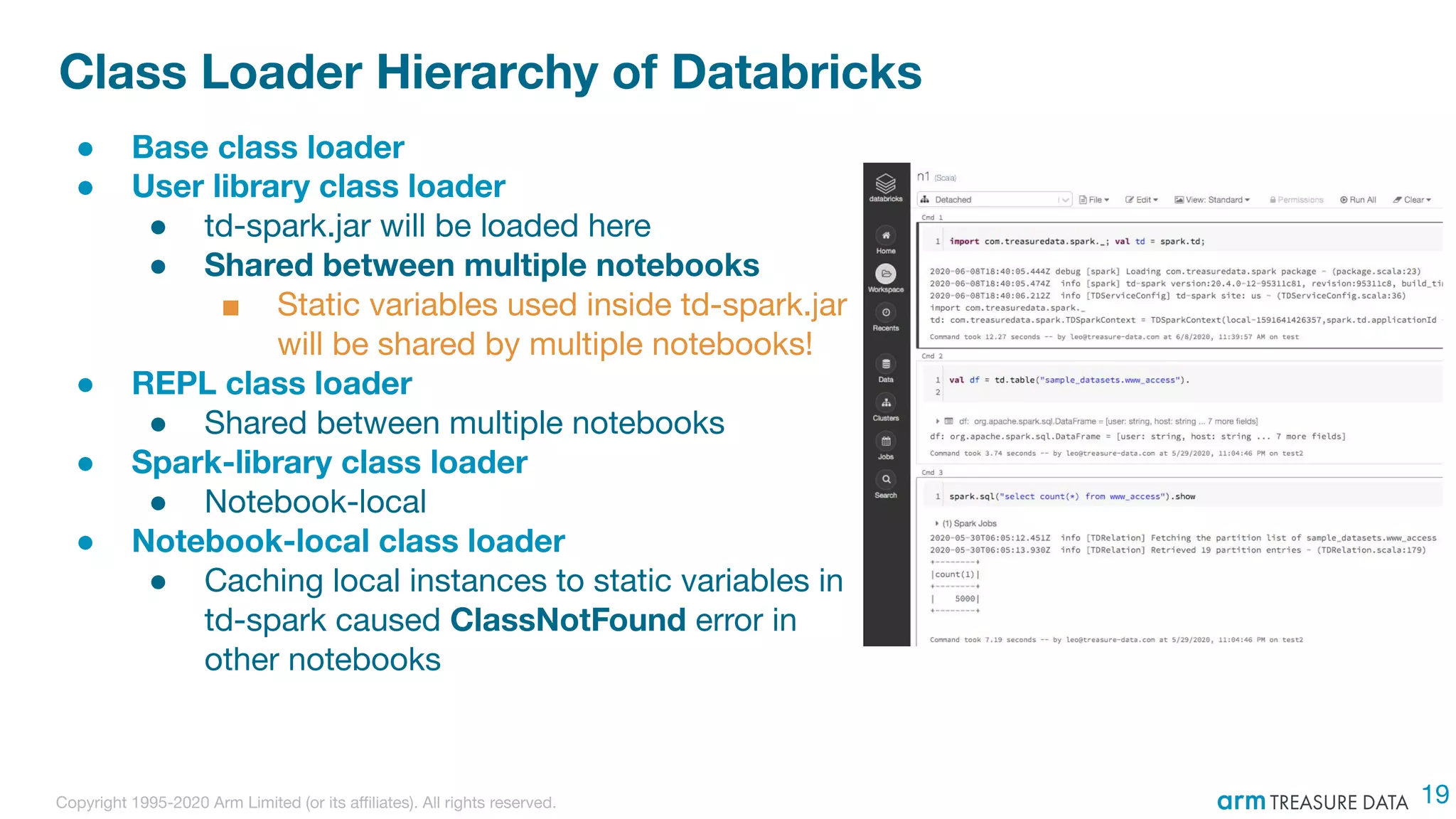

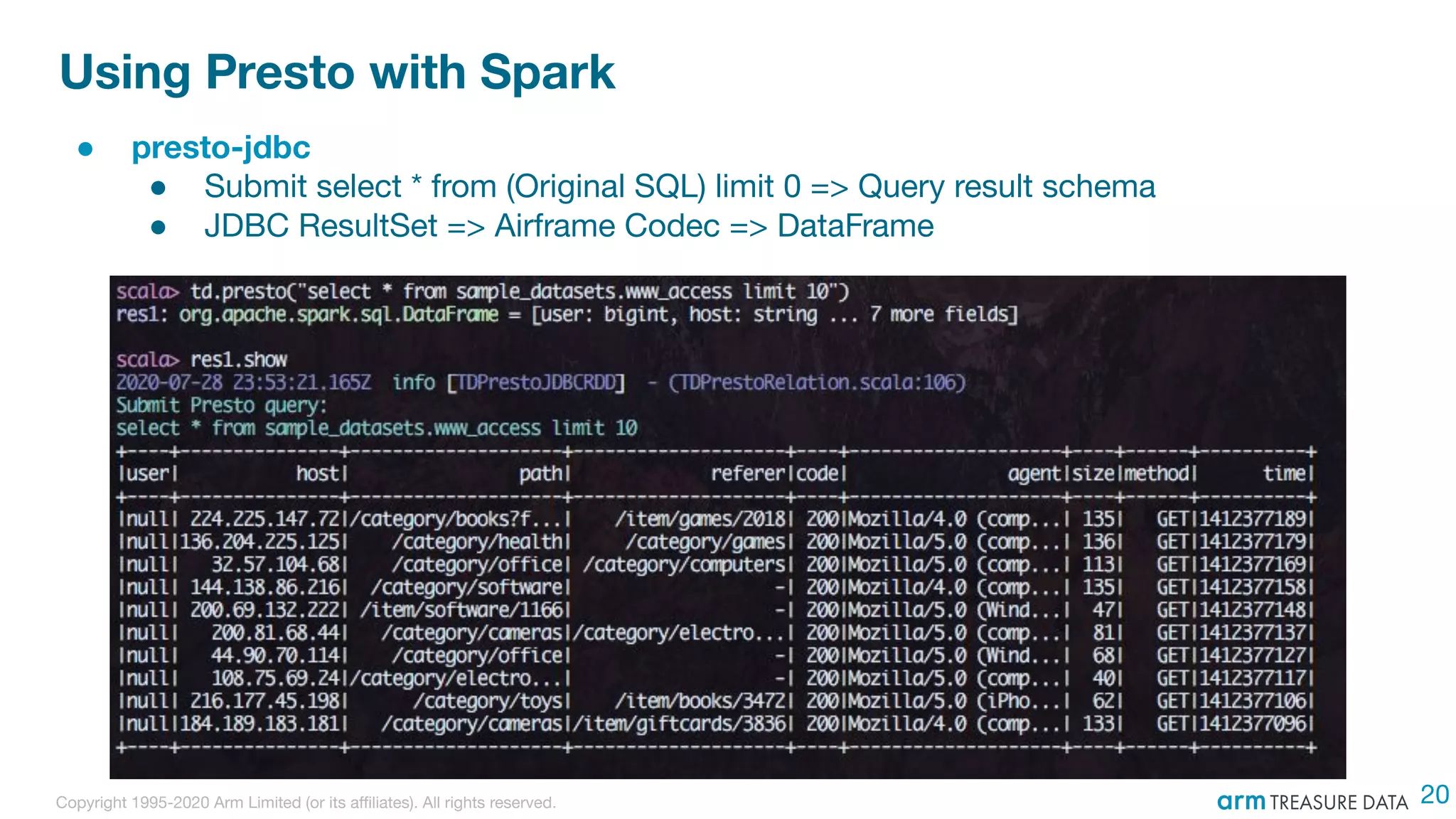

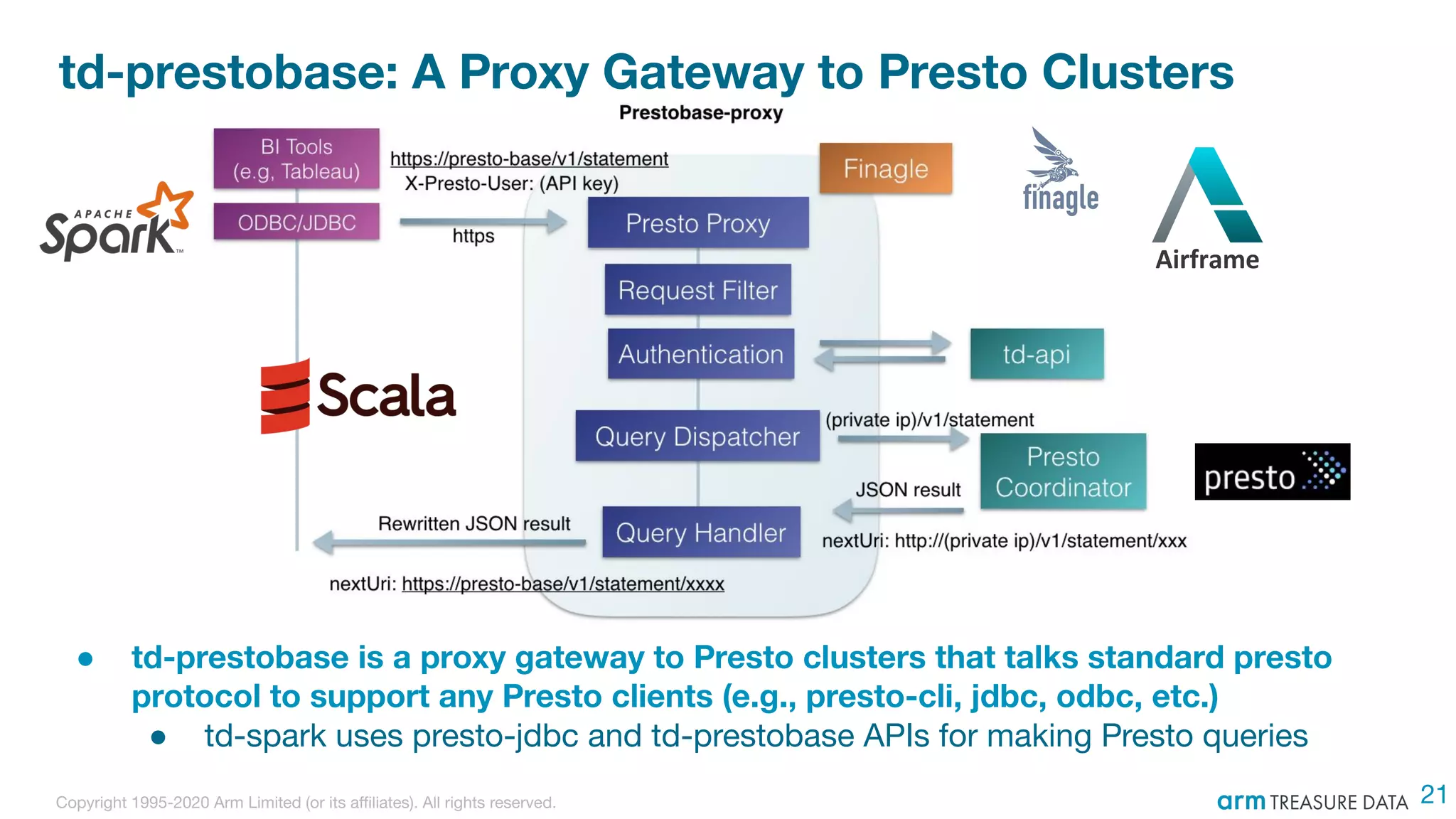

The document describes how Treasure Data added Spark support by developing td-spark, a Spark driver for Treasure Data's PlazmaDB cloud data store. It details the architecture and components of Airframe, a core set of Scala modules used to build this integration, along with Airframe's utilities for improving functionality and performance. It also highlights features such as dependency injection, time series queries, and PySpark support, and closes with resources for further information.