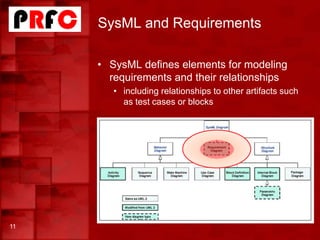

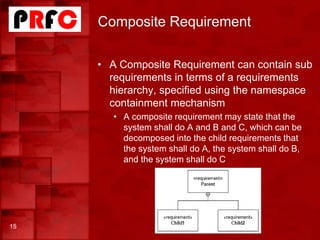

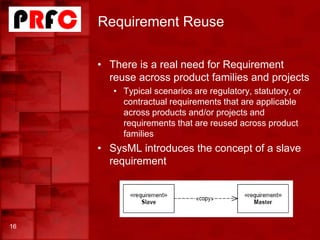

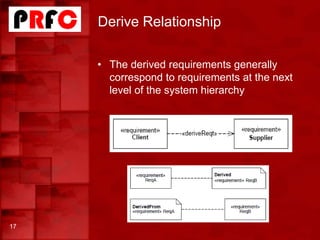

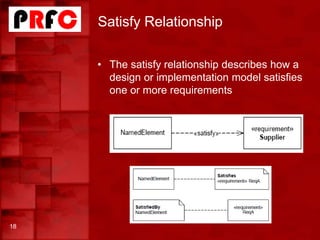

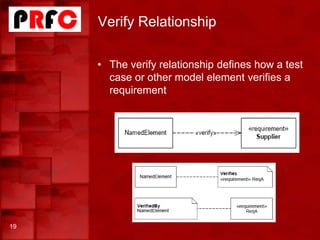

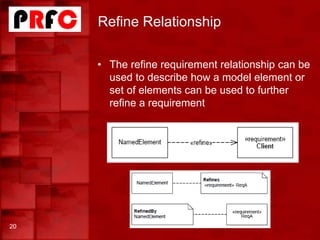

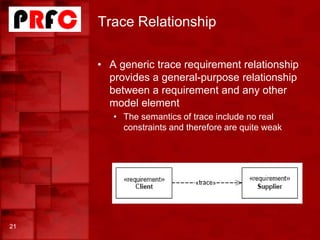

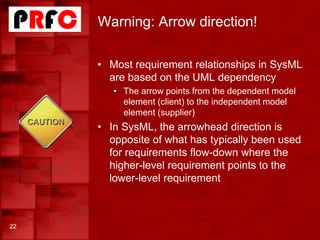

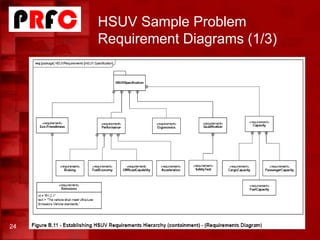

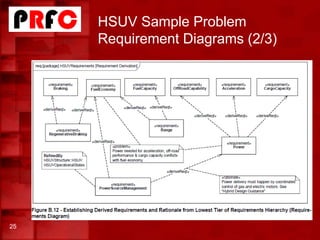

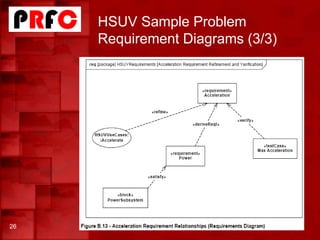

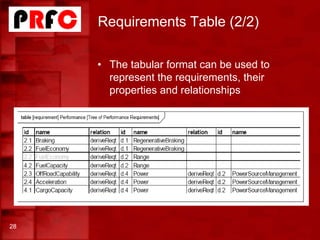

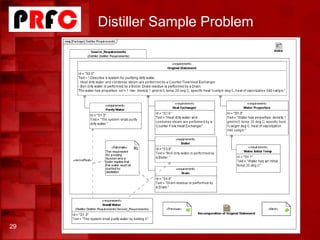

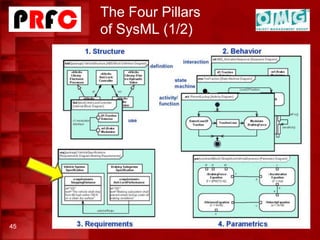

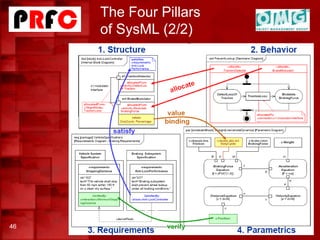

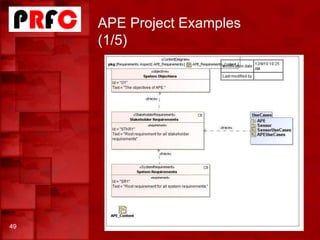

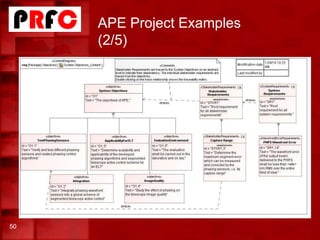

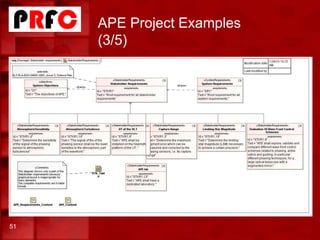

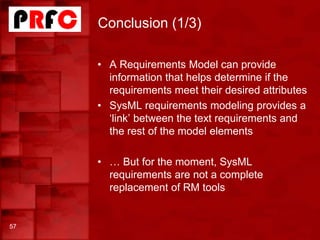

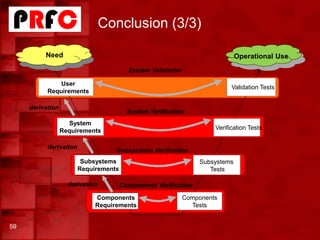

Pascal Roques gave a tutorial on requirements modeling with SysML. SysML (Systems Modeling Language) is a graphical modeling language for specifying, analyzing, designing, and verifying complex systems. It provides dedicated constructs for modeling requirements and relating them to other system elements. Requirements can be organized hierarchically, and they can be linked to other model elements through trace, derive, satisfy, verify, and refine relationships. Industrial examples showed how SysML can improve collaboration, traceability, and requirements management.
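The following minimal Python sketch makes the structure concrete. It assumes a plain in-memory model and does not use any SysML tool's API. Requirements form a containment tree, and each relationship is stored as a (source element, kind, requirement id) triple that points from the client element to the requirement, following the SysML direction. The class names, the sample requirements, and the `coverage_gaps` rule (every leaf requirement needs at least one satisfy link and one verify link) are hypothetical illustrations and were not part of the tutorial.

```python
from dataclasses import dataclass, field
from enum import Enum


class Rel(Enum):
    # Relationship kinds named in the summary; values are the SysML 1.x stereotype names.
    TRACE = "trace"
    DERIVE = "deriveReqt"
    SATISFY = "satisfy"
    VERIFY = "verify"
    REFINE = "refine"


@dataclass
class Requirement:
    req_id: str
    text: str
    children: list["Requirement"] = field(default_factory=list)

    def add(self, child: "Requirement") -> "Requirement":
        # Containment: nest a sub-requirement under this one.
        self.children.append(child)
        return child

    def leaves(self):
        # Depth-first walk of the hierarchy, yielding requirements with no children.
        if not self.children:
            yield self
        for child in self.children:
            yield from child.leaves()


@dataclass
class Model:
    # Each link is (client element name, relationship kind, requirement id).
    links: list[tuple[str, Rel, str]] = field(default_factory=list)

    def link(self, source: str, rel: Rel, req_id: str) -> None:
        self.links.append((source, rel, req_id))

    def related(self, req_id: str, rel: Rel) -> list[str]:
        # All elements connected to a requirement by the given relationship kind.
        return [s for s, r, t in self.links if r is rel and t == req_id]

    def coverage_gaps(self, root: Requirement) -> dict[str, list[str]]:
        # Hypothetical traceability check: report leaves lacking satisfy or verify links.
        gaps = {}
        for leaf in root.leaves():
            missing = [r.value for r in (Rel.SATISFY, Rel.VERIFY)
                       if not self.related(leaf.req_id, r)]
            if missing:
                gaps[leaf.req_id] = missing
        return gaps


# Hypothetical example requirements and design/test elements.
root = Requirement("R1", "The vehicle shall be safe to operate.")
root.add(Requirement("R1.1", "The vehicle shall stop within a specified distance."))
root.add(Requirement("R1.2", "Headlights shall activate automatically at dusk."))

model = Model()
model.link("BrakeSystem", Rel.SATISFY, "R1.1")
model.link("BrakingTest", Rel.VERIFY, "R1.1")
model.link("LightSensor", Rel.SATISFY, "R1.2")

print(model.coverage_gaps(root))  # {'R1.2': ['verify']}
```

A flat list of typed links keeps each relationship kind queryable in the same way. A traceability query such as "which requirements have no test case?" then reduces to a simple filter over the links.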