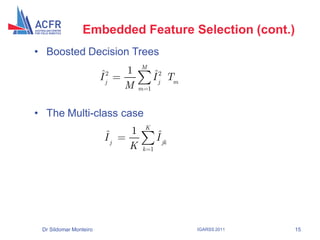

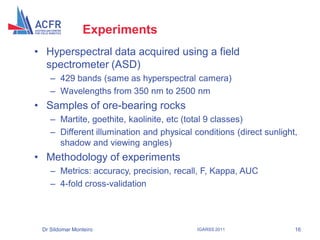

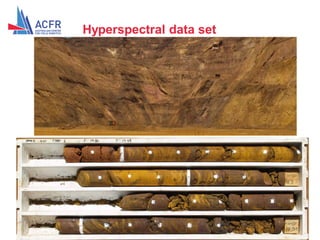

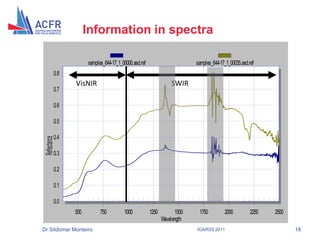

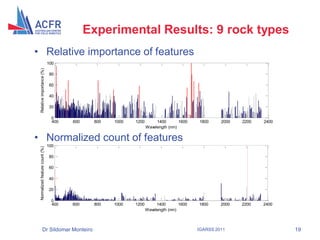

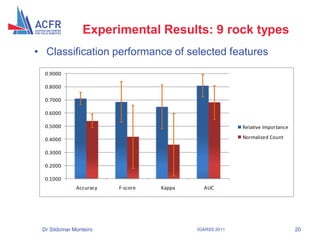

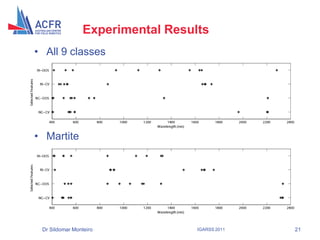

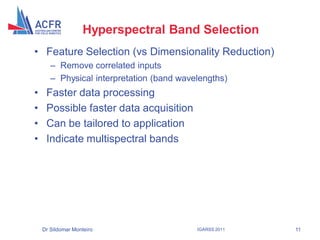

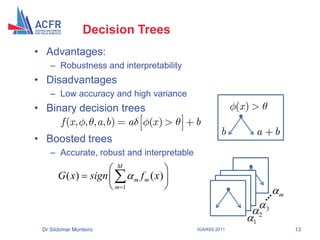

This document discusses using boosted decision trees to select important hyperspectral bands for geology classification. It aims to reduce dimensionality and processing time while maintaining classification accuracy. The method embeds band selection within the boosting process to identify the most informative bands. Experiments are conducted on hyperspectral data from an iron ore mine to evaluate the approach.

![Mine Face Scanning [Nieto, Viejo and Monteiro, 2010], IGARSS 2011](https://image.slidesharecdn.com/st-110727101221-phpapp02/85/ST-Monteiro-EmbeddedFeatureSelection-pdf-6-320.jpg)

![Boosting](https://image.slidesharecdn.com/st-110727101221-phpapp02/85/ST-Monteiro-EmbeddedFeatureSelection-pdf-12-320.jpg)

- Sound theoretical foundation
  - Additive Logistic Regression [Friedman, 2000]
- Empirical studies show that boosting
  - Yields small classification error rates
  - Is very resilient to overfitting
- State-of-the-art results in many applications, e.g. face recognition in computer vision
- The idea of Boosting is to train many “weak” learners on various distributions (or sets of weights) of the input data and then combine the resulting classifiers into a single “committee”

Dr Sildomar Monteiro, IGARSS 2011
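The committee idea from the Boosting slide can be illustrated with a minimal sketch. The slides do not say which boosting variant was used, so this example swaps in discrete AdaBoost with one-split decision stumps as a simple stand-in. The function names (`fit_stump`, `adaboost`, `predict`) and the toy data are hypothetical and are not taken from the presentation.

```python
import math


def fit_stump(X, y, w):
    """Find the one-split stump with the lowest weighted error.

    Returns (feature, threshold, polarity, weighted_error). The stump
    predicts `polarity` when x[feature] <= threshold, else `-polarity`.
    """
    best = None
    for j in range(len(X[0])):
        for thr in sorted({row[j] for row in X}):
            for polarity in (1, -1):
                err = 0.0
                for row, label, weight in zip(X, y, w):
                    pred = polarity if row[j] <= thr else -polarity
                    if pred != label:
                        err += weight
                if best is None or err < best[3]:
                    best = (j, thr, polarity, err)
    return best


def stump_predict(stump, row):
    j, thr, polarity, _ = stump
    return polarity if row[j] <= thr else -polarity


def adaboost(X, y, n_rounds=3):
    """Train a committee of weighted stumps (labels must be +1 / -1)."""
    n = len(X)
    w = [1.0 / n] * n  # start from a uniform distribution over samples
    committee = []
    for _ in range(n_rounds):
        stump = fit_stump(X, y, w)
        # Clamp the error so the log below stays finite.
        err = min(max(stump[3], 1e-10), 1.0 - 1e-10)
        alpha = 0.5 * math.log((1.0 - err) / err)
        committee.append((alpha, stump))
        # Raise the weight of misclassified samples, lower the rest.
        w = [wi * math.exp(-alpha * yi * stump_predict(stump, xi))
             for wi, xi, yi in zip(w, X, y)]
        total = sum(w)
        w = [wi / total for wi in w]
    return committee


def predict(committee, row):
    """Weighted vote of all stumps in the committee."""
    score = sum(alpha * stump_predict(stump, row) for alpha, stump in committee)
    return 1 if score >= 0 else -1


# Toy 1-D problem (hypothetical data): the negative class sits in the middle.
X = [[0], [1], [2], [3], [4], [5]]
y = [1, 1, -1, -1, 1, 1]
committee = adaboost(X, y, n_rounds=3)
print([predict(committee, row) for row in X])
```

On this toy set no single stump separates the classes, while the three-stump committee does, which is the sense in which weak learners trained on reweighted data combine into a stronger classifier.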

![Embedded Feature Selection](https://image.slidesharecdn.com/st-110727101221-phpapp02/85/ST-Monteiro-EmbeddedFeatureSelection-pdf-14-320.jpg)

- Relative importance of input variables

$$
I_j = \left( E_x\!\left[ \frac{\partial \hat{F}(x)}{\partial x_j} \right]^2 \cdot \operatorname{var}_x[x_j] \right)^{1/2}
$$

- Approximation for decision trees (heuristic) [Friedman, 1999]

$$
\hat{I}_j^2(T) = \sum_{t=1}^{J-1} \hat{\imath}_t^2 \, \mathbf{1}\big(v(t) = j\big)
$$

- Least-squares improvement criterion

$$
i^2(R_l, R_r) = \frac{w_l w_r}{w_l + w_r} \left( \bar{y}_l - \bar{y}_r \right)^2
$$
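The importance heuristic on the Embedded Feature Selection slide can be sketched in a few lines: each internal node contributes its least-squares improvement to the feature (band) it splits on, and the squared importance of a feature is the sum of those contributions. The sketch below assumes a tree is already summarised as a list of `(feature_index, improvement)` pairs. That representation and all function names are hypothetical. Averaging over the trees of the ensemble follows Friedman's paper rather than the slide.

```python
import math


def split_improvement(w_left, w_right, mean_left, mean_right):
    """Least-squares improvement i^2(R_l, R_r) of splitting a region in two.

    w_left / w_right are the summed sample weights in each child region,
    mean_left / mean_right the (weighted) mean responses.
    """
    return (w_left * w_right) / (w_left + w_right) * (mean_left - mean_right) ** 2


def tree_importance(splits, n_features):
    """Squared importance per feature for a single tree.

    `splits` holds one (feature_index, improvement) pair per internal node.
    """
    scores = [0.0] * n_features
    for feature, improvement in splits:
        scores[feature] += improvement
    return scores


def ensemble_importance(trees, n_features):
    """Importance per feature: root of the mean squared importance over trees.

    Assumption: averaging over trees as in Friedman's paper; the slide only
    shows the single-tree formula.
    """
    totals = [0.0] * n_features
    for splits in trees:
        for j, score in enumerate(tree_importance(splits, n_features)):
            totals[j] += score
    return [math.sqrt(total / len(trees)) for total in totals]


def rank_features(importance):
    """Feature indices sorted from most to least important."""
    return sorted(range(len(importance)), key=lambda j: -importance[j])


# Hypothetical two-tree ensemble over three bands.
trees = [
    [(0, split_improvement(2, 2, 1.0, 3.0)), (2, 3.0)],
    [(0, 1.0), (2, 0.5)],
]
importance = ensemble_importance(trees, n_features=3)
print(rank_features(importance))
```

Because the scores fall out of the splits the trees already made, ranking bands this way needs no extra training pass, which is what makes the selection "embedded".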