Statistics mis

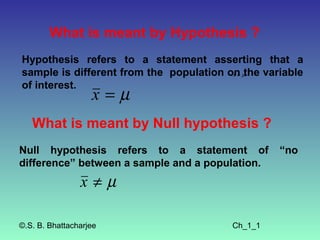

- 1. What is meant by hypothesis? A hypothesis is a statement asserting that a sample is different from the population on the variable of interest ($\bar{x} \neq \mu$). What is meant by null hypothesis? The null hypothesis is a statement of "no difference" between a sample and a population ($\bar{x} = \mu$). ©.S. B. Bhattacharjee Ch_1_1

- 2. What is meant by proportion? A proportion is the number of cases in one category of a variable divided by the number of cases in all categories of the variable. What is meant by ratio? A ratio is the number of cases in one category divided by the number of cases in some other category. Example: with Female = 10 and Male = 20, the proportion of females is 10/30 ≈ 0.33 and the ratio of females to males is 10/20 = 0.5.
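
The two definitions above can be sketched in a few lines of Python. The counts come from the slide; the variable names are illustrative assumptions, not part of the original material.

```python
# Counts taken from the slide: 10 females and 20 males.
counts = {"Female": 10, "Male": 20}

total = sum(counts.values())

# Proportion: cases in one category divided by cases in all categories.
proportion_female = counts["Female"] / total

# Ratio: cases in one category divided by cases in some other category.
ratio_female_to_male = counts["Female"] / counts["Male"]

print(f"Proportion female: {proportion_female:.2f}")
print(f"Ratio of females to males: {ratio_female_to_male:.2f}")
```

Note that the proportion uses the whole sample as its denominator, while the ratio compares two categories directly.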

- 3. What is meant by midpoint? It is the point exactly halfway between the upper and lower limits of a class interval. What is meant by bivariate table? It is a table that displays the joint frequency distribution of two variables. What is meant by multiple table? It is a table that displays the joint frequency distribution of more than two variables.

- 4. What is meant by rate? It is the number of actual occurrences of some phenomenon or trait divided by the number of possible occurrences per some unit of time. For example, $\text{Crude death rate} = \frac{\text{Number of deaths}}{\text{Total population}} \times 1000$ per year. With number of deaths = 10 and total population = 1000, $\text{Crude death rate} = \frac{10}{1000} \times 1000 = 10$.
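
As a minimal sketch of the crude death rate formula, the function below reproduces the slide's arithmetic. The function name and the `per` parameter are hypothetical, introduced only for illustration.

```python
def crude_death_rate(deaths: int, population: int, per: int = 1000) -> float:
    """Return deaths per `per` people for one unit of time (here, one year)."""
    if population <= 0:
        raise ValueError("population must be positive")
    # Multiply before dividing so the slide's example stays exact.
    return deaths * per / population


# Figures from the slide: 10 deaths in a population of 1000.
rate = crude_death_rate(deaths=10, population=1000)
print(f"Crude death rate: {rate} per 1000 per year")
```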

- 5. What is meant by data? Data refer to information expressed as numbers. What is percentage? It is the number of cases in a category of a variable divided by the number of cases in all categories of the variable, multiplied by 100. What is bar chart? A bar chart is a graphic display device for nominal and ordinal variables.

- 6. What is frequency distribution? It is a table that displays the number of cases in each category of a variable. What is frequency polygon? It is a graphic display device for interval-ratio variables. What is histogram? It is a graphic display device for interval-ratio variables.

- 7. What are the characteristics of the three levels of measurement?

- 8. Table 1.3 Basic characteristics of the three levels of measurement

  | Levels | Examples | Measurement procedures | Mathematical operations permitted |
  | --- | --- | --- | --- |
  | Nominal | Sex, race, religion, marital status | Classification into categories | Counting number of cases in each category of the variable; comparing sizes of categories |
  | Ordinal | Social class (SES), attitude and opinion scales | Classification into categories plus ranking of categories with respect to each other | All above plus judgments of "greater than" and "less than" |

- 9. Table 1.3 Basic characteristics of the three levels of measurement (continued)

  | Levels | Examples | Measurement procedures | Mathematical operations permitted |
  | --- | --- | --- | --- |
  | Interval-ratio | Age, number of children, income | All above plus distances between scores can be described in terms of equal units | All above plus all other mathematical operations (addition, subtraction, multiplication, division, square roots, etc.) |

- 10. What is meant by a variable? A variable is any trait that can change values from case to case. Example: age, which differs from person to person (A = 20, B = 25, C = 30, D = 31).

- 11. What are the levels of measurement? The three levels are nominal, ordinal, and interval-ratio, as summarized in Table 1.3.


- 14. What is meant by mean? The mean is an average that is computed by adding up the scores and then dividing the sum by the number of scores. Example: for the scores 10, 20, 30, the mean is (10 + 20 + 30) / 3 = 20.
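
The definition above translates directly into code. This is a minimal sketch using the slide's three scores; the variable names are assumptions.

```python
# Scores from the slide's example.
scores = [10, 20, 30]

# Mean: add up the scores, then divide the sum by the number of scores.
mean = sum(scores) / len(scores)

print(f"Mean: {mean}")
```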

- 15. What is meant by mode? The mode of any distribution is the value that occurs most frequently. Example: 58, 82, 82, 90, 98. Here the mode is 82, since it occurs twice and the other scores occur only once.
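
One way to find the mode is to count how often each value occurs and keep the most frequent one. The sketch below uses the slide's scores; returning every tied value as a list is a design choice made here, not something the slide specifies.

```python
from collections import Counter

# Scores from the slide's example.
scores = [58, 82, 82, 90, 98]

# Count how many times each score occurs.
counts = Counter(scores)
highest = max(counts.values())

# Keep every score that reaches the highest count (handles ties).
modes = [value for value, count in counts.items() if count == highest]

print(f"Mode(s): {modes}")
```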

- 16. What is meant by median? The median is the exact center of a distribution of scores. It is the point in a distribution of scores above and below which exactly half of the cases fall. Example: for the scores 10, 15, 20, 25, 30, the median is 20.
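
A minimal sketch of the median follows. The function name is an assumption, and averaging the two middle scores when the number of cases is even is a common convention that the slide itself does not state.

```python
def median(scores):
    """Return the middle score of a list of numeric scores."""
    if not scores:
        raise ValueError("median of an empty list is undefined")
    ordered = sorted(scores)
    middle = len(ordered) // 2
    if len(ordered) % 2 == 1:
        # Odd number of cases: the middle score is the median.
        return ordered[middle]
    # Even number of cases: average the two middle scores (assumed convention).
    return (ordered[middle - 1] + ordered[middle]) / 2


# Scores from the slide's example.
print(median([10, 15, 20, 25, 30]))
```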

- 17. What guidelines should one keep in mind in choosing a measure of central tendency? The mode may be used when:
  - Variables are measured at the nominal level.
  - You want a quick and easy measure for ordinal and interval-ratio variables.
  - You want to report the most common score.

- 18. What guidelines should one keep in mind in choosing a measure of central tendency? The median may be used when:
  - Variables are measured at the ordinal level.
  - Variables measured at the interval-ratio level have badly skewed distributions.
  - You want to report the central score. The median always lies at the exact center of a distribution.

- 19. What guidelines should one keep in mind in choosing a measure of central tendency? The mean may be used when:
  - Variables are measured at the interval-ratio level (except for badly skewed distributions).
  - You want to report the typical score. The mean is "the fulcrum that exactly balances all of the scores."
  - You anticipate additional statistical analysis.
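
To illustrate why the guidelines prefer the median for badly skewed interval-ratio variables, the sketch below compares the two measures. The income figures are hypothetical and chosen only to produce a skewed distribution.

```python
import statistics

# Hypothetical incomes (in thousands); the last value is an extreme score
# included only to make the distribution badly skewed.
incomes = [20, 22, 25, 27, 300]

mean_income = statistics.mean(incomes)      # pulled toward the extreme score
median_income = statistics.median(incomes)  # stays at the central score

print(f"Mean:   {mean_income}")
print(f"Median: {median_income}")
```

In this made-up sample the mean lies above four of the five scores, while the median still sits at the center of the distribution.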

- 20. What are the data from the counseling center survey?

- 21. Table 2.4

  | Student | Sex | Marital Status | Satisfaction With Services | Age |
  | --- | --- | --- | --- | --- |
  | A | Male | Single | 4 | 18 |
  | B | Male | Married | 2 | 19 |
  | C | Female | Single | 4 | 18 |
  | D | Female | Single | 2 | 19 |
  | E | Male | Married | 1 | 20 |
  | F | Male | Single | 3 | 20 |
  | G | Female | Married | 4 | 18 |

- 22. Table 2.4 (continued)

  | Student | Sex | Marital Status | Satisfaction With Services | Age |
  | --- | --- | --- | --- | --- |
  | H | Female | Single | 3 | 21 |
  | I | Male | Single | 3 | 19 |
  | J | Female | Divorced | 3 | 23 |
  | K | Female | Single | 3 | 24 |
  | L | Male | Married | 3 | 18 |
  | M | Female | Single | 1 | 22 |
  | N | Female | Married | 3 | 26 |

- 23. Table 2.4 (continued)

  | Student | Sex | Marital Status | Satisfaction With Services | Age |
  | --- | --- | --- | --- | --- |
  | O | Male | Single | 3 | 18 |
  | P | Male | Married | 4 | 19 |
  | Q | Female | Married | 2 | 19 |
  | R | Male | Divorced | 1 | 19 |
  | S | Female | Divorced | 3 | 21 |
  | T | Female | Single | 2 | 20 |

- 24. Application 2.4 The following list shows the ages of 50 prisoners enrolled in a work-release program. Is this group young or old? A frequency distribution will provide an accurate picture of the overall age structure. Ages: 18, 57, 27, 19, 60, 20, 32, 62, 26, 20, 25, 35, 75, 25, 21, 30, 45, 67, 41, 30, 37, 47, 65, 42, 25, 18, 51, 22, 52, 30, 22, 18, 27, 53, 38, 27, 23, 32, 35, 42, 32, 37, 32, 40, 45, 55, 42, 45, 50, 47.

- 25. Application 2.4 (continued)
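
The frequency distribution that Application 2.4 calls for can be sketched as follows. The ages are the 50 values listed in the application; the class intervals of width 10 (10-19, 20-29, and so on) are an assumption made for illustration, since the source does not show the intervals it used.

```python
from collections import Counter

# Ages of the 50 prisoners listed in Application 2.4.
ages = [
    18, 57, 27, 19, 60, 20, 32, 62, 26, 20,
    25, 35, 75, 25, 21, 30, 45, 67, 41, 30,
    37, 47, 65, 42, 25, 18, 51, 22, 52, 30,
    22, 18, 27, 53, 38, 27, 23, 32, 35, 42,
    32, 37, 32, 40, 45, 55, 42, 45, 50, 47,
]

WIDTH = 10  # assumed class-interval width; not given in the source


def interval_of(age):
    """Return the (lower, upper) stated limits of the interval holding age."""
    lower = (age // WIDTH) * WIDTH
    return (lower, lower + WIDTH - 1)


# Count the number of cases falling in each class interval.
frequencies = Counter(interval_of(age) for age in ages)

print("Interval  Midpoint  Frequency  Percent")
for lower, upper in sorted(frequencies):
    count = frequencies[(lower, upper)]
    midpoint = (lower + upper) / 2  # halfway between the stated limits
    percent = count / len(ages) * 100
    print(f"{lower}-{upper}     {midpoint:>6.1f}  {count:>9}  {percent:>6.1f}%")
```

With these assumed intervals, half of the cases fall between 20 and 39; a different interval width would give a different but equally valid table.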