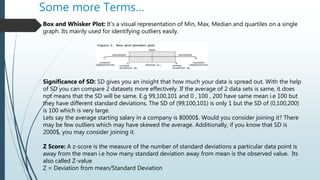

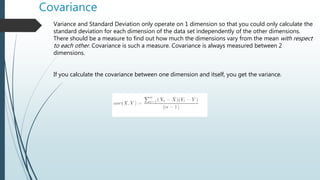

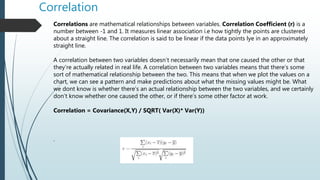

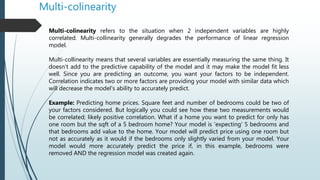

The document explains the differences between quantitative and qualitative variables, emphasizing that quantitative variables have meaningful numerical values while qualitative variables represent categories. It covers statistical concepts including descriptive and inferential statistics, measures of central tendency, dispersion, and the significance of correlation and covariance in data analysis. Additionally, it addresses issues like multicollinearity in regression models and how it affects prediction accuracy.
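The concepts summarized above can be illustrated with a small sketch using only the Python standard library. All data values and the helper names (`covariance`, `correlation`, `vif_two_predictors`) are made up for illustration and do not come from the document. The variance inflation factor (VIF) is used here as one common way to quantify multicollinearity; the formula `1 / (1 - r^2)` applies only to the two-predictor case assumed in this example.

```python
import statistics

def covariance(xs, ys):
    """Sample covariance: average co-deviation from the means (divides by n - 1)."""
    mx, my = statistics.mean(xs), statistics.mean(ys)
    return sum((x - mx) * (y - my) for x, y in zip(xs, ys)) / (len(xs) - 1)

def correlation(xs, ys):
    """Pearson correlation: covariance rescaled to the range [-1, 1]."""
    return covariance(xs, ys) / (statistics.stdev(xs) * statistics.stdev(ys))

def vif_two_predictors(x1, x2):
    """VIF for a model with exactly two predictors (an assumption of this sketch).

    Values near 1 suggest little collinearity; large values suggest the
    predictors carry nearly the same information.
    """
    r = correlation(x1, x2)
    return 1.0 / (1.0 - r * r)

# Hypothetical data, invented for this example.
colors = ["red", "blue", "red", "green", "red"]      # qualitative: categories
hours = [1.0, 2.0, 3.0, 4.0, 5.0]                    # quantitative
minutes = [62.0, 118.0, 181.0, 239.0, 300.0]         # roughly hours * 60
sleep = [7.0, 6.0, 8.0, 5.0, 7.0]                    # quantitative

# Central tendency: the mode is meaningful for categories,
# while the mean and median need numeric values.
print("mode of colors:", statistics.mode(colors))
print("mean / median of hours:", statistics.mean(hours), statistics.median(hours))

# Dispersion: sample standard deviation.
print("stdev of hours:", statistics.stdev(hours))

# Association between two quantitative variables.
print("cov(hours, sleep):", covariance(hours, sleep))
print("corr(hours, sleep):", correlation(hours, sleep))

# Multicollinearity: nearly redundant predictors give a very large VIF.
print("VIF(hours, sleep):", vif_two_predictors(hours, sleep))
print("VIF(hours, minutes):", vif_two_predictors(hours, minutes))
```

In this sketch, `hours` and `minutes` measure almost the same quantity, so their VIF is very large, whereas `hours` and `sleep` are only weakly related and give a VIF close to 1. With more than two predictors, each VIF would instead be computed from a regression of one predictor on all the others.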