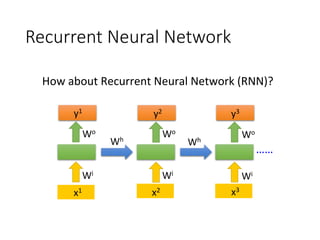

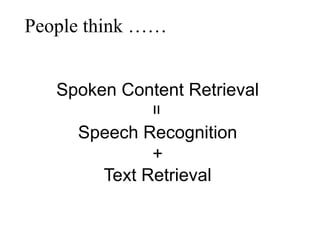

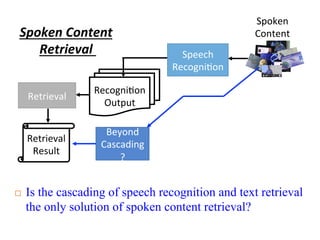

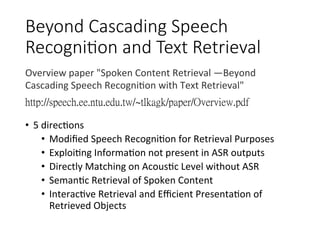

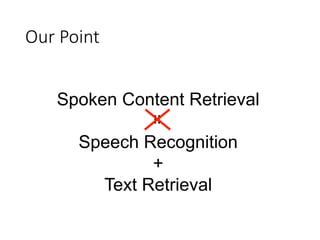

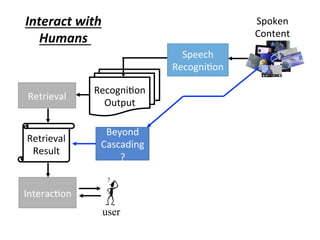

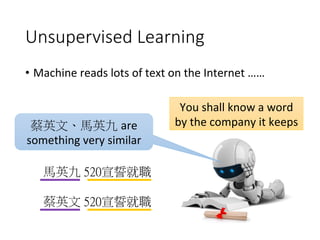

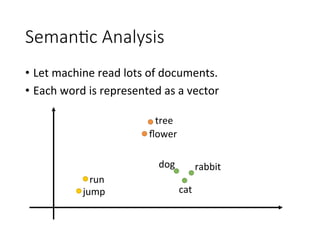

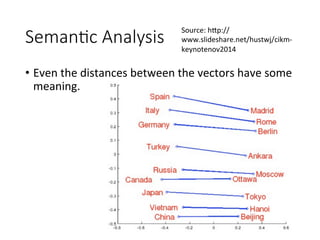

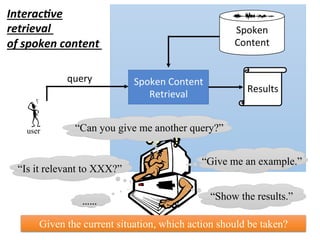

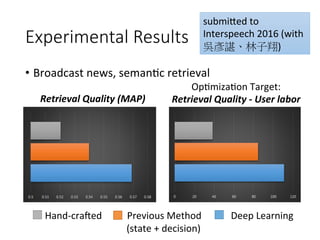

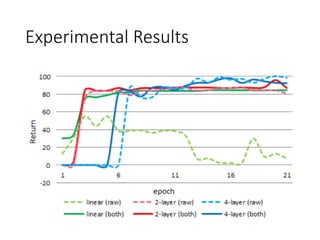

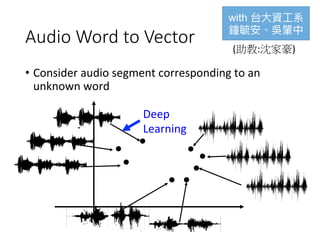

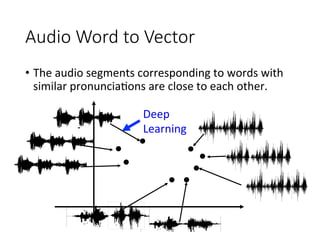

The document discusses the application of deep learning techniques in speech processing, highlighting challenges and advancements in speech recognition and spoken content retrieval. It covers methods such as recurrent neural networks, sequence-to-sequence learning, and semantic retrieval, as well as the integration of speech recognition and text retrieval systems. Additionally, it explores unsupervised learning and interactive retrieval systems to improve accuracy in understanding spoken content.

![Recurrent Neural Network

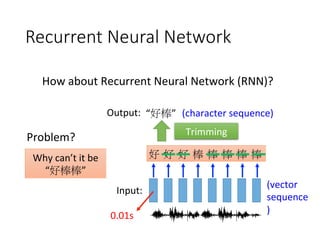

• Connec4onist Temporal Classifica4on (CTC) [Alex Graves,

ICML’06][Alex Graves, ICML’14][Haşim Sak, Interspeech’15][Jie Li,

Interspeech’15][Andrew Senior, ASRU’15]

好

φ

φ

棒

φ

φ

φ

φ

好

φ

φ

棒

φ

棒

φ

φ

“好棒”

“好棒棒”

Add an extra symbol

“φ” represen4ng “null”](https://image.slidesharecdn.com/random-160717002419/85/slide-6-320.jpg)
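The two examples on this slide follow the standard CTC collapsing rule: merge consecutive repeated symbols, then drop the null symbol. The sketch below illustrates only that rule; the function name `ctc_collapse` and the constant `BLANK` are illustrative names, not taken from the slides.

```python
from itertools import groupby

BLANK = "φ"  # the extra "null" symbol from the slide


def ctc_collapse(frames, blank=BLANK):
    """Merge consecutive repeated symbols, then drop the blank symbol."""
    merged = [symbol for symbol, _ in groupby(frames)]
    return "".join(symbol for symbol in merged if symbol != blank)


# The two per-frame output sequences shown on the slide.
print(ctc_collapse(list("好φφ棒φφφφ")))
print(ctc_collapse(list("好φφ棒φ棒φφ")))
```

The null symbol is what allows the second sequence to keep both 棒 characters: without a φ between them, the two repeats would be merged into one.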

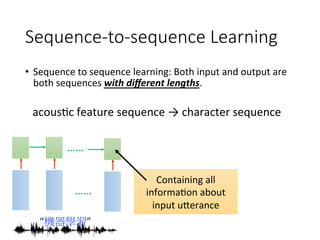

![Sequence-to-sequence Learning](https://image.slidesharecdn.com/random-160717002419/85/slide-9-320.jpg)

• Sequence-to-sequence learning: input and output are both sequences, with different lengths. [Ilya Sutskever, NIPS’14][Dzmitry Bahdanau, arXiv’15]

• Example output: “機器學習” (“machine learning”), generated one character at a time: 機, 器, 學, 習, 。

• Add a symbol “。” (句點, the period) so the output sequence has an explicit end
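The role of the added “。” symbol can be sketched as a decoding loop that stops as soon as the end symbol is produced. This is a minimal illustration, not the model from the slides: `toy_decoder` is a hypothetical stand-in that replays a fixed target, where a real system would use a trained network conditioned on the input speech.

```python
END = "。"  # end-of-sequence symbol, as on the slide


def greedy_decode(next_symbol, max_len=20):
    """Emit symbols one at a time until the end symbol appears."""
    output = []
    while len(output) < max_len:
        symbol = next_symbol(output)
        if symbol == END:
            break
        output.append(symbol)
    return "".join(output)


def toy_decoder(prefix, target="機器學習" + END):
    """Hypothetical decoder step: return the next symbol of a fixed target."""
    return target[len(prefix)]


print(greedy_decode(toy_decoder))
```

The `max_len` cap is a safety limit assumed here so the loop also terminates if the end symbol is never emitted.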

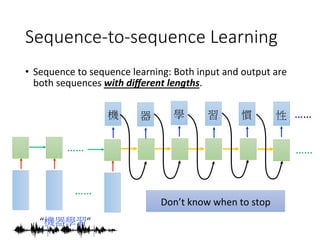

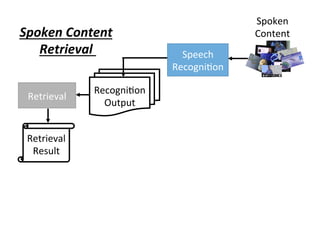

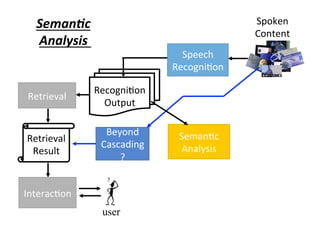

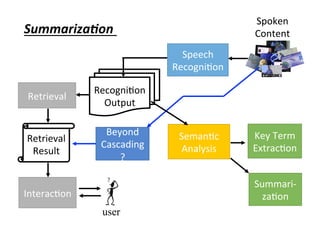

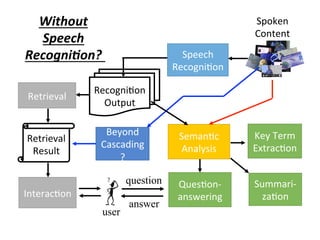

![Key Term Extraction](https://image.slidesharecdn.com/random-160717002419/85/slide-22-320.jpg)

Diagram labels: Spoken Content, Speech Recognition, Recognition Output, Retrieval, Retrieval Result, user, Interaction, Beyond Cascading, ?, Semantic Analysis, Key Term Extraction.

Highlighted topic: Key Term Extraction [Interspeech 2015] (with 沈昇勳)

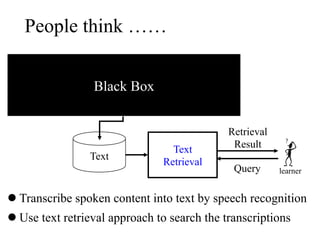

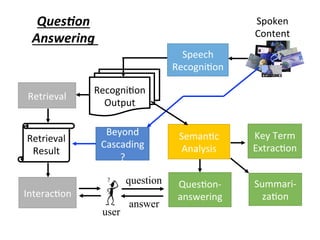

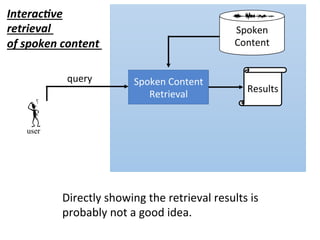

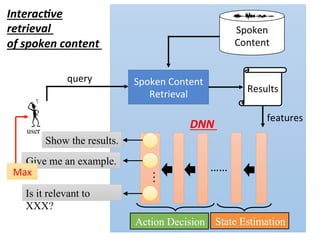

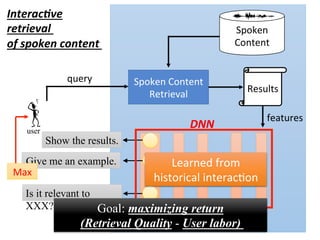

![Interactive retrieval of spoken content](https://image.slidesharecdn.com/random-160717002419/85/slide-43-320.jpg)

Interactive retrieval of spoken content [Interspeech 2012][ICASSP 2013]

Diagram labels: user, query, Spoken Content Retrieval, Results, Spoken Content, features, State Estimation, state, Action Decision, action.

¤ State: the degree of clarity from the retrieval results

¤ The policy π(s) is a function

¤ Input: state s, output: action a

¤ Decide the actions by intrinsic policy π(s)
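The idea of a policy as a function from state to action can be sketched as follows. Everything concrete here is an assumption made for illustration: the state is taken to be a clarity score scaled to [0, 1], and the two action names and the threshold are hypothetical rather than the action set or policy used in the cited work.

```python
State = float  # assumption: clarity of the retrieval results, scaled to [0, 1]
Action = str   # assumption: actions are represented by plain string labels


def threshold_policy(state: State, threshold: float = 0.5) -> Action:
    """Hypothetical policy π(s): map a clarity score to an action."""
    if not 0.0 <= state <= 1.0:
        raise ValueError("state must lie in [0, 1]")
    if state >= threshold:
        return "show_results"  # results look clear enough to present
    return "ask_user_for_more_information"  # results are ambiguous


print(threshold_policy(0.9))
print(threshold_policy(0.2))
```

A hand-set threshold is only the simplest possible π(s); it stands in for whatever policy the system actually uses.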

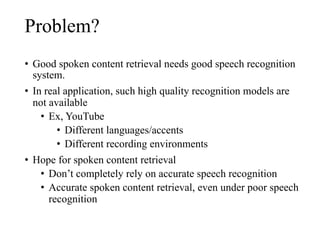

![Spoken Content Retrieval without Speech Recognition](https://image.slidesharecdn.com/random-160717002419/85/slide-58-320.jpg)

• Computing similarity between spoken queries and audio files on signal level

Diagram labels: user, spoken query “US President”, Handheld device, Spoken Content.

[Hazen, ASRU 09][Zhang Glass, ASRU 09][Chan Lee, Interspeech 10][Zhang Glass, ICASSP 11][Gupta, Interspeech 11][Zhang Glass, Interspeech 11][Huijbregts, ICASSP 11][Chan Lee, Interspeech 11]
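One widely used way to compare two audio signals of different lengths at the signal level is dynamic time warping (DTW) over frame-level feature vectors. The sketch below shows plain whole-sequence DTW as an illustration only; the slide does not say which matching method is used, and practical systems typically need a subsequence or segmental variant to locate a short query inside a long recording. The feature vectors are assumed to be precomputed, and `dtw_distance` is an illustrative name.

```python
import math


def dtw_distance(query, document):
    """Accumulated DTW cost between two sequences of feature vectors."""
    n, m = len(query), len(document)
    # cost[i][j] = best cost of aligning the first i query frames
    # with the first j document frames.
    cost = [[math.inf] * (m + 1) for _ in range(n + 1)]
    cost[0][0] = 0.0
    for i in range(1, n + 1):
        for j in range(1, m + 1):
            frame_dist = math.dist(query[i - 1], document[j - 1])
            cost[i][j] = frame_dist + min(
                cost[i - 1][j],      # query frame advances alone
                cost[i][j - 1],      # document frame advances alone
                cost[i - 1][j - 1],  # both advance together
            )
    return cost[n][m]


# Toy one-dimensional "features": the same pattern spoken at half speed.
fast = [[0.0], [1.0]]
slow = [[0.0], [0.0], [1.0], [1.0]]
print(dtw_distance(fast, slow))
```

A lower cost means the two signals are more similar after time alignment, so audio files can be ranked by their cost against the spoken query.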