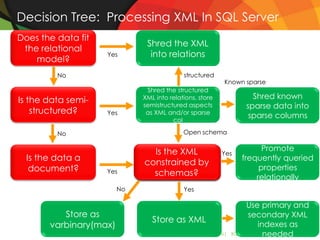

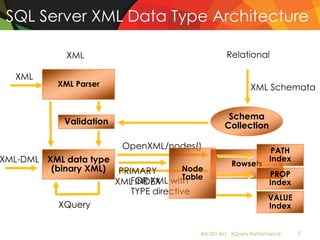

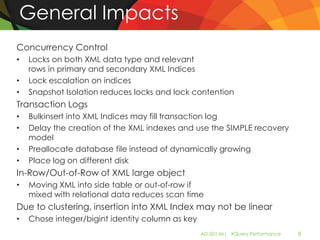

The document outlines best practices and performance tuning techniques for using XML queries in SQL Server, highlighting when to use XML and how to optimize performance with various methods and indexing strategies. Key topics include XML data type architecture, the importance of using appropriate XML models, the use of XQuery methods for effective querying, and the creation and maintenance of XML indexes. The document provides practical examples and guidance to improve performance metrics for XML data handling within SQL Server.

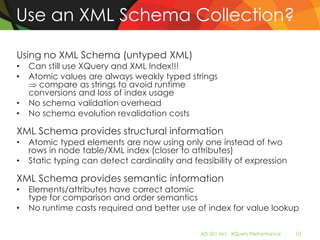

![Choose The Right XML Model

• Element-centric versus attribute-centric

<Customer><name>Joe</name></Customer>

<Customer name="Joe" />

+: Attributes often better performing querying

–: Parsing Attributes uniqueness check

• Generic element names with type attribute vs Specific

element names

<Entity type="Customer">

<Prop type="Name">Joe</Prop>

</Entity>

<Customer><name>Joe</name></Customer>

+: Specific names shorter path expressions

+: Specific names no filter on type attribute

/Entity[@type="Customer"]/Prop[@type="Name"] vs /Customer/name

• Wrapper elements

<Orders><Order id="1"/></Orders>

+: No wrapper elements smaller XML, shorter path expressions

AD-501-M| XQuery Performance 9](https://image.slidesharecdn.com/ad501mxquerymrys-111027014257-phpapp01/85/SQLPASS-AD501-M-XQuery-MRys-9-320.jpg)

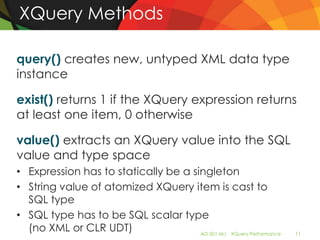

![sql:column()/sql:variable()

Map SQL value and type into XQuery values and types in context of XQuery or

XML-DML

• sql:variable(): accesses a SQL variable/parameter

declare @value int

set @value=42

select * from T

where

T.x.exist('/a/b[@id=sql:variable("@value")]')=1

• sql:column(): accesses another column value

tables: T(key int, x xml), S(key int, val int)

select * from T join S on T.key=S.key

where T.x.exist('/a/b[@id=sql:column("S.val")]')=1

• Restrictions in SQL Server:

No XML, CLR UDT, datetime, or deprecated text/ntext/image

AD-501-M| XQuery Performance 13](https://image.slidesharecdn.com/ad501mxquerymrys-111027014257-phpapp01/85/SQLPASS-AD501-M-XQuery-MRys-13-320.jpg)

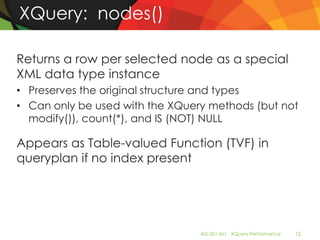

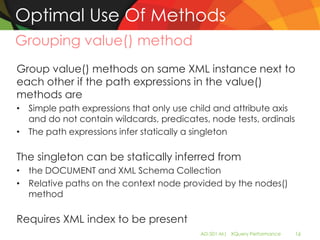

![Optimal Use Of Methods

How to Cast from XML to SQL

BAD:

CAST( CAST(xmldoc.query('/a/b/text()') as

nvarchar(500)) as int)

GOOD:

xmldoc.value('(/a/b/text())[1]', 'int')

BAD:

node.query('.').value('@attr',

'nvarchar(50)')

GOOD:

node.value('@attr', 'nvarchar(50)')

AD-501-M| XQuery Performance 15](https://image.slidesharecdn.com/ad501mxquerymrys-111027014257-phpapp01/85/SQLPASS-AD501-M-XQuery-MRys-15-320.jpg)

![Optimal Use of Methods

Using the right method to join and compare

Use exist() method, sql:column()/sql:variable() and an

XQuery comparison for checking for a value or joining

if secondary XML indices present

BAD:*

select doc

from doc_tab join authors

on doc.value('(/doc/mainauthor/lname/text())[1]',

'nvarchar(50)') = lastname

GOOD:

select doc

from doc_tab join authors

on 1 = doc.exist('/doc/mainauthor/lname/text()[. =

sql:column("lastname")]')

* If applied on XML variable/no index present, value()

method is most of the time more efficient

AD-501-M| XQuery Performance 17](https://image.slidesharecdn.com/ad501mxquerymrys-111027014257-phpapp01/85/SQLPASS-AD501-M-XQuery-MRys-17-320.jpg)

![Optimal Use of Methods

Avoiding bad costing with nodes()

nodes() without XML index is a Table-valued function (details later)

Bad cardinality estimates can lead to bad plans

• BAD:

select c.value('@id', 'int') as CustID

, c.value('@name', 'nvarchar(50)') as CName

from Customer, @x.nodes('/doc/customer') as N(c)

where Customer.ID = c.value('@id', 'int')

• BETTER (if only one wrapper doc element):

select c.value('@id', 'int') as CustID

, c.value('@name', 'nvarchar(50)') as CName

from Customer, @x.nodes('/doc[1]') as D(d)

cross apply d.nodes('customer') as N(c)

where Customer.ID = c.value('@id', 'int')

Use temp table (insert into #temp select … from nodes()) or Table-

valued parameter instead of XML to get better estimates

AD-501-M| XQuery Performance 18](https://image.slidesharecdn.com/ad501mxquerymrys-111027014257-phpapp01/85/SQLPASS-AD501-M-XQuery-MRys-18-320.jpg)

![Optimal Use Of Methods

Avoiding multiple method evaluations

Use subqueries

• BAD:

SELECT CASE isnumeric (doc.value(

'(/doc/customer/order/price)[1]', 'nvarchar(32)'))

WHEN 1 THEN doc.value(

'(/doc/customer/order/price)[1]', 'decimal(5,2)')

ELSE 0 END

FROM T

• GOOD:

SELECT CASE isnumeric (Price)

WHEN 1 THEN CAST(Price as decimal(5,2))

ELSE 0 END

FROM (SELECT doc.value(

'(/doc/customer/order/price)[1]',

'nvarchar(32)')) as Price FROM T) X

Use subqueries also with NULLIF()

AD-501-M| XQuery Performance 19](https://image.slidesharecdn.com/ad501mxquerymrys-111027014257-phpapp01/85/SQLPASS-AD501-M-XQuery-MRys-19-320.jpg)

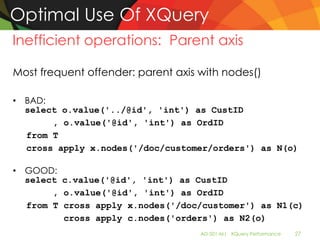

![Optimal Use Of XQuery

Atomization of nodes

Value comparisons, XQuery casts and value() method

casts require atomization of item

• attribute:

/person[@age = 42]

/person[data(@age) = 42]

• Atomic typed element:

/person[age = 42] /person[data(age) = 42]

• Untyped, mixed content typed element (adds UDX):

/person[age = 42] /person[data(age) = 42]

/person[string(age) = 42]

• If only one text node for untyped element (better):

/person[age/text() = 42]

/person[data(age/text()) = 42]

• value() method on untyped elements:

value('/person/age', 'int')

value('/person/age/text()', 'int')

String() aggregates all text nodes, prohibits index use

AD-501-M| XQuery Performance 23](https://image.slidesharecdn.com/ad501mxquerymrys-111027014257-phpapp01/85/SQLPASS-AD501-M-XQuery-MRys-23-320.jpg)

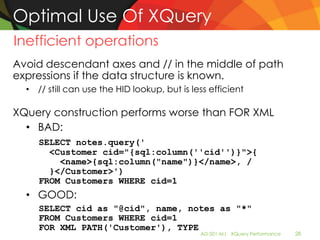

![Optimal Use Of XQuery

Casting Values

Value comparisons require casts and type promotion

• Untyped attribute:

/person[@age = 42] /person[xs:decimal(@age) = 42]

• Untyped text node():

/person[age/text() = 42]

/person[xs:decimal(age/text()) = 42]

• Typed element (typed as xs:int):

/person[salary = 3e4] /person[xs:double(salary) =

3e4]

Casting is expensive and prohibits index lookup

Tips to avoid casting

• Use appropriate types for comparison (string for untyped)

• Use schema to declare type AD-501-M| XQuery Performance 24](https://image.slidesharecdn.com/ad501mxquerymrys-111027014257-phpapp01/85/SQLPASS-AD501-M-XQuery-MRys-24-320.jpg)

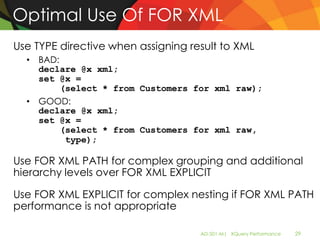

![Optimal Use Of XQuery

Maximize XPath expressions

Single paths are more efficient than twig paths

Avoid predicates in the middle of path expressions

book[@ISBN = "1-8610-0157-6"]/author[first-

name = "Davis"]

/book[@ISBN = "1-8610-0157-6"] "∩"

/book/author[first-name = "Davis"]

Move ordinals to the end of path expressions

• Make sure you get the same semantics!

• /a[1]/b[1] ≠ (/a/b)[1] ≠ /a/b[1]

• (/book/@isbn)[1] is better than/book[1]/@isbn

AD-501-M| XQuery Performance 25](https://image.slidesharecdn.com/ad501mxquerymrys-111027014257-phpapp01/85/SQLPASS-AD501-M-XQuery-MRys-25-320.jpg)

![Optimal Use Of XQuery

Maximize XPath expressions in exist()

Use context item in predicate to lengthen path in exist()

• Existential quantification makes returned node irrelevant

• BAD:

SELECT * FROM docs WHERE 1 = xCol.exist

('/book/subject[text() = "security"]')

• GOOD:

SELECT * FROM docs WHERE 1 = xCol.exist

('/book/subject/text()[. = "security"]')

• BAD:

SELECT * FROM docs WHERE 1 = xCol.exist

('/book[@price > 9.99 and @price < 49.99]')

• GOOD:

SELECT * FROM docs WHERE 1 = xCol.exist

('/book/@price[. > 9.99 and . < 49.99]')

This does not work with or-predicate AD-501-M| XQuery Performance 26](https://image.slidesharecdn.com/ad501mxquerymrys-111027014257-phpapp01/85/SQLPASS-AD501-M-XQuery-MRys-26-320.jpg)

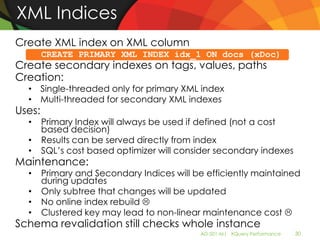

![Takeaway: XML Indices

PRIMARY XML Index – Use when lots of XQuery

FOR VALUE – Useful for queries where values are

more selective than paths such as

//*[.=“Seattle”]

FOR PATH – Useful for Path expressions: avoids

joins by mapping paths to hierarchical index

(HID) numbers. Example: /person/address/zip

FOR PROPERTY – Useful when optimizer chooses

other index (for example, on relational column,

or FT Index) in addition so row is already known

AD-501-M| XQuery Performance 35](https://image.slidesharecdn.com/ad501mxquerymrys-111027014257-phpapp01/85/SQLPASS-AD501-M-XQuery-MRys-35-320.jpg)

![Use of Full-Text Index for Optimization

Can provide improvement for XQuery contains() queries

Query for documents where section title contains “optimization”

Use Fulltext index to prefilter candidates (includes false positives)

SELECT * FROM docs

WHERE contains(xCol, 'optimization')

1 = xCol.exist('

/book/section/title/text()[contains(.,"optimization")]

AND 1 = xCol.exist('

')

/book/section/title/text()[contains(.,"optimization")]

')

AD-501-M| XQuery Performance 39](https://image.slidesharecdn.com/ad501mxquerymrys-111027014257-phpapp01/85/SQLPASS-AD501-M-XQuery-MRys-39-320.jpg)