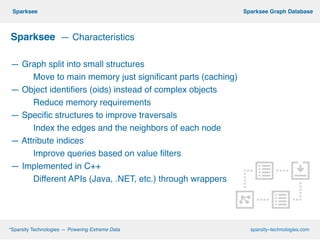

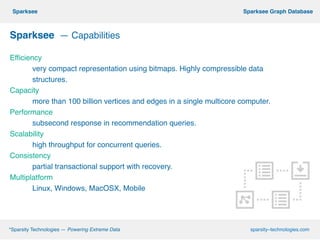

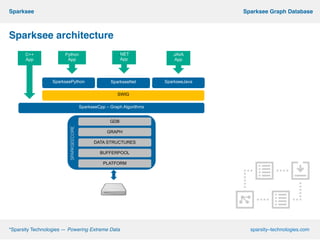

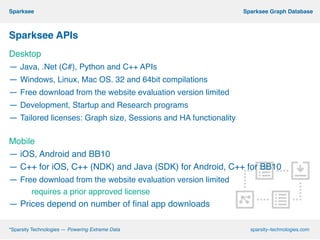

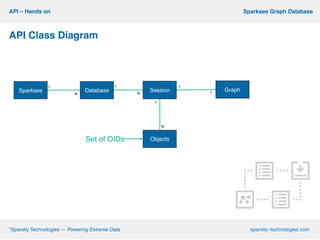

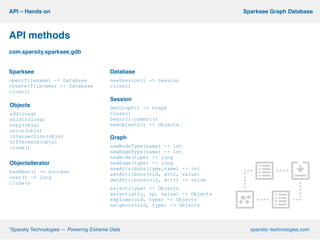

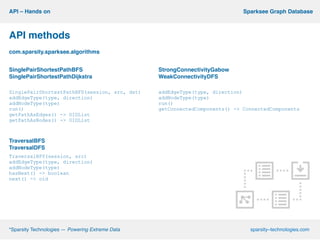

This document describes Sparksee, a graph database created by Sparsity Technologies. Sparksee is designed for large, labeled graphs and uses vertical partitioning and bitmaps to store graph data compactly. It is aimed at high-performance queries and analytics on data such as social networks and bibliographic databases. The document outlines Sparksee's architecture and its capabilities, including efficiency, capacity, and scalability. It also describes the desktop and mobile APIs available for accessing Sparksee and provides examples of common API methods.
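
To make the storage idea concrete, here is a minimal Python sketch of vertical partitioning with bitmaps: each attribute is stored on its own, and each attribute value maps to a bitmap whose set bits are the identifiers of the objects holding that value. This is an illustration only and not Sparksee's API or internal layout. The names `BitmapAttribute`, `set`, `select`, and `oids`, and the use of Python integers as uncompressed bitmaps, are assumptions made for this example.

```python
from collections import defaultdict


class BitmapAttribute:
    """One vertically partitioned attribute: value -> bitmap of object ids.

    Hypothetical illustration; a Python int stands in for a bitmap.
    """

    def __init__(self):
        self.value_to_bitmap = defaultdict(int)

    def set(self, oid, value):
        # An object holds at most one value per attribute, so clear its
        # bit from every existing bitmap before setting the new one.
        for existing in list(self.value_to_bitmap):
            self.value_to_bitmap[existing] &= ~(1 << oid)
        self.value_to_bitmap[value] |= 1 << oid

    def select(self, value):
        # Return the bitmap of all objects with this value (0 if none).
        return self.value_to_bitmap.get(value, 0)


def oids(bitmap):
    """Expand a bitmap into the sorted list of object ids it contains."""
    result = []
    oid = 0
    while bitmap:
        if bitmap & 1:
            result.append(oid)
        bitmap >>= 1
        oid += 1
    return result


# Two attributes stored separately (the "vertical" partitions).
label = BitmapAttribute()
city = BitmapAttribute()

label.set(1, "person")
label.set(2, "person")
label.set(3, "paper")
city.set(1, "Barcelona")
city.set(2, "Paris")

# A conjunctive query becomes a bitwise AND of two bitmaps.
people_in_barcelona = label.select("person") & city.select("Barcelona")
print(oids(people_in_barcelona))
```

In this sketch, combining selection conditions reduces to bitwise operations over the per-value bitmaps, which is the general appeal of a bitmap-based layout. A production system would typically compress the bitmaps rather than store them as plain integers.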