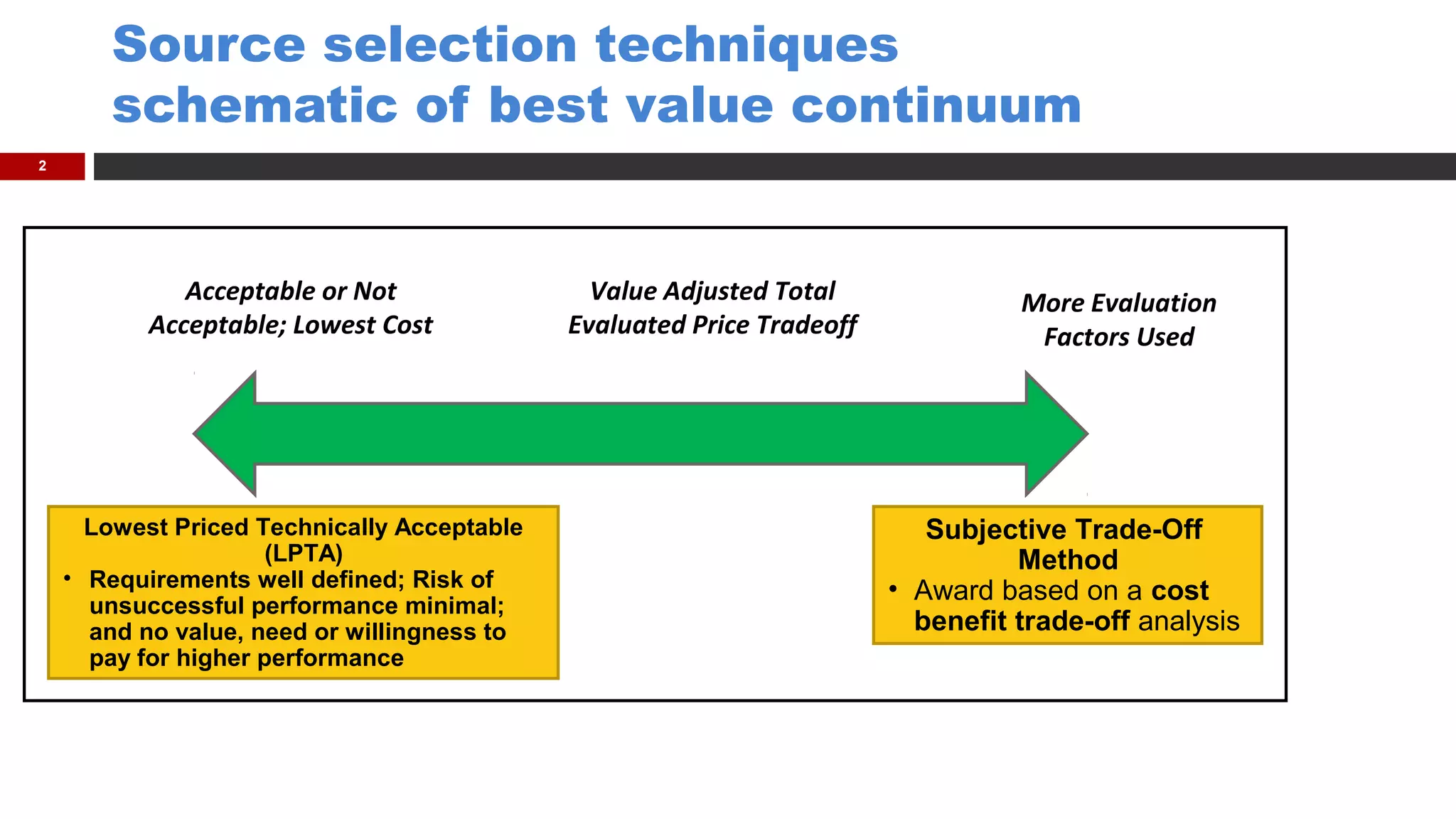

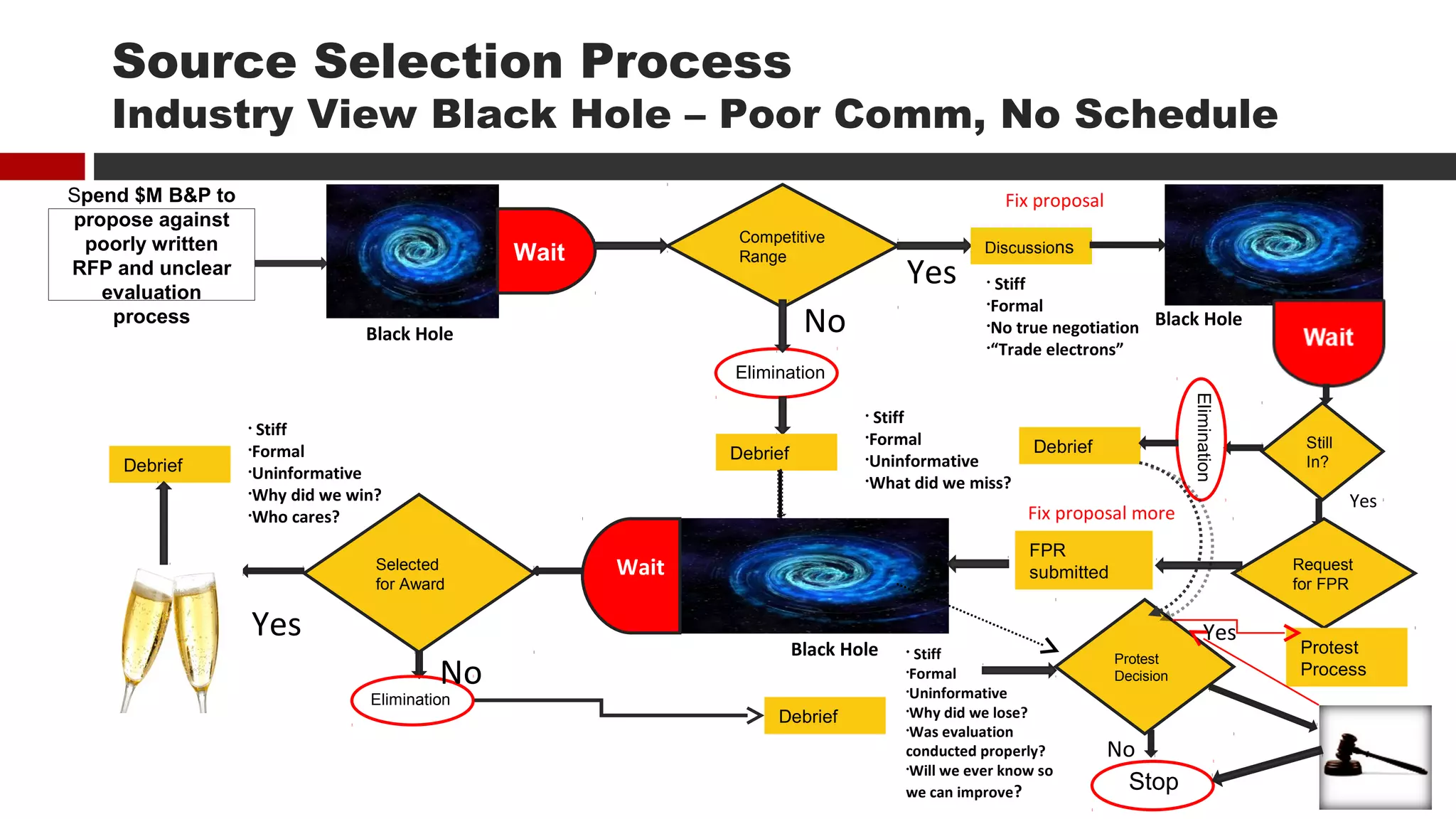

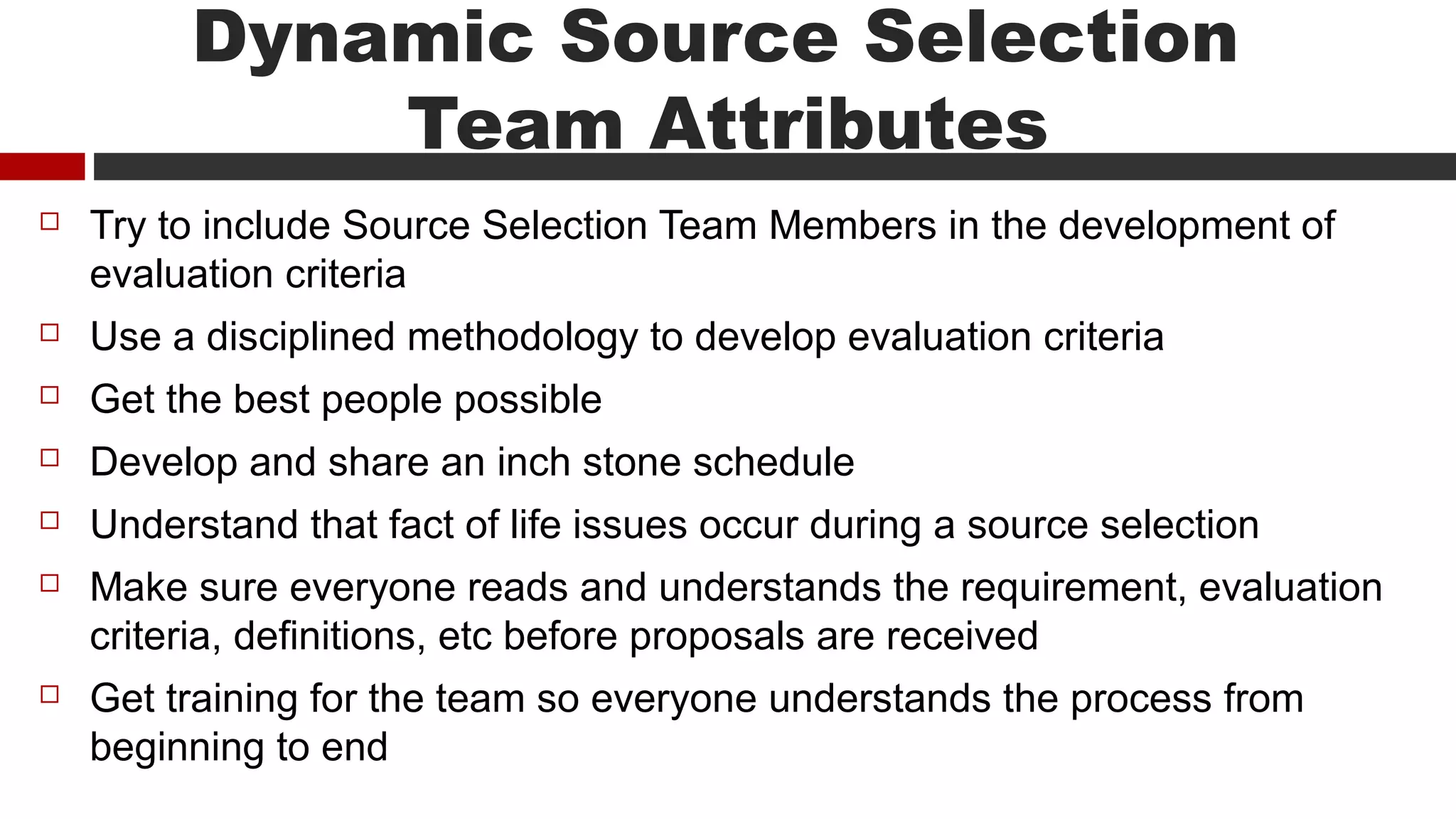

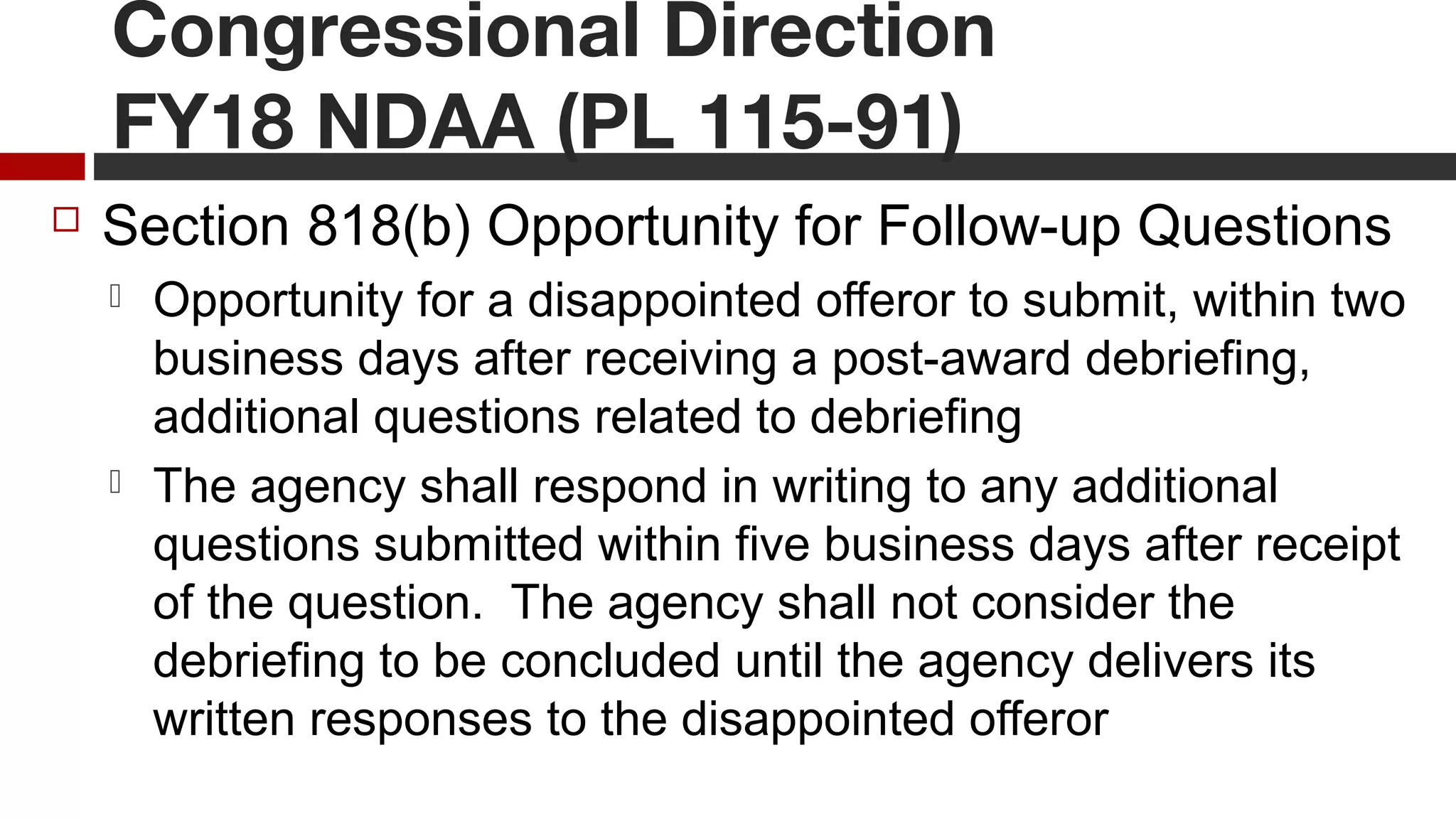

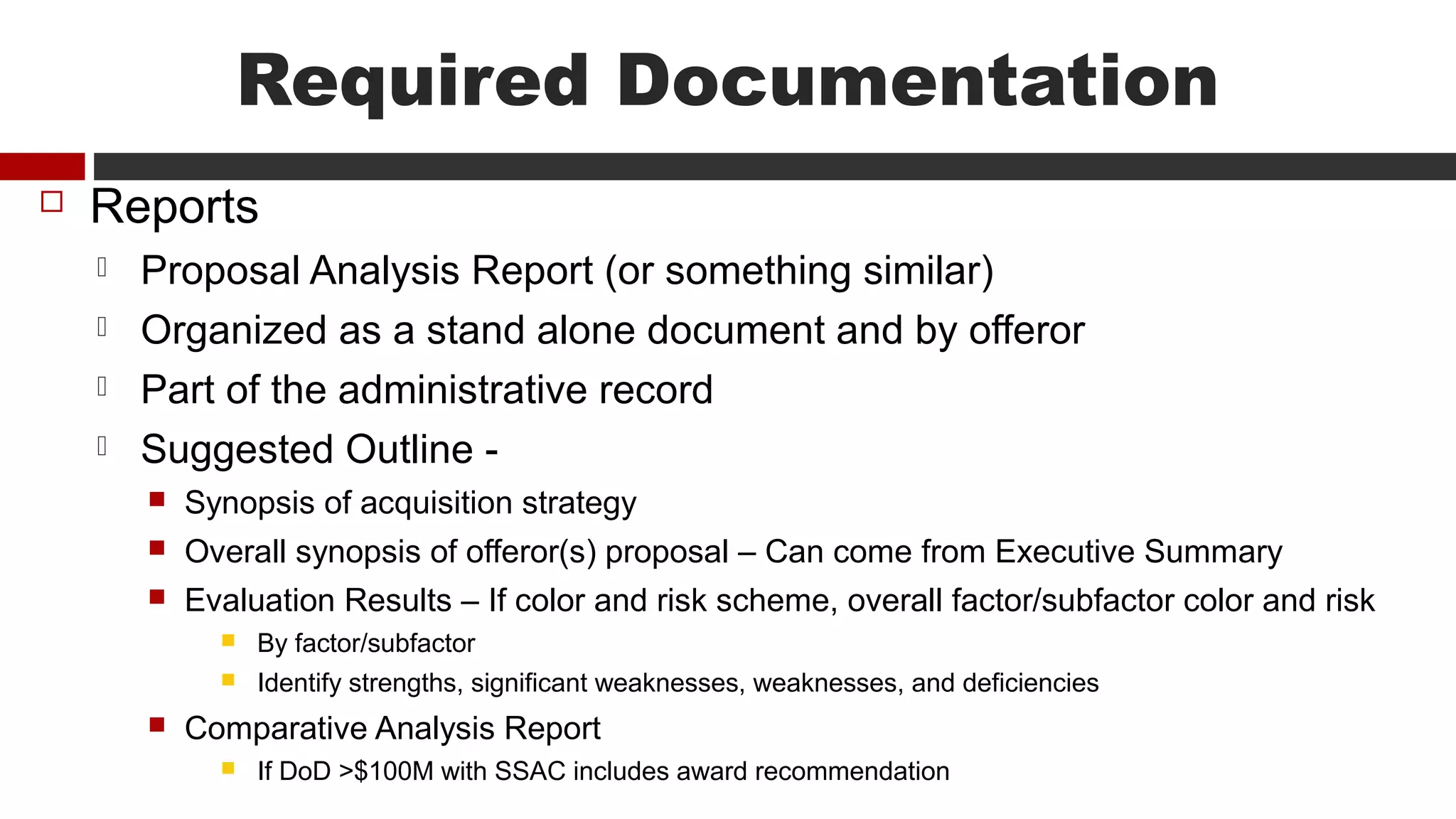

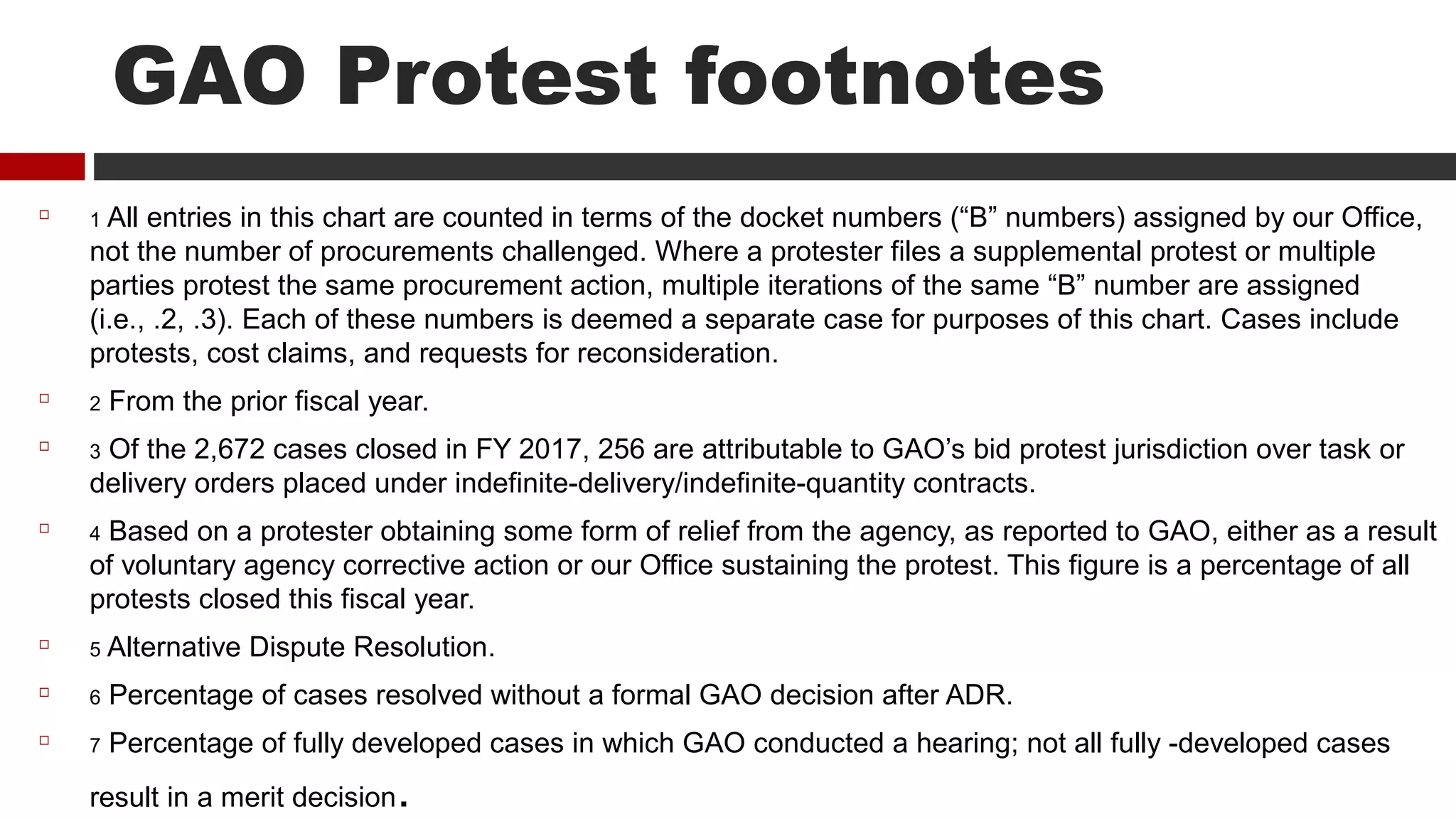

The document discusses source selection techniques and best practices for government contracting. It provides a schematic of the best value continuum, which runs from lowest price technically acceptable to more subjective tradeoff methods. It outlines the typical "black hole" process that industry experiences, from proposal submission through potential debriefs and protests. It then presents lessons learned and best practices for source selections, including training evaluators, developing evaluation criteria, conducting industry engagement, using electronic tools, and documenting the process. It also examines key aspects of the source selection process, such as evaluation factors, consensus meetings, and debriefings.