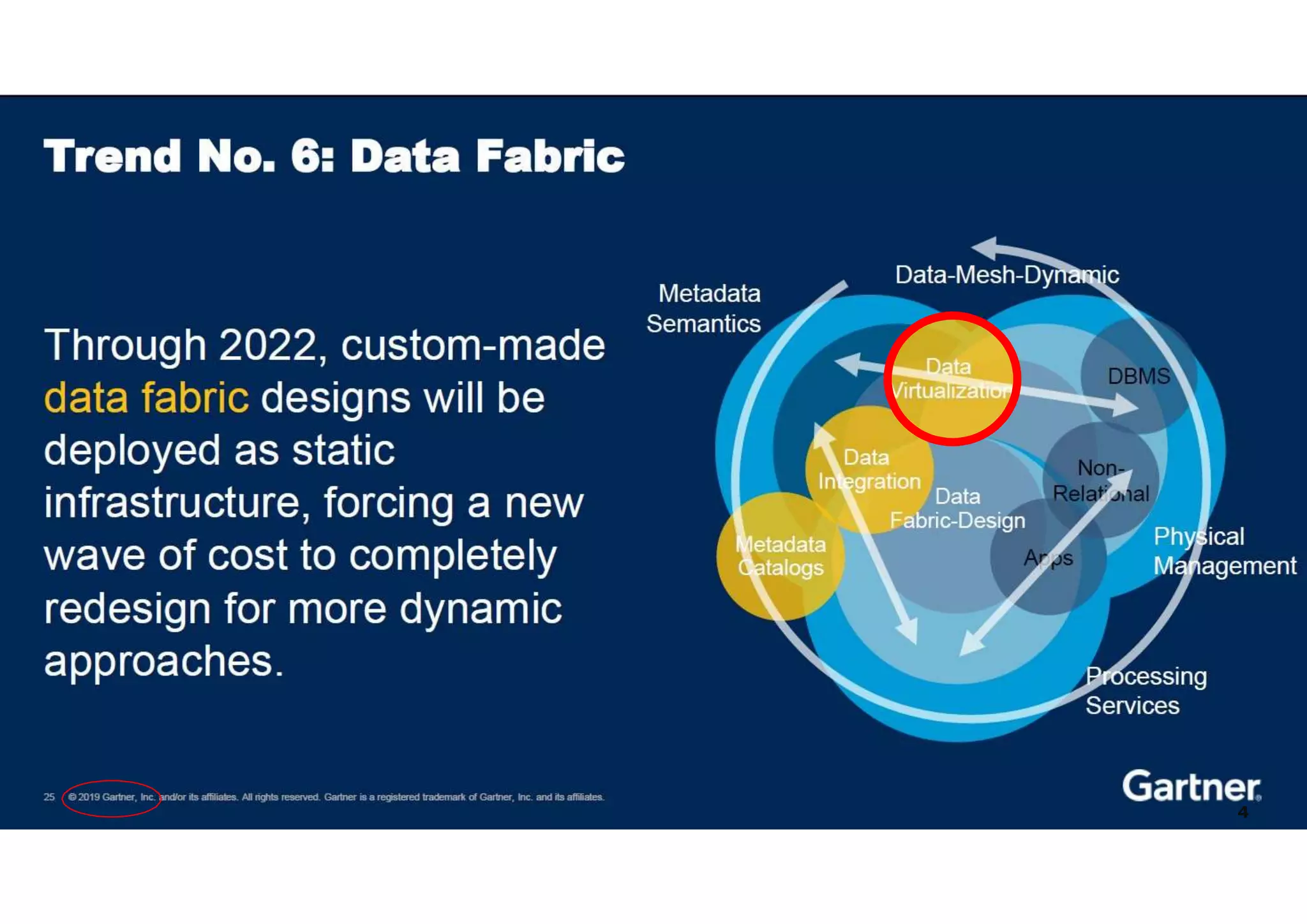

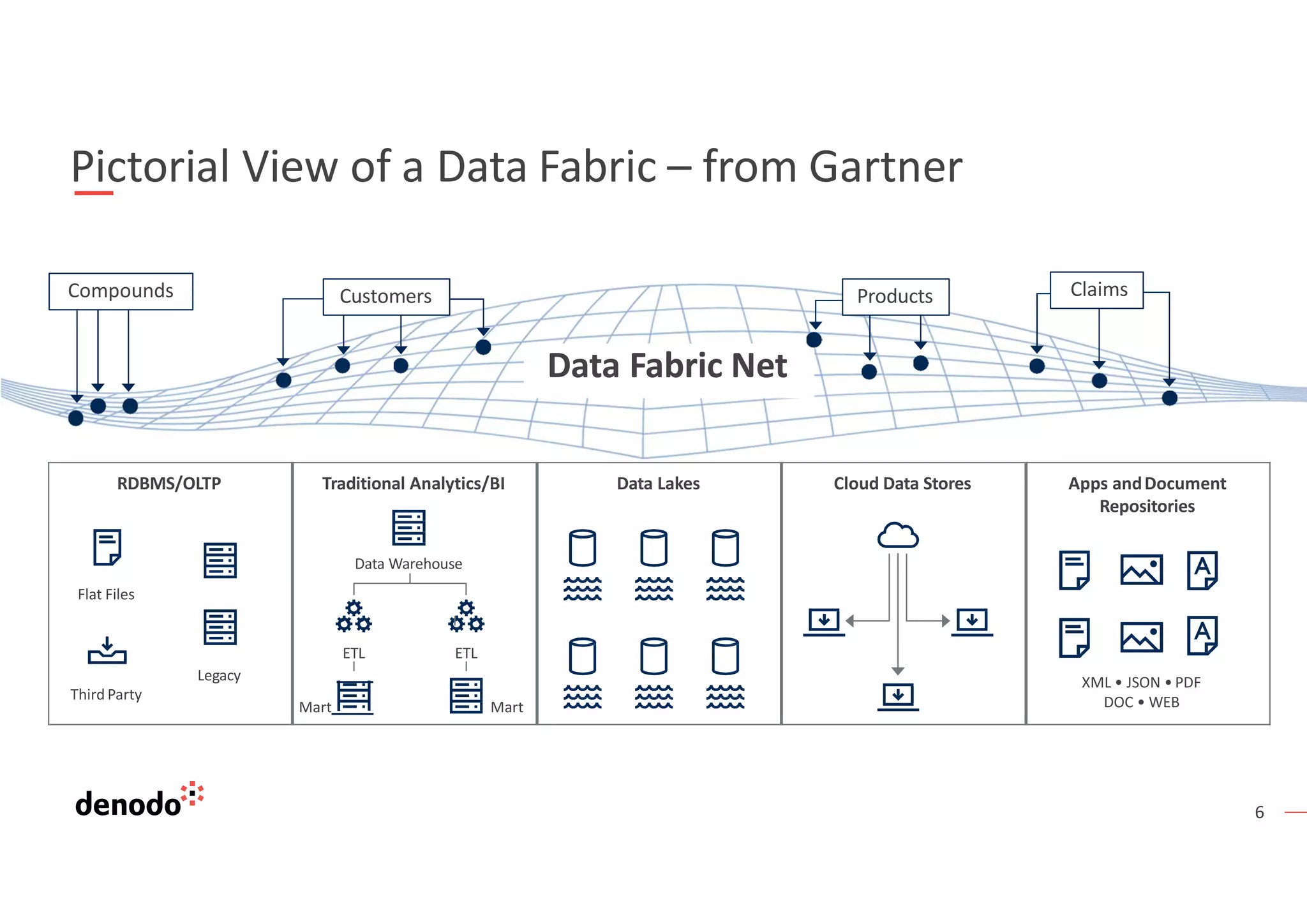

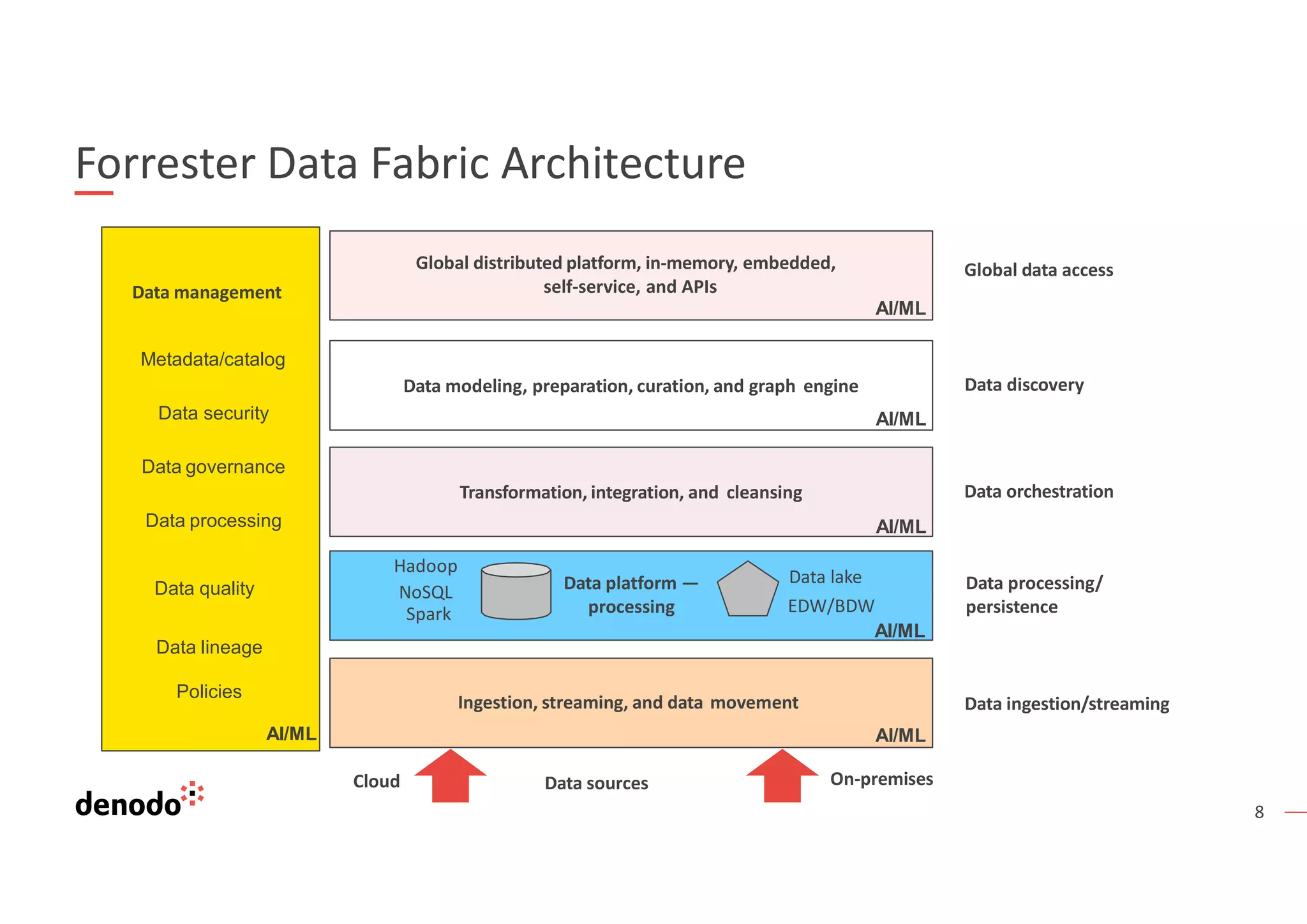

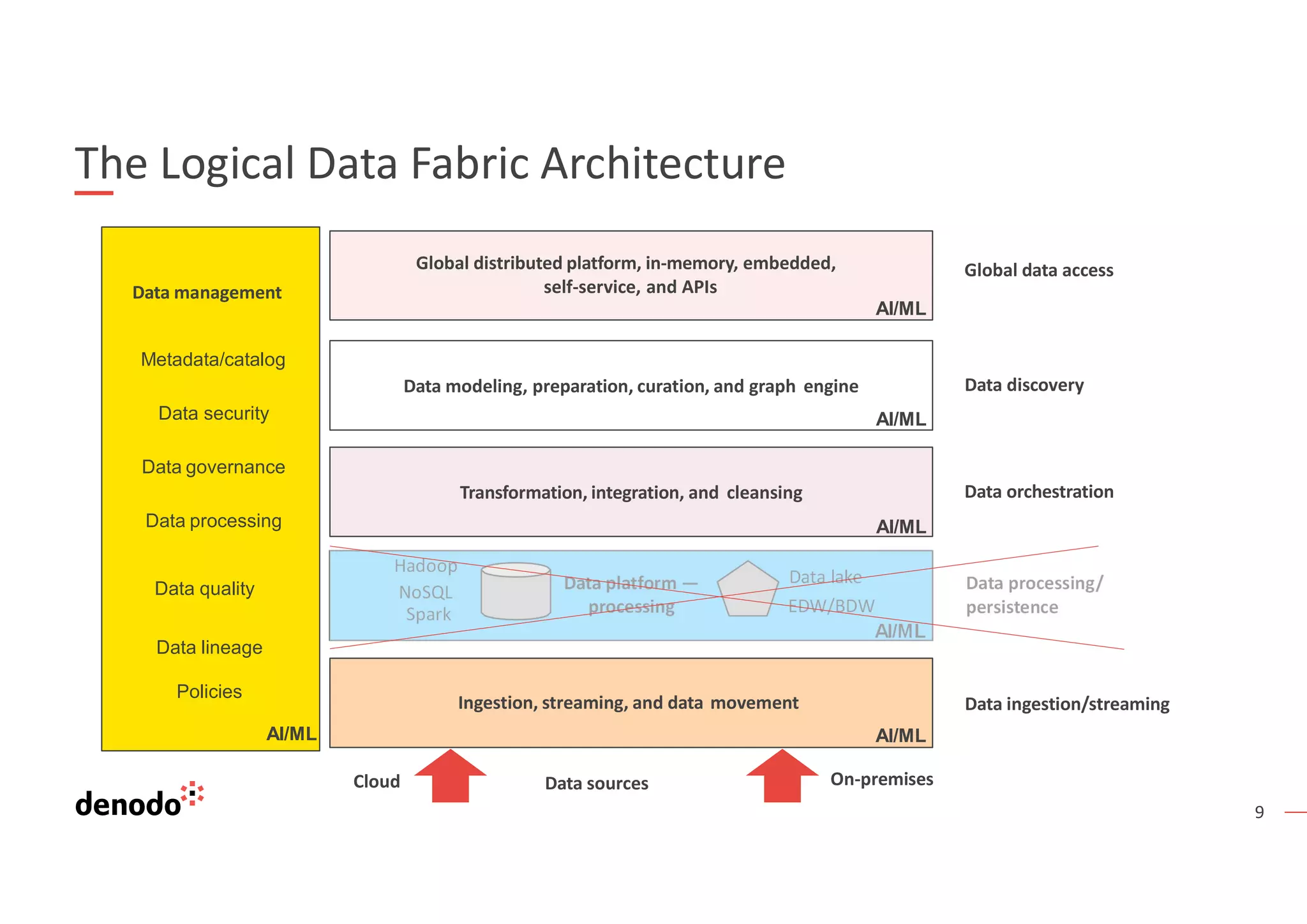

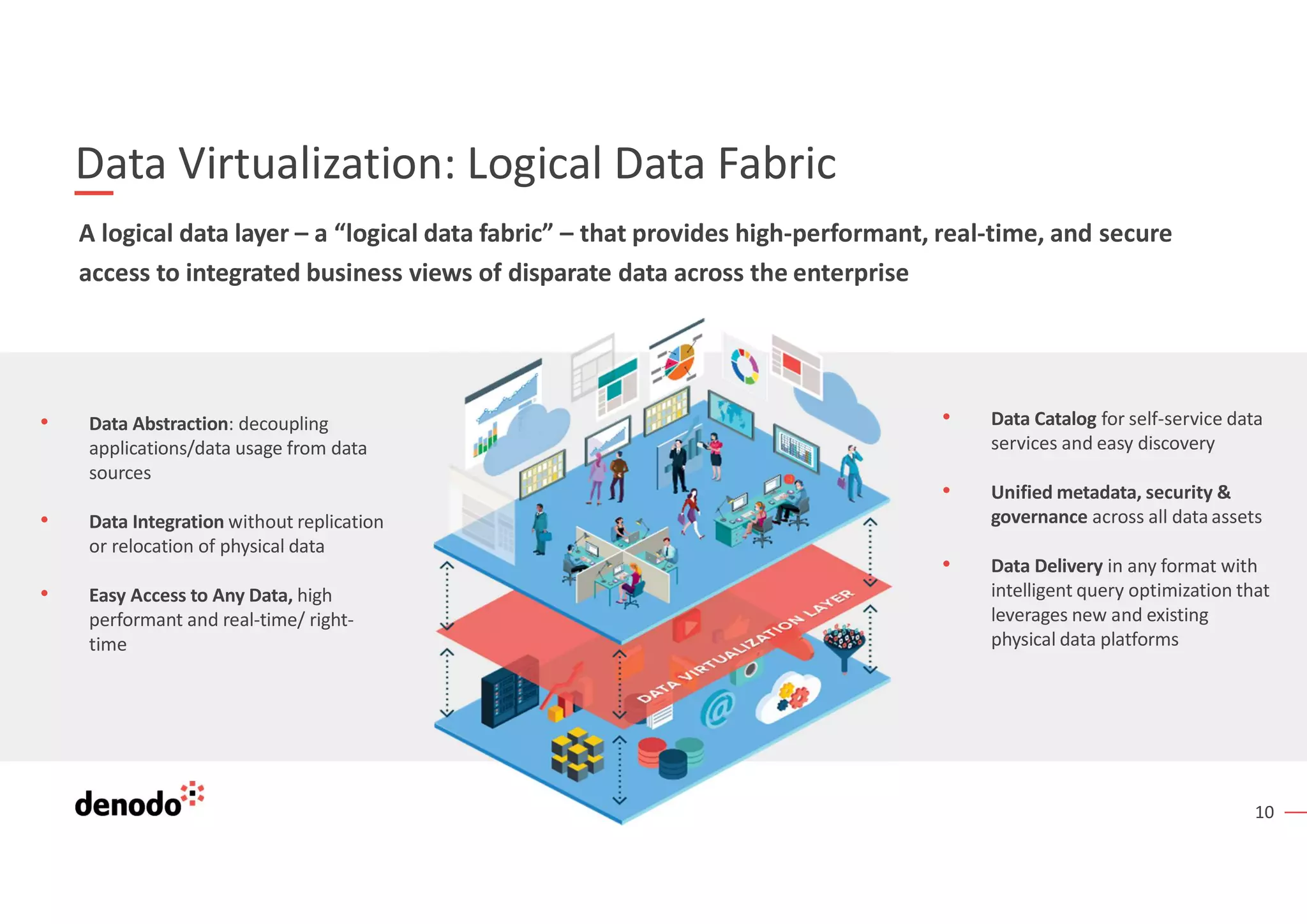

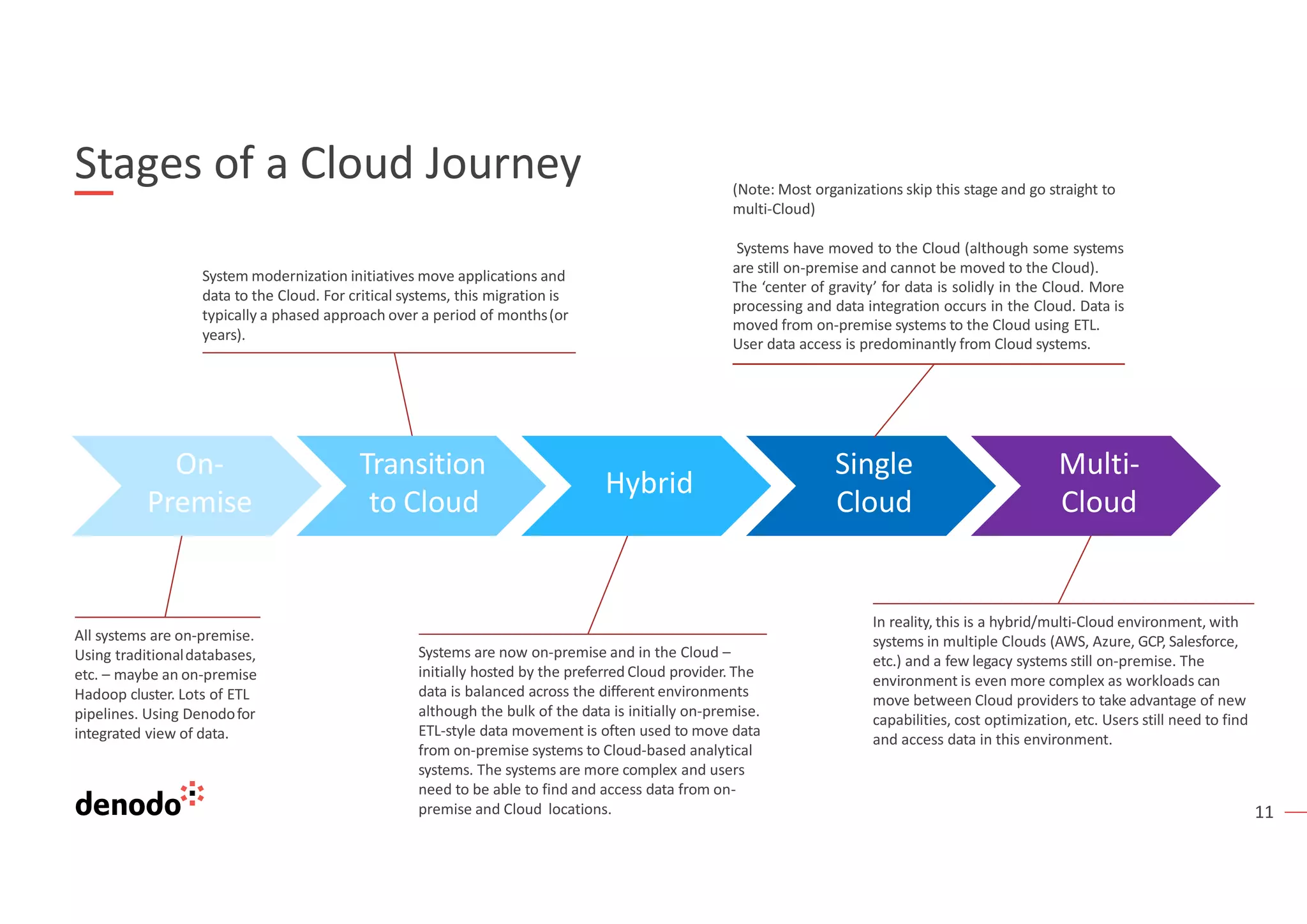

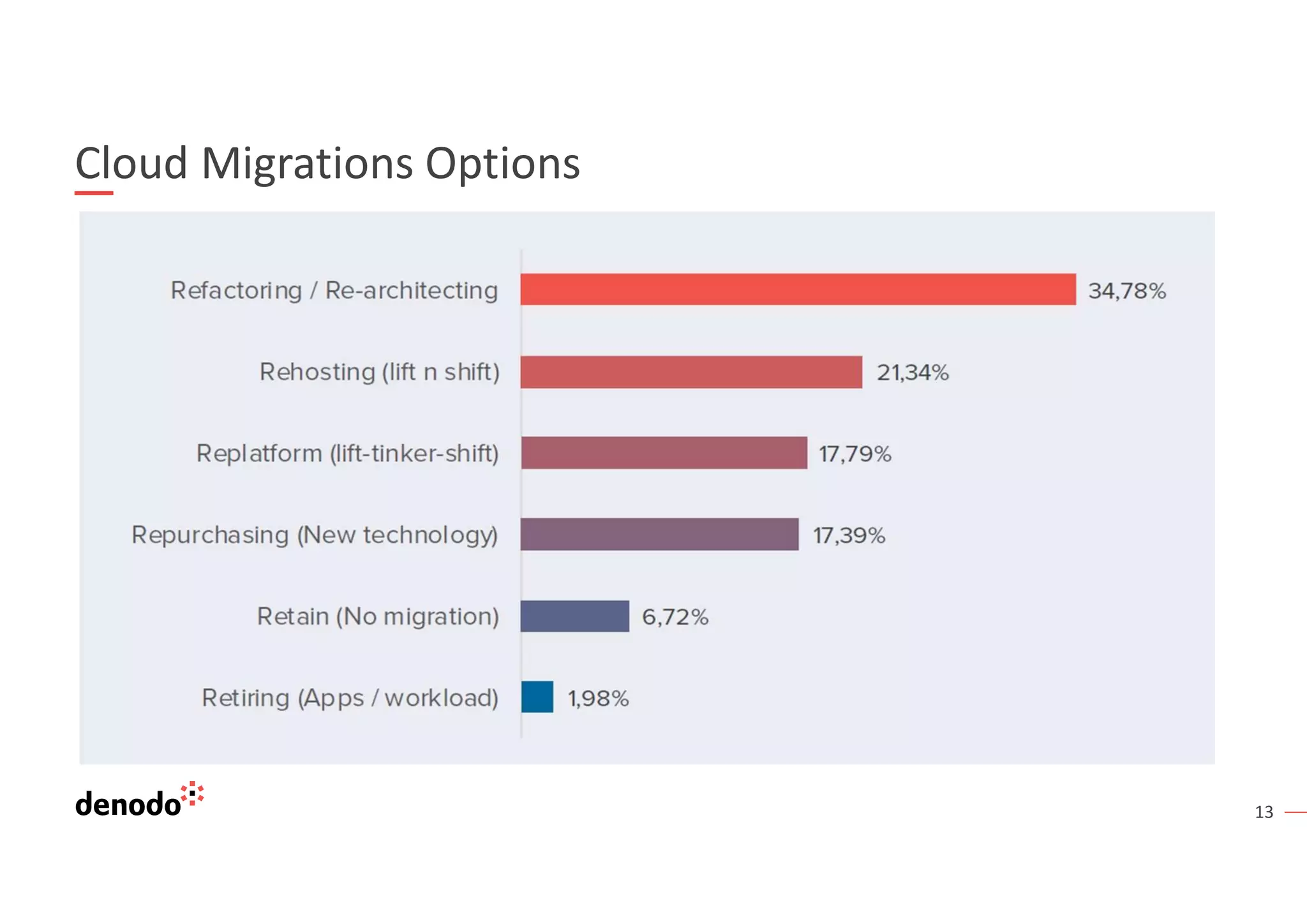

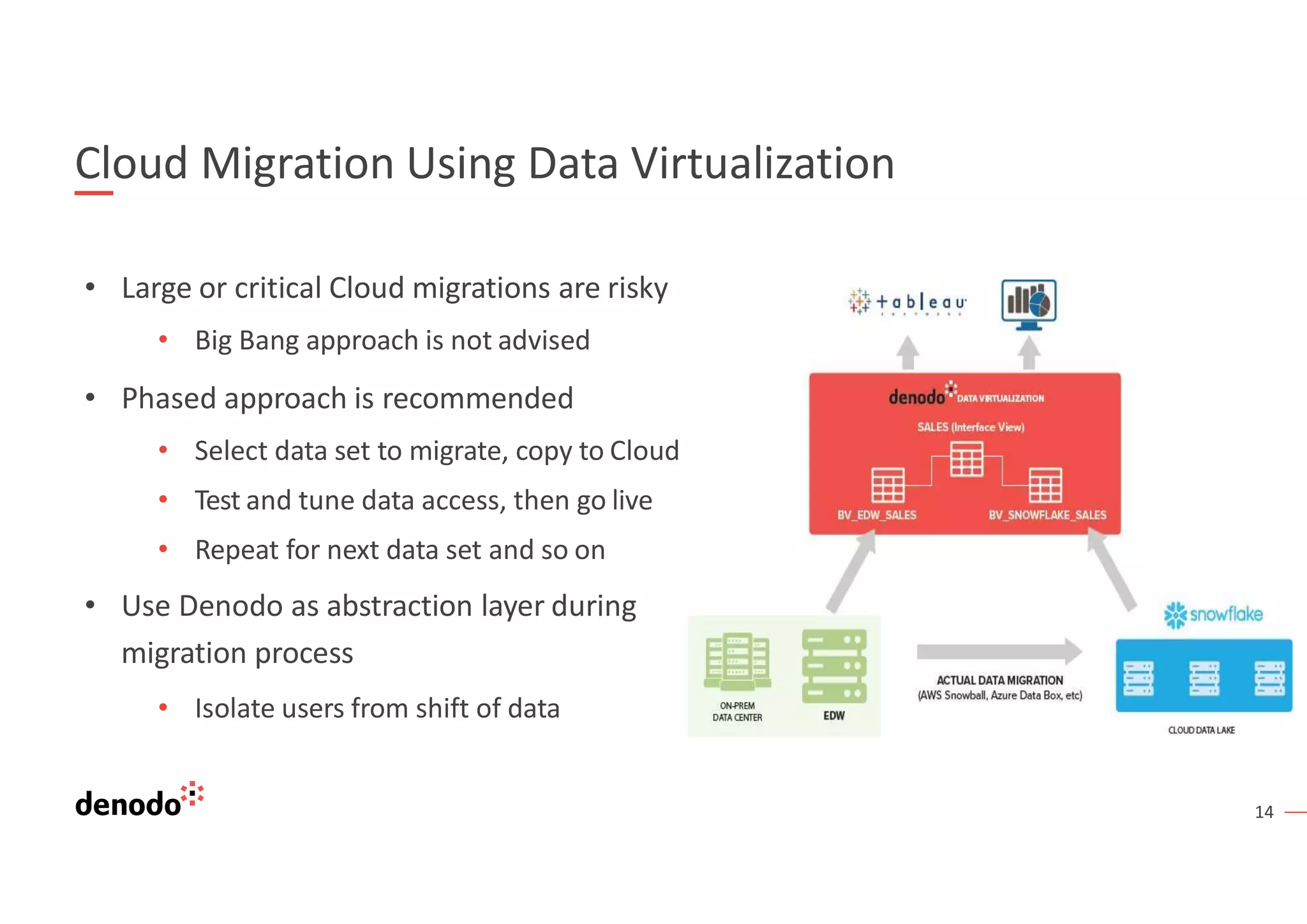

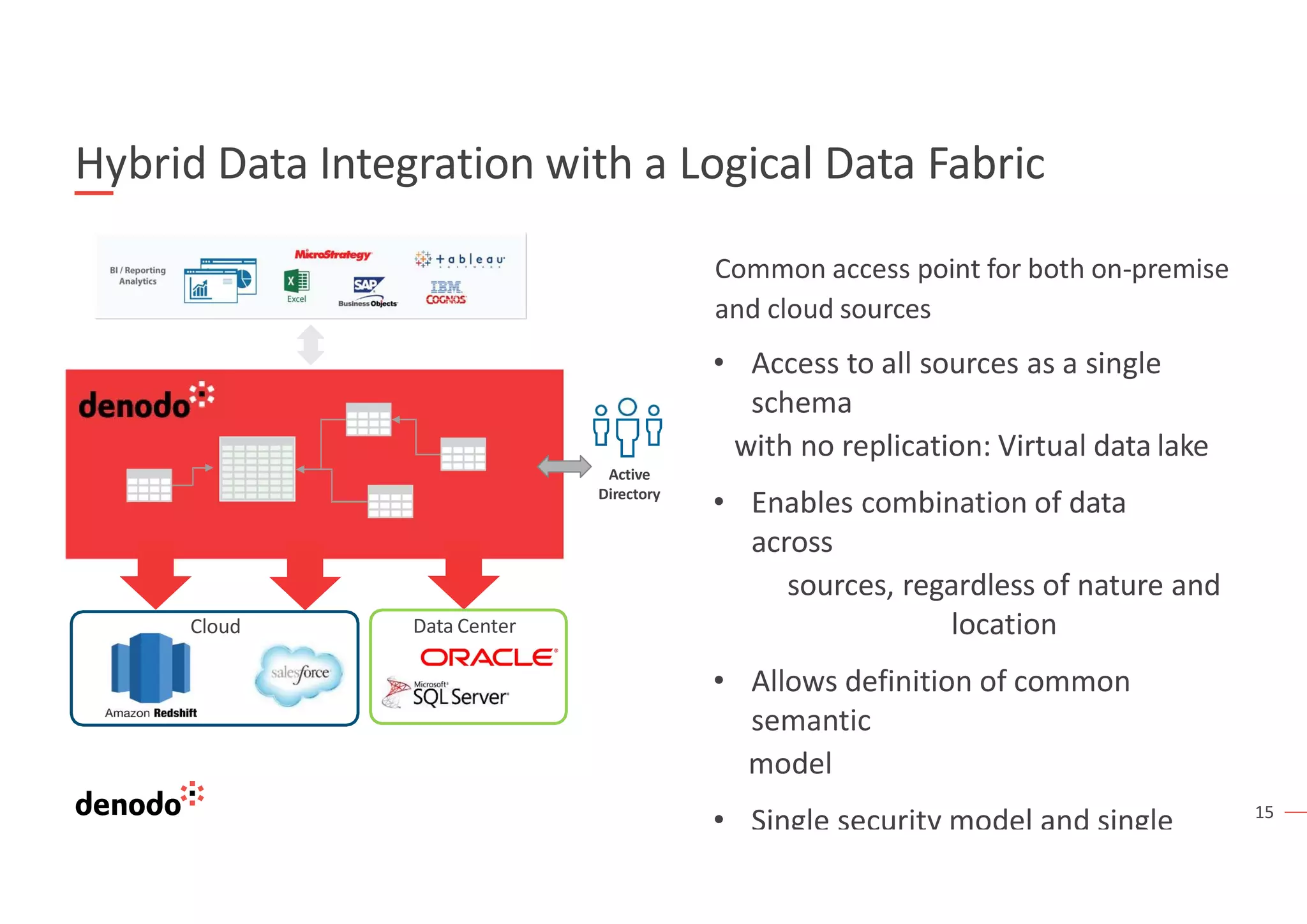

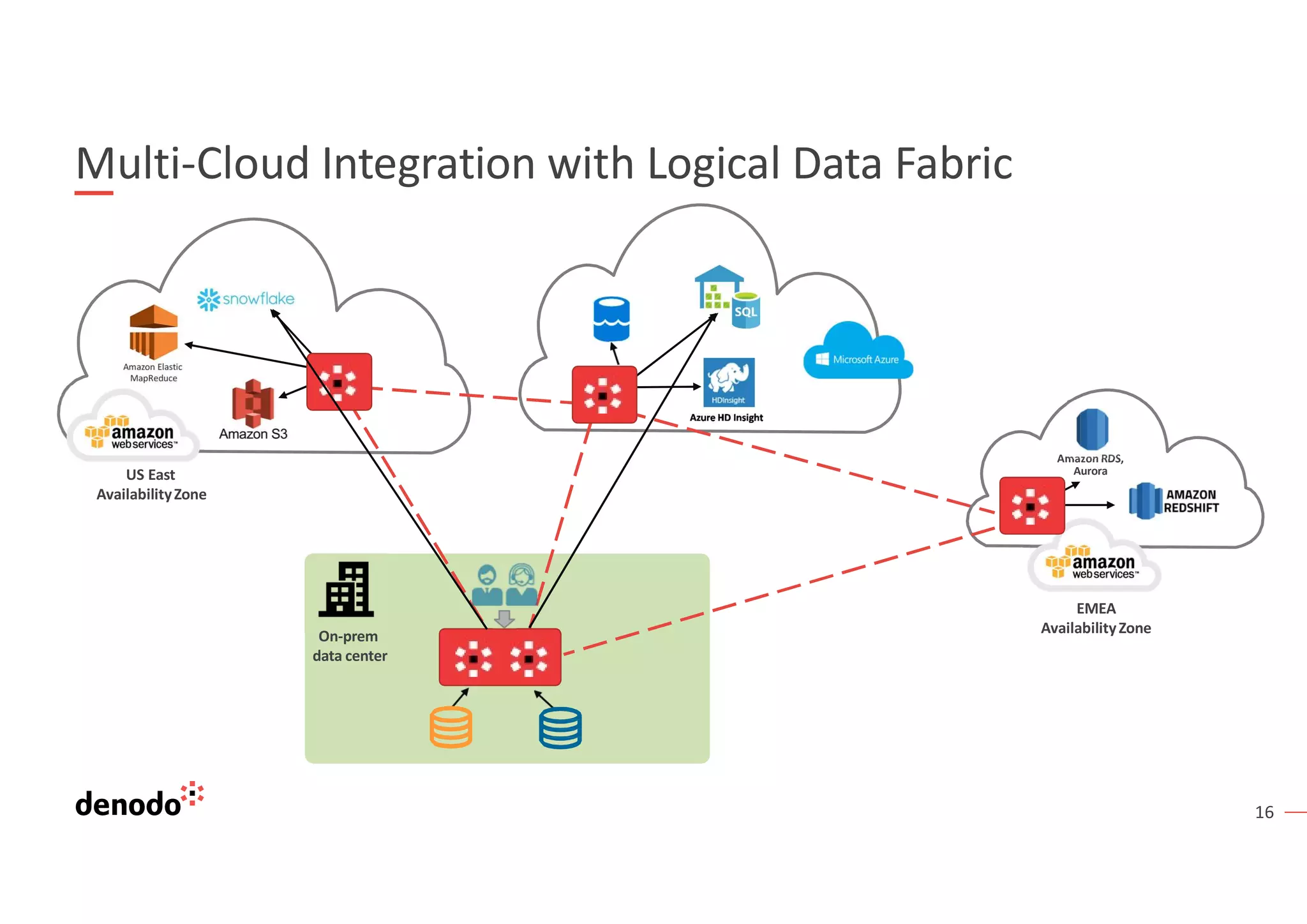

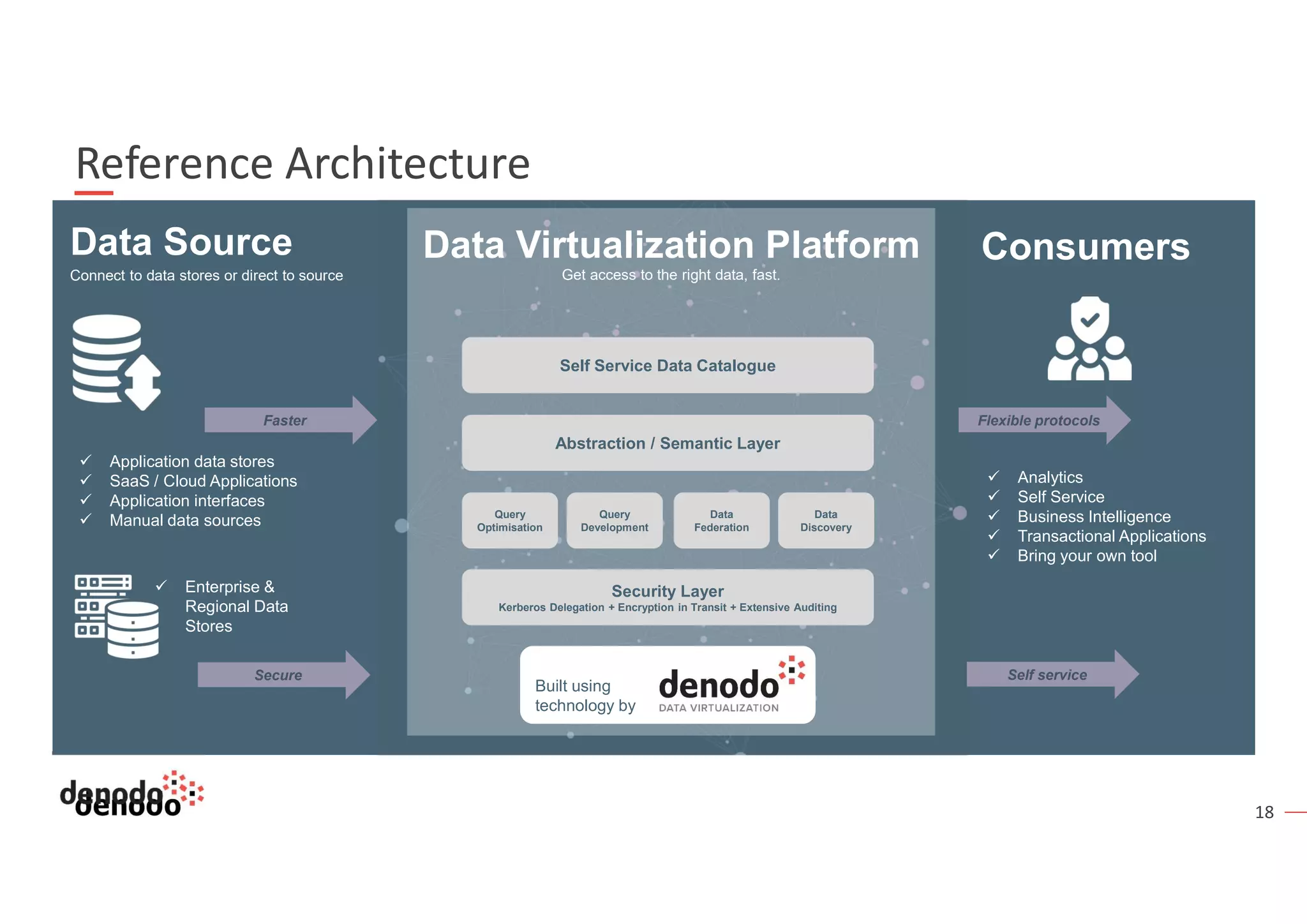

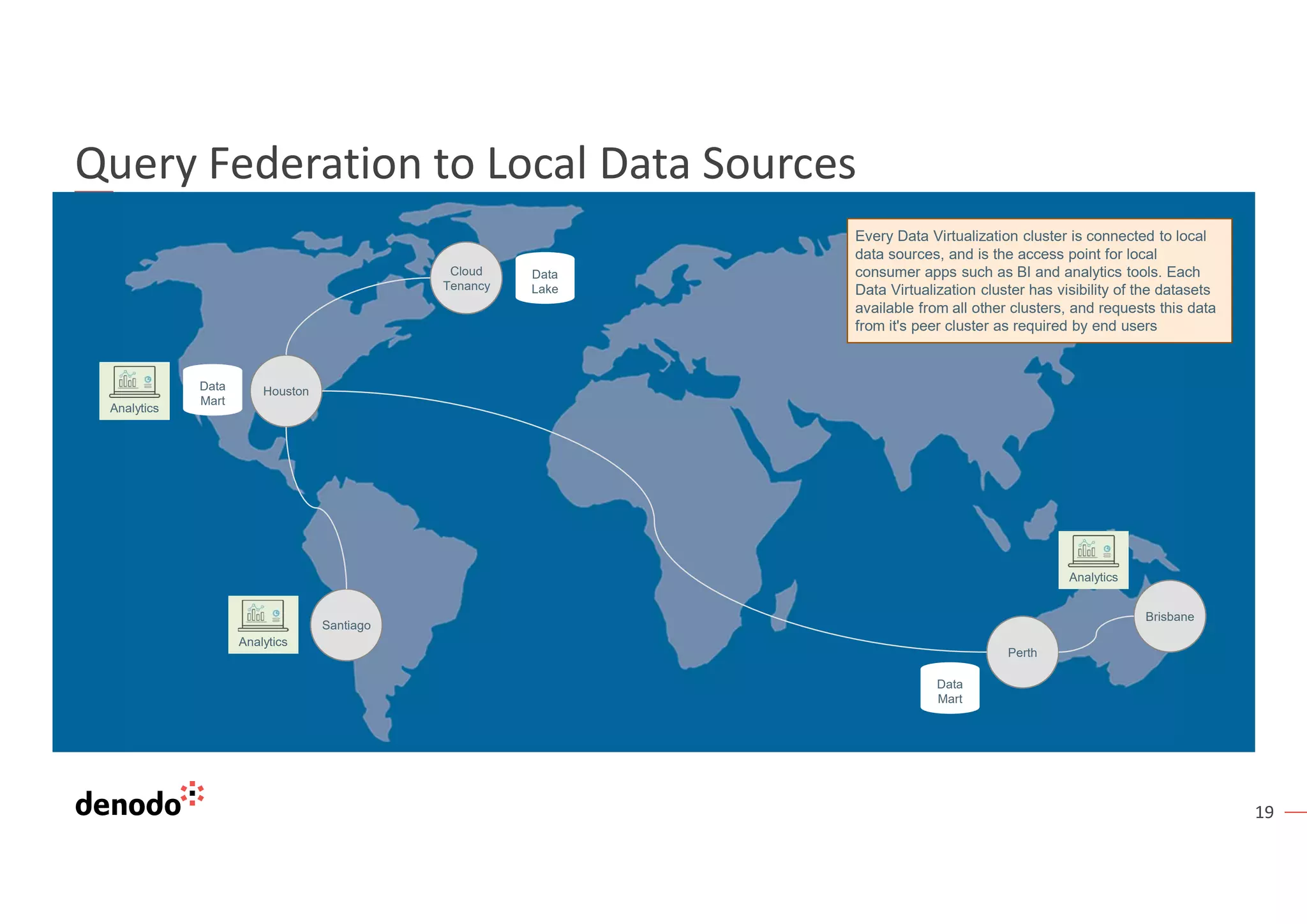

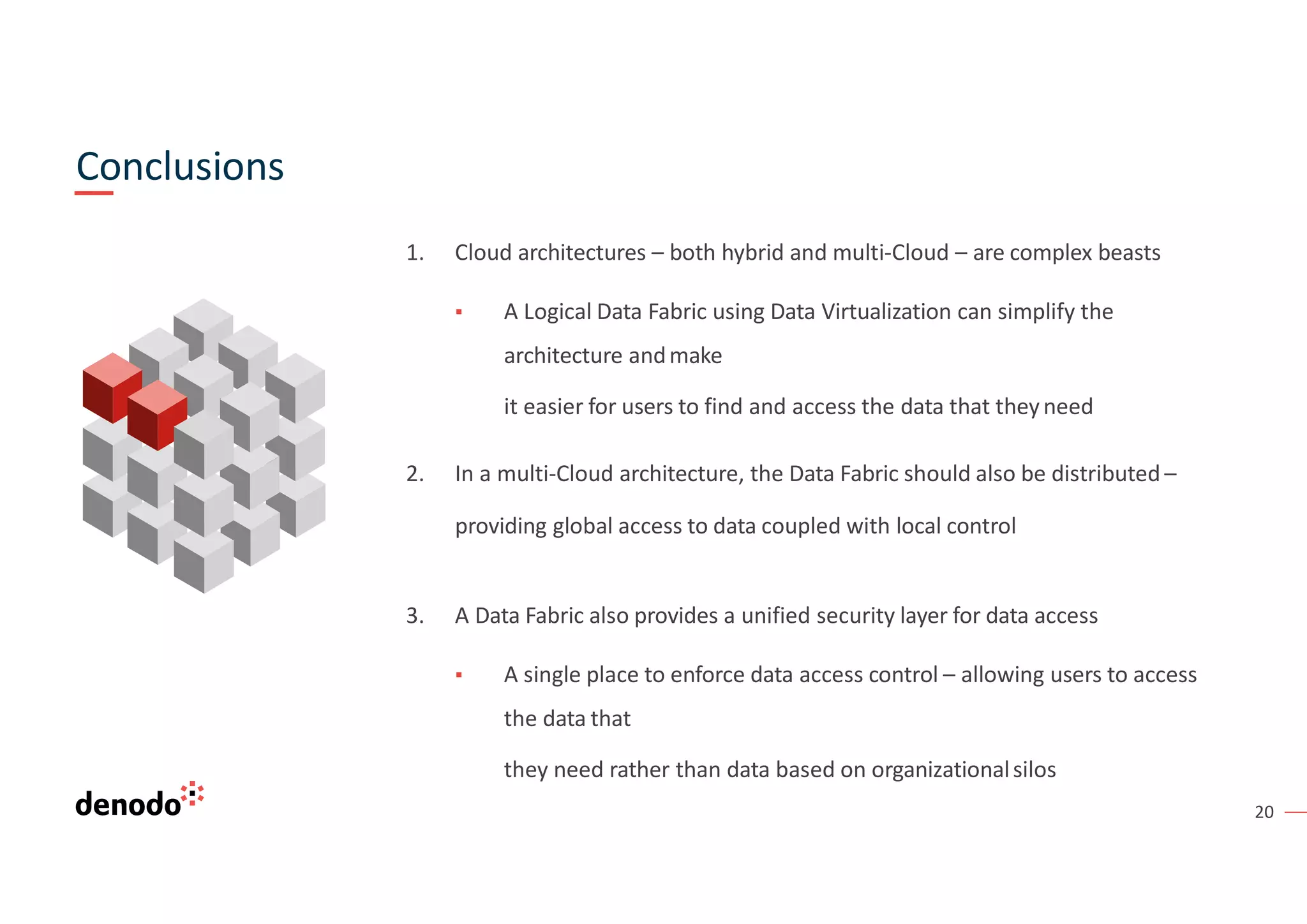

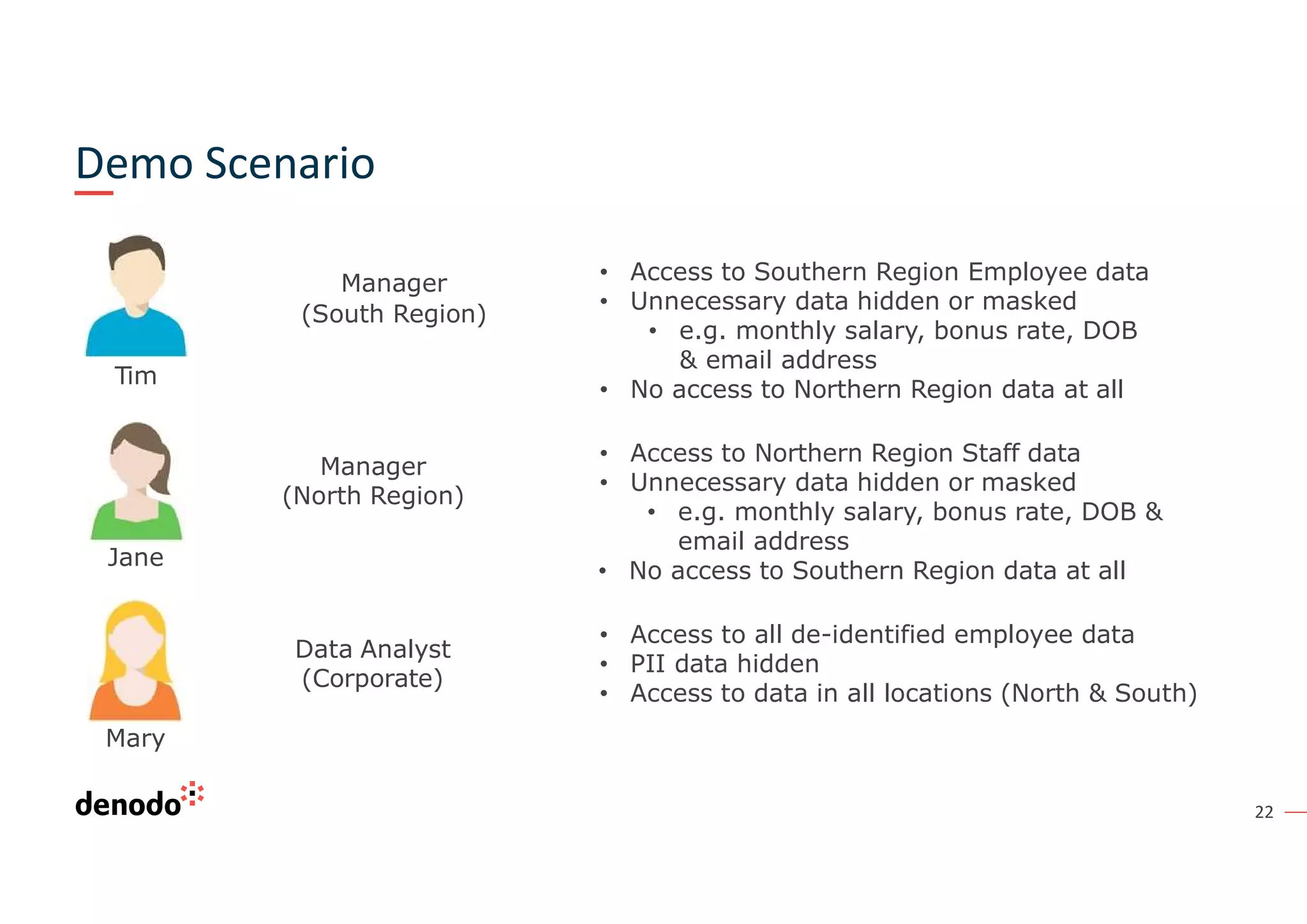

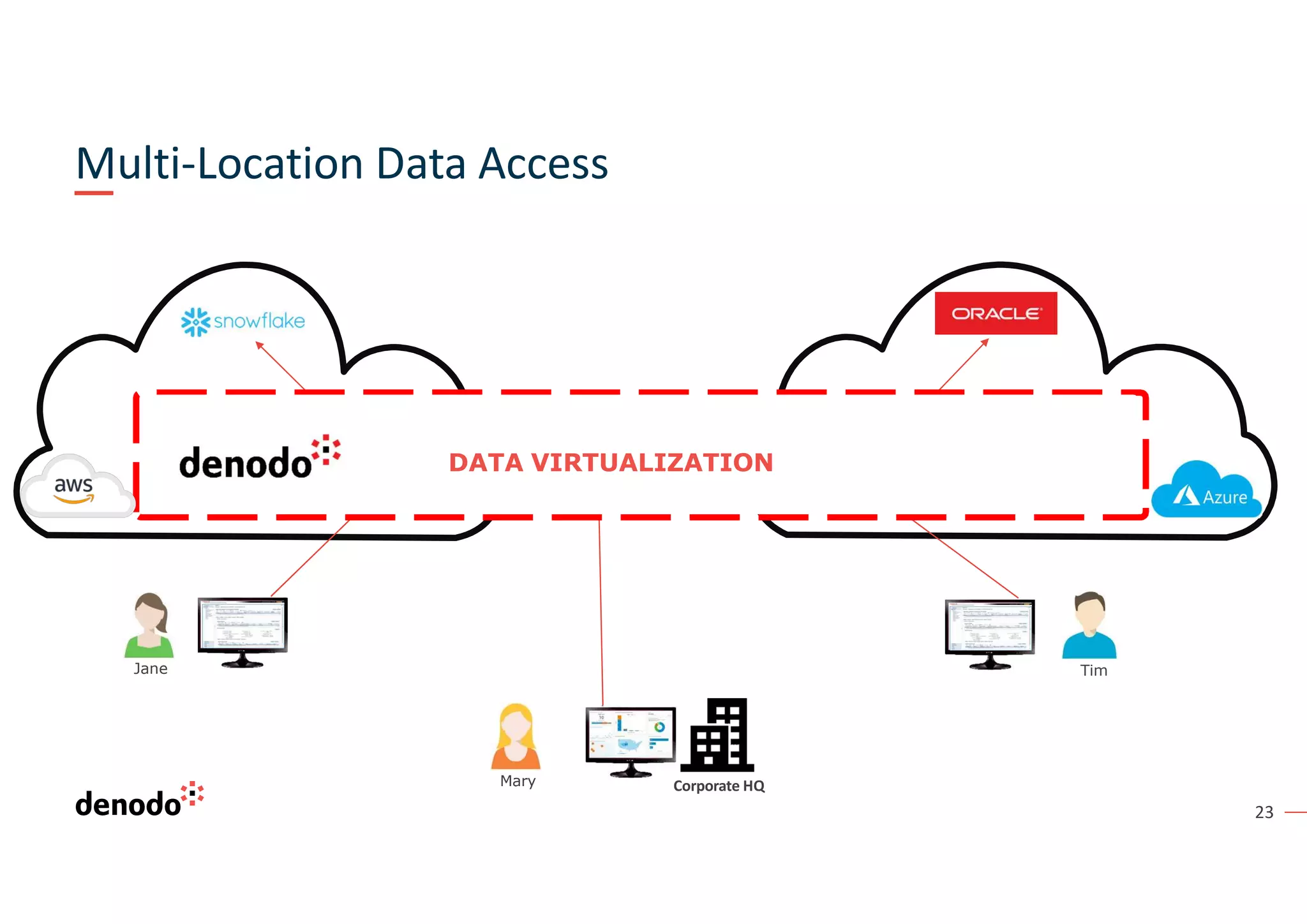

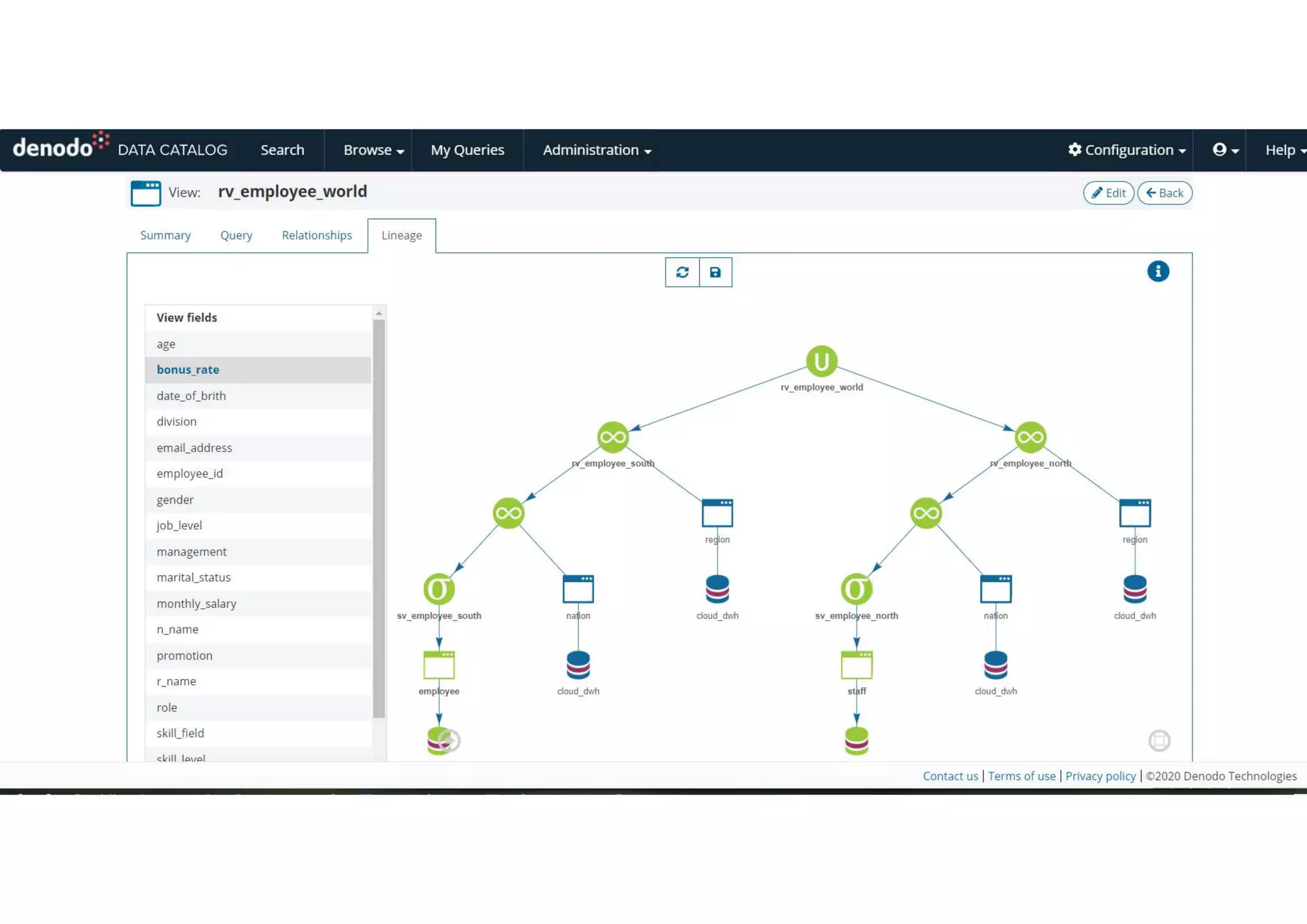

The document outlines a webinar series on data virtualization and its role in addressing data integration challenges within cloud architectures, with an emphasis on a logical data fabric approach. It introduces data fabric as an architecture pattern that simplifies data management and integration across diverse environments while improving data accessibility and security. Key topics include cloud migration strategies, customer case studies, and product demonstrations showing how to access and manage data efficiently in complex, multi-cloud setups.