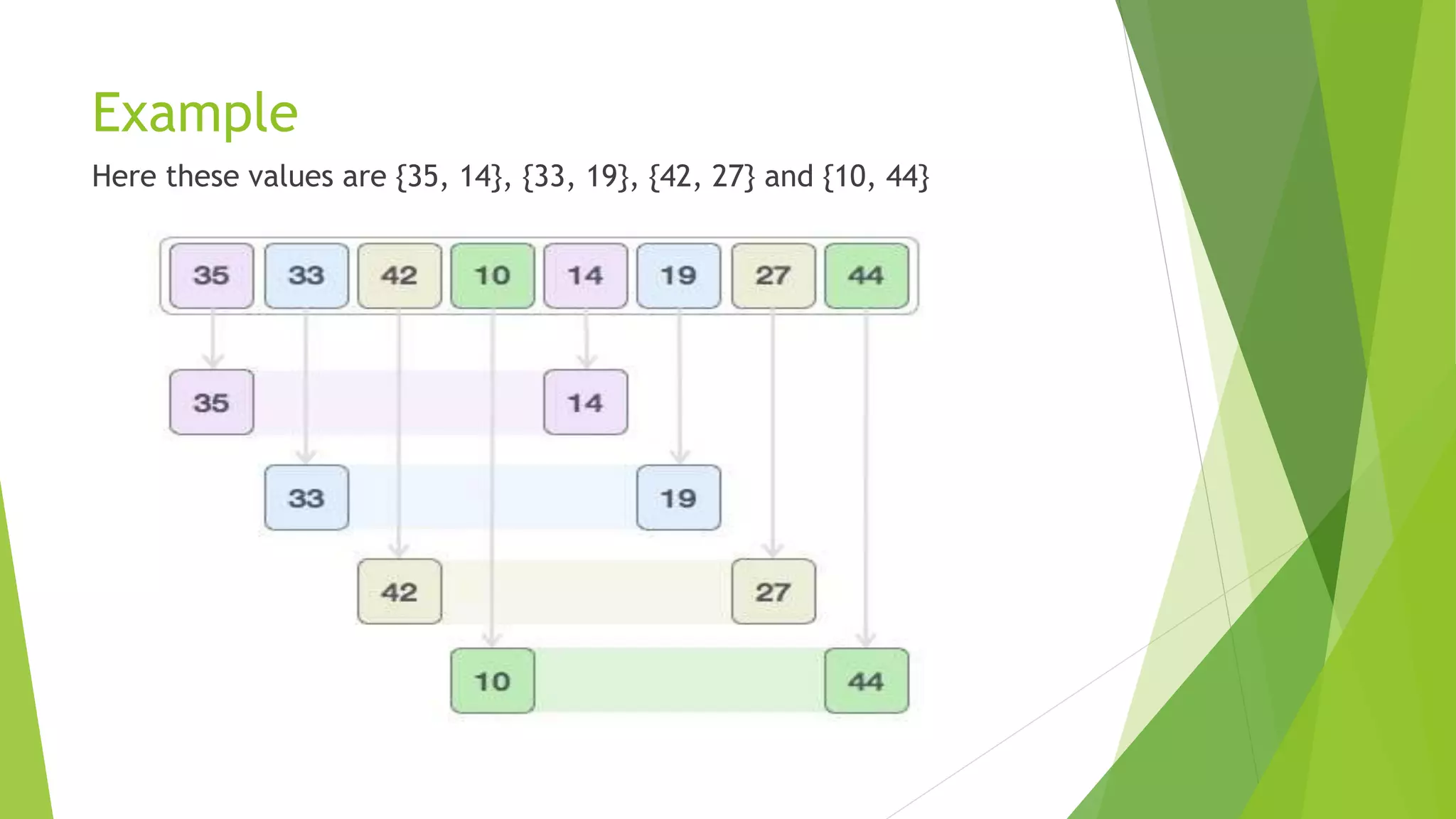

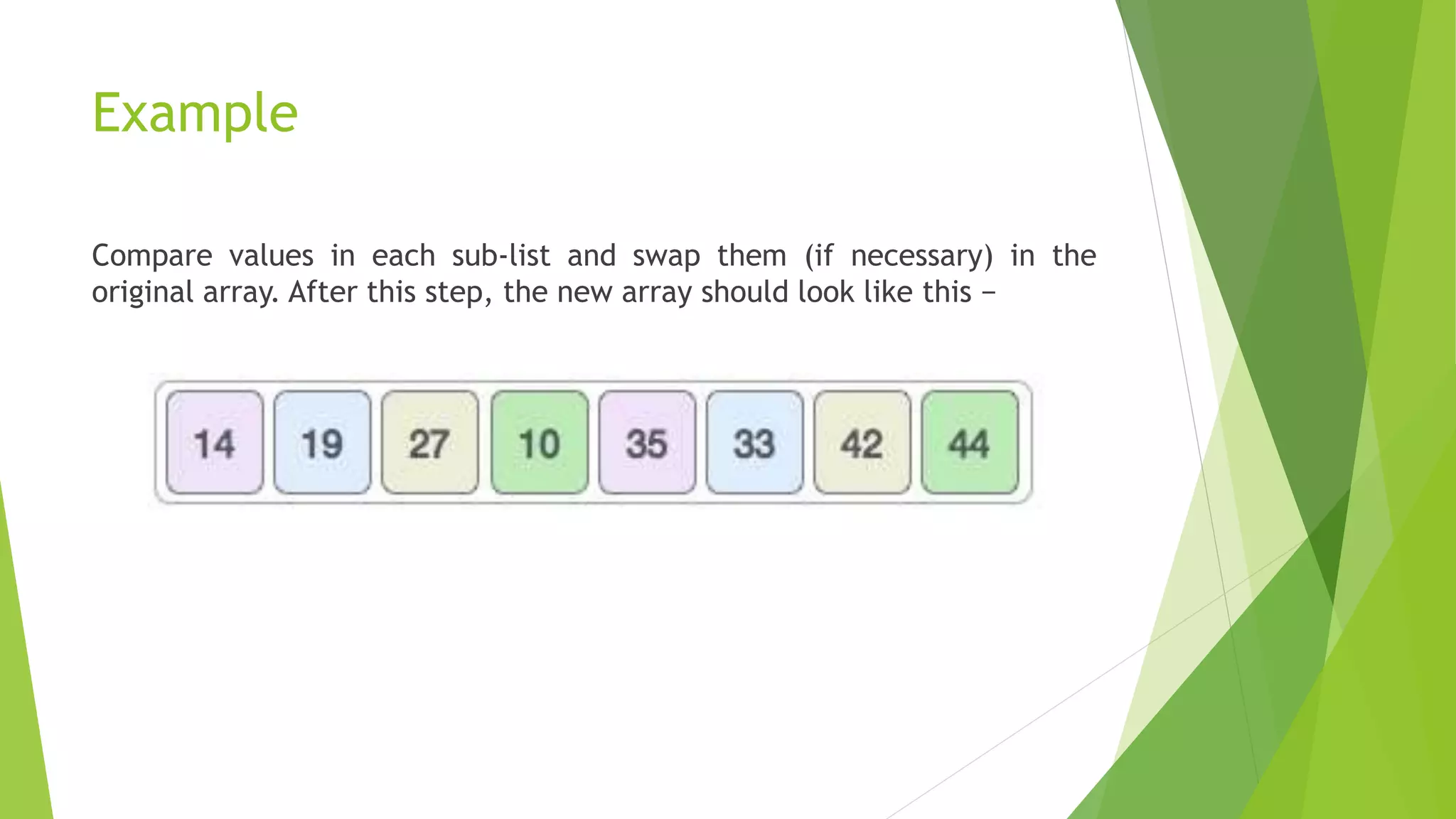

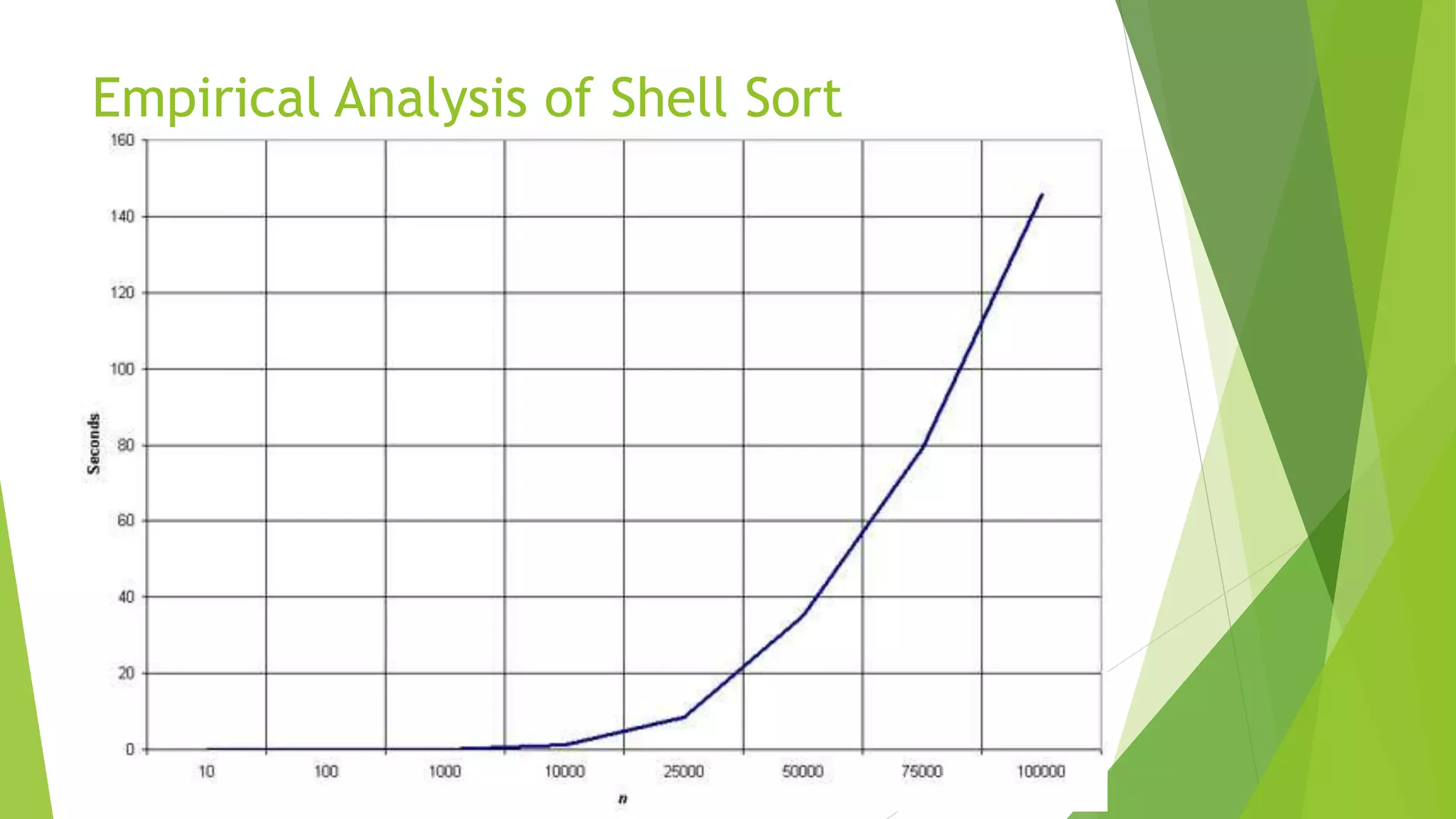

Shell sort, developed by Donald Shell in 1959, is an in-place comparison sort that generalizes insertion sort: instead of comparing only adjacent elements, it compares and moves elements that are a fixed gap apart. It works through a decreasing increment (gap) sequence, making one pass over the list per gap and finishing with a gap of 1, which amounts to an ordinary insertion sort on a list that is already nearly sorted. It is generally faster than bubble sort and insertion sort, though on large inputs it is typically less efficient than merge sort, heapsort, and quicksort. The algorithm’s running time depends on the choice of increment sequence, with its best case occurring when the array is already sorted.
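As a rough illustration of the gap-based passes described above, here is a minimal Python sketch. It assumes the simple halving sequence (n/2, n/4, ..., 1) from Shell's original formulation; the function name `shell_sort` and the choice to return a new list are illustrative, and other increment sequences would give different performance.

```python
def shell_sort(items):
    """Return a sorted copy of items using Shell sort.

    Assumption: gaps follow the halving sequence n//2, n//4, ..., 1.
    """
    a = list(items)          # work on a copy so the input is left untouched
    gap = len(a) // 2
    while gap > 0:
        # Gapped insertion sort: each element is inserted into the
        # already-sorted run of elements that sit `gap` positions apart.
        for i in range(gap, len(a)):
            current = a[i]
            j = i
            while j >= gap and a[j - gap] > current:
                a[j] = a[j - gap]   # shift the larger element forward by one gap
                j -= gap
            a[j] = current
        gap //= 2            # shrink the gap; the final pass uses gap == 1
    return a


print(shell_sort([23, 4, 42, 8, 15, 16]))  # [4, 8, 15, 16, 23, 42]
```

On an already sorted input the inner `while` loop never shifts anything, so each pass only performs comparisons, which is why that case is the cheapest.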