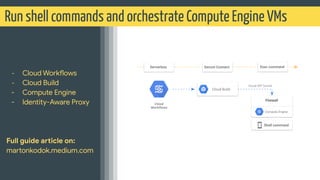

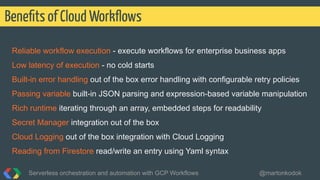

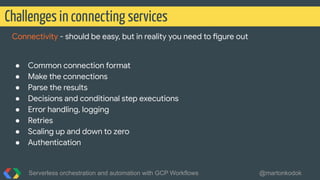

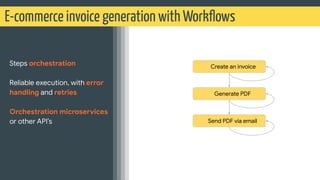

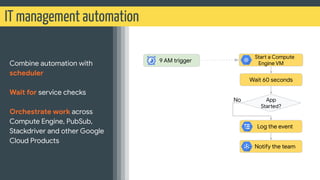

This talk covers serverless orchestration and automation with GCP Workflows. It outlines the challenges of connecting services and introduces workflows as a way to automate complex, multi-step processes. It walks through practical use cases, including integration with APIs and microservices, and features such as error handling and authentication. The presentation emphasizes that GCP Workflows makes serverless applications easy to build and manage, scalable, and flexible.

![Slide "Retries" (retries.yaml) from "Serverless orchestration and automation with GCP Workflows" by @martonkodok: retries HTTP status codes 429, 502, 503, 504, connection error, or timeout](https://image.slidesharecdn.com/serverlessorchestrationandautomationwithgcpworkflows1-201215211428/85/Serverless-orchestration-and-automation-with-Cloud-Workflows-21-320.jpg)
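
The retry rule on the "Retries" slide (retry on HTTP 429, 502, 503, 504, a connection error, or a timeout) can be illustrated with a minimal Python sketch. This is not Workflows syntax and not the contents of the slide's `retries.yaml`; the names `HttpStatusError`, `is_retryable`, and `call_with_retry` are hypothetical, and the backoff values (5 retries, 1 second initial delay, 60 second cap, doubling) are assumptions chosen for illustration only.

```python
import time
from typing import Callable, TypeVar

T = TypeVar("T")

# Status codes the slide lists as retryable.
RETRYABLE_STATUS = {429, 502, 503, 504}


class HttpStatusError(Exception):
    """Hypothetical error type carrying an HTTP status code."""

    def __init__(self, status: int) -> None:
        super().__init__(f"HTTP {status}")
        self.status = status


def is_retryable(exc: Exception) -> bool:
    """Return True for the failure kinds named on the slide."""
    if isinstance(exc, HttpStatusError):
        return exc.status in RETRYABLE_STATUS
    return isinstance(exc, (ConnectionError, TimeoutError))


def call_with_retry(
    fn: Callable[[], T],
    max_retries: int = 5,        # assumed value
    initial_delay: float = 1.0,  # assumed value, seconds
    max_delay: float = 60.0,     # assumed value, seconds
    multiplier: float = 2.0,     # assumed value
    sleep: Callable[[float], None] = time.sleep,
) -> T:
    """Call fn, retrying retryable failures with capped exponential backoff."""
    delay = initial_delay
    for attempt in range(max_retries + 1):
        try:
            return fn()
        except Exception as exc:
            # Give up on non-retryable errors or once retries are exhausted.
            if attempt == max_retries or not is_retryable(exc):
                raise
            sleep(delay)
            delay = min(delay * multiplier, max_delay)
    raise RuntimeError("unreachable")  # loop always returns or raises
```

Passing `sleep` as a parameter keeps the sketch easy to exercise without real waiting; a non-retryable status such as 404 is re-raised immediately on the first attempt.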