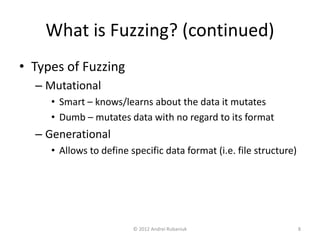

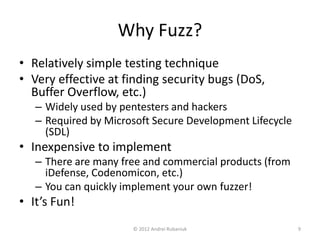

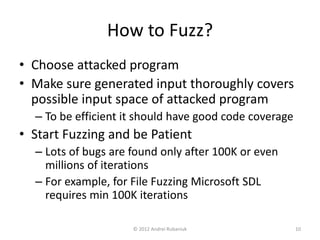

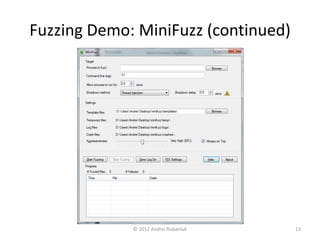

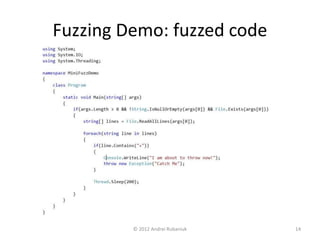

The presentation covers security testing through fuzzing, a technique that feeds malformed inputs to software in order to trigger exceptions and expose vulnerabilities. It argues that fuzzing is effective at finding security bugs, simple to apply, and cheap to implement, and it demonstrates a basic fuzzing tool called Minifuzz. It also stresses that fuzzing should supplement other testing methods rather than replace them.
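The core loop of a mutation fuzzer is short: take a valid input, corrupt a few bytes, hand it to the code under test, and record any input that causes an unexpected exception. The sketch below illustrates that idea under stated assumptions. It is not Minifuzz and does not reproduce that tool's behavior. It calls an in-process Python function instead of launching a separate program, and `parse_record`, `mutate`, and `fuzz` are hypothetical names invented for this example. The toy parser trusts a length field it reads from the input, which is the kind of missing bounds check fuzzing tends to find.

```python
import random
from typing import Callable, List, Tuple, Type


def mutate(data: bytes, rng: random.Random, ratio: float = 0.01) -> bytes:
    """Return a copy of `data` with about `ratio` of its bytes (at least one) set to random values."""
    buf = bytearray(data)
    if not buf:
        return bytes(buf)
    count = max(1, int(len(buf) * ratio))
    for _ in range(count):
        pos = rng.randrange(len(buf))
        buf[pos] = rng.randrange(256)
    return bytes(buf)


def fuzz(target: Callable[[bytes], object], seed_input: bytes,
         iterations: int = 1000, rng_seed: int = 0,
         expected: Tuple[Type[BaseException], ...] = (ValueError,)
         ) -> List[Tuple[bytes, str]]:
    """Feed mutated copies of `seed_input` to `target`.

    Exceptions listed in `expected` count as graceful rejection of bad input;
    any other exception is recorded together with the input that caused it.
    """
    rng = random.Random(rng_seed)  # fixed seed so failing cases can be reproduced
    failures: List[Tuple[bytes, str]] = []
    for _ in range(iterations):
        case = mutate(seed_input, rng)
        try:
            target(case)
        except expected:
            continue  # the target rejected the malformed input cleanly
        except Exception as exc:
            failures.append((case, f"{type(exc).__name__}: {exc}"))
    return failures


def parse_record(data: bytes) -> bytes:
    """Hypothetical parser: [length][payload...][checksum].

    Deliberate bug: the length byte is never checked against the buffer size,
    so a large length makes the checksum read go out of bounds. In Python this
    raises IndexError; in C it would be an out-of-bounds read.
    """
    length = data[0]
    payload = data[1:1 + length]
    checksum = data[1 + length]  # unchecked index derived from untrusted input
    if sum(payload) % 256 != checksum:
        raise ValueError("bad checksum")  # expected, graceful rejection
    return payload


if __name__ == "__main__":
    seed = bytes([4]) + b"abcd" + bytes([sum(b"abcd") % 256])
    crashes = fuzz(parse_record, seed, iterations=1000)
    print(f"{len(crashes)} unexpected exceptions")
    for case, err in crashes[:3]:
        print(case.hex(), err)
```

Separating expected exceptions from unexpected ones is the design choice that matters here: a parser that rejects a corrupted checksum with `ValueError` is behaving correctly, while an `IndexError` means the code trusted malformed data. A real file fuzzer would also run the target in its own process so that a hard crash does not take down the fuzzer itself.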