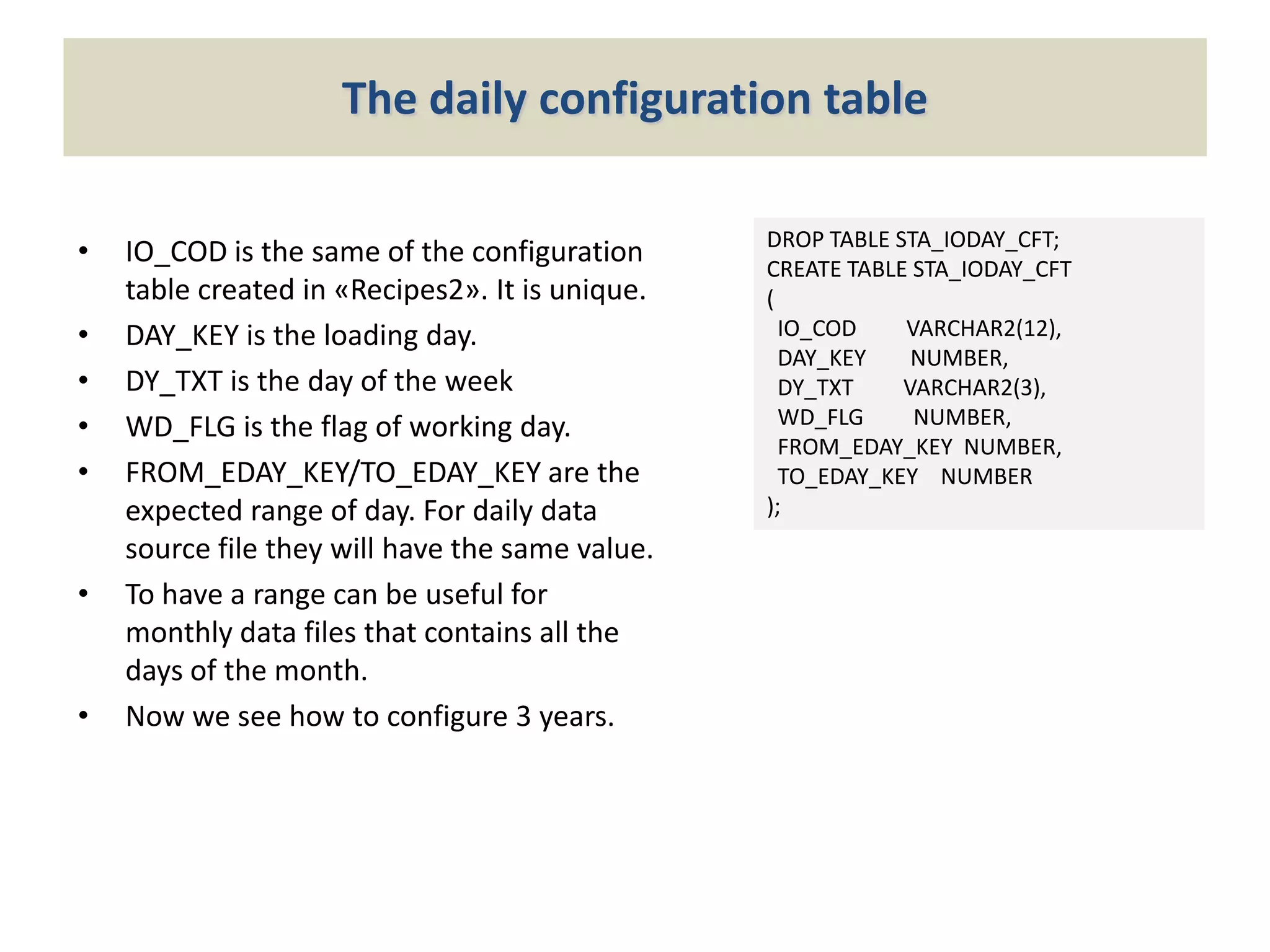

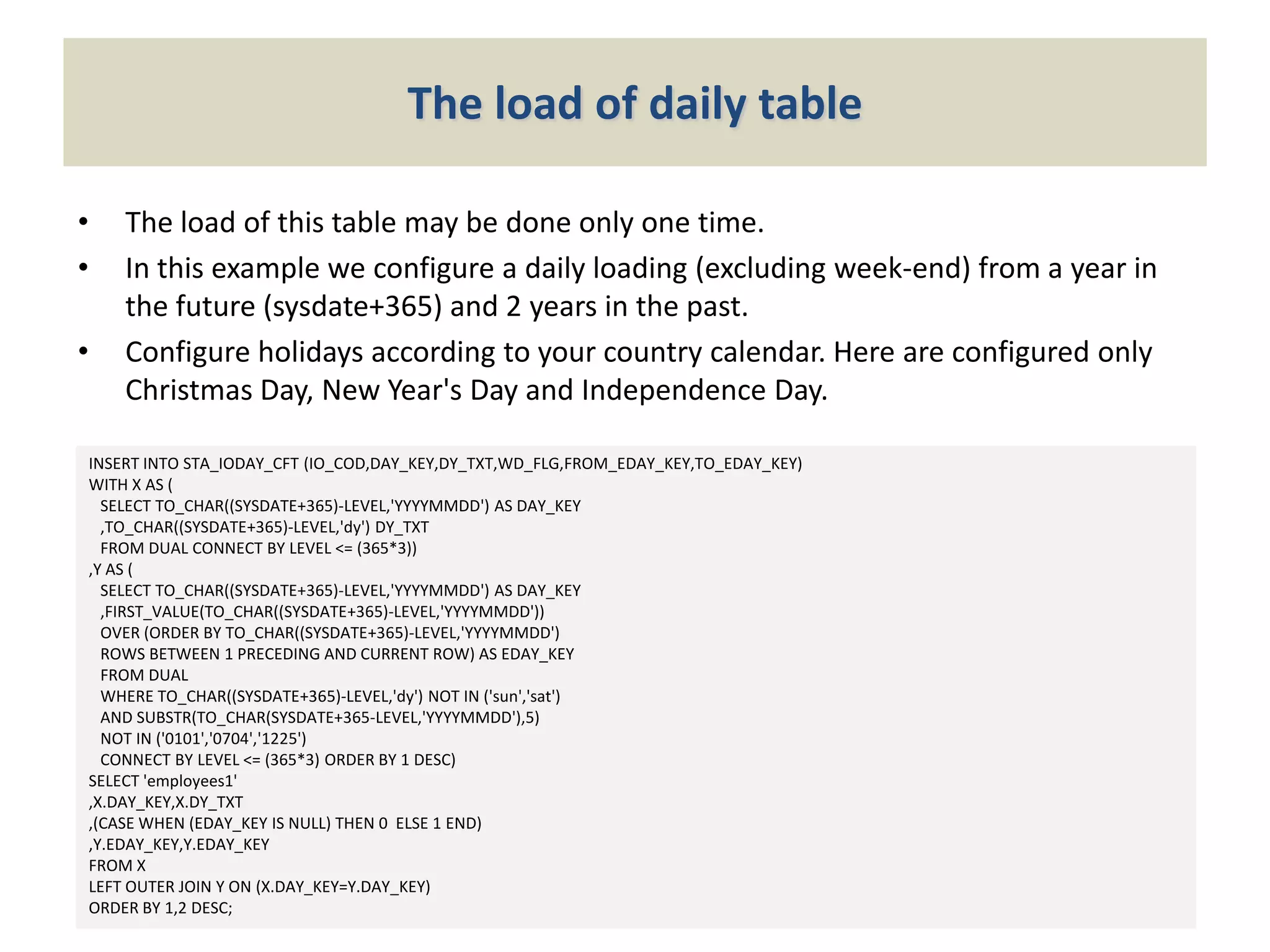

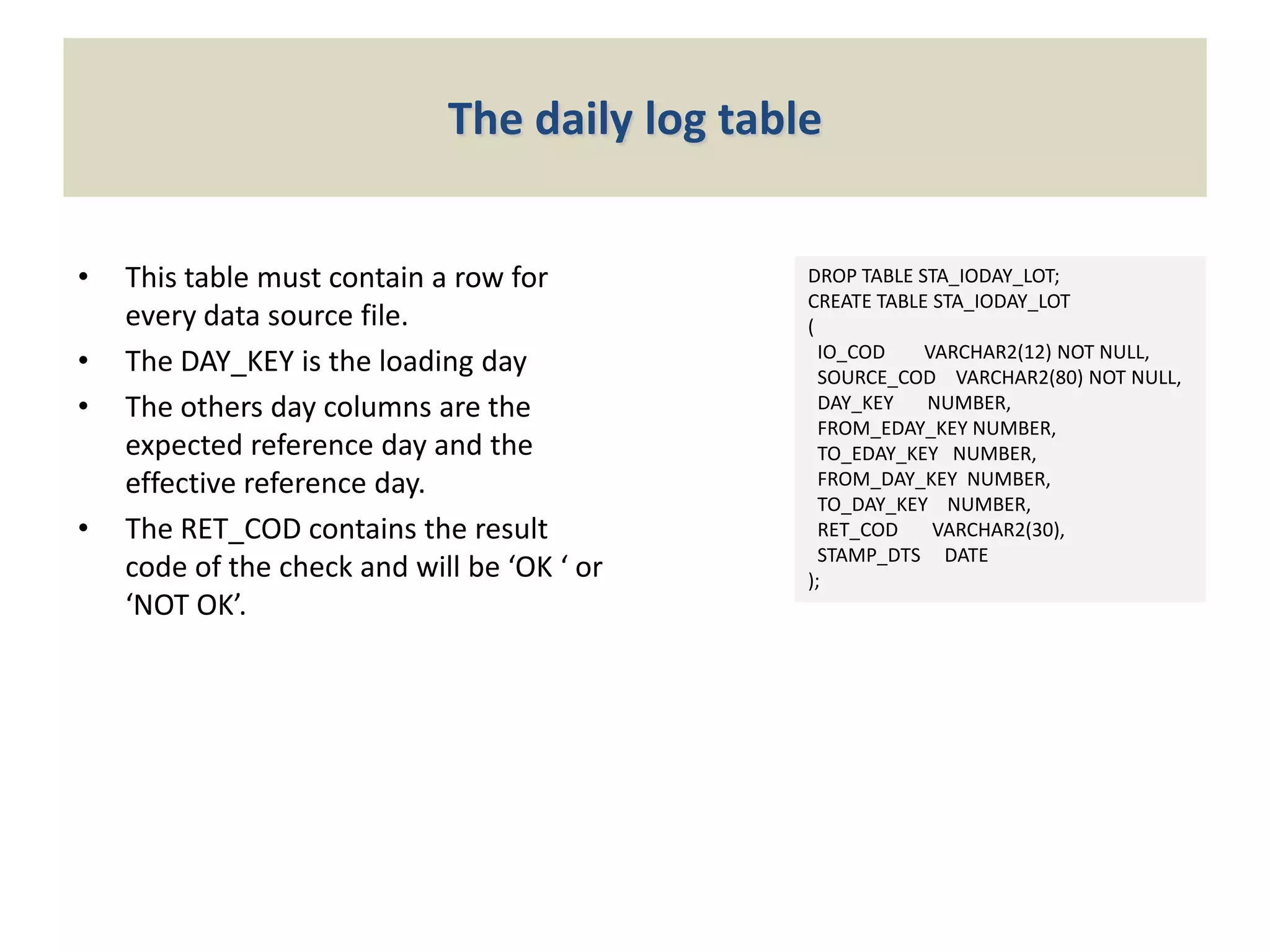

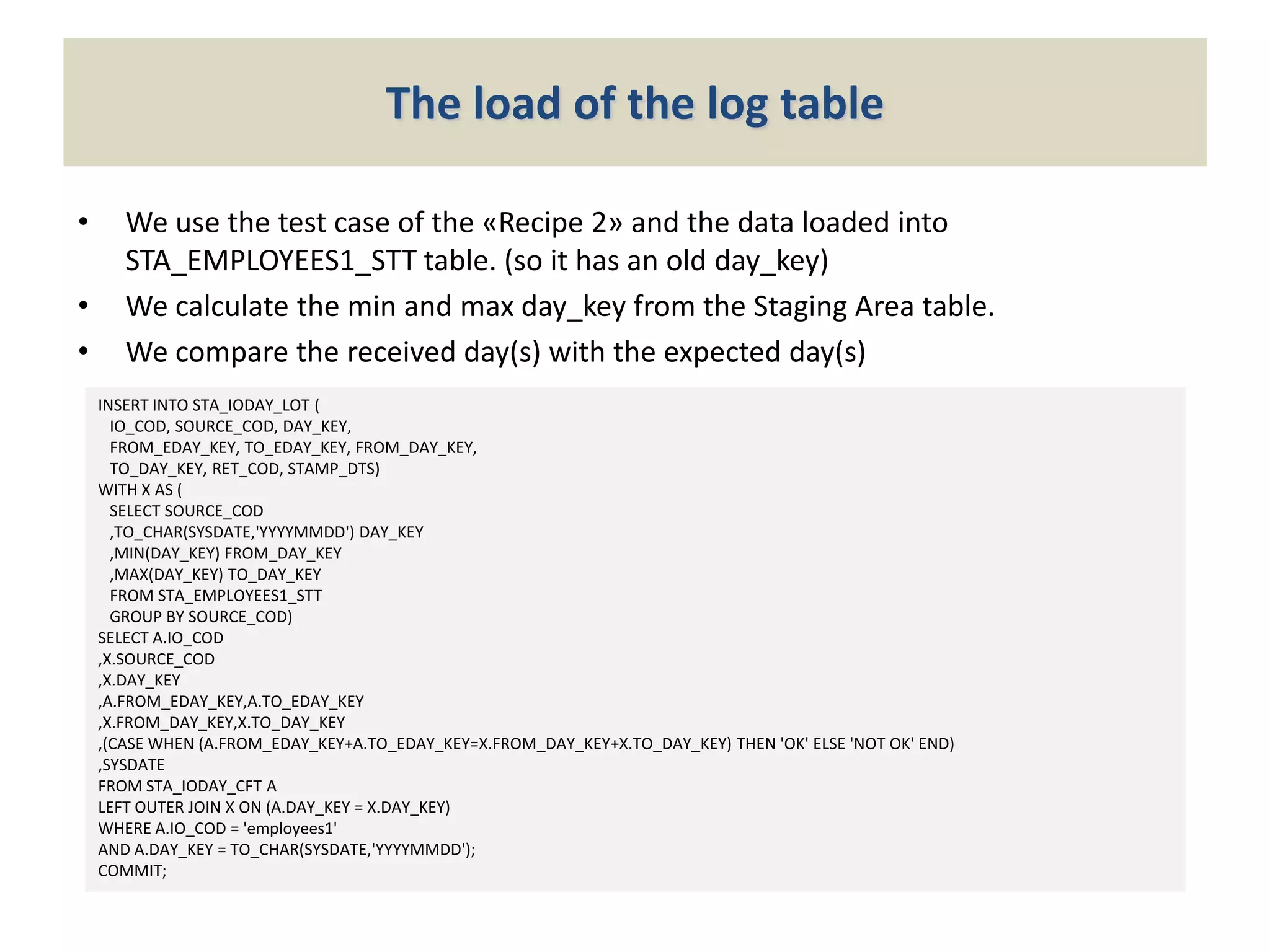

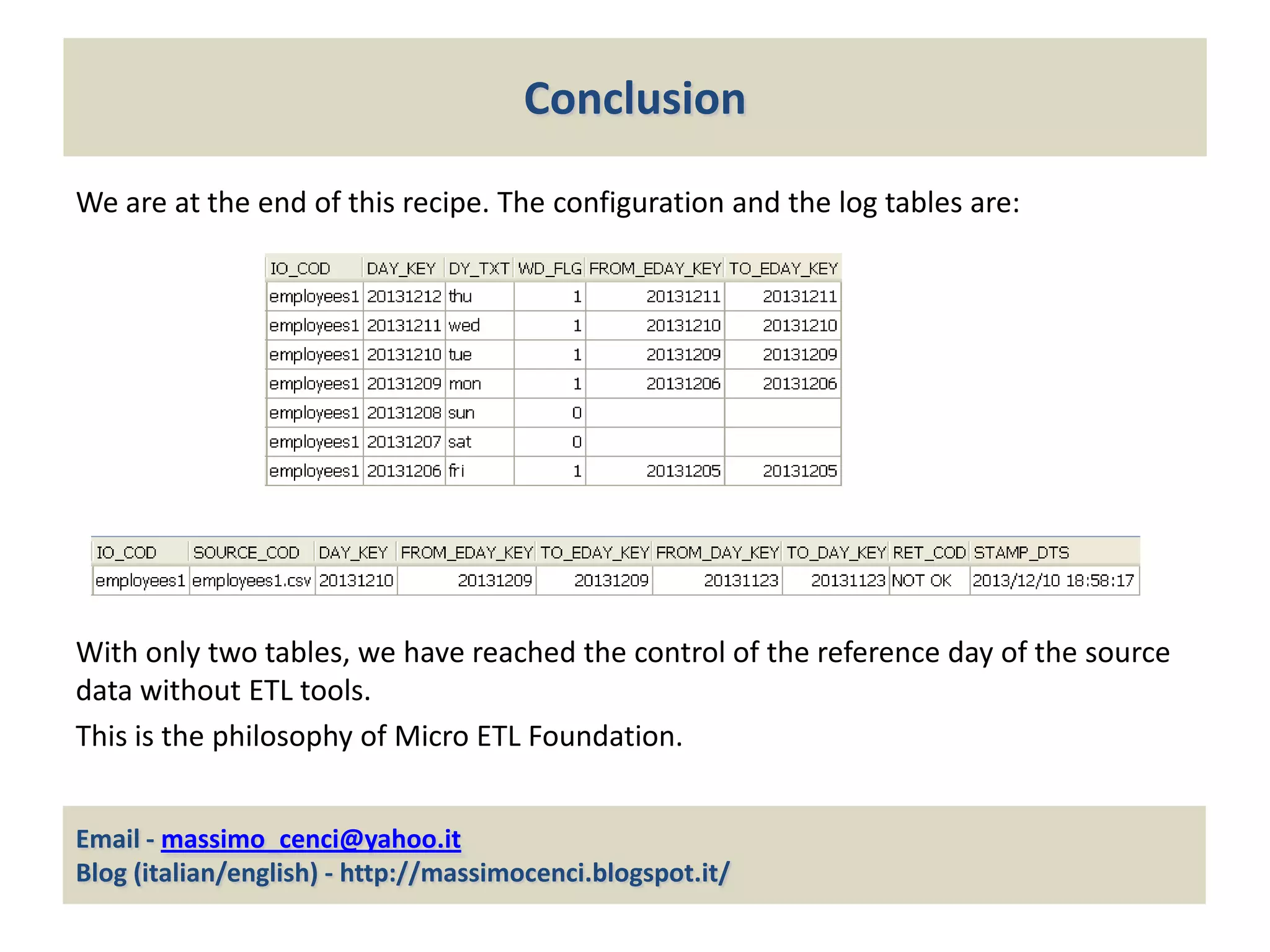

The document outlines the Micro ETL Foundation, a framework for building data warehouse and business intelligence loads without expensive ETL tools, relying instead on the SQL features of an Oracle database. It focuses on a method for verifying that the data loaded into the staging area refers to the correct reference day. The method requires configuration tables, which define what is expected for each source, and log tables, which record the outcome of every check. The approach gives full control over the loading process while following the principles of the Micro ETL Foundation.
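The summary does not give the table layouts. The following is a minimal sketch of the idea under stated assumptions. A hypothetical configuration table, `cfg_ref_day`, stores three things for each source: the staging table, the column that holds the reference day, and the expected lag in days between the reference day and the load day. A hypothetical log table, `log_ref_day`, records every check. The sketch uses Python's standard `sqlite3` module so it runs standalone. The framework itself works in Oracle SQL, so treat this as an illustration of the check, not as the framework's implementation.

```python
import sqlite3
from datetime import date, timedelta

# Hypothetical schema (names and columns are assumptions, not from the source).
# SQLite stands in for Oracle only so the sketch runs with the standard library.
DDL = """
CREATE TABLE cfg_ref_day (          -- configuration: one row per staged source
    source_name   TEXT PRIMARY KEY,
    stg_table     TEXT NOT NULL,    -- staging table to inspect
    ref_day_col   TEXT NOT NULL,    -- column carrying the reference day
    expected_lag  INTEGER NOT NULL  -- days between reference day and load day
);
CREATE TABLE log_ref_day (          -- log: one row per executed check
    source_name   TEXT NOT NULL,
    load_day      TEXT NOT NULL,
    expected_day  TEXT NOT NULL,
    found_days    TEXT,             -- distinct reference days found in staging
    status        TEXT NOT NULL     -- 'OK' or 'KO'
);
"""


def check_reference_day(conn, source_name, load_day):
    """Compare staged reference days with the configured expectation,
    write one log row, and return 'OK' or 'KO'."""
    stg_table, ref_col, lag = conn.execute(
        "SELECT stg_table, ref_day_col, expected_lag "
        "FROM cfg_ref_day WHERE source_name = ?",
        (source_name,),
    ).fetchone()
    expected = (load_day - timedelta(days=lag)).isoformat()
    # Table/column names come from the (trusted) config table, so they are
    # interpolated; identifiers cannot be passed as bind parameters.
    rows = conn.execute(
        f"SELECT DISTINCT {ref_col} FROM {stg_table} ORDER BY 1"
    ).fetchall()
    found = [str(r[0]) for r in rows]
    # The load is accepted only if every staged row carries the expected day.
    status = "OK" if found == [expected] else "KO"
    conn.execute(
        "INSERT INTO log_ref_day VALUES (?, ?, ?, ?, ?)",
        (source_name, load_day.isoformat(), expected, ",".join(found), status),
    )
    conn.commit()
    return status


if __name__ == "__main__":
    conn = sqlite3.connect(":memory:")
    conn.executescript(DDL)
    conn.execute("CREATE TABLE stg_sales (ref_day TEXT, amount REAL)")
    conn.execute("INSERT INTO cfg_ref_day VALUES ('SALES', 'stg_sales', 'ref_day', 1)")
    conn.executemany("INSERT INTO stg_sales VALUES (?, ?)",
                     [("2024-03-14", 10.0), ("2024-03-14", 5.5)])
    print(check_reference_day(conn, "SALES", date(2024, 3, 15)))  # OK
```

Keeping the expectation in a table rather than in code means a new source only needs a new configuration row. Because every run writes to the log, failed checks remain queryable afterwards.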