Ran zhou poster 2018

•Download as PPTX, PDF•

0 likes•38 views

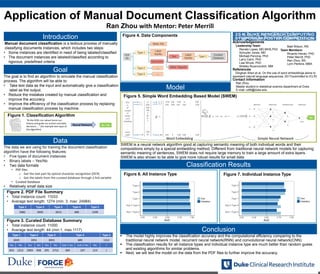

This is a poster I did to show my internship work for a computing symposium. The content is about medical document classification using neural network.

Report

Share

Report

Share

Recommended

Lec1-Into

This document provides an overview of the CSE 591: Machine Learning and Applications course taught by Dr. Jieping Ye at Arizona State University. The following key points are discussed:

- Course information including instructor, time/location, prerequisites, objectives to provide an understanding of machine learning methods and applications.

- Topics covered include clustering, classification, dimensionality reduction, semi-supervised learning, and kernel learning.

- The grading breakdown includes homework, a group project, and an exam. Students are required to participate in class discussions.

- An introduction to machine learning is provided including definitions of supervised vs. unsupervised learning and applications in domains like bioinformatics.

My experiment

This document outlines the PhD journey of Boshra F. Zopon Al_Bayaty in India. It discusses her coursework, research on knowledge discovery from web search, conferences attended both nationally and internationally, and her research contributions. Her research focused on using a "Master-Slave" model combining multiple supervised algorithms to improve word sense disambiguation and accuracy of over 70%. The document provides details on her research methodology, experiments conducted, results and opportunities for future work improving the model for other languages and applications. It demonstrates the various stages of her PhD journey and research progress.

Advanced Question Paper Generator using Fuzzy Logic

This document describes a proposed system called the Advanced Question Paper Generator that uses fuzzy logic to automatically generate question papers. The current manual process for generating question papers has disadvantages like dependency on individuals, potential for errors or bias, and lack of secrecy. The proposed system aims to make the process more efficient, reliable, improve quality, and maintain secrecy by using fuzzy logic to logically select question difficulty levels. It will take inputs from users on the desired paper pattern and difficulty level. The system is intended to reduce workload and issues like duplicity, costs, and time wastage compared to the existing manual method.

Determining the Credibility of Science Communication

Most work on scholarly document processing assumes that the information processed is trustworthy and factually correct. However, this is not always the case. There are two core challenges, which should be addressed: 1) ensuring that scientific publications are credible -- e.g. that claims are not made without supporting evidence, and that all relevant supporting evidence is provided; and 2) that scientific findings are not misrepresented, distorted or outright misreported when communicated by journalists or the general public. I will present some first steps towards addressing these problems and outline remaining challenges.

Contextual Definition Generation

The document discusses a study that trained a GPT-2 model to generate contextual definitions for words based on the provided context. The model was trained on a new dataset containing definition and context pairs from various sources. It was evaluated through surveys where human raters assessed definitions generated by the model for short and long contexts, as well as real human-generated definitions. The results found that while the model performed significantly better at generating definitions for short contexts compared to long ones, human-generated definitions were still significantly more accurate. Areas for improvement included reducing fluctuations depending on context and better interpreting some contexts.

EFFICIENCY OF DECISION TREES IN PREDICTING STUDENT’S ACADEMIC PERFORMANCE

Educational data mining is used to study the data available in the educational field and bring

out the hidden knowledge from it. Classification methods like decision trees, rule mining,

Bayesian network etc can be applied on the educational data for predicting the students

behavior, performance in examination etc. This prediction will help the tutors to identify the

weak students and help them to score better marks. The C4.5 decision tree algorithm is applied

on student’s internal assessment data to predict their performance in the final exam. The

outcome of the decision tree predicted the number of students who are likely to fail or pass. The

result is given to the tutor and steps were taken to improve the performance of the students who

were predicted to fail. After the declaration of the results in the final examination the marks

obtained by the students are fed into the system and the results were analyzed. The comparative

analysis of the results states that the prediction has helped the weaker students to improve and

brought out betterment in the result. To analyse the accuracy of the algorithm, it is compared

with ID3 algorithm and found to be more efficient in terms of the accurately predicting the

outcome of the student and time taken to derive the tree.

Sentiment analysis using naive bayes classifier

This ppt contains a small description of naive bayes classifier algorithm. It is a machine learning approach for detection of sentiment and text classification.

Attention scores and mechanisms

DELAB - Sequence generation seminar

Title

Attention scores and mechanisms

Table of contents

• Sequence to sequence and bottleneck problem

• Sequence to sequence with attention

• Attention variants (1) Score

• Attention variants (2) Self-attention

• Attention variants (3) Multi-headed attention

• Attention variants (4) Others

Recommended

Lec1-Into

This document provides an overview of the CSE 591: Machine Learning and Applications course taught by Dr. Jieping Ye at Arizona State University. The following key points are discussed:

- Course information including instructor, time/location, prerequisites, objectives to provide an understanding of machine learning methods and applications.

- Topics covered include clustering, classification, dimensionality reduction, semi-supervised learning, and kernel learning.

- The grading breakdown includes homework, a group project, and an exam. Students are required to participate in class discussions.

- An introduction to machine learning is provided including definitions of supervised vs. unsupervised learning and applications in domains like bioinformatics.

My experiment

This document outlines the PhD journey of Boshra F. Zopon Al_Bayaty in India. It discusses her coursework, research on knowledge discovery from web search, conferences attended both nationally and internationally, and her research contributions. Her research focused on using a "Master-Slave" model combining multiple supervised algorithms to improve word sense disambiguation and accuracy of over 70%. The document provides details on her research methodology, experiments conducted, results and opportunities for future work improving the model for other languages and applications. It demonstrates the various stages of her PhD journey and research progress.

Advanced Question Paper Generator using Fuzzy Logic

This document describes a proposed system called the Advanced Question Paper Generator that uses fuzzy logic to automatically generate question papers. The current manual process for generating question papers has disadvantages like dependency on individuals, potential for errors or bias, and lack of secrecy. The proposed system aims to make the process more efficient, reliable, improve quality, and maintain secrecy by using fuzzy logic to logically select question difficulty levels. It will take inputs from users on the desired paper pattern and difficulty level. The system is intended to reduce workload and issues like duplicity, costs, and time wastage compared to the existing manual method.

Determining the Credibility of Science Communication

Most work on scholarly document processing assumes that the information processed is trustworthy and factually correct. However, this is not always the case. There are two core challenges, which should be addressed: 1) ensuring that scientific publications are credible -- e.g. that claims are not made without supporting evidence, and that all relevant supporting evidence is provided; and 2) that scientific findings are not misrepresented, distorted or outright misreported when communicated by journalists or the general public. I will present some first steps towards addressing these problems and outline remaining challenges.

Contextual Definition Generation

The document discusses a study that trained a GPT-2 model to generate contextual definitions for words based on the provided context. The model was trained on a new dataset containing definition and context pairs from various sources. It was evaluated through surveys where human raters assessed definitions generated by the model for short and long contexts, as well as real human-generated definitions. The results found that while the model performed significantly better at generating definitions for short contexts compared to long ones, human-generated definitions were still significantly more accurate. Areas for improvement included reducing fluctuations depending on context and better interpreting some contexts.

EFFICIENCY OF DECISION TREES IN PREDICTING STUDENT’S ACADEMIC PERFORMANCE

Educational data mining is used to study the data available in the educational field and bring

out the hidden knowledge from it. Classification methods like decision trees, rule mining,

Bayesian network etc can be applied on the educational data for predicting the students

behavior, performance in examination etc. This prediction will help the tutors to identify the

weak students and help them to score better marks. The C4.5 decision tree algorithm is applied

on student’s internal assessment data to predict their performance in the final exam. The

outcome of the decision tree predicted the number of students who are likely to fail or pass. The

result is given to the tutor and steps were taken to improve the performance of the students who

were predicted to fail. After the declaration of the results in the final examination the marks

obtained by the students are fed into the system and the results were analyzed. The comparative

analysis of the results states that the prediction has helped the weaker students to improve and

brought out betterment in the result. To analyse the accuracy of the algorithm, it is compared

with ID3 algorithm and found to be more efficient in terms of the accurately predicting the

outcome of the student and time taken to derive the tree.

Sentiment analysis using naive bayes classifier

This ppt contains a small description of naive bayes classifier algorithm. It is a machine learning approach for detection of sentiment and text classification.

Attention scores and mechanisms

DELAB - Sequence generation seminar

Title

Attention scores and mechanisms

Table of contents

• Sequence to sequence and bottleneck problem

• Sequence to sequence with attention

• Attention variants (1) Score

• Attention variants (2) Self-attention

• Attention variants (3) Multi-headed attention

• Attention variants (4) Others

Assessment of Programming Language Reliability Utilizing Soft-Computing

The document discusses assessing programming language reliability using soft computing techniques like fuzzy logic and genetic algorithms. It proposes using these methods to model programming language reliability based on linguistic variables like "Reliable", "Moderately Reliable", and "Not Reliable". The key factors examined for determining a programming language's reliability include syntax consistency, semantic consistency, error handling, modularity, and documentation. A soft computing system is simulated to evaluate programming languages based on these reliability criteria.

NLP_Project_Paper_up276_vec241

The document discusses two neural network models for reading comprehension tasks: the Attentive Reader model proposed by Herman et al. in 2015 and the Stanford Reader model proposed by Chen et al. in 2016. The author implemented a two-layer attention model inspired by these previous models that achieves a 1.5% higher accuracy on reading comprehension tasks compared to the Stanford Reader.

Дмитрий Ветров. Математика больших данных: тензоры, нейросети, байесовский вы...

Лекция одного из самых известных в России специалистов по машинному обучению Дмитрия Ветрова, который руководит департаментом больших данных и информационного поиска на факультете компьютерных наук, работающим во ВШЭ при поддержке Яндекса.

Machine Reading Using Neural Machines (talk at Microsoft Research Faculty Sum...

The document discusses machine reading using neural machines. It presents goals of fact checking claims and understanding scientific publications. It outlines challenges in tasks like stance detection on tweets and summarizing scientific papers. These include interpreting statements based on the target or headline, handling unseen targets, and the small size of benchmark datasets which makes neural machine reading computationally costly.

Genetic algorithms vs Traditional algorithms

Genetic algorithms and traditional algorithms differ in their definitions, usages, and complexity. Genetic algorithms are based on genetics and natural selection, and help find optimal solutions to difficult problems. They are more advanced than traditional algorithms which provide step-by-step procedures. Genetic algorithms are used in fields like machine learning and artificial intelligence, while traditional algorithms are used in programming and mathematics.

NLP and its application in Insurance -Short story presentation

Powerpoint Presentation for the short story assignment.

Master's In Data Science at San Jose State University

CMPE-258

Image references: https://www.einfochips.com/

Not Good Enough but Try Again! Mitigating the Impact of Rejections on New Con...

Presentation at the University of Miami on 3 December 2021 on how Stack Overflow improved the retention of new contributors whose initial question is rejected (closed) as substandard. The presentation is based on a paper coauthored with Sunil Wattal.

4 de47584

This document summarizes a journal article that evaluates an e-assessment system for automatically scoring short-answer free-text questions. The system uses natural language processing techniques to match student responses to predefined model answers. A study was conducted using this system to assess Open University students. Results found the system could accurately score responses, with accuracy similar to or greater than human markers. The system provides immediate feedback to students to help them learn from mistakes.

Getting better at detecting anomalies by using ensembles

Ensemble learning combines many algorithms or models to obtain better predictive performance. Ensembles have produced the winning algorithm in competitions such as the Netflix Prize. They are used in climate modelling and relied upon to make daily forecasts.

In this talk we will explore an anomaly detection ensemble. Anomaly detection is used is many practical applications including detecting intrusions in computer networks. Anomaly detection is generally an unsupervised task, that is, we do not train models using labelled data. Constructing an unsupervised anomaly detection ensemble is challenging because we do not know the labels.

We use Item Response Theory (IRT) – a class of models used in educational psychometrics – to construct an unsupervised anomaly detection ensemble. IRT’s latent trait computation lends itself to anomaly detection because the latent trait can be used to uncover the hidden ground truth (labels). Using a novel IRT mapping to the anomaly detection problem, we construct an ensemble that can downplay noisy, non-discriminatory methods and accentuate sharper methods.

Language Models for Information Retrieval

The document provides background information on Christopher Manning, Prabhakar Raghavan, and Hinrich Schutze, who are authors of the book "Introduction to Information Retrieval: Language models for information retrieval". It then outlines the presentation which discusses language models for information retrieval, including query likelihood models, estimating query generation probabilities, and experiments comparing language modeling approaches to other IR techniques.

Topic modeling

- Topic modeling uses statistical models like LDA to discover abstract topics that occur in a collection of documents. LDA represents documents as mixtures of topics that generate words with certain probabilities.

- Three approaches to topic modeling on social media were discussed: 1) applying LDA to each user, 2) applying LDA to the top K influential users based on PageRank, and 3) detecting communities, then applying LDA to each community.

- Due to the large dataset, approach 1 was infeasible. Approach 2 was used but had limitations if all influential users belonged to one community. Approach 3, using community detection prior to LDA, is the best approach but requires further development of suitable community detection algorithms for large graphs.

Doc format.

This document summarizes Jessica Hullman's project modeling word sense disambiguation using support vector machines. She used a dataset from Senseval-2 and achieved an average accuracy of 87% at assigning word senses, with a standard deviation of 15% and median of 92%. The project involved modifying an existing implementation that used part-of-speech tags of neighboring words to classify word senses, training support vector machine classifiers on the Senseval-2 data.

A review on Exploiting experts’ knowledge for structure learning of bayesian ...

It is A review on a paper titled "Exploiting experts’ knowledge for structure learning of Bayesian networks" published in 2017.

Unit 1 Introduction to Data Compression

This document provides an introduction to data compression techniques. It discusses lossless and lossy compression methods. Lossless compression removes statistical redundancy without any loss of information, while lossy compression removes unnecessary or less important information, providing higher compression but allowing the reconstructed data to differ from the original. The document also covers measures of compression performance such as compression ratio, rate of compression, and distortion for lossy techniques. Overall, the document serves as an introduction and overview of key concepts in data compression.

Linked Data Quality Assessment – daQ and Luzzu

Presentation at the Ontology Engineering Group at UPM related to Linked Data Quality and the work done in the Enterprise Information System Group at Universität Bonn

Hybrid ga svm for efficient feature selection in e-mail classification

1. The document discusses using a hybrid genetic algorithm-support vector machine (GA-SVM) approach for feature selection in email classification to improve SVM performance.

2. SVM has been shown to be inefficient and consume a lot of computational resources when classifying large email datasets with many features.

3. The hybrid GA-SVM approach uses a genetic algorithm to optimize feature selection for SVM in order to improve classification accuracy and reduce computation time for email spam detection.

11.hybrid ga svm for efficient feature selection in e-mail classification

The document summarizes a study that develops a hybrid genetic algorithm-support vector machine (GA-SVM) technique for feature selection in email classification. The technique uses a genetic algorithm to optimize the feature selection and parameters of an SVM classifier. The goal is to improve the SVM's classification accuracy and reduce computation time for large email datasets. The study tests the hybrid GA-SVM approach on a spam email dataset. The results show improvements in classification accuracy and computation time over using SVM alone.

Resume - Orit Eistein

This document is a resume for Orit Einstein. It summarizes her educational and professional background, including an MSc and BSc in Engineering from Tel Aviv University. Her professional experience includes 4 years of research experience and skills in image acquisition, processing, analysis and modeling using MATLAB. She also has experience analyzing quantitative and qualitative data from her time serving as a Behavioral Science Examiner in the IDF Intelligence Corps.

A New Active Learning Technique Using Furthest Nearest Neighbour Criterion fo...

Active learning is a supervised learning method that is based on the idea that a machine learning algorithm can achieve greater accuracy with fewer labelled training images if it is allowed to choose the image from which it learns. Facial age classification is a technique to classify face images into one of the several predefined age groups. The proposed study applies an active learning approach to facial age classification which allows a classifier to select the data from which it learns. The classifier is initially trained using a small pool of labeled training images. This is achieved by using the bilateral two dimension linear discriminant analysis. Then the most informative unlabeled image is found out from the unlabeled pool using the furthest nearest neighbor criterion, labeled by the user and added to the

appropriate class in the training set. The incremental learning is performed using an incremental version of bilateral two dimension linear discriminant analysis. This active learning paradigm is proposed to be applied to the k nearest neighbor classifier and the support vector machine classifier and to compare the performance of these two classifiers.

IMPROVED SENTIMENT ANALYSIS USING A CUSTOMIZED DISTILBERT NLP CONFIGURATION

The document presents the results of a study that compares several natural language processing (NLP) techniques for sentiment analysis: distilBERT, VADER, and LSTM. DistilBERT achieved the highest accuracy of 92.4% for predicting sentiment polarity in a restaurant review dataset, significantly outperforming VADER (72.3% accuracy) and conventional LSTM approaches (78% accuracy). The document describes the methodology used in the comparative study and provides details on how each NLP technique approaches the task of sentiment analysis.

Deep Attention Model for Triage of Emergency Department Patients - Djordje Gl...

Deep Attention Model for Triage of Emergency Department Patients - Djordje Gl...Institute of Contemporary Sciences

"Optimization of patient throughput and wait time in emergency departments (ED) is an important task for hospital systems. For that reason, Emergency Severity Index (ESI) system for patient triage was introduced to help guide manual estimation of acuity levels, which is used by nurses to rank the patients and organize hospital resources. However, despite improvements that it brought to managing medical resources, such triage system greatly depends on nurse’s subjective judgment and is thus prone to human errors. Here, we propose a novel deep model based on the word attention mechanism designed for predicting a number of resources an ED patient would need.

Our approach incorporates routinely available continuous and nominal (structured) data with medical text (unstructured) data, including patient’s chief complaint, past medical history, medication list, and nurse assessment collected for 338,500 ED visits over three years in a large urban hospital. Using both structured and unstructured data, the proposed approach achieves the AUC of 88% for the task of identifying resource intensive patients, and the accuracy of 44% for predicting exact category of number of resources, giving an estimated lift over nurses’ performance by 16% in accuracy. Furthermore, the attention mechanism of the proposed model provides interpretability by assigning attention scores for nurses’ notes which is crucial for decision making and implementation of such approaches in the real systems working on human health."313 IDS _Course_Introduction_PPT.pptx

This document provides information for the course "Introduction to Data Science" (ITEC-313) at Jazan University. The course is a required 3 credit hour course consisting of 2 hours of theory and 2 hours of lab per week. The course objectives are to describe data science and the needed skill sets, understand the data science process and how its components interact, carry out basic statistical modeling and analysis, and apply the data science process in a case study. Topics covered include data collection/integration, exploratory data analysis, predictive/descriptive modeling, and effective communication. The course aims to equip students with basic data science principles, concepts, techniques and tools. It will be assessed through assignments, exams, quizzes

More Related Content

What's hot

Assessment of Programming Language Reliability Utilizing Soft-Computing

The document discusses assessing programming language reliability using soft computing techniques like fuzzy logic and genetic algorithms. It proposes using these methods to model programming language reliability based on linguistic variables like "Reliable", "Moderately Reliable", and "Not Reliable". The key factors examined for determining a programming language's reliability include syntax consistency, semantic consistency, error handling, modularity, and documentation. A soft computing system is simulated to evaluate programming languages based on these reliability criteria.

NLP_Project_Paper_up276_vec241

The document discusses two neural network models for reading comprehension tasks: the Attentive Reader model proposed by Herman et al. in 2015 and the Stanford Reader model proposed by Chen et al. in 2016. The author implemented a two-layer attention model inspired by these previous models that achieves a 1.5% higher accuracy on reading comprehension tasks compared to the Stanford Reader.

Дмитрий Ветров. Математика больших данных: тензоры, нейросети, байесовский вы...

Лекция одного из самых известных в России специалистов по машинному обучению Дмитрия Ветрова, который руководит департаментом больших данных и информационного поиска на факультете компьютерных наук, работающим во ВШЭ при поддержке Яндекса.

Machine Reading Using Neural Machines (talk at Microsoft Research Faculty Sum...

The document discusses machine reading using neural machines. It presents goals of fact checking claims and understanding scientific publications. It outlines challenges in tasks like stance detection on tweets and summarizing scientific papers. These include interpreting statements based on the target or headline, handling unseen targets, and the small size of benchmark datasets which makes neural machine reading computationally costly.

Genetic algorithms vs Traditional algorithms

Genetic algorithms and traditional algorithms differ in their definitions, usages, and complexity. Genetic algorithms are based on genetics and natural selection, and help find optimal solutions to difficult problems. They are more advanced than traditional algorithms which provide step-by-step procedures. Genetic algorithms are used in fields like machine learning and artificial intelligence, while traditional algorithms are used in programming and mathematics.

NLP and its application in Insurance -Short story presentation

Powerpoint Presentation for the short story assignment.

Master's In Data Science at San Jose State University

CMPE-258

Image references: https://www.einfochips.com/

Not Good Enough but Try Again! Mitigating the Impact of Rejections on New Con...

Presentation at the University of Miami on 3 December 2021 on how Stack Overflow improved the retention of new contributors whose initial question is rejected (closed) as substandard. The presentation is based on a paper coauthored with Sunil Wattal.

4 de47584

This document summarizes a journal article that evaluates an e-assessment system for automatically scoring short-answer free-text questions. The system uses natural language processing techniques to match student responses to predefined model answers. A study was conducted using this system to assess Open University students. Results found the system could accurately score responses, with accuracy similar to or greater than human markers. The system provides immediate feedback to students to help them learn from mistakes.

Getting better at detecting anomalies by using ensembles

Ensemble learning combines many algorithms or models to obtain better predictive performance. Ensembles have produced the winning algorithm in competitions such as the Netflix Prize. They are used in climate modelling and relied upon to make daily forecasts.

In this talk we will explore an anomaly detection ensemble. Anomaly detection is used is many practical applications including detecting intrusions in computer networks. Anomaly detection is generally an unsupervised task, that is, we do not train models using labelled data. Constructing an unsupervised anomaly detection ensemble is challenging because we do not know the labels.

We use Item Response Theory (IRT) – a class of models used in educational psychometrics – to construct an unsupervised anomaly detection ensemble. IRT’s latent trait computation lends itself to anomaly detection because the latent trait can be used to uncover the hidden ground truth (labels). Using a novel IRT mapping to the anomaly detection problem, we construct an ensemble that can downplay noisy, non-discriminatory methods and accentuate sharper methods.

Language Models for Information Retrieval

The document provides background information on Christopher Manning, Prabhakar Raghavan, and Hinrich Schutze, who are authors of the book "Introduction to Information Retrieval: Language models for information retrieval". It then outlines the presentation which discusses language models for information retrieval, including query likelihood models, estimating query generation probabilities, and experiments comparing language modeling approaches to other IR techniques.

Topic modeling

- Topic modeling uses statistical models like LDA to discover abstract topics that occur in a collection of documents. LDA represents documents as mixtures of topics that generate words with certain probabilities.

- Three approaches to topic modeling on social media were discussed: 1) applying LDA to each user, 2) applying LDA to the top K influential users based on PageRank, and 3) detecting communities, then applying LDA to each community.

- Due to the large dataset, approach 1 was infeasible. Approach 2 was used but had limitations if all influential users belonged to one community. Approach 3, using community detection prior to LDA, is the best approach but requires further development of suitable community detection algorithms for large graphs.

Doc format.

This document summarizes Jessica Hullman's project modeling word sense disambiguation using support vector machines. She used a dataset from Senseval-2 and achieved an average accuracy of 87% at assigning word senses, with a standard deviation of 15% and median of 92%. The project involved modifying an existing implementation that used part-of-speech tags of neighboring words to classify word senses, training support vector machine classifiers on the Senseval-2 data.

A review on Exploiting experts’ knowledge for structure learning of bayesian ...

It is A review on a paper titled "Exploiting experts’ knowledge for structure learning of Bayesian networks" published in 2017.

Unit 1 Introduction to Data Compression

This document provides an introduction to data compression techniques. It discusses lossless and lossy compression methods. Lossless compression removes statistical redundancy without any loss of information, while lossy compression removes unnecessary or less important information, providing higher compression but allowing the reconstructed data to differ from the original. The document also covers measures of compression performance such as compression ratio, rate of compression, and distortion for lossy techniques. Overall, the document serves as an introduction and overview of key concepts in data compression.

Linked Data Quality Assessment – daQ and Luzzu

Presentation at the Ontology Engineering Group at UPM related to Linked Data Quality and the work done in the Enterprise Information System Group at Universität Bonn

Hybrid ga svm for efficient feature selection in e-mail classification

1. The document discusses using a hybrid genetic algorithm-support vector machine (GA-SVM) approach for feature selection in email classification to improve SVM performance.

2. SVM has been shown to be inefficient and consume a lot of computational resources when classifying large email datasets with many features.

3. The hybrid GA-SVM approach uses a genetic algorithm to optimize feature selection for SVM in order to improve classification accuracy and reduce computation time for email spam detection.

11.hybrid ga svm for efficient feature selection in e-mail classification

The document summarizes a study that develops a hybrid genetic algorithm-support vector machine (GA-SVM) technique for feature selection in email classification. The technique uses a genetic algorithm to optimize the feature selection and parameters of an SVM classifier. The goal is to improve the SVM's classification accuracy and reduce computation time for large email datasets. The study tests the hybrid GA-SVM approach on a spam email dataset. The results show improvements in classification accuracy and computation time over using SVM alone.

Resume - Orit Eistein

This document is a resume for Orit Einstein. It summarizes her educational and professional background, including an MSc and BSc in Engineering from Tel Aviv University. Her professional experience includes 4 years of research experience and skills in image acquisition, processing, analysis and modeling using MATLAB. She also has experience analyzing quantitative and qualitative data from her time serving as a Behavioral Science Examiner in the IDF Intelligence Corps.

A New Active Learning Technique Using Furthest Nearest Neighbour Criterion fo...

Active learning is a supervised learning method that is based on the idea that a machine learning algorithm can achieve greater accuracy with fewer labelled training images if it is allowed to choose the image from which it learns. Facial age classification is a technique to classify face images into one of the several predefined age groups. The proposed study applies an active learning approach to facial age classification which allows a classifier to select the data from which it learns. The classifier is initially trained using a small pool of labeled training images. This is achieved by using the bilateral two dimension linear discriminant analysis. Then the most informative unlabeled image is found out from the unlabeled pool using the furthest nearest neighbor criterion, labeled by the user and added to the

appropriate class in the training set. The incremental learning is performed using an incremental version of bilateral two dimension linear discriminant analysis. This active learning paradigm is proposed to be applied to the k nearest neighbor classifier and the support vector machine classifier and to compare the performance of these two classifiers.

IMPROVED SENTIMENT ANALYSIS USING A CUSTOMIZED DISTILBERT NLP CONFIGURATION

The document presents the results of a study that compares several natural language processing (NLP) techniques for sentiment analysis: distilBERT, VADER, and LSTM. DistilBERT achieved the highest accuracy of 92.4% for predicting sentiment polarity in a restaurant review dataset, significantly outperforming VADER (72.3% accuracy) and conventional LSTM approaches (78% accuracy). The document describes the methodology used in the comparative study and provides details on how each NLP technique approaches the task of sentiment analysis.

What's hot (20)

Assessment of Programming Language Reliability Utilizing Soft-Computing

Assessment of Programming Language Reliability Utilizing Soft-Computing

Дмитрий Ветров. Математика больших данных: тензоры, нейросети, байесовский вы...

Дмитрий Ветров. Математика больших данных: тензоры, нейросети, байесовский вы...

Machine Reading Using Neural Machines (talk at Microsoft Research Faculty Sum...

Machine Reading Using Neural Machines (talk at Microsoft Research Faculty Sum...

NLP and its application in Insurance -Short story presentation

NLP and its application in Insurance -Short story presentation

Not Good Enough but Try Again! Mitigating the Impact of Rejections on New Con...

Not Good Enough but Try Again! Mitigating the Impact of Rejections on New Con...

Getting better at detecting anomalies by using ensembles

Getting better at detecting anomalies by using ensembles

A review on Exploiting experts’ knowledge for structure learning of bayesian ...

A review on Exploiting experts’ knowledge for structure learning of bayesian ...

Hybrid ga svm for efficient feature selection in e-mail classification

Hybrid ga svm for efficient feature selection in e-mail classification

11.hybrid ga svm for efficient feature selection in e-mail classification

11.hybrid ga svm for efficient feature selection in e-mail classification

A New Active Learning Technique Using Furthest Nearest Neighbour Criterion fo...

A New Active Learning Technique Using Furthest Nearest Neighbour Criterion fo...

IMPROVED SENTIMENT ANALYSIS USING A CUSTOMIZED DISTILBERT NLP CONFIGURATION

IMPROVED SENTIMENT ANALYSIS USING A CUSTOMIZED DISTILBERT NLP CONFIGURATION

Similar to Ran zhou poster 2018

Deep Attention Model for Triage of Emergency Department Patients - Djordje Gl...

Deep Attention Model for Triage of Emergency Department Patients - Djordje Gl...Institute of Contemporary Sciences

"Optimization of patient throughput and wait time in emergency departments (ED) is an important task for hospital systems. For that reason, Emergency Severity Index (ESI) system for patient triage was introduced to help guide manual estimation of acuity levels, which is used by nurses to rank the patients and organize hospital resources. However, despite improvements that it brought to managing medical resources, such triage system greatly depends on nurse’s subjective judgment and is thus prone to human errors. Here, we propose a novel deep model based on the word attention mechanism designed for predicting a number of resources an ED patient would need.

Our approach incorporates routinely available continuous and nominal (structured) data with medical text (unstructured) data, including patient’s chief complaint, past medical history, medication list, and nurse assessment collected for 338,500 ED visits over three years in a large urban hospital. Using both structured and unstructured data, the proposed approach achieves the AUC of 88% for the task of identifying resource intensive patients, and the accuracy of 44% for predicting exact category of number of resources, giving an estimated lift over nurses’ performance by 16% in accuracy. Furthermore, the attention mechanism of the proposed model provides interpretability by assigning attention scores for nurses’ notes which is crucial for decision making and implementation of such approaches in the real systems working on human health."313 IDS _Course_Introduction_PPT.pptx

This document provides information for the course "Introduction to Data Science" (ITEC-313) at Jazan University. The course is a required 3 credit hour course consisting of 2 hours of theory and 2 hours of lab per week. The course objectives are to describe data science and the needed skill sets, understand the data science process and how its components interact, carry out basic statistical modeling and analysis, and apply the data science process in a case study. Topics covered include data collection/integration, exploratory data analysis, predictive/descriptive modeling, and effective communication. The course aims to equip students with basic data science principles, concepts, techniques and tools. It will be assessed through assignments, exams, quizzes

Text Analytics for Legal work

AlgoAnalytics is an analytics consultancy that uses advanced mathematical techniques and machine learning to solve business problems for clients across various industries. It has over 30 data scientists with expertise in mathematics, engineering, and cutting-edge methodologies like deep learning. AlgoAnalytics works closely with domain experts to effectively model problems and develop predictive analytics solutions using structured, text, image, sound, and other types of data. Some of its service offerings include contracts management, document decomposition, sentiment analysis, and predictive maintenance. The company is led by CEO and founder Aniruddha Pant, who has over 20 years of experience applying machine learning and analytics to academic and enterprise challenges.

لموعد الإثنين 03 يناير 2022 143 مبادرة #تواصل_تطوير المحاضرة ال 143 من المباد...

لموعد الإثنين 03 يناير 2022 143 مبادرة #تواصل_تطوير المحاضرة ال 143 من المباد...Egyptian Engineers Association

الموعد الإثنين 03 يناير 2022

143

مبادرة

#تواصل_تطوير

المحاضرة ال 143 من المبادرة

المهندس / محمد الرافعي طرباي

نقيب المبرمجين بالدقهلية

بعنوان

"IT INDUSTRY"

How To Getting Into IT With Zero Experience

وذلك يوم الإثنين 03 يناير2022

السابعة مساء توقيت القاهرة

الثامنة مساء توقيت مكة المكرمة

و الحضور من تطبيق زووم

https://us02web.zoom.us/meeting/register/tZUpf-GsrD4jH9N9AxO39J013c1D4bqJNTcu

علما ان هناك بث مباشر للمحاضرة على القنوات الخاصة بجمعية المهندسين المصريين

ونأمل أن نوفق في تقديم ما ينفع المهندس ومهمة الهندسة في عالمنا العربي

والله الموفق

للتواصل مع إدارة المبادرة عبر قناة التليجرام

https://t.me/EEAKSA

ومتابعة المبادرة والبث المباشر عبر نوافذنا المختلفة

رابط اللينكدان والمكتبة الالكترونية

https://www.linkedin.com/company/eeaksa-egyptian-engineers-association/

رابط قناة التويتر

https://twitter.com/eeaksa

رابط قناة الفيسبوك

https://www.facebook.com/EEAKSA

رابط قناة اليوتيوب

https://www.youtube.com/user/EEAchannal

رابط التسجيل العام للمحاضرات

https://forms.gle/vVmw7L187tiATRPw9

ملحوظة : توجد شهادات حضور مجانية لمن يسجل فى رابط التقيم اخر المحاضرةNLP Techniques for Text Classification.docx

Natural Language Processing (NLP) is an area of computer science and artificial intelligence that aims to enable machines to understand and interpret human language. Text classification is one of the most common tasks in NLP, and it involves categorizing text into predefined categories or classes. In this blog post, we will explore some of the most effective NLP techniques for text classification.

Data science lecture4_doaa_mohey

The document presents an overview of data classification by Doaa Mohey Eldin. It begins with defining data classification as the process of organizing data into classes based on attributes. It then discusses key terminology, why data classification is used, and how it can be applied to problems like disease classification. The main data classification techniques covered include logistic regression, naive bayes, decision trees, random forests, support vector machines, and deep learning models like convolutional neural networks. The document provides details on each technique's definition, advantages, and disadvantages.

Data Analysis in Research: Descriptive Statistics & Normality

This document discusses different types of data and data analysis techniques used in research. It defines data as any set of characters gathered for analysis. Research data can take many forms including documents, laboratory notes, questionnaires, and digital outputs. There are two main types of data: quantitative data which can be measured numerically, and qualitative data involving words and symbols. Common quantitative analysis techniques described are descriptive statistics to summarize variables and inferential statistics to understand relationships. Qualitative analysis techniques include content analysis, narrative analysis and grounded theory.

Machine learning meets user analytics - Metageni tech talk

Data analytics experts Metageni briefly explain how global information giant LexisNexis models user success from user analytics data using machine learning. A Moo.com tech talk for analysts and engineers with an interest in data science, covering the high level classifier method used in support of LexisNexis, working with their global digital team.

The Pupil Has Become the Master: Teacher-Student Model-Based Word Embedding D...

Recent advances in deep learning have facilitated the demand of neural models for real applications. In practice, these applications often need to be deployed with limited resources while keeping high accuracy. This paper touches the core of neural models in NLP, word embeddings, and presents a new embedding distillation framework that remarkably reduces the dimension of word embeddings without compromising accuracy. A novel distillation ensemble approach is also proposed that trains a high-efficient student model using multiple teacher models. In our approach, the teacher models play roles only during training such that the student model operates on its own without getting supports from the teacher models during decoding, which makes it eighty times faster and lighter than other typical ensemble methods. All models are evaluated on seven document classification datasets and show significant advantage over the teacher models for most cases. Our analysis depicts insightful transformation of word embeddings from distillation and suggests a future direction to ensemble approaches using neural models.

IRJET-Classifying Mined Online Discussion Data for Reflective Thinking based ...

This document presents a methodology for classifying mined online discussion data to identify reflective thinking based on ontology. It involves the following steps:

1. Collecting online discussion data and preprocessing it by removing stop words and punctuation.

2. Implementing inductive content analysis to categorize the data into six types of reflective thinking.

3. Training a Naive Bayes classifier on the categorized data to classify new data.

4. Applying the trained model to large scale unlabeled online discussion data.

5. Using ontology to provide a deeper classification of topics in the data beyond the six reflective thinking categories. This allows extraction of additional knowledge from the classified text data.

Hypothesis on Different Data Mining Algorithms

In this paper, different classification algorithms for data mining are discussed. Data Mining is about

explaining the past & predicting the future by means of data analysis. Classification is a task of data mining,

which categories data based on numerical or categorical variables. To classify the data many algorithms are

proposed, out of them five algorithms are comparatively studied for data mining through classification. There are

four different classification approaches namely Frequency Table, Covariance Matrix, Similarity Functions &

Others. As work for research on classification methods, algorithms like Naive Bayesian, K Nearest Neighbors,

Decision Tree, Artificial Neural Network & Support Vector Machine are studied & examined using benchmark

datasets like Iris & Lung Cancer.

Identifying and classifying unknown Network Disruption

This document discusses identifying and classifying unknown network disruptions using machine learning algorithms. It begins by introducing the problem and importance of identifying network disruptions. Then it discusses related work on classifying network protocols. The document outlines the dataset and problem statement of predicting fault severity. It describes the machine learning workflow and various algorithms like random forest, decision tree and gradient boosting that are evaluated on the dataset. Finally, it concludes with achieving the objective of classifying disruptions and discusses future work like optimizing features and using neural networks.

Document Analyser Using Deep Learning

The document presents a method for document analysis using deep learning. It proposes a multi-modal transformer model to jointly analyze the text, layout, and visual features of documents. The model is trained on various types of documents like marksheets and certificates. It uses techniques like optical character recognition (OCR) to extract text and analyze layout and formatting to classify documents into categories like marksheets or certificates with significant accuracy. The methodology involves preprocessing documents, extracting features, encoding text into vectors, and using classification algorithms like naive bayes or random forest for classification. The model is able to accurately classify sample input documents into predefined classes.

IRJET- Deep Learning Model to Predict Hardware Performance

This document discusses using deep learning models to predict hardware performance. Specifically, it aims to predict benchmark scores from hardware configurations, or predict configurations from scores. It explores various machine learning algorithms like linear regression, logistic regression, and multi-linear regression on hardware performance data. The best results were from backward elimination and linear regression, achieving over 80% accuracy. Data preprocessing like encoding was important. The model can help analyze hardware performance more quickly than manual methods.

IRJET- Analysis of PV Fed Vector Controlled Induction Motor Drive

The document describes a project to develop a deep learning model to predict hardware performance. The model takes hardware configuration parameters like CPU, memory, etc. as input and predicts benchmark scores. The authors preprocessed data, tested various regression models like linear regression and lasso regression, and techniques like backward elimination and cross-validation. Their best model used backward elimination and linear regression, achieving 80.82% accuracy. The project aims to automate hardware performance analysis and prediction to save time compared to manual methods.

J48 and JRIP Rules for E-Governance Data

Data are any facts, numbers, or text that can be processed by a computer. Data Mining is an analytic process which designed to explore data usually large amounts of data. Data Mining is often considered to be \"a blend of statistics. In this paper we have used two data mining techniques for discovering classification rules and generating a decision tree. These techniques are J48 and JRIP. Data mining tools WEKA is used in this paper.

An Efficient PSO Based Ensemble Classification Model on High Dimensional Data...

This summary provides the high-level information from the document in 3 sentences:

The document proposes a Particle Swarm Optimization (PSO) based ensemble classification model to improve classification of high-dimensional biomedical datasets. It develops an optimized PSO technique to select optimal features and initialize weights for base classifiers in the ensemble model. Experimental results on microarray datasets show the proposed model achieves higher accuracy, true positive rate, and lower error rate compared to traditional feature selection based classification models.

AN EFFICIENT PSO BASED ENSEMBLE CLASSIFICATION MODEL ON HIGH DIMENSIONAL DATA...

As the size of the biomedical databases are growing day by day, finding an essential features in the disease prediction have become more complex due to high dimensionality and sparsity problems. Also, due to the

availability of a large number of micro-array datasets in the biomedical repositories, it is difficult to analyze, predict and interpret the feature information using the traditional feature selection based classification models. Most of the traditional feature selection based classification algorithms have computational issues such as dimension reduction, uncertainty and class imbalance on microarray datasets. Ensemble classifier is one of the scalable models for extreme learning machine due to its high efficiency, the fast processing speed for real-time applications. The main objective of the feature selection

based ensemble learning models is to classify the high dimensional data with high computational efficiency

and high true positive rate on high dimensional datasets. In this proposed model an optimized Particle swarm optimization (PSO) based Ensemble classification model was developed on high dimensional microarray

datasets. Experimental results proved that the proposed model has high computational efficiency compared to the traditional feature selection based classification models in terms of accuracy , true positive rate and error rate are concerned.

Muhammad Usman Akhtar | Ph.D Scholar | Wuhan University | School of Co...

1 Machine Learning (ML)

2 Ingredients for training ML

3 Types of ML algorithms

3.1 Supervised Learning

3.2 Un-supervised Learning

3.3 Reinforcement Learning

4 Deep Learning (DL)

4.1 Why DL useful

4.2 Application

5 Architectures

6 Activation Function

7 Popular Neural Network Architecture

7.1 Feedforward Neural network

7.2 Recurrent Neural network

7.3 Convolutional neural network

Similar to Ran zhou poster 2018 (20)

Deep Attention Model for Triage of Emergency Department Patients - Djordje Gl...

Deep Attention Model for Triage of Emergency Department Patients - Djordje Gl...

لموعد الإثنين 03 يناير 2022 143 مبادرة #تواصل_تطوير المحاضرة ال 143 من المباد...

لموعد الإثنين 03 يناير 2022 143 مبادرة #تواصل_تطوير المحاضرة ال 143 من المباد...

Data Analysis in Research: Descriptive Statistics & Normality

Data Analysis in Research: Descriptive Statistics & Normality

Machine learning meets user analytics - Metageni tech talk

Machine learning meets user analytics - Metageni tech talk

The Pupil Has Become the Master: Teacher-Student Model-Based Word Embedding D...

The Pupil Has Become the Master: Teacher-Student Model-Based Word Embedding D...

IRJET-Classifying Mined Online Discussion Data for Reflective Thinking based ...

IRJET-Classifying Mined Online Discussion Data for Reflective Thinking based ...

Identifying and classifying unknown Network Disruption

Identifying and classifying unknown Network Disruption

IRJET- Deep Learning Model to Predict Hardware Performance

IRJET- Deep Learning Model to Predict Hardware Performance

IRJET- Analysis of PV Fed Vector Controlled Induction Motor Drive

IRJET- Analysis of PV Fed Vector Controlled Induction Motor Drive

An Efficient PSO Based Ensemble Classification Model on High Dimensional Data...

An Efficient PSO Based Ensemble Classification Model on High Dimensional Data...

AN EFFICIENT PSO BASED ENSEMBLE CLASSIFICATION MODEL ON HIGH DIMENSIONAL DATA...

AN EFFICIENT PSO BASED ENSEMBLE CLASSIFICATION MODEL ON HIGH DIMENSIONAL DATA...

Muhammad Usman Akhtar | Ph.D Scholar | Wuhan University | School of Co...

Muhammad Usman Akhtar | Ph.D Scholar | Wuhan University | School of Co...

Recently uploaded

University of New South Wales degree offer diploma Transcript

澳洲UNSW毕业证书制作新南威尔士大学假文凭定制Q微168899991做UNSW留信网教留服认证海牙认证改UNSW成绩单GPA做UNSW假学位证假文凭高仿毕业证申请新南威尔士大学University of New South Wales degree offer diploma Transcript

一比一原版(Glasgow毕业证书)格拉斯哥大学毕业证如何办理

毕业原版【微信:41543339】【(Glasgow毕业证书)格拉斯哥大学毕业证】【微信:41543339】成绩单、外壳、offer、留信学历认证(永久存档真实可查)采用学校原版纸张、特殊工艺完全按照原版一比一制作(包括:隐形水印,阴影底纹,钢印LOGO烫金烫银,LOGO烫金烫银复合重叠,文字图案浮雕,激光镭射,紫外荧光,温感,复印防伪)行业标杆!精益求精,诚心合作,真诚制作!多年品质 ,按需精细制作,24小时接单,全套进口原装设备,十五年致力于帮助留学生解决难题,业务范围有加拿大、英国、澳洲、韩国、美国、新加坡,新西兰等学历材料,包您满意。

【我们承诺采用的是学校原版纸张(纸质、底色、纹路),我们拥有全套进口原装设备,特殊工艺都是采用不同机器制作,仿真度基本可以达到100%,所有工艺效果都可提前给客户展示,不满意可以根据客户要求进行调整,直到满意为止!】

【业务选择办理准则】

一、工作未确定,回国需先给父母、亲戚朋友看下文凭的情况,办理一份就读学校的毕业证【微信41543339】文凭即可

二、回国进私企、外企、自己做生意的情况,这些单位是不查询毕业证真伪的,而且国内没有渠道去查询国外文凭的真假,也不需要提供真实教育部认证。鉴于此,办理一份毕业证【微信41543339】即可

三、进国企,银行,事业单位,考公务员等等,这些单位是必需要提供真实教育部认证的,办理教育部认证所需资料众多且烦琐,所有材料您都必须提供原件,我们凭借丰富的经验,快捷的绿色通道帮您快速整合材料,让您少走弯路。

留信网认证的作用:

1:该专业认证可证明留学生真实身份

2:同时对留学生所学专业登记给予评定

3:国家专业人才认证中心颁发入库证书

4:这个认证书并且可以归档倒地方

5:凡事获得留信网入网的信息将会逐步更新到个人身份内,将在公安局网内查询个人身份证信息后,同步读取人才网入库信息

6:个人职称评审加20分

7:个人信誉贷款加10分

8:在国家人才网主办的国家网络招聘大会中纳入资料,供国家高端企业选择人才

留信网服务项目:

1、留学生专业人才库服务(留信分析)

2、国(境)学习人员提供就业推荐信服务

3、留学人员区块链存储服务

→ 【关于价格问题(保证一手价格)】

我们所定的价格是非常合理的,而且我们现在做得单子大多数都是代理和回头客户介绍的所以一般现在有新的单子 我给客户的都是第一手的代理价格,因为我想坦诚对待大家 不想跟大家在价格方面浪费时间

对于老客户或者被老客户介绍过来的朋友,我们都会适当给一些优惠。

选择实体注册公司办理,更放心,更安全!我们的承诺:客户在留信官方认证查询网站查询到认证通过结果后付款,不成功不收费!

原版制作(Deakin毕业证书)迪肯大学毕业证学位证一模一样

学校原件一模一样【微信:741003700 】《(Deakin毕业证书)迪肯大学毕业证学位证》【微信:741003700 】学位证,留信认证(真实可查,永久存档)原件一模一样纸张工艺/offer、雅思、外壳等材料/诚信可靠,可直接看成品样本,帮您解决无法毕业带来的各种难题!外壳,原版制作,诚信可靠,可直接看成品样本。行业标杆!精益求精,诚心合作,真诚制作!多年品质 ,按需精细制作,24小时接单,全套进口原装设备。十五年致力于帮助留学生解决难题,包您满意。

本公司拥有海外各大学样板无数,能完美还原。

1:1完美还原海外各大学毕业材料上的工艺:水印,阴影底纹,钢印LOGO烫金烫银,LOGO烫金烫银复合重叠。文字图案浮雕、激光镭射、紫外荧光、温感、复印防伪等防伪工艺。材料咨询办理、认证咨询办理请加学历顾问Q/微741003700

【主营项目】

一.毕业证【q微741003700】成绩单、使馆认证、教育部认证、雅思托福成绩单、学生卡等!

二.真实使馆公证(即留学回国人员证明,不成功不收费)

三.真实教育部学历学位认证(教育部存档!教育部留服网站永久可查)

四.办理各国各大学文凭(一对一专业服务,可全程监控跟踪进度)

如果您处于以下几种情况:

◇在校期间,因各种原因未能顺利毕业……拿不到官方毕业证【q/微741003700】

◇面对父母的压力,希望尽快拿到;

◇不清楚认证流程以及材料该如何准备;

◇回国时间很长,忘记办理;

◇回国马上就要找工作,办给用人单位看;

◇企事业单位必须要求办理的

◇需要报考公务员、购买免税车、落转户口

◇申请留学生创业基金

留信网认证的作用:

1:该专业认证可证明留学生真实身份

2:同时对留学生所学专业登记给予评定

3:国家专业人才认证中心颁发入库证书

4:这个认证书并且可以归档倒地方

5:凡事获得留信网入网的信息将会逐步更新到个人身份内,将在公安局网内查询个人身份证信息后,同步读取人才网入库信息

6:个人职称评审加20分

7:个人信誉贷款加10分

8:在国家人才网主办的国家网络招聘大会中纳入资料,供国家高端企业选择人才

在线办理(英国UCA毕业证书)创意艺术大学毕业证在读证明一模一样

学校原件一模一样【微信:741003700 】《(英国UCA毕业证书)创意艺术大学毕业证》【微信:741003700 】学位证,留信认证(真实可查,永久存档)原件一模一样纸张工艺/offer、雅思、外壳等材料/诚信可靠,可直接看成品样本,帮您解决无法毕业带来的各种难题!外壳,原版制作,诚信可靠,可直接看成品样本。行业标杆!精益求精,诚心合作,真诚制作!多年品质 ,按需精细制作,24小时接单,全套进口原装设备。十五年致力于帮助留学生解决难题,包您满意。

本公司拥有海外各大学样板无数,能完美还原。

1:1完美还原海外各大学毕业材料上的工艺:水印,阴影底纹,钢印LOGO烫金烫银,LOGO烫金烫银复合重叠。文字图案浮雕、激光镭射、紫外荧光、温感、复印防伪等防伪工艺。材料咨询办理、认证咨询办理请加学历顾问Q/微741003700

【主营项目】

一.毕业证【q微741003700】成绩单、使馆认证、教育部认证、雅思托福成绩单、学生卡等!

二.真实使馆公证(即留学回国人员证明,不成功不收费)

三.真实教育部学历学位认证(教育部存档!教育部留服网站永久可查)

四.办理各国各大学文凭(一对一专业服务,可全程监控跟踪进度)

如果您处于以下几种情况:

◇在校期间,因各种原因未能顺利毕业……拿不到官方毕业证【q/微741003700】

◇面对父母的压力,希望尽快拿到;

◇不清楚认证流程以及材料该如何准备;

◇回国时间很长,忘记办理;

◇回国马上就要找工作,办给用人单位看;

◇企事业单位必须要求办理的

◇需要报考公务员、购买免税车、落转户口

◇申请留学生创业基金

留信网认证的作用:

1:该专业认证可证明留学生真实身份

2:同时对留学生所学专业登记给予评定

3:国家专业人才认证中心颁发入库证书

4:这个认证书并且可以归档倒地方

5:凡事获得留信网入网的信息将会逐步更新到个人身份内,将在公安局网内查询个人身份证信息后,同步读取人才网入库信息

6:个人职称评审加20分

7:个人信誉贷款加10分

8:在国家人才网主办的国家网络招聘大会中纳入资料,供国家高端企业选择人才

一比一原版(UCSB文凭证书)圣芭芭拉分校毕业证如何办理

毕业原版【微信:176555708】【(UCSB毕业证书)圣芭芭拉分校毕业证】【微信:176555708】成绩单、外壳、offer、留信学历认证(永久存档真实可查)采用学校原版纸张、特殊工艺完全按照原版一比一制作(包括:隐形水印,阴影底纹,钢印LOGO烫金烫银,LOGO烫金烫银复合重叠,文字图案浮雕,激光镭射,紫外荧光,温感,复印防伪)行业标杆!精益求精,诚心合作,真诚制作!多年品质 ,按需精细制作,24小时接单,全套进口原装设备,十五年致力于帮助留学生解决难题,业务范围有加拿大、英国、澳洲、韩国、美国、新加坡,新西兰等学历材料,包您满意。

【我们承诺采用的是学校原版纸张(纸质、底色、纹路),我们拥有全套进口原装设备,特殊工艺都是采用不同机器制作,仿真度基本可以达到100%,所有工艺效果都可提前给客户展示,不满意可以根据客户要求进行调整,直到满意为止!】

【业务选择办理准则】

一、工作未确定,回国需先给父母、亲戚朋友看下文凭的情况,办理一份就读学校的毕业证【微信176555708】文凭即可

二、回国进私企、外企、自己做生意的情况,这些单位是不查询毕业证真伪的,而且国内没有渠道去查询国外文凭的真假,也不需要提供真实教育部认证。鉴于此,办理一份毕业证【微信176555708】即可

三、进国企,银行,事业单位,考公务员等等,这些单位是必需要提供真实教育部认证的,办理教育部认证所需资料众多且烦琐,所有材料您都必须提供原件,我们凭借丰富的经验,快捷的绿色通道帮您快速整合材料,让您少走弯路。

留信网认证的作用:

1:该专业认证可证明留学生真实身份

2:同时对留学生所学专业登记给予评定

3:国家专业人才认证中心颁发入库证书

4:这个认证书并且可以归档倒地方

5:凡事获得留信网入网的信息将会逐步更新到个人身份内,将在公安局网内查询个人身份证信息后,同步读取人才网入库信息

6:个人职称评审加20分

7:个人信誉贷款加10分

8:在国家人才网主办的国家网络招聘大会中纳入资料,供国家高端企业选择人才

留信网服务项目:

1、留学生专业人才库服务(留信分析)

2、国(境)学习人员提供就业推荐信服务

3、留学人员区块链存储服务

→ 【关于价格问题(保证一手价格)】

我们所定的价格是非常合理的,而且我们现在做得单子大多数都是代理和回头客户介绍的所以一般现在有新的单子 我给客户的都是第一手的代理价格,因为我想坦诚对待大家 不想跟大家在价格方面浪费时间

对于老客户或者被老客户介绍过来的朋友,我们都会适当给一些优惠。

选择实体注册公司办理,更放心,更安全!我们的承诺:客户在留信官方认证查询网站查询到认证通过结果后付款,不成功不收费!

State of Artificial intelligence Report 2023

Artificial intelligence (AI) is a multidisciplinary field of science and engineering whose goal is to create intelligent machines.

We believe that AI will be a force multiplier on technological progress in our increasingly digital, data-driven world. This is because everything around us today, ranging from culture to consumer products, is a product of intelligence.

The State of AI Report is now in its sixth year. Consider this report as a compilation of the most interesting things we’ve seen with a goal of triggering an informed conversation about the state of AI and its implication for the future.

We consider the following key dimensions in our report:

Research: Technology breakthroughs and their capabilities.

Industry: Areas of commercial application for AI and its business impact.

Politics: Regulation of AI, its economic implications and the evolving geopolitics of AI.

Safety: Identifying and mitigating catastrophic risks that highly-capable future AI systems could pose to us.

Predictions: What we believe will happen in the next 12 months and a 2022 performance review to keep us honest.

The Building Blocks of QuestDB, a Time Series Database

Talk Delivered at Valencia Codes Meetup 2024-06.

Traditionally, databases have treated timestamps just as another data type. However, when performing real-time analytics, timestamps should be first class citizens and we need rich time semantics to get the most out of our data. We also need to deal with ever growing datasets while keeping performant, which is as fun as it sounds.

It is no wonder time-series databases are now more popular than ever before. Join me in this session to learn about the internal architecture and building blocks of QuestDB, an open source time-series database designed for speed. We will also review a history of some of the changes we have gone over the past two years to deal with late and unordered data, non-blocking writes, read-replicas, or faster batch ingestion.

STATATHON: Unleashing the Power of Statistics in a 48-Hour Knowledge Extravag...

"Join us for STATATHON, a dynamic 2-day event dedicated to exploring statistical knowledge and its real-world applications. From theory to practice, participants engage in intensive learning sessions, workshops, and challenges, fostering a deeper understanding of statistical methodologies and their significance in various fields."

Population Growth in Bataan: The effects of population growth around rural pl...

A population analysis specific to Bataan.

4th Modern Marketing Reckoner by MMA Global India & Group M: 60+ experts on W...

The Modern Marketing Reckoner (MMR) is a comprehensive resource packed with POVs from 60+ industry leaders on how AI is transforming the 4 key pillars of marketing – product, place, price and promotions.

一比一原版(UofS毕业证书)萨省大学毕业证如何办理

原版定制【微信:41543339】【(UofS毕业证书)萨省大学毕业证】【微信:41543339】成绩单、外壳、offer、留信学历认证(永久存档真实可查)采用学校原版纸张、特殊工艺完全按照原版一比一制作(包括:隐形水印,阴影底纹,钢印LOGO烫金烫银,LOGO烫金烫银复合重叠,文字图案浮雕,激光镭射,紫外荧光,温感,复印防伪)行业标杆!精益求精,诚心合作,真诚制作!多年品质 ,按需精细制作,24小时接单,全套进口原装设备,十五年致力于帮助留学生解决难题,业务范围有加拿大、英国、澳洲、韩国、美国、新加坡,新西兰等学历材料,包您满意。

【我们承诺采用的是学校原版纸张(纸质、底色、纹路),我们拥有全套进口原装设备,特殊工艺都是采用不同机器制作,仿真度基本可以达到100%,所有工艺效果都可提前给客户展示,不满意可以根据客户要求进行调整,直到满意为止!】

【业务选择办理准则】

一、工作未确定,回国需先给父母、亲戚朋友看下文凭的情况,办理一份就读学校的毕业证【微信41543339】文凭即可

二、回国进私企、外企、自己做生意的情况,这些单位是不查询毕业证真伪的,而且国内没有渠道去查询国外文凭的真假,也不需要提供真实教育部认证。鉴于此,办理一份毕业证【微信41543339】即可

三、进国企,银行,事业单位,考公务员等等,这些单位是必需要提供真实教育部认证的,办理教育部认证所需资料众多且烦琐,所有材料您都必须提供原件,我们凭借丰富的经验,快捷的绿色通道帮您快速整合材料,让您少走弯路。

留信网认证的作用:

1:该专业认证可证明留学生真实身份

2:同时对留学生所学专业登记给予评定

3:国家专业人才认证中心颁发入库证书

4:这个认证书并且可以归档倒地方

5:凡事获得留信网入网的信息将会逐步更新到个人身份内,将在公安局网内查询个人身份证信息后,同步读取人才网入库信息

6:个人职称评审加20分

7:个人信誉贷款加10分

8:在国家人才网主办的国家网络招聘大会中纳入资料,供国家高端企业选择人才

留信网服务项目:

1、留学生专业人才库服务(留信分析)

2、国(境)学习人员提供就业推荐信服务

3、留学人员区块链存储服务

→ 【关于价格问题(保证一手价格)】

我们所定的价格是非常合理的,而且我们现在做得单子大多数都是代理和回头客户介绍的所以一般现在有新的单子 我给客户的都是第一手的代理价格,因为我想坦诚对待大家 不想跟大家在价格方面浪费时间

对于老客户或者被老客户介绍过来的朋友,我们都会适当给一些优惠。

选择实体注册公司办理,更放心,更安全!我们的承诺:客户在留信官方认证查询网站查询到认证通过结果后付款,不成功不收费!

一比一原版(UIUC毕业证)伊利诺伊大学|厄巴纳-香槟分校毕业证如何办理

UIUC毕业证offer【微信95270640】☀《伊利诺伊大学|厄巴纳-香槟分校毕业证购买》GoogleQ微信95270640《UIUC毕业证模板办理》加拿大文凭、本科、硕士、研究生学历都可以做,二、业务范围:

★、全套服务:毕业证、成绩单、化学专业毕业证书伪造《伊利诺伊大学|厄巴纳-香槟分校大学毕业证》Q微信95270640《UIUC学位证书购买》

(诚招代理)办理国外高校毕业证成绩单文凭学位证,真实使馆公证(留学回国人员证明)真实留信网认证国外学历学位认证雅思代考国外学校代申请名校保录开请假条改GPA改成绩ID卡

1.高仿业务:【本科硕士】毕业证,成绩单(GPA修改),学历认证(教育部认证),大学Offer,,ID,留信认证,使馆认证,雅思,语言证书等高仿类证书;

2.认证服务: 学历认证(教育部认证),大使馆认证(回国人员证明),留信认证(可查有编号证书),大学保录取,雅思保分成绩单。

3.技术服务:钢印水印烫金激光防伪凹凸版设计印刷激凸温感光标底纹镭射速度快。

办理伊利诺伊大学|厄巴纳-香槟分校伊利诺伊大学|厄巴纳-香槟分校毕业证offer流程:

1客户提供办理信息:姓名生日专业学位毕业时间等(如信息不确定可以咨询顾问:我们有专业老师帮你查询);

2开始安排制作毕业证成绩单电子图;

3毕业证成绩单电子版做好以后发送给您确认;

4毕业证成绩单电子版您确认信息无误之后安排制作成品;

5成品做好拍照或者视频给您确认;

6快递给客户(国内顺丰国外DHLUPS等快读邮寄)

-办理真实使馆公证(即留学回国人员证明)

-办理各国各大学文凭(世界名校一对一专业服务,可全程监控跟踪进度)

-全套服务:毕业证成绩单真实使馆公证真实教育部认证。让您回国发展信心十足!

(详情请加一下 文凭顾问+微信:95270640)欢迎咨询!的鬼地方父亲的家在高楼最底屋最下面很矮很黑是很不显眼的地下室父亲的家安在别人脚底下须绕过高楼旁边的垃圾堆下八个台阶才到父亲的家很狭小除了一张单人床和一张小方桌几乎没有多余的空间山娃一下子就联想起学校的男小便处山娃很想笑却怎么也笑不出来山娃很迷惑父亲的家除了一扇小铁门连窗户也没有墓穴一般阴森森有些骇人父亲的城也便成了山娃的城父亲的家也便成了山娃的家父亲让山娃呆在屋里做作业看电视最多只能在门口透透气间

一比一原版(Dalhousie毕业证书)达尔豪斯大学毕业证如何办理

原版定制【微信:41543339】【(Dalhousie毕业证书)达尔豪斯大学毕业证】【微信:41543339】成绩单、外壳、offer、留信学历认证(永久存档真实可查)采用学校原版纸张、特殊工艺完全按照原版一比一制作(包括:隐形水印,阴影底纹,钢印LOGO烫金烫银,LOGO烫金烫银复合重叠,文字图案浮雕,激光镭射,紫外荧光,温感,复印防伪)行业标杆!精益求精,诚心合作,真诚制作!多年品质 ,按需精细制作,24小时接单,全套进口原装设备,十五年致力于帮助留学生解决难题,业务范围有加拿大、英国、澳洲、韩国、美国、新加坡,新西兰等学历材料,包您满意。

【我们承诺采用的是学校原版纸张(纸质、底色、纹路),我们拥有全套进口原装设备,特殊工艺都是采用不同机器制作,仿真度基本可以达到100%,所有工艺效果都可提前给客户展示,不满意可以根据客户要求进行调整,直到满意为止!】

【业务选择办理准则】

一、工作未确定,回国需先给父母、亲戚朋友看下文凭的情况,办理一份就读学校的毕业证【微信41543339】文凭即可

二、回国进私企、外企、自己做生意的情况,这些单位是不查询毕业证真伪的,而且国内没有渠道去查询国外文凭的真假,也不需要提供真实教育部认证。鉴于此,办理一份毕业证【微信41543339】即可

三、进国企,银行,事业单位,考公务员等等,这些单位是必需要提供真实教育部认证的,办理教育部认证所需资料众多且烦琐,所有材料您都必须提供原件,我们凭借丰富的经验,快捷的绿色通道帮您快速整合材料,让您少走弯路。

留信网认证的作用:

1:该专业认证可证明留学生真实身份

2:同时对留学生所学专业登记给予评定

3:国家专业人才认证中心颁发入库证书

4:这个认证书并且可以归档倒地方

5:凡事获得留信网入网的信息将会逐步更新到个人身份内,将在公安局网内查询个人身份证信息后,同步读取人才网入库信息

6:个人职称评审加20分

7:个人信誉贷款加10分

8:在国家人才网主办的国家网络招聘大会中纳入资料,供国家高端企业选择人才

留信网服务项目:

1、留学生专业人才库服务(留信分析)

2、国(境)学习人员提供就业推荐信服务

3、留学人员区块链存储服务

→ 【关于价格问题(保证一手价格)】

我们所定的价格是非常合理的,而且我们现在做得单子大多数都是代理和回头客户介绍的所以一般现在有新的单子 我给客户的都是第一手的代理价格,因为我想坦诚对待大家 不想跟大家在价格方面浪费时间

对于老客户或者被老客户介绍过来的朋友,我们都会适当给一些优惠。

选择实体注册公司办理,更放心,更安全!我们的承诺:客户在留信官方认证查询网站查询到认证通过结果后付款,不成功不收费!

Predictably Improve Your B2B Tech Company's Performance by Leveraging Data

Harness the power of AI-backed reports, benchmarking and data analysis to predict trends and detect anomalies in your marketing efforts.

Peter Caputa, CEO at Databox, reveals how you can discover the strategies and tools to increase your growth rate (and margins!).

From metrics to track to data habits to pick up, enhance your reporting for powerful insights to improve your B2B tech company's marketing.

- - -

This is the webinar recording from the June 2024 HubSpot User Group (HUG) for B2B Technology USA.

Watch the video recording at https://youtu.be/5vjwGfPN9lw

Sign up for future HUG events at https://events.hubspot.com/b2b-technology-usa/

Beyond the Basics of A/B Tests: Highly Innovative Experimentation Tactics You...

This webinar will explore cutting-edge, less familiar but powerful experimentation methodologies which address well-known limitations of standard A/B Testing. Designed for data and product leaders, this session aims to inspire the embrace of innovative approaches and provide insights into the frontiers of experimentation!

办(uts毕业证书)悉尼科技大学毕业证学历证书原版一模一样

原版一模一样【微信:741003700 】【(uts毕业证书)悉尼科技大学毕业证学历证书】【微信:741003700 】学位证,留信认证(真实可查,永久存档)offer、雅思、外壳等材料/诚信可靠,可直接看成品样本,帮您解决无法毕业带来的各种难题!外壳,原版制作,诚信可靠,可直接看成品样本。行业标杆!精益求精,诚心合作,真诚制作!多年品质 ,按需精细制作,24小时接单,全套进口原装设备。十五年致力于帮助留学生解决难题,包您满意。

本公司拥有海外各大学样板无数,能完美还原海外各大学 Bachelor Diploma degree, Master Degree Diploma

1:1完美还原海外各大学毕业材料上的工艺:水印,阴影底纹,钢印LOGO烫金烫银,LOGO烫金烫银复合重叠。文字图案浮雕、激光镭射、紫外荧光、温感、复印防伪等防伪工艺。材料咨询办理、认证咨询办理请加学历顾问Q/微741003700

留信网认证的作用:

1:该专业认证可证明留学生真实身份

2:同时对留学生所学专业登记给予评定

3:国家专业人才认证中心颁发入库证书

4:这个认证书并且可以归档倒地方

5:凡事获得留信网入网的信息将会逐步更新到个人身份内,将在公安局网内查询个人身份证信息后,同步读取人才网入库信息

6:个人职称评审加20分

7:个人信誉贷款加10分

8:在国家人才网主办的国家网络招聘大会中纳入资料,供国家高端企业选择人才

一比一原版(UMN文凭证书)明尼苏达大学毕业证如何办理

毕业原版【微信:176555708】【(UMN毕业证书)明尼苏达大学毕业证】【微信:176555708】成绩单、外壳、offer、留信学历认证(永久存档真实可查)采用学校原版纸张、特殊工艺完全按照原版一比一制作(包括:隐形水印,阴影底纹,钢印LOGO烫金烫银,LOGO烫金烫银复合重叠,文字图案浮雕,激光镭射,紫外荧光,温感,复印防伪)行业标杆!精益求精,诚心合作,真诚制作!多年品质 ,按需精细制作,24小时接单,全套进口原装设备,十五年致力于帮助留学生解决难题,业务范围有加拿大、英国、澳洲、韩国、美国、新加坡,新西兰等学历材料,包您满意。

【我们承诺采用的是学校原版纸张(纸质、底色、纹路),我们拥有全套进口原装设备,特殊工艺都是采用不同机器制作,仿真度基本可以达到100%,所有工艺效果都可提前给客户展示,不满意可以根据客户要求进行调整,直到满意为止!】

【业务选择办理准则】

一、工作未确定,回国需先给父母、亲戚朋友看下文凭的情况,办理一份就读学校的毕业证【微信176555708】文凭即可

二、回国进私企、外企、自己做生意的情况,这些单位是不查询毕业证真伪的,而且国内没有渠道去查询国外文凭的真假,也不需要提供真实教育部认证。鉴于此,办理一份毕业证【微信176555708】即可

三、进国企,银行,事业单位,考公务员等等,这些单位是必需要提供真实教育部认证的,办理教育部认证所需资料众多且烦琐,所有材料您都必须提供原件,我们凭借丰富的经验,快捷的绿色通道帮您快速整合材料,让您少走弯路。

留信网认证的作用:

1:该专业认证可证明留学生真实身份

2:同时对留学生所学专业登记给予评定

3:国家专业人才认证中心颁发入库证书

4:这个认证书并且可以归档倒地方

5:凡事获得留信网入网的信息将会逐步更新到个人身份内,将在公安局网内查询个人身份证信息后,同步读取人才网入库信息

6:个人职称评审加20分

7:个人信誉贷款加10分

8:在国家人才网主办的国家网络招聘大会中纳入资料,供国家高端企业选择人才

留信网服务项目:

1、留学生专业人才库服务(留信分析)

2、国(境)学习人员提供就业推荐信服务

3、留学人员区块链存储服务

→ 【关于价格问题(保证一手价格)】

我们所定的价格是非常合理的,而且我们现在做得单子大多数都是代理和回头客户介绍的所以一般现在有新的单子 我给客户的都是第一手的代理价格,因为我想坦诚对待大家 不想跟大家在价格方面浪费时间

对于老客户或者被老客户介绍过来的朋友,我们都会适当给一些优惠。

选择实体注册公司办理,更放心,更安全!我们的承诺:客户在留信官方认证查询网站查询到认证通过结果后付款,不成功不收费!

Intelligence supported media monitoring in veterinary medicine

Media monitoring in veterinary medicien

Recently uploaded (20)

University of New South Wales degree offer diploma Transcript

University of New South Wales degree offer diploma Transcript

The Building Blocks of QuestDB, a Time Series Database

The Building Blocks of QuestDB, a Time Series Database

STATATHON: Unleashing the Power of Statistics in a 48-Hour Knowledge Extravag...

STATATHON: Unleashing the Power of Statistics in a 48-Hour Knowledge Extravag...

Population Growth in Bataan: The effects of population growth around rural pl...

Population Growth in Bataan: The effects of population growth around rural pl...

4th Modern Marketing Reckoner by MMA Global India & Group M: 60+ experts on W...

4th Modern Marketing Reckoner by MMA Global India & Group M: 60+ experts on W...

Predictably Improve Your B2B Tech Company's Performance by Leveraging Data

Predictably Improve Your B2B Tech Company's Performance by Leveraging Data

Beyond the Basics of A/B Tests: Highly Innovative Experimentation Tactics You...

Beyond the Basics of A/B Tests: Highly Innovative Experimentation Tactics You...

Influence of Marketing Strategy and Market Competition on Business Plan

Influence of Marketing Strategy and Market Competition on Business Plan

Intelligence supported media monitoring in veterinary medicine

Intelligence supported media monitoring in veterinary medicine

Ran zhou poster 2018

- 1. Application of Manual Document Classification Algorithm Ran Zhou with Mentor: Peter Merrill Data The data we are using for training the document classification algorithm have the following features: • Five types of document instances • Binary labels – Yes/No • Two data formats • PDF files o Get the text part by optical character recognition (OCR) o Get the labels from the curated database through a link variable • Curated Database • Relatively small data size 2018 DUKE RESEARCH COMPUTING SYMPOSIUM POSTER COMPETITIONIntroduction Manual document classification is a tedious process of manually classifying documents instances, which includes two steps: • Some instances are identified in need of being labeled/classified • The document instances are labeled/classified according to rigorous, predefined criteria Acknowledgements Leadership Team: Renato Lopes, MD,MHS,PhD Schuyler Jones, MD Michael Pencina, PhD Larry Carin, PhD Lisa Wruck, PhD Shelley Rusincovitch, MM References Dinghan Shen et al, On the use of word embeddings alone to represent natural language sequences, 2017(submitted to ICLR) Contact Information Ran Zhou Master student in statistical science department at Duke E-mail: rz69@duke.edu Goal The goal is to find an algorithm to simulate the manual classification process. The algorithm will be able to: • Take text data as the input and automatically give a classification label as the output. • Reduce the mistakes created by manual classification and improve the accuracy. • Improve the efficiency of the classification process by replacing manual classification process by machine. Figure 4. Data Components Figure 3. Curated Database Summary • Total instance count: 11000 • Average text length: 44 (min:1, max:1117) Figure 2. PDF File Summary • Total instance count: 11033 • Average text length: 1274 (min: 3, max: 24984) Model “At the DCRI, our values honor our history and guide our actions and daily decisions.… “ (An example text input of the algorithm) Yes / NoNeural Network Figure 1. Classification Algorithm Figure 5. Simple Word Embedding Based Model (SWEM) e_1 e_2 E = e_84 w_1 w_2 w_84 E = Yes Word Embedding Simple Neural Network Classification Results Figure 7. Individual Instance TypeFigure 6. All Instance Type Conclusion The model highly improves the classification accuracy and the computational efficiency comparing to the traditional neural network model, recurrent neural network(RNN) and convolutional neural network(CNN). The classification results for all instance types and individual instance type are much better than random guess and existing algorithms for similar problems. Next, we will test the model on the data from the PDF files to further improve the accuracy. Type 1 Type 2 Type 3 Type 4 Type 5 2082 3950 3013 689 1299 Type 1 Type 2 Type 3 Type 4 Type 5 2045 3951 3003 689 1312 Yes No Yes No Yes No Sub 1 Yes Sub 2 Yes No / 833 1212 2993 958 291 2712 369 107 213 / Matt Wilson, RN Team Members: Ricardo Henao, PhD Peter Merrill, PhD Ran Zhou, BS Lynn Perkins, MBA SWEM is a neural network algorithm good at capturing semantic meaning of both individual words and their compositions simply by a special embedding method. Different from traditional neural network models for capturing semantic meaning of sentences, SWEM does not require large memory to train a large amount of extra layers. SWEM is also shown to be able to give more robust results for small data.

Editor's Notes

- Note: This slide master is 18 x 21, which will scale to 36 x 42 for printing Be sure to use the option to save as PDF, optimize for: standard (publishing online and printing)