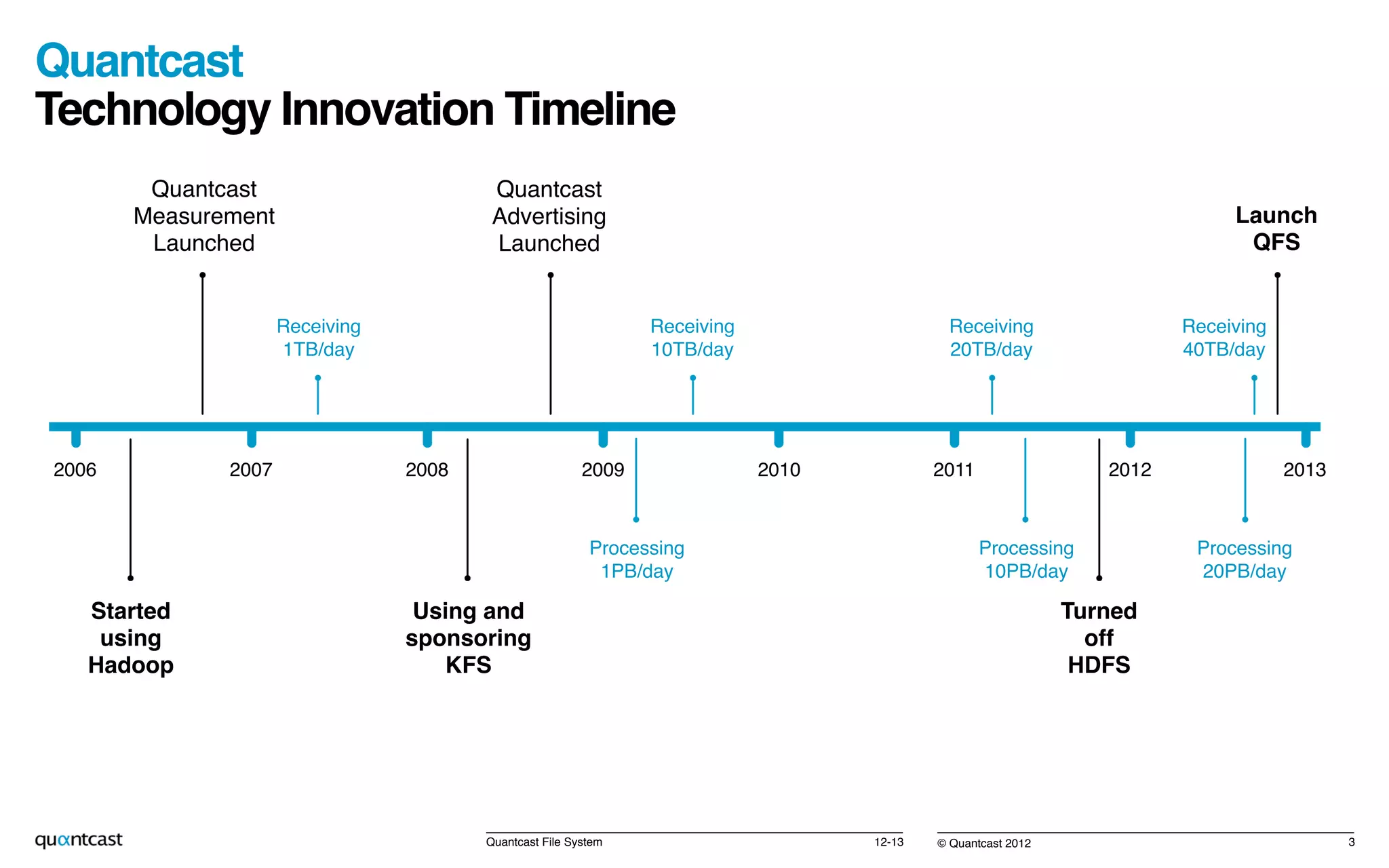

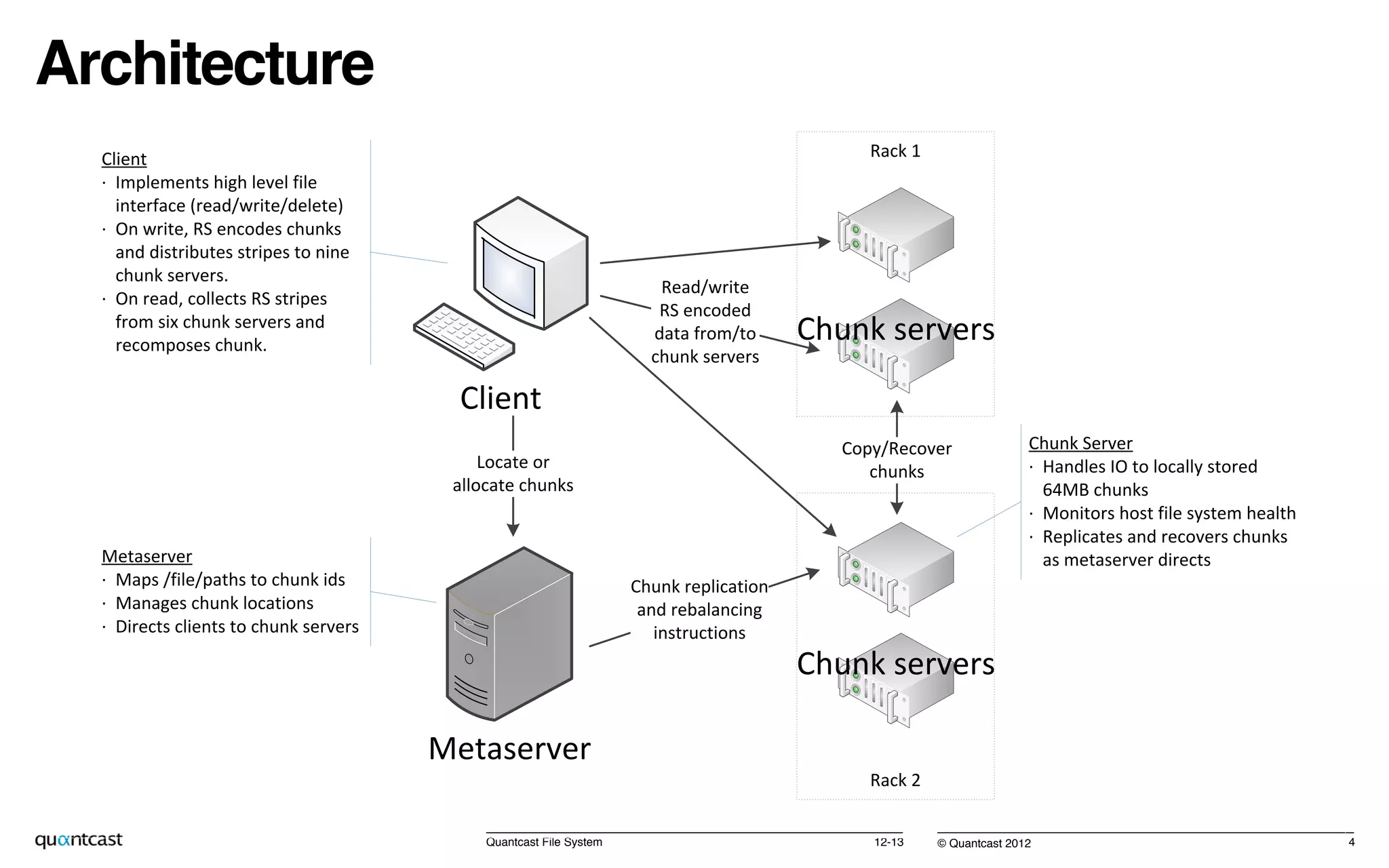

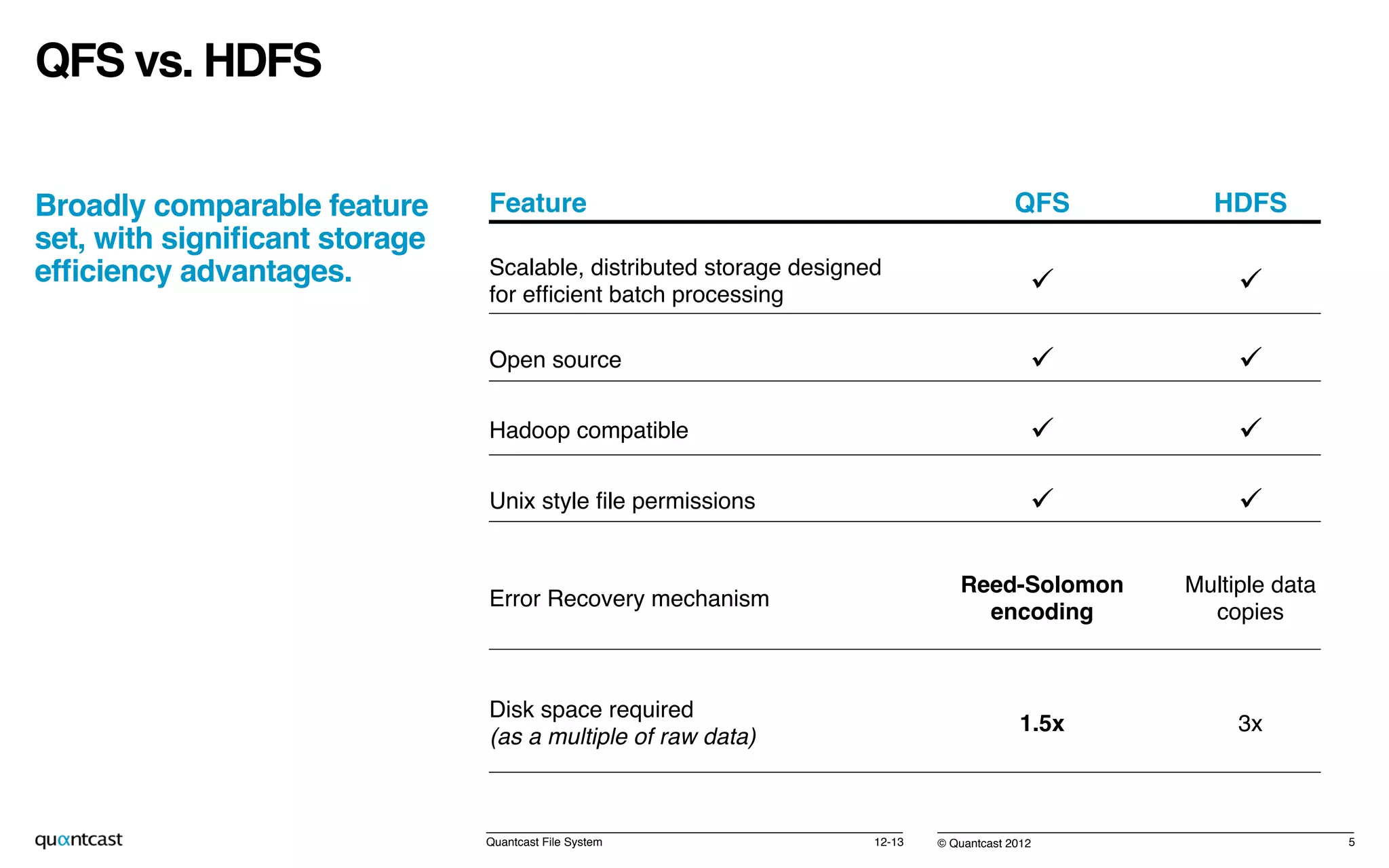

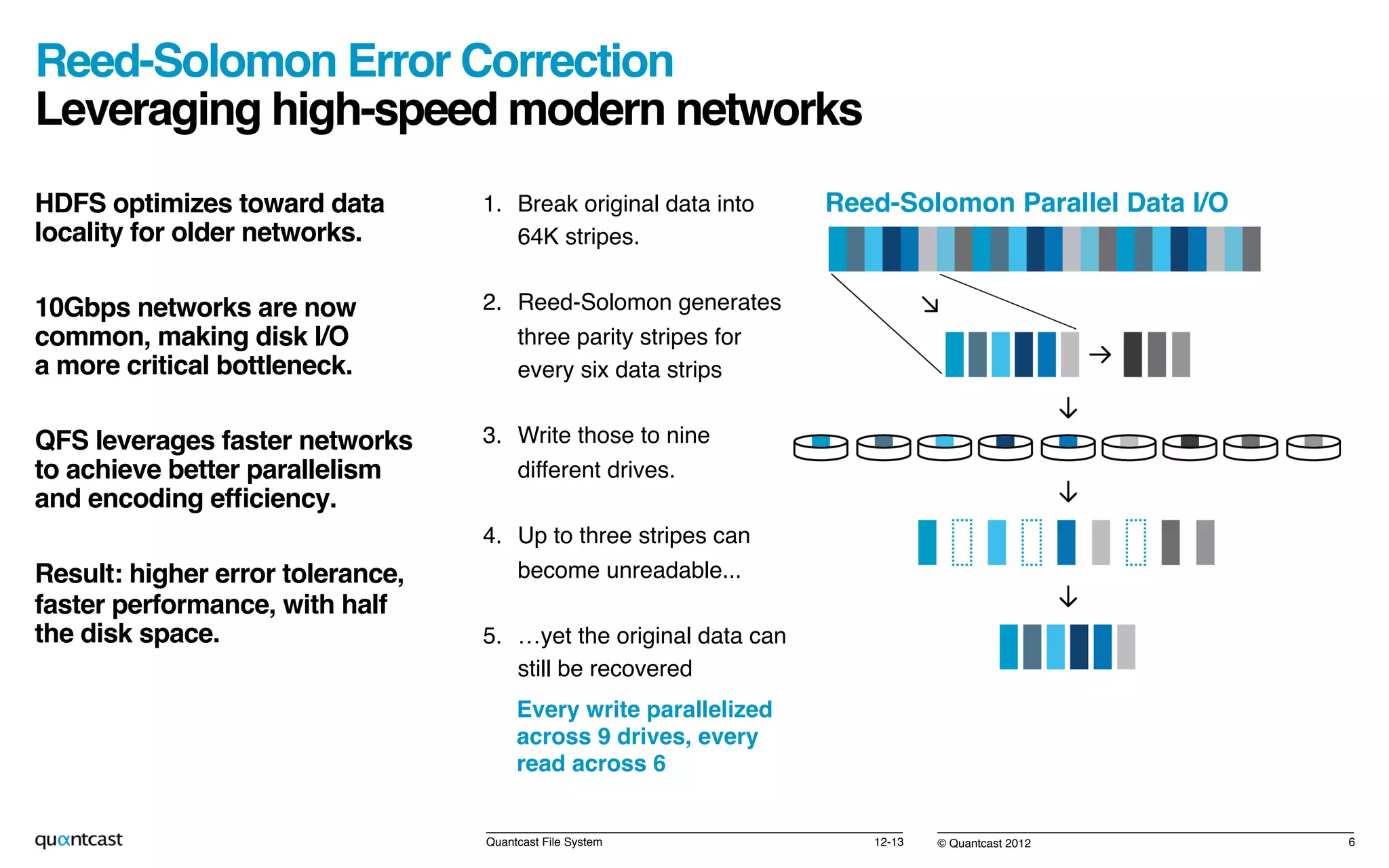

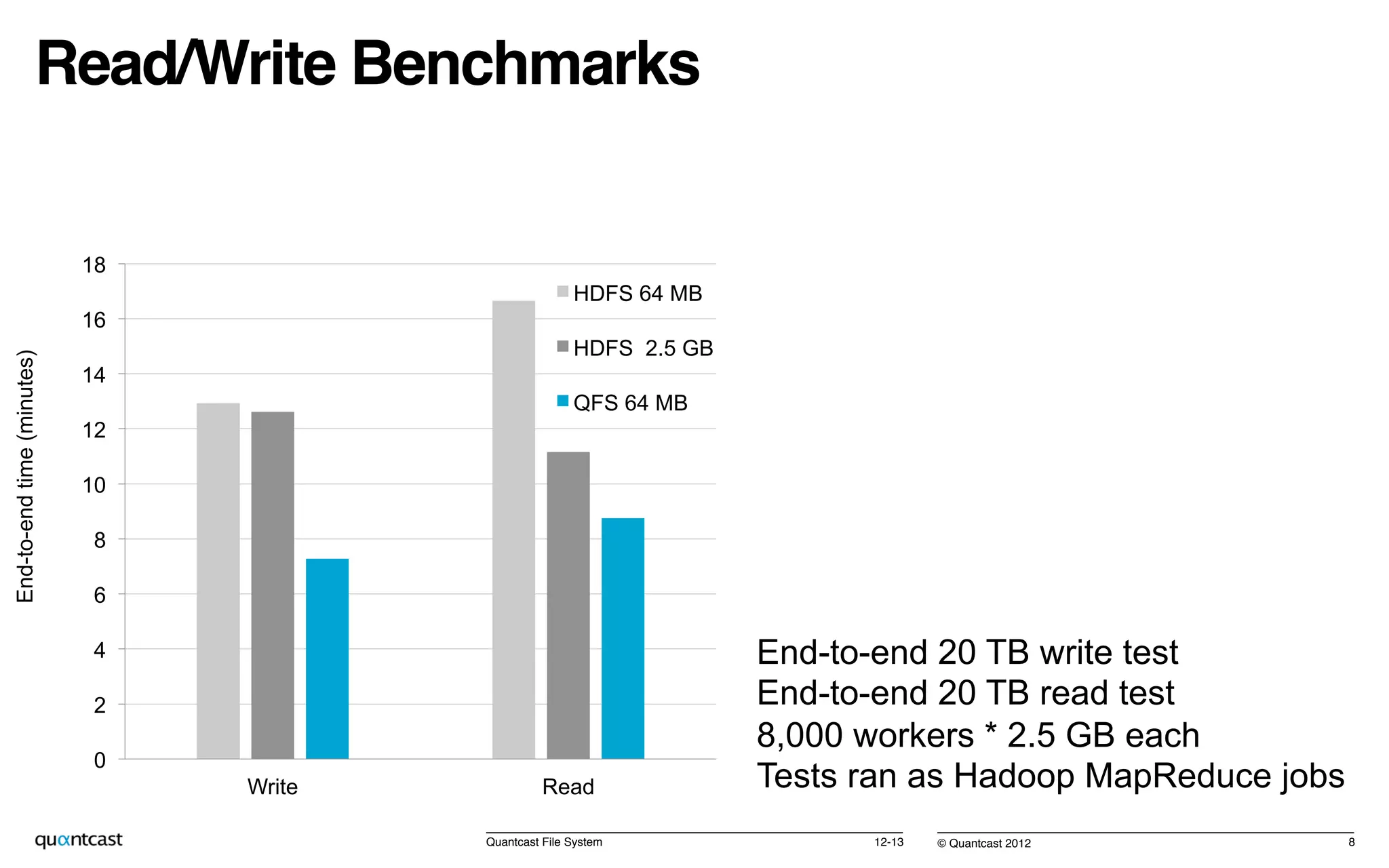

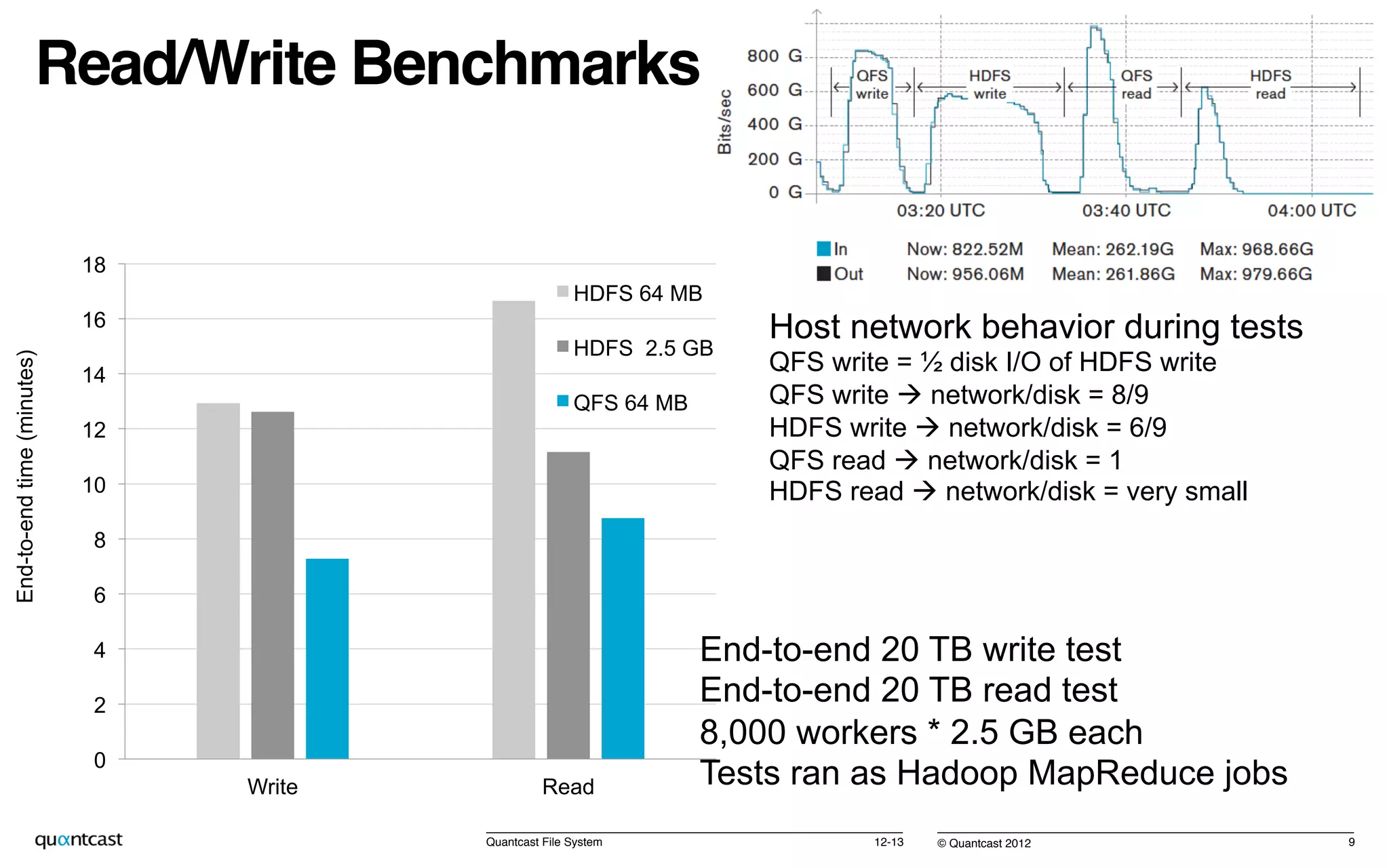

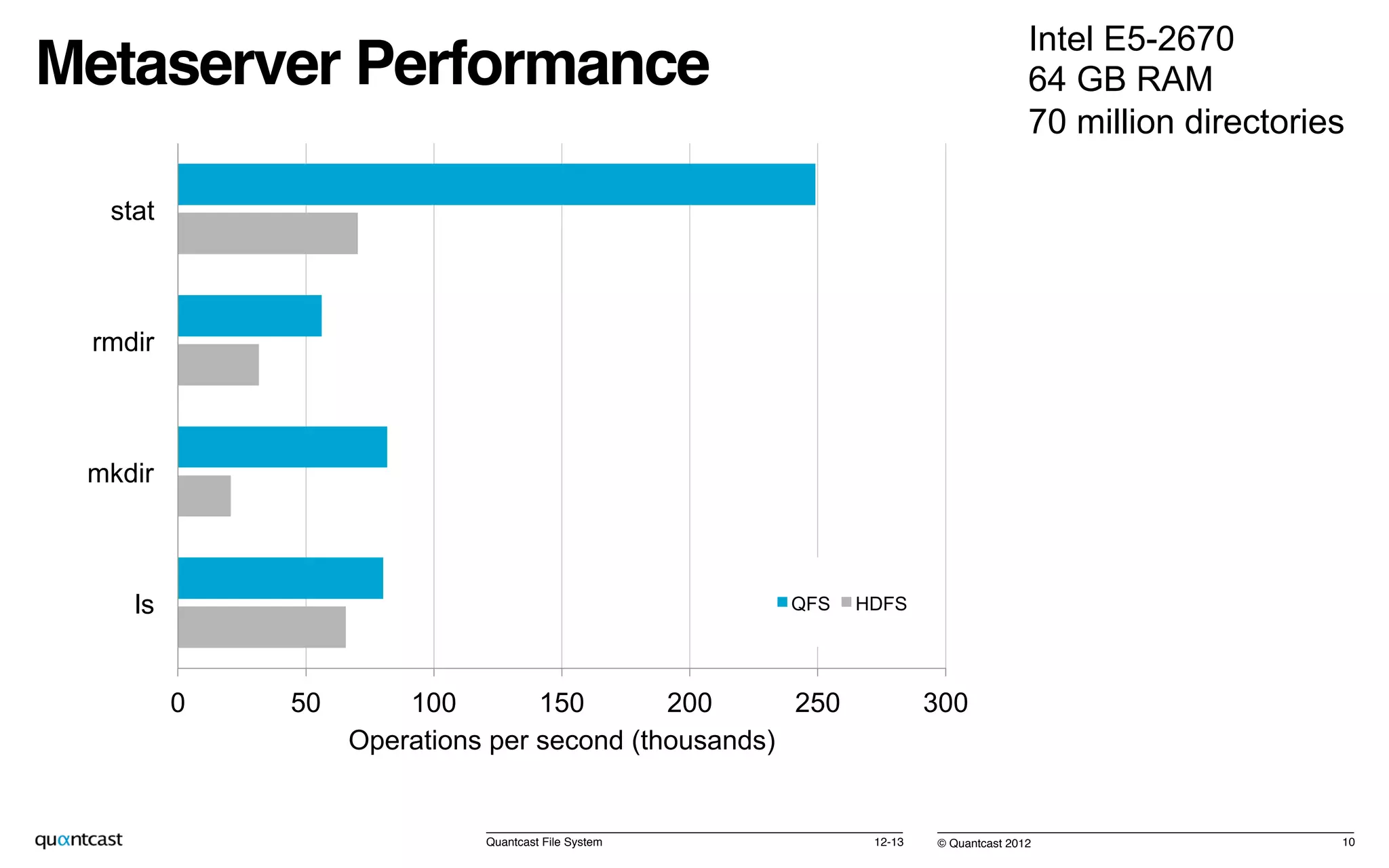

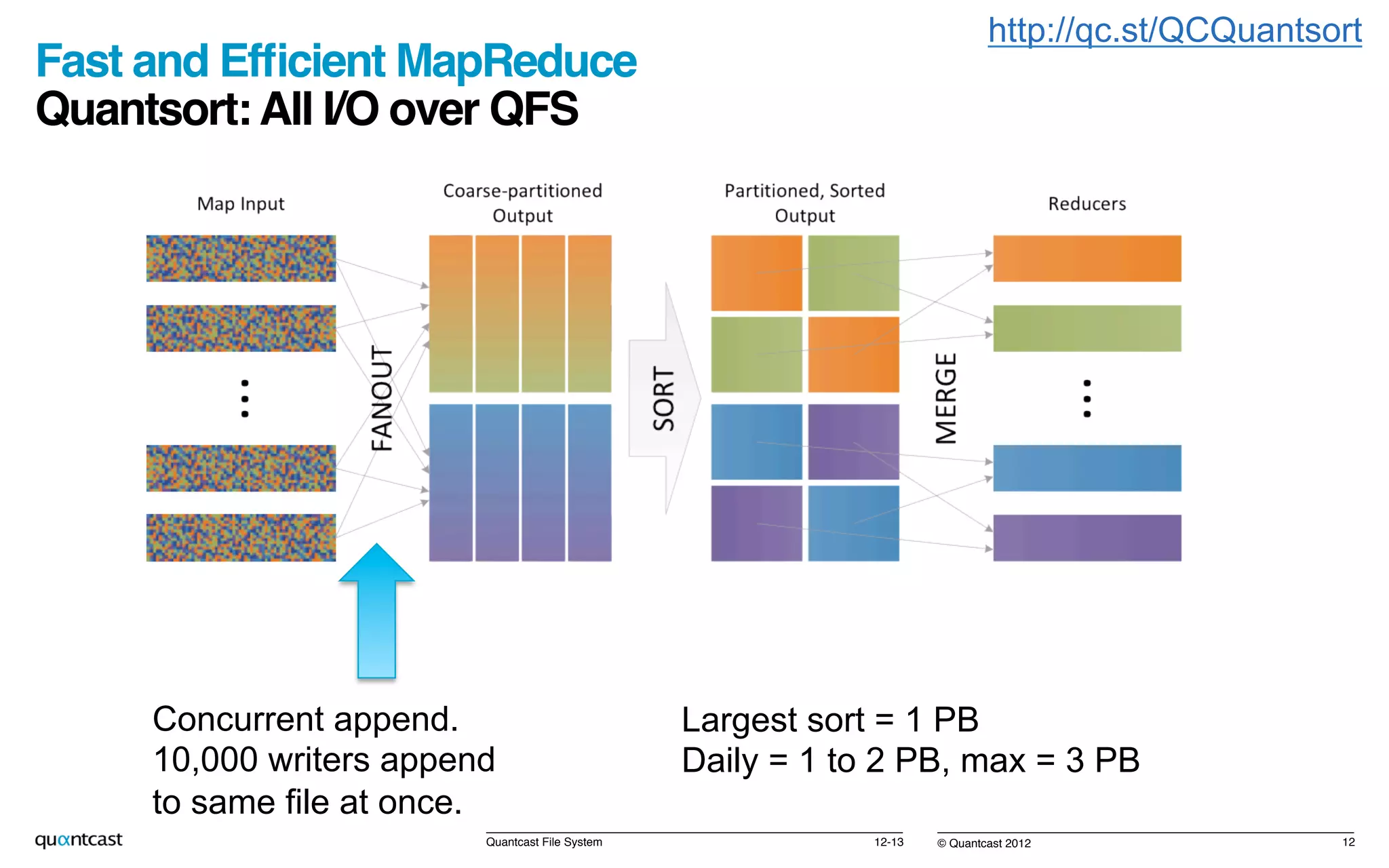

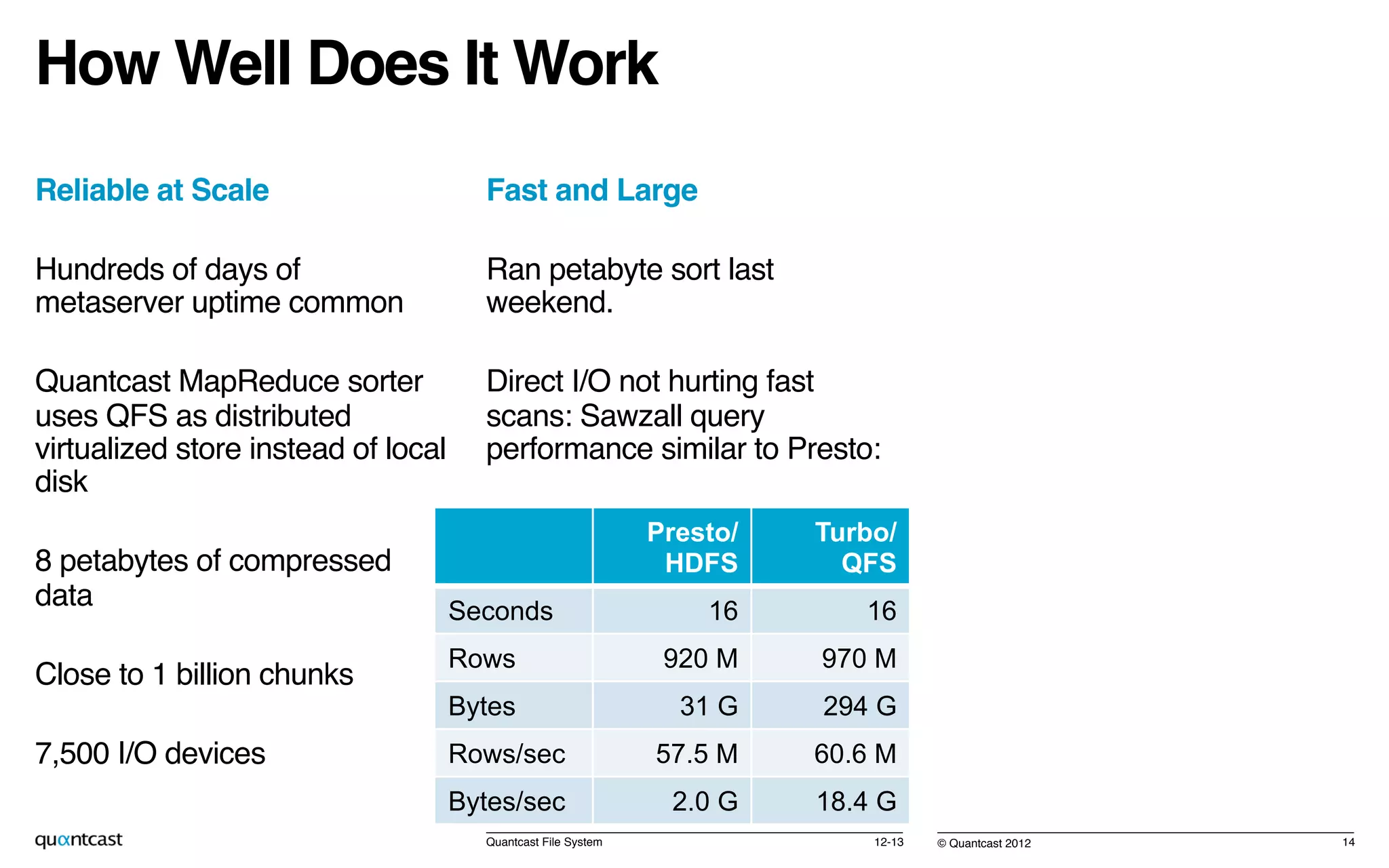

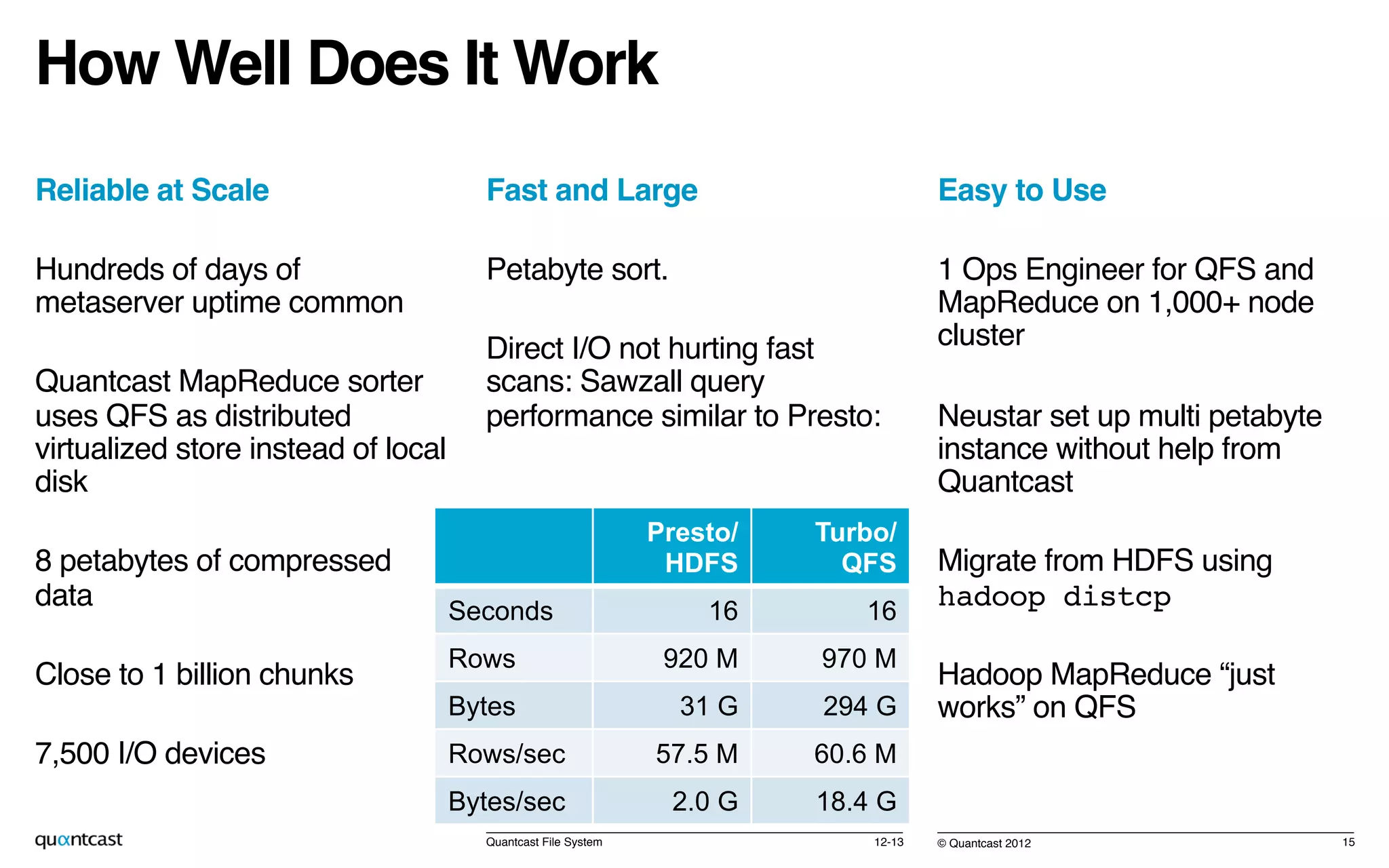

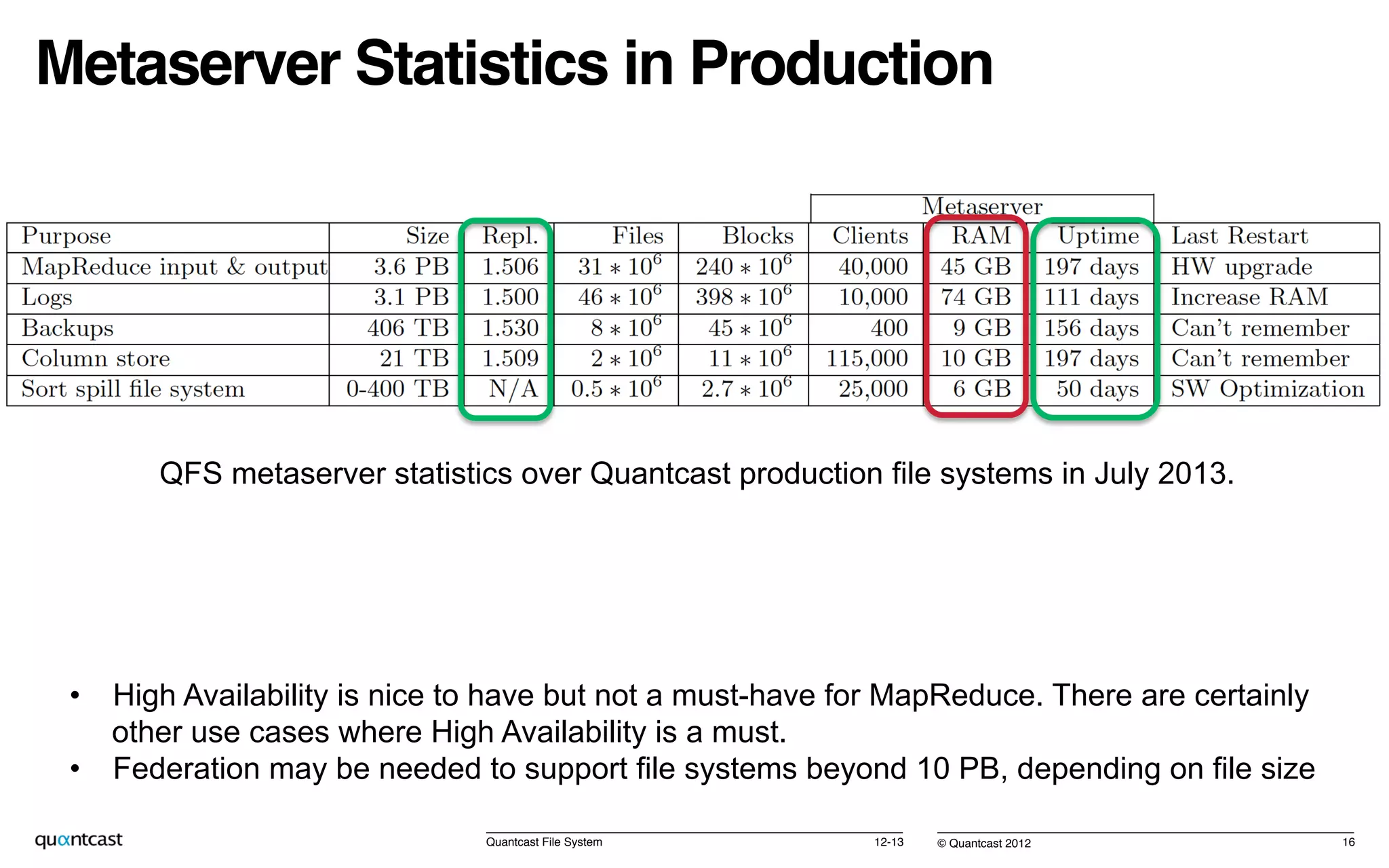

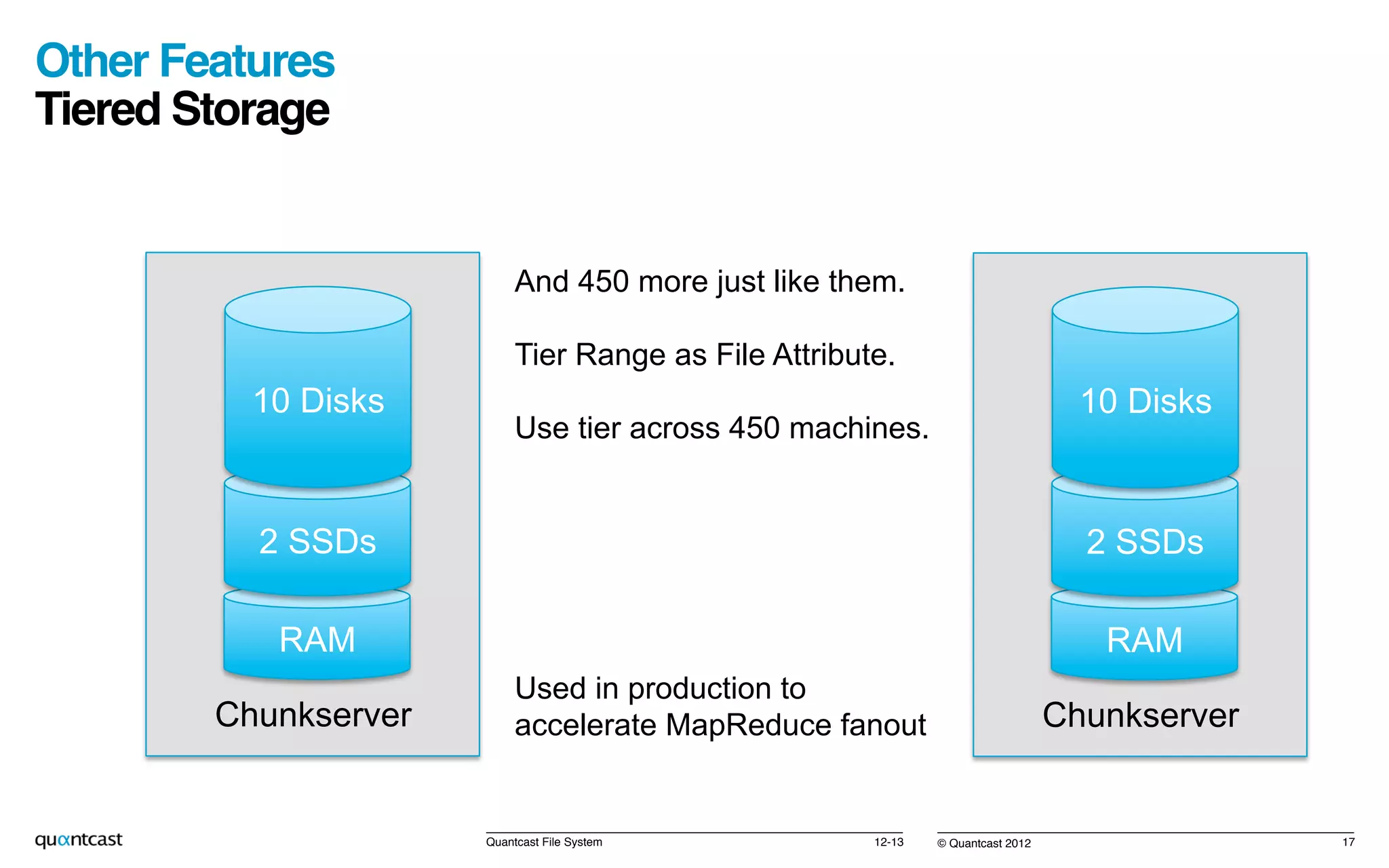

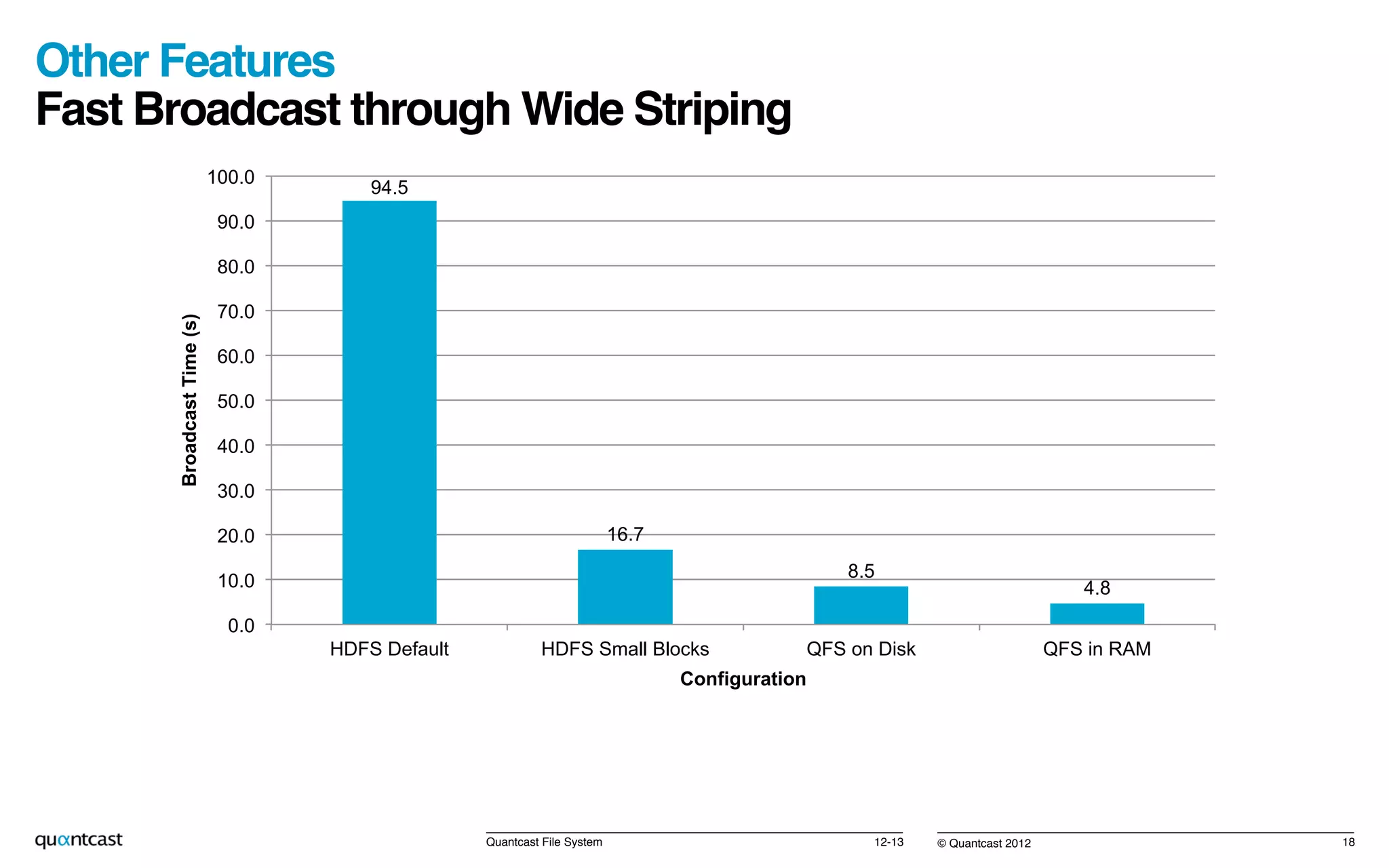

The document summarizes Quantcast File System (QFS), an alternative to HDFS that provides petabyte-scale storage using half the disk space HDFS needs. By using Reed-Solomon encoding, QFS offers significantly faster I/O than HDFS and requires only 1.5x the raw disk space of the stored data, compared to 3x for HDFS. It has been production hardened at Quantcast under massive processing loads and is fully compatible with Apache Hadoop. Benchmark results show that QFS writes incur half the disk I/O of HDFS writes, and that QFS reads go over the network, whereas HDFS focuses on data locality.
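The 1.5x and 3x figures can be checked with a little arithmetic. The sketch below is illustrative only: it assumes the 3x figure comes from HDFS keeping three full copies of each block, and that the 1.5x figure comes from a Reed-Solomon layout with 6 data stripes and 3 parity stripes. Neither parameter is stated in the summary above, and the function names are hypothetical, not part of any QFS or HDFS API.

```python
from fractions import Fraction


def replication_overhead(copies: int) -> Fraction:
    """Raw-to-logical storage ratio when every block is stored `copies` times."""
    return Fraction(copies, 1)


def erasure_overhead(data_stripes: int, parity_stripes: int) -> Fraction:
    """Raw-to-logical storage ratio for an erasure code with the given stripe counts."""
    return Fraction(data_stripes + parity_stripes, data_stripes)


def raw_bytes(logical_bytes: int, overhead: Fraction) -> int:
    """Raw bytes written to disk for `logical_bytes` of user data, rounded up."""
    return -(-logical_bytes * overhead.numerator // overhead.denominator)


# Assumed parameters (not given in the summary): 3 copies, and a 6+3 stripe layout.
hdfs = replication_overhead(3)
qfs = erasure_overhead(6, 3)

PETABYTE = 10**15
hdfs_raw = raw_bytes(PETABYTE, hdfs)
qfs_raw = raw_bytes(PETABYTE, qfs)

print(f"HDFS overhead: {float(hdfs)}x, raw bytes for 1 PB: {hdfs_raw}")
print(f"QFS overhead:  {float(qfs)}x, raw bytes for 1 PB: {qfs_raw}")
print(f"HDFS/QFS raw bytes written: {Fraction(hdfs_raw, qfs_raw)}")
```

Under these assumptions every logical byte costs half as many raw bytes on QFS as on HDFS, which is consistent with both the "half the disk space" claim and the "half the disk I/O" result for writes.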