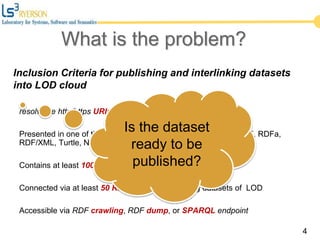

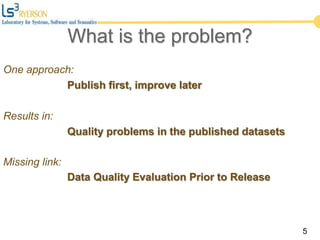

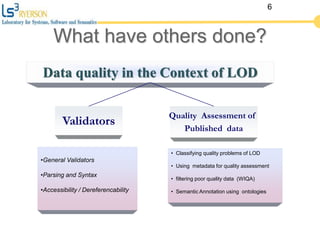

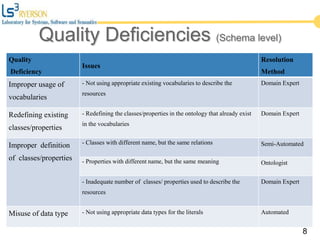

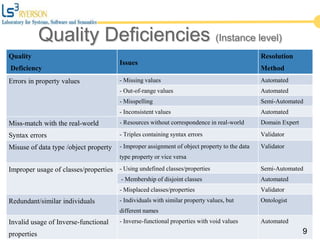

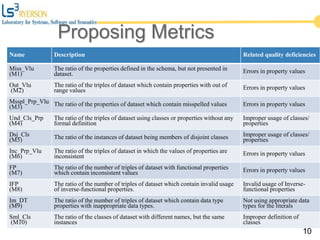

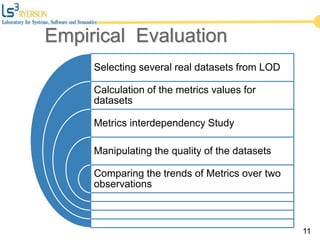

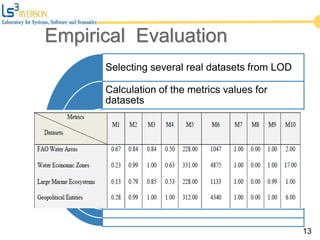

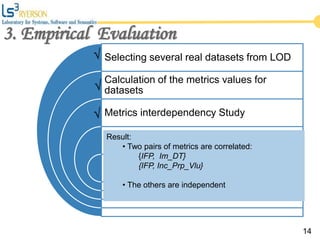

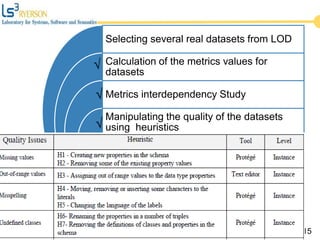

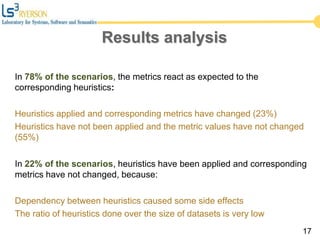

The document discusses quality issues in Linked Open Data (LOD) and proposes a set of metrics for evaluating data quality before publication. It highlights the need for systematic quality assessment to prevent common deficiencies, such as improper use of vocabularies and data types. An empirical evaluation shows that these metrics identify quality problems across various datasets.