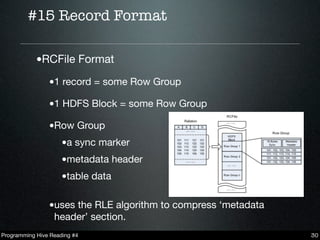

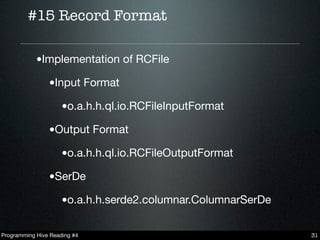

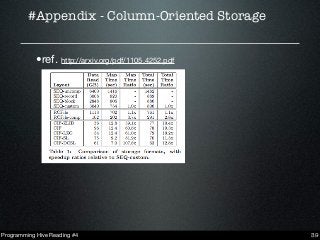

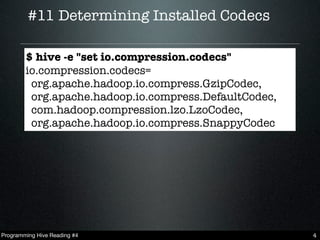

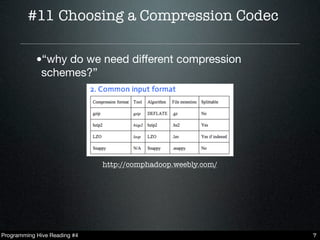

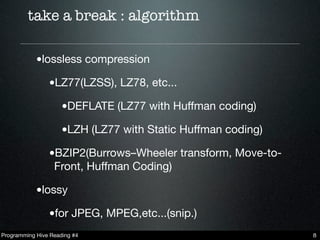

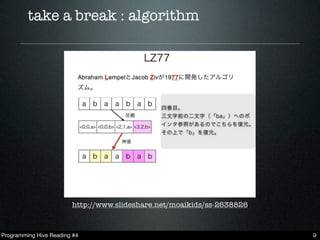

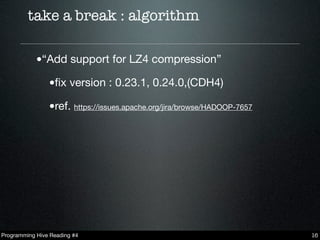

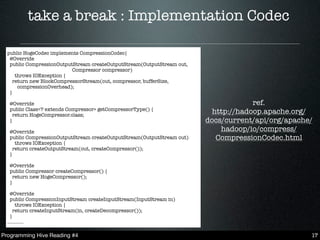

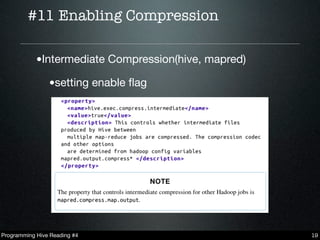

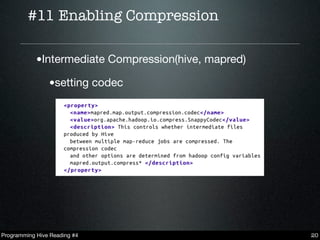

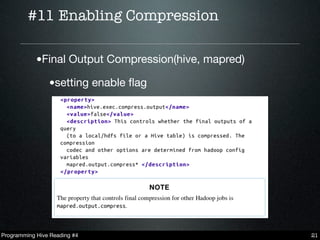

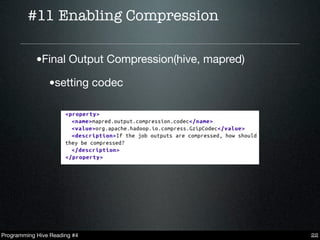

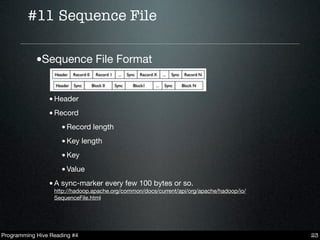

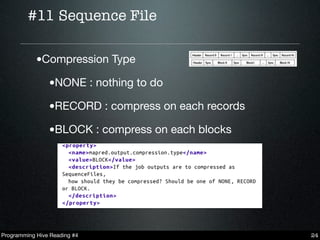

This document summarizes key points from chapters 11 and 15 of Programming Hive. It discusses choosing compression codecs for intermediate and final outputs in Hive, how different compression schemes like LZO, Snappy, and BWT work, and enabling compression in Hive. It also covers Hive file formats like SequenceFiles, RCFiles, and ORCFiles. RCFiles store data columnarly and use RLE compression. ORCFiles provide faster reads than RCFiles. The document recommends LZO and Snappy as fast compression codecs that still achieve good compression rates.

![#15 Record Format

•TEXTFILE

•SEQUENCEFILE

•RCFILE

CREATE TABLE hoge (.

........

)

STORED AS [TEXTFILE|SEQUENCEFILE|RCFILE]

Programming Hive Reading #4 28](https://image.slidesharecdn.com/20130220programminghive-130225060435-phpapp02/85/Programming-Hive-Reading-4-28-320.jpg)