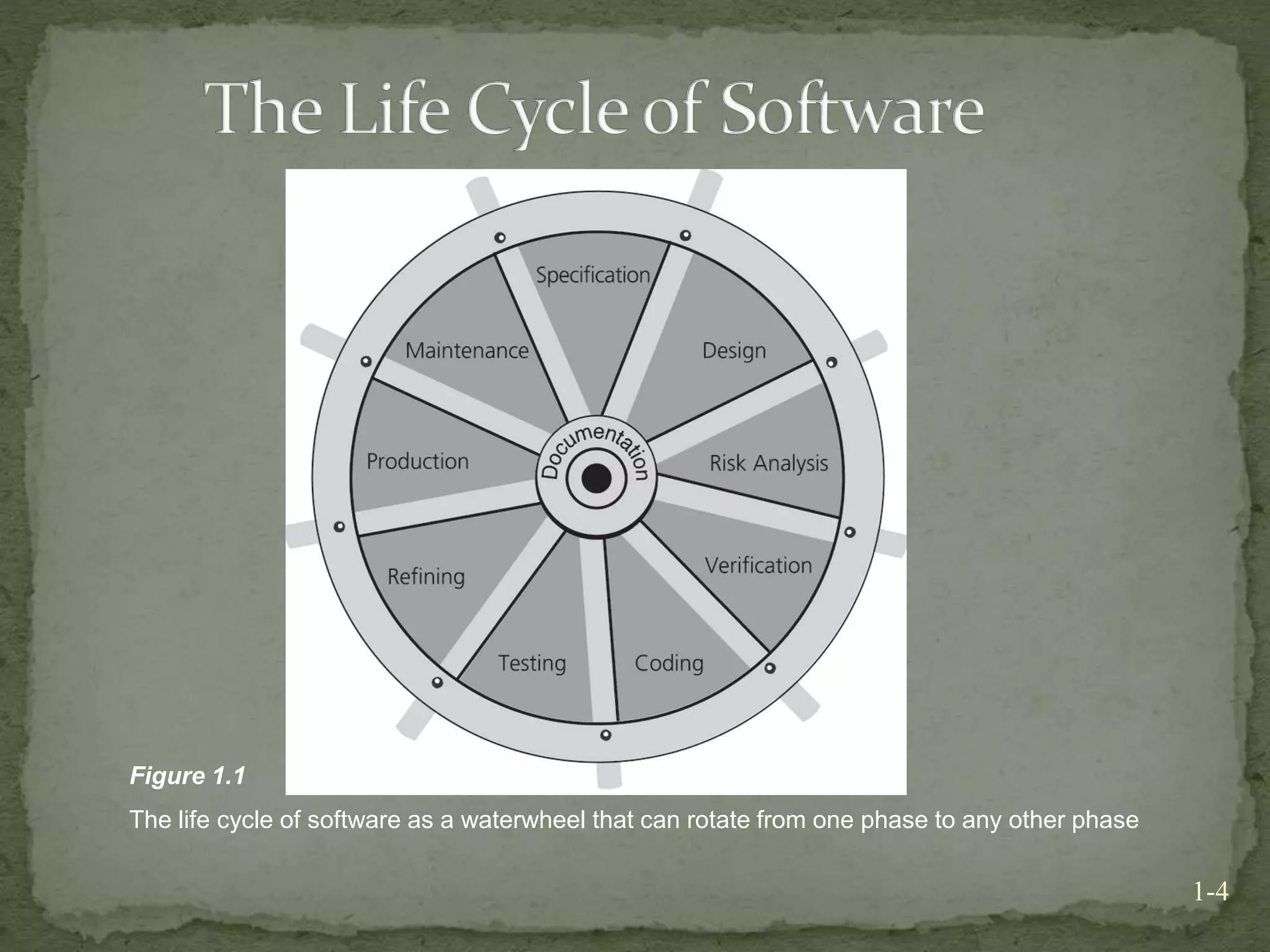

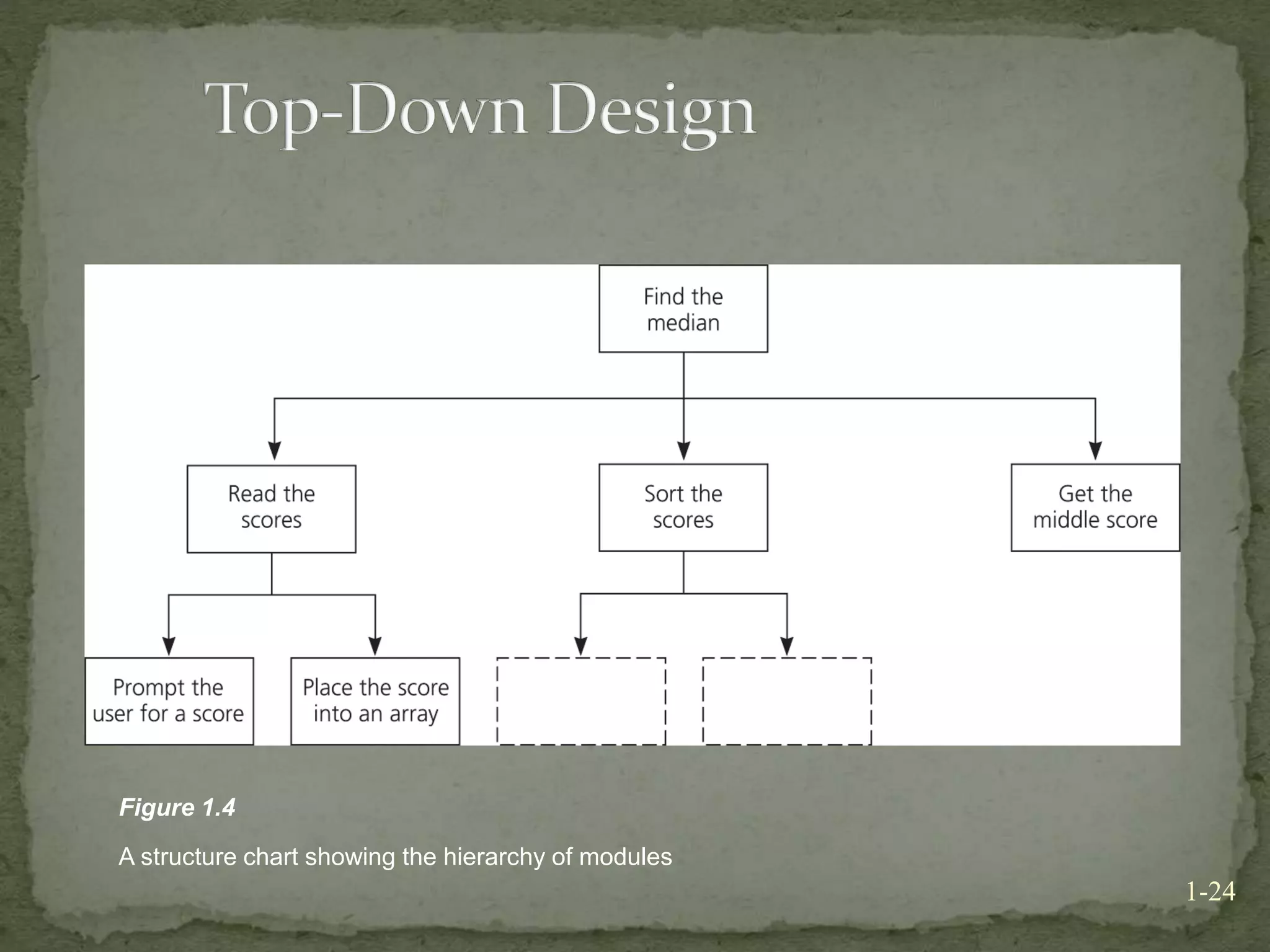

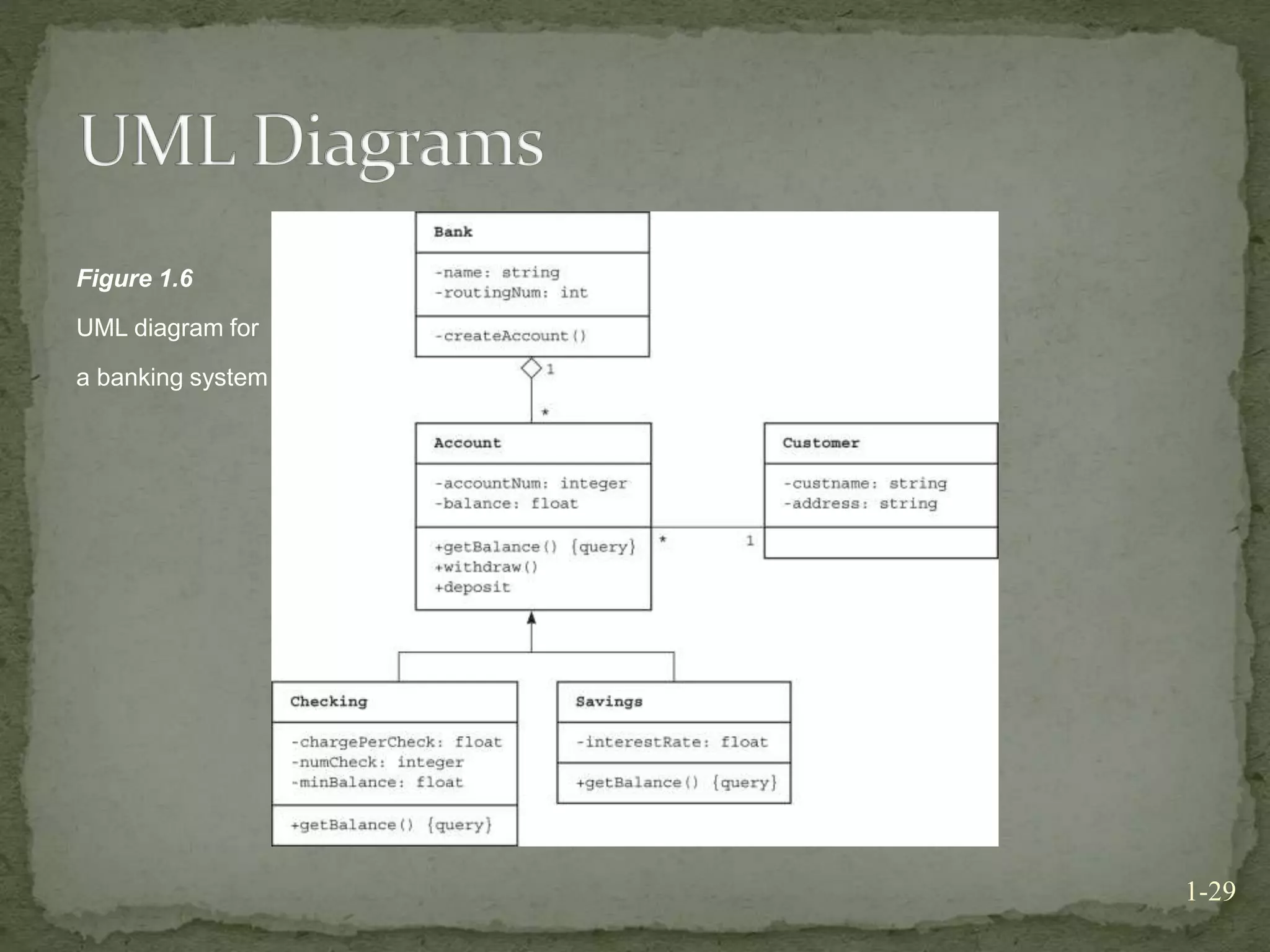

This document discusses principles of programming and software engineering. It describes the software development life cycle as nine phases: specification, design, risk analysis, verification, coding, testing, refining the solution, production, and maintenance. It also covers problem solving through algorithms and data storage, object-oriented programming concepts such as encapsulation and inheritance, and design techniques such as top-down design and object-oriented design. The document emphasizes that modularity, ease of use, and fail-safe programming are important for developing quality software solutions.
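To make three of the concepts named above concrete (encapsulation, inheritance, and fail-safe programming), here is a minimal Python sketch. The `Account` and `SavingsAccount` classes, their methods, and the interest rate are hypothetical names chosen for illustration; they are not taken from the document itself.

```python
class Account:
    """Encapsulation: the balance is changed only through methods."""

    def __init__(self, owner: str, balance: float = 0.0) -> None:
        # Fail-safe programming: reject invalid input at the boundary.
        if balance < 0:
            raise ValueError("opening balance must be non-negative")
        self.owner = owner
        self._balance = balance  # leading underscore marks it as internal

    @property
    def balance(self) -> float:
        """Read-only view of the internal state."""
        return self._balance

    def deposit(self, amount: float) -> None:
        if amount <= 0:
            raise ValueError("deposit must be positive")
        self._balance += amount

    def withdraw(self, amount: float) -> None:
        if amount <= 0:
            raise ValueError("withdrawal must be positive")
        if amount > self._balance:
            raise ValueError("insufficient funds")
        self._balance -= amount


class SavingsAccount(Account):
    """Inheritance: reuses Account and adds interest handling."""

    def __init__(self, owner: str, balance: float = 0.0, rate: float = 0.02) -> None:
        super().__init__(owner, balance)
        if rate < 0:
            raise ValueError("rate must be non-negative")
        self.rate = rate

    def add_interest(self) -> None:
        interest = self.balance * self.rate
        if interest > 0:  # nothing to deposit on an empty account
            self.deposit(interest)


if __name__ == "__main__":
    acct = SavingsAccount("Ada", 100.0, rate=0.5)
    acct.add_interest()
    print(acct.balance)
    try:
        acct.withdraw(1000.0)
    except ValueError as err:
        print("rejected:", err)
```

Each class is a small module with a narrow interface, which is one way to read the document's emphasis on modularity: callers depend on `deposit`, `withdraw`, and `balance`, not on how the balance is stored.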