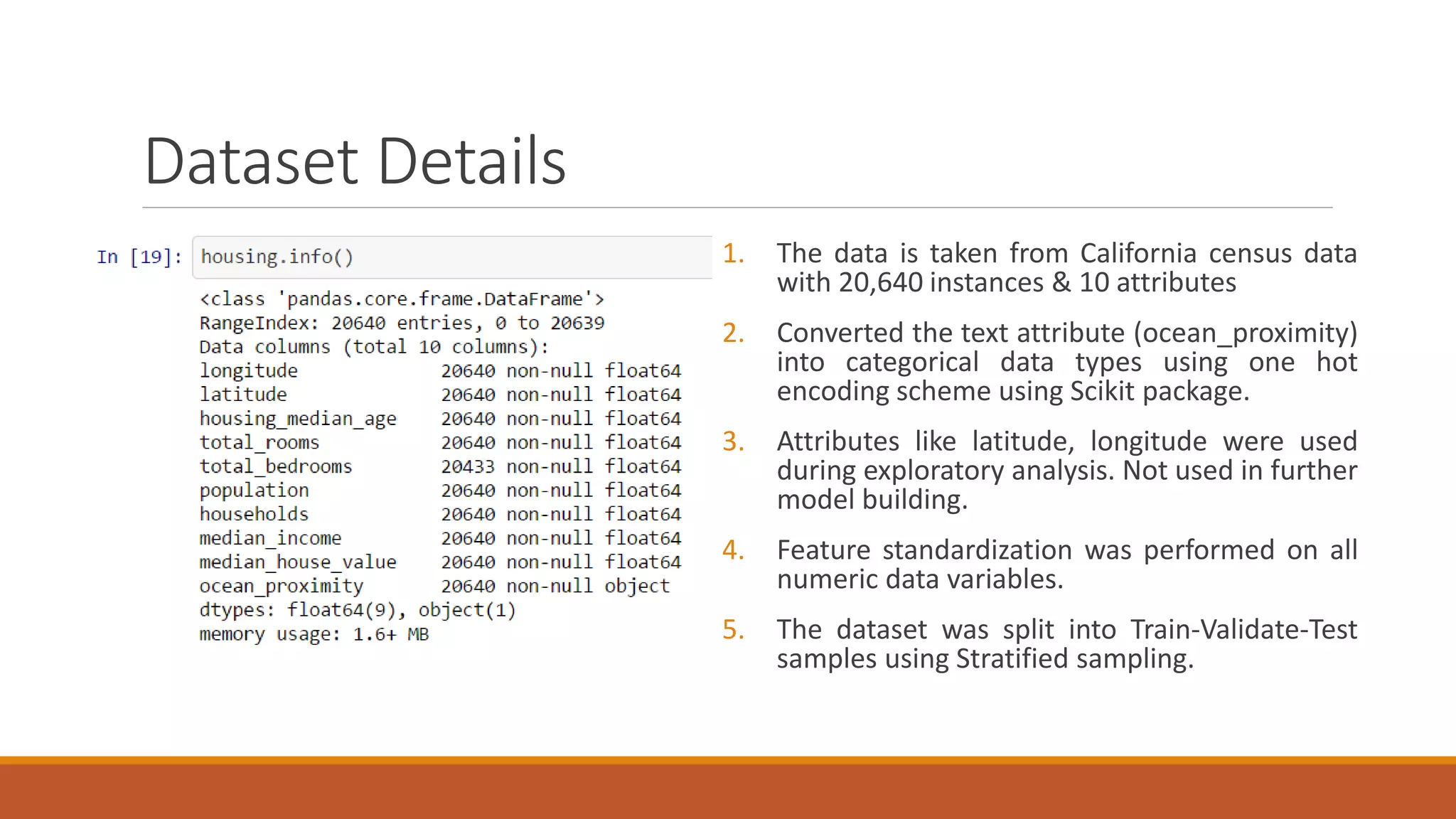

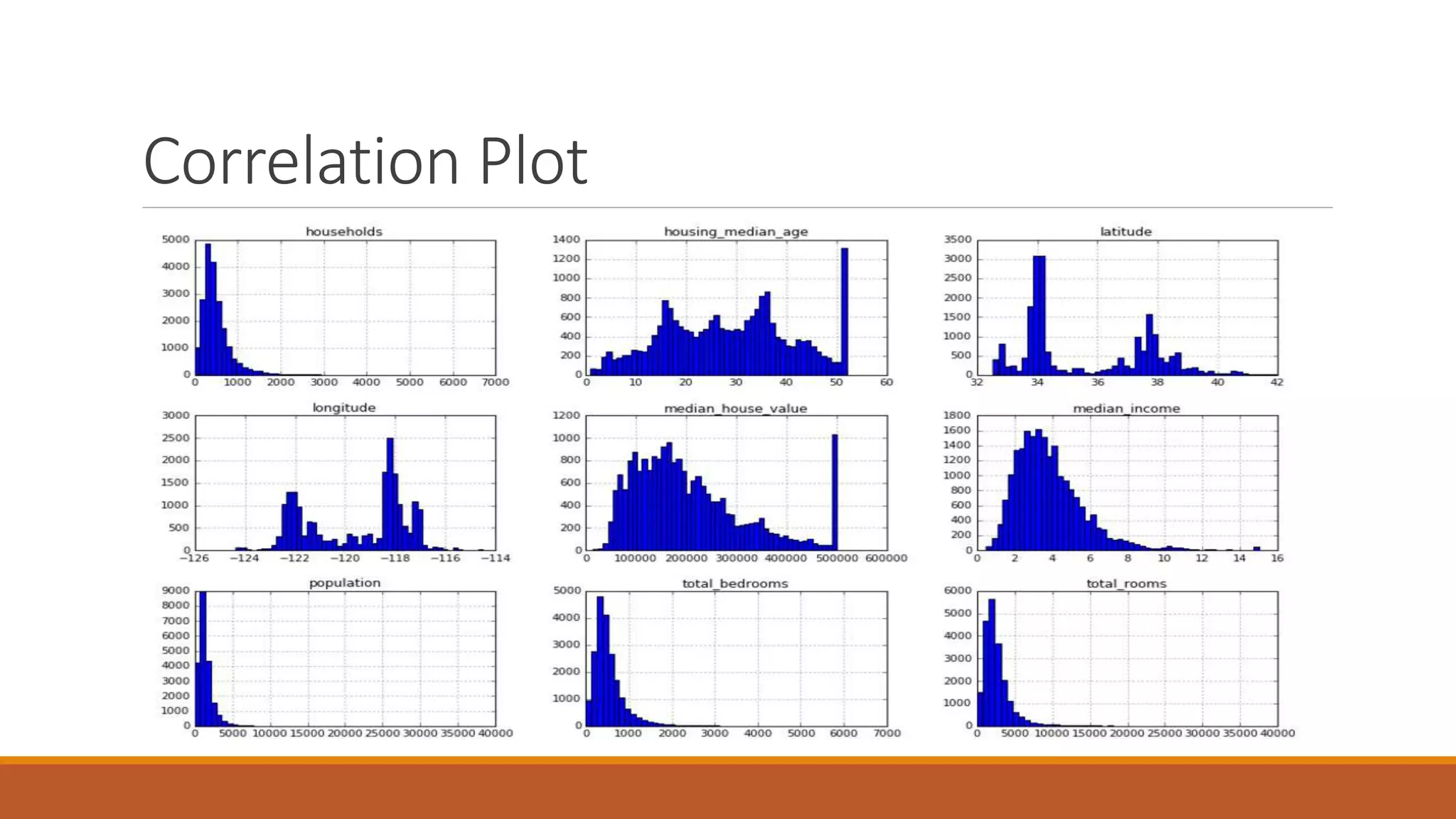

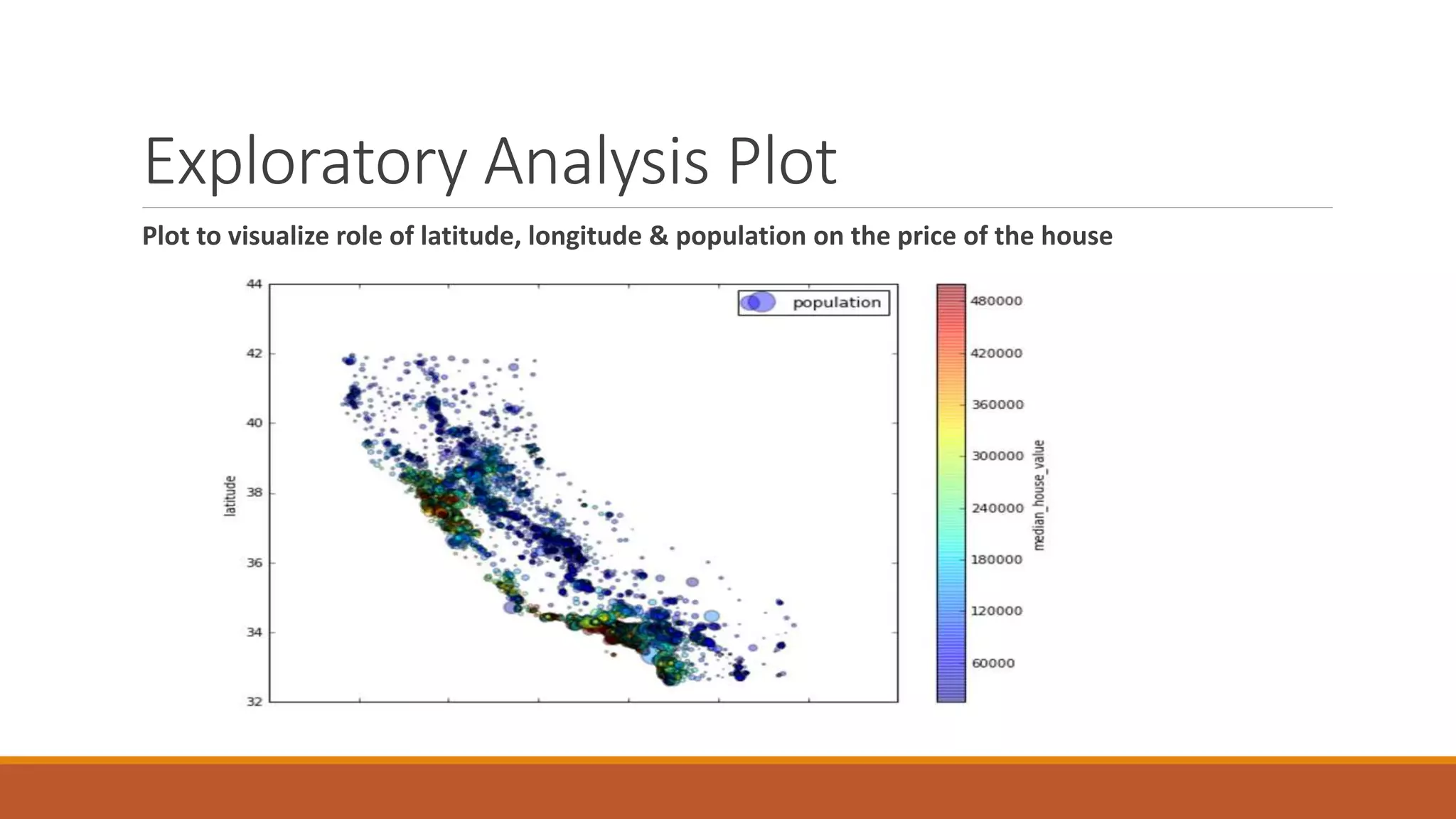

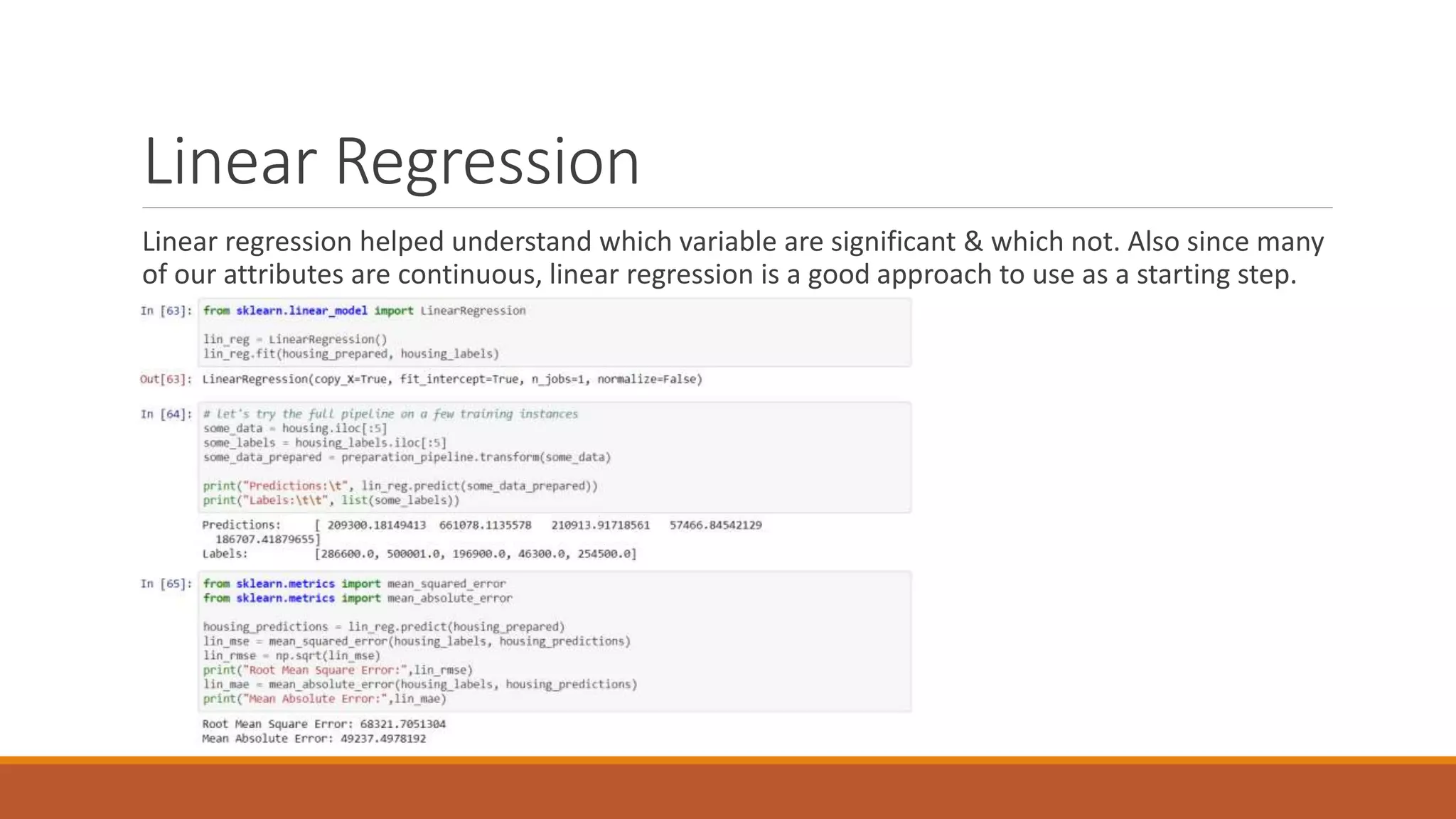

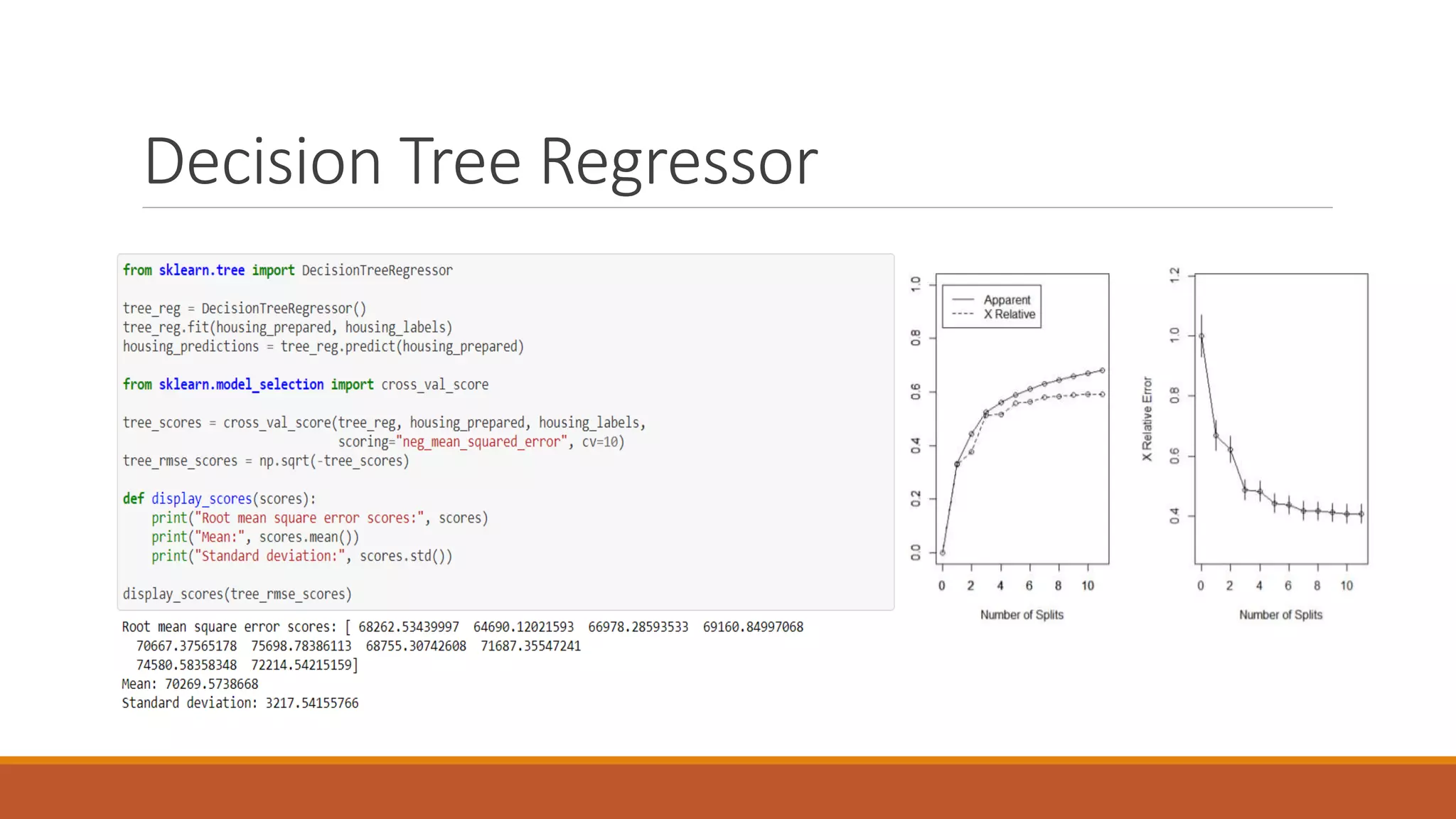

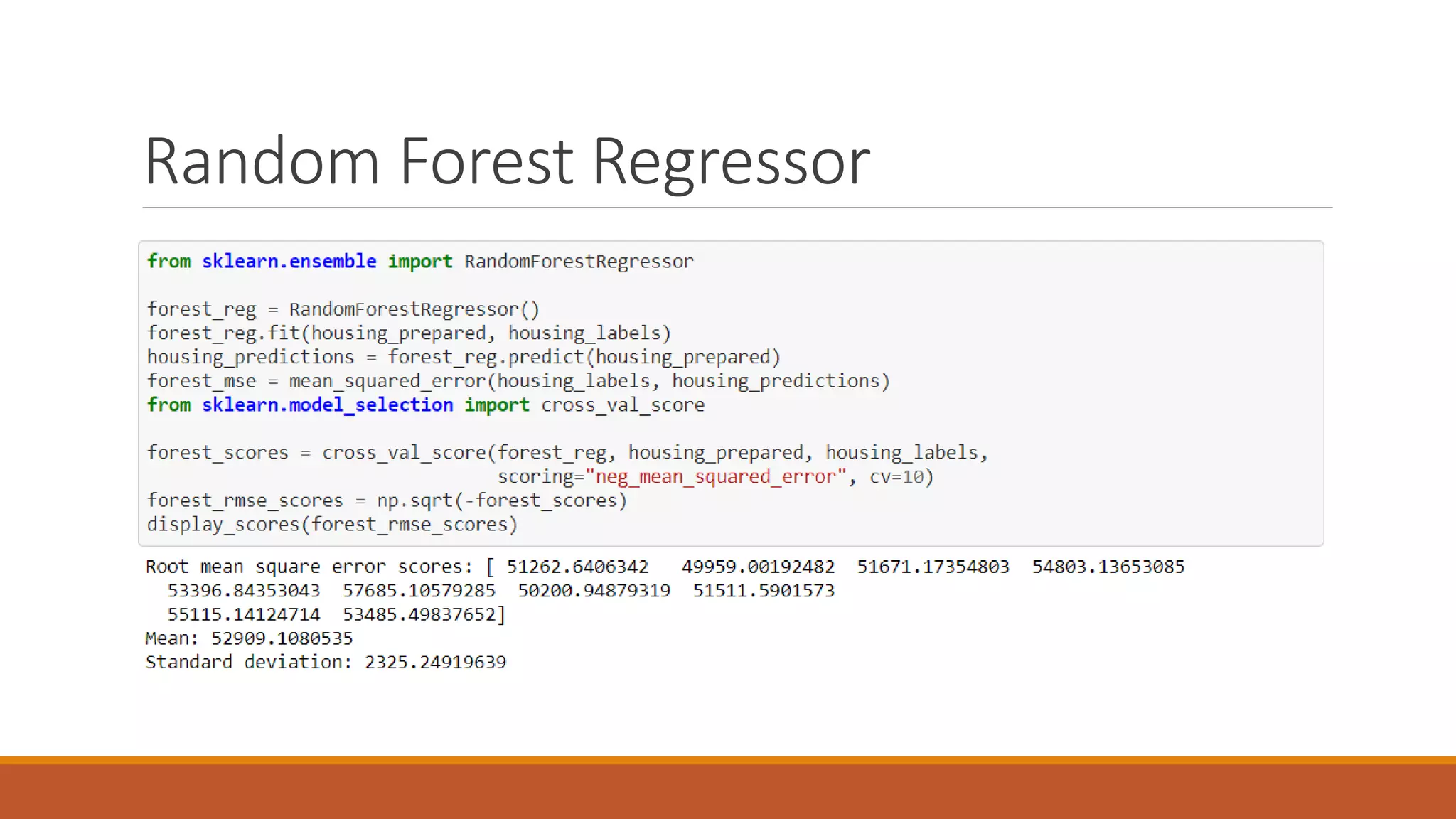

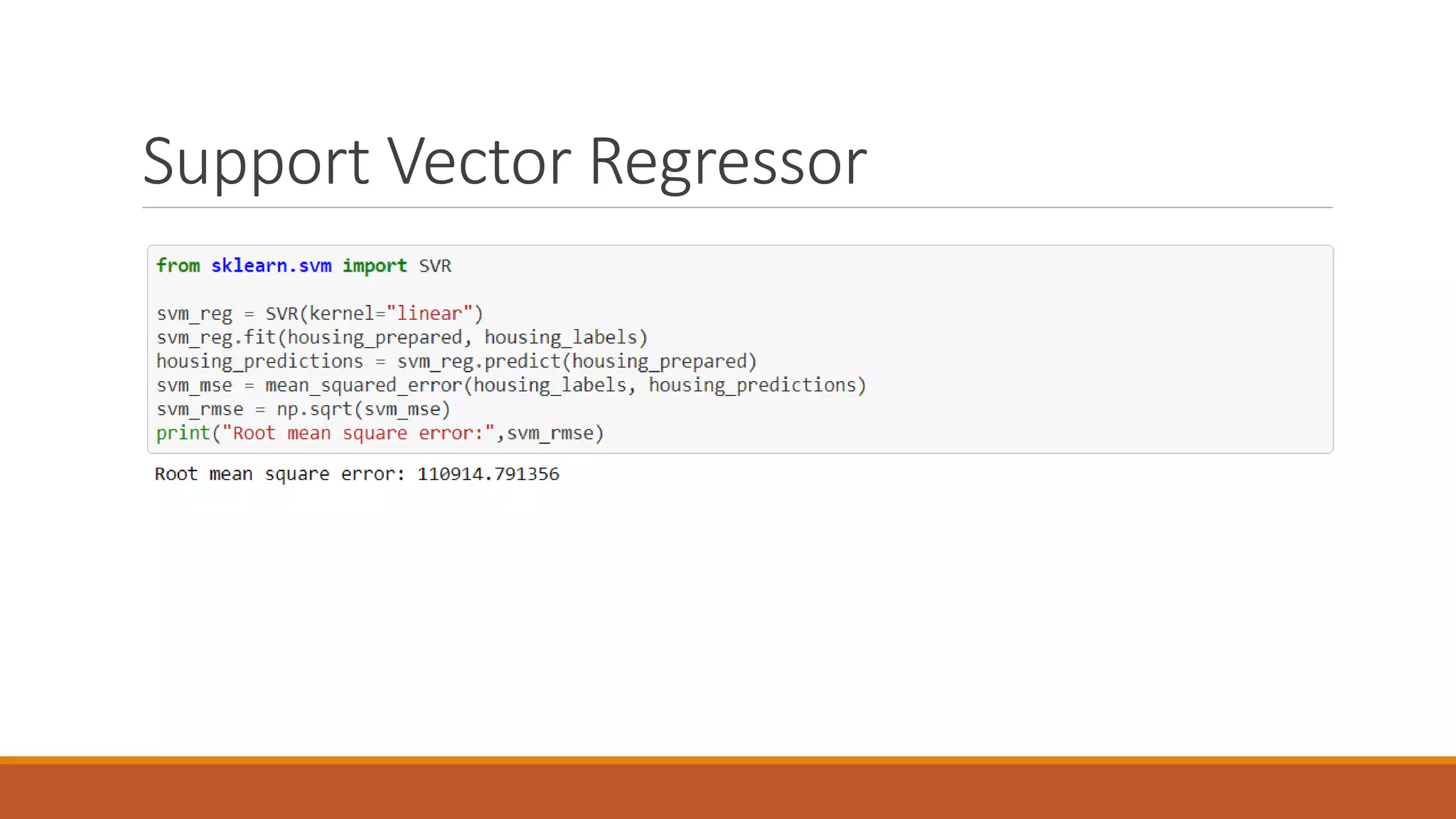

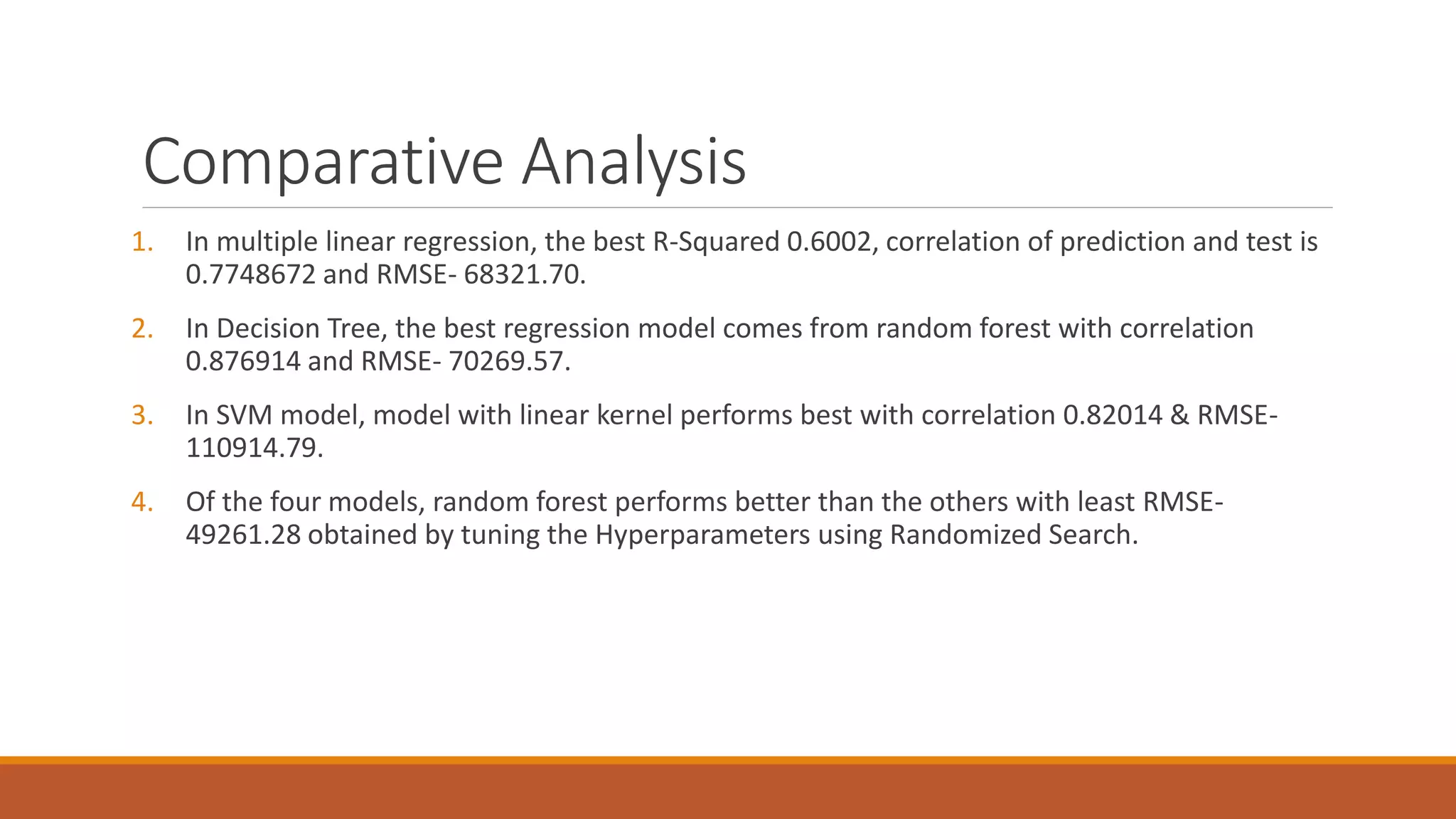

This document applies regression models to predict California housing prices from census data. It compares four models: linear regression, decision tree regression, random forest regression, and support vector regression. After hyperparameter tuning, the random forest model performed best, with the lowest RMSE of 49261.28. The dataset contains 20,640 instances, each with 10 attributes describing a California property whose housing value is to be estimated. Feature engineering steps such as one-hot encoding and standardization were applied before the data was randomly split into training, validation, and test sets.
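
The preprocessing and evaluation steps named above can be sketched in a few lines of plain Python. This is only an illustration under stated assumptions, not the pipeline used in the slides: the toy values, the category labels, the 60/20/20 split ratios, the seed, and the helper names (`one_hot`, `standardize`, `three_way_split`, `rmse`) are all hypothetical, and in practice a library such as scikit-learn would typically handle these steps.

```python
import math
import random
from statistics import mean, pstdev


def one_hot(values):
    """Turn a categorical column into 0/1 indicator rows, one column per category."""
    categories = sorted(set(values))
    rows = [[1.0 if v == c else 0.0 for c in categories] for v in values]
    return rows, categories


def standardize(column):
    """Rescale a numeric column to zero mean and unit (population) standard deviation."""
    mu, sigma = mean(column), pstdev(column)
    if sigma == 0:
        return [0.0 for _ in column]  # a constant column carries no information
    return [(x - mu) / sigma for x in column]


def three_way_split(rows, val_frac=0.2, test_frac=0.2, seed=42):
    """Shuffle a copy of the rows and cut it into train, validation, and test parts."""
    shuffled = list(rows)
    random.Random(seed).shuffle(shuffled)
    n_test = int(len(shuffled) * test_frac)
    n_val = int(len(shuffled) * val_frac)
    test = shuffled[:n_test]
    val = shuffled[n_test:n_test + n_val]
    train = shuffled[n_test + n_val:]
    return train, val, test


def rmse(y_true, y_pred):
    """Root mean squared error: the metric used to compare the models."""
    return math.sqrt(mean((t - p) ** 2 for t, p in zip(y_true, y_pred)))


# Hypothetical toy data: one categorical and one numeric attribute.
proximity = ["INLAND", "NEAR BAY", "INLAND", "ISLAND", "NEAR BAY"]
income = [2.0, 4.0, 6.0, 8.0, 10.0]

encoded, categories = one_hot(proximity)
scaled_income = standardize(income)

# Combine the encoded and scaled columns into feature rows, then split them.
features = [enc + [inc] for enc, inc in zip(encoded, scaled_income)]
train, val, test = three_way_split(features)
```

RMSE is expressed in the same units as the target, so a lower value means the predicted housing values sit closer to the observed ones on average. The validation set is the natural place to compare models and tune hyperparameters, with the test set held back for a final check.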