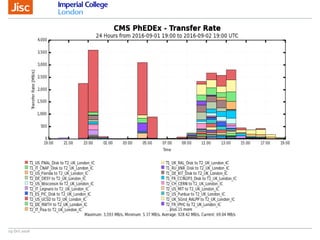

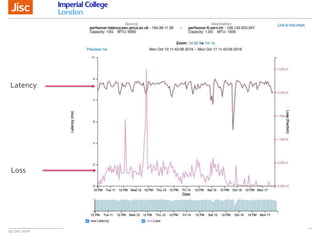

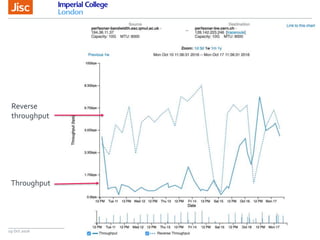

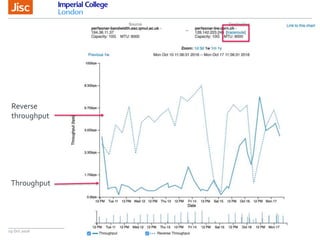

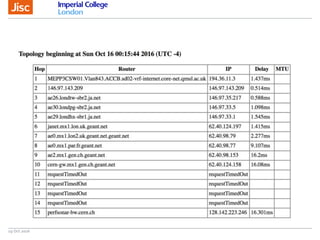

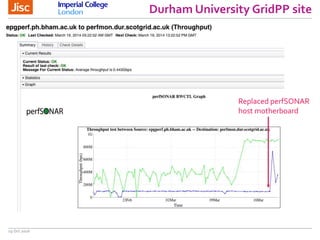

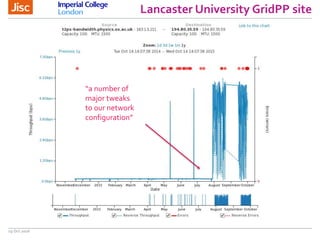

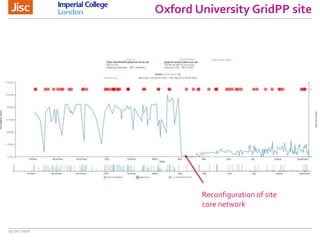

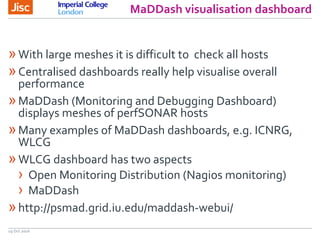

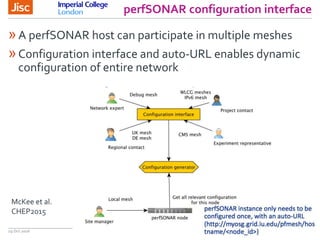

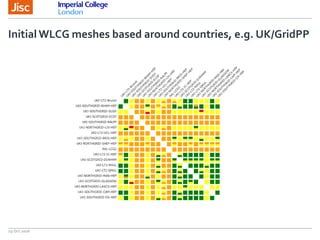

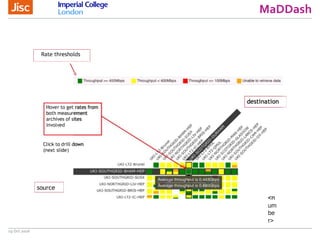

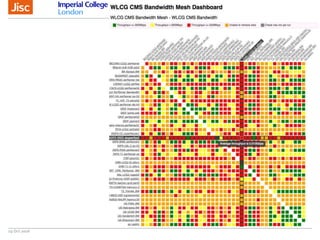

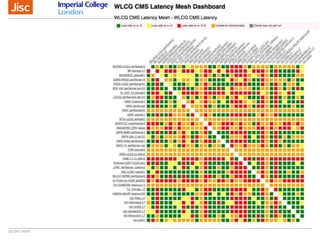

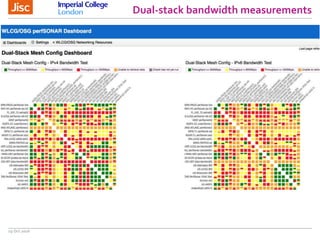

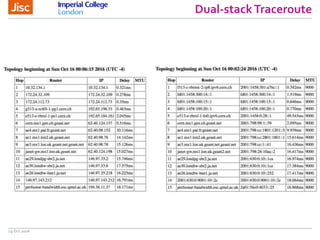

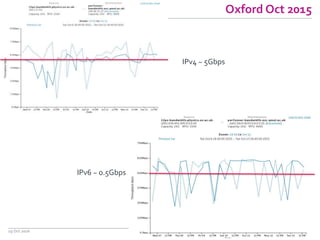

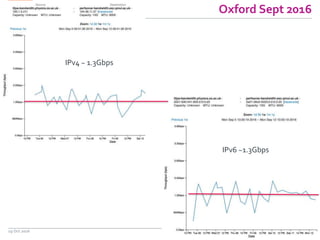

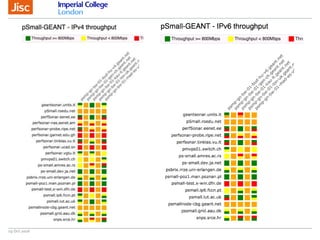

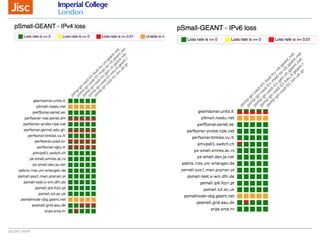

The document discusses the Worldwide LHC Computing Grid (WLCG) and its collaborative structure for data management and analysis. It highlights the role of the perfSONAR network monitoring toolkit in ensuring reliable network performance across participating sites, including its configuration and the visualization of data transfers. Current efforts include promoting IPv6 adoption and improving network diagnostics through projects such as PuNDIT, which aim to strengthen fault detection and monitoring.