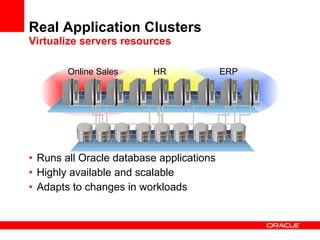

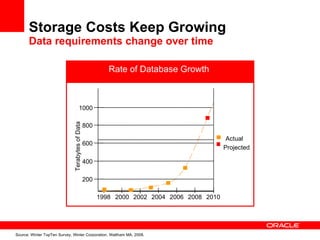

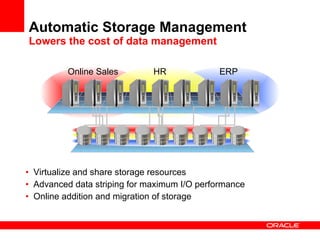

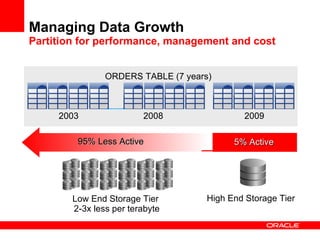

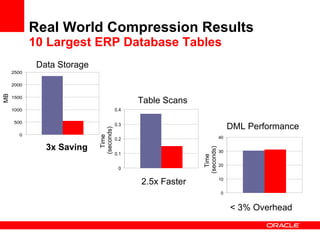

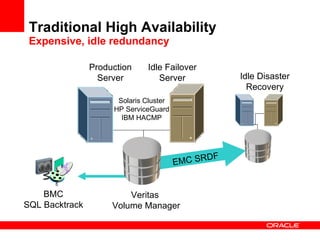

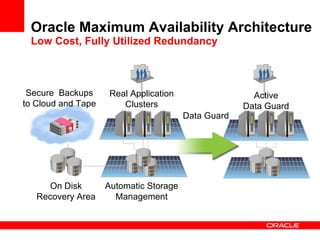

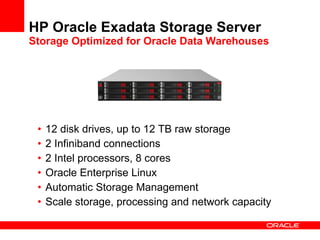

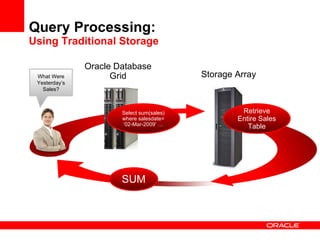

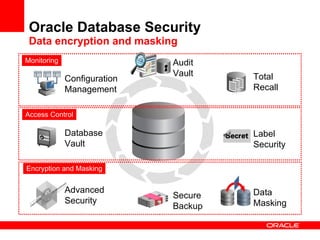

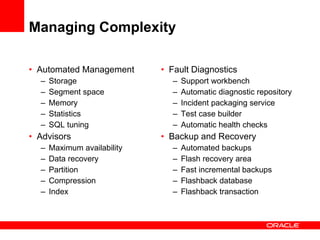

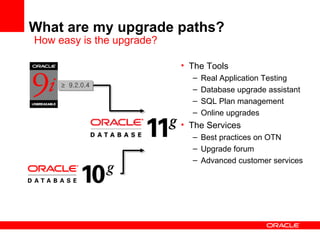

The document describes how Oracle Database 11g can help lower IT costs through grid computing, high availability, storage optimization, and security features. It gives examples of how Oracle Real Application Clusters (RAC), Exadata, Automatic Storage Management (ASM), compression, and other 11g capabilities let customers consolidate servers and storage, improve performance, and reduce costs compared with alternative solutions.