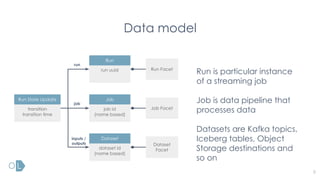

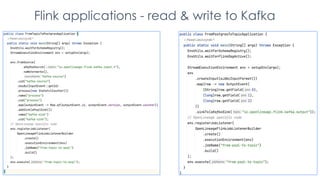

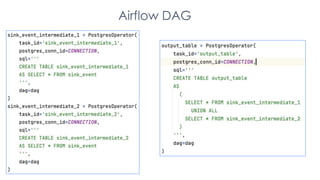

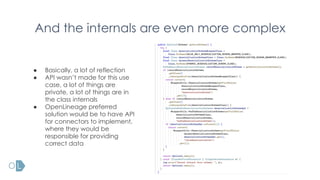

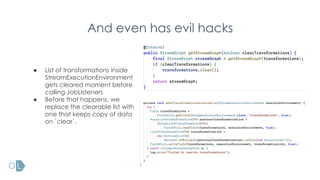

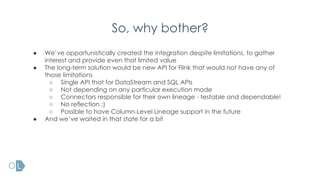

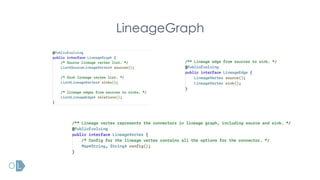

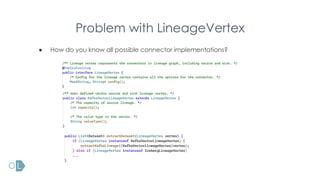

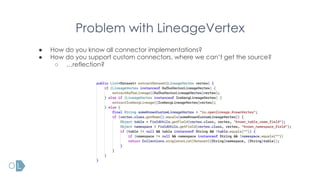

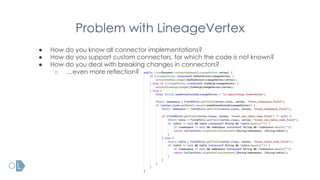

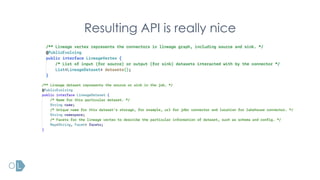

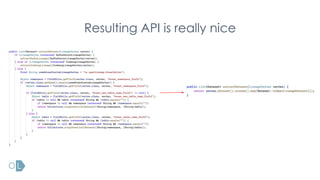

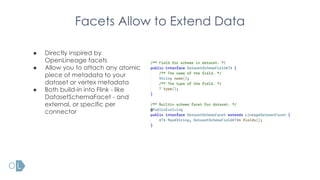

The document presents an overview of OpenLineage's integration with Flink for stream processing and of the project's mission to establish an open standard for collecting lineage metadata. It highlights the challenges of tracking lineage in streaming jobs compared to batch jobs, and describes the features newly introduced by FLIP-314 to improve the architecture and functionality of lineage tracking within Flink. It also emphasizes community involvement and outlines future directions: supporting more streaming systems and enhancing lineage capabilities.