
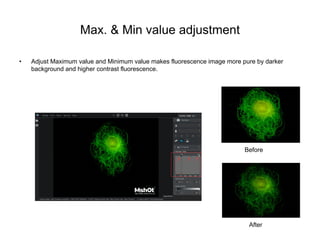

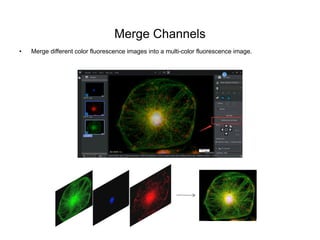

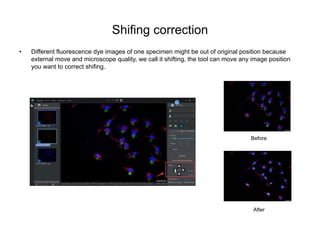

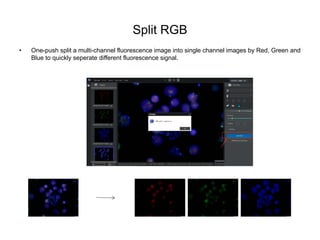

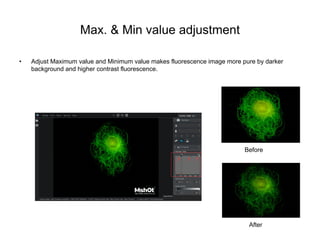

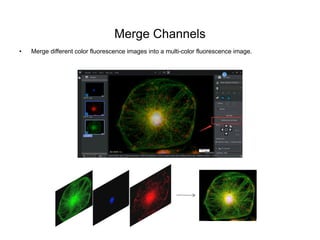

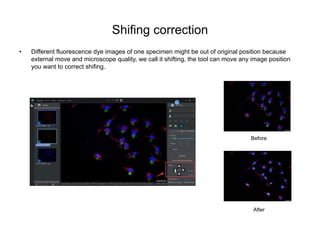

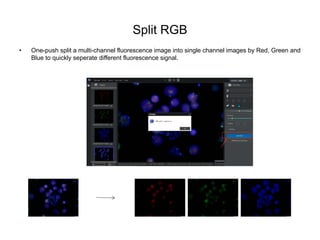

This presentation outlines several image processing functions for enhancing fluorescence images: adjusting the maximum and minimum display values to improve contrast, merging multiple images to increase brightness, and correcting image shift. It also covers quickly merging fluorescence images from different channels, splitting a multi-channel image into single-channel images, and displaying light intensity along a line profile. Together, these tools aim to make fluorescence signals in specimens easier to observe and analyze.
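As a rough illustration of the operations listed above, here is a minimal NumPy sketch of each one under stated assumptions. The presentation does not name its software or algorithms, so every function name below (`adjust_min_max`, `sum_frames`, `estimate_shift`, `correct_shift`, `merge_channels`, `split_channels`, `line_profile`) is hypothetical. The sketch assumes that "merging images to increase brightness" means pixel-wise summation of already-aligned frames of the same field. For shift correction it uses phase correlation, a common registration technique chosen here as a stand-in. It is not necessarily the method the presentation describes.

```python
import numpy as np


def adjust_min_max(img, vmin, vmax):
    """Linear contrast stretch: map [vmin, vmax] to [0, 1] and clip values outside it."""
    if vmax <= vmin:
        raise ValueError("vmax must be greater than vmin")
    img = img.astype(np.float64)
    return np.clip((img - vmin) / (vmax - vmin), 0.0, 1.0)


def sum_frames(frames):
    """Assumed 'brightness merge': add aligned frames of the same field pixel by pixel."""
    return np.sum(np.stack([f.astype(np.float64) for f in frames]), axis=0)


def estimate_shift(ref, moving):
    """Phase correlation: return the integer (dy, dx) that, passed to np.roll,
    moves `moving` back onto `ref`."""
    cross = np.fft.fft2(ref) * np.conj(np.fft.fft2(moving))
    cross /= np.abs(cross) + 1e-12  # keep only phase; epsilon avoids division by zero
    corr = np.fft.ifft2(cross).real
    peak = np.unravel_index(np.argmax(corr), corr.shape)
    # Peaks past the midpoint correspond to negative shifts (FFT wrap-around).
    return tuple(int(p if p <= s // 2 else p - s) for p, s in zip(peak, corr.shape))


def correct_shift(moving, shift):
    """Apply an integer (dy, dx) shift; np.roll wraps pixels around the edges."""
    return np.roll(moving, shift, axis=(0, 1))


def merge_channels(*channels):
    """Stack 1-3 single-channel images (scaled to [0, 1]) into an RGB image.
    Channels are assigned to R, G, B in order; missing ones are left at zero."""
    if not 1 <= len(channels) <= 3:
        raise ValueError("expected 1 to 3 channels")
    shape = channels[0].shape
    if any(ch.shape != shape for ch in channels):
        raise ValueError("all channels must have the same shape")
    rgb = np.zeros(shape + (3,), dtype=np.float64)
    for i, ch in enumerate(channels):
        rgb[..., i] = ch
    return rgb


def split_channels(img):
    """Split an (H, W, C) image into a list of C single-channel (H, W) images."""
    return [img[..., i] for i in range(img.shape[-1])]


def line_profile(img, start, end, num=None):
    """Sample intensity along a straight line from start=(r0, c0) to end=(r1, c1)
    using nearest-neighbour lookup (roughly one sample per pixel by default)."""
    (r0, c0), (r1, c1) = start, end
    if num is None:
        num = int(np.ceil(np.hypot(r1 - r0, c1 - c0))) + 1
    rows = np.rint(np.linspace(r0, r1, num)).astype(int)
    cols = np.rint(np.linspace(c0, c1, num)).astype(int)
    return img[rows, cols]


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    ref = rng.random((64, 64))
    moving = np.roll(ref, (3, -5), axis=(0, 1))  # simulate a drifted frame
    shift = estimate_shift(ref, moving)
    print(shift)
    print(np.allclose(correct_shift(moving, shift), ref))
```

For example, `adjust_min_max(np.array([0, 50, 100, 150]), 50, 150)` returns `[0, 0, 0.5, 1]`: everything at or below the chosen minimum becomes black, and everything at or above the maximum saturates. Because `np.roll` wraps pixels around the border, a practical shift correction would usually crop or pad the edges instead. This sketch also ignores sub-pixel drift and rotation.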