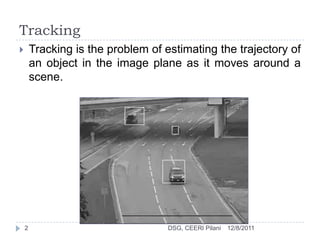

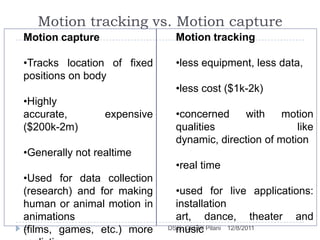

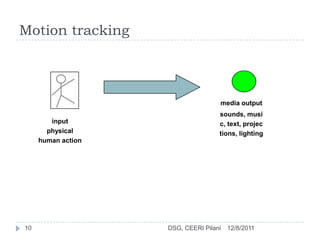

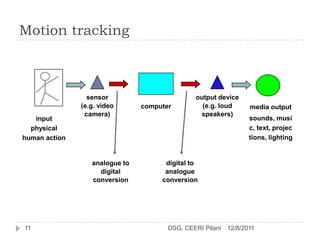

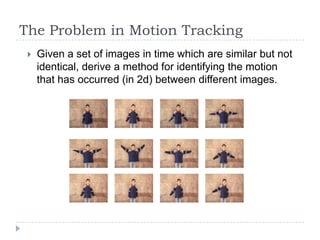

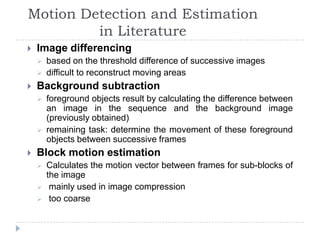

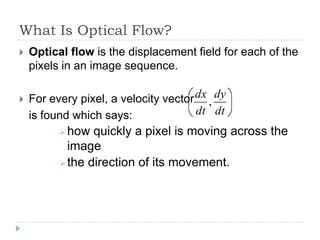

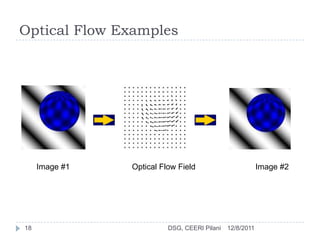

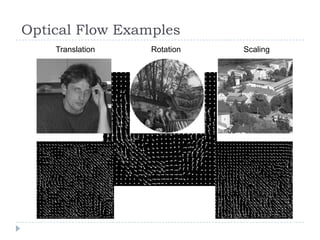

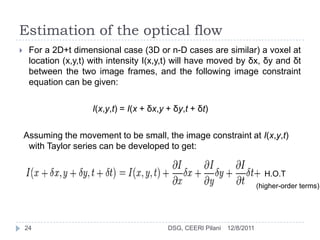

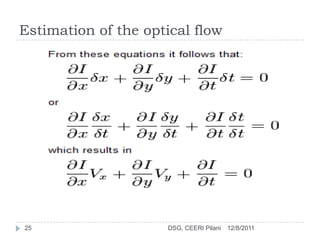

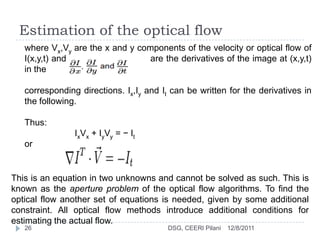

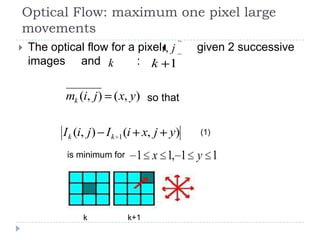

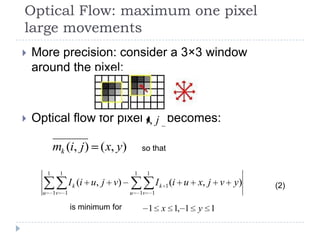

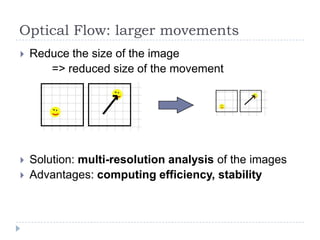

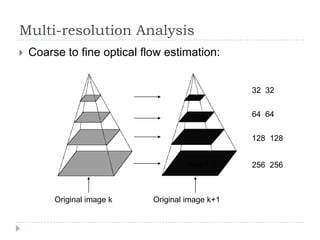

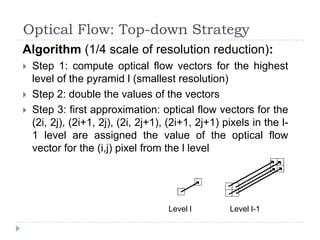

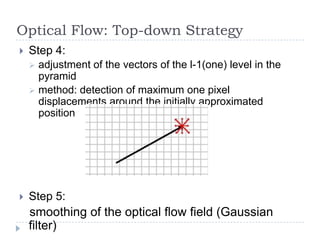

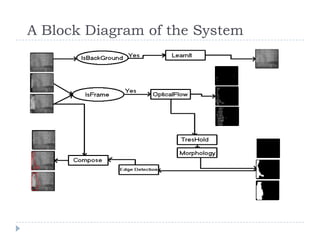

Tracking is the problem of estimating the trajectory of an object as it moves around a scene. Motion tracking collects data on human movement with sensors and uses it to control outputs such as music or lighting in response to a performer's actions. It differs from motion capture in that it requires less equipment, is less expensive, and is concerned with the qualities of motion rather than with highly accurate data collection. Optical flow estimates pixel-wise motion between video frames by computing a velocity vector for each pixel.
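To make the idea of a velocity vector concrete, here is a minimal sketch in Python with NumPy. It is not a method taken from this document: it assumes the Lucas-Kanade brightness-constancy formulation and, for brevity, solves for a single velocity shared by the whole image rather than one per pixel. The function names `lucas_kanade_global` and `gaussian_blob` and the synthetic test frames are hypothetical, chosen only for illustration.

```python
import numpy as np


def lucas_kanade_global(frame0, frame1):
    """Estimate one (u, v) velocity, in pixels per frame, for the whole image.

    Assumes brightness constancy: Ix*u + Iy*v + It = 0 at every pixel,
    solved in the least-squares sense over all pixels.
    """
    f0 = frame0.astype(np.float64)
    f1 = frame1.astype(np.float64)

    # Spatial gradients of the averaged frame.
    # np.gradient returns derivatives along rows (y) first, then columns (x).
    Iy, Ix = np.gradient(0.5 * (f0 + f1))

    # Temporal derivative between the two frames.
    It = f1 - f0

    # One linear equation per pixel: [Ix Iy] @ [u v]^T = -It.
    A = np.stack([Ix.ravel(), Iy.ravel()], axis=1)
    b = -It.ravel()
    (u, v), *_ = np.linalg.lstsq(A, b, rcond=None)
    return u, v


def gaussian_blob(cx, cy, size=64, sigma=6.0):
    """Synthetic test image: a smooth bright blob centred at (cx, cy)."""
    y, x = np.mgrid[0:size, 0:size]
    return np.exp(-((x - cx) ** 2 + (y - cy) ** 2) / (2.0 * sigma ** 2))


# Two frames in which the blob moves 0.5 px right and 0.2 px down.
frame0 = gaussian_blob(30.0, 32.0)
frame1 = gaussian_blob(30.5, 32.2)

u, v = lucas_kanade_global(frame0, frame1)
print(f"estimated velocity: u={u:.3f}, v={v:.3f} px/frame")
```

For this small, smooth displacement the estimate should land close to the true shift of (0.5, 0.2). A dense optical flow method applies the same constraint over a small window around each pixel, which yields a separate velocity vector per pixel; following those vectors across frames is one way to build the trajectory that tracking asks for.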