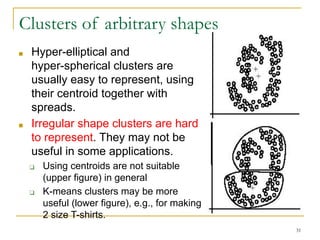

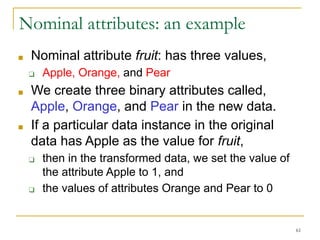

This document provides an overview of unsupervised learning, with a focus on clustering algorithms. It covers two main approaches: k-means clustering and hierarchical clustering. K-means partitions the data into k clusters by assigning each point to its nearest centroid and minimizing the sum of squared distances between the points and their cluster centroids. Hierarchical clustering builds a nested hierarchy of clusters by successively merging smaller clusters (agglomerative) or splitting larger ones (divisive), based on a distance measure between clusters. The document also covers how to represent clusters and how to evaluate clustering results.
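To make the two approaches concrete, here is a minimal sketch in plain Python. It is not taken from the document itself. The function names (`k_means`, `agglomerative`, `within_cluster_ss`), the `init_centroids` parameter, the use of squared Euclidean distance, and the choice of single linkage for the merge step are all assumptions made for illustration.

```python
import random


def squared_distance(a, b):
    """Squared Euclidean distance between two points given as tuples."""
    return sum((x - y) ** 2 for x, y in zip(a, b))


def mean_point(points):
    """Coordinate-wise mean of a non-empty list of points."""
    n = len(points)
    return tuple(sum(coords) / n for coords in zip(*points))


def k_means(points, k, init_centroids=None, max_iters=100, seed=0):
    """Lloyd-style k-means: alternate assignment and centroid update.

    init_centroids is a hypothetical convenience parameter; when it is
    omitted, k distinct data points are sampled as starting centroids.
    """
    rng = random.Random(seed)
    centroids = list(init_centroids) if init_centroids else rng.sample(points, k)
    labels = None
    for _ in range(max_iters):
        # Assignment step: each point goes to its nearest centroid.
        new_labels = [
            min(range(k), key=lambda j: squared_distance(p, centroids[j]))
            for p in points
        ]
        if new_labels == labels:  # assignments stopped changing
            break
        labels = new_labels
        # Update step: move each centroid to the mean of its members.
        for j in range(k):
            members = [p for p, lab in zip(points, labels) if lab == j]
            if members:  # leave an empty cluster's centroid where it is
                centroids[j] = mean_point(members)
    return centroids, labels


def within_cluster_ss(points, centroids, labels):
    """Sum of squared distances from each point to its assigned centroid."""
    return sum(squared_distance(p, centroids[lab]) for p, lab in zip(points, labels))


def agglomerative(points, n_clusters):
    """Bottom-up hierarchical clustering with single linkage (an assumption).

    Starts with one cluster per point and repeatedly merges the two
    closest clusters until n_clusters remain. Returns lists of point indices.
    """
    clusters = [[i] for i in range(len(points))]
    while len(clusters) > n_clusters:
        best = None
        for a in range(len(clusters)):
            for b in range(a + 1, len(clusters)):
                # Single linkage: distance between the closest pair of members.
                d = min(
                    squared_distance(points[i], points[j])
                    for i in clusters[a]
                    for j in clusters[b]
                )
                if best is None or d < best[0]:
                    best = (d, a, b)
        _, a, b = best
        clusters[a] = clusters[a] + clusters[b]
        del clusters[b]  # b > a, so index a is unaffected
    return clusters


if __name__ == "__main__":
    # Made-up toy data: two visually separate groups of 2-D points.
    data = [(1.0, 1.0), (1.2, 0.8), (0.8, 1.1), (8.0, 8.0), (8.2, 7.9), (7.9, 8.3)]
    centroids, labels = k_means(data, k=2)
    print("k-means labels:", labels)
    print("within-cluster SS:", within_cluster_ss(data, centroids, labels))
    print("agglomerative clusters:", agglomerative(data, n_clusters=2))
```

The within-cluster sum of squares returned by `within_cluster_ss` is one simple way to compare clustering results: for a fixed k, lower values indicate tighter clusters. The agglomerative sketch rescans every pair of clusters at each merge, so it is only suitable for small inputs.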