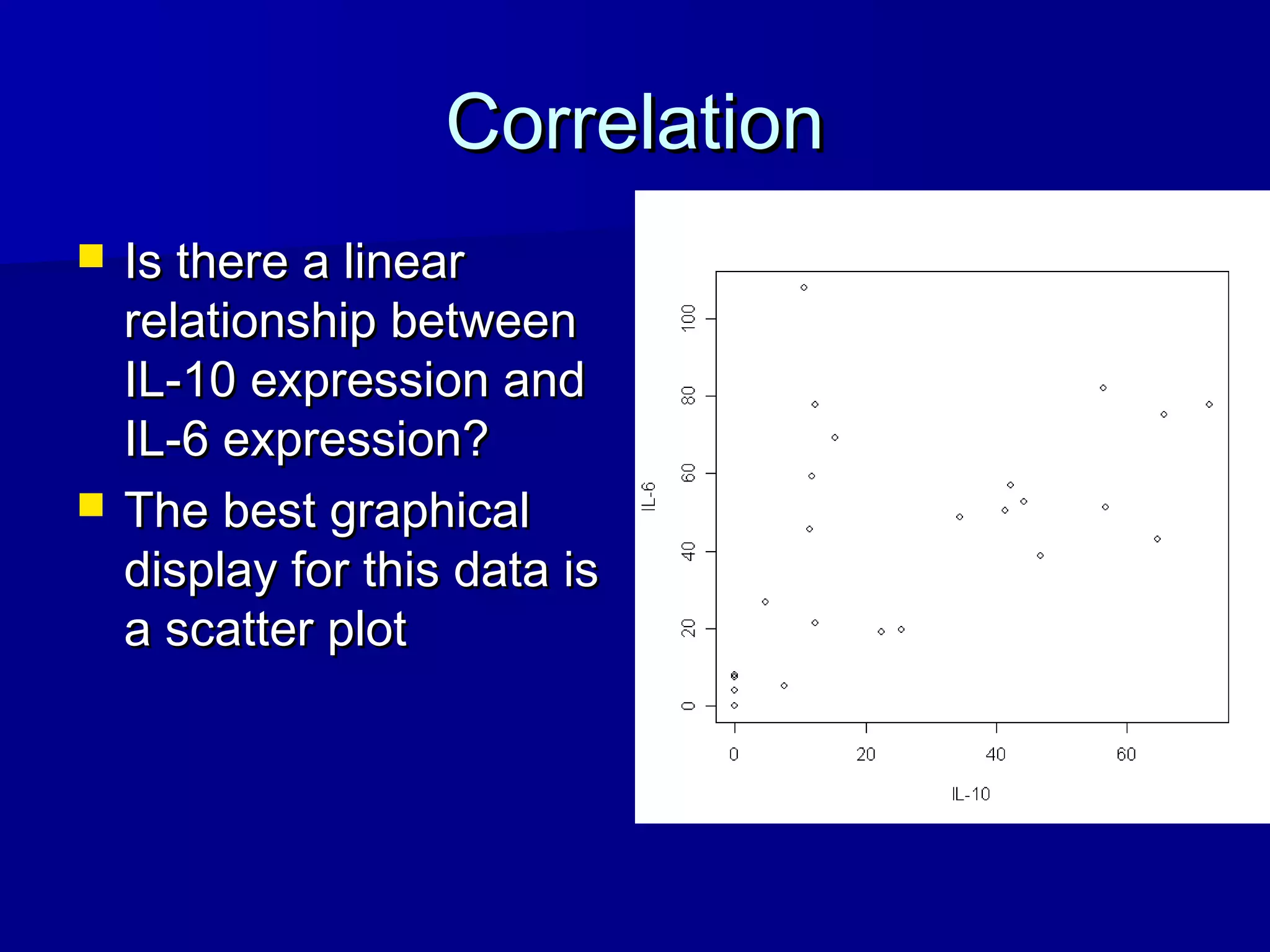

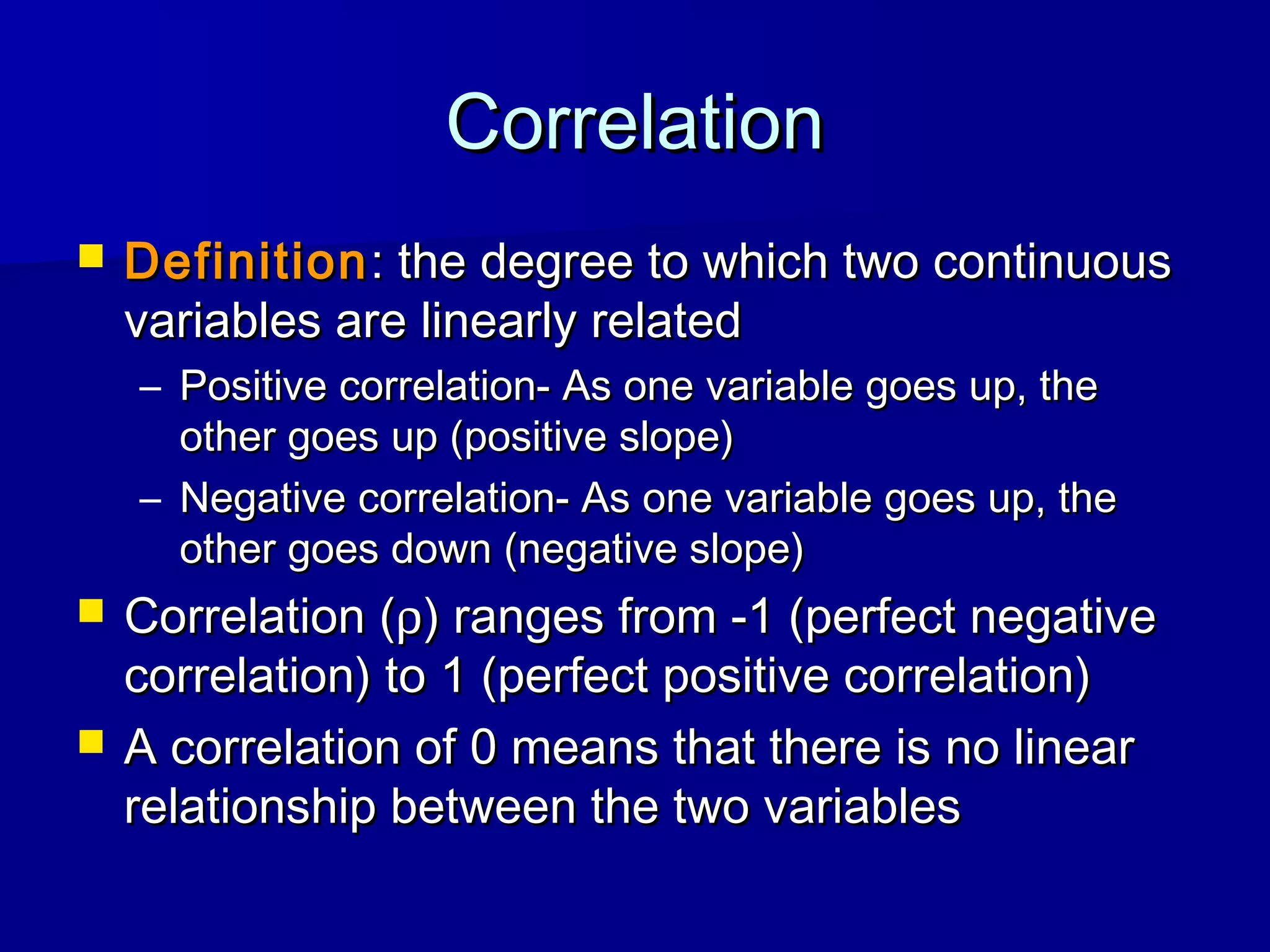

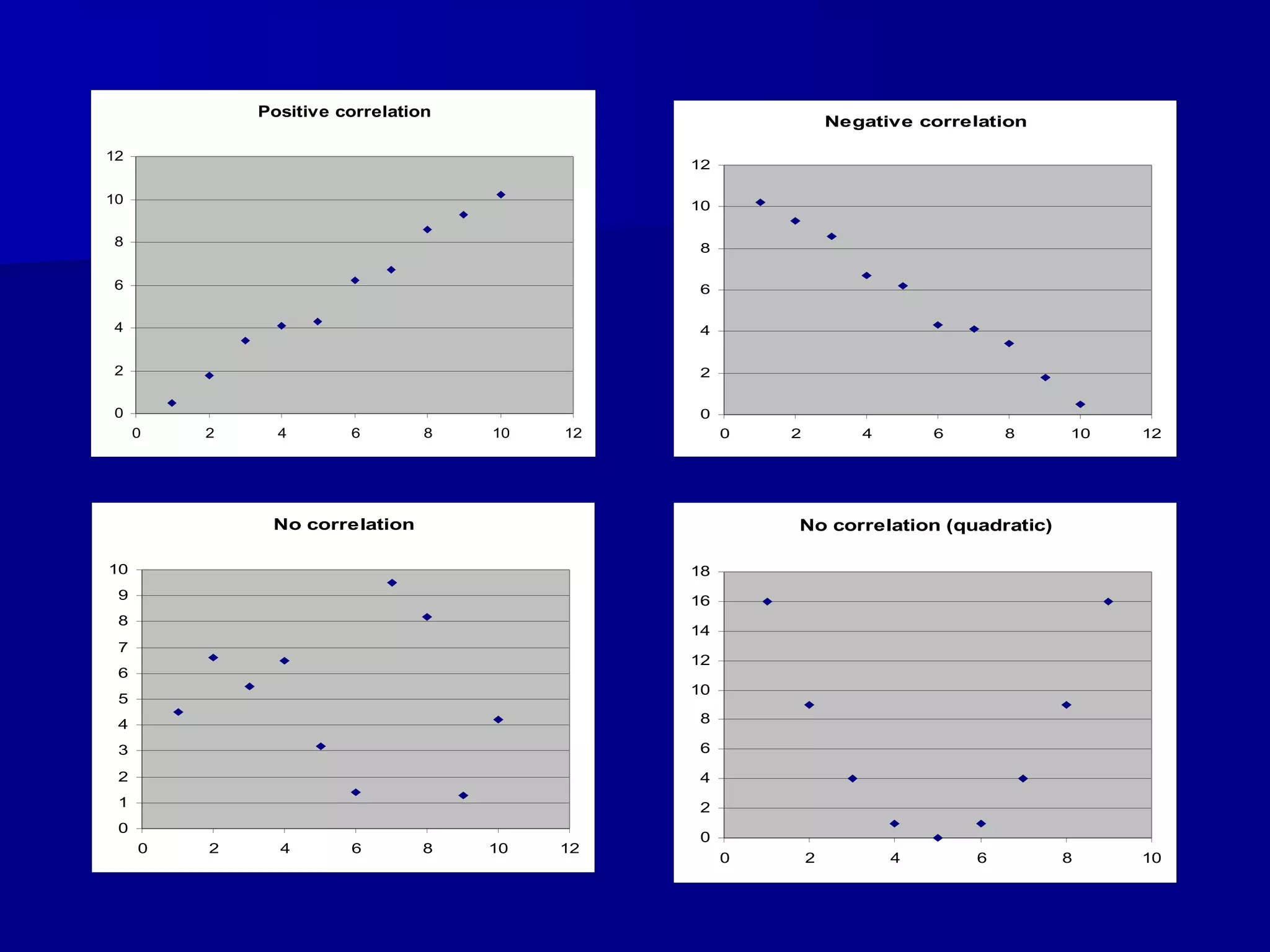

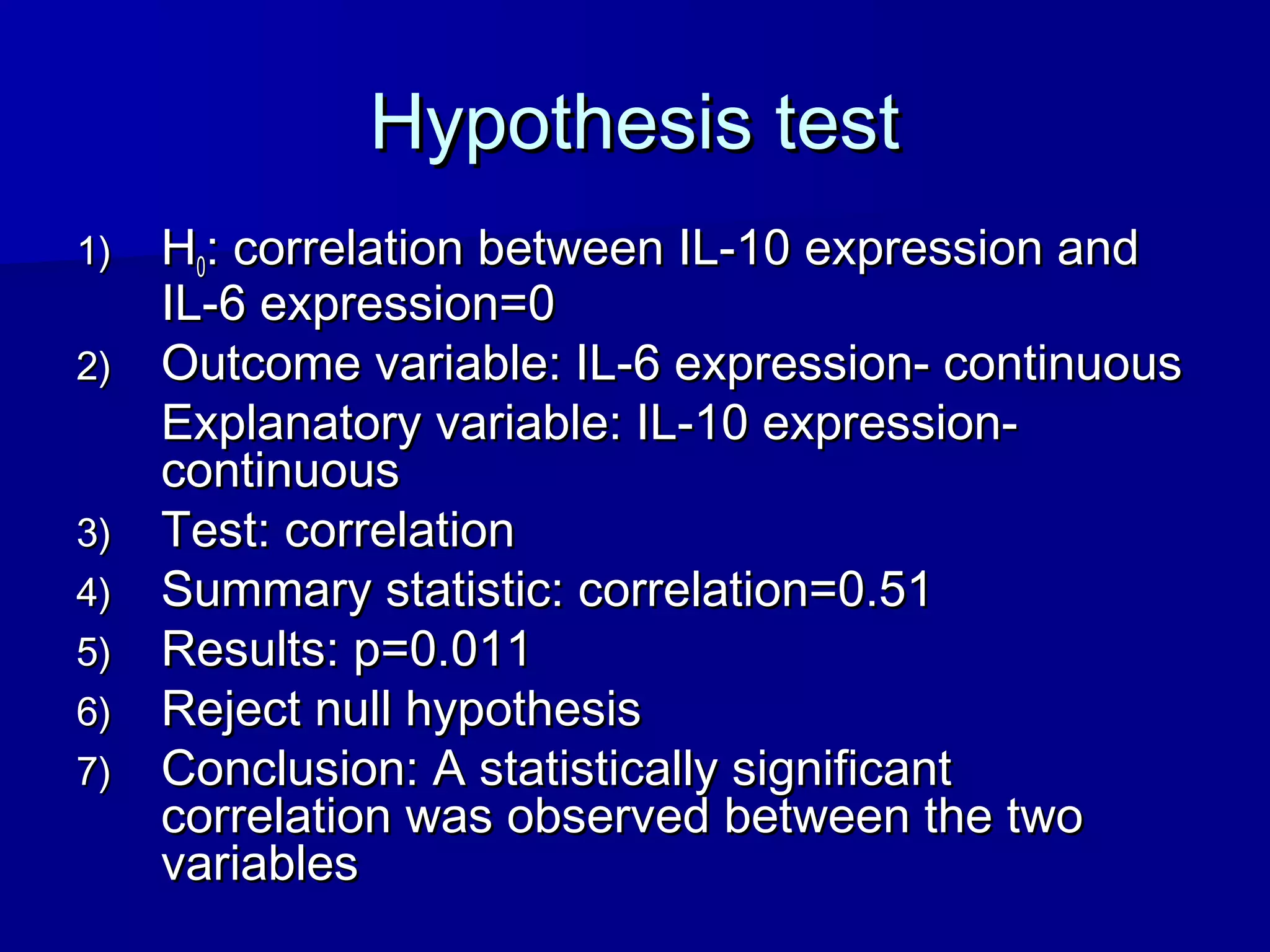

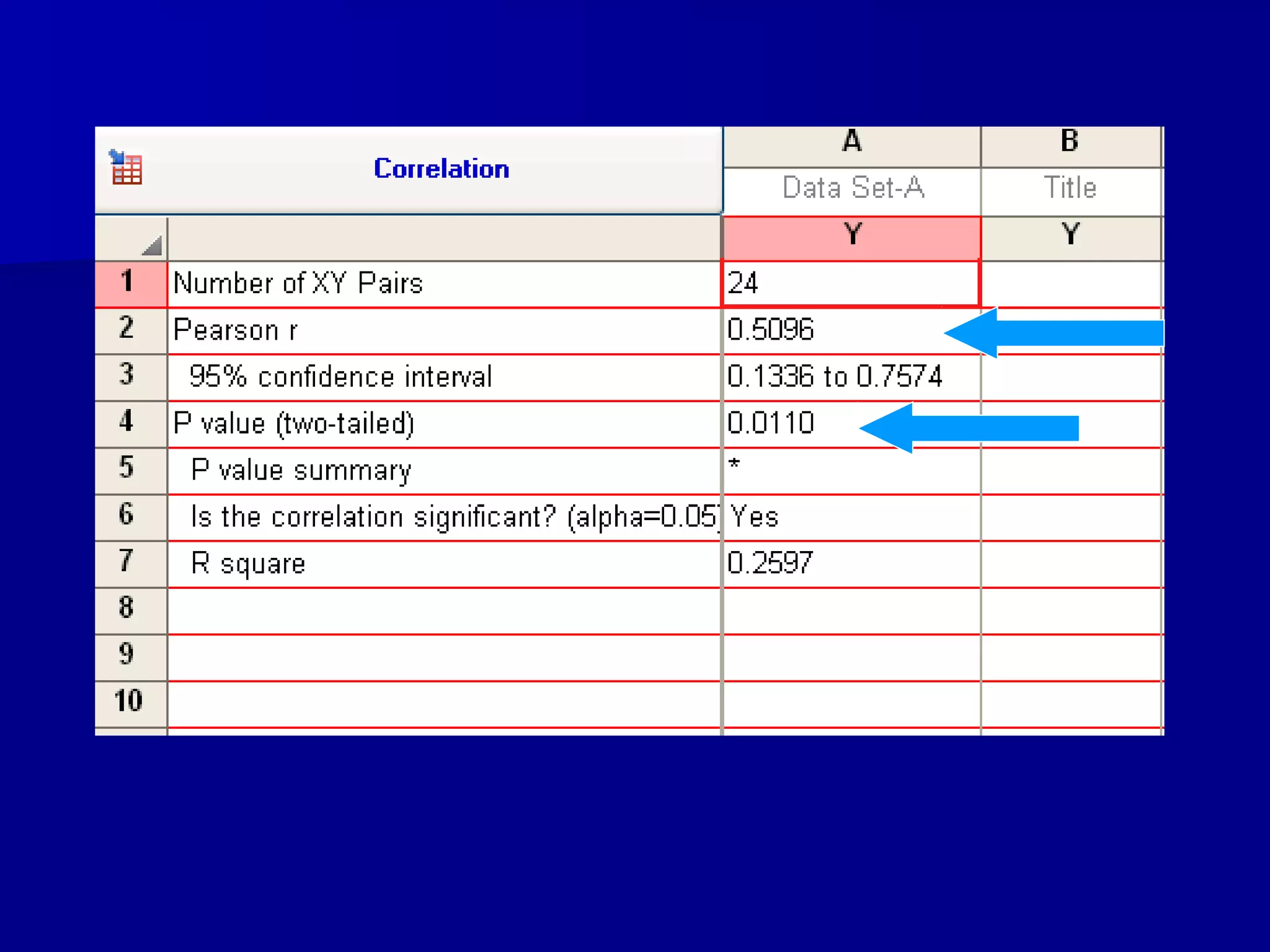

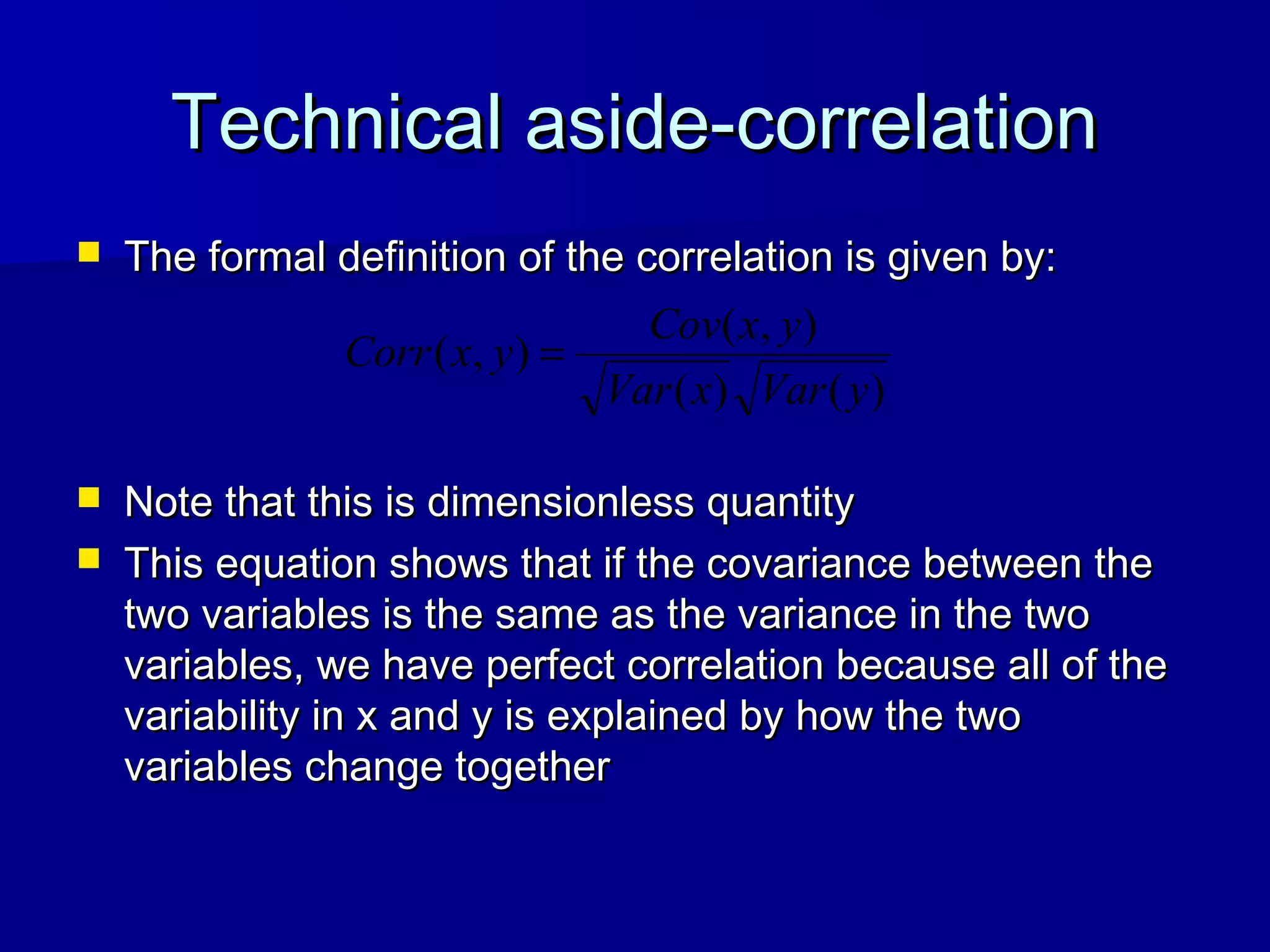

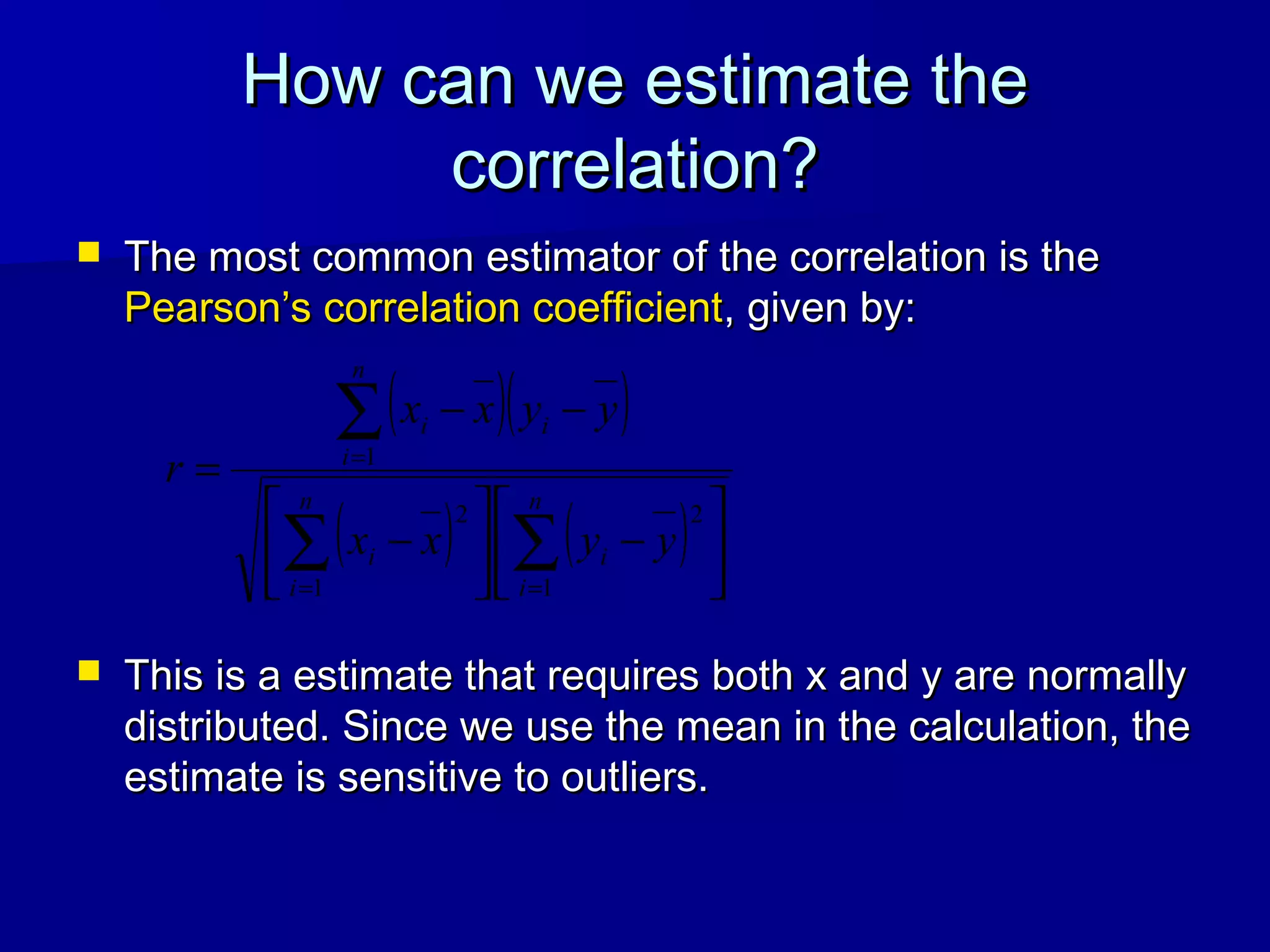

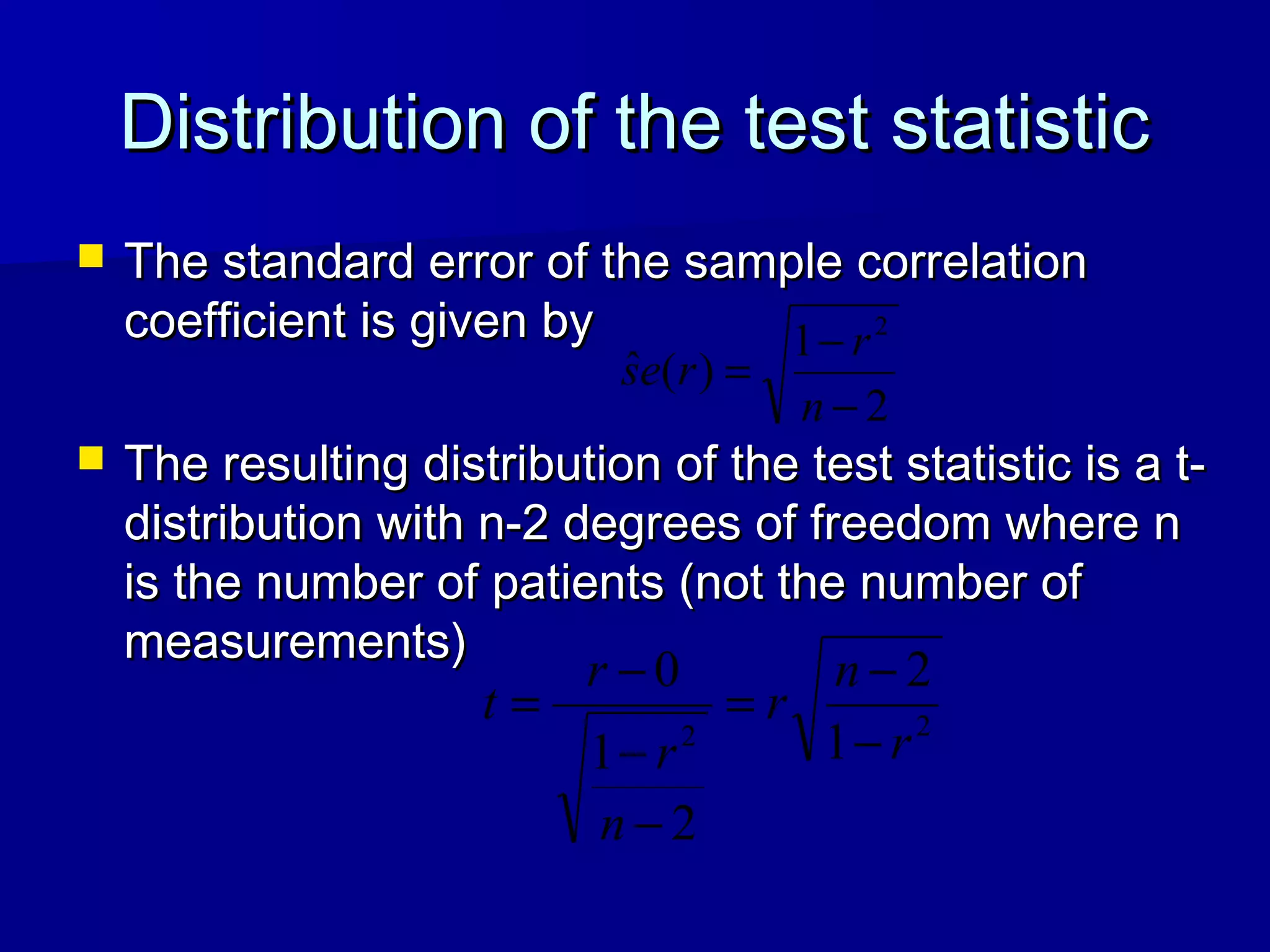

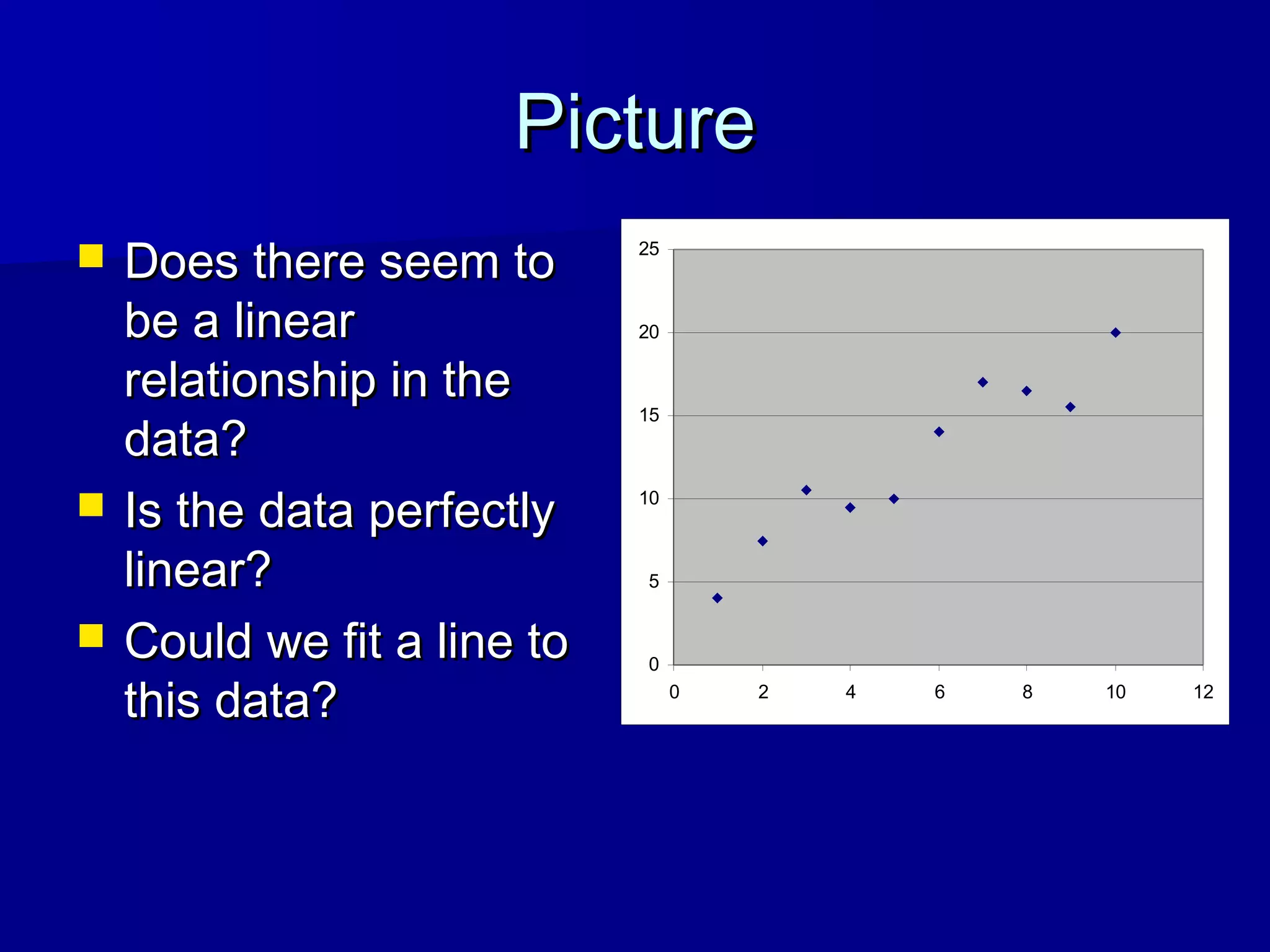

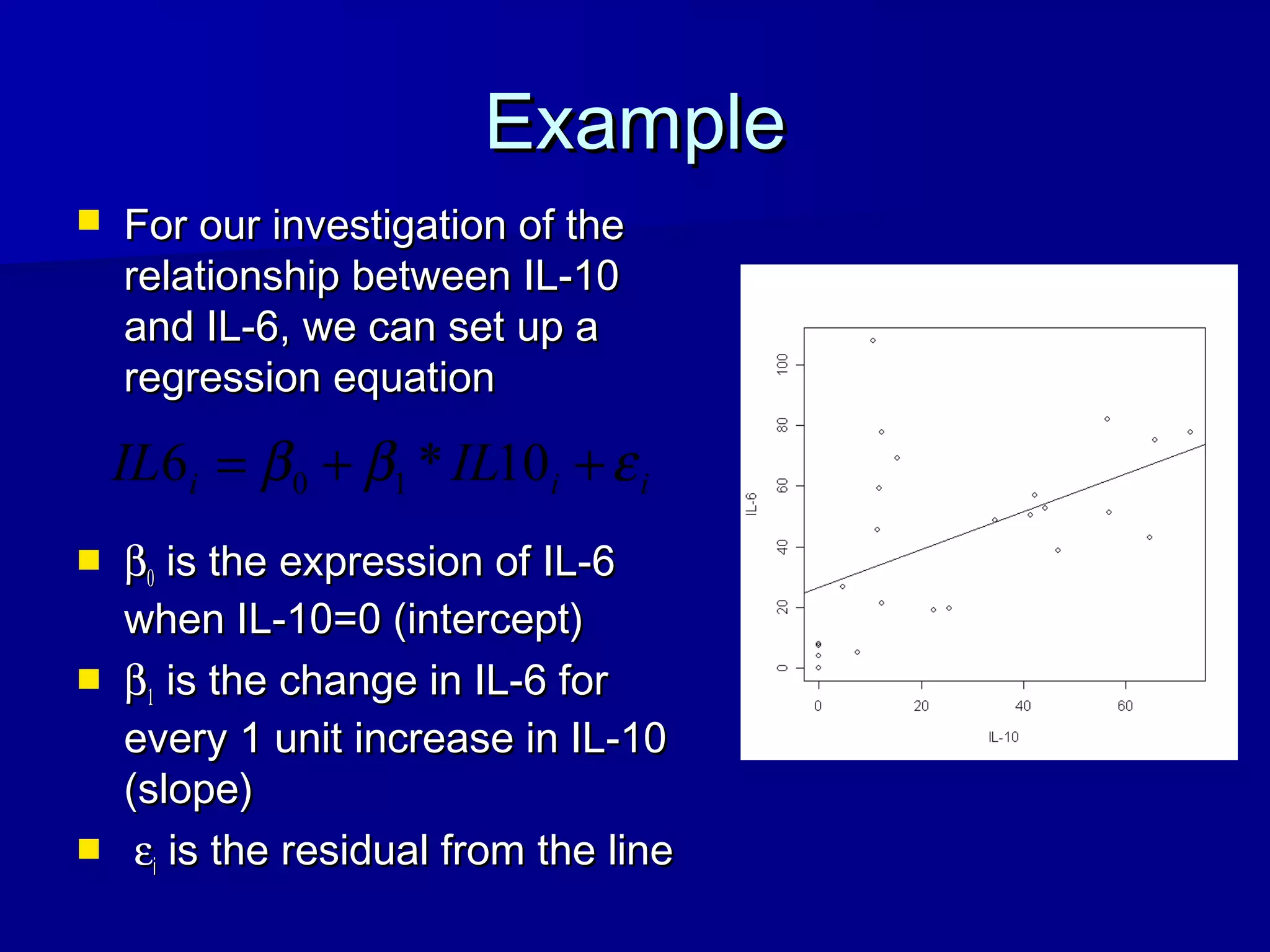

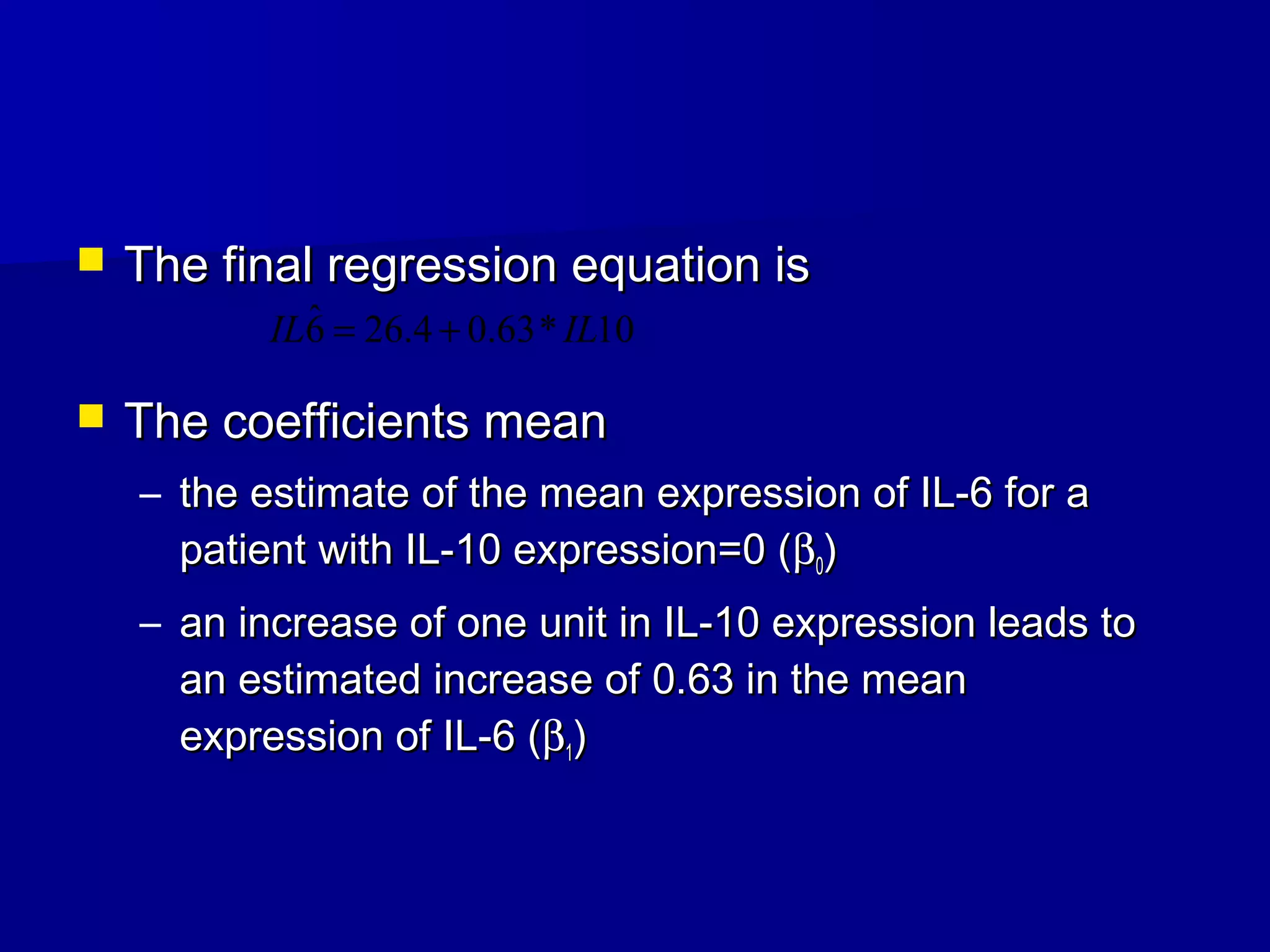

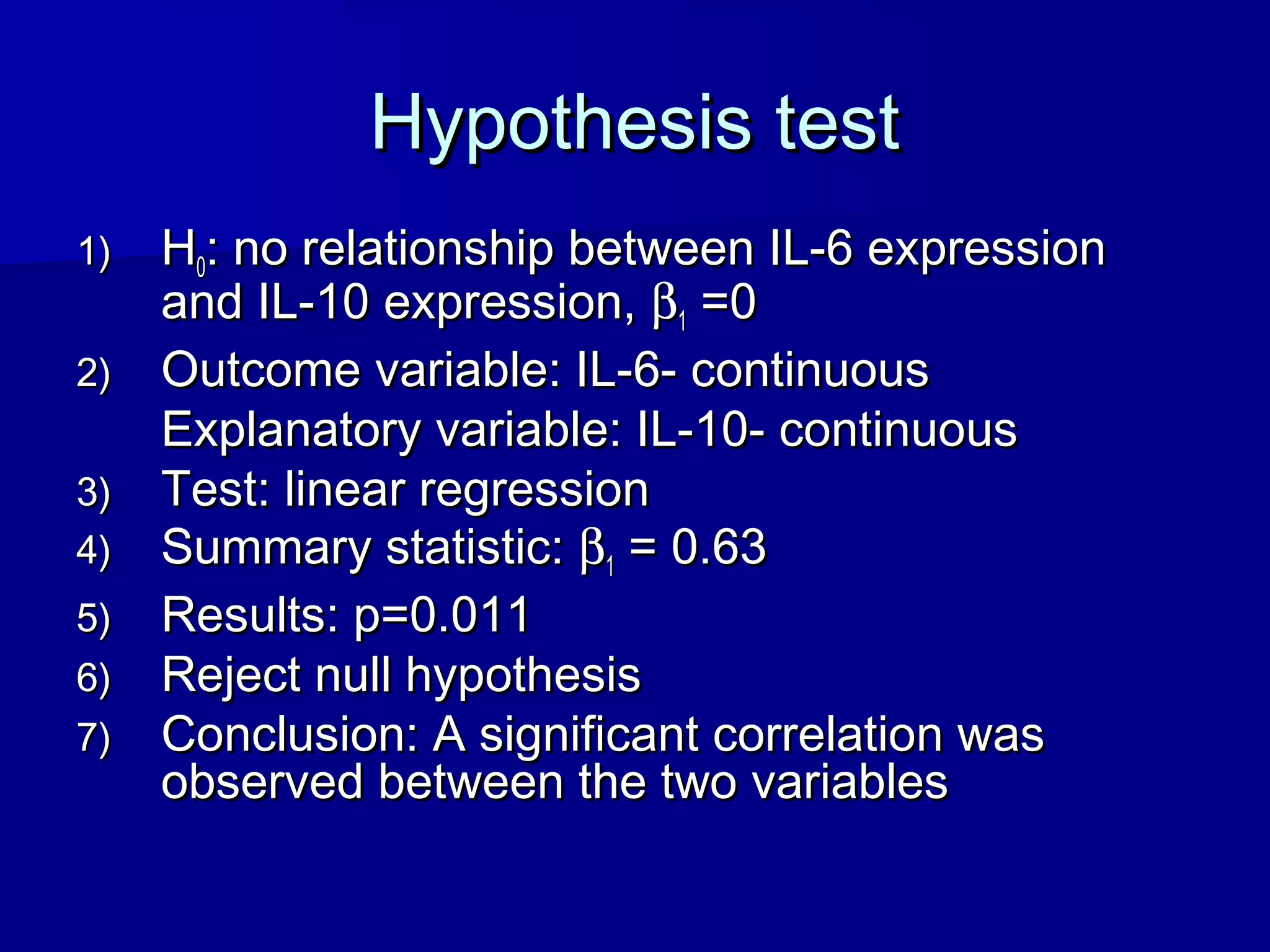

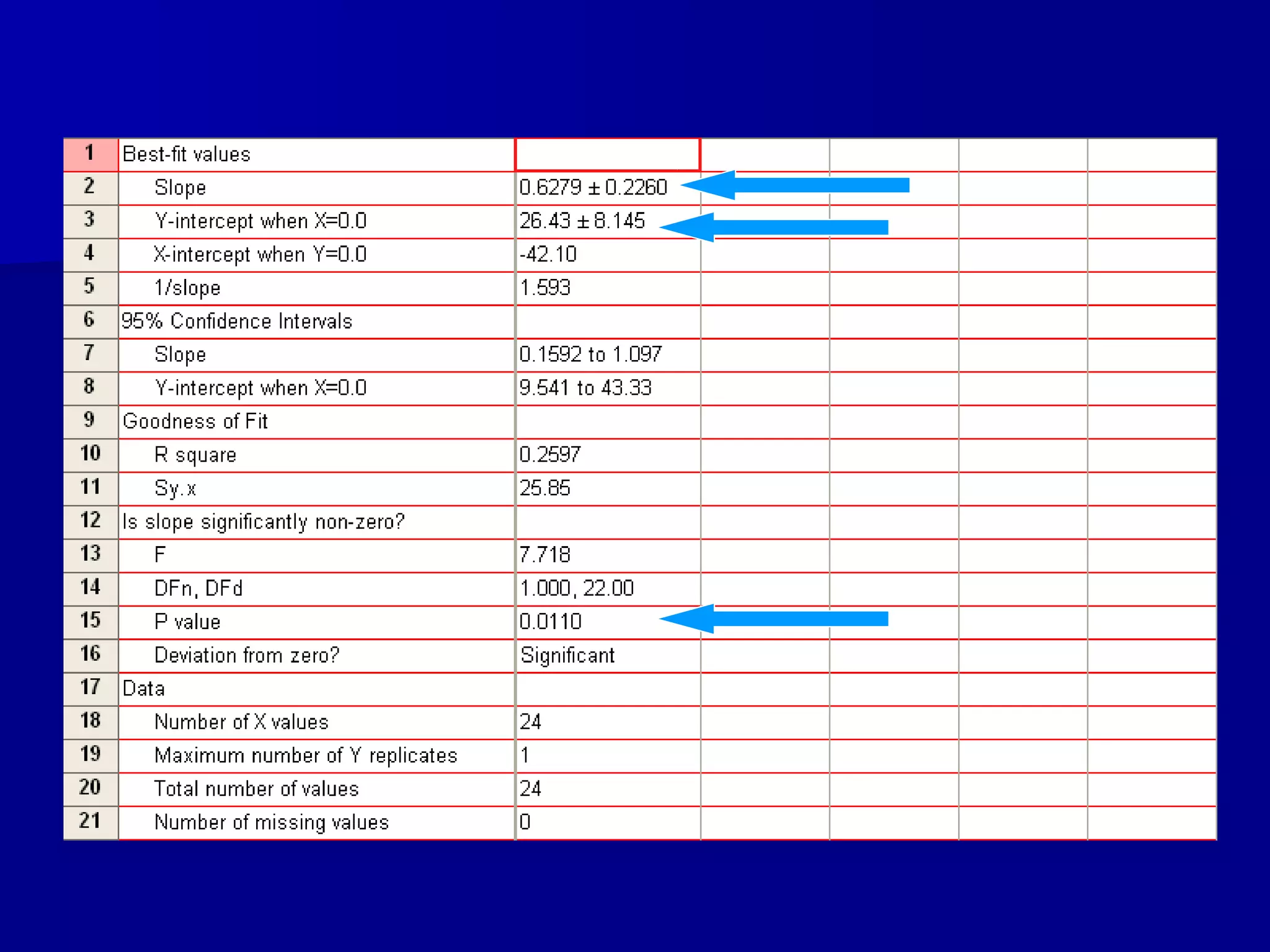

This document discusses hypothesis testing for correlation between two continuous variables. It defines correlation, outlines the steps of a hypothesis test of whether the correlation differs from zero, and gives the technical details of calculating Pearson's correlation coefficient. A key point is that, under the null hypothesis of zero correlation, the test statistic follows a t-distribution, so statistical significance can be assessed through the p-value. The goal of the example analysis is to determine whether there is a linear relationship between IL-10 and IL-6 expression levels in patients.
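
The calculation summarized above can be sketched in a few lines of Python. This is a minimal illustration, not the analysis from the slides: it assumes the standard textbook form of the test, where the statistic is t = r * sqrt((n - 2) / (1 - r^2)) with n - 2 degrees of freedom. The function names `pearson_r` and `correlation_t_statistic` and the IL-10 and IL-6 values are hypothetical, invented only to make the example runnable.

```python
import math


def pearson_r(x, y):
    """Pearson's correlation coefficient for two equal-length samples."""
    n = len(x)
    if n != len(y) or n < 3:
        raise ValueError("need two samples of equal length with n >= 3")
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    # Sums of cross-products and squared deviations from the means.
    sxy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sxx = sum((a - mean_x) ** 2 for a in x)
    syy = sum((b - mean_y) ** 2 for b in y)
    if sxx == 0 or syy == 0:
        raise ValueError("correlation is undefined for a constant sample")
    return sxy / math.sqrt(sxx * syy)


def correlation_t_statistic(r, n):
    """Test statistic for H0: correlation = 0, with n - 2 degrees of freedom."""
    if abs(r) >= 1.0:
        # A perfect linear relationship gives an unbounded statistic.
        return math.copysign(math.inf, r)
    return r * math.sqrt((n - 2) / (1 - r ** 2))


# Hypothetical expression levels, invented for illustration only.
il10 = [1.0, 2.0, 3.0, 4.0, 5.0]
il6 = [2.0, 1.0, 4.0, 3.0, 5.0]

r = pearson_r(il10, il6)
t_stat = correlation_t_statistic(r, len(il10))
df = len(il10) - 2
print(f"r = {r:.3f}, t = {t_stat:.3f}, df = {df}")
```

The two-sided p-value is the probability, under a t-distribution with `df` degrees of freedom, of a value at least as extreme as `t_stat`. If SciPy is available, `2 * scipy.stats.t.sf(abs(t_stat), df)` computes it, and `scipy.stats.pearsonr` returns both the coefficient and the p-value in a single call.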