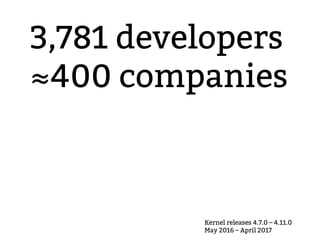

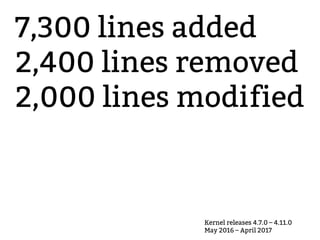

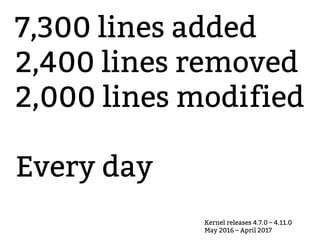

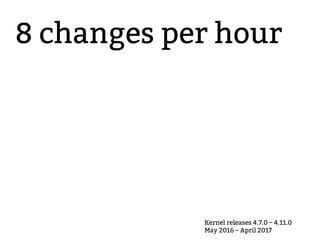

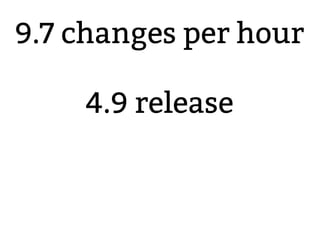

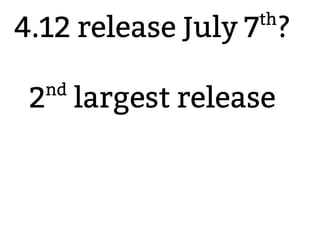

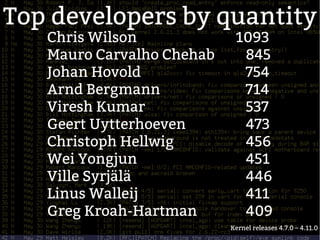

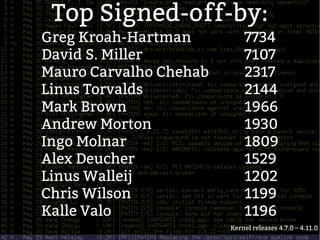

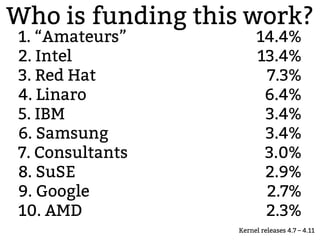

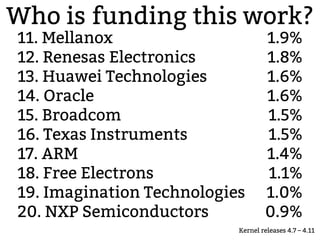

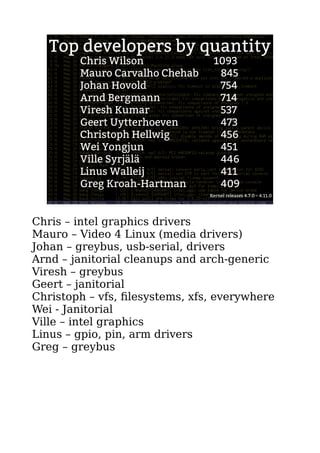

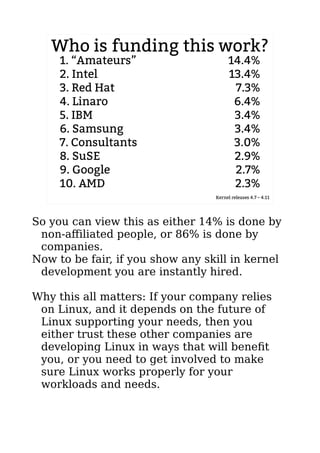

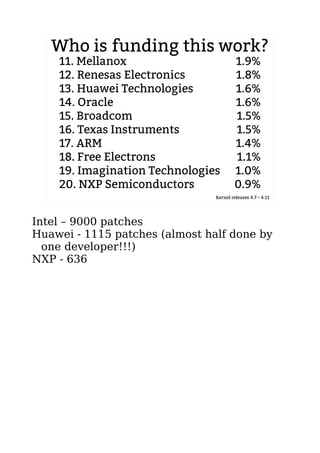

This document outlines the rapid pace of Linux kernel development between releases 4.7.0 and 4.11.0, highlighting statistics such as 7,300 lines added and an average of 9.7 changes per hour. It lists the top developers and the companies funding this work: 14.4% of contributions came from 'amateurs', with major contributions from companies such as Intel and Red Hat. The author emphasizes that community involvement is important for improving how well the kernel serves diverse workloads.